BubbleSpec: Turning Long-Tail Bubbles into Speculative Rollout Drafts for Synchronous Reinforcement Learning

Pith reviewed 2026-05-12 01:19 UTC · model grok-4.3

The pith

BubbleSpec turns idle bubbles from faster ranks into pre-generated speculative drafts to halve decoding steps in synchronous RL rollouts.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

BubbleSpec exploits the idle time windows of faster data-parallel ranks to pre-generate rollout results for subsequent steps, using those results as drafts inside a speculative decoding procedure that replaces part of the normal generation process while leaving the final trajectories and policy updates mathematically identical to standard synchronous rollouts.

What carries the argument

Pre-generation of future rollout drafts during idle bubbles on faster ranks, fed into speculative decoding for later steps.
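The mechanism can be illustrated with a toy exact-match verifier. This is a minimal sketch under stated assumptions, not the paper's implementation: the source does not show BubbleSpec's verification rule, so the code assumes a deterministic next-token function (`toy_target_next`, a hypothetical stand-in for the policy under a fixed sampling seed) and a generic rule that accepts the longest draft prefix matching the target's own choices. In a real engine each verification pass would be one batched forward pass; the inner loop here only simulates it, and `chunk` is an illustrative parameter.

```python
from typing import Callable, List, Sequence, Tuple

Token = int
NextFn = Callable[[Sequence[Token]], Token]


def toy_target_next(prefix: Sequence[Token]) -> Token:
    """Hypothetical deterministic stand-in for the policy's next-token choice."""
    return (31 * sum(prefix) + 7 * len(prefix) + 3) % 50


def plain_decode(prompt: Sequence[Token], n_new: int,
                 next_fn: NextFn = toy_target_next) -> Tuple[List[Token], int]:
    """Baseline rollout: one decoding step per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(next_fn(seq))
    return seq[len(prompt):], n_new


def speculative_decode(prompt: Sequence[Token], draft: Sequence[Token],
                       n_new: int, chunk: int = 4,
                       next_fn: NextFn = toy_target_next) -> Tuple[List[Token], int]:
    """Verify a pre-generated draft chunk by chunk.

    Every emitted token is the target's own choice, so the output equals
    plain_decode by construction; the draft only changes how many
    verification passes are needed.
    """
    seq = list(prompt)
    out: List[Token] = []
    passes = 0
    while len(out) < n_new:
        passes += 1
        proposal = list(draft[len(out):len(out) + chunk])
        for i in range(len(proposal) + 1):
            token = next_fn(seq)  # target's choice at this position
            seq.append(token)
            out.append(token)
            if len(out) == n_new:
                break
            if i == len(proposal) or proposal[i] != token:
                break  # draft exhausted or rejected: this pass ends
    return out, passes
```

With an empty draft the function degenerates to one pass per token, which is why a useless draft costs correctness nothing in this toy model; whether the real system also avoids a time penalty is exactly the overhead question raised under the load-bearing premise.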

If this is right

- Rollout throughput rises without any change to the mathematical form of the RL objective or update rule.

- Acceleration begins at the first training step and does not require dataset warm-up or similarity assumptions across epochs.

- The method works with any existing synchronous RL framework or strategy because it never relaxes the synchronization barrier.

- Gains grow with the size of long-tail bubbles, which are most pronounced in long-context LLM training.
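To make the last point concrete, the idle window available to each rank can be computed from per-rank finish times. The sketch below is illustrative only: `bubble_stats` is a hypothetical helper, and it assumes a bubble is the gap between a rank's finish time and the synchronization barrier set by the slowest rank, which is how the abstract describes the problem but is not a definition taken from the paper.

```python
from typing import Dict, List, Sequence, Union


def bubble_stats(finish_times_s: Sequence[float]) -> Dict[str, Union[float, List[float]]]:
    """Per-rank idle time under a synchronous barrier.

    finish_times_s: time (seconds) at which each data-parallel rank
    finishes its share of the rollout. The barrier is the slowest rank.
    """
    if not finish_times_s:
        raise ValueError("need at least one rank")
    barrier = max(finish_times_s)
    bubbles = [barrier - t for t in finish_times_s]
    total_slots = barrier * len(finish_times_s)
    idle_fraction = sum(bubbles) / total_slots if total_slots > 0 else 0.0
    return {
        "barrier_s": barrier,
        "bubbles_s": bubbles,
        "idle_fraction": idle_fraction,
    }
```

For example, four ranks finishing at 40, 50, 60, and 100 seconds (made-up values, not measurements) leave bubbles of 60, 50, 40, and 0 seconds, so 37.5% of the aggregate GPU time before the barrier is idle and available for draft pre-generation.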

Where Pith is reading between the lines

- The same idle-time pre-generation idea could be tested in other distributed systems that enforce strict synchronization barriers, such as certain forms of distributed inference or multi-agent simulation.

- If bubble sizes vary dramatically across hardware generations, the net benefit of pre-generation would need re-measurement to confirm overhead remains negligible.

- Layering BubbleSpec on top of existing speculative decoding methods inside the same rollout step might produce additive speedups if the two draft sources do not interfere.

Load-bearing premise

That pre-generated drafts from idle time windows can be integrated as speculative results without introducing overhead or violating the exact synchronous nature of the RL algorithm.

What would settle it

Execute identical RL rollouts with and without BubbleSpec on the same random seeds and inputs, then verify that the generated token sequences, rewards, and resulting gradient updates match exactly.
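A check of this kind could be scripted roughly as follows. This is a sketch, assuming rollouts have already been collected from both runs into a hypothetical `Rollout` record; the names and the record layout are illustrative and not taken from the paper. It covers token sequences and rewards only. Gradients would need an exact element-wise comparison in whatever tensor framework the training run uses.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Rollout:
    """Hypothetical record of one generated trajectory."""
    tokens: Sequence[int]
    reward: float


def find_mismatches(baseline: Sequence[Rollout],
                    candidate: Sequence[Rollout]) -> List[str]:
    """Return human-readable differences; an empty list means exact match."""
    problems: List[str] = []
    if len(baseline) != len(candidate):
        problems.append(f"count differs: {len(baseline)} vs {len(candidate)}")
    for i, (b, c) in enumerate(zip(baseline, candidate)):
        if list(b.tokens) != list(c.tokens):
            shared = min(len(b.tokens), len(c.tokens))
            # First differing position, or the shorter length if one is a prefix.
            first = next(
                (j for j in range(shared) if b.tokens[j] != c.tokens[j]),
                shared,
            )
            problems.append(f"rollout {i}: tokens diverge at position {first}")
        if b.reward != c.reward:  # exact equality on purpose, no tolerance
            problems.append(f"rollout {i}: reward {b.reward} vs {c.reward}")
    return problems
```

The comparison deliberately uses exact equality rather than a tolerance, because the claim under test is identity, not approximate agreement.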

Original abstract

Reinforcement Learning (RL) has become a cornerstone for improving the performance of Large Language Models (LLMs). However, its rollout phase constitutes a significant efficiency bottleneck, mainly arising from the long-tail bubbles across data parallel ranks, particularly in long-context scenarios where faster GPUs remain idle while waiting for stragglers. Existing solutions, such as partial rollout or asynchronous RL, mitigate these bubbles by compromising the algorithm's strict synchronous nature. Instead, we propose BubbleSpec, a novel framework that accelerates RL rollouts while strictly keeping the mathematical exactness. Instead of attempting to eliminate bubbles, BubbleSpec exploits them. We exploit the idle time windows of faster ranks to pre-generate rollout results for subsequent steps, serving as drafts for speculative decoding. Unlike prior speculative methods that rely on historical epoch similarity and warm-ups, BubbleSpec is agnostic to dataset size and provides immediate acceleration from the onset of training. Extensive evaluations demonstrate that BubbleSpec reduces decoding steps by 50% and increases rollout throughput by up to 1.8x. Critically, BubbleSpec is seamlessly compatible with various RL frameworks and strategies as it sustains the strict synchronous property of RL algorithms.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes BubbleSpec, a framework that exploits idle GPU time (long-tail bubbles) across data-parallel ranks during LLM RL rollouts to pre-generate speculative drafts for future steps. This is claimed to reduce decoding steps by 50% and boost rollout throughput by up to 1.8x while preserving strict mathematical exactness and the synchronous property of the RL algorithm, in contrast to prior partial-rollout or asynchronous approaches. The method is presented as dataset-agnostic with immediate gains from training onset and seamless compatibility with existing RL frameworks.

Significance. If the empirical claims and exactness preservation hold under scrutiny, BubbleSpec could meaningfully alleviate a key efficiency bottleneck in synchronous RL for long-context LLMs without requiring changes to the core algorithm or warm-up periods. The approach of repurposing unavoidable idle time for speculative computation is conceptually attractive for scaling RL training.

major comments (2)

- [§4 and §5] §4 (Method) and §5 (Evaluation): The central claim that speculative drafts integrate without violating strict synchrony or introducing overhead rests on unshown integration mechanics and zero-deviation verification. The abstract asserts 'mathematical exactness' and 'strict synchronous property,' but without explicit pseudocode, update-equation equivalence proof, or side-by-side rollout-value comparison tables, it is impossible to confirm the weakest assumption does not introduce bias in the RL objective.

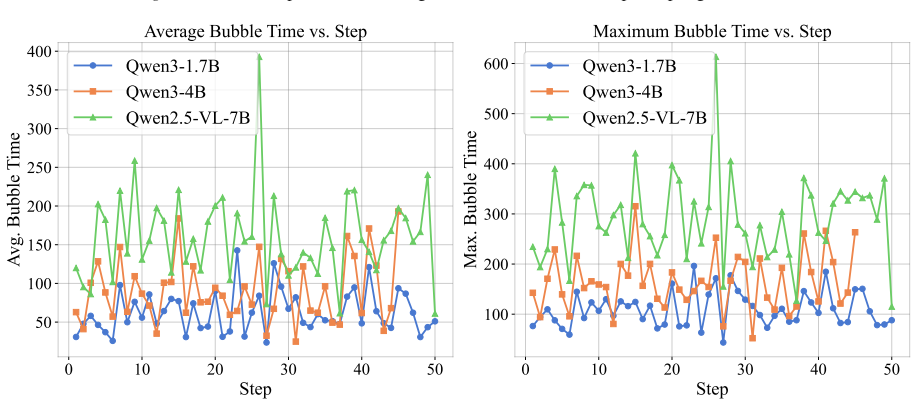

- [Table 2 / Figure 4] Table 2 / Figure 4 (throughput results): The reported 1.8x throughput and 50% decoding-step reduction lack error bars, number of runs, hardware configuration details, and baseline definitions (e.g., exact comparison to standard vLLM or HuggingFace rollout). These numbers are load-bearing for the significance claim yet cannot be assessed for statistical robustness or confounding factors such as batch-size effects.

minor comments (3)

- [Abstract / §1] Abstract and §1: The phrase 'agnostic to dataset size' is used without a supporting argument or ablation; clarify whether this holds only for the speculative draft generation or also for the overall RL convergence.

- [§3] §3 (Related Work): An explicit comparison to recent speculative decoding work in RL contexts (e.g., citations to Medusa-style or draft-model methods adapted for rollouts) is missing; adding one would strengthen the positioning.

- [§2] Notation: Define 'bubble' and 'draft' formally on first use, with a small timing diagram, to avoid ambiguity for readers unfamiliar with data-parallel straggler patterns.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment point by point below and commit to revisions that strengthen the clarity and verifiability of our claims.

- Referee: [§4 and §5] §4 (Method) and §5 (Evaluation): The central claim that speculative drafts integrate without violating strict synchrony or introducing overhead rests on unshown integration mechanics and zero-deviation verification. The abstract asserts 'mathematical exactness' and 'strict synchronous property,' but without explicit pseudocode, update-equation equivalence proof, or side-by-side rollout-value comparison tables, it is impossible to confirm the weakest assumption does not introduce bias in the RL objective.

  Authors: We agree that the integration mechanics and exactness claims require explicit documentation. In the revised manuscript we will add (i) detailed pseudocode in §4 showing how idle-time draft generation is scheduled and how accepted drafts are substituted into the synchronous rollout without changing the per-token sampling distribution or the subsequent RL update equations, (ii) a short equivalence argument demonstrating that the final rollout trajectories and value estimates remain identical to the non-speculative synchronous baseline, and (iii) side-by-side tables in §5 comparing rollout values, policy gradients, and loss terms across matched seeds. These additions will make the zero-bias property directly verifiable.

  Revision: yes

- Referee: [Table 2 / Figure 4] Table 2 / Figure 4 (throughput results): The reported 1.8x throughput and 50% decoding-step reduction lack error bars, number of runs, hardware configuration details, and baseline definitions (e.g., exact comparison to standard vLLM or HuggingFace rollout). These numbers are load-bearing for the significance claim yet cannot be assessed for statistical robustness or confounding factors such as batch-size effects.

  Authors: We acknowledge that the current presentation of the throughput results is insufficiently rigorous. In the revised version we will augment Table 2 and Figure 4 with (i) error bars showing mean ± standard deviation over at least five independent runs, (ii) explicit hardware details (GPU count, model size, context length, batch size), and (iii) a clarified baseline section that specifies the exact vLLM and HuggingFace rollout configurations used for comparison, ensuring identical batch sizes and synchronization settings. This will allow readers to evaluate statistical robustness and rule out confounding factors.

  Revision: yes
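The statistic promised in the response to the second comment is straightforward to compute. The sketch below is one way it might be done, using only Python's standard `statistics` module; the helper names are hypothetical, and pairing runs by index (for example, by matched seed) is an assumption, not something the rebuttal specifies.

```python
import statistics
from typing import Sequence, Tuple


def mean_std(samples: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation (n - 1 denominator)."""
    if len(samples) < 2:
        raise ValueError("need at least two runs for a standard deviation")
    return statistics.fmean(samples), statistics.stdev(samples)


def paired_speedup(baseline_tput: Sequence[float],
                   treated_tput: Sequence[float]) -> Tuple[float, float]:
    """Mean ± std of per-run throughput ratios.

    Assumes run i of the baseline and run i of the treated system share
    the same seed and configuration, so the ratio is taken pairwise.
    """
    if len(baseline_tput) != len(treated_tput):
        raise ValueError("runs must be paired one-to-one")
    ratios = [t / b for b, t in zip(baseline_tput, treated_tput)]
    return mean_std(ratios)
```

Reporting the spread of per-run ratios, rather than the ratio of two means, keeps run-to-run variation visible, which is the point of the referee's request.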

Circularity Check

No significant circularity detected

The paper describes BubbleSpec as a systems-level framework that exploits idle GPU time windows during synchronous RL rollouts to pre-generate speculative drafts, claiming 50% reduction in decoding steps and up to 1.8x throughput gains while preserving mathematical exactness and strict synchrony. No equations, derivations, fitted parameters, or self-referential definitions appear in the provided text. Claims rest on empirical evaluations and compatibility with existing RL frameworks rather than any reduction of outputs to inputs by construction, self-citation chains, or ansatz smuggling. The central premise is presented as an independent engineering insight without load-bearing steps that collapse into tautology.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: Long-tail bubbles arise across data-parallel ranks in RL rollouts, particularly in long-context scenarios, causing idle time on faster GPUs.

invented entities (1)

- BubbleSpec framework: no independent evidence

