Recognition: 2 theorem links

· Lean TheoremTight Generalization Bounds for Noiseless Inverse Optimization

Pith reviewed 2026-05-12 01:40 UTC · model grok-4.3

The pith

Noiseless inverse optimization achieves a tight high-probability O(d/T) generalization bound on the induced action set.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

In the noiseless setting of inverse optimization, demonstrations are produced exactly by optimizing an unknown ground-truth objective. For consistent estimators of the objective parameters, the induced action set generalizes with high probability at the rate O(d/T). This rate is tight across the considered estimators. The corresponding regret lower bounds match the upper bounds known for the adversarial setting, making the stochastic noiseless IO effectively adversarial for these estimators.

What carries the argument

The induced action set, the collection of actions that would be chosen by optimizing the estimated objective on new contexts, whose deviation from the true optimal actions is controlled by the generalization bound.

If this is right

- The number of demonstrations required for small generalization error scales linearly with the dimension d.

- Uniqueness of optimal actions upgrades the guarantees to match best-arm identification rates from the bandit literature.

- Both instantaneous and cumulative regret inherit the tight O(d/T) scaling and the match to adversarial upper bounds.

- Parameter-free algorithms recover the objective with lower per-iteration computational cost than standard solvers.

Where Pith is reading between the lines

- The equivalence to adversarial regret suggests that techniques developed for robust adversarial inverse optimization may transfer directly to this stochastic noiseless case.

- In domains that assume perfect rationality, such as certain economic or robotic planning settings, the results quantify the sample needs but also warn that performance remains vulnerable to worst-case data ordering.

Load-bearing premise

Demonstrations are generated exactly by optimizing a single ground-truth objective with no noise or suboptimality.

What would settle it

A family of noiseless demonstration sequences and a consistent estimator for which the probability that the induced action set deviates from the true one by more than c d/T remains bounded away from zero for large T and some fixed c.

Figures

read the original abstract

Inverse optimization (IO) seeks to infer the parameters of a decision-maker's objective from observed context--action data. We study noiseless IO, where demonstrations are generated by a ground-truth objective. We provide a high-probability ${O}(\frac{d}{T})$ generalization bound for the induced action set, where $d$ is the number of unknown parameters and $T$ is the size of the training dataset. We strengthen these guarantees under additional conditions that ensure uniqueness of the chosen action, bringing our IO guarantees in line with best-arm identification results in the bandit literature. We further show that the ${O}(\frac{d}{T})$ rate is tight over all consistent estimators considered here, and extend the result to both instantaneous and cumulative regret. Notably, the resulting regret lower bound matches the corresponding upper bounds in the adversarial setting, indicating that the stochastic IO setting is effectively adversarial for the class of estimators studied here. Finally, we propose a parameter-free algorithm with lower per-iteration complexity than generic solvers. Experiments validate the predicted rates and illustrate the tightness of our bounds.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper studies noiseless inverse optimization, deriving a high-probability O(d/T) generalization bound on the induced action set from context-action demonstrations generated by a ground-truth objective. It strengthens the bound to bandit-like rates under additional uniqueness conditions on the optimal action, proves that the O(d/T) rate is tight for all consistent estimators considered, extends the analysis to instantaneous and cumulative regret (with the lower bound matching adversarial upper bounds), and proposes a parameter-free algorithm with reduced per-iteration complexity. Experiments are used to validate the predicted scaling and illustrate tightness.

Significance. If the derivations hold, the work supplies the first tight, non-vacuous sample-complexity bounds for noiseless IO and explicitly connects the setting to best-arm identification in bandits. The observation that the stochastic IO problem is effectively adversarial for consistent estimators is a notable conceptual contribution. The parameter-free algorithm provides a concrete practical advance over generic solvers. Experiments confirming the O(d/T) scaling lend empirical support to the theory.

major comments (2)

- The tightness claim is stated to hold over all consistent estimators; the precise definition of consistency and the exact estimator class must be stated explicitly (e.g., in the section containing the lower-bound construction) because this class is load-bearing for both the upper and lower bounds.

- The regret lower bound is asserted to match adversarial upper bounds; the manuscript should include a direct side-by-side comparison of constants and the precise adversarial setting (e.g., the section on regret extensions) to substantiate the 'effectively adversarial' conclusion.

minor comments (2)

- Notation for the induced action set and the recovered objective should be introduced with a dedicated definition block early in the paper to improve readability.

- The abstract and introduction mention 'additional conditions ensuring uniqueness'; a brief discussion of how often these conditions hold in typical IO applications would help readers assess the strengthened guarantees.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive comments. We address each major comment below and will revise the manuscript accordingly.

read point-by-point responses

-

Referee: The tightness claim is stated to hold over all consistent estimators; the precise definition of consistency and the exact estimator class must be stated explicitly (e.g., in the section containing the lower-bound construction) because this class is load-bearing for both the upper and lower bounds.

Authors: We agree that an explicit definition is needed to support the tightness claim. In the revised manuscript we will add, in the lower-bound section, a formal definition of consistency (estimators that recover the ground-truth objective almost surely as T tends to infinity under the noiseless model) together with the precise class of estimators to which the claim applies (the parameter-free algorithm and all generic convex solvers that return a feasible point in the dual polytope). This will make clear that the O(d/T) rate is tight precisely for this class. revision: yes

-

Referee: The regret lower bound is asserted to match adversarial upper bounds; the manuscript should include a direct side-by-side comparison of constants and the precise adversarial setting (e.g., the section on regret extensions) to substantiate the 'effectively adversarial' conclusion.

Authors: We will strengthen the presentation as suggested. In the revised regret-extensions section we will insert a direct side-by-side comparison (in text and a small table) of the leading constants in our stochastic lower bound and the matching adversarial upper bounds from the best-arm-identification literature, together with an explicit statement of the adversarial setting (an adversary that chooses contexts and feasible actions to force linear regret against any consistent estimator). This will substantiate the claim that the stochastic noiseless IO problem is effectively adversarial for the estimators studied. revision: yes

Circularity Check

No significant circularity detected

full rationale

The derivation of the O(d/T) high-probability generalization bound on the induced action set proceeds directly from the noiseless model, the definition of the induced action set as actions optimal under the recovered objective, and standard concentration arguments over T samples with d parameters. Tightness follows from an independent lower-bound construction over a family of objectives with margin-separated optimal actions, without reducing to fitted quantities or self-referential definitions. Regret extensions and the parameter-free algorithm are likewise derived from the same primitives without circular steps. The result is self-contained against external benchmarks and does not rely on load-bearing self-citations or ansatzes smuggled from prior work.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Demonstrations are generated exactly by a ground-truth objective (noiseless setting)

- domain assumption Additional conditions ensure uniqueness of the chosen action

Lean theorems connected to this paper

-

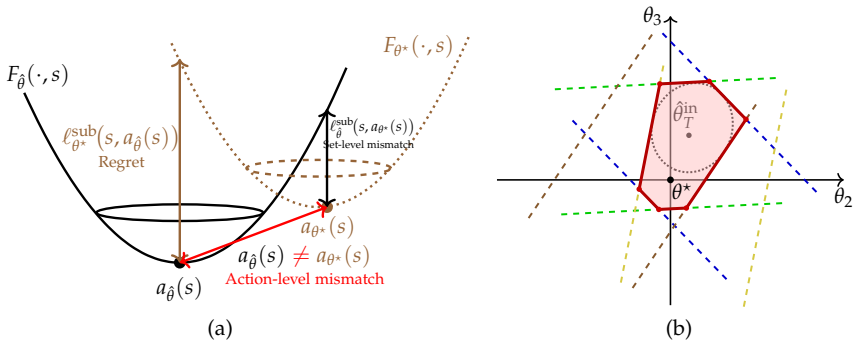

IndisputableMonolith/Foundation/AbsoluteFloorClosure.leanreality_from_one_distinction unclearWe develop a scenario program view of noiseless IO, where each demonstration induces a random convex feasibility constraint on the parameter θ (Campi & Garatti, 2008). This yields a high-probability O(d/T) bound on set-level mismatch (Theorem 2.1).

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearWe further show that the O(d/T) rate is tight over all consistent estimators... the resulting regret lower bound matches the corresponding upper bounds in the adversarial setting.

Reference graph

Works this paper leans on

-

[1]

Ahuja, R. K. and Orlin, J. B. Inverse optimization. Operations Research, 49 0 (5): 0 771--783, 2001

work page 2001

-

[2]

Akhtar, S. A., Kolarijani, A. S., and Mohajerin Esfahani, P. Learning for control: An inverse optimization approach. IEEE Control Systems Letters, 6: 0 187--192, 2021

work page 2021

-

[3]

Inverse optimization with noisy data

Aswani, A., Shen, Z.-J., and Siddiq, A. Inverse optimization with noisy data. Operations Research, 66 0 (3): 0 870--892, 2018

work page 2018

-

[4]

Mostly exploration-free algorithms for contextual bandits

Bastani, H., Bayati, M., and Khosravi, K. Mostly exploration-free algorithms for contextual bandits. Management Science, 67 0 (3): 0 1329--1349, 2021

work page 2021

-

[5]

Ben-David, S., Cesa-Bianchi, N., and Long, P. M. Characterizations of learnability for classes of \ 0,..., n \ -valued functions. Journal of computer and system sciences (Print), 50 0 (1): 0 74--86, 1995

work page 1995

-

[6]

Bertsimas, D., Gupta, V., and Paschalidis, I. C. Data-driven estimation in equilibrium using inverse optimization. Mathematical Programming, 153 0 (2): 0 595--633, 2015

work page 2015

-

[7]

Contextual inverse optimization: Offline and online learning

Besbes, O., Fonseca, Y., and Lobel, I. Contextual inverse optimization: Offline and online learning. Operations Research, 73 0 (1): 0 424--443, 2025

work page 2025

-

[8]

A characterization of multiclass learnability

Brukhim, N., Carmon, D., Dinur, I., Moran, S., and Yehudayoff, A. A characterization of multiclass learnability. In 2022 IEEE 63rd Annual Symposium on Foundations of Computer Science (FOCS), pp.\ 943--955. IEEE, 2022

work page 2022

-

[9]

Campi, M. C. and Garatti, S. The exact feasibility of randomized solutions of uncertain convex programs. SIAM Journal on Optimization, 19 0 (3): 0 1211--1230, 2008

work page 2008

-

[10]

Chan, T. C., Mahmood, R., and Zhu, I. Y. Inverse optimization: T heory and applications. Operations Research, 73 0 (2): 0 1046--1074, 2025

work page 2025

-

[11]

Diamond, S. and Boyd, S. CVXPY : A P ython-embedded modeling language for convex optimization. Journal of Machine Learning Research, 17 0 (83): 0 1--5, 2016

work page 2016

-

[12]

Offline reinforcement learning via inverse optimization

Dimanidis, I., Ok, T., and Mohajerin Esfahani, P. Offline reinforcement learning via inverse optimization. arXiv preprint arXiv:2502.20030, 2025

-

[13]

Dzhaparidze, K. and Van Zanten, J. On bernstein-type inequalities for martingales. Stochastic processes and their applications, 93 0 (1): 0 109--117, 2001

work page 2001

-

[14]

El Balghiti, O., Elmachtoub, A. N., Grigas, P., and Tewari, A. Generalization bounds in the predict-then-optimize framework. Mathematics of Operations Research, 48 0 (4): 0 2043--2065, 2023

work page 2043

-

[15]

Elmachtoub, A. N. and Grigas, P. Smart “predict, then optimize”. Management Science, 68 0 (1): 0 9--26, 2022

work page 2022

-

[16]

Freedman, D. A. On tail probabilities for martingales. The Annals of Probability, pp.\ 100--118, 1975

work page 1975

-

[17]

Contextual recommendations and low-regret cutting-plane algorithms

Gollapudi, S., Guruganesh, G., Kollias, K., Manurangsi, P., Leme, R., and Schneider, J. Contextual recommendations and low-regret cutting-plane algorithms. Advances in Neural Information Processing Systems, 34: 0 22498--22508, 2021

work page 2021

-

[18]

Hazan, E. et al. Introduction to online convex optimization. Foundations and Trends in Optimization , 2 0 (3-4): 0 157--325, 2016

work page 2016

-

[19]

Imputing a convex objective function

Keshavarz, A., Wang, Y., and Boyd, S. Imputing a convex objective function. In IEEE International Symposium on Intelligent Control, pp.\ 613--619, 2011

work page 2011

-

[20]

Lattimore, T. and Szepesv \'a ri, C. Bandit algorithms. Cambridge University Press, 2020

work page 2020

-

[21]

Li, J. Y.-M. Inverse optimization of convex risk functions. Management Science, 67 0 (11): 0 7113--7141, 2021

work page 2021

-

[22]

Mohajerin Esfahani, P., Shafieezadeh-Abadeh, S., Hanasusanto, G. A., and Kuhn, D. Data-driven inverse optimization with imperfect information. Mathematical Programming, 167 0 (1): 0 191--234, 2018

work page 2018

-

[23]

Natarajan, B. K. On learning sets and functions. Machine Learning, 4 0 (1): 0 67--97, 1989

work page 1989

-

[24]

The Optimal Sample Complexity of Multiclass and List Learning

Pabbaraju, C. The optimal sample complexity of multiclass and list learning. arXiv preprint arXiv:2604.24749, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[25]

Inverse optimization via learning feasible regions

Ren, K., Mohajerin Esfahani, P., and Georghiou, A. Inverse optimization via learning feasible regions. arXiv preprint arXiv:2505.15025, 2025

-

[26]

Sakaue, S., Tsuchiya, T., Bao, H., and Oki, T. Online inverse linear optimization: Efficient logarithmic-regret algorithm, robustness to suboptimality, and lower bound. Advances in Neural Information Processing Systems, 34, 2025

work page 2025

-

[27]

Shalev-Shwartz, S. and Ben-David, S. Understanding machine learning: From theory to algorithms. Cambridge university press, 2014

work page 2014

-

[28]

Learning in inverse optimization: Incenter cost, augmented suboptimality loss, and algorithms

Zattoni Scroccaro, P., Atasoy, B., and Mohajerin Esfahani, P. Learning in inverse optimization: Incenter cost, augmented suboptimality loss, and algorithms. Operations Research, 73 0 (5): 0 2661--2679, 2025 a

work page 2025

-

[29]

Inverse optimization for routing problems

Zattoni Scroccaro, P., van Beek, P., Mohajerin Esfahani, P., and Atasoy, B. Inverse optimization for routing problems. Transportation Science, 59 0 (2): 0 301--321, 2025 b

work page 2025

-

[30]

Online convex programming and generalized infinitesimal gradient ascent

Zinkevich, M. Online convex programming and generalized infinitesimal gradient ascent. In Proceedings of the International Conference on Machine Learning, pp.\ 928--936, 2003

work page 2003

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.