Learning Generative Dynamics with Soft Law Constraints: A McKean-Vlasov FBSDE Approach

Pith reviewed 2026-05-12 01:46 UTC · model grok-4.3

The pith

Stochastic paths can be generated to match observed endpoint and intermediate distributions by solving a McKean-Vlasov FBSDE with soft marginal penalties.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Generation from distributional observations is cast as a McKean-Vlasov control problem whose optimality system is an FBSDE; the backward component receives a continuous drift induced by soft energy constraints on the terminal and time-marginal laws. For quadratic running cost and constant diffusion, the system reduces to a form that links the flat derivatives of the terminal and marginal law penalties to score-like training signals. According to the paper's experiments, the resulting neural solver produces sample paths whose empirical marginals at the supervised times follow the observed distributions, both on low-dimensional benchmarks and on structured high-dimensional data such as SMPL-H pose sequences.

What carries the argument

A McKean-Vlasov FBSDE whose backward drift comes from soft energy penalties on the prescribed marginal laws; this drift is the training signal for the neural control approximator.
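For orientation, one standard way to write such a system is sketched below. This is a sketch under stated assumptions, not the paper's own statement: the running penalty $F$, the terminal penalty $G$, and the placement of the penalties in the objective are hypothetical notation chosen here, following the usual Pontryagin form for McKean-Vlasov control with drift $b=a$, constant $\sigma$, and running cost $\tfrac12\|a\|^2$. The symbol $\partial_\mu$ denotes the measure derivative, taken as the spatial gradient of the flat derivative.

```latex
% Hypothetical notation: F(t, .) is a running marginal-law penalty, G a terminal-law penalty.
\begin{aligned}
&\min_{\alpha}\; \mathbb{E}\!\int_0^T \tfrac12\|\alpha_t\|^2\,dt
   + \int_0^T F\big(t,\mathcal{L}(X_t)\big)\,dt + G\big(\mathcal{L}(X_T)\big),
   \qquad dX_t = \alpha_t\,dt + \sigma\,dW_t, \\
% Minimizing the Hamiltonian in a gives the feedback control.
&H(t,\mu,y,a) = a\cdot y + \tfrac12\|a\|^2 + F(t,\mu)
   \;\Longrightarrow\; \hat\alpha_t = -Y_t, \\
% Forward equation under the optimal control.
&dX_t = -Y_t\,dt + \sigma\,dW_t, \qquad X_0 \sim \mu_0, \\
% Backward equation: H does not depend on x, so only the measure derivative drives Y.
&dY_t = -\,\partial_\mu F\big(t,\mathcal{L}(X_t)\big)(X_t)\,dt + Z_t\,dW_t, \qquad
  Y_T = \partial_\mu G\big(\mathcal{L}(X_T)\big)(X_T).
\end{aligned}
```

In this form the penalties enter only through the backward drift and the terminal condition, which is where a score-like signal would appear if $F$ or $G$ is a divergence to an observed law.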

If this is right

- The learned dynamics remain coupled across all particles through the mean-field objective rather than being reduced to independent interpolations.

- No explicit optimal-transport map or hard interpolation between observed distributions is required.

- The same FBSDE solver can be applied to both low-dimensional synthetic marginals and high-dimensional structured data such as human motion sequences.

- Intermediate marginal supervision can be added at arbitrary times without changing the overall architecture.

Where Pith is reading between the lines

- The soft-constraint formulation may extend naturally to settings where the underlying particles already interact through a mean field, such as crowd or swarm models.

- Because the method retains full stochasticity, it could be used to produce multiple plausible futures consistent with the same sequence of observed marginals.

- Scaling the neural FBSDE solver to very high-dimensional latent spaces may require additional variance-reduction techniques not explored in the present experiments.

Load-bearing premise

Soft energy penalties on terminal and time-marginal laws can be translated into a stable continuous drift in the backward FBSDE that a neural solver can enforce without hard constraints or numerical blow-up.

What would settle it

Generate many paths with the trained solver on a benchmark where exact marginal distributions are known, then compare the empirical distributions at the supervised times; a statistically significant mismatch between the generated and prescribed marginals would falsify the claim that the soft penalties are sufficient.
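A minimal sketch of such a check is given below, assuming the generated and target samples at one supervised time are available as NumPy arrays. The choice of the energy distance and of a permutation test is an assumption made here for illustration; the paper does not prescribe a particular test, and the function names are hypothetical.

```python
import numpy as np

def energy_distance(x, y):
    """Energy distance (V-statistic form) between samples x (n, d) and y (m, d)."""
    def mean_pdist(a, b):
        # Mean Euclidean distance over all pairs (a_i, b_j).
        diff = a[:, None, :] - b[None, :, :]
        return np.sqrt((diff ** 2).sum(-1)).mean()
    return 2.0 * mean_pdist(x, y) - mean_pdist(x, x) - mean_pdist(y, y)

def permutation_pvalue(x, y, n_perm=200, seed=0):
    """Permutation p-value for H0: x and y are drawn from the same law."""
    rng = np.random.default_rng(seed)
    observed = energy_distance(x, y)
    pooled = np.concatenate([x, y], axis=0)
    n = len(x)
    count = 0
    for _ in range(n_perm):
        # Reshuffle the pooled sample and recompute the statistic.
        perm = rng.permutation(len(pooled))
        stat = energy_distance(pooled[perm[:n]], pooled[perm[n:]])
        count += int(stat >= observed)
    # The +1 terms keep the p-value strictly positive.
    return (count + 1) / (n_perm + 1)
```

A small p-value at any supervised time would count as evidence against the claim that the soft penalties alone pin down the marginals; repeating the test across times calls for a multiple-testing correction.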

Figures

read the original abstract

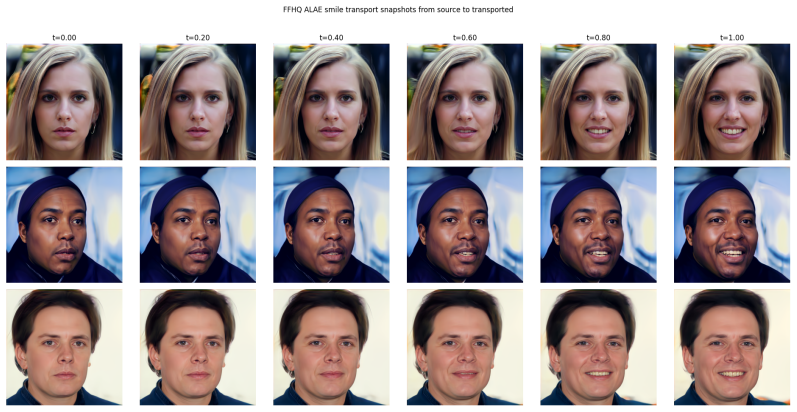

We propose a generative framework for learning stochastic dynamics from endpoint and intermediate distributional observations. The method formulates generation as a McKean-Vlasov control problem in which terminal and time-marginal laws are enforced through soft energy constraints. The associated optimality system is a forward-backward stochastic differential equation (FBSDE) whose backward component receives a continuous drift induced by the marginal law penalties. This provides a principled alternative to hard interpolation or optimal transport maps between observed distributions: the model learns a stochastic path law whose dynamics remain globally coupled through the mean-field objective. We derive the reduced FBSDE system for quadratic control cost and constant diffusion, connecting terminal and marginal law flat derivatives to score-like training signals. The resulting neural solver is evaluated on low-dimensional distributional benchmarks, where it recovers smooth stochastic paths matching prescribed marginal laws. In a higher-dimensional ALAE latent space, endpoint supervision is used as a qualitative stress test for transporting non-smiling faces toward smiling ones in a pretrained representation. We then use articulated human motion as a structured high-dimensional case study on a curated AMASS low-to-high position dataset, using SMPL-H pose sequences and reduced pose representations. The experiments show that soft marginal law constraints can produce coherent stochastic trajectories whose intermediate distributions follow the observed evolution of human motion. The code is available at https://github.com/murex/deep-mkv-gen/tree/main.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a generative framework for learning stochastic dynamics from endpoint and intermediate distributional observations by casting the problem as a McKean-Vlasov control problem with soft energy constraints on terminal and time-marginal laws. The optimality system is an FBSDE whose backward drift is induced by the penalties; for quadratic cost and constant diffusion this reduces to a system whose backward component incorporates flat derivatives of the penalties, interpreted as score-like signals. A neural solver is implemented and tested on low-dimensional benchmarks (recovering smooth paths matching prescribed marginals), an ALAE latent-space face transport task, and AMASS human motion data using SMPL-H poses, with code released at the provided GitHub link.

Significance. If the soft-constraint enforcement can be shown to produce sufficiently tight marginal matching without numerical instability, the method supplies a principled mean-field alternative to hard interpolation or optimal-transport maps for learning path measures. The open-source code is a clear strength for reproducibility. However, the current lack of quantitative error metrics and stability analysis weakens the practical assessment of the framework.

major comments (2)

- [Experiments] Experiments section: the low-dimensional benchmarks and AMASS case study report only qualitative success (coherent trajectories, matching observed evolution). No Wasserstein distances, KL divergences, or other quantitative residuals measuring violation of the target marginal laws are provided, nor is there any sweep or analysis of penalty strength versus solver stability or particle approximation error.

- [Reduced FBSDE derivation] Derivation of the reduced FBSDE: the claim that the backward drift incorporates flat derivatives of the penalty functionals (score-like signals) is central to the method, yet the manuscript supplies neither the explicit reduced equations nor the intermediate steps connecting the McKean-Vlasov optimality system to the neural training signals. Without these, the reduction cannot be verified.

minor comments (1)

- [Abstract / Derivation] The term 'flat derivatives' is used without a precise definition or reference; a short clarifying sentence or citation would improve readability.
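For readers who want the term pinned down, the usual definition from the mean-field literature is recalled below. This is the common textbook convention and not necessarily the exact normalization used in the paper; the functional $F$, the reference law $\nu$, and the use of densities in the example are placeholders introduced here.

```latex
\begin{gather*}
% Flat (linear functional) derivative of F: a map (\mu, x) \mapsto \frac{\delta F}{\delta m}(\mu, x) such that
F(\mu') - F(\mu) = \int_0^1 \!\! \int_{\mathbb{R}^d}
  \frac{\delta F}{\delta m}\big((1-s)\mu + s\mu',\, x\big)\,(\mu' - \mu)(dx)\,ds , \\
% Under suitable regularity, the measure (Lions) derivative is its spatial gradient.
\partial_\mu F(\mu)(x) = \nabla_x \frac{\delta F}{\delta m}(\mu, x), \\
% Example with an assumed penalty F(\mu) = \mathrm{KL}(\mu \,\|\, \nu), written for densities.
\frac{\delta F}{\delta m}(\mu, x) = \log\frac{\mu(x)}{\nu(x)} + \text{const}, \qquad
\partial_\mu F(\mu)(x) = \nabla_x \log \mu(x) - \nabla_x \log \nu(x).
\end{gather*}
```

The last line shows why such derivatives are described as score-like: for a KL-type penalty the gradient is a difference of two scores.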

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and positive recognition of the framework's potential as a mean-field alternative for learning path measures. We address each major comment below and agree that the suggested additions will strengthen the manuscript.

- Referee: [Experiments] Experiments section: the low-dimensional benchmarks and AMASS case study report only qualitative success (coherent trajectories, matching observed evolution). No Wasserstein distances, KL divergences, or other quantitative residuals measuring violation of the target marginal laws are provided, nor is there any sweep or analysis of penalty strength versus solver stability or particle approximation error.

  Authors: We acknowledge that the experiments in the current manuscript emphasize qualitative demonstration of coherent trajectory generation and marginal matching. In the revised version we will augment the low-dimensional and AMASS sections with quantitative metrics, specifically Wasserstein-2 distances and KL divergences between generated and target marginals at selected times, together with a parameter sweep on penalty strength and particle count to quantify stability and approximation error.

  Revision: yes

- Referee: [Reduced FBSDE derivation] Derivation of the reduced FBSDE: the claim that the backward drift incorporates flat derivatives of the penalty functionals (score-like signals) is central to the method, yet the manuscript supplies neither the explicit reduced equations nor the intermediate steps connecting the McKean-Vlasov optimality system to the neural training signals. Without these, the reduction cannot be verified.

  Authors: The reduction is derived in Section 3 by specializing the general McKean-Vlasov FBSDE optimality system to quadratic control cost and constant diffusion, yielding a backward drift that incorporates the flat (Gâteaux) derivatives of the penalty functionals. To improve verifiability we will expand this section in the revision with the complete intermediate algebraic steps and the fully explicit reduced FBSDE system, making the link to the score-like training signals transparent.

  Revision: yes
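As an illustration of the kind of quantitative residual discussed in this exchange, the sketch below computes a sliced Wasserstein-2 distance between generated and target samples at one time. Note the substitution: this is a projection-based proxy, not the full Wasserstein-2 distance the authors mention, and it assumes both samples have the same number of points. The function names are hypothetical.

```python
import numpy as np

def w2_1d(u, v):
    """Wasserstein-2 distance between two equal-size 1D samples (sorted matching)."""
    u, v = np.sort(u), np.sort(v)
    return np.sqrt(np.mean((u - v) ** 2))

def sliced_w2(x, y, n_proj=64, seed=0):
    """Sliced W2 between samples x, y of shape (n, d), averaged over random directions."""
    rng = np.random.default_rng(seed)
    d = x.shape[1]
    # Random unit vectors on the sphere in R^d.
    dirs = rng.normal(size=(n_proj, d))
    dirs /= np.linalg.norm(dirs, axis=1, keepdims=True)
    # Squared 1D W2 of the projected samples along each direction.
    sq = [w2_1d(x @ w, y @ w) ** 2 for w in dirs]
    return float(np.sqrt(np.mean(sq)))
```

Evaluating `sliced_w2` at every supervised time, for several penalty strengths and particle counts, would give the sort of table the referee asks for.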

Circularity Check

Derivation of reduced FBSDE from McKean-Vlasov optimality system is self-contained

The paper formulates generation as a McKean-Vlasov control problem with soft energy constraints on terminal and time-marginal laws, then derives the associated optimality system as an FBSDE whose backward drift is induced by the penalty functionals. For quadratic cost and constant diffusion it reduces the system by linking flat derivatives of the penalties to score-like signals. No step reduces by construction to fitted inputs, self-definitions, or load-bearing self-citations; the derivation follows from standard stochastic control theory applied to the stated objective. Experiments on benchmarks and motion data serve as external validation rather than tautological confirmation. The chain therefore remains independent of its own outputs.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: existence of solutions to the McKean-Vlasov FBSDE optimality system.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel. Relation: contradicts. Linked passage: "In all experiments, we choose A=R^d, and b(t,x,μ,a)=a, σ=σ I_d, ℓ(x,a)=½‖a‖²"
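The experimental setup quoted in the linked passage (control set R^d, drift equal to the control, diffusion σ I_d, running cost ½‖a‖²) can be simulated with a plain Euler-Maruyama particle scheme. The sketch below is an assumption-laden illustration, not the released code: `simulate` and `mean_reverting_control` are hypothetical names, and the feedback rule is a toy mean-field control chosen only to show how the control can depend on the empirical law.

```python
import numpy as np

def simulate(control, x0, sigma=0.5, T=1.0, n_steps=50, seed=0):
    """Euler-Maruyama for dX = a dt + sigma dW on a particle cloud x0 of shape (n, d).

    Returns the path array of shape (n_steps + 1, n, d) and the
    Monte Carlo estimate of the control cost E[ int 0.5 * |a|^2 dt ].
    """
    rng = np.random.default_rng(seed)
    dt = T / n_steps
    x = np.array(x0, dtype=float)
    path = [x.copy()]
    cost = 0.0
    for k in range(n_steps):
        a = control(k * dt, x)  # control per particle, shape (n, d)
        cost += 0.5 * np.mean(np.sum(a ** 2, axis=1)) * dt
        x = x + a * dt + sigma * np.sqrt(dt) * rng.normal(size=x.shape)
        path.append(x.copy())
    return np.stack(path), cost

def mean_reverting_control(t, x):
    # Toy mean-field feedback (assumption): pull each particle toward the empirical mean.
    return -(x - x.mean(axis=0, keepdims=True))
```

In a learned solver the toy feedback would be replaced by a neural approximation of the optimal control, with the same forward loop supplying the empirical marginals that the penalties act on.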

