Understanding Student Effort Using Response-Time Propensities During Problem Solving

Pith reviewed 2026-05-12 01:55 UTC · model grok-4.3

The pith

Response-time propensities from algebra tutoring logs form stable effort measures whose link to learning efficiency depends on student proficiency.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Response-time propensities estimated via hierarchical models on step-level logs from algebra tutoring systems capture trait-like individual differences in effort beyond correctness. Slower propensities associate with greater performance improvement per completed step for higher-proficiency students, consistent with constructive processing, whereas for lower-proficiency students the relation is weak or negative, consistent with unproductive struggle or idling. These associations are strongest early in practice sequences and attenuate later in the class period.

What carries the argument

Student- and knowledge-component-level response-time propensities: adjustments to typical step timing extracted from a hierarchical model that accounts for skill difficulty and serves as a scalable proxy for trait-like effort during multi-step problem solving.
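One way to write this model down, assuming it is a Gaussian linear mixed model matching the lme4-style formula quoted from the paper elsewhere on this page (rt_log ~ 1 + (1|student) + (1|skill)). The symbols are notation chosen here for illustration, not the paper's own:

```latex
\log \mathrm{RT}_{ijt} = \beta_0 + u_i + v_j + \varepsilon_{ijt},
\qquad
u_i \sim \mathcal{N}(0, \sigma_u^2), \quad
v_j \sim \mathcal{N}(0, \sigma_v^2), \quad
\varepsilon_{ijt} \sim \mathcal{N}(0, \sigma^2)
```

Here i indexes students, j indexes skills (knowledge components), and t indexes steps. Under this reading, u_i is the student response-time propensity and v_j absorbs skill-level differences in typical step timing.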

If this is right

- Response-time propensities offer a practical signal for incorporating temporal process data into learner models beyond correctness or raw time.

- Adaptive supports can be timed and differentiated by early-session propensities and proficiency level to address emerging disengagement.

- Learning efficiency gains from slower propensities are most detectable at the start of practice sequences.

- The measure supports targeting interventions when effort is most diagnostic of persistence.

- Stability across problems allows use as an individual-differences variable in educational technology.

Where Pith is reading between the lines

- Similar hierarchical modeling of response times could be tested in other tutoring domains if difficulty adjustments are made domain-specifically.

- Real-time propensity estimates might enable dynamic problem selection or hint timing before disengagement sets in.

- Tracking changes in propensities over longer periods could reveal whether effort patterns predict broader course outcomes.

Load-bearing premise

That step-to-step response time, after adjusting for skill difficulty in the hierarchical model, primarily captures trait-like effort differences rather than momentary factors, unmodeled problem features, or idling.

What would settle it

If within-student stability of the propensities disappeared across separate tutoring sessions or if their conditional links to learning efficiency vanished after adding controls for hint usage and exact problem features, the interpretation as stable effort traits would not hold.
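A minimal sketch of the first check, assuming difficulty-adjusted log response times are already available per step and that each step is labeled with a session. The function names, the row layout, and the toy numbers are all hypothetical and are not taken from the paper:

```python
import math
from collections import defaultdict


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def session_stability(rows, session_a, session_b):
    """Correlate per-student mean adjusted log RT across two sessions.

    rows: iterable of (student, session, adjusted_log_rt), where the last
    value is assumed to be log RT with skill difficulty already removed.
    """
    sums, counts = defaultdict(float), defaultdict(int)
    for student, session, resid in rows:
        sums[(student, session)] += resid
        counts[(student, session)] += 1
    in_a = {s for (s, sess) in sums if sess == session_a}
    in_b = {s for (s, sess) in sums if sess == session_b}
    students = sorted(in_a & in_b)  # only students seen in both sessions
    a = [sums[(s, session_a)] / counts[(s, session_a)] for s in students]
    b = [sums[(s, session_b)] / counts[(s, session_b)] for s in students]
    return pearson(a, b)


# Toy data, invented for illustration only.
toy_rows = [
    ("s1", 1, -0.4), ("s1", 1, -0.2), ("s1", 2, -0.3),
    ("s2", 1, 0.0), ("s2", 1, 0.1), ("s2", 2, 0.1),
    ("s3", 1, 0.3), ("s3", 1, 0.5), ("s3", 2, 0.2), ("s3", 2, 0.4),
]
stability_r = session_stability(toy_rows, 1, 2)
print(round(stability_r, 3))
```

A correlation near zero on real data would count against the trait interpretation; a high one would be consistent with it but would not by itself rule out stable confounds such as ability.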

Figures

read the original abstract

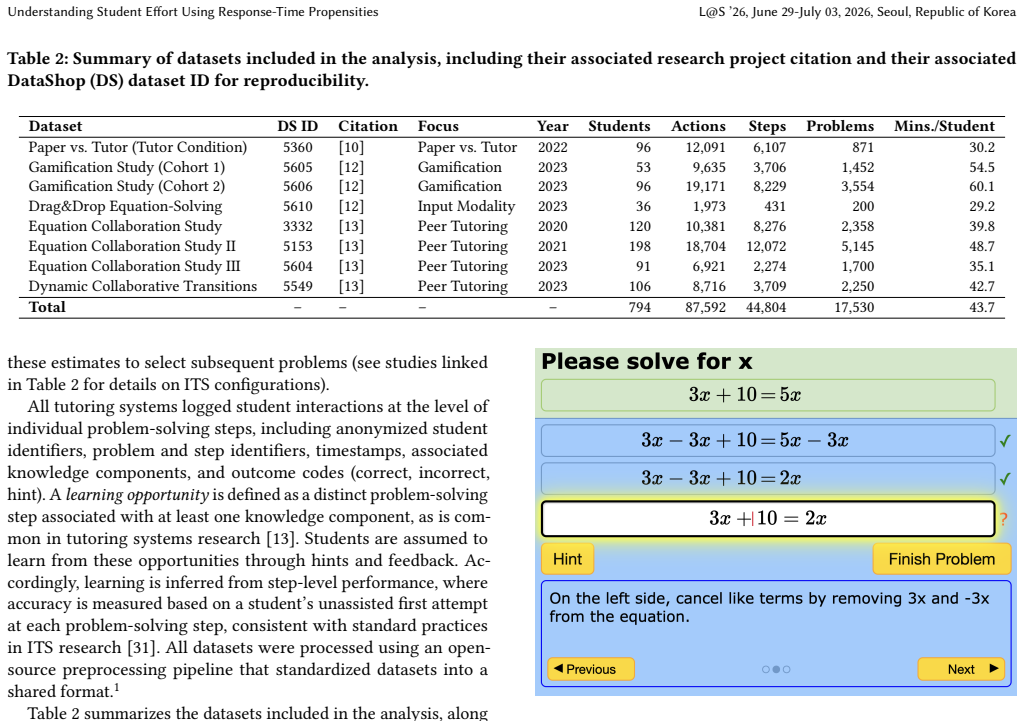

Adaptive learning systems can produce substantial learning gains, yet many students engage for too brief or too superficial a period to benefit. A central obstacle is measuring effort. Effort during multi-step problem solving is rarely directly observed, and common log-based proxies, such as time on task, cannot distinguish between a student working carefully and a student encountering a harder problem. We examine step-to-step response time as a scalable effort signal by modeling trait-like differences in students' typical response timing during tutoring (while adjusting for skill difficulty). Using step-level logs from eight classroom deployments of algebra tutoring systems (2020 to 2023) across six U.S. schools (794 students), we estimate student- and knowledge-component-level propensities using hierarchical models and relate them to learning efficiency, defined as performance improvement per completed solution step. Response-time propensities show moderate to strong stability within students, supporting their use as an individual differences measure beyond correctness. At the same time, their relationship to learning is not uniform but conditional on the learner and context. Slower propensities predict greater learning efficiency for higher-proficiency students, consistent with constructive processing, whereas for lower-proficiency students, slower propensities are weakly related or even negative, consistent with unproductive struggle or idling. These associations are strongest early in practice sequences and attenuate later in the class period, highlighting an actionable window for detecting emerging disengagement and low persistence. Overall, response-time propensities provide a practical way to incorporate temporal process data into learner models and to target adaptive supports when effort is most diagnostic.
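The idea of a difficulty-adjusted timing propensity can be sketched without a mixed-model library. The code below is a simplification and not the paper's estimator: it alternates between averaging residual log response time by skill and by student (backfitting), with no shrinkage toward zero as a hierarchical model would apply. The function name, row layout, and toy data are assumptions made for illustration:

```python
import math
from collections import defaultdict


def estimate_propensities(rows, n_iter=20):
    """Crude crossed-effects decomposition of log response time.

    rows: iterable of (student, skill, rt_seconds).
    Returns (grand_mean, student_effects, skill_effects) on the log scale.
    Unlike a hierarchical model, the effects are not shrunk.
    """
    data = [(s, k, math.log(rt)) for s, k, rt in rows]
    grand = sum(y for _, _, y in data) / len(data)
    student_eff = defaultdict(float)
    skill_eff = defaultdict(float)
    for _ in range(n_iter):
        # Update skill effects, holding student effects fixed.
        sums, counts = defaultdict(float), defaultdict(int)
        for s, k, y in data:
            sums[k] += y - grand - student_eff[s]
            counts[k] += 1
        for k in sums:
            skill_eff[k] = sums[k] / counts[k]
        # Update student effects, holding skill effects fixed.
        sums, counts = defaultdict(float), defaultdict(int)
        for s, k, y in data:
            sums[s] += y - grand - skill_eff[k]
            counts[s] += 1
        for s in sums:
            student_eff[s] = sums[s] / counts[s]
    return grand, dict(student_eff), dict(skill_eff)


# Toy data, invented for illustration: student "b" takes twice as long as
# student "a" on every skill, and the "hard" skill takes four times as long.
rows = [
    ("a", "easy", 10.0), ("a", "hard", 40.0),
    ("b", "easy", 20.0), ("b", "hard", 80.0),
]
grand, student_eff, skill_eff = estimate_propensities(rows)
print(student_eff)
```

In this toy case raw time on task would rank student "a" on the hard skill as slower than student "b" on the easy skill, while the adjusted propensities identify "b" as the consistently slower student.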

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper analyzes step-level response time logs from eight classroom deployments of algebra tutoring systems (794 students across six U.S. schools, 2020-2023). It estimates student- and KC-level response-time propensities via hierarchical models that adjust for skill difficulty, reports moderate-to-strong within-student stability of these propensities, and relates them to learning efficiency (performance gains per completed step). The key findings are conditional: slower propensities predict higher learning efficiency among higher-proficiency students but are weakly or negatively related among lower-proficiency students, with associations strongest early in practice sequences and attenuating later.

Significance. If the propensities can be shown to index stable effort rather than residual confounds, the work supplies a scalable, log-based process measure that augments correctness data in learner models for adaptive systems. The conditional proficiency and temporal effects are potentially actionable for real-time intervention design. The multi-deployment classroom dataset and focus on within-student stability are concrete strengths that support ecological validity.

major comments (3)

- [Methods (hierarchical modeling)] The hierarchical model (described in the methods) adjusts solely for KC-level skill difficulty. Without reported checks for within-KC problem-feature variation, ability-speed correlations, or transient state effects, the student propensities remain vulnerable to confounding; this directly undermines the interpretation that they primarily capture trait-like effort and therefore the differential links to learning efficiency.

- [Results (learning efficiency regressions)] The results relating propensities to learning efficiency (performance improvement per step) report conditional associations but provide no effect sizes, confidence intervals, model-fit diagnostics, or sensitivity analyses to alternative specifications. These omissions are load-bearing because the central claim of non-uniform, proficiency-moderated effects rests on the statistical robustness of those associations.

- [Results (stability analysis)] Stability of propensities within students is asserted as supporting their use as an individual-differences measure, yet no comparison to alternative effort proxies, split-half reliability, or explicit test of whether stability survives additional controls for proficiency is shown. This weakens the claim that the measure adds information beyond correctness.

minor comments (2)

- [Abstract] The abstract would be clearer if it briefly stated the random-effects structure and estimation method of the hierarchical model.

- [Figures] Figures displaying propensity distributions or regression coefficients should include error bars or credible intervals to aid interpretation of the reported associations.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments, which identify several opportunities to strengthen the clarity and robustness of our analyses. We address each major comment below and outline the revisions we will make.

Point-by-point responses

- Referee: [Methods (hierarchical modeling)] The hierarchical model (described in the methods) adjusts solely for KC-level skill difficulty. Without reported checks for within-KC problem-feature variation, ability-speed correlations, or transient state effects, the student propensities remain vulnerable to confounding; this directly undermines the interpretation that they primarily capture trait-like effort and therefore the differential links to learning efficiency.

  Authors: We agree that the primary model adjusts for KC-level difficulty and that explicit checks for additional confounds would improve interpretability. In the revision we will add (1) within-KC analyses incorporating available problem-feature metadata, (2) reported correlations between estimated propensities and proficiency to quantify ability-speed relations, and (3) a sensitivity analysis with session-level controls to address transient state effects. These will appear in an expanded Methods section and supplementary materials. We note that the observed stability of propensities across independent deployments already provides some protection against purely transient or context-specific confounds, but the additional checks will make this explicit.

  Revision: partial

- Referee: [Results (learning efficiency regressions)] The results relating propensities to learning efficiency (performance improvement per step) report conditional associations but provide no effect sizes, confidence intervals, model-fit diagnostics, or sensitivity analyses to alternative specifications. These omissions are load-bearing because the central claim of non-uniform, proficiency-moderated effects rests on the statistical robustness of those associations.

  Authors: We will revise the Results section to report standardized effect sizes for the key propensity-by-proficiency interactions, 95% confidence intervals for all coefficients, model-fit statistics (R-squared, AIC), and residual diagnostics. We will also add sensitivity analyses using alternative proficiency thresholds and model specifications (with and without random slopes). These elements will be included in the main text and a new supplementary table.

  Revision: yes

- Referee: [Results (stability analysis)] Stability of propensities within students is asserted as supporting their use as an individual-differences measure, yet no comparison to alternative effort proxies, split-half reliability, or explicit test of whether stability survives additional controls for proficiency is shown. This weakens the claim that the measure adds information beyond correctness.

  Authors: We will augment the stability analysis with split-half reliability estimates, direct comparisons of propensity stability to that of correctness-based measures and other available log proxies, and a supplementary test of stability after residualizing propensities on proficiency. These additions will demonstrate incremental validity beyond correctness while preserving the multi-deployment ecological validity of the original results.

  Revision: yes
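The threshold sensitivity analysis promised in the second response can be pictured roughly as follows. This is a simplified stand-in that computes within-group OLS slopes of learning efficiency on propensity at several proficiency cutoffs, not the interaction model the authors describe. The function names, the tuple layout, and every number in the toy data are invented for illustration and are not results from the paper:

```python
def ols_slope(xs, ys):
    """Slope of the least-squares line of ys on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def slopes_by_proficiency(students, cutoff):
    """Return (slope_high, slope_low) for students split at a cutoff.

    students: list of (proficiency, propensity, learning_efficiency).
    Each group needs at least two students with different propensities.
    """
    high = [(p, e) for prof, p, e in students if prof >= cutoff]
    low = [(p, e) for prof, p, e in students if prof < cutoff]
    slope_high = ols_slope([p for p, _ in high], [e for _, e in high])
    slope_low = ols_slope([p for p, _ in low], [e for _, e in low])
    return slope_high, slope_low


# Toy data, invented: (proficiency, propensity on log-RT scale, efficiency).
toy_students = [
    (0.2, -0.5, 0.030), (0.3, 0.0, 0.028), (0.4, 0.5, 0.020),
    (0.6, -0.5, 0.030), (0.7, 0.0, 0.040), (0.8, 0.5, 0.055),
]

# Repeat the split at several hypothetical cutoffs to see whether the
# sign pattern depends on where the proficiency line is drawn.
sensitivity = {c: slopes_by_proficiency(toy_students, c) for c in (0.35, 0.5, 0.65)}
for cutoff, (hi_slope, lo_slope) in sensitivity.items():
    print(cutoff, round(hi_slope, 4), round(lo_slope, 4))
```

If the sign pattern flipped as the cutoff moved on real data, that would suggest the moderation result depends on an arbitrary threshold, which is the concern the referee raises.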

Circularity Check

No significant circularity: propensities and learning efficiency are distinct empirical measures


The paper estimates student- and KC-level response-time propensities via hierarchical modeling of step-level logs (adjusting only for skill difficulty), then reports empirical associations between those propensities and an independently defined learning-efficiency metric (performance improvement per completed step). Stability within students is likewise an observed property of the fitted propensities, not a definitional identity. No equation reduces the reported conditional relationships (slower propensities predicting efficiency for high-proficiency students) to the propensity definition itself, no self-citation chain supplies a load-bearing uniqueness theorem, and no ansatz is smuggled in. The use of the same logs for time-based and correctness-based quantities does not create circularity when the quantities remain distinct.

Axiom & Free-Parameter Ledger

free parameters (1)

- student response-time propensity

axioms (1)

- domain assumption: Response time after difficulty adjustment primarily reflects effort or persistence

invented entities (1)

- response-time propensity: no independent evidence

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  "rt_log ~ 1 + (1|student) + (1|skill). ... correct ~ opp. + (1 + opp.|student) + (1 + opp.|skill)."

- IndisputableMonolith/Foundation/RealityFromDistinction.lean, theorem reality_from_one_distinction (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  "Response-time propensities show moderate to strong stability within students... Slower propensities predict greater learning efficiency for higher-proficiency students"

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

