
PoHAR: Understanding Hyperlocal Human Activities with Pollution Sensor Networks

Pith reviewed 2026-05-12 02:47 UTC · model grok-4.3

The pith

Distributed air quality sensors can detect specific indoor human activities on-device with over 97 percent accuracy using self-supervised grouping.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

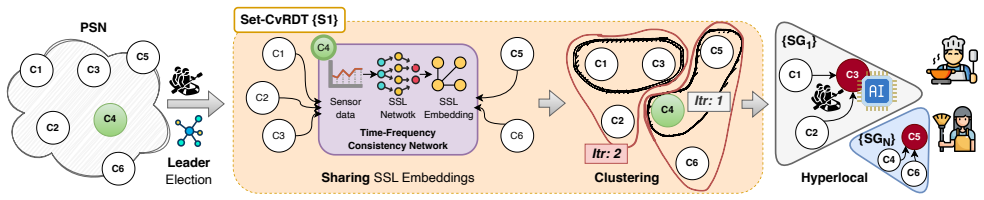

PoHAR combines three components: a conflict-free replicated data primitive for sharing sensor readings; a hierarchical clustering routine on ESP32 devices that uses a self-supervised distance metric to identify groups of sensors affected by the same activity; and a leader-based inference stage that applies off-the-shelf machine-learning classifiers to those groups. The paper reports 97.41 percent accuracy on general indoor activities and 99.68 percent on cooking, with latency below 34 microseconds.

What carries the argument

Hierarchical clustering driven by a self-supervised distance metric that groups activity-affected sensors without labeled examples, followed by leader-based inference on the resulting clusters.
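To make the grouping step concrete, here is a minimal sketch of threshold-based agglomerative clustering over sensor time series. The paper's self-supervised distance metric is not specified in this summary, so the sketch substitutes a plain correlation distance (1 minus Pearson correlation) as a stand-in. The sensor names, readings, and the `threshold` value are all hypothetical.

```python
import math

def pearson(a, b):
    """Pearson correlation of two equal-length series (0.0 if either is flat)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)

def distance(a, b):
    # Stand-in metric (assumption): NOT the paper's self-supervised distance.
    return 1.0 - pearson(a, b)

def cluster(series, threshold):
    """Single-linkage agglomerative clustering.

    Repeatedly merges the two closest clusters and stops once the closest
    pair is farther apart than `threshold`.
    """
    clusters = [[sensor_id] for sensor_id in sorted(series)]

    def linkage(c1, c2):
        return min(distance(series[i], series[j]) for i in c1 for j in c2)

    while len(clusters) > 1:
        d, i, j = min(
            (linkage(clusters[i], clusters[j]), i, j)
            for i in range(len(clusters))
            for j in range(i + 1, len(clusters))
        )
        if d > threshold:
            break
        clusters[i] = sorted(clusters[i] + clusters[j])
        del clusters[j]
    return clusters

# Hypothetical readings: two kitchen sensors see the same spike, the bedroom does not.
readings = {
    "kitchen_a": [10, 12, 40, 80, 60, 30],
    "kitchen_b": [11, 13, 38, 75, 58, 28],
    "bedroom": [10, 10, 11, 10, 9, 10],
}
groups = cluster(readings, threshold=0.2)
print(groups)
```

With these illustrative readings the two kitchen sensors end up in one group and the bedroom sensor stays on its own. Whether a correlation-style metric separates activity from ventilation effects is exactly the open question raised under the load-bearing premise.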

If this is right

- On-device inference becomes feasible for distributed low-power sensor networks with sub-34-microsecond latency.

- Cooking detection reaches 99.68 percent accuracy and general indoor activity detection reaches 97.41 percent using off-the-shelf classifiers rather than custom model architectures.

- Privacy-preserving activity monitoring scales to entire households using only air-quality hardware already deployed for pollution tracking.

- Collaborative decisions can be made locally among sensors rather than requiring a central server or cloud round-trip.
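The local, leader-based decision described in the points above can be sketched roughly as follows. This is an illustration under stated assumptions, not the paper's protocol: the election rule (lowest node id among live nodes), the two summary features, and the threshold classifier are all hypothetical placeholders for whatever PoHAR actually uses.

```python
def elect_leader(group, alive):
    """Pick the live node with the smallest id (hypothetical election rule)."""
    candidates = sorted(node for node in group if node in alive)
    return candidates[0] if candidates else None

def leader_infer(group, alive, latest, classify):
    """Have the group's leader aggregate live readings and classify them.

    Returns (leader, label); label is None if no reading is available.
    """
    leader = elect_leader(group, alive)
    if leader is None:
        return None, None
    values = [latest[n] for n in group if n in alive and n in latest]
    if not values:
        return leader, None
    # Hypothetical feature vector: mean and peak reading across the group.
    features = [sum(values) / len(values), max(values)]
    return leader, classify(features)

def toy_classifier(features):
    # Placeholder for an off-the-shelf classifier; the cutoff is made up.
    return "cooking" if features[1] > 50 else "idle"

group = ["n3", "n1", "n2"]
alive = {"n2", "n3"}                      # n1 has dropped out
latest = {"n1": 10.0, "n2": 80.0, "n3": 60.0}
leader, label = leader_infer(group, alive, latest, toy_classifier)
print(leader, label)
```

Because the election only considers live nodes, a dropped sensor simply changes which node takes the leader role, and no server round-trip is needed for the group to produce a label.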

Where Pith is reading between the lines

- The same clustering approach could be tested on other environmental signals such as temperature or humidity spikes to distinguish activities from appliance use.

- Integration with existing smart-home platforms would allow automatic responses, like ventilation adjustments, triggered directly by detected activities.

- Because the method needs no labels, it could be deployed quickly in new homes or care facilities where collecting annotated data is impractical.

- Extending the leader-election step to handle sensor failures might improve robustness in real-world deployments where individual nodes drop out.

Load-bearing premise

Changes in the sensor readings are caused mainly by the targeted human activities rather than by unrelated factors such as ventilation shifts or outside pollution, and the self-supervised metric can correctly identify the affected sensor groups from unlabeled data alone.

What would settle it

A controlled test in which specific activities occur while ventilation rates or external pollution sources are varied independently; if clustering fails to isolate the activity-affected sensors or classification accuracy falls below 90 percent, the core claim does not hold.
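One way to score such a controlled test is to break accuracy down by confounder condition and check each against the 90 percent floor. The sketch below assumes trial records of the form (condition, true activity, predicted activity); the condition names and trial outcomes are invented purely to show the bookkeeping and are not results from the paper.

```python
from collections import defaultdict

def accuracy_by_condition(trials, floor=0.90):
    """Return {condition: (accuracy, meets_floor)} for (condition, truth, predicted) trials."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for condition, truth, predicted in trials:
        total[condition] += 1
        correct[condition] += int(truth == predicted)
    report = {}
    for condition in total:
        acc = correct[condition] / total[condition]
        report[condition] = (acc, acc >= floor)
    return report

# Illustrative placeholder trials only.
trials = [
    ("windows_closed", "cooking", "cooking"),
    ("windows_closed", "cleaning", "cleaning"),
    ("windows_closed", "cooking", "cooking"),
    ("windows_closed", "idle", "idle"),
    ("windows_open", "cooking", "cooking"),
    ("windows_open", "cleaning", "idle"),
    ("windows_open", "cooking", "cooking"),
    ("windows_open", "idle", "idle"),
]
report = accuracy_by_condition(trials)
for condition, (acc, ok) in sorted(report.items()):
    print(f"{condition}: accuracy={acc:.2f} meets_floor={ok}")
```

Reporting per condition rather than a single pooled accuracy keeps a failure under one ventilation setting from being averaged away by good results elsewhere.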

Original abstract

Low-cost air quality sensors are becoming ubiquitous in our daily lives as public awareness of air pollution continues to grow, and people take measures to monitor and improve the air they breathe indoors. Besides the standard operation of these sensors, fluctuations in environmental parameters can be leveraged to understand human behavior and activities in indoor spaces. Unlike traditional audio-visual, Radio Frequency, and inertial sensors, air quality sensors are easily scalable to a household, are privacy-preserving, and more economical. Such distributed sensor networks must jointly make decisions to monitor indoor occupants for downstream smart home and healthcare applications. However, due to low processing power, memory, and energy, they often struggle to maintain distributed data consensus and identify activity-affected sensor groups for accurate on-device inference. In this paper, we propose PoHAR framework that implements: (i) a conflict-free replicated data primitive for data sharing, (ii) a hierarchical clustering for ESP32 to detect activity-affected sensor groups with a self-supervised distance metric, and (iii) a leader-based group inference with off-the-shelf ML classifiers, enabling the sensor network to collaboratively detect hyperlocal indoor activities. Our extensive experiments demonstrated on-device activity detection, achieving 97.41% accuracy for indoor activity and 99.68% for cooking activity, using off-the-shelf ML models with latency below 34 microseconds.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes the PoHAR framework for detecting hyperlocal indoor human activities via networks of low-cost air quality sensors. It introduces (i) a conflict-free replicated data primitive for distributed sensor data sharing, (ii) a hierarchical clustering algorithm running on ESP32 devices that employs a self-supervised distance metric to identify activity-affected sensor groups without requiring labels, and (iii) a leader-based inference scheme that applies off-the-shelf ML classifiers for on-device activity detection. The authors report experimental results claiming 97.41% accuracy for general indoor activities and 99.68% accuracy for cooking activities, with inference latency below 34 microseconds.

Significance. If the empirical claims can be substantiated with adequate dataset documentation, ablation studies, and controls for confounding variables, the work would represent a meaningful contribution to privacy-preserving ubiquitous sensing. It demonstrates how distributed-systems primitives (conflict-free replication and leader election) can be combined with lightweight ML to enable collaborative inference on severely resource-constrained devices, potentially enabling scalable smart-home and healthcare applications that avoid audio-visual or inertial sensors.

major comments (2)

- [Abstract / Experimental Evaluation] Abstract and Experimental Evaluation: The central performance claims of 97.41% indoor-activity accuracy and 99.68% cooking-activity accuracy are stated without any accompanying information on dataset size, number of participants or households, cross-validation strategy, baseline comparisons, or explicit handling of confounding factors (ventilation changes, external pollution, sensor drift). These omissions render the reported accuracies impossible to evaluate and directly undermine the load-bearing empirical contribution.

- [Framework Description (hierarchical clustering)] Hierarchical Clustering and Self-Supervised Distance Metric: The framework relies on a self-supervised distance metric to group sensors affected by the same activity, yet no ablation, sensitivity analysis, or comparison against ground-truth activity locations is provided to demonstrate that the metric captures activity signals rather than spurious correlations. Without such validation, the subsequent leader-based inference step cannot be shown to deliver the claimed accuracies.
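The first major comment asks for the cross-validation strategy. The manuscript's actual protocol is not given here, so the following is only one plausible option: a leave-one-household-out split, which keeps all samples from a household on the same side of the split and so avoids leakage between training and test data. The `(household, sample)` record layout and the household ids are assumptions.

```python
def leave_one_household_out(samples):
    """Yield (held_out, train_indices, test_indices), one fold per household.

    `samples` is a list of (household_id, payload) pairs.
    """
    households = sorted({household for household, _ in samples})
    for held_out in households:
        train = [i for i, (h, _) in enumerate(samples) if h != held_out]
        test = [i for i, (h, _) in enumerate(samples) if h == held_out]
        yield held_out, train, test

# Hypothetical records: household id plus an opaque feature payload.
samples = [("H1", "window_0"), ("H2", "window_1"), ("H1", "window_2")]
folds = list(leave_one_household_out(samples))
for held_out, train, test in folds:
    print(held_out, train, test)
```

An accuracy figure reported under a split like this says how well the system transfers to an unseen home, which is a stronger claim than accuracy under a random per-sample split.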

minor comments (2)

- [System Design] The description of the conflict-free replicated data primitive would benefit from a short pseudocode listing or explicit reference to the underlying CRDT operations used.

- [Figures and Experimental Setup] Figure captions and experimental-setup descriptions should explicitly state the number of sensors, sampling rates, and environmental conditions to improve reproducibility.
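As an illustration of the kind of listing the first minor comment asks for, here is a generic state-based CRDT: a last-writer-wins map from sensor id to its newest reading. The paper's own primitive is not described in this summary, so this should be read as a textbook example rather than PoHAR's design. The class name, method names, and timestamp scheme are hypothetical.

```python
class LWWReadingMap:
    """Last-writer-wins map: sensor_id -> newest (timestamp, value).

    Merging is commutative, associative, and idempotent, so replicas
    converge regardless of the order in which they exchange state.
    """

    def __init__(self):
        self.entries = {}

    def put(self, sensor_id, timestamp, value):
        candidate = (timestamp, value)
        current = self.entries.get(sensor_id)
        # Ties on timestamp are broken deterministically by the larger value.
        if current is None or candidate > current:
            self.entries[sensor_id] = candidate

    def merge(self, other):
        for sensor_id, (timestamp, value) in other.entries.items():
            self.put(sensor_id, timestamp, value)

    def snapshot(self):
        return {sid: value for sid, (_, value) in self.entries.items()}

# Two replicas receive different updates, then exchange state in both directions.
replica_a, replica_b = LWWReadingMap(), LWWReadingMap()
replica_a.put("s1", 1, 40.0)
replica_b.put("s1", 2, 55.0)
replica_b.put("s2", 1, 12.0)
replica_a.merge(replica_b)
replica_b.merge(replica_a)
print(replica_a.snapshot())
```

After the exchange both replicas hold the same view without any coordination round, which is the property that makes this family of structures attractive for memory- and energy-constrained nodes.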

Simulated Author's Rebuttal

We thank the referee for their insightful comments and the opportunity to improve our manuscript. We provide point-by-point responses to the major comments and have revised the paper accordingly to address the concerns raised.


- Referee: [Abstract / Experimental Evaluation] Abstract and Experimental Evaluation: The central performance claims of 97.41% indoor-activity accuracy and 99.68% cooking-activity accuracy are stated without any accompanying information on dataset size, number of participants or households, cross-validation strategy, baseline comparisons, or explicit handling of confounding factors (ventilation changes, external pollution, sensor drift). These omissions render the reported accuracies impossible to evaluate and directly undermine the load-bearing empirical contribution.

  Authors: We agree with the referee that the abstract and the Experimental Evaluation section in the submitted manuscript did not include adequate details on the dataset, evaluation protocol, baselines, and confounding factors. This was an oversight in the presentation. We have revised the manuscript to add a new subsection titled 'Experimental Setup and Dataset' that documents the data collection process, including the number of households and participants involved, the duration of experiments, the cross-validation strategy employed, and comparisons with baseline methods. Additionally, we have included a discussion on how confounding factors like ventilation changes, external pollution, and sensor drift were mitigated or accounted for in the experiments. These revisions provide the necessary context to evaluate the reported accuracy figures.

  Revision: yes

- Referee: [Framework Description (hierarchical clustering)] Hierarchical Clustering and Self-Supervised Distance Metric: The framework relies on a self-supervised distance metric to group sensors affected by the same activity, yet no ablation, sensitivity analysis, or comparison against ground-truth activity locations is provided to demonstrate that the metric captures activity signals rather than spurious correlations. Without such validation, the subsequent leader-based inference step cannot be shown to deliver the claimed accuracies.

  Authors: We thank the referee for highlighting this important aspect of the hierarchical clustering approach. The original manuscript introduced the self-supervised distance metric but indeed lacked explicit ablation studies, sensitivity analyses, or direct comparisons to ground-truth sensor groupings. We have addressed this by adding an 'Ablation and Validation' subsection in the revised manuscript. This includes ablation experiments removing or altering the self-supervised metric, sensitivity analysis on key parameters such as the number of clusters and distance thresholds, and a validation against ground-truth activity locations derived from controlled experiments where activity locations were known. These additions confirm that the metric identifies activity-affected groups effectively and supports the overall accuracy claims.

  Revision: yes
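A validation against ground-truth groupings, as discussed in the second exchange, needs some agreement score between predicted and known sensor groups. The rebuttal does not say which score is used; the sketch below uses the Rand index as one common choice, assuming both groupings cover the same set of at least two sensors. The sensor ids and groupings are made up.

```python
from itertools import combinations

def rand_index(predicted, truth):
    """Fraction of sensor pairs on which two groupings agree.

    A pair counts as agreement when both groupings put the two sensors
    together, or both put them apart.
    """
    def labels(groups):
        return {sensor: idx for idx, group in enumerate(groups) for sensor in group}

    p, t = labels(predicted), labels(truth)
    pairs = list(combinations(sorted(t), 2))
    agree = sum((p[a] == p[b]) == (t[a] == t[b]) for a, b in pairs)
    return agree / len(pairs)

# Hypothetical groupings over four sensors.
predicted = [["a", "b"], ["c", "d"]]
truth = [["a", "b", "c"], ["d"]]
score = rand_index(predicted, truth)
print(score)
```

A score of 1.0 means the clustering reproduces the known activity-affected groups exactly. Sweeping the distance threshold and plotting this score would be one simple form of the sensitivity analysis the referee requests.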

Circularity Check

No significant circularity; empirical claims rest on experiments, not self-referential derivations


The paper introduces the PoHAR framework with three components: a conflict-free data primitive, hierarchical clustering via a self-supervised distance metric on ESP32 devices, and leader-based inference using off-the-shelf ML models. Performance figures (97.41% indoor activity accuracy, 99.68% cooking activity accuracy, sub-34μs latency) are presented as outcomes of experiments on sensor networks rather than any closed-form derivation, prediction, or first-principles result. No equations, fitted parameters renamed as predictions, or self-citation chains that bear the central claim are present in the provided text. The self-supervised metric is a design choice for label-free grouping and does not reduce the reported accuracies to the metric's own definition by construction. The central claims therefore stand or fall on the experimental evidence, not on any circular derivation.


