Outlier-Robust Diffusion Solvers for Inverse Problems

Pith reviewed 2026-05-12 04:22 UTC · model grok-4.3

The pith

Diffusion models for inverse problems become robust to outliers by explicitly estimating noise and using Huber-loss reweighted least squares.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

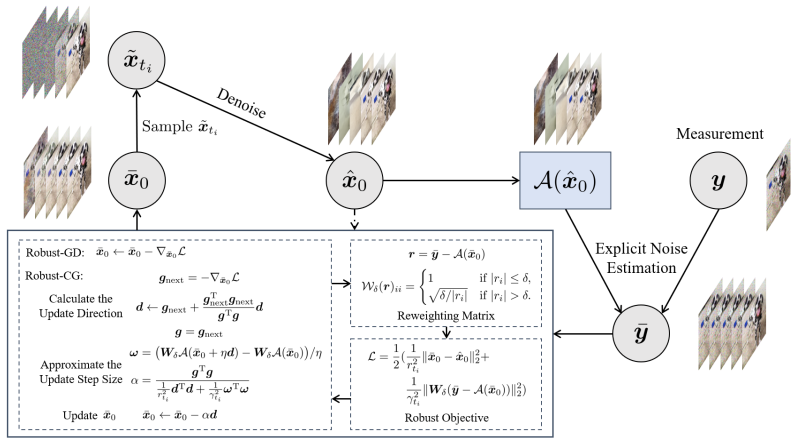

By first estimating noise to clean the measurements and then optimizing a Huber-loss-based iteratively reweighted least squares objective that incorporates the diffusion prior, the method produces solutions that remain accurate even when outliers are present in the data.

What carries the argument

A Huber-loss iteratively reweighted least squares (IRLS) objective, applied after explicit noise estimation and solved by gradient-descent or conjugate-gradient steps while respecting the diffusion prior.
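As a rough illustration of the reweighting idea, here is a minimal sketch. It assumes a linear forward operator `A` and replaces the diffusion prior with a simple quadratic pull toward `x_prior` weighted by `lam`; both are stand-ins chosen for this example, not the paper's formulation, and the names `huber_weights` and `huber_irls` are hypothetical.

```python
import numpy as np

def huber_weights(residual, delta):
    """IRLS weights for the Huber loss: 1 inside the threshold, delta/|r| outside."""
    abs_r = np.abs(residual)
    return np.where(abs_r <= delta, 1.0, delta / np.maximum(abs_r, 1e-12))

def huber_irls(A, y, x_prior, delta=1.0, lam=1e-2, n_iters=20):
    """Minimise sum_i huber(y_i - (A x)_i) + (lam / 2) * ||x - x_prior||^2 by IRLS.

    x_prior and lam are hypothetical stand-ins for the diffusion prior.
    """
    x = x_prior.copy()
    n = A.shape[1]
    for _ in range(n_iters):
        w = huber_weights(y - A @ x, delta)
        # Weighted normal equations of the current quadratic surrogate.
        lhs = A.T @ (w[:, None] * A) + lam * np.eye(n)
        rhs = A.T @ (w * y) + lam * x_prior
        x = np.linalg.solve(lhs, rhs)
    return x

# Toy problem: 10% of the measurements carry a gross offset.
rng = np.random.default_rng(0)
A = rng.standard_normal((200, 10))
x_true = rng.standard_normal(10)
y = A @ x_true + 0.01 * rng.standard_normal(200)
outlier_idx = rng.choice(200, size=20, replace=False)
y[outlier_idx] += 20.0

x_ls = np.linalg.lstsq(A, y, rcond=None)[0]            # non-robust baseline
x_huber = huber_irls(A, y, x_prior=np.zeros(10), delta=0.1)
err_ls = np.linalg.norm(x_ls - x_true)
err_huber = np.linalg.norm(x_huber - x_true)
```

Measurements with residuals above `delta` keep only a bounded influence on the fit, which is why the reweighted solution should be expected to sit closer to `x_true` than the plain least-squares one in this toy setting.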

If this is right

- The approach applies to both linear and nonlinear inverse problems.

- Conjugate gradient solving removes the need for careful learning-rate tuning required by plain gradient descent.

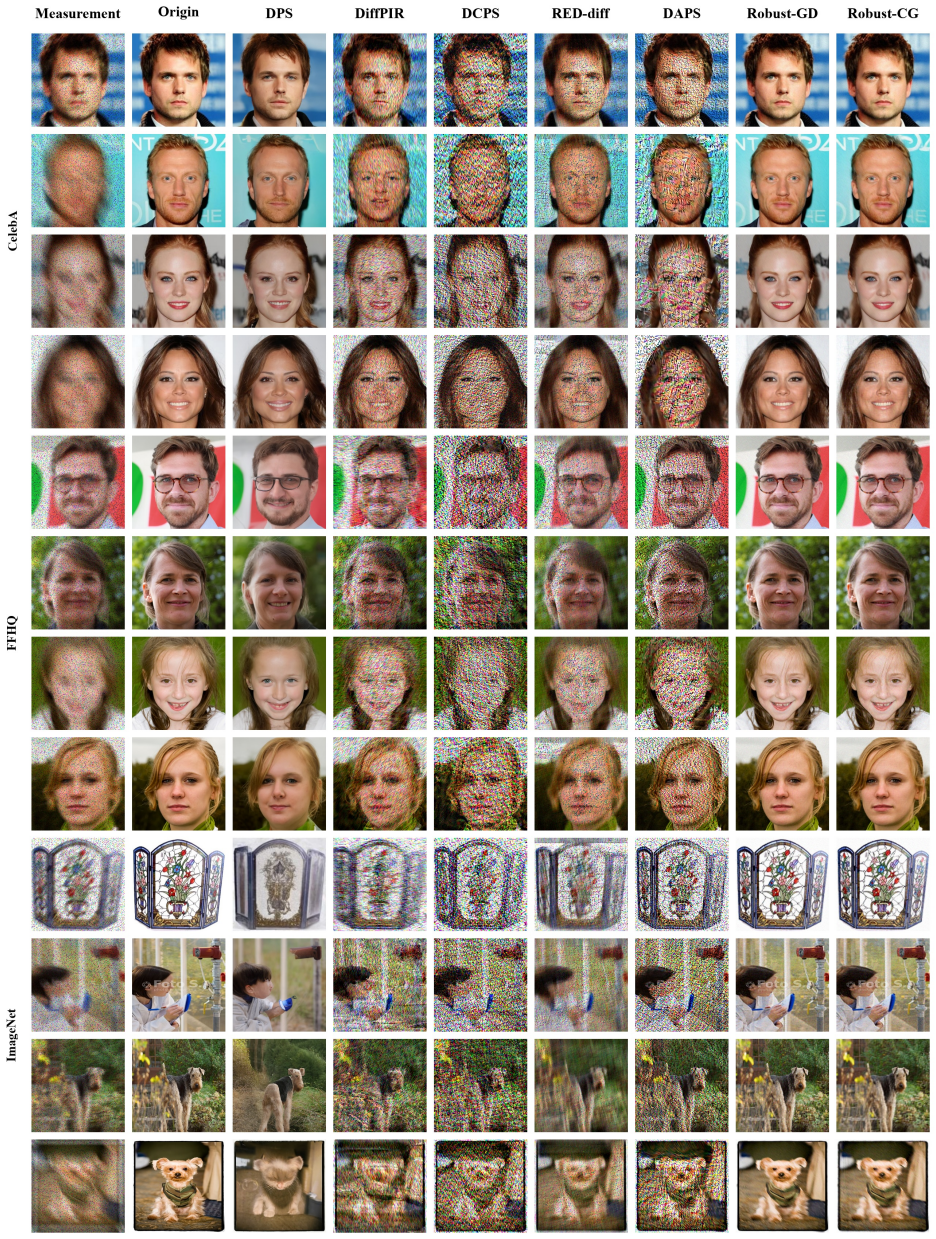

- Extensive tests on multiple image datasets show the method outperforming recent diffusion-model methods in most outlier conditions.

- Robustness holds under varying outlier strengths and task types.
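The point about conjugate gradient and learning rates can be made concrete with a small sketch. It assumes the reweighted subproblem is a symmetric positive definite linear system of the form `(A^T W A + lam I) x = A^T W y + lam x_prior`; the weights `w`, the coupling `lam`, and the function name `conjugate_gradient` are illustrative assumptions, and the paper's "efficient strategy for updating" is not reproduced here.

```python
import numpy as np

def conjugate_gradient(apply_H, b, x0, n_iters=50, tol=1e-10):
    """Solve H x = b for symmetric positive definite H given only as a matvec."""
    x = x0.copy()
    r = b - apply_H(x)
    p = r.copy()
    rs_old = r @ r
    for _ in range(n_iters):
        if np.sqrt(rs_old) < tol:
            break
        Hp = apply_H(p)
        alpha = rs_old / (p @ Hp)  # exact step along p: no learning rate to tune
        x = x + alpha * p
        r = r - alpha * Hp
        rs_new = r @ r
        p = r + (rs_new / rs_old) * p
        rs_old = rs_new
    return x

rng = np.random.default_rng(1)
A = rng.standard_normal((100, 8))
y = rng.standard_normal(100)
w = rng.uniform(0.1, 1.0, size=100)  # stand-in IRLS weights
lam, x_prior = 1e-2, np.zeros(8)

# Matrix-free application of H = A^T W A + lam I.
apply_H = lambda v: A.T @ (w * (A @ v)) + lam * v
b = A.T @ (w * y) + lam * x_prior
x_cg = conjugate_gradient(apply_H, b, x0=x_prior)

# Dense reference solution, feasible only because the toy problem is tiny.
H = A.T @ (w[:, None] * A) + lam * np.eye(8)
x_direct = np.linalg.solve(H, b)
```

The step size `alpha` is computed in closed form from the current residual and search direction, which is the sense in which CG sidesteps the tuning that a fixed-step gradient descent would need.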

Where Pith is reading between the lines

- The same noise-estimation plus reweighting pattern could be tried with other generative priors besides diffusion models.

- In applications such as medical or astronomical imaging the method may reduce the amount of manual data cleaning needed before inversion.

- If the diffusion prior itself is weak on the target domain, the robustness gains may shrink even when the reweighting works as intended.

Load-bearing premise

The explicit noise estimation step must separate outliers and noise from the true signal without introducing new distortions that the diffusion model cannot correct.

What would settle it

A test set of synthetic inverse problems with precisely known outlier locations and magnitudes where the method's reconstructions show higher error or visible artifacts than a non-robust diffusion baseline.
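One way such a test set could be built is sketched below. It assumes a random linear forward operator and sign-randomised outliers of fixed magnitude; the helper names (`make_outlier_problem`, `relative_error`, `solve_ls`, `solve_oracle`) are hypothetical, and the "oracle" solver that discards the known outlier positions is only a reference point, not a method from the paper.

```python
import numpy as np

def make_outlier_problem(m=300, n=20, outlier_frac=0.1, magnitude=10.0,
                         sigma=0.01, seed=0):
    """Linear problem y = A x + noise + outliers with a known outlier mask."""
    rng = np.random.default_rng(seed)
    A = rng.standard_normal((m, n))
    x_true = rng.standard_normal(n)
    mask = np.zeros(m, dtype=bool)
    n_out = int(round(outlier_frac * m))
    mask[rng.choice(m, size=n_out, replace=False)] = True
    signs = rng.choice([-1.0, 1.0], size=m)
    y = A @ x_true + sigma * rng.standard_normal(m) + magnitude * signs * mask
    return A, y, x_true, mask

def relative_error(x_hat, x_true):
    return np.linalg.norm(x_hat - x_true) / np.linalg.norm(x_true)

def solve_ls(A, y, mask):
    """Non-robust baseline: ordinary least squares on all measurements."""
    return np.linalg.lstsq(A, y, rcond=None)[0]

def solve_oracle(A, y, mask):
    """Reference point: least squares with the known outliers removed."""
    keep = ~mask
    return np.linalg.lstsq(A[keep], y[keep], rcond=None)[0]

A, y, x_true, mask = make_outlier_problem()
scores = {
    name: relative_error(solver(A, y, mask), x_true)
    for name, solver in [("least_squares", solve_ls), ("oracle", solve_oracle)]
}
```

Any candidate solver with the same `(A, y, mask)` signature can be dropped into the `scores` comparison; a robust method landing worse than `least_squares` on such data would be the kind of evidence described above.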

Original abstract

Methods based on diffusion models (DMs) for solving inverse problems (IPs) have recently achieved remarkable performance. However, DM-based methods typically struggle against outliers, which are common in real-world measurements. In this work, to tackle IPs with outliers, we first refine the measurement via explicit noise estimation to mitigate the effect of noise. Subsequently, we formulate an iteratively reweighted least squares objective based on the Huber loss to address the outliers. We propose a method utilizing gradient descent to approximately solve the corresponding optimization problem for the robust objective. To avoid delicate tuning of the learning rate required by the gradient descent method, we further employ the conjugate gradient method with an efficient strategy for updating. Extensive experiments on multiple image datasets for linear and nonlinear tasks under various conditions demonstrate that our proposed methods exhibit robustness to outliers and outperform recent DM-based methods in most cases.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes outlier-robust diffusion-model solvers for inverse problems. It first applies explicit noise estimation to refine measurements and mitigate noise effects, then formulates an iteratively reweighted least squares (IRLS) objective using the Huber loss to handle outliers. The resulting optimization is solved approximately via gradient descent or, to avoid learning-rate tuning, via conjugate gradient with an efficient update strategy. Extensive experiments across multiple image datasets, covering linear and nonlinear tasks under varied conditions, are reported to show improved robustness to outliers and outperformance over recent DM-based methods in most cases.

Significance. If the noise-estimation step isolates outliers without distorting the signal recovered by the diffusion prior and the Huber-loss IRLS remains compatible with the diffusion model, the approach offers a practical, parameter-light extension of existing DM-IP frameworks. The dual solver options (GD and CG) and the breadth of experiments on linear/nonlinear tasks across datasets constitute a useful empirical contribution for real-world inverse problems with contaminated measurements.

minor comments (3)

- [Abstract] The abstract states that the methods 'outperform recent DM-based methods in most cases' but provides no quantitative metrics, specific baselines, or outlier-level details; a summary table or explicit comparison metrics should appear in the experimental section.

- [Methods] The 'efficient strategy for updating' the conjugate-gradient solver is mentioned but not specified; pseudocode or the exact update rule (e.g., how the weighting matrix is refreshed inside the CG loop) should be provided in the methods section to ensure reproducibility.

- [Experiments] The Huber-loss threshold is listed as a free parameter; its selection procedure, sensitivity analysis, or default value across experiments should be stated explicitly.
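For context on the threshold comment, a common default from classical robust statistics (not taken from the paper) sets the Huber threshold to about 1.345 times a robust scale estimate, with the scale obtained from the median absolute deviation (MAD). The sketch below illustrates that heuristic only; `huber_threshold_from_mad` is a hypothetical helper name.

```python
import numpy as np

def huber_threshold_from_mad(residual, c=1.345):
    """Heuristic threshold: c times a MAD-based estimate of the noise scale.

    The factor 1.4826 makes the MAD consistent with the standard deviation
    under Gaussian noise; c = 1.345 is a conventional tuning constant.
    """
    med = np.median(residual)
    mad = np.median(np.abs(residual - med))
    sigma_hat = 1.4826 * mad
    return c * sigma_hat

# Unit-variance residuals with 5% gross outliers: the MAD barely moves.
rng = np.random.default_rng(0)
r = rng.standard_normal(10_000)
r[:500] += 50.0
delta = huber_threshold_from_mad(r)
```

Because the MAD is itself insensitive to a minority of gross errors, the resulting `delta` stays close to the value it would take on clean residuals, which is one reason a sensitivity analysis around such a default is a reasonable thing to ask for.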

Simulated Author's Rebuttal

We thank the referee for the positive and accurate summary of our manuscript, the assessment of its significance, and the recommendation for minor revision. No specific major comments were raised.

Circularity Check

No significant circularity detected

The paper presents a direct integration of standard robust statistics tools (explicit noise estimation followed by Huber-loss-based IRLS solved via gradient descent or conjugate gradient) into an existing diffusion-model inverse-problem solver. No equations or steps reduce the claimed robustness or performance gains to a fitted parameter, self-citation chain, or definitional tautology. The method is described as an application of known techniques rather than a self-derived result, and the central claims rest on experimental validation across datasets rather than internal construction from the inputs.

Axiom & Free-Parameter Ledger

free parameters (1)

- Huber loss threshold

axioms (2)

- domain assumption: Diffusion models provide a useful prior for solving linear and nonlinear inverse problems via guidance or sampling.

- standard math: Conjugate gradient converges reliably on the quadratic subproblems arising from IRLS.
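To make the free parameter and the second axiom concrete, the following worked equations sketch how a Huber loss leads to quadratic subproblems. They assume a linear forward operator $A$ and a quadratic coupling term with weight $\lambda$ toward a prior estimate $\bar{x}$; these symbols are illustrative assumptions, not notation confirmed by the paper.

```latex
% Huber loss with threshold \delta (the free parameter)
\rho_\delta(r) =
\begin{cases}
  \tfrac{1}{2} r^2, & |r| \le \delta, \\
  \delta |r| - \tfrac{1}{2}\delta^2, & |r| > \delta.
\end{cases}

% IRLS weight at the current residual r_i^{(k)} = y_i - (A x^{(k)})_i
w_i^{(k)} = \frac{\rho_\delta'\bigl(r_i^{(k)}\bigr)}{r_i^{(k)}}
          = \min\!\left(1, \frac{\delta}{\bigl|r_i^{(k)}\bigr|}\right)

% Quadratic subproblem (\lambda and \bar{x} are assumed stand-ins for the prior)
x^{(k+1)} = \arg\min_x \; \tfrac{1}{2} \sum_i w_i^{(k)} \bigl(y_i - (A x)_i\bigr)^2
            + \tfrac{\lambda}{2} \lVert x - \bar{x} \rVert_2^2

% Normal equations, with W^{(k)} = \mathrm{diag}(w_i^{(k)})
\bigl(A^\top W^{(k)} A + \lambda I\bigr)\, x^{(k+1)} = A^\top W^{(k)} y + \lambda \bar{x}
```

Since every weight is positive and $\lambda > 0$, the system matrix is symmetric positive definite, which is the setting in which conjugate gradient is guaranteed to converge.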

Reference graph

Works this paper leans on

- [1] Yochai Blau and Tomer Michaeli. The perception-distortion tradeoff. In CVPR, 2018.

- [2] Emmanuel J Candès and Terence Tao. Decoding by linear programming. IEEE Transactions on Information Theory,

- [3] Liang Chen, Faming Fang, Jiawei Zhang, Jun Liu, and Guixu Zhang. OID: Outlier identifying and discarding in blind image deblurring. In ECCV, 2020.

- [4] Xin Chen, Gillian Dobbie, Xinyu Wang, Feng Liu, Di Wang, and Jingfeng Zhang. Robust learning of diffusion models with extremely noisy conditions. arXiv preprint arXiv:2510.10149, 2025.

- [5] Sunghyun Cho, Jue Wang, and Seungyong Lee. Handling outliers in non-blind image deconvolution. In ICCV, 2011.

- [6] Hyungjin Chung, Eun Sun Lee, and Jong Chul Ye. MR image denoising and super-resolution using regularized reverse diffusion. IEEE Transactions on Medical Imaging, 2022.

- [7] Hyungjin Chung, Byeongsu Sim, Dohoon Ryu, and Jong Chul Ye. Improving diffusion models for inverse problems using manifold constraints. In NeurIPS, 2022.

- [8] Hyungjin Chung, Jeongsol Kim, Sehui Kim, and Jong Chul Ye. Parallel diffusion models of operator and image for blind inverse problems. In CVPR, 2023.

- [9] Hyungjin Chung, Jeongsol Kim, Michael T Mccann, Marc L Klasky, and Jong Chul Ye. Diffusion posterior sampling for general noisy inverse problems. In ICLR, 2023.

- [10] Ciprian Corneanu, Raghudeep Gadde, and Aleix M Martinez. LatentPaint: Image inpainting in latent space with diffusion models. In CVPR, 2024.

- [11] Arnak Dalalyan and Philip Thompson. Outlier-robust estimation of a sparse linear model using ℓ1-penalized Huber’s M-estimator. In NeurIPS, 2019.

- [12] Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. ImageNet: A large-scale hierarchical image database. In CVPR, 2009.

- [13] Jiangxin Dong and Jinshan Pan. Deep outlier handling for image deblurring. IEEE Transactions on Image Processing,

- [14] Jiangxin Dong, Jinshan Pan, Zhixun Su, and Ming-Hsuan Yang. Blind image deblurring with outlier handling. In ICCV, 2017.

- [15] Hongkun Dou, Zeyu Li, Jinyang Du, Lijun Yang, Wen Yao, and Yue Deng. Hybird regularization improves diffusion-based inverse problem solving. In ICLR, 2025.

- [16] Zehao Dou and Yang Song. Diffusion posterior sampling for linear inverse problem solving: A filtering perspective. In ICLR, 2024.

- [17] Tommaso d’Orsi, Gleb Novikov, and David Steurer. Consistent regression when oblivious outliers overwhelm. In ICML, 2021.

- [18] Simon Foucart and Holger Rauhut. A mathematical introduction to compressive sensing. Springer New York,

- [19] Sicheng Gao, Xuhui Liu, Bohan Zeng, Sheng Xu, Yanjing Li, Xiaoyan Luo, Jianzhuang Liu, Xiantong Zhen, and Baochang Zhang. Implicit diffusion models for continuous super-resolution. In CVPR, 2023.

- [20] Martin Heusel, Hubert Ramsauer, Thomas Unterthiner, Bernhard Nessler, and Sepp Hochreiter. GANs trained by a two time-scale update rule converge to a local Nash equilibrium. In NeurIPS, 2017.

- [21] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. In NeurIPS, 2020.

- [22] Ajil Jalal, Marius Arvinte, Giannis Daras, Eric Price, Alexandros G Dimakis, and Jon Tamir. Robust compressed sensing MRI with deep generative priors. In NeurIPS, 2021.

- [23] Yazid Janati, Badr Moufad, Alain Durmus, Eric Moulines, and Jimmy Olsson. Divide-and-conquer posterior sampling for denoising diffusion priors. In NeurIPS, 2024.

- [24] Xianzhen Jiang and Jinbao Jian. Improved Fletcher–Reeves and Dai–Yuan conjugate gradient methods with the strong Wolfe line search. Journal of Computational and Applied Mathematics, 2019.

- [25] Tero Karras, Samuli Laine, and Timo Aila. A style-based generator architecture for generative adversarial networks. In CVPR, 2019.

- [26] Tero Karras, Miika Aittala, Timo Aila, and Samuli Laine. Elucidating the design space of diffusion-based generative models. In NeurIPS, 2022.

- [27] Bahjat Kawar, Michael Elad, Stefano Ermon, and Jiaming Song. Denoising diffusion restoration models. In NeurIPS,

- [28] Carl T Kelley. Iterative methods for linear and nonlinear equations. SIAM, 1995.

- [29] Diederik Kingma, Tim Salimans, Ben Poole, and Jonathan Ho. Variational diffusion models. In NeurIPS, 2021.

- [30] Christian Kümmerle, Claudio Mayrink Verdun, and Dominik Stöger. Iteratively reweighted least squares for basis pursuit with global linear convergence rate. In NeurIPS, 2021.

- [31] Pierre Laforgue, Guillaume Staerman, and Stephan Clémençon. Generalization bounds in the presence of outliers: A median-of-means study. In ICML, 2021.

- [32] Ji Li and Chao Wang. Integrating reweighted least squares with plug-and-play diffusion priors for noisy image restoration. In AAAI, 2026.

- [33] Xiang Li, Soo Min Kwon, Shijun Liang, Ismail R Alkhouri, Saiprasad Ravishankar, and Qing Qu. Decoupled data consistency with diffusion purification for image restoration. arXiv preprint arXiv:2403.06054, 2024.

- [34] Liu Liu, Tianyang Li, and Constantine Caramanis. High dimensional robust M-estimation: Arbitrary corruption and heavy tails. arXiv preprint arXiv:1901.08237, 2019.

- [35] Liu Liu, Yanyao Shen, Tianyang Li, and Constantine Caramanis. High dimensional robust sparse regression. In ICAIS,

- [36] Ziwei Liu, Ping Luo, Xiaogang Wang, and Xiaoou Tang. Deep learning face attributes in the wild. In ICCV, 2015.

- [37] Po-Ling Loh and Martin J Wainwright. Regularized M-estimators with nonconvexity: Statistical and algorithmic theory for local optima. 2015.

- [38] Cheng Lu, Yuhao Zhou, Fan Bao, Jianfei Chen, Chongxuan Li, and Jun Zhu. DPM-Solver: A fast ODE solver for diffusion probabilistic model sampling in around 10 steps. In NeurIPS, 2022.

- [39] Cheng Lu, Yuhao Zhou, Fan Bao, Jianfei Chen, Chongxuan Li, and Jun Zhu. DPM-Solver++: Fast solver for guided sampling of diffusion probabilistic models. arXiv preprint arXiv:2211.01095, 2022.

- [40] Andreas Lugmayr, Martin Danelljan, Andres Romero, Fisher Yu, Radu Timofte, and Luc Van Gool. Repaint: Inpainting using denoising diffusion probabilistic models. In CVPR,

- [41] Morteza Mardani, Jiaming Song, Jan Kautz, and Arash Vahdat. A variational perspective on solving inverse problems with diffusion models. In ICLR, 2023.

- [42] James Martens et al. Deep learning via hessian-free optimization. In ICML, 2010.

- [43] Eloi Moliner, Jaakko Lehtinen, and Vesa Välimäki. Solving audio inverse problems with a diffusion model. In ICASSP,

- [44] Badr Moufad, Yazid Janati, Lisa Bedin, Alain Durmus, Randal Douc, Eric Moulines, and Jimmy Olsson. Variational diffusion posterior sampling with midpoint guidance. In ICLR,

- [45] Naoki Murata, Koichi Saito, Chieh-Hsin Lai, Yuhta Takida, Toshimitsu Uesaka, Yuki Mitsufuji, and Stefano Ermon. GibbsDDRM: A partially collapsed Gibbs sampler for solving blind inverse problems with denoising diffusion restoration. In ICML, 2023.

- [46] Jorge Nocedal and Stephen J Wright. Numerical optimization. Springer, 2006.

- [47] Jinshan Pan, Zhouchen Lin, Zhixun Su, and Ming-Hsuan Yang. Robust kernel estimation with outliers handling for image deblurring. In CVPR, 2016.

- [48] Mengwei Ren, Mauricio Delbracio, Hossein Talebi, Guido Gerig, and Peyman Milanfar. Multiscale structure guided diffusion for image deblurring. In CVPR, 2023.

- [49] Chitwan Saharia, William Chan, Huiwen Chang, Chris Lee, Jonathan Ho, Tim Salimans, David Fleet, and Mohammad Norouzi. Palette: Image-to-image diffusion models. In SIGGRAPH, 2022.

- [50] Koichi Saito, Naoki Murata, Toshimitsu Uesaka, Chieh-Hsin Lai, Yuhta Takida, Takao Fukui, and Yuki Mitsufuji. Unsupervised vocal dereverberation with diffusion-based generative models. In ICASSP, 2023.

- [51] Yash Sanghvi, Yiheng Chi, and Stanley H Chan. Kernel diffusion: An alternate approach to blind deconvolution. In ECCV, 2025.

- [52] Shuyao Shang, Zhengyang Shan, Guangxing Liu, LunQian Wang, XingHua Wang, Zekai Zhang, and Jinglin Zhang. ResDiff: Combining CNN and diffusion model for image super-resolution. In AAAI, 2024.

- [53] Yanyao Shen and Sujay Sanghavi. Learning with bad training data via iterative trimmed loss minimization. In ICML,

- [54] Shirin Shoushtari, Edward P Chandler, Yuanhao Wang, M Salman Asif, and Ulugbek S Kamilov. Unsupervised detection of distribution shift in inverse problems using diffusion models. arXiv preprint arXiv:2505.11482, 2025.

- [55] Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. In ICML, 2015.

- [56] Bowen Song, Soo Min Kwon, Zecheng Zhang, Xinyu Hu, Qing Qu, and Liyue Shen. Solving inverse problems with latent diffusion models via hard data consistency. In ICLR,

- [57] Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models. In ICLR, 2021.

- [58] Jiaming Song, Arash Vahdat, Morteza Mardani, and Jan Kautz. Pseudoinverse-guided diffusion models for inverse problems. In ICLR, 2023.

- [59] Lingfei Song and Hua Huang. Robust image restoration with an adaptive Huber function based fidelity. International Journal of Computer Vision, 2024.

- [60] Yang Song, Jascha Sohl-Dickstein, Diederik P Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole. Score-based generative modeling through stochastic differential equations. In ICLR, 2021.

- [61] Yang Song, Liyue Shen, Lei Xing, and Stefano Ermon. Solving inverse problems in medical imaging with score-based generative models. In ICLR, 2022.

- [62] Phong Tran, Anh Tuan Tran, Quynh Phung, and Minh Hoai. Explore image deblurring via encoded blur kernel space. In CVPR, 2021.

- [63] Sean Twomey. Introduction to the mathematics of inversion in remote sensing and indirect measurements. Courier Dover Publications, 2019.

- [64] Hengkang Wang, Xu Zhang, Taihui Li, Yuxiang Wan, Tiancong Chen, and Ju Sun. DMPlug: A plug-in method for solving inverse problems with diffusion models. In NeurIPS,

- [65] Yinhuai Wang, Jiwen Yu, and Jian Zhang. Zero-shot image restoration using denoising diffusion null-space model. In ICLR, 2023.

- [66] Ziyu Wang, Bin Dai, David Wipf, and Jun Zhu. Further analysis of outlier detection with deep generative models. In NeurIPS, 2020.

- [67] Yan Wu, Mihaela Rosca, and Timothy Lillicrap. Deep compressed sensing. In ICML, 2019.

- [68] Bingliang Zhang, Wenda Chu, Julius Berner, Chenlin Meng, Anima Anandkumar, and Yang Song. Improving diffusion inverse problem solving with decoupled noise annealing. In CVPR, 2025.

- [69] Richard Zhang, Phillip Isola, Alexei A Efros, Eli Shechtman, and Oliver Wang. The unreasonable effectiveness of deep features as a perceptual metric. In CVPR, 2018.

- [70] Wenliang Zhao, Lujia Bai, Yongming Rao, Jie Zhou, and Jiwen Lu. UniPC: A unified predictor-corrector framework for fast sampling of diffusion models. In NeurIPS, 2023.

- [71] Yang Zheng, Wen Li, and Zhaoqiang Liu. Integrating intermediate layer optimization and projected gradient descent for solving inverse problems with diffusion models. In ICML, 2025.

- [72] Yuanzhi Zhu, Kai Zhang, Jingyun Liang, Jiezhang Cao, Bihan Wen, Radu Timofte, and Luc Van Gool. Denoising diffusion models for plug-and-play image restoration. In CVPR,

Supplementary Material

A. Comparison with IRLS-PnPDP

Both our methods and the recent work, IRLS-PnPDP [32], employ the well-established iteratively reweighted least squares (IRLS) strategy to address outlier problems in IPs. Our methods leverage this strategy to mitigate outliers within the ...
