Recognition: no theorem link

A Scalable and Unified Framework to Weighted Rank Aggregation

Pith reviewed 2026-05-12 04:12 UTC · model grok-4.3

The pith

A small subset of input rankings contains a local one-median that approximates the global one-median for Ulam, footrule, Hamming, and Kendall-tau distances and their weighted versions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that a key structural property holds across the listed distance measures: the one-median computed on a carefully chosen small subset of the input rankings approximates the global one-median to within a constant factor. This property directly yields a unified framework for weighted rank aggregation and, when instantiated in the MPC model, produces (2-α)-approximation algorithms that run in a constant number of rounds while using local memory sublinear in the number of candidates and total memory linear or near-linear in the number of candidates.

What carries the argument

The structural property that the local one-median on a small subset of input rankings approximates the global one-median; this property is shown to hold for Ulam, footrule, Hamming, and Kendall-tau distances (and weights) and is used to design the MPC algorithms.

If this is right

- A (2-α)-approximation to the Ulam 1-median is obtained in a constant number of MPC rounds with local memory sublinear in n and total memory near-linear in n.

- Analogous (2-ζ)-approximation algorithms exist for Spearman's footrule and for the element-weighted versions of Hamming and Kendall-tau distances under the same MPC resource bounds.

- An improved 1.968-approximation algorithm for the Ulam distance extends immediately to the weighted setting.

- The same structural property supplies a general framework that applies uniformly to all four distance measures and their weighted variants.

Where Pith is reading between the lines

- If the same subset property holds for other natural ranking distances, the framework would immediately supply MPC algorithms for those measures as well.

- The constant-round MPC guarantees suggest that the resulting algorithms can be implemented in distributed systems where each machine sees only a vanishing fraction of the candidate set.

- The subset-selection idea may transfer to related median problems on permutations or on other metric spaces arising in voting and preference aggregation.

Load-bearing premise

The local one-median on a small subset of the input rankings approximates the global one-median for each of the distance measures considered.

What would settle it

A concrete collection of rankings over a moderate number of candidates for which every small subset's local one-median has total distance strictly larger than (2-α) times the optimal global one-median under Ulam distance.

Figures

read the original abstract

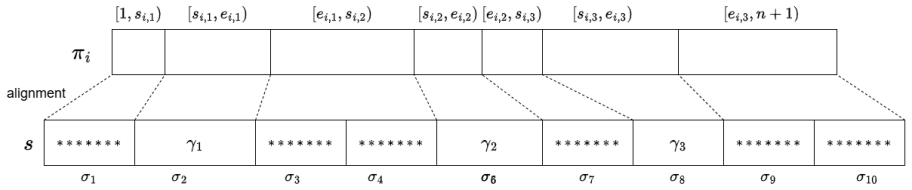

The rank aggregation problem seeks to combine multiple rank orderings of the same set of candidates into a single consensus ordering. Such problems arise in diverse domains, including web search, employment, college admissions, and voting. In this work we focus on the 1-median objective: given a set of m rankings over [n], the goal is to compute a ranking that minimizes the sum of its distances to all input rankings. We study rank aggregation under several classical distance metrics: Ulam distance, Spearman's footrule, Hamming distance, and Kendall-tau, as well as their weighted variants. Our contributions begin with a novel unified framework that identifies a key structural property: it suffices to focus on a small subset of rankings, where the corresponding local one-median provides a good approximation to the global median. This principle extends across these distance measures, yielding a general algorithmic framework for weighted rank aggregation. Building on this, we present a new approximation algorithm for rank aggregation under the Ulam distance that scales in the Massively Parallel Computation (MPC) model. Our algorithm computes a $(2-\alpha)$-approximation, for a constant $\alpha>0$, to the 1-median in a constant number of rounds, using local memory sublinear in n and total memory near-linear in n. We further design new MPC approximation algorithms for Spearman's footrule and for the element-weighted variants of Hamming and Kendall-tau distances. For each metric, we obtain a $(2-\zeta)$-approximation, for a constant $\zeta>0$, to the 1-median in a constant number of rounds, using local memory sublinear in n and total memory linear or near-linear in n. Moreover, for the Ulam distance, we simplify and strengthen the analysis of Chakraborty et al., obtaining an improved 1.968-approximation that further extends to the weighted setting.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces a unified framework for the weighted 1-median rank aggregation problem under Ulam, Spearman's footrule, Hamming, and Kendall-tau distances. The core idea is a structural property: a local 1-median on a small subset of input rankings approximates the global 1-median. This yields MPC algorithms achieving (2-α)-approximation for Ulam and (2-ζ) for the others in constant rounds, with sublinear local memory and near-linear total memory. The work also simplifies and strengthens the analysis of Chakraborty et al. to obtain a 1.968-approximation for Ulam that extends to the weighted setting.

Significance. If the structural property holds with the stated constants and the MPC implementations are realizable, the framework offers a scalable, unified approach to large-scale rank aggregation relevant to web search, voting, and admissions. The extension to weighted variants, the constant-round MPC scaling, and the improved Ulam ratio are concrete strengths. The paper supplies algorithmic descriptions that appear implementable and gives credit to prior work by strengthening its analysis.

major comments (2)

- [MPC Algorithms for Ulam Distance] MPC model description (Ulam algorithm): the claim of local memory sublinear in n while operating on permutations of [n] (each of size Θ(n)) is load-bearing for the constant-round claim. The input distribution, how full rankings are accessed for Ulam distance or median computations, and the local primitives used must be specified explicitly; without this the sublinear-memory guarantee cannot be verified.

- [Unified Framework] Structural property (framework section): the reduction showing that the local 1-median on the selected subset yields a (2-α) approximation to the global 1-median for Ulam (and analogous factors for other metrics) is central. The subset size, selection method, and explicit dependence of α (or ζ) on the distance must be stated with the proof; if the subset size is not independent of n in the required way, the MPC round and memory bounds are affected.

minor comments (2)

- [Abstract] Abstract: the constants α and ζ are described only as positive; providing their explicit values or dependence on the metric would improve readability.

- [Introduction] Notation: ensure consistent use of weighted vs. unweighted variants when stating the approximation guarantees.

Simulated Author's Rebuttal

We thank the referee for their thorough review and valuable comments on our paper. We address the major comments point by point below and will incorporate the necessary clarifications in the revised manuscript.

read point-by-point responses

-

Referee: [MPC Algorithms for Ulam Distance] MPC model description (Ulam algorithm): the claim of local memory sublinear in n while operating on permutations of [n] (each of size Θ(n)) is load-bearing for the constant-round claim. The input distribution, how full rankings are accessed for Ulam distance or median computations, and the local primitives used must be specified explicitly; without this the sublinear-memory guarantee cannot be verified.

Authors: We appreciate the referee pointing out the need for more explicit details on the MPC implementation for the Ulam distance algorithm. The sublinear local memory is achieved by distributing the input rankings across machines and using the structural property to reduce the problem to a small sampled subset of rankings, where local computations are performed using standard MPC primitives for distance calculations that do not require each machine to hold full permutations at all times. In the revision, we will add a dedicated subsection detailing the input distribution model, the sampling procedure for the subset, how Ulam distances are computed locally on the reduced instance, and the specific local primitives (such as sorting and LIS computations for Ulam) used. This will verify the constant-round and memory bounds. revision: yes

-

Referee: [Unified Framework] Structural property (framework section): the reduction showing that the local 1-median on the selected subset yields a (2-α) approximation to the global 1-median for Ulam (and analogous factors for other metrics) is central. The subset size, selection method, and explicit dependence of α (or ζ) on the distance must be stated with the proof; if the subset size is not independent of n in the required way, the MPC round and memory bounds are affected.

Authors: We agree that clearly stating the parameters of the structural property is essential. In our framework, the subset is selected via a sampling method with size independent of n (specifically O(1/ε) or similar depending on the approximation parameter, but constant for fixed α), and the proof shows the approximation factor explicitly in terms of the distance properties. The selection can be done in one MPC round with appropriate communication. We will revise the framework section to include the complete proof with explicit subset size, selection method, and the dependence of α and ζ on each distance metric. This ensures the MPC bounds are preserved as the local computations remain on small subsets with sublinear memory. revision: yes

Circularity Check

No circularity: combinatorial property and MPC algorithms are independently derived

full rationale

The paper's core contribution is a structural property (local 1-median on small subset approximates global) proven for Ulam, footrule, Hamming, Kendall-tau and weighted variants, from which approximation algorithms are built. No equations, definitions, or predictions reduce to fitted parameters or self-referential inputs by construction. The MPC claims use standard communication bounds and the identified property without circular reduction. Simplification of Chakraborty et al. is external and not load-bearing for the new framework. The derivation is self-contained.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption A small subset of candidate rankings exists such that the local 1-median on that subset approximates the global 1-median under Ulam, footrule, Hamming, and Kendall-tau distances (and weighted variants).

Reference graph

Works this paper leans on

-

[1]

Geneprioritizationthroughgenomicdatafusion.Nature biotechnology, 24(5):537–544, 2006

Stein Aerts, Diether Lambrechts, Sunit Maity, Peter Van Loo, Bert Coessens, Frederik De Smet, Leon-Charles Tranchevent, Bart De Moor, Peter Marynen, Bassem Hassan, et al. Geneprioritizationthroughgenomicdatafusion.Nature biotechnology, 24(5):537–544, 2006. 1, 4

work page 2006

-

[2]

Aggregating inconsistent information: Ranking and clustering.J

Nir Ailon, Moses Charikar, and Alantha Newman. Aggregating inconsistent information: Ranking and clustering.J. ACM, 55(5):23:1–23:27, 2008. 1, 9, 34

work page 2008

-

[3]

Ranking tournaments.SIAM Journal on Discrete Mathematics, 20(1):137–142,

Noga Alon. Ranking tournaments.SIAM Journal on Discrete Mathematics, 20(1):137–142,

-

[4]

Parallel algorithms for geometric graph problems

Alexandr Andoni, Aleksandar Nikolov, Krzysztof Onak, and Grigory Yaroslavtsev. Parallel algorithms for geometric graph problems. In David B. Shmoys, editor,Symposium on Theory of Computing, STOC 2014, New York, NY, USA, May 31 - June 03, 2014, pages 574–583. ACM, 2014. doi: 10.1145/2591796.2591805. URLhttps://doi.org/10.1145/ 2591796.2591805. 1, 3

-

[5]

On the hardness of maximum rank aggregation problems.Journal of Discrete Algorithms, 31: 2–13, 2015

Christian Bachmaier, Franz J Brandenburg, Andreas Gleißner, and Andreas Hofmeier. On the hardness of maximum rank aggregation problems.Journal of Discrete Algorithms, 31: 2–13, 2015. 1

work page 2015

-

[6]

Group recommendations with rank aggregation and collaborative filtering

Linas Baltrunas, Tadas Makcinskas, and Francesco Ricci. Group recommendations with rank aggregation and collaborative filtering. InProceedings of the fourth ACM conference on Recommender systems, page 119–126, 2010. 1 40

work page 2010

-

[7]

Communication steps for parallel query processing.J

Paul Beame, Paraschos Koutris, and Dan Suciu. Communication steps for parallel query processing.J. ACM, 64(6):40:1–40:58, 2017. doi: 10.1145/3125644. URLhttps://doi. org/10.1145/3125644. 1, 3

-

[8]

On the complexity of crossings in permutations.Discrete Mathematics, 309(7):1813–1823, 2009

Therese Biedl, Franz J Brandenburg, and Xiaotie Deng. On the complexity of crossings in permutations.Discrete Mathematics, 309(7):1813–1823, 2009. 1

work page 2009

-

[9]

On the hardness of computing an average curve

Kevin Buchin, Anne Driemel, and Martijn Struijs. On the hardness of computing an average curve. InSWAT, volume 162 ofLIPIcs, pages 19:1–19:19. Schloss Dagstuhl - Leibniz- Zentrum für Informatik, 2020. 5

work page 2020

-

[10]

Approximating the median under the ulam metric

Diptarka Chakraborty, Debarati Das, and Robert Krauthgamer. Approximating the median under the ulam metric. InProceedings of the 2021 ACM-SIAM Symposium on Discrete Algorithms, pages 761–775, 2021. 1, 5

work page 2021

-

[11]

Clustering permutations: New techniques with streaming applications

Diptarka Chakraborty, Debarati Das, and Robert Krauthgamer. Clustering permutations: New techniques with streaming applications. InITCS, volume 251 ofLIPIcs, pages 31:1– 31:24. Schloss Dagstuhl - Leibniz-Zentrum für Informatik, 2023. 1, 4, 5, 6, 9, 34, 35

work page 2023

-

[12]

Spectral mle: Top-k rank aggregation from pairwise com- parisons

Yuxin Chen and Changho Suh. Spectral mle: Top-k rank aggregation from pairwise com- parisons. InInternational Conference on Machine Learning, pages 371–380, 2015. 1

work page 2015

-

[13]

Classification of permutation distance metrics for fitness landscape analysis

Vincent A Cicirello. Classification of permutation distance metrics for fitness landscape analysis. InInternational Conference on Bio-inspired Information and Communication, pages 81–97. Springer, 2019. 1

work page 2019

-

[14]

Rearrangement inequalities.Canadian Journal of Mathematics, 24(5):930– 943, 1972

Peter W Day. Rearrangement inequalities.Canadian Journal of Mathematics, 24(5):930– 943, 1972. 33

work page 1972

-

[15]

Persi Diaconis and Ronald L Graham. Spearman’s footrule as a measure of disarray.Journal of the Royal Statistical Society Series B: Statistical Methodology, 39(2):262–268, 1977. 1

work page 1977

- [16]

-

[17]

Nick Fischer, Elazar Goldenberg, Mursalin Habib, and Karthik C. S. Hardness of median and center in the ulam metric. InESA, volume 351 ofLIPIcs, pages 111:1–111:17. Schloss Dagstuhl - Leibniz-Zentrum für Informatik, 2025. 1

work page 2025

-

[18]

Goodrich, Nodari Sitchinava, and Qin Zhang

Michael T. Goodrich, Nodari Sitchinava, and Qin Zhang. Sorting, searching, and simula- tion in the mapreduce framework. In Takao Asano, Shin-Ichi Nakano, Yoshio Okamoto, and Osamu Watanabe, editors,Algorithms and Computation - 22nd International Sym- posium, ISAAC 2011, Yokohama, Japan, December 5-8, 2011. Proceedings, volume 7074 ofLecture Notes in Compu...

-

[19]

Massively parallel ap- proximation algorithms for edit distance and longest common subsequence

MohammadTaghi Hajiaghayi, Saeed Seddighin, and Xiaorui Sun. Massively parallel ap- proximation algorithms for edit distance and longest common subsequence. InProceedings of the Thirtieth Annual ACM-SIAM Symposium on Discrete Algorithms, pages 1654–1672. SIAM, 2019. 9, 40

work page 2019

-

[20]

The fine-grained complexity of median and center string problems under edit distance

Gary Hoppenworth, Jason W Bentley, Daniel Gibney, and Sharma V Thankachan. The fine-grained complexity of median and center string problems under edit distance. In28th Annual European Symposium on Algorithms, ESA 2020, 2020. 5 41

work page 2020

-

[21]

Fast and Parallelizable Ranking with Out- liers from Pairwise Comparisons

Sungjin Im and Mahshid Montazer Qaem. Fast and Parallelizable Ranking with Out- liers from Pairwise Comparisons. In Ulf Brefeld, Elisa Fromont, Andreas Hotho, Arno Knobbe, Marloes Maathuis, and Céline Robardet, editors,Machine Learning and Knowl- edge Discovery in Databases, pages 173–188. Springer International Publishing, 2020. ISBN 978-3-030-46150-8. d...

-

[22]

Sublinear time algorithms for metric space problems

Piotr Indyk. Sublinear time algorithms for metric space problems. InProceedings of the thirty-first annual ACM symposium on Theory of computing, pages 428–434, 1999. 9, 13

work page 1999

-

[23]

Piotr Indyk.High-dimensional computational geometry. stanford university, 2001. 9, 13, 15

work page 2001

-

[24]

Karloff, Siddharth Suri, and Sergei Vassilvitskii

Howard J. Karloff, Siddharth Suri, and Sergei Vassilvitskii. A model of computation for mapreduce. In Moses Charikar, editor,Proceedings of the Twenty-First Annual ACM- SIAM Symposium on Discrete Algorithms, SODA 2010, Austin, Texas, USA, January 17- 19, 2010, pages 938–948. SIAM, 2010. doi: 10.1137/1.9781611973075.76. URLhttps: //doi.org/10.1137/1.978161...

-

[25]

Mathematics without numbers.Daedalus, 88(4):577–591, 1959

John G Kemeny. Mathematics without numbers.Daedalus, 88(4):577–591, 1959. 1

work page 1959

-

[26]

A new measure of rank correlation.Biometrika, 30(1-2):81–93, 1938

Maurice G Kendall. A new measure of rank correlation.Biometrika, 30(1-2):81–93, 1938. 1

work page 1938

-

[27]

Claire Kenyon-Mathieu and Warren Schudy. How to rank with few errors. InProceedings of the 39th Annual ACM Symposium on Theory of Computing, pages 95–103, 2007. 1

work page 2007

-

[28]

Raivo Kolde, Sven Laur, Priit Adler, and Jaak Vilo. Robust rank aggregation for gene list integration and meta-analysis.Bioinformatics, 28(4):573–580, 2012. 1

work page 2012

-

[29]

Rank aggregation algorithms for fair consensus

Caitlin Kuhlman and Elke Rundensteiner. Rank aggregation algorithms for fair consensus. Proceedings of the VLDB Endowment, 13(12), 2020. 1

work page 2020

-

[30]

Generalizeddistancesbetweenrankings

RaviKumarandSergeiVassilvitskii. Generalizeddistancesbetweenrankings. InProceedings of the 19th International Conference on World Wide Web, pages 571–580, 2010. 1

work page 2010

-

[31]

Minimizing time-to-rank: A learning and recommendation approach

Haoming Li, Sujoy Sikdar, Rohit Vaish, Junming Wang, Lirong Xia, and Chaonan Ye. Minimizing time-to-rank: A learning and recommendation approach. InProceedings of the Twenty-Eighth International Joint Conference on Artificial Intelligence, pages 1408–1414,

-

[32]

2-approximating feedback vertex set in tournaments.ACM Trans

Daniel Lokshtanov, Pranabendu Misra, Joydeep Mukherjee, Fahad Panolan, Geevarghese Philip, and Saket Saurabh. 2-approximating feedback vertex set in tournaments.ACM Trans. Algorithms, 17(2):11:1–11:14, 2021. 34

work page 2021

-

[33]

Building a 70 billion word corpus of English from ClueWeb

Jan Pomikálek, Miloš Jakubíček, and Pavel Rychlý. Building a 70 billion word corpus of English from ClueWeb. InProceedings of the Eighth International Conference on Lan- guage Resources and Evaluation (LREC’12), pages 502–506. European Language Resources Association (ELRA), 2012. URLhttps://aclanthology.org/L12-1624/. 1, 4

work page 2012

-

[34]

V Yu Popov. Multiple genome rearrangement by swaps and by element duplications.The- oretical computer science, 385(1-3):115–126, 2007. 1

work page 2007

-

[35]

Permutation distance measures for memetic algo- rithms with population management

Marc Sevaux and Kenneth Sörensen. Permutation distance measures for memetic algo- rithms with population management. InProceedings of 6th Metaheuristics International Conference, MIC 2005, pages 832–838. University of Vienna, 2005. 1

work page 2005

- [36]

-

[37]

Footrule for measuring correlation.British Journal of Psychology, 2(1): 89, 1906

Charles Spearman. Footrule for measuring correlation.British Journal of Psychology, 2(1): 89, 1906. 1

work page 1906

-

[38]

Ryuichi Takanobu, Tao Zhuang, Minlie Huang, Jun Feng, Haihong Tang, and Bo Zheng. Aggregating e-commerce search results from heterogeneous sources via hierarchical rein- forcement learning. InThe World Wide Web Conference, pages 1771–1781, 2019. 1, 4

work page 2019

-

[39]

Condorcet’s theory of voting.American Political science review, 82(4): 1231–1244, 1988

H Peyton Young. Condorcet’s theory of voting.American Political science review, 82(4): 1231–1244, 1988. 1

work page 1988

-

[40]

H Peyton Young and Arthur Levenglick. A consistent extension of condorcet’s election principle.SIAM Journal on applied Mathematics, 35(2):285–300, 1978. 1 43

work page 1978

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.