Recognition: 2 theorem links

· Lean TheoremLearning from Acceptance: Cumulative Regret in the Game of Coding

Pith reviewed 2026-05-12 03:17 UTC · model grok-4.3

The pith

A data collector learns an adversary's unknown utility trade-off to achieve sublinear cumulative regret across all acceptance rules in repeated coding games.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

In the game of coding with an unknown adversary utility, the data collector acting as Stackelberg leader can use repeated interactions to learn the trade-off between acceptance and estimation error. The proposed algorithm narrows its search to promising acceptance rules using feedback from each round, and this yields a proof of sublinear cumulative regret while numerical experiments confirm practical effectiveness.

What carries the argument

The refine-around-promising acceptance rules algorithm, which iteratively narrows selection based on observed acceptance outcomes and estimation quality to control total loss over the full trajectory.

If this is right

- Cumulative regret over T rounds stays o(T), so average per-round loss approaches zero.

- Every acceptance rule tried during learning contributes to the total bound, not just the final one.

- Numerical experiments demonstrate that the regret remains controlled across varied adversary trade-offs.

- The collector can adapt rules dynamically using only the binary acceptance feedback from each interaction.

Where Pith is reading between the lines

- Similar refinement of search could extend to other repeated leader-follower games where one party observes only partial outcomes like acceptance.

- Systems running indefinitely might prioritize cumulative metrics to keep overall estimate quality high even while learning.

- The approach could be tested for robustness by allowing the adversary's utility to change slowly over time rather than staying fixed.

Load-bearing premise

The adversary maintains one fixed but unknown utility function that trades off getting submissions accepted against degrading the collector's final estimate.

What would settle it

Simulating the algorithm over thousands of rounds with a fixed adversary utility and measuring whether cumulative regret grows linearly with the number of rounds instead of sublinearly.

Figures

read the original abstract

Classical coding-theoretic guarantees often rely on trust assumptions, such as requiring sufficiently many honest nodes compared with adversarial ones. These assumptions are difficult to enforce in open decentralized systems where participants are not centrally certified. At the same time, such environments often contain incentive mechanisms: participants may be rewarded only when their submitted data are accepted and the system remains functional. This changes the role of an adversary. Rather than acting as a pure saboteur, a strategic adversary may submit data that are consistent enough to be accepted while still degrading the quality of the final estimate. The game-of-coding framework models this strategic interaction between a data collector (DC) and an adversary. Existing works on the game of coding mostly consider the complete-information case, where the DC knows how the adversary trades off acceptance and estimation error. In this paper, we study an incomplete-information version of the game of coding in which the DC, acting as a Stackelberg leader, does not know the adversary's utility trade-off and must learn through repeated interaction. Prior work on the unknown-adversary setting considered an explore-then-commit objective, where only the final selected acceptance rule is evaluated. In contrast, we study the full learning trajectory: every acceptance rule used during the algorithm is executed and contributes to performance. We propose an algorithm that refines its search around promising acceptance rules, prove that it achieves sublinear cumulative regret, and evaluate its performance through numerical experiments.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper studies an incomplete-information Stackelberg game of coding in which the data collector (leader) does not know the adversary's fixed but unknown utility trade-off between acceptance and estimation error. It proposes a refinement algorithm that iteratively narrows its search over acceptance rules based on observed outcomes, proves that the algorithm attains sublinear cumulative regret over the entire interaction trajectory, and reports numerical experiments comparing the learned rules against baselines.

Significance. If the sublinear-regret claim holds under the stated assumptions, the work meaningfully extends prior complete-information and explore-then-commit analyses of the game of coding to the more realistic cumulative-performance setting. The result supplies a concrete learning procedure with theoretical guarantees for decentralized systems that rely on incentive-compatible acceptance rules, and the numerical experiments provide initial empirical support.

major comments (2)

- [§4.2] §4.2, Theorem 1: the sublinear regret bound is stated to hold for any Lipschitz utility, yet the proof sketch invokes a uniform discretization of the acceptance-rule space whose mesh size depends on an unspecified Lipschitz constant; without an explicit bound on that constant or a data-dependent choice of mesh, the claimed O(√T) rate is not guaranteed to be parameter-free.

- [§5.1] §5.1, Table 1: the reported cumulative regret for the proposed algorithm is compared only against a fixed-grid baseline and an explore-then-commit oracle; an additional comparison against a standard no-regret bandit algorithm (e.g., EXP3 or UCB on the same discretized space) is needed to isolate the benefit of the refinement heuristic.

minor comments (3)

- [Abstract] The abstract claims 'numerical experiments' but does not name the performance metric (cumulative regret, final estimation error, or both) or the range of adversary trade-off parameters; a single sentence clarifying these would improve readability.

- [§3] Notation: the symbol r_t for the acceptance rule chosen at round t is introduced in §3 without an accompanying table of symbols; readers must hunt through the text to recall that r_t belongs to a compact subset of [0,1]^d.

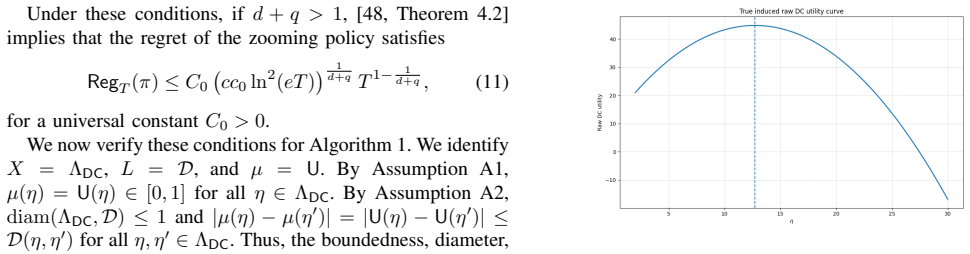

- [Figure 2] Figure 2 caption: the x-axis label 'rounds' is ambiguous because the plot mixes learning rounds with evaluation rounds; relabeling as 'interaction rounds' would remove confusion.

Simulated Author's Rebuttal

We thank the referee for the careful review and the recommendation of minor revision. The comments highlight important aspects of our theoretical analysis and experimental design. We address them point by point below and have made revisions accordingly.

read point-by-point responses

-

Referee: [§4.2] §4.2, Theorem 1: the sublinear regret bound is stated to hold for any Lipschitz utility, yet the proof sketch invokes a uniform discretization of the acceptance-rule space whose mesh size depends on an unspecified Lipschitz constant; without an explicit bound on that constant or a data-dependent choice of mesh, the claimed O(√T) rate is not guaranteed to be parameter-free.

Authors: We agree with the observation. The discretization in the proof of Theorem 1 is uniform and its mesh size is chosen proportionally to 1/L to control the approximation error, leading to a regret bound whose leading constant depends on the Lipschitz constant L of the utility. Since L is a fixed property of the (unknown) adversary, the bound is O(sqrt(T)) with a multiplicative factor depending on L, which is still sublinear. We will revise §4.2 to explicitly state this dependence in the theorem and proof sketch, clarifying that the result holds for any fixed Lipschitz utility with the constant scaling accordingly. We do not have a data-dependent mesh in the current version, but the revision will make the assumptions transparent. revision: yes

-

Referee: [§5.1] §5.1, Table 1: the reported cumulative regret for the proposed algorithm is compared only against a fixed-grid baseline and an explore-then-commit oracle; an additional comparison against a standard no-regret bandit algorithm (e.g., EXP3 or UCB on the same discretized space) is needed to isolate the benefit of the refinement heuristic.

Authors: We appreciate this suggestion, as it helps demonstrate the specific advantage of the refinement approach. We have conducted additional numerical experiments implementing EXP3 and UCB on the discretized acceptance rule space used in our algorithm. The updated Table 1 in §5.1 now includes these baselines, and the results indicate that our method achieves lower cumulative regret compared to these standard algorithms, validating the benefit of the heuristic. The revision is included in the new manuscript version. revision: yes

Circularity Check

No significant circularity identified

full rationale

The paper introduces a new refinement algorithm for the incomplete-information game-of-coding setting, proves a sublinear cumulative regret bound for the full learning trajectory (distinct from prior explore-then-commit objectives), and supports the claims with numerical experiments. The derivation relies on standard online learning techniques under the stated Stackelberg assumptions with observable outcomes; no step reduces by construction to a fitted parameter, self-definition, or self-citation chain. The central result is an independent algorithmic contribution with an external proof and empirical validation.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Adversary possesses a fixed but unknown utility function that trades off acceptance probability against estimation error

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearWe propose an algorithm that refines its search around promising acceptance rules, prove that it achieves sublinear cumulative regret... Algorithm 1 Game-of-Coding Zooming Algorithm... Reg_T(π_Z) = O((C_z ℓ̄² ln² T)^{1/(d_z+2)} T^{(d_z+1)/(d_z+2)})

-

IndisputableMonolith/Foundation/RealityFromDistinction.leanreality_from_one_distinction unclearFor any threshold... the induced probability of acceptance and the estimation error lie on a curve determined by the statistical model... independent of the utility functions

Reference graph

Works this paper leans on

-

[1]

V . Guruswami, A. Rudra, and M. Sudan,Essential Coding Theory. Draft is Available, 2022

work page 2022

-

[2]

Frame codes for distributed coded com- putation,

R. Yosibash and R. Zamir, “Frame codes for distributed coded com- putation,” in2021 11th International Symposium on Topics in Coding (ISTC), pp. 1–5, 2021

work page 2021

-

[3]

Codedsketch: A coding scheme for distributed computation of approximated matrix multipli- cation,

T. Jahani-Nezhad and M. A. Maddah-Ali, “Codedsketch: A coding scheme for distributed computation of approximated matrix multipli- cation,”IEEE Transactions on Information Theory, vol. 67, no. 6, pp. 4185–4196, 2021

work page 2021

-

[4]

Analog error-correcting codes,

R. M. Roth, “Analog error-correcting codes,”IEEE Transactions on Information Theory, vol. 66, no. 7, pp. 4075–4088, 2020

work page 2020

-

[5]

General coded computing: Adversarial settings,

P. Moradi, H. Akbarinodehi, and M. A. Maddah-Ali, “General coded computing: Adversarial settings,”arXiv preprint arXiv:2502.08058, 2025

-

[6]

On the optimal recovery threshold of coded matrix mul- tiplication,

S. Dutta, M. Fahim, F. Haddadpour, H. Jeong, V . Cadambe, and P. Grover, “On the optimal recovery threshold of coded matrix mul- tiplication,”IEEE Transactions on Information Theory, vol. 66, no. 1, pp. 278–301, 2019

work page 2019

-

[7]

Short-dot: Computing large linear transforms distributedly using coded short dot products,

S. Dutta, V . Cadambe, and P. Grover, “Short-dot: Computing large linear transforms distributedly using coded short dot products,”Advances In Neural Information Processing Systems, vol. 29, 2016

work page 2016

-

[8]

Polynomial codes: an opti- mal design for high-dimensional coded matrix multiplication,

Q. Yu, M. Maddah-Ali, and S. Avestimehr, “Polynomial codes: an opti- mal design for high-dimensional coded matrix multiplication,”Advances in Neural Information Processing Systems, vol. 30, 2017

work page 2017

-

[9]

Q. Yu and A. S. Avestimehr, “Entangled polynomial codes for secure, private, and batch distributed matrix multiplication: Breaking the" cubic" barrier,” in2020 IEEE International Symposium on Information Theory (ISIT), pp. 245–250, IEEE, 2020

work page 2020

-

[10]

Lagrange coded computing: Optimal design for resiliency, security, and privacy,

Q. Yu, S. Li, N. Raviv, S. M. M. Kalan, M. Soltanolkotabi, and S. A. Avestimehr, “Lagrange coded computing: Optimal design for resiliency, security, and privacy,” inThe 22nd International Conference on Artificial Intelligence and Statistics, pp. 1215–1225, PMLR, 2019

work page 2019

-

[11]

Blockchains cannot rely on honesty,

J. Sliwinski and R. Wattenhofer, “Blockchains cannot rely on honesty,” inThe 19th International Conference on Autonomous Agents and Multiagent Systems (AAMAS 2020), 2019

work page 2020

-

[12]

Fact and fiction: Chal- lenging the honest majority assumption of permissionless blockchains,

R. Han, Z. Sui, J. Yu, J. Liu, and S. Chen, “Fact and fiction: Chal- lenging the honest majority assumption of permissionless blockchains,” inProceedings of the 2021 ACM Asia Conference on Computer and Communications Security, pp. 817–831, 2021

work page 2021

-

[13]

J. S. Gans and H. Halaburda, “"Zero Cost" majority attacks on per- missionless blockchains,” tech. rep., National Bureau of Economic Research, 2023

work page 2023

-

[14]

Bitcoin: A peer-to-peer electronic cash system,

N. S. Bitcoin, “Bitcoin: A peer-to-peer electronic cash system,” 2008

work page 2008

-

[15]

V . Buterinet al., “Ethereum white paper,”GitHub repository, vol. 1, pp. 22–23, 2013

work page 2013

-

[16]

SoK: Blockchain technology and its potential use cases,

S. Ruoti, B. Kaiser, A. Yerukhimovich, J. Clark, and R. Cunningham, “SoK: Blockchain technology and its potential use cases,”arXiv preprint arXiv:1909.12454, 2019

-

[17]

Blockchain for deep learning: review and open challenges,

M. Shafay, R. W. Ahmad, K. Salah, I. Yaqoob, R. Jayaraman, and M. Omar, “Blockchain for deep learning: review and open challenges,” Cluster Computing, vol. 26, no. 1, pp. 197–221, 2023

work page 2023

-

[18]

Survey on the convergence of machine learning and blockchain,

S. Ding and C. Hu, “Survey on the convergence of machine learning and blockchain,” inProceedings of SAI Intelligent Systems Conference, pp. 170–189, Springer, 2022

work page 2022

-

[19]

Blockchain meets machine learn- ing: a survey,

S. Kayikci and T. M. Khoshgoftaar, “Blockchain meets machine learn- ing: a survey,”Journal of Big Data, vol. 11, no. 1, pp. 1–29, 2024

work page 2024

-

[20]

Blockchain for ai: A disruptive integration,

R. Tian, L. Kong, X. Min, and Y . Qu, “Blockchain for ai: A disruptive integration,” in2022 IEEE 25th International Conference on Computer Supported Cooperative Work in Design (CSCWD), pp. 938–943, IEEE, 2022

work page 2022

-

[21]

Blockchain for ai: Review and open research challenges,

K. Salah, M. H. U. Rehman, N. Nizamuddin, and A. Al-Fuqaha, “Blockchain for ai: Review and open research challenges,”IEEE Access, vol. 7, pp. 10127–10149, 2019

work page 2019

-

[22]

Game of coding: Beyond honest-majority assumptions,

H. A. Nodehi, V . R. Cadambe, and M. A. Maddah-Ali, “Game of coding: Beyond honest-majority assumptions,”IEEE Transactions on Information Theory (submitted), 2024

work page 2024

-

[23]

Game of Coding for Vector-Valued Computations

H. A. Nodehi, P. Moradi, S. Mohajer, and M. A. Maddah-Ali, “Game of coding for vector-valued computations,”arXiv preprint arXiv:2602.04810, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[24]

Game of coding: Sybil resistant decentralized machine learning with minimal trust assumption,

H. A. Nodehi, V . R. Cadambe, and M. A. Maddah-Al, “Game of coding: Sybil resistant decentralized machine learning with minimal trust assumption,”arXiv preprint, 2024. https://arxiv.org/abs/2410.05540

-

[25]

H. A. Nodehi, V . R. Cadambe, and M. A. Maddah-Ali, “Game of coding: Coding theory in the presence of rational adversaries, motivated by decentralized machine learning,”arXiv preprint arXiv:2601.02313, 2026

-

[26]

Game of coding with an unknown adversary,

H. Akbari Nodehi, P. Moradi, and M. A. Maddah-Ali, “Game of coding with an unknown adversary,” in2025 IEEE International Symposium on Information Theory (ISIT), (Ann Arbor, MI, USA), 2025

work page 2025

-

[27]

Computing the optimal strategy to commit to,

V . Conitzer and T. Sandholm, “Computing the optimal strategy to commit to,” inProceedings of the 7th ACM conference on Electronic commerce, pp. 82–90, 2006

work page 2006

-

[28]

Robust stackelberg equilibria,

J. Gan, M. Han, J. Wu, and H. Xu, “Robust stackelberg equilibria,” arXiv preprint arXiv:2304.14990, 2023

-

[29]

Learning and approximat- ing the optimal strategy to commit to,

J. Letchford, V . Conitzer, and K. Munagala, “Learning and approximat- ing the optimal strategy to commit to,” inAlgorithmic Game Theory: Second International Symposium, SAGT 2009, Paphos, Cyprus, October 18-20, 2009. Proceedings 2, pp. 250–262, Springer, 2009

work page 2009

-

[30]

Learning optimal commitment to overcome insecurity,

A. Blum, N. Haghtalab, and A. D. Procaccia, “Learning optimal commitment to overcome insecurity,”Advances in Neural Information Processing Systems, vol. 27, 2014

work page 2014

-

[31]

Learning optimal strategies to commit to,

B. Peng, W. Shen, P. Tang, and S. Zuo, “Learning optimal strategies to commit to,” inProceedings of the AAAI Conference on Artificial Intelligence, vol. 33, pp. 2149–2156, 2019

work page 2019

-

[32]

Learning to play sequential games versus unknown opponents,

P. G. Sessa, I. Bogunovic, M. Kamgarpour, and A. Krause, “Learning to play sequential games versus unknown opponents,”Advances in neural information processing systems, vol. 33, pp. 8971–8981, 2020

work page 2020

-

[33]

Commit- ment without regrets: Online learning in stackelberg security games,

M.-F. Balcan, A. Blum, N. Haghtalab, and A. D. Procaccia, “Commit- ment without regrets: Online learning in stackelberg security games,” inProceedings of the sixteenth ACM conference on economics and computation, pp. 61–78, 2015

work page 2015

-

[34]

Learning in stackelberg games with non-myopic agents,

N. Haghtalab, T. Lykouris, S. Nietert, and A. Wei, “Learning in stackelberg games with non-myopic agents,” inProceedings of the 23rd ACM Conference on Economics and Computation, pp. 917–918, 2022

work page 2022

-

[35]

Strategic classification from revealed preferences,

J. Dong, A. Roth, Z. Schutzman, B. Waggoner, and Z. S. Wu, “Strategic classification from revealed preferences,” inProceedings of the 2018 ACM Conference on Economics and Computation, pp. 55–70, 2018

work page 2018

-

[36]

The value of knowing a demand curve: Bounds on regret for online posted-price auctions,

R. Kleinberg and T. Leighton, “The value of knowing a demand curve: Bounds on regret for online posted-price auctions,” in44th Annual IEEE Symposium on Foundations of Computer Science, 2003. Proceedings., pp. 594–605, IEEE, 2003

work page 2003

-

[37]

Asymptotically efficient adaptive allocation rules,

T. L. Lai and H. Robbins, “Asymptotically efficient adaptive allocation rules,”Advances in Applied Mathematics, vol. 6, no. 1, pp. 4–22, 1985

work page 1985

-

[38]

Finite-time analysis of the multiarmed bandit problem,

P. Auer, N. Cesa-Bianchi, and P. Fischer, “Finite-time analysis of the multiarmed bandit problem,”Machine Learning, vol. 47, no. 2–3, pp. 235–256, 2002

work page 2002

-

[39]

Regret analysis of stochastic and nonstochastic multi-armed bandit problems,

S. Bubeck and N. Cesa-Bianchi, “Regret analysis of stochastic and nonstochastic multi-armed bandit problems,”Foundations and Trends in Machine Learning, vol. 5, no. 1, pp. 1–122, 2012

work page 2012

-

[40]

T. Lattimore and C. Szepesvári,Bandit Algorithms. Cambridge Univer- sity Press, 2020

work page 2020

-

[41]

Introduction to multi-armed bandits,

A. Slivkins, “Introduction to multi-armed bandits,”Foundations and Trends in Machine Learning, vol. 12, no. 1–2, pp. 1–286, 2019

work page 2019

-

[42]

The continuum-armed bandit problem,

R. Agrawal, “The continuum-armed bandit problem,”SIAM Journal on Control and Optimization, vol. 33, no. 6, pp. 1926–1951, 1995

work page 1926

-

[43]

Nearly tight bounds for the continuum-armed ban- dit problem,

R. D. Kleinberg, “Nearly tight bounds for the continuum-armed ban- dit problem,” inAdvances in Neural Information Processing Systems, vol. 17, 2004

work page 2004

-

[44]

Improved rates for the stochastic continuum-armed bandit problem,

P. Auer, R. Ortner, and C. Szepesvári, “Improved rates for the stochastic continuum-armed bandit problem,” inProceedings of the 20th Annual Conference on Learning Theory, pp. 454–468, Springer, 2007

work page 2007

-

[45]

Multi-armed bandits in metric spaces,

R. Kleinberg, A. Slivkins, and E. Upfal, “Multi-armed bandits in metric spaces,” inProceedings of the Fortieth Annual ACM Symposium on Theory of Computing, pp. 681–690, 2008

work page 2008

-

[46]

S. Bubeck, R. Munos, G. Stoltz, and C. Szepesvári, “X-armed bandits,” Journal of Machine Learning Research, vol. 12, pp. 1655–1695, 2011

work page 2011

-

[47]

V on Stackelberg,Market structure and equilibrium

H. V on Stackelberg,Market structure and equilibrium. Springer Science & Business Media, 2010

work page 2010

-

[48]

Multi-armed bandits in metric spaces,

R. Kleinberg, A. Slivkins, and E. Upfal, “Multi-armed bandits in metric spaces,” inProceedings of the fortieth annual ACM symposium on Theory of computing, pp. 681–690, 2008

work page 2008

-

[49]

Probability inequalities for sums of bounded random variables,

W. Hoeffding, “Probability inequalities for sums of bounded random variables,”The collected works of Wassily Hoeffding, pp. 409–426, 1994. APPENDIXA DISCUSSION OFASSUMPTIONS ANDZOOMINGDIMENSION This appendix discusses the assumptions used in Theorem 1. A. Utility Normalization Theorem 1 assumes 0≤U(η)≤1,∀η∈Λ DC.(14) This is a standard normalization. Suppo...

work page 1994

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.