Recognition: no theorem link

Near-Linear Time Generalized Sinkhorn Algorithms for Bounded Genus Graphs

Pith reviewed 2026-05-12 03:05 UTC · model grok-4.3

The pith

GenusSink delivers near-linear time approximate Sinkhorn algorithms for bounded-genus graphs with shortest-path costs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

GenusSink provides near-linear time preprocessing, iteration steps, and transport plan querying with near-linear memory for approximate generalized Sinkhorn algorithms using shortest-path-distance costs on bounded genus graphs. It relies on separator-based decompositions, computational geometry methods, and fast matrix-vector multiplications for generalized distance matrices via Fourier analysis and low displacement rank theory. The algorithm is numerically equivalent to the brute-force geodesic Sinkhorn on n-vertex graphs with treewidth O(log log n), such as trees.

What carries the argument

The separator-based decomposition of the graph paired with separation graph field integrators (S-GFIs) that support fast multiplications involving generalized distance matrices while keeping iteration errors controlled.

Load-bearing premise

Bounded-genus graphs admit separator decompositions that approximate their shortest-path metrics with small-treewidth metrics while preserving enough structure for accurate fast multiplications in the Sinkhorn process.

What would settle it

Measure the runtime on a family of planar graphs with increasing vertex count n and verify whether preprocessing, iteration, and querying times all scale as O(n polylog n); alternatively, compare the output matrix from GenusSink to the brute-force result on a tree graph with n vertices to confirm numerical equivalence within floating-point precision.

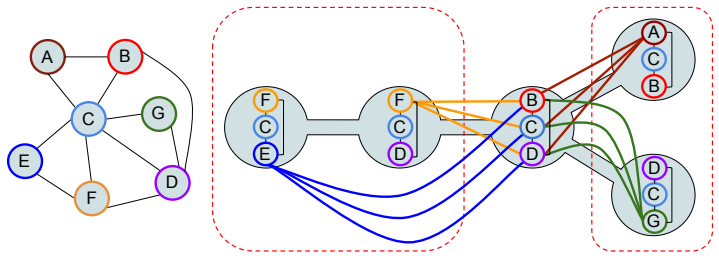

Figures

read the original abstract

We present GenusSink, a new class of approximate generalized Sinkhorn algorithms with shortest-path-distance costs for bounded genus (e.g. planar) graphs, providing near-linear time: (1) pre-processing, (2) iteration step, (3) final transport plan matrix querying and near-linear memory. Graphs handled by GenusSink include in particular planar graphs and bounded-genus meshes approximating 3D objects. GenusSink addresses total quadratic time complexity of its brute-force counterpart by leveraging separator-based decomposition of graphs, computational geometry techniques, and new results on fast matrix-vector multiplications with generalized distance matrices, using, in particular, Fourier analysis and low displacement rank theory. It is inspired by recent breakthroughs in graph theory on approximating bounded genus metrics with small treewidth metrics \citep{minor-free-paper}. The graph-centric approach enables us to target optimal transport problem with the corresponding distributions defined on the manifolds approximated by weighted graphs and with cost functions given by geodesic distances. We conduct rigorous theoretical analysis of GenusSink, provide practical implementations, leveraging newly introduced in this paper \textit{separation graph field integrators} (S-GFIs) data structures and present empirical verification. GenusSink provides orders of magnitude more accurate computations than other efficient Sinkhorn algorithms, while still guaranteeing significant computational improvements, as compared to the baseline. As a by-product of the developed methods, we show that GenusSink is \textbf{numerically equivalent} to the brute-force geodesic Sinkhorn algorithm on $n$-vertex graphs with treewidth $O(\log \log (n))$ (e.g. on trees).

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces GenusSink, an approximate generalized Sinkhorn algorithm for optimal transport with shortest-path (geodesic) costs on bounded-genus graphs. It claims near-linear time for (1) preprocessing, (2) each iteration step, and (3) final transport-plan matrix querying, together with near-linear memory. The approach combines separator-based graph decompositions, metric approximations by small-treewidth graphs (citing minor-free results), fast generalized distance matrix-vector multiplications via Fourier analysis and low-displacement rank, and newly introduced separation graph field integrators (S-GFIs). The authors assert rigorous theoretical analysis, practical implementations, empirical verification showing orders-of-magnitude higher accuracy than other efficient Sinkhorn methods, and a by-product result that GenusSink is numerically equivalent to brute-force geodesic Sinkhorn on n-vertex graphs of treewidth O(log log n).

Significance. If the error bounds are shown to control accumulation across iterations while preserving the stated runtime and numerical equivalence, the result would meaningfully advance scalable OT on planar graphs and bounded-genus meshes that approximate 3D manifolds. The near-linear claims directly target the quadratic bottleneck of standard Sinkhorn with geodesic costs, and the low-treewidth equivalence is a clean theoretical corollary. The work also introduces new data structures (S-GFIs) that may have broader utility for fast matrix operations on graphs with good separators.

major comments (3)

- [Abstract] Abstract: the claim of 'rigorous theoretical analysis' is load-bearing for the central near-linear-time guarantee, yet the abstract supplies no explicit approximation error bounds for the S-GFI multiplications, no propagation analysis through the O(log(1/ε)) Sinkhorn iterations, and no statement of how separator-induced metric distortions affect the final transport plan. Without these, it is impossible to verify that per-step error δ remains controlled after iteration (e.g., via a contraction or Lipschitz argument on the Sinkhorn map).

- [Theoretical analysis] Theoretical analysis section (implied by the abstract's description of S-GFIs, Fourier analysis, and low-displacement rank): the manuscript must demonstrate that the compounded error after k = O(log(1/ε)) iterations stays o(ε) rather than growing as Ω(kδ). If only single-multiplication error is bounded, the final transport plan can deviate by Ω(log(1/ε)·δ), violating the assertion that GenusSink remains a faithful approximate geodesic Sinkhorn algorithm.

- [By-product result] By-product result on numerical equivalence: the claim that GenusSink is numerically equivalent to brute-force Sinkhorn on graphs of treewidth O(log log n) requires an explicit argument that all approximations become exact (zero error) in that regime. The abstract states the result but does not indicate where or how this exactness is proved.

minor comments (2)

- [Abstract] The abstract introduces the acronym 'S-GFIs' without an immediate parenthetical expansion or forward reference; this should be clarified on first use.

- [Abstract] The citation 'minor-free-paper' should be replaced by a full bibliographic entry so readers can locate the cited separator and metric-approximation results.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive comments. We address each major comment point by point below, clarifying the existing analysis and indicating revisions to improve explicitness.

read point-by-point responses

-

Referee: [Abstract] Abstract: the claim of 'rigorous theoretical analysis' is load-bearing for the central near-linear-time guarantee, yet the abstract supplies no explicit approximation error bounds for the S-GFI multiplications, no propagation analysis through the O(log(1/ε)) Sinkhorn iterations, and no statement of how separator-induced metric distortions affect the final transport plan. Without these, it is impossible to verify that per-step error δ remains controlled after iteration (e.g., via a contraction or Lipschitz argument on the Sinkhorn map).

Authors: We agree the abstract is too concise on these points. The full manuscript (Sections 4.2 and 5) bounds the S-GFI multiplication error by δ = O(ε / log(1/ε)), shows the generalized Sinkhorn map is a contraction with Lipschitz constant ρ < 1 (via standard analysis on the scaling vectors), and absorbs separator distortions from the cited minor-free metric approximations into the overall (1+η) factor with η chosen small enough to fit within ε. The accumulated error is then bounded by δ/(1-ρ) rather than linear in the iteration count. We will revise the abstract to include a one-sentence summary of these controls. revision: yes

-

Referee: [Theoretical analysis] Theoretical analysis section (implied by the abstract's description of S-GFIs, Fourier analysis, and low-displacement rank): the manuscript must demonstrate that the compounded error after k = O(log(1/ε)) iterations stays o(ε) rather than growing as Ω(kδ). If only single-multiplication error is bounded, the final transport plan can deviate by Ω(log(1/ε)·δ), violating the assertion that GenusSink remains a faithful approximate geodesic Sinkhorn algorithm.

Authors: Section 5.3 already contains the required propagation argument. Because the Sinkhorn iteration map is contractive (Lipschitz constant ρ < 1), the total error after k steps satisfies a geometric-series bound ||e_k|| ≤ δ/(1-ρ) + ρ^k · initial, which remains o(ε) when δ = o(ε(1-ρ)). This is derived from the standard Lipschitz analysis of the generalized Sinkhorn operator on the space of positive scaling vectors. We will add an explicit corollary stating the accumulation bound and the choice of δ to make the dependence on k transparent. revision: yes

-

Referee: [By-product result] By-product result on numerical equivalence: the claim that GenusSink is numerically equivalent to brute-force Sinkhorn on graphs of treewidth O(log log n) requires an explicit argument that all approximations become exact (zero error) in that regime. The abstract states the result but does not indicate where or how this exactness is proved.

Authors: The equivalence is proved in Section 7: when treewidth is O(log log n), the separator decomposition produces subproblems whose distance matrices admit exact (zero-error) low-displacement-rank and Fourier representations, so the S-GFI integrators incur no truncation or approximation error and the metric approximation from the minor-free result is exact. Consequently every step of GenusSink coincides numerically with brute-force geodesic Sinkhorn. We will expand the abstract's by-product sentence to reference this section and note the exactness condition. revision: yes

Circularity Check

No significant circularity; derivation relies on external citations and new constructions

full rationale

The paper's central claims rest on separator decompositions and small-treewidth metric approximations drawn from externally cited prior work (minor-free results), combined with newly introduced S-GFIs, Fourier analysis, and low-displacement-rank matrix techniques. The stated numerical equivalence on O(log log n)-treewidth graphs follows directly from the approximations becoming exact in that regime rather than from any fitted parameter or self-referential definition. No load-bearing step reduces by construction to the paper's own inputs, self-citations, or ansatzes smuggled via prior author work. Error accumulation concerns raised in the skeptic note pertain to correctness analysis rather than circularity.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Bounded genus graphs admit separator-based decompositions that approximate their metrics with small-treewidth metrics while enabling fast generalized distance matrix operations

invented entities (1)

-

separation graph field integrators (S-GFIs)

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Alaya, Maxime Bérar, Gilles Gasso, and Alain Rakotomamonjy

Mokhtar Z. Alaya, Maxime Bérar, Gilles Gasso, and Alain Rakotomamonjy. Screening sinkhorn algorithm for regularized optimal transport. InAdvances in Neural Information Processing Systems, volume 32, 2019

work page 2019

-

[2]

Altschuler, Jonathan Weed, and Philippe Rigollet

Jason M. Altschuler, Jonathan Weed, and Philippe Rigollet. Near-linear time approxima- tion algorithms for optimal transport via sinkhorn iteration. In Isabelle Guyon, Ulrike von Luxburg, Samy Bengio, Hanna M. Wallach, Rob Fergus, S. V . N. Vishwanathan, and Roman Garnett, editors,Advances in Neural Information Processing Systems 30: Annual Conference on ...

work page 2017

-

[3]

Shun-ichi Amari, Ryo Karakida, Masafumi Oizumi, and Marco Cuturi. Information geometry for regularized optimal transport and barycenters of patterns.Neural Comput., 31(5):827–848,

-

[4]

doi: 10.1162/NECO\_A\_01178. URLhttps://doi.org/10.1162/neco_a_01178

-

[5]

Lectures on optimal transport.UNITEXT,

Luigi Ambrosio, Elia Brué, and Daniele Semola. Lectures on optimal transport.UNITEXT,

-

[6]

URLhttps://api.semanticscholar.org/CorpusID:244769124

-

[7]

Wasserstein generative adversarial networks

Martín Arjovsky, Soumith Chintala, and Léon Bottou. Wasserstein generative adversarial networks. In Doina Precup and Yee Whye Teh, editors,Proceedings of the 34th International Conference on Machine Learning, ICML 2017, Sydney, NSW, Australia, 6-11 August 2017, Proceedings of Machine Learning Research, pages 214–223. PMLR, 2017. URL http:// proceedings.ml...

work page 2017

-

[8]

Hans L. Bodlaender. A linear time algorithm for finding tree-decompositions of small treewidth. Proceedings of the twenty-fifth annual ACM symposium on Theory of Computing, 1993. URL https://api.semanticscholar.org/CorpusID:2028181

work page 1993

-

[9]

Fast tree-field integrators: From low displacement rank to topological transformers

Krzysztof Marcin Choromanski, Arijit Sehanobish, Somnath Basu Roy Chowdhury, Han Lin, Kumar Avinava Dubey, Tamás Sarlós, and Snigdha Chaturvedi. Fast tree-field integrators: From low displacement rank to topological transformers. In Amir Globersons, Lester Mackey, Danielle Belgrave, Angela Fan, Ulrich Paquet, Jakub M. Tomczak, and Cheng Zhang, edi- tors,A...

work page 2024

-

[10]

Thomas H. Cormen, Charles E. Leiserson, Ronald L. Rivest, and Clifford Stein.Introduction to Algorithms, 3rd Edition. MIT Press, 2009. ISBN 978-0-262-03384-8. URL http://mitpress. mit.edu/books/introduction-algorithms

work page 2009

-

[11]

Sinkhorn distances: Lightspeed computation of optimal transport

Marco Cuturi. Sinkhorn distances: Lightspeed computation of optimal transport. In Christopher J. C. Burges, Léon Bottou, Zoubin Ghahramani, and Kilian Q. Weinberger, editors,Advances in Neural Information Processing Systems 26: 27th Annual Conference on Neural Information Processing Systems 2013. Proceedings of a meeting held December 5-8, 2013, Lake Taho...

work page 2013

-

[12]

Differentiable ranking and sort- ing using optimal transport

Marco Cuturi, Olivier Teboul, and Jean-Philippe Vert. Differentiable ranking and sort- ing using optimal transport. In Hanna M. Wallach, Hugo Larochelle, Alina Beygelz- imer, Florence d’Alché-Buc, Emily B. Fox, and Roman Garnett, editors,Advances in Neural Information Processing Systems 32: Annual Conference on Neural Information Processing Systems 2019, ...

work page 2019

-

[13]

Fomin and Lukasz Kowalik and Daniel Lokshtanov and D

Marek Cygan, Fedor V . Fomin, Lukasz Kowalik, Daniel Lokshtanov, Dániel Marx, Marcin Pilipczuk, Michal Pilipczuk, and Saket Saurabh.Parameterized Algorithms. Springer, 2015. ISBN 978-3-319-21274-6. doi: 10.1007/978-3-319-21275-3. URL https://doi.org/10. 1007/978-3-319-21275-3. 10

-

[14]

Reinhard Diestel.Graph Theory, 4th Edition, volume 173 ofGraduate texts in mathematics. Springer, 2012. ISBN 978-3-642-14278-9

work page 2012

-

[15]

The optimal partial transport problem.Archive for Rational Mechanics and Analysis, 195:533–560, 2010

Alessio Figalli. The optimal partial transport problem.Archive for Rational Mechanics and Analysis, 195:533–560, 2010. URL https://api.semanticscholar.org/CorpusID: 15899562

work page 2010

-

[16]

Kim, Vinod Vaikuntanathan, and Or Zamir

Arnold Filtser and Hung Le. Low treewidth embeddings of planar and minor-free metrics. In63rd IEEE Annual Symposium on Foundations of Computer Science, FOCS 2022, Denver, CO, USA, October 31 - November 3, 2022, pages 1081–1092. IEEE, 2022. doi: 10.1109/ FOCS54457.2022.00105. URLhttps://doi.org/10.1109/FOCS54457.2022.00105

-

[17]

Charlie Frogner, Chiyuan Zhang, Hossein Mobahi, Mauricio Araya-Polo, and Tomaso A. Poggio. Learning with a wasserstein loss. In Corinna Cortes, Neil D. Lawrence, Daniel D. Lee, Masashi Sugiyama, and Roman Garnett, editors,Advances in Neural Information Processing Systems 28: Annual Conference on Neural Information Processing Systems 2015, December 7-12, 2...

work page 2015

-

[18]

Aude Genevay, Marco Cuturi, Gabriel Peyré, and Francis R. Bach. Stochastic optimization for large-scale optimal transport. In Daniel D. Lee, Masashi Sugiyama, Ulrike von Luxburg, Isabelle Guyon, and Roman Garnett, editors,Advances in Neural Information Processing Systems 29: Annual Conference on Neural Information Processing Systems 2016, December 5- 10, ...

work page 2016

-

[19]

Ishaan Gulrajani, Faruk Ahmed, Martín Arjovsky, Vincent Dumoulin, and Aaron C. Courville. Improved training of wasserstein gans. In Isabelle Guyon, Ulrike von Luxburg, Samy Bengio, Hanna M. Wallach, Rob Fergus, S. V . N. Vishwanathan, and Roman Garnett, edi- tors,Advances in Neural Information Processing Systems 30: Annual Conference on Neu- ral Informati...

work page 2017

-

[20]

Tape, Guy Wolf, and Smita Krishnaswamy

Guillaume Huguet, Alexander Tong, María Ramos Zapatero, Christopher J. Tape, Guy Wolf, and Smita Krishnaswamy. Geodesic sinkhorn for fast and accurate optimal transport on manifolds. In2023 IEEE 33rd International Workshop on Machine Learning for Signal Processing (MLSP), pages 1–6. IEEE, 2023

work page 2023

-

[21]

Tomasz Kacprzak, Francois Kamper, Michael W. Weiss, Gianluca Janka, Ann M. Dillner, and Satoshi Takahama. Scalable approximate algorithms for optimal transport linear mod- els.ArXiv, abs/2504.04609, 2025. URL https://api.semanticscholar.org/CorpusID: 277621015

-

[22]

Kelvin Kan, Xingjian Li, and Stanley J. Osher. Ot-transformer: A continuous-time transformer architecture with optimal transport regularization.CoRR, abs/2501.18793, 2025. doi: 10.48550/ ARXIV .2501.18793. URLhttps://doi.org/10.48550/arXiv.2501.18793

-

[23]

L. Kantorovitch. On the translocation of masses.Management Science, 5:1–4, 1958. URL https://api.semanticscholar.org/CorpusID:214798034

work page 1958

-

[24]

Metric learning in optimal transport for domain adaptation

Tanguy Kerdoncuff, Rémi Emonet, and Marc Sebban. Metric learning in optimal transport for domain adaptation. In Christian Bessiere, editor,Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, IJCAI 2020, pages 2162–2168. ijcai.org, 2020. doi: 10.24963/IJCAI.2020/299. URLhttps://doi.org/10.24963/ijcai.2020/299

-

[25]

Kolkin, Jason Salavon, and Gregory Shakhnarovich

Nicholas I. Kolkin, Jason Salavon, and Gregory Shakhnarovich. Style transfer by relaxed optimal transport and self-similarity. InIEEE Conference on Computer Vision and Pattern Recognition, CVPR 2019, Long Beach, CA, USA, June 16-20, 2019, pages 10051–10060. Computer Vision Foundation / IEEE, 2019. doi: 10.1109/CVPR.2019.01029

-

[26]

Importance sparsification for sinkhorn algo- rithm.J

Mengyu Li, Jun Yu, Tao Li, and Cheng Meng. Importance sparsification for sinkhorn algo- rithm.J. Mach. Learn. Res., 24:247:1–247:44, 2023. URL https://jmlr.org/papers/ v24/22-1311.html. 11

work page 2023

-

[27]

Recent advances in optimal transport for machine learning, 2024

Eduardo Fernandes Montesuma, Fred Ngolè Mboula, and Antoine Souloumiac. Recent advances in optimal transport for machine learning, 2024. URL https://arxiv.org/abs/2306. 16156

work page 2024

-

[28]

Cambridge University Press, 2014

Yann Ollivier, Herve Pajot, and Cédric Villani, editors.Optimal Transport - Theory and Applications, volume 413 ofLondon Mathematical Society lec- ture note series. Cambridge University Press, 2014. ISBN 978-1-10-768949-

work page 2014

-

[29]

URL http://www.cambridge.org/de/academic/subjects/mathematics/ geometry-and-topology/optimal-transport-theory-and-applications

-

[30]

Luiz Manella Pereira and M. Hadi Amini. A survey on optimal transport for machine learning: Theory and applications.IEEE Access, 13:26506–26526, 2025. doi: 10.1109/ACCESS.2025. 3539926. URLhttps://doi.org/10.1109/ACCESS.2025.3539926

-

[31]

Gabriel Peyré. Optimal transport for machine learners.CoRR, abs/2505.06589, 2025. doi: 10.48550/ARXIV .2505.06589. URLhttps://doi.org/10.48550/arXiv.2505.06589

work page internal anchor Pith review doi:10.48550/arxiv 2025

-

[32]

Computational optimal transport.Found

Gabriel Peyré and Marco Cuturi. Computational optimal transport.Found. Trends Mach. Learn., 11(5-6):355–607, 2019. doi: 10.1561/2200000073. URL https://doi.org/10. 1561/2200000073

-

[33]

Gromov-wasserstein averaging of kernel and distance matrices

Gabriel Peyré, Marco Cuturi, and Justin Solomon. Gromov-wasserstein averaging of kernel and distance matrices. In Maria-Florina Balcan and Kilian Q. Weinberger, editors,Proceedings of the 33nd International Conference on Machine Learning, ICML 2016, New York City, NY, USA, June 19-24, 2016, JMLR Workshop and Conference Proceedings, pages 2664–2672. JMLR.org,

work page 2016

-

[34]

URLhttp://proceedings.mlr.press/v48/peyre16.html

-

[35]

Wasserstein barycenter and its application to texture mixing

Julien Rabin, Gabriel Peyré, Julie Delon, and Marc Bernot. Wasserstein barycenter and its application to texture mixing. In Alfred M. Bruckstein, Bart M. ter Haar Romeny, Alexander M. Bronstein, and Michael M. Bronstein, editors,Scale Space and Variational Methods in Computer Vision - Third International Conference, SSVM 2011, Ein-Gedi, Is- rael, May 29 -...

-

[36]

Samuel Ridler, Andrew J. Mason, and Andrea Raith. A simulation and optimisation package for emergency medical services.European Journal of Operational Research, 298(3):1101– 1113, 2022. ISSN 0377-2217. doi: https://doi.org/10.1016/j.ejor.2021.07.038. URL https: //www.sciencedirect.com/science/article/pii/S0377221721006330

-

[37]

Tim Salimans, Han Zhang, Alec Radford, and Dimitris N. Metaxas. Improving gans using optimal transport. In6th International Conference on Learning Representations, ICLR 2018, Vancouver, BC, Canada, April 30 - May 3, 2018, Conference Track Proceedings. OpenRe- view.net, 2018. URLhttps://openreview.net/forum?id=rkQkBnJAb

work page 2018

-

[38]

Linear time sinkhorn divergences using positive features

Meyer Scetbon and Marco Cuturi. Linear time sinkhorn divergences using positive features. In H. Larochelle, M. Ranzato, R. Hadsell, M.F. Balcan, and H. Lin, editors,Advances in Neural Information Processing Systems, volume 33, pages 13468–13480. Curran Associates, Inc., 2020. URL https://proceedings.neurips.cc/paper_files/paper/2020/file/ 9bde76f262285bb1...

work page 2020

-

[39]

Bernhard Schmitzer. Stabilized sparse scaling algorithms for entropy regularized transport problems.SIAM Journal on Scientific Computing, 41(3):A1443–A1481, 2019. doi: 10.1137/ 16M1106018

work page 2019

-

[40]

Espformer: Doubly-stochastic attention with expected sliced transport plans

Ashkan Shahbazi, Elaheh Akbari, Darian Salehi, Xinran Liu, Navid Naderializadeh, and Soheil Kolouri. Espformer: Doubly-stochastic attention with expected sliced transport plans. In Aarti Singh, Maryam Fazel, Daniel Hsu, Simon Lacoste-Julien, Felix Berkenkamp, Tegan Maharaj, Kiri Wagstaff, and Jerry Zhu, editors,Forty-second International Conference on Mac...

work page 2025

-

[41]

Vikas Sindhwani, Tara N. Sainath, and Sanjiv Kumar. Structured transforms for small-footprint deep learning. In Corinna Cortes, Neil D. Lawrence, Daniel D. Lee, Masashi Sugiyama, and Roman Garnett, editors,Advances in Neural Information Processing Systems 28: Annual Confer- ence on Neural Information Processing Systems 2015, December 7-12, 2015, Montreal,...

work page 2015

-

[42]

Accelerating sinkhorn algorithm with sparse newton iterations

Xun Tang, Michael Shavlovsky, Holakou Rahmanian, Elisa Tardini, Kiran Koshy Thekumpara- mpil, Tesi Xiao, and Lexing Ying. Accelerating sinkhorn algorithm with sparse newton iterations. InThe Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024. OpenReview.net, 2024. URL https://openreview.net/forum? id=K...

work page 2024

-

[43]

Optimal transport for structured data with application on graphs

Titouan Vayer, Nicolas Courty, Romain Tavenard, Laetitia Chapel, and Rémi Flamary. Optimal transport for structured data with application on graphs. In Kamalika Chaudhuri and Ruslan Salakhutdinov, editors,Proceedings of the 36th International Conference on Machine Learning, ICML 2019, 9-15 June 2019, Long Beach, California, USA, Proceedings of Machine Lea...

work page 2019

-

[44]

Gromov-wasserstein learning for graph matching and node embedding

Hongteng Xu, Dixin Luo, Hongyuan Zha, and Lawrence Carin. Gromov-wasserstein learning for graph matching and node embedding. In Kamalika Chaudhuri and Ruslan Salakhutdinov, editors,Proceedings of the 36th International Conference on Machine Learning, ICML 2019, 9- 15 June 2019, Long Beach, California, USA, Proceedings of Machine Learning Research, pages 6...

work page 2019

-

[45]

Qingnan Zhou and Alec Jacobson. Thingi10k: A dataset of 10,000 3d-printing models.arXiv preprint arXiv:1605.04797, 2016. A Efficient computation of cross_compute for|S|= 1and generalf The problem of computing efficientlycross_computeis equivalent to the problem of the efficient (near-linear) multiplication with matrices M= [f(x i +y j)]j=1,...,|B| i=1,......

-

[46]

Institutional review board (IRB) approvals or equivalent for research with human subjects Question: Does the paper describe potential risks incurred by study participants, whether such risks were disclosed to the subjects, and whether Institutional Review Board (IRB) approvals (or an equivalent approval/review based on the requirements of your country or ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.