ChladniSonify: A Visual-Acoustic Mapping Method for Chladni Patterns in New Media Art Creation

Pith reviewed 2026-05-12 02:20 UTC · model grok-4.3

The pith

ChladniSonify provides a real-time system that classifies Chladni patterns with over 99 percent accuracy and maps them directly to sound frequencies.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors build an end-to-end pipeline that classifies Chladni patterns with a lightweight convolutional neural network augmented by a CBAM attention module. On the test set the classifier reaches 99.33% accuracy with about 7 ms inference time, and each recognized pattern is mapped to its theoretical sine-wave frequency with zero deviation. With Max/MSP integrated for artistic use, average end-to-end latency stays under 50 ms.
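The latency figures in this claim depend on how they are measured, which this summary does not describe. A minimal timing sketch in Python follows; the function name `measure_latency_ms`, the warm-up count, and the stand-in stage are assumptions for illustration, not the authors' benchmark code.

```python
import statistics
import time


def measure_latency_ms(stage, inputs, warmup=5):
    """Time one pipeline stage per input and summarize in milliseconds.

    `stage` is any callable (hypothetically, the classifier's predict
    function); `inputs` is a sequence of frames or stand-in values.
    """
    # Warm-up calls are excluded so one-off setup costs do not skew the mean.
    for item in inputs[:warmup]:
        stage(item)

    samples = []
    for item in inputs:
        start = time.perf_counter()
        stage(item)
        samples.append((time.perf_counter() - start) * 1000.0)

    return {
        "n": len(samples),
        "mean_ms": statistics.fmean(samples),
        "max_ms": max(samples),
    }


# Stand-in stage; a real run would pass the trained classifier instead.
stats = measure_latency_ms(lambda frame: frame * 2, list(range(20)))
```

Reporting the maximum alongside the mean matters for interactive use, since a single slow frame is audible even when the average is low. Timing the full capture-to-sound path would also require instrumenting the Max/MSP side.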

What carries the argument

A lightweight CNN with CBAM module that focuses on slender nodal lines to classify patterns from a theory-derived dataset calibrated by simulation; this classifier then drives frequency selection in the sonification engine.
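For readers unfamiliar with CBAM, the sketch below shows the module's two attention steps as published by Woo et al. (ECCV 2018): channel attention first, then spatial attention. It is a forward pass in plain NumPy with random weights. The reduction ratio, kernel size, and tensor shapes are illustrative assumptions and say nothing about the authors' actual network configuration.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(feat, w1, w2):
    """feat: (C, H, W); w1: (C//r, C); w2: (C, C//r). Returns (C, 1, 1)."""
    avg = feat.mean(axis=(1, 2))  # global average pooling per channel
    mx = feat.max(axis=(1, 2))    # global max pooling per channel

    def shared_mlp(v):
        # Both pooled vectors pass through the same two-layer MLP (ReLU inside).
        return w2 @ np.maximum(w1 @ v, 0.0)

    return sigmoid(shared_mlp(avg) + shared_mlp(mx))[:, None, None]


def spatial_attention(feat, kernel):
    """feat: (C, H, W); kernel: (2, k, k) with odd k. Returns (1, H, W)."""
    k = kernel.shape[-1]
    pad = k // 2
    # Pool across channels, then stack the two maps as a 2-channel image.
    pooled = np.stack([feat.mean(axis=0), feat.max(axis=0)])
    padded = np.pad(pooled, ((0, 0), (pad, pad), (pad, pad)))
    windows = np.lib.stride_tricks.sliding_window_view(
        padded, (k, k), axis=(1, 2)
    )  # shape (2, H, W, k, k)
    conv = np.einsum("chwij,cij->hw", windows, kernel)
    return sigmoid(conv)[None, :, :]


def cbam(feat, w1, w2, kernel):
    refined = feat * channel_attention(feat, w1, w2)
    return refined * spatial_attention(refined, kernel)


# Illustrative shapes only: 8 channels, reduction ratio 4, 7x7 spatial kernel.
rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 16, 16))
w1 = rng.standard_normal((2, 8))
w2 = rng.standard_normal((8, 2))
kernel = rng.standard_normal((2, 7, 7))
out = cbam(feat, w1, w2, kernel)
```

Because both attention maps lie between 0 and 1, the module can only attenuate features. The spatial map is the part that could plausibly favour thin structures such as nodal lines, which is the behaviour the paper attributes to it.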

Load-bearing premise

The simulated Chladni patterns from numerical programming and finite element calibration sufficiently match real-world physical patterns for the classifier to perform reliably in artistic applications.

What would settle it

Running the classifier on photographs of actual sand patterns formed on vibrating plates and measuring whether accuracy stays near 99% or drops significantly.
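Whether a drop counts as significant depends on how many physical photographs are tested. One way to make that concrete is a Wilson score interval on the measured accuracy, sketched below in Python. The function name and the example counts are hypothetical; no real-photo results exist in the paper.

```python
import math


def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1.0 + z * z / total
    centre = (p + z * z / (2.0 * total)) / denom
    half = z * math.sqrt(p * (1.0 - p) / total + z * z / (4.0 * total * total)) / denom
    return centre - half, centre + half


# Hypothetical outcome: 180 of 200 real photographs classified correctly.
low, high = wilson_interval(180, 200)
```

If the upper bound of the interval for real photographs falls below the simulated 99.33%, the simulation-to-reality gap is measurable at that sample size.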

Figures

read the original abstract

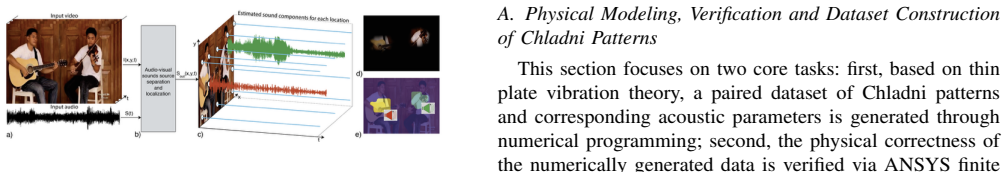

In new media art creation, the mapping between vision and hearing is often subjective. As a classic carrier of sound visualization, Chladni patterns have great potential in building audio-visual mapping mechanisms. However, existing tools face pain points: high technical barriers for simulation, offline computing failing real-time interaction, and uncontrollable mapping rules in general sonification tools. To address these, this paper proposes ChladniSonify, a real-time visual-acoustic mapping method for Chladni patterns. Based on Kirchhoff-Love plate theory, we build a paired dataset via numerical programming and calibrate it using ANSYS finite element simulation. Focusing on the slender nodal lines of Chladni patterns, we adopt a lightweight CNN with CBAM to achieve high-precision, low-latency pattern classification. Finally, we build an end-to-end system in Python and Max/MSP, mapping recognized patterns to corresponding sine wave frequencies. Results show the system has excellent usability: the classification module achieves 99.33% accuracy on the test set with 7.03 ms inference latency; the mapped frequency matches the theoretical value with zero deviation; the average end-to-end latency is under 50 ms, meeting real-time interactive needs. This work provides a reproducible engineering prototype for Chladni audio-visual art creation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to introduce ChladniSonify, a real-time visual-acoustic mapping system for Chladni patterns in new media art. It generates a paired dataset via numerical programming based on Kirchhoff-Love plate theory, calibrates it with ANSYS finite element simulation, classifies patterns using a lightweight CNN with CBAM attention mechanism, and maps classified patterns to sine-wave frequencies in an integrated Python and Max/MSP pipeline. Reported results include 99.33% classification accuracy on the test set, 7.03 ms inference latency, zero deviation between mapped and theoretical frequencies, and average end-to-end latency under 50 ms, meeting real-time interactive requirements.

Significance. If the simulation-to-reality gap is addressed, the work supplies a reproducible engineering prototype that could lower barriers for artists by automating objective audio-visual mappings from Chladni figures. Its strengths are data generation and calibration grounded in established plate theory, latency low enough for interactive use, and an end-to-end implementation that directly addresses the offline-computing and subjective-mapping problems of existing tools.

Major comments (2)

- [Results] Results section: The 99.33% accuracy, 7.03 ms inference latency, zero frequency deviation, and <50 ms end-to-end latency are obtained exclusively on a test set generated from Kirchhoff-Love theory and ANSYS calibration. No experiments using camera-captured images from physical Chladni setups (e.g., sand on vibrating plates) are reported, leaving the claim of suitability for authentic patterns in real-time artistic applications untested against real-world factors such as irregular nodal lines or lighting artifacts.

- [Dataset construction and experimental setup] Dataset construction and experimental setup: The manuscript provides no details on the total number of generated patterns, the train-test split ratio, or any cross-validation procedure used to support the 99.33% accuracy figure. These omissions are load-bearing for evaluating the reliability of the central classification and latency claims.
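The split details requested in the second major comment are cheap to make reproducible. The Python sketch below shows a seeded, per-class (stratified) train-test split whose ratio and seed can be reported verbatim. The function name, the 80/20 ratio, and the toy labels are assumptions, since the manuscript does not state its own procedure.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices) with every class represented in both."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)  # fixed seed so the split can be reproduced
    train, test = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_test = max(1, round(len(indices) * test_fraction))
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)


# Toy labels: two pattern classes with ten samples each.
labels = [0] * 10 + [1] * 10
train_idx, test_idx = stratified_split(labels, test_fraction=0.2, seed=1)
```

Publishing the seed, the fraction, and the per-class counts alongside the accuracy would let readers judge how much weight the 99.33% figure can bear.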

Minor comments (2)

- [Abstract] Abstract: The description of the classification module would benefit from stating the number of pattern classes and the test-set size to contextualize the accuracy metric.

- [Implementation] Implementation details: The integration of the CBAM module within the CNN backbone and the exact frequency-mapping logic in Max/MSP could be clarified with pseudocode or a diagram for better reproducibility.
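On the Python-to-Max/MSP hand-off raised in the last comment, one common pattern is to send the selected frequency as an OSC message over UDP, which Max can read with a `udpreceive` object. The sketch below encodes a single-float OSC 1.0 message using only the standard library. The address `/chladni/freq`, the port, and the choice of OSC itself are assumptions; the review does not say which transport the authors use.

```python
import socket
import struct


def _osc_pad(raw):
    """Null-terminate a byte string and pad it to a multiple of 4 bytes."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def osc_float_message(address, value):
    """Encode an OSC 1.0 message carrying one big-endian float32 argument."""
    return (
        _osc_pad(address.encode("ascii"))
        + _osc_pad(b",f")
        + struct.pack(">f", value)
    )


def send_frequency(freq_hz, host="127.0.0.1", port=7400):
    """Send the frequency to a listener (hypothetically a Max patch) over UDP."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_float_message("/chladni/freq", freq_hz), (host, port))


message = osc_float_message("/chladni/freq", 440.0)
```

UDP keeps the hop connectionless and cheap, which suits a latency budget of tens of milliseconds, at the cost of no delivery guarantee.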

Simulated Author's Rebuttal

We thank the referee for the thorough and constructive review. The comments highlight important aspects of our evaluation and reporting that we will address in the revision. Below we respond point by point to the major comments.


- Referee: [Results] Results section: The 99.33% accuracy, 7.03 ms inference latency, zero frequency deviation, and <50 ms end-to-end latency are obtained exclusively on a test set generated from Kirchhoff-Love theory and ANSYS calibration. No experiments using camera-captured images from physical Chladni setups (e.g., sand on vibrating plates) are reported, leaving the claim of suitability for authentic patterns in real-time artistic applications untested against real-world factors such as irregular nodal lines or lighting artifacts.

Authors: We agree that all reported metrics derive from the simulated and ANSYS-calibrated dataset. The manuscript focuses on establishing a reproducible, theory-grounded pipeline for real-time mapping, with the simulation calibrated to match finite-element results. We acknowledge that this leaves the simulation-to-reality gap untested for factors such as lighting variations or irregular nodal lines in physical setups. In the revised manuscript we will add an explicit Limitations subsection that states the current evaluation is simulation-based, qualifies the suitability claims for artistic applications, and outlines planned future work involving physical plate experiments and camera capture. No new physical data will be collected for this revision, but the text will be adjusted to avoid overstatement.

Revision: partial

- Referee: [Dataset construction and experimental setup] Dataset construction and experimental setup: The manuscript provides no details on the total number of generated patterns, the train-test split ratio, or any cross-validation procedure used to support the 99.33% accuracy figure. These omissions are load-bearing for evaluating the reliability of the central classification and latency claims.

Authors: We apologize for these omissions. The revised manuscript will report the exact total number of generated patterns, the train-test split ratio employed, and the rationale for not using k-fold cross-validation (dataset size and computational considerations). These additions will allow readers to assess the reliability of the 99.33% accuracy and latency figures.

Revision: yes

- Unresolved after this revision: physical validation on camera-captured Chladni patterns, which would require new hardware experiments and data collection not performed in the present study.

Circularity Check

No significant circularity in the derivation chain


The paper generates a synthetic dataset from Kirchhoff-Love plate theory via numerical programming, calibrates it with ANSYS, trains and evaluates a CNN+CBAM classifier on held-out portions of that same synthetic data, and implements a direct lookup mapping from classified pattern IDs to the frequencies used to generate them. The 99.33% test accuracy is a standard supervised-learning metric on the synthetic distribution and does not reduce to the inputs by construction. The reported zero frequency deviation follows immediately from the identity mapping (correct classification yields the exact generating frequency) but is presented only as confirmation of intended system behavior, not as an independent empirical prediction or load-bearing justification for the method. No self-citations, uniqueness theorems, ansatzes, or renamings of known results appear in the chain. The pipeline is therefore self-contained within its simulated domain; absence of physical experiments is a generalizability limitation, not a circularity in the logical derivation.
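The point about zero frequency deviation can be seen in a few lines of Python. In the sketch below the class-to-frequency table holds placeholder values, not the paper's frequencies, and the function names are hypothetical. The deviation is zero whenever the predicted class equals the true class, and otherwise equals the gap between two table entries.

```python
# Placeholder table: class ID -> frequency (Hz) used to generate that pattern.
GENERATING_FREQ_HZ = {0: 110.0, 1: 220.0, 2: 330.0}


def map_to_frequency(class_id):
    """Direct lookup from a classified pattern ID to its generating frequency."""
    return GENERATING_FREQ_HZ[class_id]


def frequency_deviation(predicted_class, true_class):
    """Absolute difference between the mapped and the theoretical frequency."""
    return abs(map_to_frequency(predicted_class) - map_to_frequency(true_class))


correct_case = frequency_deviation(1, 1)   # classifier was right
mistaken_case = frequency_deviation(0, 1)  # classifier confused two patterns
```

The mapping stage therefore adds no error of its own, and all frequency error is inherited from misclassification. That is why the zero-deviation result confirms the wiring rather than measuring anything independent.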

Axiom & Free-Parameter Ledger

Axioms (1)

- Domain assumption: Kirchhoff-Love plate theory accurately describes the nodal lines of Chladni patterns for the purpose of generating training data.

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/ArithmeticFromLogic.lean · reality_from_one_distinction · unclear

  Matched passage: "Based on the classical Kirchhoff-Love thin plate vibration theory, this study constructs a paired dataset... natural frequency $f_{n,m} = \frac{1}{2\pi a^{2}} \sqrt{\frac{D}{\rho h}} \, \lambda_{n,m}$"
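The natural-frequency expression quoted above can be evaluated directly. The Python sketch below follows the formula exactly as quoted, with the frequency parameter entering linearly (many textbooks write the same relation with the parameter squared, so which convention the paper's parameter follows is an assumption here). The flexural rigidity uses the standard thin-plate definition D = E h^3 / (12 (1 - nu^2)). The material values and the parameter value 20.0 are placeholders, not figures from the paper.

```python
import math


def flexural_rigidity(youngs_modulus, thickness, poisson_ratio):
    """Standard thin-plate flexural rigidity D = E h^3 / (12 (1 - nu^2))."""
    return youngs_modulus * thickness**3 / (12.0 * (1.0 - poisson_ratio**2))


def natural_frequency(lam, a, D, rho, h):
    """f = lam / (2 pi a^2) * sqrt(D / (rho h)), as quoted from the paper.

    lam: dimensionless frequency parameter for mode (n, m)
    a:   characteristic plate dimension in metres
    rho: density in kg/m^3, h: thickness in metres
    """
    return lam / (2.0 * math.pi * a**2) * math.sqrt(D / (rho * h))


# Placeholder aluminium-like plate; every number here is illustrative.
D_example = flexural_rigidity(70e9, 0.001, 0.33)
f_example = natural_frequency(lam=20.0, a=0.2, D=D_example, rho=2700.0, h=0.001)
```

Because the frequency scales with 1/a^2 and with sqrt(D), small errors in plate size or stiffness shift every class frequency together. This is one reason calibration against finite-element results matters for the mapping.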

