Total Generalized Variation regularization closes the gap between neural-field and classical methods in seismic travel-time tomography

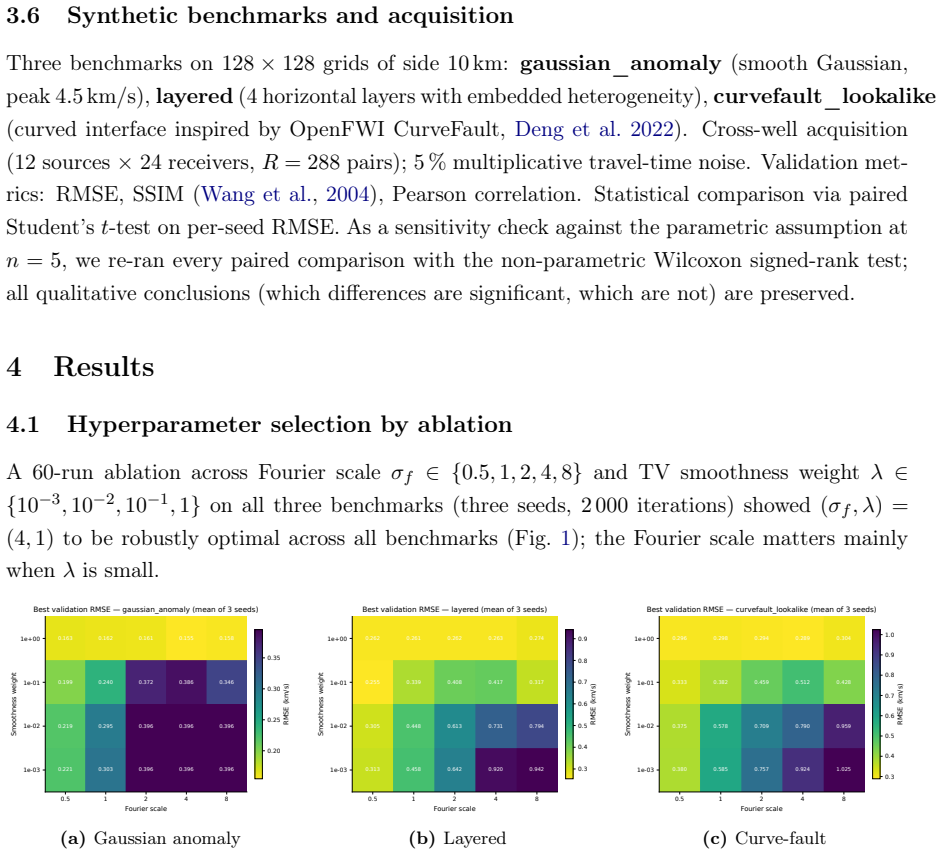

Pith reviewed 2026-05-12 04:15 UTC · model grok-4.3

The pith

Neural-field tomography with jointly optimized TGV² regularization matches classical grid solvers on smooth models and outperforms them on layered and faulted benchmarks.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

MIMIR-TGV² ties a classical FMM-LSMR baseline with auto-tuned hyperparameters on the Gaussian benchmark and significantly outperforms it on layered (44 percent RMSE reduction) and curved-fault (33 percent reduction) benchmarks. Replacing TGV² with TV degrades performance on Gaussian and layered models, while curriculum-annealed TV improves Gaussian RMSE by only 5.4 percent, confirming that TV's staircase bias is intrinsic. The results empirically validate the Bredies-Kunisch-Pock prediction that piecewise-affine priors are better suited to subsurface velocity recovery than piecewise-constant TV priors, and the central design choice in physics-informed neural-field inversion is the regularizer, not the network architecture.

What carries the argument

Second-order total generalized variation (TGV²) whose auxiliary vector field is parametrized by a second neural network and jointly optimized with the Fourier-feature velocity network, enforcing piecewise-affine smoothness without classical inner primal-dual loops.
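For orientation, the second-order TGV functional of Bredies, Kunisch, and Pock is commonly written in the minimization form below. The symbols $u$ (velocity field), $w$ (auxiliary vector field), and the weights $\alpha_0, \alpha_1$ are notation assumed here for illustration; the paper's own notation and weighting may differ.

$$
\mathrm{TGV}^2_{\alpha}(u) \;=\; \min_{w}\; \alpha_1 \int_{\Omega} \lvert \nabla u - w \rvert \, dx \;+\; \alpha_0 \int_{\Omega} \lvert \mathcal{E}(w) \rvert \, dx,
\qquad
\mathcal{E}(w) = \tfrac{1}{2}\left( \nabla w + \nabla w^{\top} \right)
$$

Read this way, the design described above amounts to representing $w$ by the second network and minimizing over its parameters together with those of the velocity network, instead of solving the inner minimization over $w$ with a separate primal-dual loop.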

If this is right

- Replacing TGV² with TV degrades performance on Gaussian and layered benchmarks.

- Curriculum-annealed TV improves Gaussian RMSE by only 5.4 percent, showing the staircase bias is intrinsic to the regularizer.

- Piecewise-affine priors suit subsurface velocity recovery better than piecewise-constant TV priors.

- The central design choice in neural-field inversion is the regularizer rather than network architecture.

- The full pipeline reproduces in under one hour on consumer hardware.
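The contrast between piecewise-constant and piecewise-affine priors in the bullets above can be illustrated on a 1D signal. The sketch below is an assumption-laden toy: it uses forward differences on a short list, hypothetical weights `alpha1` and `alpha0`, and evaluates the TGV² objective for one hand-picked auxiliary field `w` rather than minimizing over it. It is not the paper's continuous neural-field evaluation.

```python
def forward_diff(values):
    """Forward differences of a 1D sequence (one element shorter than the input)."""
    return [b - a for a, b in zip(values, values[1:])]


def total_variation(u):
    """Discrete 1D total variation: sum of absolute first differences."""
    return sum(abs(d) for d in forward_diff(u))


def tgv2_objective(u, w, alpha1=1.0, alpha0=2.0):
    """TGV^2 objective for ONE candidate auxiliary field w.

    Because TGV^2 is a minimum over w, this value is an upper bound on
    TGV^2(u). The weights alpha1 and alpha0 are hypothetical.
    """
    du = forward_diff(u)
    first_order = sum(abs(d - wi) for d, wi in zip(du, w))
    second_order = sum(abs(d) for d in forward_diff(w))
    return alpha1 * first_order + alpha0 * second_order


# A linear ramp: smooth, constant slope of 0.5 per sample.
ramp = [0.5 * i for i in range(9)]

# Choosing w equal to the slope makes both TGV^2 terms vanish.
w_ramp = forward_diff(ramp)

tv_ramp = total_variation(ramp)
tgv_ramp = tgv2_objective(ramp, w_ramp)

print("TV of ramp:", tv_ramp)        # 4.0
print("TGV^2 bound of ramp:", tgv_ramp)  # 0.0
```

TV charges the ramp its full rise, so a TV-regularized fit is pushed toward flat plateaus separated by jumps (staircasing). The TGV² objective reaches zero for the same ramp, which is the sense in which it favors piecewise-affine fields.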

Where Pith is reading between the lines

- The continuous neural representation may allow easier handling of irregular or sparse acquisition geometries than fixed-grid classical methods.

- The same joint neural parametrization of TGV² could be tested on other inverse problems that currently use total-variation or L2 smoothing, such as full-waveform inversion.

- If the performance advantage persists on field data with incomplete coverage, hybrid classical-neural workflows may become practical for complex geology.

Load-bearing premise

The three synthetic benchmarks with cross-well geometry and 5 percent Gaussian noise are representative of the regularization behavior that appears on real, noisy, incomplete field data.

What would settle it

Running the identical comparison on real cross-well seismic field data from a site with independent borehole velocity logs or known geologic structures and checking whether the reported RMSE reductions and statistical significance still hold.

Original abstract

Travel-time tomography forces a trade-off between mesh resolution and stability in which the regularizer choice dominates what can be recovered. We introduce MIMIR, a differentiable framework that represents the 2D velocity field as a Fourier-feature neural network, replacing the grid-based slowness vector with a continuous, infinitely differentiable function. Prior neural-field tomography has staircased smooth fields under total-variation (TV) priors or oscillated near interfaces under $L^2$ Laplacian smoothing. We adopt second-order total generalized variation (TGV$^2$) and parametrize its auxiliary vector field as a second neural network jointly optimized with the velocity field, eliminating the inner Chambolle-Pock primal-dual loop that classically dominates TGV computation. On three synthetic benchmarks (Gaussian, horizontally layered, curved-fault inspired by OpenFWI) using cross-well acquisition, 5% travel-time noise, and five seeds, MIMIR-TGV$^2$ ties a classical FMM-LSMR baseline with auto-tuned hyperparameters on the Gaussian ($p=0.134$, paired $t$-test) and significantly outperforms it on layered ($p<0.0001$, 44% RMSE reduction) and curved-fault ($p=0.0002$, 33% reduction). Replacing TGV$^2$ with TV degrades performance on Gaussian ($p=0.004$) and layered ($p=0.003$); curriculum-annealed TV improves Gaussian RMSE by only 5.4%, confirming that TV's staircase bias is intrinsic to the regularizer rather than a scheduling artifact. The results empirically validate the Bredies-Kunisch-Pock prediction that piecewise-affine priors are better suited to subsurface velocity recovery than piecewise-constant TV priors. We argue that the central design choice in physics-informed neural-field inversion is not the network architecture but the regularizer. The full pipeline reproduces in under one hour on consumer hardware.
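The abstract does not spell out the Fourier-feature mapping. A minimal sketch of the widely used random Fourier-feature encoding (cosines and sines of a random linear projection of the input coordinates) is given below, under the assumption that MIMIR uses something of this general form. The function names, the Gaussian frequency matrix, its `scale`, and the number of features are all hypothetical choices for illustration.

```python
import math
import random


def make_frequency_matrix(num_features, input_dim=2, scale=1.0, seed=0):
    """Draw a (num_features x input_dim) matrix of Gaussian frequencies.

    The Gaussian distribution and the scale are illustrative assumptions.
    """
    rng = random.Random(seed)
    return [[rng.gauss(0.0, scale) for _ in range(input_dim)]
            for _ in range(num_features)]


def fourier_features(point, freq_matrix):
    """Map a coordinate to [cos(2*pi*B*x), sin(2*pi*B*x)]."""
    projections = [
        2.0 * math.pi * sum(b * x for b, x in zip(row, point))
        for row in freq_matrix
    ]
    return [math.cos(p) for p in projections] + [math.sin(p) for p in projections]


# Encode one 2D location (x, z) with 4 random frequencies.
B = make_frequency_matrix(num_features=4, scale=2.0)
encoded = fourier_features((0.25, 0.75), B)
print(len(encoded))  # 8
```

In a neural-field model, a vector like `encoded` would be fed to a small fully connected network that outputs the velocity at that location. Because cosine and sine are smooth, the composed function is differentiable in the coordinates as long as the network's activations are smooth, which is what a derivative-based regularizer such as TGV² relies on.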

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces MIMIR, a differentiable neural-field framework for 2D seismic travel-time tomography in which the velocity model is represented by a Fourier-feature neural network and second-order total generalized variation (TGV²) is imposed by jointly optimizing a second neural network that parametrizes the auxiliary vector field. On three synthetic cross-well benchmarks (Gaussian, horizontally layered, curved-fault) with 5% i.i.d. Gaussian noise, MIMIR-TGV² is reported to tie an auto-tuned classical FMM-LSMR baseline on the Gaussian model (p=0.134) and to outperform it on the layered (44% RMSE reduction, p<0.0001) and curved-fault (33% reduction, p=0.0002) models; replacing TGV² by TV degrades performance, supporting the preference for piecewise-affine priors.
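The percentages quoted above are relative RMSE reductions. A small sketch of how such a figure is typically computed follows; the exact RMSE definition used in the paper (evaluation grid, any normalization) is not stated here, so the plain pointwise form and the function names below are assumptions.

```python
import math


def rmse(predicted, truth):
    """Root-mean-square error between two equally long sequences."""
    if len(predicted) != len(truth):
        raise ValueError("predicted and truth must have the same length")
    squared = [(p - t) ** 2 for p, t in zip(predicted, truth)]
    return math.sqrt(sum(squared) / len(squared))


def percent_reduction(baseline_rmse, candidate_rmse):
    """Relative RMSE reduction of the candidate versus the baseline, in percent."""
    return 100.0 * (baseline_rmse - candidate_rmse) / baseline_rmse


# Hypothetical numbers: halving the baseline error is a 50 percent reduction.
print(percent_reduction(2.0, 1.0))  # 50.0
```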

Significance. If the empirical comparisons hold, the work is significant because it supplies concrete evidence that neural-field tomography can match or exceed classical grid-based methods once an appropriate higher-order regularizer is used, thereby shifting emphasis from network architecture to regularizer design in physics-informed inversion. The reproducible pipeline that runs in under one hour on consumer hardware and the explicit comparison against an auto-tuned baseline are strengths that facilitate follow-up work.

major comments (3)

- [Abstract and §4] Abstract and §4 (Experimental results): The reported p-values and RMSE reductions are obtained from only five random seeds, yet the manuscript provides neither per-seed RMSE values, standard deviations, nor a precise description of the paired t-test implementation (including degrees of freedom and any multiple-comparison correction). This information is load-bearing for the central claim that MIMIR-TGV² “ties” or “significantly outperforms” the classical baseline.

- [Methods] Methods (TGV² parametrization): The elimination of the classical Chambolle-Pock inner loop rests on the assumption that a second neural network can faithfully represent the TGV auxiliary vector field without introducing additional bias. No quantitative ablation, approximation-error bound, or comparison against a classical TGV solver on the same velocity field is supplied, leaving open whether the reported gains are due to TGV² itself or to the particular neural parametrization.

- [§5] §5 (Discussion): The conclusion that “the central design choice … is not the network architecture but the regularizer” is drawn exclusively from three synthetic models with complete cross-well coverage and i.i.d. Gaussian noise. Because real travel-time data typically exhibit correlated noise, incomplete illumination, and forward-model mismatch, the manuscript should either provide additional experiments on more realistic synthetics or qualify the scope of the generalization.

minor comments (3)

- [Introduction] The acronym “MIMIR” and the precise definition of the Fourier-feature mapping are introduced without an explicit equation or reference on first use.

- [Abstract] The abstract states that “curriculum-annealed TV improves Gaussian RMSE by only 5.4%” but does not specify the annealing schedule or the number of epochs used for the curriculum; this detail should appear in the methods or supplementary material.

- [Figures] Figure captions and axis labels for the velocity-model reconstructions should explicitly state the color scale and the location of the cross-well sources/receivers to allow direct visual comparison with the quantitative RMSE values.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and constructive report. The comments highlight important aspects of statistical reporting, validation of the TGV² parametrization, and the scope of our conclusions. We address each major comment below and outline revisions that will strengthen the manuscript without altering its core claims.

- Referee: [Abstract and §4] Abstract and §4 (Experimental results): The reported p-values and RMSE reductions are obtained from only five random seeds, yet the manuscript provides neither per-seed RMSE values, standard deviations, nor a precise description of the paired t-test implementation (including degrees of freedom and any multiple-comparison correction). This information is load-bearing for the central claim that MIMIR-TGV² “ties” or “significantly outperforms” the classical baseline.

Authors: We agree that the statistical details require expansion for full transparency. In the revised manuscript we will add an appendix table that lists the per-seed RMSE values for both MIMIR-TGV² and the FMM-LSMR baseline on each of the three models, together with the corresponding standard deviations. We will also state explicitly that the paired t-tests were performed with scipy.stats.ttest_rel (n = 5 pairs, df = 4) and that no multiple-comparison correction was applied, as the tests were pre-planned and limited to the primary baseline comparison per model.

Revision: yes

- Referee: [Methods] Methods (TGV² parametrization): The elimination of the classical Chambolle-Pock inner loop rests on the assumption that a second neural network can faithfully represent the TGV auxiliary vector field without introducing additional bias. No quantitative ablation, approximation-error bound, or comparison against a classical TGV solver on the same velocity field is supplied, leaving open whether the reported gains are due to TGV² itself or to the particular neural parametrization.

Authors: The concern is well-founded; we did not supply a direct numerical check of the neural auxiliary field against a classical solver. In the revised manuscript we will add a short validation subsection that, for a representative fixed velocity field drawn from the benchmarks, reports the TGV² value obtained by the jointly optimized neural auxiliary network versus the value returned by a standard Chambolle-Pock implementation on the same field, thereby quantifying any residual approximation error.

Revision: yes

- Referee: [§5] §5 (Discussion): The conclusion that “the central design choice … is not the network architecture but the regularizer” is drawn exclusively from three synthetic models with complete cross-well coverage and i.i.d. Gaussian noise. Because real travel-time data typically exhibit correlated noise, incomplete illumination, and forward-model mismatch, the manuscript should either provide additional experiments on more realistic synthetics or qualify the scope of the generalization.

Authors: We accept that the present experiments are confined to idealized synthetic conditions. In the revised discussion we will insert an explicit qualifying paragraph that (i) reiterates the synthetic nature of the benchmarks, (ii) notes that real data may contain correlated noise and incomplete illumination, and (iii) states that the relative advantage of TGV² over TV may therefore change under those conditions, while still arguing that the regularizer remains the dominant design choice within the tested regime.

Revision: yes
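The first response above refers to a paired t-test over five seeds (n = 5 pairs, df = 4). A minimal sketch of that statistic, computed by hand with the Python standard library, is shown below. The RMSE values are made-up placeholders and not the paper's per-seed results, and the helper name `paired_t_statistic` is hypothetical. The optional cross-check uses `scipy.stats.ttest_rel`, the routine named in the response, only if SciPy happens to be installed.

```python
import math
import statistics


def paired_t_statistic(sample_a, sample_b):
    """Return (t statistic, degrees of freedom) for a paired t-test."""
    if len(sample_a) != len(sample_b):
        raise ValueError("paired samples must have the same length")
    diffs = [a - b for a, b in zip(sample_a, sample_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    return t_stat, n - 1


# Placeholder per-seed RMSE values (hypothetical, five seeds each).
rmse_neural = [0.10, 0.12, 0.11, 0.09, 0.13]
rmse_classical = [0.18, 0.21, 0.19, 0.17, 0.20]

t_stat, dof = paired_t_statistic(rmse_neural, rmse_classical)
print("t =", t_stat, "df =", dof)

# Optional cross-check and two-sided p-value, if SciPy is available.
try:
    from scipy import stats
    result = stats.ttest_rel(rmse_neural, rmse_classical)
    print("scipy t =", result.statistic, "p =", result.pvalue)
except ImportError:
    pass
```

With only four degrees of freedom the test is sensitive to a single unusual seed, which is why the referee's request for per-seed values and standard deviations matters for interpreting the reported p-values.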

Circularity Check

No circularity: results are empirical comparisons to external baseline

The paper's central claims consist of empirical RMSE reductions and p-values from direct comparisons of MIMIR-TGV² against an independent classical FMM-LSMR baseline (with auto-tuned hyperparameters) on three fixed synthetic velocity models. No equations, derivations, or self-citations reduce these performance metrics to quantities defined by the authors' own fitted parameters. The adoption of TGV² follows the external Bredies-Kunisch-Pock framework without load-bearing self-citation chains, and the auxiliary field parametrization is a methodological choice rather than a tautological redefinition. The derivation chain is self-contained because the reported superiority (e.g., 44% RMSE reduction on layered model) is measured against an external solver and ground-truth synthetics, not constructed from the method's own outputs.

Axiom & Free-Parameter Ledger

free parameters (1)

- TGV regularization weights

axioms (2)

- domain assumption: The Fourier-feature network produces an infinitely differentiable velocity field whose gradients are well-behaved for TGV computation.

- ad hoc to paper: The second neural network can faithfully represent the auxiliary vector field of TGV² without additional bias.

