Recognition: 2 theorem links

Prospective Compression in Human Abstraction Learning

Pith reviewed 2026-05-12 03:47 UTC · model grok-4.3

The pith

Humans acquire reusable abstractions by targeting compression of future tasks rather than past ones when task demands change over time.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

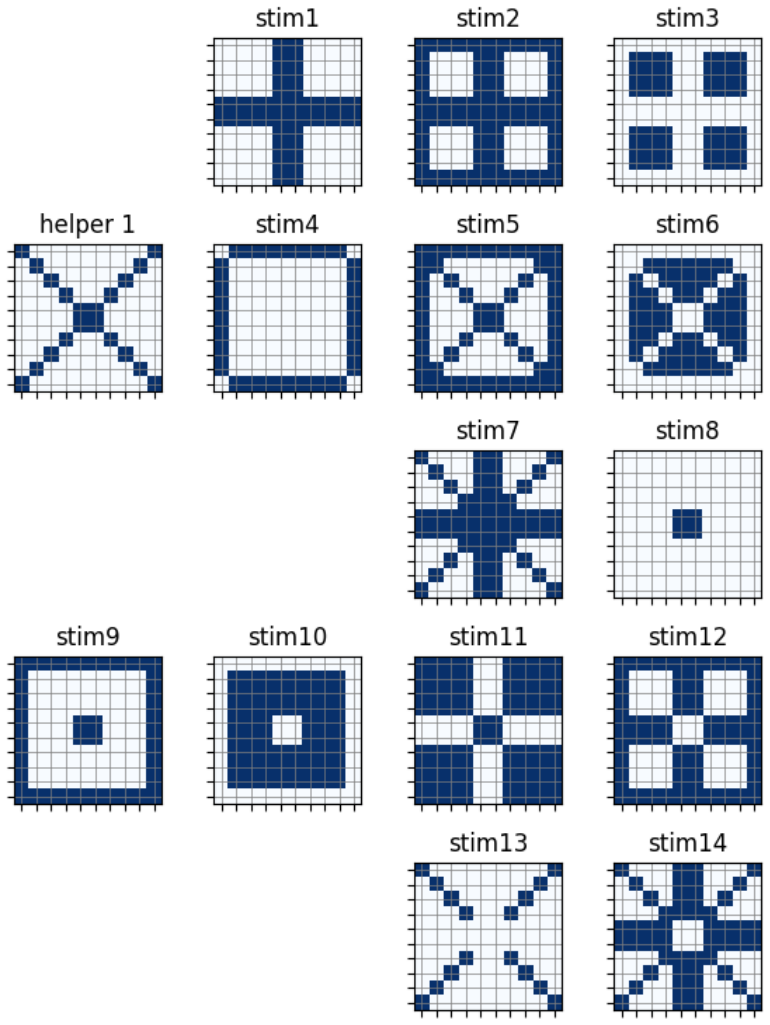

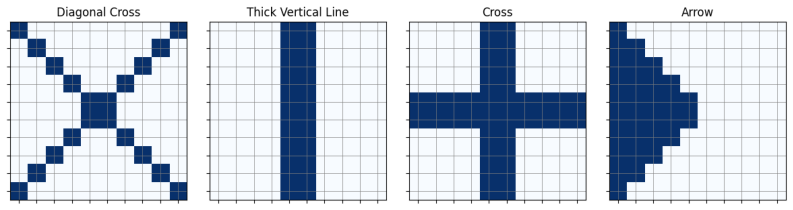

In the Pattern Builder Task with its carry-forward helpers and shifting latent curricula, human participants select abstractions in a manner consistent with minimizing the expected description length of future tasks generated by the hidden process, rather than minimizing description length over observed tasks or following patterns typical of LLM-based synthesis.

What carries the argument

Prospective compression: the mechanism of selecting reusable abstractions that reduce the anticipated cost of describing tasks that will arise later from an evolving generative process.
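The selection rule behind this mechanism picks the helper set H that maximizes the expected compression utility CU(H, P*) of future programs P*, given the curriculum C_1:t seen so far. The toy sketch below contrasts that rule with its retrospective counterpart. Everything in it is a hypothetical stand-in, not the paper's implementation: programs are tuples of primitive tokens, a helper is a contiguous token sequence callable as one token, CU is the number of tokens saved, and the predictive distribution over future programs is written by hand rather than inferred from the curriculum.

```python
from itertools import combinations

# Hypothetical toy encoding: a program is a tuple of primitive tokens and a
# helper is a contiguous token sequence that can be called as a single token.


def description_length(program: tuple[str, ...], helpers) -> int:
    """Fewest tokens needed to write `program` when each helper call costs 1.

    Dynamic programming over prefixes: best[i] is the cheapest encoding of
    program[:i], reached by emitting either one primitive or one helper call.
    """
    n = len(program)
    best = [0] + [n + 1] * n
    for i in range(1, n + 1):
        best[i] = best[i - 1] + 1  # emit one primitive
        for h in helpers:
            j = i - len(h)
            if j >= 0 and program[j:i] == h:
                best[i] = min(best[i], best[j] + 1)  # emit one helper call
    return best[n]


def compression_utility(helpers, program) -> int:
    """CU(H, P): tokens saved by rewriting `program` with the library `helpers`."""
    return len(program) - description_length(program, helpers)


def retrospective_score(helpers, past) -> float:
    """Total compression of the tasks already observed."""
    return sum(compression_utility(helpers, p) for p in past)


def prospective_score(helpers, future) -> float:
    """Expected compression under an assumed predictive distribution over
    future programs, given as (probability, program) pairs."""
    return sum(w * compression_utility(helpers, p) for w, p in future)


def select_library(candidates, k, score):
    """Return the size-k subset of candidate helpers with the highest score."""
    return max(combinations(candidates, k), key=score)


LINE_ROTATE = ("line", "rotate")
CIRCLE_MIRROR = ("circle", "mirror")
candidates = [LINE_ROTATE, CIRCLE_MIRROR]

# Observed tasks favor line/rotate; the assumed future shifts to circle/mirror.
past = [("line", "rotate", "line", "rotate"), ("line", "rotate", "circle")]
future = [(0.5, ("circle", "mirror", "circle", "mirror")),
          (0.5, ("circle", "mirror", "line"))]

retro_pick = select_library(candidates, 1, lambda H: retrospective_score(H, past))
pros_pick = select_library(candidates, 1, lambda H: prospective_score(H, future))
print(retro_pick, pros_pick)
```

With identical candidates, the retrospective criterion keeps line/rotate because it dominates the observed tasks, while the prospective criterion picks circle/mirror because that is where the assumed future probability mass lies.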

If this is right

- Standard retrospective library-learning algorithms cannot fully account for human abstraction choices under non-stationary conditions.

- LLM-based program synthesis models miss the prospective sensitivity humans show to latent task structure.

- Effective online library learning requires explicit mechanisms for anticipating future task distributions.

- Complementary latent curricula can be used to experimentally dissociate prospective from retrospective strategies.

- Human performance in the task reflects detection of the hidden generative process rather than surface statistics of past examples.

Where Pith is reading between the lines

- Program synthesis systems could improve by adding forward-looking prediction of task distributions instead of relying only on compression of collected examples.

- The same prospective logic may apply in other shifting domains such as adaptive skill acquisition or sequential decision making.

- If prospective compression holds, it predicts that disrupting future-task expectations while keeping past data fixed should alter abstraction choices in measurable ways.

- Extending the task to include noisier or more open-ended primitives could test whether the effect survives when the set of possible helpers is less constrained.

Load-bearing premise

The Pattern Builder Task and its six computational models isolate prospective compression behavior from retrospective compression and from LLM inductive biases.

What would settle it

If participants' helper selections across the two complementary curricula match the predictions of a retrospective compression model more closely than those of a prospective model, the central claim would be falsified.
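This criterion amounts to a likelihood comparison between models. A minimal sketch, assuming (hypothetically) that each model assigns a utility to every candidate helper on each trial and that choices follow a softmax with inverse temperature `beta`. In a real analysis `beta` would be a fitted free parameter per model, and the numbers below are illustrative placeholders, not data from the paper.

```python
import math


def softmax_loglik(utilities, chosen, beta=1.0):
    """Log-probability of choosing index `chosen` under a softmax over utilities."""
    scaled = [beta * u for u in utilities]
    top = max(scaled)  # subtract the max for numerical stability
    log_z = top + math.log(sum(math.exp(s - top) for s in scaled))
    return scaled[chosen] - log_z


def compare_models(trials, beta=1.0):
    """Sum log-likelihoods of observed helper choices under each model.

    `trials` maps a curriculum name to a list of
    (prospective_utilities, retrospective_utilities, chosen_index) tuples.
    """
    ll = {"prospective": 0.0, "retrospective": 0.0}
    for records in trials.values():
        for pros_u, retro_u, chosen in records:
            ll["prospective"] += softmax_loglik(pros_u, chosen, beta)
            ll["retrospective"] += softmax_loglik(retro_u, chosen, beta)
    return ll


# Illustrative placeholder values, not data from the paper.
trials = {
    "curriculum_A": [([2.0, 0.0], [0.0, 2.0], 0), ([2.0, 0.0], [0.0, 2.0], 0)],
    "curriculum_B": [([0.0, 2.0], [2.0, 0.0], 1)],
}
ll = compare_models(trials)
claim_falsified = ll["retrospective"] > ll["prospective"]
print(ll, claim_falsified)
```

Pooling both curricula into one comparison mirrors the criterion's "across the two complementary curricula"; reporting each curriculum separately would show whether one curriculum drives the result.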

Original abstract

A core challenge in program synthesis is online library learning: the incremental acquisition of reusable abstractions under uncertainty about future task demands. Existing algorithms treat library learning as retrospective compression over a static task distribution, where the learned library is determined by the corpus of past tasks. However, real-world learning domains are often non-stationary, with tasks arising from a generative process that evolves over time. We propose and test the hypothesis that in non-stationary domains human library learning selects abstractions prospectively: targeting compression of future tasks. We study this question using the Pattern Builder Task, a visual program synthesis paradigm in which participants construct increasingly complex geometric patterns from a small set of primitives, transformations, and custom helpers that carry forward across trials. Using this task, we conduct two experiments with complementary latent curricula, designed to dissociate behaviors consistent with prospective compression from those predicted by alternative library learning accounts. Using six computational models spanning online library learning strategies, we show that human abstraction behavior reflects sensitivity to latent, non-stationary structure in the task-generating process. This behavior is consistent with prospective compression and cannot be captured by existing retrospective compression-based algorithms or by the inductive biases modeled by LLM-based program synthesis.
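The retrospective baseline described here, where the library is determined by the corpus of past tasks, can lag behind a curriculum whose generative process shifts. The toy sketch below is not one of the paper's six models; it assumes a hypothetical encoding of tasks as token sequences, a single-helper library, and a curriculum whose latent motif switches after trial 4.

```python
# Hypothetical non-stationary curriculum: the latent motif switches after trial 4.
LINE_ROTATE = ("line", "rotate")
CIRCLE_MIRROR = ("circle", "mirror")


def savings(helper, program):
    """Tokens saved by replacing non-overlapping occurrences of `helper`,
    scanned left to right, with one call each."""
    k = len(helper)
    count, i = 0, 0
    while i <= len(program) - k:
        if tuple(program[i:i + k]) == helper:
            count += 1
            i += k
        else:
            i += 1
    return count * (k - 1)


def retrospective_online(curriculum, candidates):
    """After each trial, keep the helper that best compresses every task seen so far."""
    choices = []
    for t in range(1, len(curriculum) + 1):
        seen = curriculum[:t]
        choices.append(max(candidates, key=lambda h: sum(savings(h, p) for p in seen)))
    return choices


curriculum = [LINE_ROTATE * 2] * 4 + [CIRCLE_MIRROR * 2] * 6
choices = retrospective_online(curriculum, [LINE_ROTATE, CIRCLE_MIRROR])
first_switch = choices.index(CIRCLE_MIRROR) + 1
print(f"curriculum shifts at trial 5; retrospective library switches at trial {first_switch}")
```

The lag comes from accumulated evidence: the four early line/rotate tasks keep outweighing the newer circle/mirror tasks until trial 9 (the tie at trial 8 is broken in favor of the first candidate, since `max` returns the first maximal element).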

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that human abstraction learning in non-stationary domains proceeds via prospective compression (targeting future task distributions) rather than retrospective compression over past tasks. This is tested in the Pattern Builder Task using two experiments with complementary latent curricula, where human abstraction choices are compared against six computational models spanning online library learning strategies; the results are argued to show that human behavior tracks latent non-stationary structure and cannot be explained by retrospective algorithms or LLM-based program synthesis inductive biases.

Significance. If the dissociation between prospective and retrospective accounts holds, the work would demonstrate that humans maintain sensitivity to evolving generative processes when acquiring reusable abstractions, with direct implications for cognitive models of library learning and for designing AI program synthesis systems that handle non-stationary task streams. The empirical human data and multi-model comparison constitute a strength, though verification is currently limited by missing implementation and analysis details.

major comments (3)

- [Computational Models] The central dissociation claim (human data align with prospective compression but not retrospective models or LLM synthesis) is load-bearing and requires explicit verification that the six models are faithful implementations without implicit forward-looking mechanisms or task-distribution assumptions. The manuscript must detail exact model specifications, parameter settings, and how they were adapted to the online non-stationary setting.

- [Experiments] The complementary latent curricula are presented as the key experimental manipulation to produce measurable divergence between prospective and retrospective optimal abstractions. The paper should provide quantitative evidence (e.g., simulation results or information-theoretic measures) that the curricula achieve this separation without introducing shared inductive biases that could allow retrospective models to succeed.

- [Results] Soundness is limited by the absence of reported statistical analysis details, data exclusion criteria, error bars, and model comparison metrics (e.g., likelihood ratios or cross-validation scores). These are required to substantiate that retrospective models fail to capture human choices while the prospective model succeeds.
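The requested model-comparison metrics are standard functions of each model's maximized log-likelihood. A minimal sketch with placeholder log-likelihoods and parameter counts (not results from the paper); the function names are illustrative. The likelihood-ratio test applies only to nested models, so prospective and retrospective accounts that are not nested would be compared with AIC/BIC or cross-validation instead.

```python
import math


def aic(loglik, k):
    """Akaike information criterion: 2k - 2 ln L."""
    return 2 * k - 2 * loglik


def bic(loglik, k, n):
    """Bayesian information criterion: k ln n - 2 ln L, for n observations."""
    return k * math.log(n) - 2 * loglik


def akaike_weights(aics):
    """Relative support for each model from AIC differences to the best model."""
    best = min(aics.values())
    raw = {m: math.exp(-(a - best) / 2) for m, a in aics.items()}
    total = sum(raw.values())
    return {m: r / total for m, r in raw.items()}


def lr_test_one_df(loglik_reduced, loglik_full):
    """Likelihood-ratio test for nested models differing by one parameter.

    Returns (statistic, p-value); for 1 df, P(chi2 > x) = erfc(sqrt(x / 2)).
    """
    stat = 2 * (loglik_full - loglik_reduced)
    return stat, math.erfc(math.sqrt(max(stat, 0.0) / 2))


# Placeholder fits: (maximized log-likelihood, number of free parameters).
fits = {"prospective": (-120.0, 2), "retrospective": (-135.0, 2)}
n_choices = 200
aics = {m: aic(ll, k) for m, (ll, k) in fits.items()}
bics = {m: bic(ll, k, n_choices) for m, (ll, k) in fits.items()}
weights = akaike_weights(aics)
print(aics, bics, weights)
```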

minor comments (1)

- [Introduction] Clarify the precise operational definitions of 'prospective compression' and 'retrospective compression' with reference to the specific model formulations, to avoid ambiguity in interpreting the model comparisons.

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which identify key areas requiring additional detail and analysis to strengthen the manuscript's claims. We address each major comment point by point below, committing to revisions that provide the requested verification without altering the core findings.

Point-by-point responses

- Referee: [Computational Models] The central dissociation claim (human data align with prospective compression but not retrospective models or LLM synthesis) is load-bearing and requires explicit verification that the six models are faithful implementations without implicit forward-looking mechanisms or task-distribution assumptions. The manuscript must detail exact model specifications, parameter settings, and how they were adapted to the online non-stationary setting.

  Authors: We agree that explicit verification of model fidelity is essential. In the revised manuscript, we will expand the Methods section and add a dedicated supplementary appendix containing the full pseudocode for each of the six models, exact parameter settings (including any regularization or search hyperparameters), and step-by-step descriptions of their adaptation to the online, non-stationary Pattern Builder Task. These implementations follow the original retrospective formulations strictly, with no forward-looking components or assumptions about future task distributions introduced.

  Revision: yes

- Referee: [Experiments] The complementary latent curricula are presented as the key experimental manipulation to produce measurable divergence between prospective and retrospective optimal abstractions. The paper should provide quantitative evidence (e.g., simulation results or information-theoretic measures) that the curricula achieve this separation without introducing shared inductive biases that could allow retrospective models to succeed.

  Authors: We will incorporate a new subsection under Experiments that reports simulation results and information-theoretic analyses of the two curricula. This will include mutual information calculations between the evolving task distributions and the optimal libraries under prospective versus retrospective strategies, demonstrating measurable divergence. We will also analyze the generative processes of the curricula to confirm the absence of shared inductive biases that could inadvertently favor retrospective models.

  Revision: yes

- Referee: [Results] Soundness is limited by the absence of reported statistical analysis details, data exclusion criteria, error bars, and model comparison metrics (e.g., likelihood ratios or cross-validation scores). These are required to substantiate that retrospective models fail to capture human choices while the prospective model succeeds.

  Authors: We acknowledge that these details are currently underspecified. The revised Results section will include comprehensive statistical reporting: data exclusion criteria (e.g., based on completion rates and outlier detection), error bars on all relevant figures, full model comparison metrics including likelihood ratios, AIC/BIC scores, and cross-validation performance for human choice prediction under each model. These additions will quantitatively support the dissociation between prospective and retrospective accounts.

  Revision: yes
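One possible reading of the promised information-theoretic analysis treats the curriculum as one variable and the library a strategy deems optimal as the other, then measures how strongly the curriculum determines the choice. A hypothetical sketch with placeholder counts (not simulation output from the paper): if a strategy's optimal library differs between two equiprobable curricula, the mutual information is 1 bit; if the strategy picks the same library regardless of curriculum, it is 0.

```python
import math
from collections import Counter


def mutual_information(pairs):
    """Mutual information in bits between the two coordinates of observed pairs."""
    joint = Counter(pairs)
    total = sum(joint.values())
    px, py = Counter(), Counter()
    for (x, y), c in joint.items():
        px[x] += c
        py[y] += c
    mi = 0.0
    for (x, y), c in joint.items():
        p_xy = c / total
        # p(x, y) / (p(x) p(y)) rewritten with raw counts
        mi += p_xy * math.log2(p_xy * total * total / (px[x] * py[y]))
    return mi


# Placeholder (curriculum, optimal library) pairs from hypothetical simulations.
prospective = [("A", "line_rotate")] * 10 + [("B", "circle_mirror")] * 10
retrospective = [("A", "line_rotate")] * 10 + [("B", "line_rotate")] * 10
print(mutual_information(prospective), mutual_information(retrospective))
```

A gap between the two values is the kind of "measurable divergence" the response describes; with more than two curricula or libraries the upper bound rises above 1 bit.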

Circularity Check

No significant circularity in empirical dissociation of compression strategies

full rationale

The paper's claims rest on human behavioral data collected in the Pattern Builder Task under two complementary latent curricula, compared against six independently specified computational models (retrospective compression algorithms and LLM-based synthesis). No mathematical derivation chain, self-definitional equations, fitted parameters presented as predictions, or load-bearing self-citations appear in the provided text; the central result is an empirical contrast between observed human abstraction choices and model predictions, which remains falsifiable against external benchmarks and does not reduce to its own inputs by construction.

Axiom & Free-Parameter Ledger

free parameters (1)

- parameters in the six computational models

axioms (1)

- domain assumption: the Pattern Builder Task and latent-curriculum designs isolate prospective vs. retrospective compression behaviors

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel (unclear): We propose and test the hypothesis that in non-stationary domains human library learning selects abstractions prospectively: targeting compression of future tasks... $H^* = \arg\max_{H \subset P} \mathbb{E}_{P(P^* \mid C_{1:t})}\left[\mathrm{CU}(H, P^*)\right]$

- IndisputableMonolith/Foundation/BranchSelection.lean · branch_selection (unclear): Existing algorithms treat library learning as retrospective compression over a static task distribution... corpus compression utility of human top-k helpers

