GraphInstruct: A Progressive Benchmark for Diagnosing Capability Gaps in LLM Graph Generation

Pith reviewed 2026-05-12 03:48 UTC · model grok-4.3

The pith

A progressive benchmark shows verification-guided iteration with adaptive prompting outperforms standard methods for LLM graph generation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

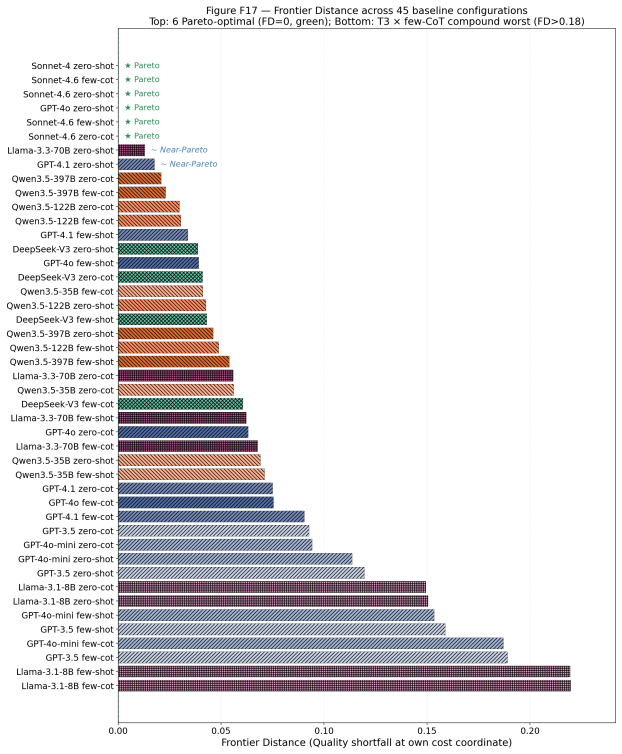

GraphInstruct organizes the evaluation of LLM graph generation into six progressive complexity levels and five dimensions, supported by 800 hand-authored instructions and 1,582 algorithmically synthesized reference solutions. Across 12 models and 45 (model, strategy) configurations, it finds that discriminative power peaks at multi-constraint composition, that no single prompting strategy dominates, and that failures on domain-semantic constraints do not improve with iteration. Building on these signals, a verification-guided iterative framework with constraint-aware adaptive prompting exceeds the best results obtained by conventional prompt engineering on the tested target models.

What carries the argument

The progressive stratification into six complexity levels and five evaluation dimensions, paired with the verification-guided iterative framework using constraint-aware adaptive prompting.
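The review does not reproduce the paper's algorithm, so the following is only a minimal sketch of what a verification-guided loop with constraint-aware re-prompting could look like. Every name here (`verify`, `refine`, `stub_generator`, the constraint names, the prompt wording) is a hypothetical illustration, and the generator is a deterministic stand-in for an LLM call rather than the authors' implementation.

```python
from typing import Callable, Dict, List, Set, Tuple

Edge = Tuple[int, int]
Graph = Set[Edge]
Constraint = Callable[[Graph], bool]


def verify(graph: Graph, constraints: Dict[str, Constraint]) -> List[str]:
    """Return the names of the constraints that the graph violates."""
    return [name for name, check in constraints.items() if not check(graph)]


def refine(
    generate: Callable[[str], Graph],
    constraints: Dict[str, Constraint],
    instruction: str,
    max_rounds: int = 3,
) -> Tuple[Graph, int, List[str]]:
    """Generate, verify, and re-prompt with the violated constraints.

    Returns the last graph, the number of rounds used, and any constraints
    that are still violated when the loop stops.
    """
    prompt = instruction
    graph: Graph = set()
    failed: List[str] = list(constraints)
    rounds = 0
    for rounds in range(1, max_rounds + 1):
        graph = generate(prompt)
        failed = verify(graph, constraints)
        if not failed:
            break
        # Constraint-aware adaptation (assumed form): name the failures.
        prompt = instruction + "\nViolated constraints: " + ", ".join(failed)
    return graph, rounds, failed


def stub_generator(prompt: str) -> Graph:
    """Hypothetical stand-in for an LLM: fixes its output once told what failed."""
    if "three_edges" in prompt:
        return {(0, 1), (1, 2), (0, 2)}
    return {(0, 1), (1, 2)}


constraints: Dict[str, Constraint] = {
    "three_edges": lambda g: len(g) == 3,
    "nodes_within_0_to_2": lambda g: all(0 <= v <= 2 for e in g for v in e),
}
graph, rounds, failed = refine(
    stub_generator, constraints, "Generate a triangle on nodes 0, 1, 2."
)
print(sorted(graph), rounds, failed)
```

In this toy run the first attempt misses an edge, the verifier names the violated constraint, and the second attempt passes. A constraint that the generator cannot satisfy from feedback alone would stay in `failed` after every round, which is the kind of iteration-invariant failure the review describes for domain-semantic constraints.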

Load-bearing premise

The six hand-defined complexity levels and five evaluation dimensions, together with the hand-authored instructions and synthesized references, provide an unbiased and comprehensive map of LLM capability gaps in graph generation.

What would settle it

A single-pass prompting method that matches or exceeds the iterative framework's results across all six complexity levels on the same set of models would falsify the claim that iteration is needed to surpass the prompt-engineering ceiling.

Original abstract

Graph-structured data underpins applications from citation analysis and social-network modeling to molecular design and knowledge-graph construction, and Large Language Models (LLMs) are increasingly used as prompt-driven graph synthesizers. Classical graph-generation reviews catalog deep generative models and their evaluation primitives, but predate the LLM era and provide no foundation for evaluating instruction-following graph synthesis. Recent LLM-era benchmarks evaluate models along graph-type or task-domain axes; such organizations, however, average over structural complexity and cannot localize where in the complexity spectrum an LLM breaks down. To close this diagnostic gap, we introduce GraphInstruct, a progressive-complexity benchmark that stratifies LLM graph generation into six complexity levels and five evaluation dimensions, paired with 800 hand-authored instructions, 1,582 algorithmically synthesized reference solutions, and a 12-LLM capability evaluation across 45 (model, strategy) configurations. We find that discriminative power peaks at multi-constraint composition rather than reasoning depth, that no single prompting strategy dominates across levels or model families, and that domain-semantic constraints remain iteration-invariant under all tested methods -- pointing to retrieval rather than additional compute as the next research frontier. Atop the benchmark, a verification-guided iterative framework with constraint-aware adaptive prompting consistently surpasses the prompt-engineering ceiling on tested target models, demonstrating that the benchmark's fine-grained signals drive method development.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces GraphInstruct, a progressive benchmark for diagnosing LLM capability gaps in instruction-following graph generation. It stratifies 800 hand-authored instructions into six complexity levels and five evaluation dimensions, paired with 1,582 algorithmically synthesized reference solutions, and reports results from evaluating 12 LLMs across 45 (model, strategy) configurations. Key findings include peak discriminative power at multi-constraint composition, absence of a dominant prompting strategy, and iteration-invariant failures on domain-semantic constraints; the authors additionally present a verification-guided iterative framework with constraint-aware adaptive prompting that outperforms standard prompt-engineering baselines.

Significance. If the complexity stratification proves non-arbitrary and the reported improvements are robust, the work supplies a much-needed fine-grained diagnostic instrument for LLM graph synthesis that moves beyond coarse task-domain or graph-type axes. The scale of the evaluation (12 models, 45 configurations) and the demonstration that benchmark signals can drive a new prompting method constitute concrete strengths; the identification of retrieval as a frontier for domain-semantic constraints is a useful, falsifiable pointer for follow-on research.

major comments (2)

- [Benchmark Construction] Benchmark Construction section: the six hand-defined complexity levels and the claim that 'discriminative power peaks at multi-constraint composition' rest on an unvalidated partitioning. No monotonic degradation of success rates with level, inter-rater reliability statistics, or correlation with model-agnostic graph-complexity metrics (treewidth, constraint-satisfaction hardness) are reported, leaving open the possibility that observed patterns reflect surface features of the hand-authored instructions rather than intrinsic generation difficulty.

- [Evaluation and Results] Evaluation and Results section: the central claim that the verification-guided iterative framework 'consistently surpasses the prompt-engineering ceiling' because of the benchmark's fine-grained signals requires explicit implementation details of the constraint-aware adaptive prompting, per-configuration success rates with error bars, and statistical tests across the 45 setups. Without these, it is impossible to confirm that gains are attributable to the benchmark rather than to the particular instruction distribution or unstated hyper-parameters.
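The validation requested in the first major comment can be illustrated with a small sketch: compute per-level success rates, check whether they degrade monotonically, and rank-correlate success with a complexity proxy such as constraint count. The function names and the toy records below are hypothetical and are not taken from the paper; Spearman's rho is implemented by hand with average ranks so the block needs only the standard library.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Sequence, Tuple


def per_level_success(records: Iterable[Tuple[int, bool]]) -> Dict[int, float]:
    """Map each complexity level to its success rate from (level, passed) pairs."""
    buckets: Dict[int, List[float]] = defaultdict(list)
    for level, passed in records:
        buckets[level].append(1.0 if passed else 0.0)
    return {level: mean(values) for level, values in sorted(buckets.items())}


def is_monotone_nonincreasing(rates: Dict[int, float]) -> bool:
    """True if the success rate never rises as the level increases."""
    values = [rates[level] for level in sorted(rates)]
    return all(a >= b for a, b in zip(values, values[1:]))


def average_ranks(xs: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Spearman rank correlation; undefined (division by zero) for constant input."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Toy, made-up records: (complexity level, did the generated graph pass?).
toy = [(1, True), (1, True), (2, True), (2, False), (3, False), (3, False)]
rates = per_level_success(toy)
print(rates, is_monotone_nonincreasing(rates))
print(spearman(list(rates.keys()), list(rates.values())))
```

A strongly negative correlation between level (or constraint count) and success rate would support the stratification, while a flat or non-monotone profile would lend weight to the referee's concern that the levels track surface features of the instructions.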

minor comments (2)

- [Abstract] Abstract and §4: the pairing between the 800 instructions and 1,582 references is not stated explicitly; clarify whether every instruction has a unique reference or whether some references serve multiple instructions.

- [Figures/Tables] Figure and table captions: ensure all evaluation dimensions and complexity levels are defined in the caption or a nearby table so that readers can interpret results without returning to the main text.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below and specify the revisions planned for the manuscript.

- Referee: [Benchmark Construction] Benchmark Construction section: the six hand-defined complexity levels and the claim that 'discriminative power peaks at multi-constraint composition' rest on an unvalidated partitioning. No monotonic degradation of success rates with level, inter-rater reliability statistics, or correlation with model-agnostic graph-complexity metrics (treewidth, constraint-satisfaction hardness) are reported, leaving open the possibility that observed patterns reflect surface features of the hand-authored instructions rather than intrinsic generation difficulty.

  Authors: The six levels were constructed by incrementally composing constraints (structural, numerical, domain-semantic) in a manner intended to reflect increasing instruction complexity for graph generation. We acknowledge the absence of formal validation metrics such as inter-rater reliability or correlations with treewidth/hardness measures. In revision we will add: per-level success rate tables across all models to document the observed patterns; a note that strict monotonic degradation is not theoretically required given heterogeneous LLM capabilities; and exploratory correlations using constraint count as a proxy metric. The peak discriminative power at multi-constraint composition remains an empirical observation from the 45 configurations, but we will qualify the claim to reflect the hand-authored nature of the partitioning.

  Revision: partial

- Referee: [Evaluation and Results] Evaluation and Results section: the central claim that the verification-guided iterative framework 'consistently surpasses the prompt-engineering ceiling' because of the benchmark's fine-grained signals requires explicit implementation details of the constraint-aware adaptive prompting, per-configuration success rates with error bars, and statistical tests across the 45 setups. Without these, it is impossible to confirm that gains are attributable to the benchmark rather than to the particular instruction distribution or unstated hyper-parameters.

  Authors: We will expand the Evaluation and Results section with: explicit pseudocode and description of the constraint-aware adaptive prompting mechanism; a supplementary table reporting per-configuration success rates (with standard deviations from repeated runs where performed); and statistical tests (paired comparisons with bootstrap intervals) across the 45 (model, strategy) setups. These additions will make transparent that the framework leverages the benchmark's fine-grained failure signals for targeted adaptation rather than relying on generic prompting. We agree the original version lacked sufficient implementation and statistical detail.

  Revision: yes
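The paired comparison with bootstrap intervals mentioned in the rebuttal could take roughly the following form. This is a sketch under stated assumptions: per-instruction pass/fail outcomes are available for both methods on the same instructions, the interval is a plain percentile bootstrap, and the function name, defaults, and toy outcomes are hypothetical rather than drawn from the paper.

```python
import random
from statistics import mean
from typing import Sequence, Tuple


def paired_bootstrap_ci(
    baseline: Sequence[float],
    treatment: Sequence[float],
    n_resamples: int = 2000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float, float]:
    """Percentile bootstrap interval for the mean paired difference.

    baseline[i] and treatment[i] must score the same instruction.
    Returns (observed mean difference, lower bound, upper bound).
    """
    if len(baseline) != len(treatment):
        raise ValueError("paired samples must have equal length")
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    diffs = [t - b for b, t in zip(baseline, treatment)]
    n = len(diffs)
    # Resample the paired differences with replacement and record each mean.
    stats = sorted(mean(rng.choices(diffs, k=n)) for _ in range(n_resamples))
    lower = stats[int((alpha / 2) * n_resamples)]
    upper = stats[min(n_resamples - 1, int((1 - alpha / 2) * n_resamples))]
    return mean(diffs), lower, upper


# Toy, made-up pass/fail outcomes on eight shared instructions.
single_pass = [0, 0, 1, 0, 1, 0, 0, 1]
iterative = [1, 0, 1, 1, 1, 0, 1, 1]
point, lower, upper = paired_bootstrap_ci(single_pass, iterative)
print(point, lower, upper)
```

An interval that excludes zero would indicate that the gain is unlikely to come from resampling noise alone. With 45 configurations compared at once, a multiple-comparison correction would also be worth considering, which this sketch does not attempt.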

Circularity Check

No circularity: empirical benchmark with direct measurements only

This is a pure empirical benchmark paper introducing hand-authored instructions, algorithmically synthesized references, and LLM evaluations across configurations. No derivations, equations, fitted parameters, or predictions appear in the abstract or described content. Outcomes are reported as direct measurements against external references. No self-citations are invoked as load-bearing premises. The central claims rest on observed performance differences, not on any reduction to inputs by construction. This aligns with the default expectation for non-circular empirical studies.
