Recognition: no theorem link

Coarsening Linear Non-Gaussian Causal Models with Cycles

Pith reviewed 2026-05-12 03:24 UTC · model grok-4.3

The pith

High-dimensional cyclic causal models in the LiNG setting coarsen to a recoverable low-dimensional DAG.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

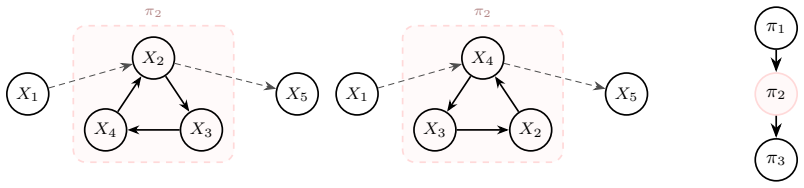

In the linear non-Gaussian setting, high-dimensional causal models may contain cycles, yet the coarsened low-dimensional structure over variable clusters is a causal DAG that is identifiable and invariant across the observational equivalence class defined by reversals of directed cycles.

What carries the argument

The coarsening map from high-dimensional LiNG models to an invariant low-dimensional DAG.

Load-bearing premise

The high-dimensional linear non-Gaussian model produces a coarsened low-dimensional DAG that stays the same no matter how cycles are reversed in equivalent representations.

What would settle it

A concrete dataset generated from a LiNG model containing cycles in which the learned low-dimensional structure differs for two observationally equivalent high-dimensional graphs.

Figures

read the original abstract

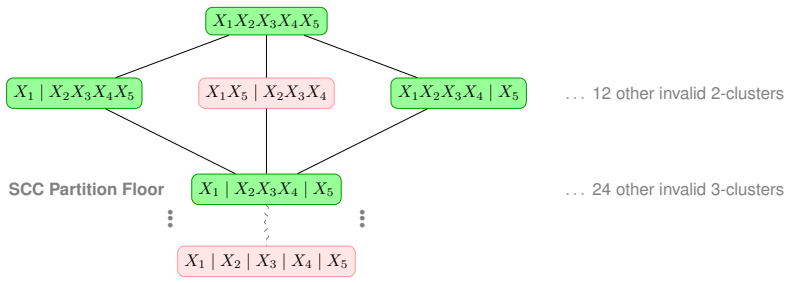

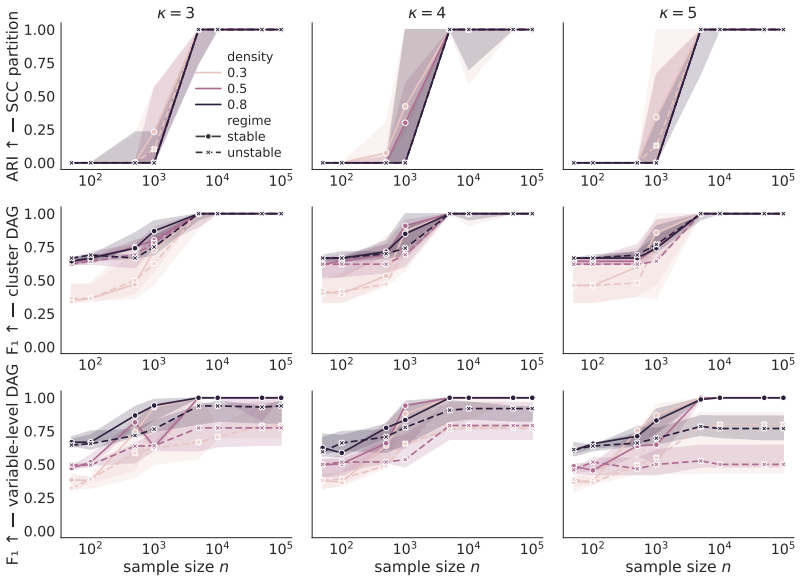

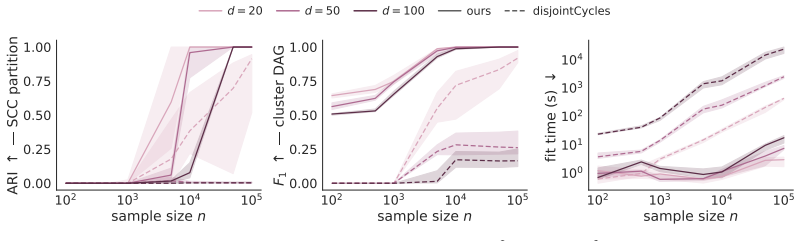

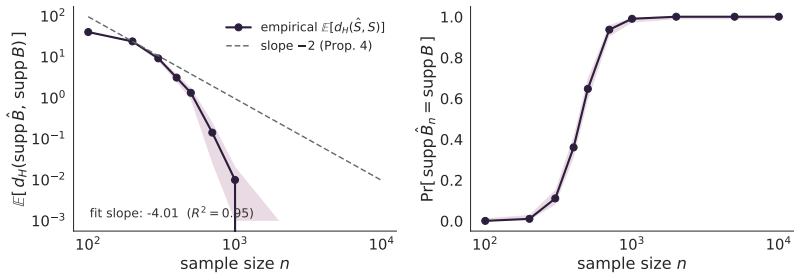

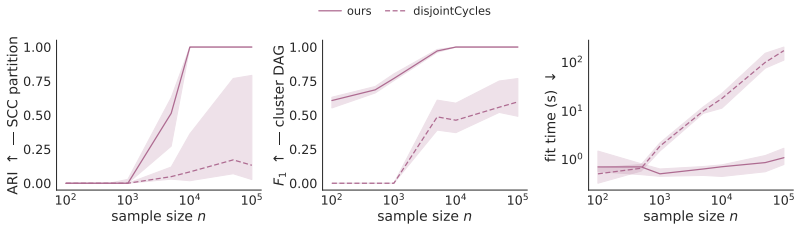

Recent work on causal abstraction, in particular graphical approaches focusing on causal structure between clusters of variables, aims to summarize a high-dimensional causal structure in terms of a low-dimensional one. Existing methods for learning such summaries from data assume that both the high- and low-dimensional structures are acyclic, which is helpful for causal effect identification and reasoning but excludes many high-dimensional models and thus limits applicability. We show that in the linear non-Gaussian (LiNG) setting, the high-dimensional acyclicity assumption can be relaxed while still allowing recovery of a low-dimensional causal directed acyclic graph (DAG). We further connect identifiability of this low-dimensional DAG to existing results: LiNG models with cycles are observationally identifiable only up to an equivalence class whose members differ by reversals of directed cycles; our low-dimensional DAG, which is invariant across all members of a given equivalence class, thus forms a natural representative of the class. While existing approaches for learning this observational equivalence class over high-dimensional variables have exponential time complexity, our low-dimensional summary is learned in worst-case cubic time and comes with explicit bounds on the sample complexity. We provide open source code and experiments on synthetic data to corroborate our theoretical results.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that in the linear non-Gaussian (LiNG) setting, high-dimensional causal models with cycles can be coarsened into a low-dimensional DAG that remains identifiable and invariant across the observational equivalence class of the high-dimensional model (whose members differ by directed cycle reversals). It connects this invariance to existing LiNG identifiability results, shows that the low-dimensional summary can be recovered in worst-case cubic time with explicit sample-complexity bounds, and provides synthetic experiments plus open-source code.

Significance. If the central invariance result holds, the work meaningfully relaxes the acyclicity assumption in causal abstraction while retaining identifiability and computational tractability for LiNG models. The explicit link to cycle-reversal equivalence classes, the cubic-time algorithm, and the provision of reproducible code are concrete strengths that would broaden the scope of high-dimensional causal discovery methods.

major comments (2)

- [Theoretical identifiability results (main theorem on invariance)] The load-bearing claim that the coarsened low-dimensional DAG is invariant across all members of the high-dimensional LiNG observational equivalence class (i.e., under arbitrary directed-cycle reversals) is stated in the abstract and connected to prior results, but the argument does not isolate or prove the case in which a cycle crosses cluster boundaries. In that case the effective linear coefficients and noise terms between supernodes can change, so the low-dimensional skeleton and orientation need not remain constant.

- [Algorithm and complexity analysis] The cubic-time recovery procedure and sample-complexity bounds are presented as consequences of the invariance, yet without an explicit lemma showing that the coarsening map commutes with cycle reversal when inter-cluster edges are involved, it is unclear whether the complexity claims survive the same counter-examples that would break invariance.

minor comments (2)

- [Preliminaries] Notation for the coarsening operation (aggregation into supernodes) and the induced low-dimensional adjacency matrix should be introduced with a small illustrative example early in the paper to make the invariance statement easier to parse.

- [Abstract and Section on sample complexity] The abstract asserts 'explicit bounds on the sample complexity'; the main text should state whether these bounds are derived from scratch or obtained by reduction to an existing LiNG result, and whether they depend on the number of clusters.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive feedback. We address the two major comments point by point below and will revise the manuscript to provide the requested explicit arguments.

read point-by-point responses

-

Referee: The load-bearing claim that the coarsened low-dimensional DAG is invariant across all members of the high-dimensional LiNG observational equivalence class (i.e., under arbitrary directed-cycle reversals) is stated in the abstract and connected to prior results, but the argument does not isolate or prove the case in which a cycle crosses cluster boundaries. In that case the effective linear coefficients and noise terms between supernodes can change, so the low-dimensional skeleton and orientation need not remain constant.

Authors: We agree that the current proof sketch in Section 3 connects the low-dimensional invariance to existing LiNG equivalence-class results but does not isolate the inter-cluster cycle case. This is a genuine gap in the exposition. In the revision we will add a dedicated lemma (with full proof) showing that cycle reversals across cluster boundaries preserve the effective linear coefficients and noise terms between supernodes up to the observational equivalence class, thereby keeping both the skeleton and orientation of the coarsened DAG constant. The revised theorem statement will explicitly reference this lemma. revision: yes

-

Referee: The cubic-time recovery procedure and sample-complexity bounds are presented as consequences of the invariance, yet without an explicit lemma showing that the coarsening map commutes with cycle reversal when inter-cluster edges are involved, it is unclear whether the complexity claims survive the same counter-examples that would break invariance.

Authors: We acknowledge that the complexity analysis in Section 4 is stated as following directly from the invariance result. To close this loop we will insert an explicit lemma proving that the coarsening map commutes with arbitrary cycle reversals (including those involving inter-cluster edges). With this lemma in place, the cubic-time algorithm and the sample-complexity bounds will be shown to hold uniformly over the entire observational equivalence class. The revised manuscript will contain the lemma, the updated proof of the complexity claims, and a brief discussion of why no counter-examples arise. revision: yes

Circularity Check

No significant circularity detected in derivation chain.

full rationale

The paper derives recovery of an invariant low-dimensional DAG via coarsening in the LiNG setting with cycles, then connects this invariance to prior results on observational equivalence classes under cycle reversals. No quoted step reduces the central claim to a self-definition, a fitted parameter renamed as prediction, or a load-bearing self-citation whose content is unverified within the paper. The coarsening map and cubic-time algorithm are presented as independent constructions with explicit sample-complexity bounds, and the abstract treats the equivalence-class connection as external support rather than re-deriving the input. The derivation chain is therefore self-contained against the stated assumptions.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Variables follow linear structural equations with non-Gaussian noise terms

- domain assumption The low-dimensional summary DAG is invariant across all members of the observational equivalence class obtained by reversing directed cycles

Reference graph

Works this paper leans on

-

[1]

Structure learning for cyclic linear causal models

Carlos Améndola, Philipp Dettling, Mathias Drton, Federica Onori, and Jun Wu. Structure learning for cyclic linear causal models. InConference on Uncertainty in Artificial Intelligence, pages 999–1008. PMLR, 2020

work page 2020

-

[2]

Tara V . Anand, Adèle H. Ribeiro, Jin Tian, and Elias Bareinboim. Causal effect identification in cluster DAGs. InAAAI Conference on Artificial Intelligence, 2023

work page 2023

-

[3]

Sander Beckers and Joseph Y . Halpern. Abstracting causal models. InAAAI Conference on Artificial Intelligence, 2019

work page 2019

-

[4]

John Adrian Bondy and U. S. R. Murty.Graph Theory with Applications. Elsevier, New York, 1976

work page 1976

-

[5]

Stephan Bongers, Patrick Forré, Jonas Peters, and Joris M. Mooij. Foundations of structural causal models with cycles and latent variables.Annals of Statistics, 49(5):2885–2915, 2021

work page 2021

-

[6]

Aiyou Chen and Peter J. Bickel. Consistent independent component analysis and prewhitening. IEEE Transactions on Signal Processing, 53(10):3625–3632, 2005

work page 2005

-

[7]

Haoyue Dai, Immanuel Albrecht, Peter Spirtes, and Kun Zhang. Distributional equivalence in linear non-Gaussian latent-variable cyclic causal models: Characterization and learning. In International Conference on Learning Representations (ICLR), 2026

work page 2026

-

[8]

On the lasso for graphical continuous Lyapunov models

Philipp Dettling, Mathias Drton, and Mladen Kolar. On the lasso for graphical continuous Lyapunov models. In Francesco Locatello and Vanessa Didelez, editors,Proceedings of the Third Conference on Causal Learning and Reasoning, volume 236 ofProceedings of Machine Learning Research, pages 514–550. PMLR, 01–03 Apr 2024

work page 2024

-

[9]

Mathias Drton, Marina Garrote-López, Niko Nikov, Elina Robeva, and Y . Samuel Wang. Causal discovery for linear non-Gaussian models with disjoint cycles. In Silvia Chiappa and Sara Magliacane, editors,Proceedings of the Forty-first Conference on Uncertainty in Artificial Intelligence, volume 286 ofProceedings of Machine Learning Research, pages 1064–1083....

work page 2025

-

[10]

Estimating a causal order among groups of variables in linear models

Doris Entner and Patrik O Hoyer. Estimating a causal order among groups of variables in linear models. InInternational Conference on Artificial Neural Networks, pages 84–91. Springer, 2012

work page 2012

-

[11]

Jan Eriksson and Visa Koivunen. Identifiability, separability, and uniqueness of linear ICA models.IEEE Signal Processing Letters, 11(7):601–604, 2004

work page 2004

-

[12]

Patrick Forré and Joris M. Mooij. Constraint-based causal discovery for non-linear structural causal models with cycles and latent confounders. InConference on Uncertainty in Artificial Intelligence (UAI), 2018

work page 2018

-

[13]

Causal interpretation of stochastic differential equations

Niels Hansen and Alexander Sokol. Causal interpretation of stochastic differential equations. Electronic Journal of Probability, 19:1–24, 2014

work page 2014

-

[14]

Comparing partitions.Journal of Classification, 2(1):193– 218, 1985

Lawrence Hubert and Phipps Arabie. Comparing partitions.Journal of Classification, 2(1):193– 218, 1985. 10

work page 1985

-

[15]

Fast and robust fixed-point algorithms for independent component analysis

Aapo Hyvärinen. Fast and robust fixed-point algorithms for independent component analysis. IEEE Transactions on Neural Networks, 10(3):626–634, 1999

work page 1999

-

[16]

Aapo Hyvärinen, Juha Karhunen, and Erkki Oja.Independent Component Analysis. John Wiley & Sons, May 2001

work page 2001

-

[17]

Yoshinobu Kawahara, Kenneth Bollen, Shohei Shimizu, and Takashi Washio. GroupLiNGAM: Linear non-Gaussian acyclic models for sets of variables.arXiv preprint arXiv:1006.5041, 2010

-

[18]

Harold W. Kuhn. The Hungarian method for the assignment problem.Naval Research Logistics Quarterly, 2(1–2):83–97, 1955

work page 1955

-

[19]

Gustavo Lacerda, Peter Spirtes, Joseph Ramsey, and Patrik O. Hoyer. Discovering cyclic causal models by independent components analysis. InConference on Uncertainty in Artificial Intelligence (UAI), 2008

work page 2008

-

[20]

Michael S. Lewicki and Terrence J. Sejnowski. Learning overcomplete representations.Neural Computation, 12(2):337–365, 2000

work page 2000

-

[21]

Francisco Madaleno, Pratik Misra, and Alex Markham. Coarsening causal DAG models. In Bijan Mazaheri and Niels Richard Hansen, editors,Proceedings of the Fifth Conference on Causal Learning and Reasoning, Proceedings of Machine Learning Research. PMLR, Apr 2026

work page 2026

-

[22]

Takashi N. Maeda and Shohei Shimizu. RCD: Repetitive causal discovery of linear non- Gaussian acyclic models with latent confounders. InInternational Conference on Artificial Intelligence and Statistics (AISTATS), 2020

work page 2020

-

[23]

Fourth moments and independent component analysis.Statistical Science, 30(3):372–390, 2015

Jari Miettinen, Klaus Nordhausen, Hannu Oja, and Sara Taskinen. Fourth moments and independent component analysis.Statistical Science, 30(3):372–390, 2015

work page 2015

-

[24]

Mooij, Dominik Janzing, and Bernhard Schölkopf

Joris M. Mooij, Dominik Janzing, and Bernhard Schölkopf. From ordinary differential equa- tions to structural causal models: the deterministic case. InProceedings of the Twenty-Ninth Conference on Uncertainty in Artificial Intelligence, UAI’13, page 440–448. AUAI Press, 2013

work page 2013

-

[25]

Identifiability and estimation in continuous Lyapunov models.arXiv preprint arXiv:2603.17142, 2026

Cecilie Olesen Recke and Niels Richard Hansen. Identifiability and estimation in continuous Lyapunov models.arXiv preprint arXiv:2603.17142, 2026

-

[26]

Identifiability in graphical discrete Lyapunov models.arXiv preprint arXiv:2601.21818, 2026

Cecilie Olesen Recke, Sarah Lumpp, Nataliia Kushnerchuk, Janike Oldekop, Jiayi Li, Jane Ivy Coons, and Elina Robeva. Identifiability in graphical discrete Lyapunov models.arXiv preprint arXiv:2601.21818, 2026

-

[27]

Dominik Rothenhäusler, Christina Heinze, Jonas Peters, and Nicolai Meinshausen. Backshift: Learning causal cyclic graphs from unknown shift interventions.Advances in neural information processing systems, 28, 2015

work page 2015

-

[28]

Rubenstein, Sebastian Weichwald, Stephan Bongers, Joris M

Paul K. Rubenstein, Sebastian Weichwald, Stephan Bongers, Joris M. Mooij, Dominik Janzing, Moritz Grosse-Wentrup, and Bernhard Schölkopf. Causal consistency of structural equation models. InConference on Uncertainty in Artificial Intelligence (UAI), 2017

work page 2017

-

[29]

Karen Sachs, Omar Perez, Dana Pe’er, Douglas A. Lauffenburger, and Garry P. Nolan. Causal protein-signaling networks derived from multiparameter single-cell data.Science, 308(5721):523–529, 2005

work page 2005

-

[30]

Saber Salehkaleybar, Amiremad Ghassami, Negar Kiyavash, and Kun Zhang. Learning linear non-Gaussian causal models in the presence of latent variables.Journal of Machine Learning Research, 21(39):1–24, 2020

work page 2020

-

[31]

Near-optimal experiment design in linear non-Gaussian cyclic models

Ehsan Sharifian, Saber Salehkaleybar, and Negar Kiyavash. Near-optimal experiment design in linear non-Gaussian cyclic models. In D. Belgrave, C. Zhang, H. Lin, R. Pascanu, P. Koniusz, M. Ghassemi, and N. Chen, editors,Advances in Neural Information Processing Systems, volume 38, pages 63520–63538. Curran Associates, Inc., 2025. 11

work page 2025

-

[32]

Hoyer, Aapo Hyvärinen, and Antti Kerminen

Shohei Shimizu, Patrik O. Hoyer, Aapo Hyvärinen, and Antti Kerminen. A linear non-Gaussian acyclic model for causal discovery.Journal of Machine Learning Research, 7:2003–2030, 2006

work page 2003

-

[33]

Shohei Shimizu, Takanori Inazumi, Yasuhiro Sogawa, Aapo Hyvärinen, Yoshinobu Kawahara, Takashi Washio, Patrik O. Hoyer, and Kenneth Bollen. DirectLiNGAM: A direct method for learning a linear non-Gaussian structural equation model.Journal of Machine Learning Research, 12:1225–1248, 2011

work page 2011

-

[34]

Directed cyclic graphical representations of feedback models

Peter Spirtes. Directed cyclic graphical representations of feedback models. InConference on Uncertainty in Artificial Intelligence (UAI), 1995

work page 1995

-

[35]

Depth-first search and linear graph algorithms.SIAM Journal on Computing, 1(2):146–160, 1972

Robert Tarjan. Depth-first search and linear graph algorithms.SIAM Journal on Computing, 1(2):146–160, 1972

work page 1972

-

[36]

Learning linear non-gaussian polytree models

Daniele Tramontano, Anthea Monod, and Mathias Drton. Learning linear non-gaussian polytree models. InUncertainty in Artificial Intelligence, pages 1960–1969. PMLR, 2022

work page 1960

-

[37]

Graphical continuous Lyapunov models

Gherardo Varando and Niels Richard Hansen. Graphical continuous Lyapunov models. In Jonas Peters and David Sontag, editors,Proceedings of the 36th Conference on Uncertainty in Artificial Intelligence (UAI), volume 124 ofProceedings of Machine Learning Research, pages 989–998. PMLR, 03–06 Aug 2020

work page 2020

-

[38]

Jonas Wahl, Urmi Ninad, and Jakob Runge. Foundations of causal discovery on groups of variables.Journal of Causal Inference, 12(1):20230041, 2024. A Additional Comments A.1 On Interventional Extensions Under soft interventions, the cyclic SCM becomes X I = (I−B) −1(ε+δ) , so intervention effects propagate through the whole graph, including back through cy...

work page 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.