Joint sparse coding and temporal dynamics support context reconfiguration

Pith reviewed 2026-05-12 04:43 UTC · model grok-4.3

The pith

Joint sparse coding and temporal dynamics enable context reconfiguration while preserving prior knowledge.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

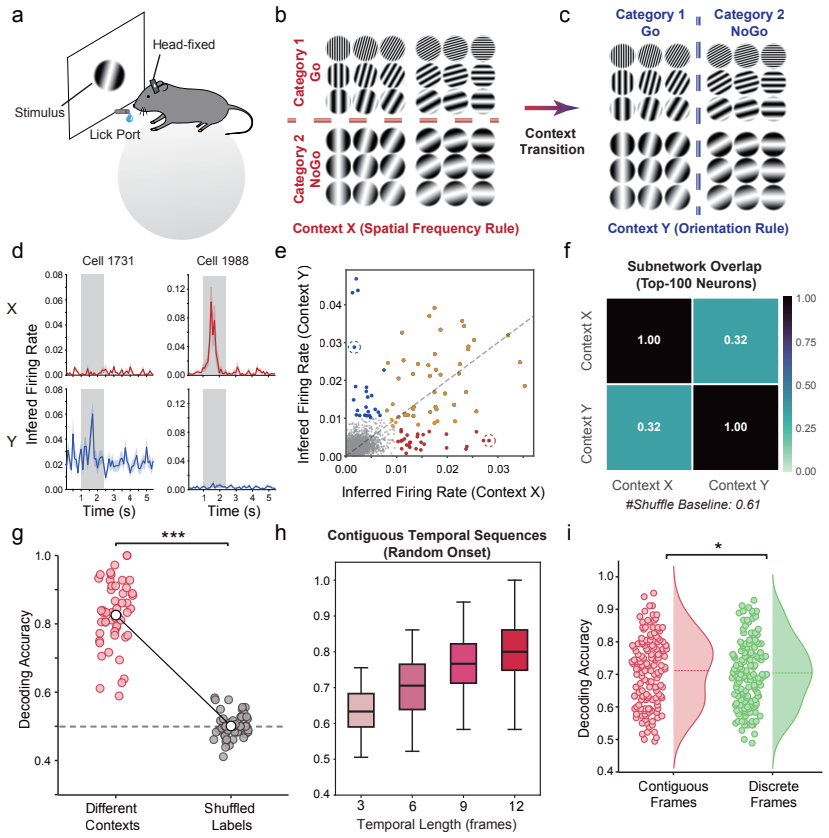

Sparsity in context-dependent representations reduces cross-context interference, whereas temporal dynamics within the network activity further enhance context separability across time. Networks endowed with both properties, such as spiking neural networks, exhibit improved retention during lifelong learning without auxiliary heuristics.

What carries the argument

Joint sparse coding and temporal dynamics that together constrain neural activity to maintain context separability and retention.
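As a rough illustration of how a single unit can combine both properties, the sketch below simulates a textbook leaky integrate-and-fire neuron in Python. It is not the paper's model: the function name `simulate_lif` and the values of `tau`, `dt`, `threshold`, and `v_reset` are assumptions chosen for readability. The threshold keeps the output sparse (most time steps emit no spike), while the leak time constant makes the response depend on when input arrives, not only on how much arrives.

```python
def simulate_lif(inputs, tau=10.0, dt=1.0, threshold=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire unit (illustrative parameters).

    inputs: sequence of input currents, one per time step.
    Returns a list of 0/1 spike indicators of the same length.
    """
    v = 0.0
    spikes = []
    for current in inputs:
        # Leaky integration: v relaxes toward the input with time constant tau.
        v += (dt / tau) * (-v + current)
        if v >= threshold:
            spikes.append(1)  # emit a spike and reset the membrane variable
            v = v_reset
        else:
            spikes.append(0)
    return spikes


# Same total input delivered early versus late (hypothetical stimuli).
early = simulate_lif([2.0] * 10 + [0.0] * 10)
late = simulate_lif([0.0] * 10 + [2.0] * 10)

# Weak sustained input never reaches threshold, so the unit stays silent.
silent = simulate_lif([0.5] * 70)
```

Under these assumed parameters, `early` and `late` contain the same number of spikes at different times, so a readout sensitive to timing could tell the two inputs apart even though a spike count could not.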

If this is right

- Sparsity limits overlap between representations of different contexts.

- Temporal dynamics add separation along the time dimension.

- Spiking neural networks that combine both traits retain prior knowledge better without extra protective rules.

- The same activity constraints support energy-efficient adaptation in changing environments.
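The first bullet can be made concrete with a toy calculation. The Python sketch below draws random binary codes for pairs of contexts and measures how many active units they share; for random codes the expected shared fraction equals the fraction of units that are active. The population size, activity levels, and function names are illustrative assumptions, and random codes are a simplification that is not taken from the paper.

```python
import random


def random_sparse_code(n_units, n_active, rng):
    """Return the set of active unit indices representing one context."""
    return set(rng.sample(range(n_units), n_active))


def mean_overlap(n_units, n_active, n_pairs=2000, seed=0):
    """Average fraction of one context's active units shared with another."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_pairs):
        a = random_sparse_code(n_units, n_active, rng)
        b = random_sparse_code(n_units, n_active, rng)
        total += len(a & b) / n_active
    return total / n_pairs


# Hypothetical population of 1000 units at two activity levels.
dense_overlap = mean_overlap(n_units=1000, n_active=500)   # 50% of units active
sparse_overlap = mean_overlap(n_units=1000, n_active=50)   # 5% of units active
```

In this toy setting the sparser code shares roughly a tenth as many units between contexts as the dense one, which is the sense in which sparsity limits overlap.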

Where Pith is reading between the lines

- Similar joint mechanisms could be tested for their role in other brain areas beyond the mPFC.

- The combination might guide the design of new continual-learning algorithms that avoid catastrophic forgetting.

- Hardware implementations could exploit the energy-efficient nature of these constraints to reduce power use in deployed networks.

Load-bearing premise

The sparsity and temporal dynamics observed in the mPFC are causally responsible for context separability and retention rather than merely correlated with other unmeasured factors.

What would settle it

Two outcomes would settle it. The claim gains support if disrupting sparsity or temporal dynamics in the mPFC increases cross-context interference and forgetting. It is undermined if adding these features to non-spiking networks produces no measurable gain in retention.

Original abstract

Adaptive behavior requires the brain to transition between distinct contexts while maintaining representations of prior experience. The ability to reconfigure neural representations without erasing previously acquired knowledge is central to learning in dynamic environments, yet the neural mechanisms that support this balance remain unclear. Understanding these mechanisms is also critical for addressing catastrophic forgetting in artificial systems designed for lifelong learning. Here, we identify joint sparse coding and temporal dynamics in both the mouse medial prefrontal cortex (mPFC) and computational networks as mechanisms that help preserve prior representations during context transitions. Specifically, sparsity in context-dependent representations reduces cross-context interference, whereas temporal dynamics within the network activity further enhance context separability across time. Strikingly, networks endowed with both properties, such as spiking neural networks, exhibit improved retention during lifelong learning without auxiliary heuristics. These findings establish joint sparse coding and temporal dynamics as a core mechanism supporting flexible context reconfiguration in lifelong learning and, through their activity constraining nature, as an energy-efficient architectural principle for stable adaptation. Together, they provide a mechanistic framework for understanding how the brain preserves prior knowledge while flexibly adapting to new contexts.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript claims that joint sparse coding and temporal dynamics, observed in mouse mPFC during context transitions, reduce cross-context interference and enhance separability; computational networks (especially spiking neural networks) endowed with both properties show improved retention in lifelong learning tasks without auxiliary heuristics, providing a mechanistic and energy-efficient framework for context reconfiguration in biological and artificial systems.

Significance. If the causal role of these joint properties is isolated, the work would link specific neural coding features to stable lifelong learning, offering a biologically grounded principle for mitigating catastrophic forgetting in AI and a testable account of mPFC function in dynamic environments. The integration of in vivo observations with network simulations is a strength.

major comments (2)

- [Abstract] The central claim that 'networks endowed with both properties, such as spiking neural networks, exhibit improved retention during lifelong learning without auxiliary heuristics' requires explicit ablation experiments that add equivalent sparsity and temporal dynamics to non-spiking baselines while holding fixed parameter count, learning rule, and task structure; without such controls, retention gains cannot be attributed to the claimed joint properties rather than correlated SNN features (e.g., event-driven updates or surrogate-gradient training).

- [Model experiments] The assertion that sparsity reduces interference and temporal dynamics enhance separability is presented as causal support for reconfiguration, yet the manuscript provides no independent benchmarks or falsifiable predictions outside the fitted network behaviors to demonstrate that these factors are operative rather than epiphenomenal.

minor comments (2)

- [Methods] Clarify the precise definition and measurement of 'temporal dynamics' (e.g., time constants, recurrence) and 'sparse coding' (e.g., L0 vs. L1, population vs. single-unit) when comparing mPFC data to network models.

- [Figures] Figure legends should explicitly state the number of animals, trials, and statistical tests used for the mPFC context-separability analyses.
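To make the first minor comment concrete, the Python sketch below contrasts measures that a Methods section might distinguish: an L0-style active fraction, an L1 norm, and the Treves-Rolls sparseness index. Each can be applied across units at one time point (population sparseness) or across time for one unit (lifetime sparseness). Which of these the authors actually use is not stated here; the function names and the toy activity matrix are assumptions.

```python
def active_fraction(rates, threshold=0.0):
    """L0-style measure: fraction of entries with activity above threshold."""
    return sum(1 for r in rates if r > threshold) / len(rates)


def l1_norm(rates):
    """L1 measure: summed absolute activity."""
    return sum(abs(r) for r in rates)


def treves_rolls_sparseness(rates):
    """(mean r)^2 / mean(r^2): 1/N for a single active entry, 1.0 for uniform activity."""
    n = len(rates)
    mean_r = sum(rates) / n
    mean_sq = sum(r * r for r in rates) / n
    if mean_sq == 0:
        return 0.0  # convention assumed here for an entirely silent vector
    return mean_r ** 2 / mean_sq


# Toy activity matrix (hypothetical): rows are time points, columns are units.
activity = [
    [0.0, 0.0, 3.0, 0.0],
    [0.0, 2.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 0.0],
]

# Population sparseness: across units at the first time point.
population_t0 = treves_rolls_sparseness(activity[0])

# Lifetime sparseness: across time for the third unit.
lifetime_unit2 = treves_rolls_sparseness([row[2] for row in activity])
```

The two numbers differ for the same data, which is why the comparison between mPFC recordings and network models depends on stating which axis and which measure are used.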

Simulated Author's Rebuttal

We are grateful to the referee for their insightful comments on our manuscript. We address the major comments point-by-point below and outline the revisions we plan to make.

- Referee: [Abstract] The central claim that 'networks endowed with both properties, such as spiking neural networks, exhibit improved retention during lifelong learning without auxiliary heuristics' requires explicit ablation experiments that add equivalent sparsity and temporal dynamics to non-spiking baselines while holding fixed parameter count, learning rule, and task structure; without such controls, retention gains cannot be attributed to the claimed joint properties rather than correlated SNN features (e.g., event-driven updates or surrogate-gradient training).

  Authors: We concur that rigorous controls are essential to attribute the observed benefits specifically to the combination of sparse coding and temporal dynamics. Accordingly, in the revised manuscript, we will add ablation studies that equip non-spiking baseline networks with comparable sparsity constraints and temporal integration mechanisms. These ablations will keep the total parameter count, learning rules, and task specifications fixed to ensure fair comparison. This will clarify whether the retention improvements stem from the joint properties or other SNN-specific characteristics.

  Revision: yes

- Referee: [Model experiments] The assertion that sparsity reduces interference and temporal dynamics enhance separability is presented as causal support for reconfiguration, yet the manuscript provides no independent benchmarks or falsifiable predictions outside the fitted network behaviors to demonstrate that these factors are operative rather than epiphenomenal.

  Authors: Our model experiments systematically vary the degree of sparsity and the presence of temporal dynamics to quantify their impact on cross-context interference and separability, providing direct evidence within the computational framework. To further address this point, we will include additional benchmarks against standard continual learning algorithms and formulate explicit, falsifiable predictions regarding mPFC neural dynamics during context switches that can be tested in future in vivo studies.

  Revision: partial
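The controls promised in the first response amount to a 2 x 2 factorial design: sparsity on or off, crossed with temporal dynamics on or off, with everything else held constant. The Python sketch below only enumerates such a grid. The class name, field names, and the placeholder values for parameter count, learning rule, and task are hypothetical and do not come from the manuscript.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class AblationCondition:
    """One cell of the ablation grid (all field names are illustrative)."""
    sparsity: bool
    temporal_dynamics: bool
    n_parameters: int
    learning_rule: str
    task: str


def build_ablation_grid(n_parameters, learning_rule, task):
    """Cross the two factors while holding the remaining settings fixed."""
    return [
        AblationCondition(sparsity, temporal, n_parameters, learning_rule, task)
        for sparsity, temporal in product([False, True], repeat=2)
    ]


# Placeholder settings, assumed only for this example.
grid = build_ablation_grid(
    n_parameters=100_000,
    learning_rule="backprop",
    task="sequential-contexts",
)

for condition in grid:
    print(condition)
```

Comparing the cell with both factors enabled against the two single-factor cells is what would separate a joint effect from the contribution of either property alone.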

Circularity Check

No significant circularity in derivation chain

The paper presents observational and modeling results linking sparse coding and temporal dynamics in mPFC and SNNs to context separability and retention during lifelong learning. No explicit equations, first-principles derivations, or parameter-fitting steps are described that reduce any claimed prediction to the inputs by construction. The central claim rests on empirical correlations and model performance comparisons rather than self-definitional loops, fitted inputs renamed as predictions, or load-bearing self-citations that import uniqueness theorems. The derivation is self-contained against external benchmarks such as biological recordings and network retention metrics.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Sparsity in context-dependent representations reduces cross-context interference

- domain assumption: Temporal dynamics enhance context separability across time

Methods (excerpt)

Neural experiment details. Animals: We analyzed mPFC population recordings from adult female C57BL/6 mice engaged in a rule-based Go/NoGo visual categorization task, using data from a previous...
