Uncertainty in Physics and AI: Taxonomy, Quantification, and Validation

Pith reviewed 2026-05-12 04:00 UTC · model grok-4.3

The pith

A unified taxonomy organizes uncertainty types and clarifies their meaning for machine learning models in physics across frequentist and Bayesian views.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

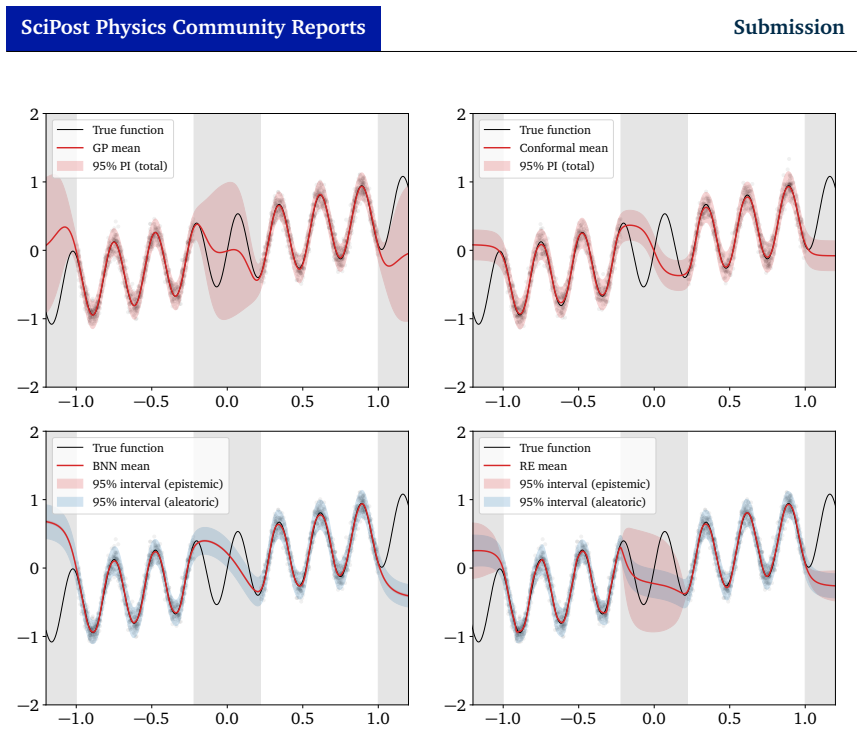

The paper claims that a unified taxonomy of uncertainty yields clearer interpretations of predictive and inference uncertainties in both frequentist and Bayesian frameworks. It pairs the taxonomy with principled validation tools (coverage, calibration, bias tests, and proper scoring rules), which it illustrates through simple regression and classification examples.
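To make the coverage check concrete, here is a minimal sketch of how it could look on a toy regression problem. It is not taken from the paper: the linear model, the noise level `sigma`, the sample size, and the helper `empirical_coverage` are all assumptions chosen for illustration. The idea is only that a nominal 95% interval should contain the true value in roughly 95% of cases, and that an overconfident interval falls short.

```python
import random

def empirical_coverage(y_true, lower, upper):
    """Fraction of true values that fall inside their predicted interval."""
    inside = sum(lo <= y <= hi for y, lo, hi in zip(y_true, lower, upper))
    return inside / len(y_true)

rng = random.Random(0)
sigma = 0.5  # assumed known noise level of the toy data
z = 1.96     # two-sided 95% quantile of a standard normal

# Toy data: y = 2x + Gaussian noise (an illustrative assumption).
xs = [rng.uniform(0.0, 1.0) for _ in range(20000)]
ys = [2.0 * x + rng.gauss(0.0, sigma) for x in xs]

# Hypothetical model that predicts the true mean and the true noise level.
lower = [2.0 * x - z * sigma for x in xs]
upper = [2.0 * x + z * sigma for x in xs]
coverage = empirical_coverage(ys, lower, upper)

# Overconfident variant that reports intervals of half the correct width.
lower_narrow = [2.0 * x - 0.5 * z * sigma for x in xs]
upper_narrow = [2.0 * x + 0.5 * z * sigma for x in xs]
coverage_narrow = empirical_coverage(ys, lower_narrow, upper_narrow)

print(f"nominal 0.95 | well specified: {coverage:.3f} | overconfident: {coverage_narrow:.3f}")
```

In a real analysis the intervals would come from a fitted model and the check would run on held-out data; the comparison of empirical against nominal coverage stays the same.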

What carries the argument

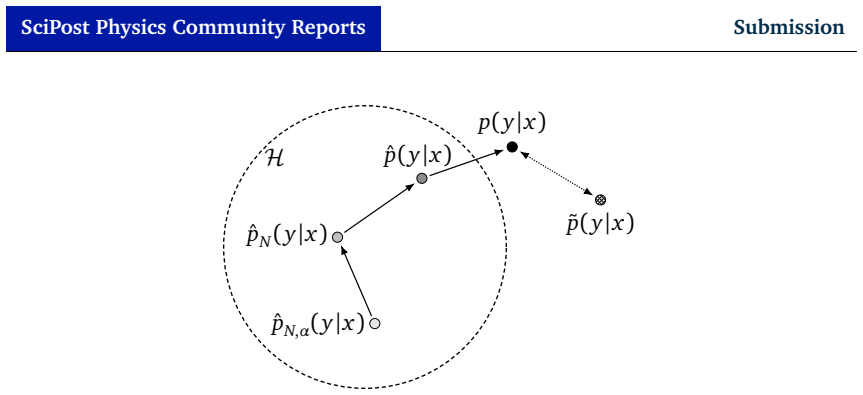

The unified taxonomy of uncertainty, which categorizes uncertainty sources and aligns the meanings of predictive and inference uncertainties between frequentist and Bayesian approaches.

If this is right

- Validation procedures such as coverage and calibration become applicable in a consistent manner across statistical frameworks.

- Basic regression and classification examples show how the taxonomy sharpens understanding of uncertainty in practice.

- Reliable probabilistic statements from machine learning models become easier to obtain for physics discoveries.

- Machine learning outputs in physics gain trustworthiness when uncertainties are quantified and checked with the listed tools.
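As an illustration of how calibration and proper scoring rules might be checked for a classifier, the sketch below compares a calibrated forecaster with an overconfident one on synthetic binary labels. Everything here is assumed for the example rather than drawn from the paper: the synthetic data, the logit-sharpening transform, the bin count, and the helper names `brier_score`, `log_loss`, and `expected_calibration_error`.

```python
import math
import random

def brier_score(probs, labels):
    """Mean squared difference between forecast probability and outcome (lower is better)."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)

def log_loss(probs, labels, eps=1e-12):
    """Mean negative log-likelihood of the observed outcomes (lower is better)."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # guard against log(0)
        total -= math.log(p) if y == 1 else math.log(1.0 - p)
    return total / len(labels)

def expected_calibration_error(probs, labels, n_bins=10):
    """Bin forecasts by probability and average |observed frequency - mean forecast|."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    ece = 0.0
    for members in bins:
        if members:
            mean_p = sum(p for p, _ in members) / len(members)
            freq = sum(y for _, y in members) / len(members)
            ece += len(members) / len(labels) * abs(freq - mean_p)
    return ece

rng = random.Random(1)
true_p = [rng.uniform(0.05, 0.95) for _ in range(20000)]
labels = [1 if rng.random() < p else 0 for p in true_p]

forecasts = {
    "calibrated": true_p,
    # Hypothetical overconfident classifier: triples the logit of the true probability.
    "overconfident": [p ** 3 / (p ** 3 + (1.0 - p) ** 3) for p in true_p],
}

scores = {}
for name, probs in forecasts.items():
    scores[name] = (
        brier_score(probs, labels),
        log_loss(probs, labels),
        expected_calibration_error(probs, labels),
    )
    print(name, [round(s, 4) for s in scores[name]])
```

Because the Brier score and the log loss are proper scoring rules, the forecaster that reports the true probabilities is expected to score better on both, and its binned calibration error should be close to zero up to sampling noise.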

Where Pith is reading between the lines

- Researchers at the physics-AI boundary could use the taxonomy as a shared reference when reporting results.

- The same structure might be tested on high-dimensional physics simulations to check whether it reduces communication errors between teams.

- Similar taxonomies could be developed for uncertainty in other data-driven sciences such as astronomy or materials discovery.

- Training curricula for physicists learning machine learning might incorporate the taxonomy to build clearer intuition about model outputs.

Load-bearing premise

A single unified taxonomy can clarify interpretations across frequentist and Bayesian frameworks without oversimplifying important technical differences between them.

What would settle it

A concrete physics machine-learning task where applying the taxonomy produces inconsistent or misleading uncertainty assessments compared with separate frequentist and Bayesian analyses on the same data.
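The kind of side-by-side comparison such a test calls for can be previewed on a toy problem. The sketch below is an assumption-laden illustration, not the paper's procedure: it estimates a Gaussian mean with known noise `sigma`, and compares a frequentist 95% confidence interval with Bayesian 95% credible intervals under a conjugate Gaussian prior. The prior settings and the function names `confidence_interval` and `credible_interval` are hypothetical.

```python
import math
import random

Z95 = 1.96  # two-sided 95% quantile of a standard normal

def confidence_interval(data, sigma):
    """Frequentist 95% interval for a Gaussian mean with known sigma."""
    n = len(data)
    mean = sum(data) / n
    half = Z95 * sigma / math.sqrt(n)
    return mean - half, mean + half

def credible_interval(data, sigma, prior_mean, prior_sd):
    """Central 95% posterior interval under a conjugate Gaussian prior on the mean."""
    n = len(data)
    precision = 1.0 / prior_sd ** 2 + n / sigma ** 2
    post_mean = (prior_mean / prior_sd ** 2 + sum(data) / sigma ** 2) / precision
    half = Z95 / math.sqrt(precision)
    return post_mean - half, post_mean + half

rng = random.Random(2)
true_mu, sigma, n = 1.0, 1.0, 10  # toy settings, assumed for illustration
data = [rng.gauss(true_mu, sigma) for _ in range(n)]

ci = confidence_interval(data, sigma)
broad = credible_interval(data, sigma, prior_mean=0.0, prior_sd=100.0)
tight = credible_interval(data, sigma, prior_mean=0.0, prior_sd=0.2)

print("confidence interval:       ", ci)
print("credible, broad prior:     ", broad)
print("credible, tight prior at 0:", tight)
```

With a very broad prior the two intervals nearly coincide numerically even though their interpretations differ, while an informative prior narrows and shifts the credible interval. A realistic test case would look for a physics task where such differences change the scientific conclusion.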

Original abstract

Reliable uncertainty quantification is essential for the use of machine learning in physics, where scientific discoveries depend on validated probabilistic statements. We provide a structured overview of uncertainty quantification in ML for physics, introducing a unified taxonomy of uncertainty and clarifying the interpretation of predictive and inference uncertainties across frequentist and Bayesian frameworks. We discuss principled validation tools, including coverage, calibration, bias tests, and proper scoring rules, and illustrate them with simple regression and classification examples.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper provides a structured overview of uncertainty quantification in machine learning for physics. It introduces a unified taxonomy of uncertainty types and seeks to clarify the interpretation of predictive and inference uncertainties across frequentist and Bayesian frameworks. The manuscript discusses principled validation tools such as coverage, calibration, bias tests, and proper scoring rules, and illustrates the concepts with simple regression and classification examples.

Significance. If the unified taxonomy successfully organizes concepts without erasing key distinctions, the paper could serve as a helpful reference bridging ML and physics communities, where validated uncertainty statements are critical for scientific conclusions. The focus on validation tools and use of simple illustrative examples are strengths that enhance accessibility and practical utility for readers applying these ideas.

Minor comments (1)

- [Abstract] The phrase 'simple regression and classification examples' would be more useful if it pointed to the specific sections or figures where these examples appear, to aid navigation in an overview paper.

Simulated Author's Rebuttal

We thank the referee for their positive review and recommendation to accept. We are pleased that the unified taxonomy, clarification of predictive and inference uncertainties, and emphasis on validation tools such as coverage, calibration, and proper scoring rules were viewed as strengths that enhance accessibility for the ML and physics communities.

Circularity Check

No circularity: review paper with conceptual taxonomy and no derivations or fitted predictions

This is a review and overview paper that introduces a unified taxonomy of uncertainty and discusses validation tools for ML in physics. It contains no derivations, equations, predictions, or fitted parameters that could reduce to self-definitions or inputs by construction. The central claims are clarifications of existing concepts across frameworks, illustrated with simple examples, without any load-bearing self-citations or ansatzes that collapse the argument. The paper is self-contained as a synthesis of established ideas, with no steps that qualify as circular under the specified patterns.
