A Theory of Multilevel Interactive Equilibrium in NeuroAI

Pith reviewed 2026-05-12 04:12 UTC · model grok-4.3

The pith

Multilevel Interactive Equilibrium generalizes Nash equilibrium to intelligent systems by requiring mutual stabilization across neural learning dynamics, cognitive representations, and behavioral strategies.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

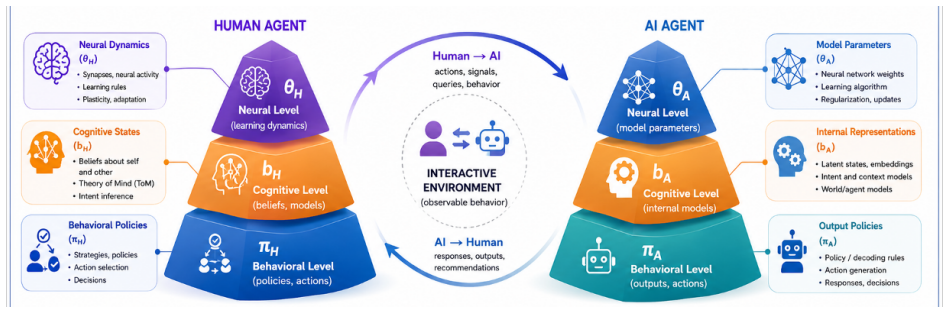

At its core, Multilevel Interactive Equilibrium (MIE) generalizes the classical Nash equilibrium to intelligent systems with internal computation. Rather than being defined solely at the level of observable behavior, equilibrium emerges when neural learning dynamics, cognitive representations, and behavioral strategies mutually stabilize between interacting agents. This framework applies uniformly to interactions between two biological brains, two artificial agents, or hybrid human-AI systems.

What carries the argument

Multilevel Interactive Equilibrium (MIE), the condition that neural learning dynamics, cognitive representations, and behavioral strategies reach mutual stabilization between agents under partial observability and bounded computation.

If this is right

- Stability criteria for human-autonomous vehicle driving can be stated in terms of joint neural, cognitive, and behavioral alignment rather than behavior alone.

- Human-LLM interaction can be analyzed by checking whether the model’s internal representations and the human’s cognitive model settle into mutual consistency.

- Computational psychiatry gains a language for modeling disorders as failures of multilevel stabilization between agents.

- Experimental protocols can estimate MIE parameters from simultaneous recordings of neural signals and behavioral choices in hybrid systems.

- Computational methods become available for solving equilibria when agents have bounded computation and uncertain observations.

Where Pith is reading between the lines

- Design of safe AI systems may need to align internal learning rules with human cognitive dynamics in addition to matching final actions.

- Metrics for multi-agent reinforcement learning could be extended to penalize divergence at the representation level even when behavior appears coordinated.

- Brain-computer interfaces might be evaluated by whether they produce multilevel equilibrium rather than by behavioral performance alone.

- The same stabilization logic could be tested in purely artificial multi-agent settings by tracking hidden-layer dynamics across agents.

Load-bearing premise

Equilibrium can be defined and stabilized across unobservable internal levels such as neural dynamics and cognitive representations without independent measurable criteria for those states.

What would settle it

A controlled experiment on human-AI interaction in which observable behavior reaches a stable pattern while neural activity or internal representations continue to change without bound, or the reverse.
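A toy illustration of the first outcome (stable behavior, unbounded internal change) can be built in a few lines. This is a hypothetical sketch, not a model from the paper: the two-logit softmax policy, the `drift` update, and all variable names are assumptions chosen only to show that behavioral stability does not by itself imply stability of internal parameters.

```python
import math

def policy(theta):
    """Softmax over two logits; it depends only on theta[0] - theta[1]."""
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    total = sum(exps)
    return [e / total for e in exps]

def drift(theta, step=1.0):
    """Hypothetical internal update that shifts both logits by the same amount."""
    return [t + step for t in theta]

theta = [0.5, -0.5]            # assumed initial internal parameters
initial_policy = policy(theta)

for _ in range(1000):
    theta = drift(theta)

# Largest change in action probabilities versus the change in the first parameter.
behavior_change = max(abs(p - q) for p, q in zip(policy(theta), initial_policy))
internal_change = abs(theta[0] - 0.5)
print(behavior_change, internal_change)
```

Because the softmax is invariant to a common shift of its logits, the observable policy never moves while the internal parameters grow with every step. A behavior-only equilibrium test would accept this trajectory; a test that also inspects the internal level would not.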

Original abstract

We propose a game-theoretic framework for adaptive multi-agent intelligent systems. Unlike classical game theory, which often treats strategies as primitive objects chosen by perfectly rational agents, the proposed framework provides a mathematical foundation for studying equilibrium in NeuroAI and can be viewed as an extension of game theory under relaxed assumptions, including partial observability, bounded computation, and uncertainty. At its core, Multilevel Interactive Equilibrium (MIE) generalizes the classical Nash equilibrium to intelligent systems with internal computation. Rather than being defined solely at the level of observable behavior, equilibrium emerges when neural learning dynamics, cognitive representations, and behavioral strategies mutually stabilize between interacting agents. This framework applies uniformly to interactions between two biological brains, two artificial agents, or hybrid human-AI systems. We discuss applications of multilevel game theory to human-autonomous vehicle driving, human-machine interaction, human-large language model (LLM) interaction, and computational psychiatry. We also outline experimental strategies and computational methods for estimating MIE and discuss challenges and prospects for future research.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a game-theoretic framework called Multilevel Interactive Equilibrium (MIE) for adaptive multi-agent intelligent systems in NeuroAI. It generalizes the classical Nash equilibrium by defining equilibrium as emerging from mutual stabilization across neural learning dynamics, cognitive representations, and behavioral strategies, under relaxed assumptions of partial observability, bounded computation, and uncertainty. The framework is positioned as applicable to biological brains, artificial agents, or hybrid systems, with discussions of applications to human-autonomous vehicle interactions, human-machine interaction, human-LLM interaction, and computational psychiatry, plus outlines of experimental and computational estimation methods.

Significance. If rigorously formalized with definitions, consistency proofs, and reduction to classical cases, MIE could offer a useful conceptual bridge between game theory and NeuroAI for modeling equilibria involving internal states. As currently presented, the absence of any mathematical content limits its significance to a high-level outline.

major comments (2)

- Abstract: The central claim that MIE generalizes Nash equilibrium to systems with internal computation is stated only conceptually, with no equations, formal definition of 'mutual stabilization,' derivation, or proof that the framework reduces to Nash equilibrium under full observability and unbounded computation. This absence is load-bearing for the paper's core contribution and prevents technical evaluation of consistency or novelty.

- Abstract: The definition of MIE via mutual stabilization across unobservable levels (neural dynamics and cognitive representations) lacks any independent, measurable criteria or external benchmarks for stabilization, raising a risk that the equilibrium concept is defined circularly in terms of itself rather than providing falsifiable conditions.

minor comments (2)

- The manuscript would benefit from including at least one simplified mathematical example or toy model (e.g., a two-agent case with explicit update rules) to demonstrate how MIE is constructed or estimated from the relaxed assumptions.

- Additional references to existing work in neuroeconomics, hierarchical game theory, multi-agent reinforcement learning with partial observability, or bounded rationality models should be added to better situate the proposed framework.
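The toy model requested in the first minor comment could look something like the sketch below. Everything in it is an assumption made for illustration: the anti-coordination payoff, the scalar value estimate `q` standing in for the neural level, the belief `b` standing in for the cognitive level, the logistic policy standing in for the behavioral level, and the constants `BETA`, `ALPHA`, and `ETA`. It is not the paper's construction.

```python
import math

BETA = 1.0    # assumed inverse temperature of the behavioral policy
ALPHA = 0.1   # assumed learning rate of the belief (cognitive) update
ETA = 0.1     # assumed learning rate of the value (neural) update

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def advantage(belief_other_plays_1):
    """Payoff advantage of action 1 in an assumed anti-coordination game."""
    return 1.0 - 2.0 * belief_other_plays_1

def step(q, b):
    """One simultaneous update of both agents, computed from the previous state."""
    pi = [sigmoid(BETA * q_i) for q_i in q]                       # behavioral level
    new_b = [b[i] + ALPHA * (pi[1 - i] - b[i]) for i in range(2)]  # cognitive level
    new_q = [q[i] + ETA * (advantage(b[i]) - q[i]) for i in range(2)]  # neural level
    return new_q, new_b

def run(q, b, tol=1e-12, max_steps=100_000):
    """Iterate until no coordinate of the joint state changes by more than tol."""
    for _ in range(max_steps):
        new_q, new_b = step(q, b)
        change = max(abs(x - y) for x, y in zip(new_q + new_b, q + b))
        q, b = new_q, new_b
        if change < tol:
            break
    pi = [sigmoid(BETA * q_i) for q_i in q]
    return q, b, pi

q_star, b_star, pi_star = run(q=[0.0, 0.0], b=[0.9, 0.2])
print(q_star, b_star, pi_star)
```

At the joint fixed point of this sketch, each belief equals the other agent's policy, each value estimate equals the payoff advantage implied by that belief, and each policy is a logistic response to the value estimate. In this particular game, that point is also where each agent is indifferent between its two actions.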

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which identify important opportunities to strengthen the formal foundations of the Multilevel Interactive Equilibrium framework. We address each major comment point by point below and outline the revisions we will make.

- Referee: Abstract: The central claim that MIE generalizes Nash equilibrium to systems with internal computation is stated only conceptually, with no equations, formal definition of 'mutual stabilization,' derivation, or proof that the framework reduces to Nash equilibrium under full observability and unbounded computation. This absence is load-bearing for the paper's core contribution and prevents technical evaluation of consistency or novelty.

  Authors: We agree that the current abstract and manuscript present the generalization at a conceptual level without explicit equations or proofs. In the revised version, we will add a formal definition of MIE, including the joint state space across neural, representational, and behavioral levels, the coupled dynamics governing mutual stabilization (defined as convergence to a joint fixed point), and a reduction theorem showing that MIE coincides with Nash equilibrium when observability is complete and computational bounds are removed. These elements will be summarized in the abstract and developed in a dedicated formal section.

  Revision: yes

- Referee: Abstract: The definition of MIE via mutual stabilization across unobservable levels (neural dynamics and cognitive representations) lacks any independent, measurable criteria or external benchmarks for stabilization, raising a risk that the equilibrium concept is defined circularly in terms of itself rather than providing falsifiable conditions.

  Authors: We acknowledge the risk of circularity in the current phrasing. The revision will define stabilization non-circularly as the joint cessation of change in the learning operators at each level, with independent criteria drawn from observable behavioral variance and inferred internal consistency (e.g., via representational similarity analysis). We will also elaborate the experimental and computational estimation procedures already outlined in the manuscript to supply concrete falsifiable tests, including protocols for hybrid human-AI settings that rely on measurable behavioral and neural data.

  Revision: yes
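The "joint cessation of change" criterion in the second response can be made concrete with a simple per-level check. The sketch below is only one possible reading: the function names, the tolerance `tol`, the window length, and the synthetic trajectories are all hypothetical, and real neural or representational data would need a more careful distance measure than a coordinate-wise difference.

```python
def max_step_change(trajectory, window):
    """Largest absolute coordinate change over the last `window` steps."""
    if len(trajectory) < 2:
        raise ValueError("need at least two time points to measure change")
    recent = trajectory[-(window + 1):]
    return max(
        abs(a - b)
        for prev, curr in zip(recent, recent[1:])
        for a, b in zip(prev, curr)
    )

def stabilized_levels(levels, tol=1e-3, window=5):
    """Report, level by level, whether recent changes stay below tol."""
    return {
        name: max_step_change(trajectory, window) < tol
        for name, trajectory in levels.items()
    }

# Synthetic trajectories (assumed data, one scalar per time step).
levels = {
    "neural": [[0.5 ** t] for t in range(20)],      # decays toward a fixed value
    "cognitive": [[0.1 * t] for t in range(20)],    # keeps drifting
    "behavioral": [[0.5] for _ in range(20)],       # constant
}

report = stabilized_levels(levels)
joint_stabilization = all(report.values())
print(report, joint_stabilization)
```

Under this reading, joint stabilization holds only if every level passes the check, so the drifting cognitive trajectory alone is enough to reject it here even though behavior is constant.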

Circularity Check

No derivation chain or equations present to evaluate for circularity

The manuscript is a high-level conceptual proposal that outlines Multilevel Interactive Equilibrium as a generalization of Nash equilibrium but supplies no formal definitions, equations, derivations, or mathematical steps. Without any claimed derivation chain, predictions, or first-principles results that could reduce to inputs by construction, no circularity can be identified or exhibited via quotation of specific reductions. The framework remains self-contained as a descriptive extension under relaxed assumptions, with no load-bearing self-citations, fitted inputs, or ansatzes invoked.

Axiom & Free-Parameter Ledger

axioms (2)

- Ad hoc to paper: Equilibrium in intelligent systems emerges from mutual stabilization across neural learning dynamics, cognitive representations, and behavioral strategies

- Domain assumption: Classical Nash equilibrium can be generalized under relaxed assumptions of partial observability, bounded computation, and uncertainty

invented entities (1)

- Multilevel Interactive Equilibrium (MIE): no independent evidence

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/RealityFromDistinction.lean, reality_from_one_distinction (unclear)

  unclear: Relation between the paper passage and the cited Recognition theorem.

  Definition 4 (Multilevel Interactive Equilibrium) A tuple (θ*, b*, π*) is a Multilevel Interactive Equilibrium (MIE) if ... θ* is a neural equilibrium ... b* is a cognitive equilibrium ... π* is a behavioral equilibrium

- IndisputableMonolith/Cost/FunctionalEquation.lean, washburn_uniqueness_aczel (unclear)

  unclear: Relation between the paper passage and the cited Recognition theorem.

  The evolution of the full system can be described by a Markov process z_{t+1} ∼ T(· | z_t) ... induced jointly by the environmental dynamics and the multilevel update operators {F_i, G_i, H_i}
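The two quoted passages can be read together as a fixed-point condition on the multilevel dynamics. The block below is one hedged way to write that reading down. The assignment of F_i, G_i, and H_i to the neural, cognitive, and behavioral levels, their argument lists, and the deterministic form are assumptions made for illustration; the quoted passages elide the actual conditions of Definition 4, so this should not be taken as the paper's definition.

```latex
% Assumed level-wise updates for agent i. The source states only that
% z_{t+1} \sim T(\cdot \mid z_t) is induced by the operators \{F_i, G_i, H_i\}.
\begin{align}
  \theta_i^{t+1} &= F_i\bigl(\theta_i^{t}, b_i^{t}, \pi^{t}\bigr), \\
  b_i^{t+1}      &= G_i\bigl(\theta_i^{t}, b_i^{t}, \pi^{t}\bigr), \\
  \pi_i^{t+1}    &= H_i\bigl(\theta_i^{t}, b_i^{t}\bigr).
\end{align}
% Joint state, and a candidate equilibrium written as a joint fixed point.
\begin{equation}
  z_t = (\theta^{t}, b^{t}, \pi^{t}), \qquad
  z^* = (\theta^*, b^*, \pi^*) \ \text{with}\quad
  \theta_i^* = F_i(\theta_i^*, b_i^*, \pi^*), \;
  b_i^* = G_i(\theta_i^*, b_i^*, \pi^*), \;
  \pi_i^* = H_i(\theta_i^*, b_i^*) \quad \forall i.
\end{equation}
```

In the stochastic setting of the quoted Markov process, the natural analogue of this fixed point would be a stationary distribution of T rather than a single state; which of the two the paper intends cannot be determined from the excerpts shown here.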

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.
