A Riemannian quasi-Newton algorithm for optimization with Euclidean bounds

Pith reviewed 2026-05-12 04:43 UTC · model grok-4.3

The pith

A Riemannian limited-memory BFGS method handles Euclidean bounds on manifolds by adapting the generalized Cauchy point strategy to tangent spaces.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

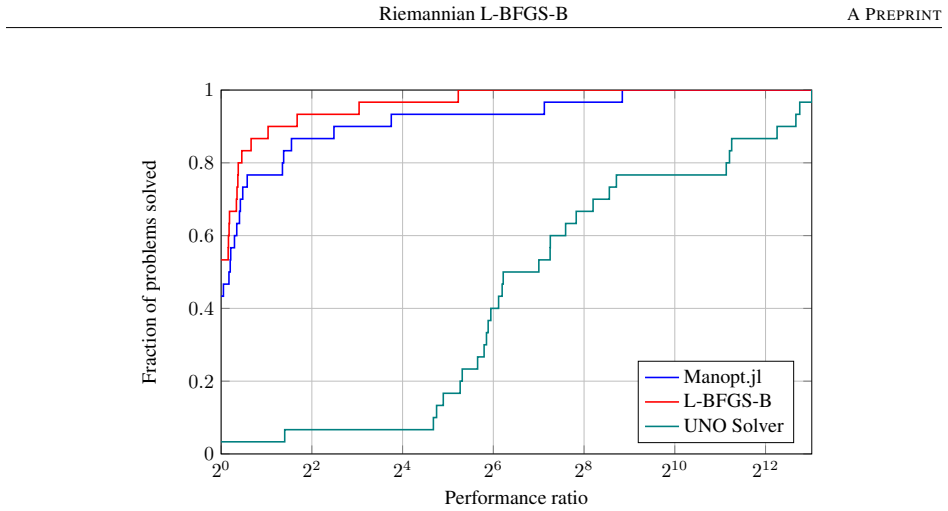

The central claim is that a limited-memory quasi-Newton update performed in the tangent space, paired with a Riemannian adaptation of the generalized Cauchy point strategy, yields a practical solver for manifold optimization problems that also carry Euclidean bounds. On pure Euclidean test problems the method performs close to classical L-BFGS-B; on two mixed manifold-bound problems (amplitude-limited blind source separation with Gaussianity penalization and bounded-variance maximum likelihood common principal components analysis) it outperforms existing solvers by several orders of magnitude.

What carries the argument

The central mechanism is the combination of tangent-space limited-memory BFGS updates with a Riemannian adaptation of the generalized Cauchy point strategy from L-BFGS-B.
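For readers unfamiliar with the generalized Cauchy point, the following is a minimal sketch of the classical Euclidean construction used in L-BFGS-B: it follows the projected steepest-descent path and stops at the first local minimizer of a quadratic model. It is not the paper's Riemannian adaptation or its Manopt.jl code. The function name, the dense matrix `B`, and the assumption that `B` is symmetric positive definite are illustrative choices; a Riemannian variant would presumably run a similar search in coordinates of the tangent space at the current iterate.

```python
import math

def generalized_cauchy_point(x, g, B, lower, upper):
    """First local minimizer of the model g.z + 0.5 z.B.z along the
    projected steepest-descent path (Euclidean sketch, B assumed SPD)."""
    n = len(x)
    # t_break[i]: step length at which coordinate i of x - t*g hits a bound.
    t_break = []
    for i in range(n):
        if g[i] < 0:
            t_break.append((x[i] - upper[i]) / g[i])
        elif g[i] > 0:
            t_break.append((x[i] - lower[i]) / g[i])
        else:
            t_break.append(math.inf)
    # Coordinates already sitting on their bound do not move.
    d = [-g[i] if t_break[i] > 0 else 0.0 for i in range(n)]
    z = [0.0] * n  # displacement from x along the path
    t_old = 0.0
    segments = sorted({t for t in t_break if 0 < t < math.inf}) + [math.inf]
    for t_next in segments:
        Bz = [sum(B[i][j] * z[j] for j in range(n)) for i in range(n)]
        Bd = [sum(B[i][j] * d[j] for j in range(n)) for i in range(n)]
        slope = sum(d[i] * (g[i] + Bz[i]) for i in range(n))
        curvature = sum(d[i] * Bd[i] for i in range(n))
        if slope >= 0:
            break  # the model no longer decreases along this segment
        dt_star = -slope / curvature
        dt = t_next - t_old
        if dt_star < dt:
            z = [z[i] + dt_star * d[i] for i in range(n)]
            break  # minimizer lies inside the current segment
        # Walk to the breakpoint and freeze the coordinates that hit a bound.
        z = [z[i] + dt * d[i] for i in range(n)]
        for i in range(n):
            if t_break[i] == t_next:
                z[i] = (upper[i] if g[i] < 0 else lower[i]) - x[i]
                d[i] = 0.0
        t_old = t_next
    return [x[i] + z[i] for i in range(n)]

identity = [[1.0, 0.0], [0.0, 1.0]]
print(generalized_cauchy_point([0.0, 0.0], [-1.0, -4.0], identity,
                               [0.0, 0.0], [2.0, 1.0]))
```

In this small case the second coordinate reaches its upper bound first and is frozen there, after which the search continues in the remaining free coordinate. Recomputing the matrix-vector products on every segment keeps the sketch short; the classical method updates these quantities incrementally using the compact limited-memory representation.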

If this is right

- When all variables are Euclidean, the algorithm performs only slightly worse than classical L-BFGS-B.

- It outperforms interior-point methods on standard benchmark problems.

- It outperforms existing methods by several orders of magnitude on amplitude-limited blind source separation with Gaussianity penalization.

- It provides superior performance for bounded-variance maximum likelihood common principal components analysis.

Where Pith is reading between the lines

- The same adaptation pattern could be applied to other quasi-Newton or first-order methods on manifolds that also need inequality constraints.

- Applications listed in the abstract, such as neuroimaging and EEG classification, become more practical once mixed manifold-bound problems can be solved at this speed.

- The generic framework may transfer to other manifold optimization libraries without major redesign.

Load-bearing premise

The Riemannian adaptation of the generalized Cauchy point strategy from L-BFGS-B can be combined with tangent-space limited-memory BFGS updates without introducing instability or losing the quasi-Newton convergence properties on the manifold.
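To make the premise concrete, here is a minimal sketch of the standard limited-memory BFGS two-loop recursion with a curvature safeguard, written for plain coordinate vectors. It is an illustration, not the paper's implementation: the function names, the threshold `eps`, and the memory size are assumptions, and a Riemannian version would additionally have to move the stored pairs into the current tangent space before using them. The point it shows is that pairs with non-positive curvature are skipped, which is the usual way the inverse Hessian approximation is kept positive definite.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def update_memory(memory, s, y, max_pairs=5, eps=1e-10):
    """Store the pair (s, y) only if it satisfies a curvature condition."""
    if dot(s, y) > eps * dot(y, y):
        memory.append((s, y))
        if len(memory) > max_pairs:
            memory.pop(0)  # drop the oldest pair
        return True
    return False  # pair rejected, approximation left unchanged

def two_loop_direction(grad, memory):
    """Return -H*grad, where H is the implicit L-BFGS inverse Hessian."""
    q = list(grad)
    alphas = []
    for s, y in reversed(memory):  # newest pair first
        a = dot(s, q) / dot(s, y)
        alphas.append(a)
        q = [qi - a * yi for qi, yi in zip(q, y)]
    if memory:
        s, y = memory[-1]
        gamma = dot(s, y) / dot(y, y)  # initial scaling of the identity
    else:
        gamma = 1.0
    r = [gamma * qi for qi in q]
    for (s, y), a in zip(memory, reversed(alphas)):  # oldest pair first
        b = dot(y, r) / dot(s, y)
        r = [ri + (a - b) * si for ri, si in zip(r, s)]
    return [-ri for ri in r]

memory = []
update_memory(memory, [1.0, 0.0], [2.0, 0.0])   # accepted: s.y > 0
update_memory(memory, [1.0, 0.0], [-1.0, 0.0])  # rejected: s.y < 0
direction = two_loop_direction([2.0, 2.0], memory)
print(direction, dot(direction, [2.0, 2.0]) < 0)
```

With every stored pair satisfying the curvature condition, the returned direction has a negative inner product with the gradient, so it is a descent direction. Whether this property survives the retraction and the handling of active bounds is exactly what the premise above leaves open.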

What would settle it

Numerical runs on the amplitude-limited blind source separation or bounded-variance common principal components analysis problems in which the proposed algorithm fails to reproduce the reported orders-of-magnitude advantage over existing methods.

Original abstract

We propose a Riemannian limited-memory BFGS method for optimization problems with Euclidean bounds. The method combines a limited-memory quasi-Newton update in the tangent space with a Riemannian adaptation of the generalized Cauchy point strategy from classical L-BFGS-B, enabling efficient handling of Euclidean bounds while exploiting the geometric structure of the optimization domain. This setting is important in several applications, including covariance matrix estimation with bounded variance, neuroimaging, EEG signal classification, and other signal processing or computer-vision tasks that couple manifold variables with constrained Euclidean parameters. We provide a generic algorithmic framework and an implementation of the algorithm in the Manopt.jl library. Numerical experiments on benchmark problems indicate only minor reduction in performance on Euclidean problems compared to the classical L-BFGS-B method, while outperforming interior-point methods. Furthermore, the algorithm was tested on two mixed manifold and bounded Euclidean problems: amplitude-limited blind source separation with Gaussianity penalization and bounded-variance maximum likelihood common principal components analysis. The proposed method outperforms existing methods by several orders of magnitude.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a Riemannian limited-memory BFGS algorithm for optimization problems involving both manifold constraints and Euclidean bounds. It adapts the generalized Cauchy point strategy from classical L-BFGS-B to the Riemannian setting while performing limited-memory quasi-Newton updates entirely in the tangent space, provides a generic framework and Manopt.jl implementation, and reports that the method shows only minor performance loss versus classical L-BFGS-B on pure Euclidean problems while outperforming interior-point methods and delivering orders-of-magnitude speedups on two mixed problems (amplitude-limited blind source separation with Gaussianity penalization and bounded-variance maximum-likelihood common principal components analysis).

Significance. If the central algorithmic construction is shown to preserve descent and positive-definiteness properties, the work would provide a practical tool for mixed manifold-Euclidean problems arising in signal processing, neuroimaging, and covariance estimation. The open-source implementation in Manopt.jl is a clear strength that supports reproducibility.

major comments (2)

- [Algorithmic framework (§3–4)] The algorithmic description (presumably §3–4) does not supply a proof or even a sufficient-descent argument that the tangent-space L-BFGS update remains positive definite after the Riemannian retraction and the bound-projection step when Euclidean bounds become active. This property is load-bearing for the claim that the method reliably outperforms existing solvers by orders of magnitude on the two mixed-manifold test problems.

- [Numerical experiments] The numerical-experiments section (and the abstract) asserts “outperformance by several orders of magnitude” on the amplitude-limited BSS and bounded-variance ML-CPCA problems, yet supplies neither quantitative tables, problem dimensions, iteration counts, CPU times, nor error-bar statistics. Without these data the central empirical claim cannot be verified.

minor comments (2)

- [Abstract] The abstract would be strengthened by a single sentence referencing the specific tables or figures that quantify the claimed speed-ups.

- [Notation and preliminaries] Notation for the Riemannian retraction and the tangent-space limited-memory update should be introduced once and used consistently; several symbols appear to be defined only locally.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed report. We address each major comment below and indicate the corresponding revisions to the manuscript.


- Referee: [Algorithmic framework (§3–4)] The algorithmic description (presumably §3–4) does not supply a proof or even a sufficient-descent argument that the tangent-space L-BFGS update remains positive definite after the Riemannian retraction and the bound-projection step when Euclidean bounds become active. This property is load-bearing for the claim that the method reliably outperforms existing solvers by orders of magnitude on the two mixed-manifold test problems.

  Authors: We agree that an explicit argument establishing positive definiteness and sufficient descent after the retraction and bound-projection steps would strengthen the presentation. In the revised manuscript we will add a concise proof sketch (or sufficient-descent lemma) in §3 showing that the limited-memory BFGS update performed entirely in the tangent space preserves the standard curvature-pair conditions of Euclidean L-BFGS, that the generalized Cauchy-point computation and subsequent projection onto the tangent-space bound set remain first-order consistent with the Euclidean L-BFGS-B construction, and that the retraction (being a local diffeomorphism) does not alter the descent property at the current iterate. This addition directly supports the reliability claims for the mixed-manifold test problems.

  Revision: yes

- Referee: [Numerical experiments] The numerical-experiments section (and the abstract) asserts “outperformance by several orders of magnitude” on the amplitude-limited BSS and bounded-variance ML-CPCA problems, yet supplies neither quantitative tables, problem dimensions, iteration counts, CPU times, nor error-bar statistics. Without these data the central empirical claim cannot be verified.

  Authors: We acknowledge that the current numerical section lacks the quantitative detail needed for independent verification. The revised manuscript will include new tables (and an expanded appendix) reporting, for each benchmark and application: problem dimensions, iteration counts, CPU times, function/gradient evaluations, and error bars (standard deviation over repeated runs with different random seeds). These data will be presented both for the pure-Euclidean comparisons and for the two mixed manifold-plus-bound problems, allowing direct assessment of the claimed speed-ups.

  Revision: yes
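As a sketch of the kind of reporting the second response promises, the snippet below summarizes repeated runs by mean and sample standard deviation and expresses a speed-up in orders of magnitude as a base-10 logarithm of the ratio of mean times. The function names are hypothetical, and the numbers are placeholders chosen only to exercise the code; they are not measurements from the paper.

```python
import math
import statistics

def summarize(samples):
    """Mean and sample standard deviation over repeated runs."""
    return statistics.mean(samples), statistics.stdev(samples)

def orders_of_magnitude(baseline_times, candidate_times):
    """log10 of the ratio of mean run times (positive: candidate is faster)."""
    ratio = statistics.mean(baseline_times) / statistics.mean(candidate_times)
    return math.log10(ratio)

# Placeholder timings in seconds, NOT data from the paper.
baseline = [99.0, 100.0, 101.0]
candidate = [0.9, 1.0, 1.1]

mean_b, std_b = summarize(baseline)
mean_c, std_c = summarize(candidate)
print(f"baseline:  {mean_b:.2f} +/- {std_b:.2f} s")
print(f"candidate: {mean_c:.2f} +/- {std_c:.2f} s")
print(f"speed-up: {orders_of_magnitude(baseline, candidate):.1f} orders of magnitude")
```

Reporting the spread alongside the mean matters here because a claim of "several orders of magnitude" is only checkable when the variation across seeds is small relative to the gap between solvers.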

Circularity Check

No circularity: algorithmic construction with independent empirical validation


The paper proposes a hybrid Riemannian L-BFGS algorithm with a generalized Cauchy point adaptation for Euclidean bounds. Its central contribution is an implementable framework (with Manopt.jl code) whose correctness and performance are assessed via direct numerical comparison on benchmark and application problems. No equations are presented that define a quantity in terms of itself, no fitted parameters are relabeled as predictions, and no load-bearing uniqueness or convergence claim is justified solely by self-citation. The outperformance statements rest on explicit test instances rather than on any reduction to the algorithm's own inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption The feasible set is the product of a Riemannian manifold and a Euclidean box constraint set.
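The product structure in this assumption can be illustrated with a small sketch. The unit sphere is chosen here purely as an example manifold (the review does not say which manifolds the paper uses), and the helper names are hypothetical. The manifold component is updated by a retraction, and the Euclidean component is kept inside its box by clipping. This shows what feasibility means on such a product; it is not the paper's update rule.

```python
import math

def retract_sphere(p, v):
    """Projection retraction on the unit sphere: normalize p + v."""
    q = [pi + vi for pi, vi in zip(p, v)]
    norm = math.sqrt(sum(qi * qi for qi in q))
    return [qi / norm for qi in q]

def project_box(x, lower, upper):
    """Clip each Euclidean coordinate to its interval [lower, upper]."""
    return [min(max(xi, lo), hi) for xi, lo, hi in zip(x, lower, upper)]

def step_on_product(p, x, v, w, lower, upper):
    """Move (p, x) by (v, w) and return a feasible point of sphere x box."""
    moved = [xi + wi for xi, wi in zip(x, w)]
    return retract_sphere(p, v), project_box(moved, lower, upper)

p_new, x_new = step_on_product(
    p=[1.0, 0.0, 0.0], x=[0.5, 2.5],
    v=[0.0, 1.0, 0.0], w=[1.0, -1.0],
    lower=[0.0, 0.0], upper=[1.0, 3.0],
)
print(p_new, x_new)
```

Treating the two components separately is what makes bounds "Euclidean" in this setting: the box constrains only the flat coordinates, while the manifold component stays feasible through the retraction.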

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear · "The method combines a limited-memory quasi-Newton update in the tangent space with a Riemannian adaptation of the generalized Cauchy point strategy from classical L-BFGS-B"

- IndisputableMonolith/Foundation/BranchSelection.lean · branch_selection · unclear · "We construct limited memory approximations to both the Hessian H_k and its inverse B_k simultaneously"

