Recognition: 2 theorem links

StartFlow: From Method Conception to Multi-Perspective Evaluation in UX Prototyping for Software Startups

Pith reviewed 2026-05-12 04:05 UTC · model grok-4.3

The pith

StartFlow is a three-step method that helps non-specialists in software startups create clearer MVP wireflow prototypes with better adherence to user stories and fewer usability defects.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

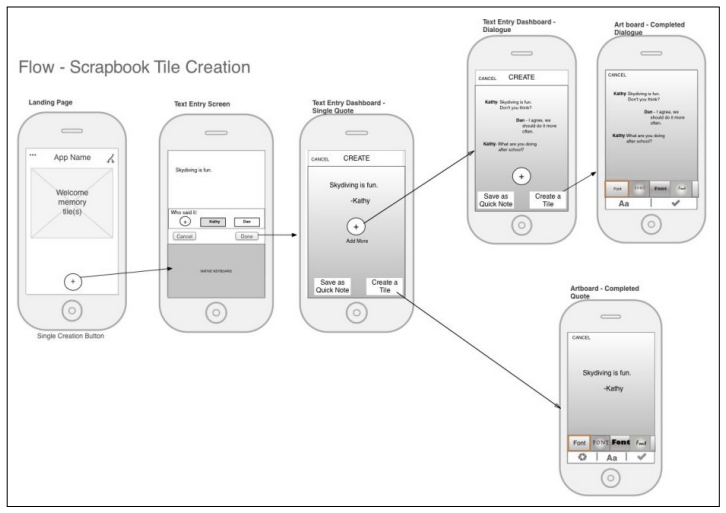

StartFlow is introduced as a method to assist non-specialized professionals in software startups in creating MVP prototypes via the wireflow technique. It comprises three steps: organizing features, building wireflows, and verifying and refining them based on usability heuristics. The focus group with researchers in Software Engineering, Human-Computer Interaction, and Software Startups found the method straightforward, flexible, and helpful for structuring user flows and identifying visual components, though participants called for better presentation of its iterative nature and stronger ties to broader UX principles. The proof-of-concept experiment and subsequent heuristic evaluation with

What carries the argument

The StartFlow method, whose three steps organize feature lists, construct combined wireframe-and-flow diagrams, and apply usability heuristics for refinement.
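One way to picture what the three steps operate on is a small data model. The sketch below is an illustration only, not the paper's notation: the names `Screen`, `Wireflow`, `uncovered_stories`, and `dead_ends` are hypothetical, and the two checks are simple stand-ins for story adherence and for one navigation-related defect, not the paper's usability heuristics.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set, Tuple


@dataclass
class Screen:
    """A wireframe: a named screen with its visual components."""
    name: str
    components: List[str]
    stories: Set[str] = field(default_factory=set)  # user-story IDs served (assumed labels)


@dataclass
class Wireflow:
    """Wireframes plus the user flow connecting them."""
    screens: Dict[str, Screen] = field(default_factory=dict)
    transitions: List[Tuple[str, str, str]] = field(default_factory=list)  # (source, action, target)

    def add_screen(self, screen: Screen) -> None:
        self.screens[screen.name] = screen

    def connect(self, source: str, action: str, target: str) -> None:
        # Only allow flows between screens that already exist.
        if source not in self.screens or target not in self.screens:
            raise KeyError("both screens must be added before connecting them")
        self.transitions.append((source, action, target))

    def uncovered_stories(self, stories: Iterable[str]) -> List[str]:
        """User stories that no screen claims to serve."""
        covered: Set[str] = set()
        for screen in self.screens.values():
            covered |= screen.stories
        return sorted(set(stories) - covered)

    def dead_ends(self, terminal: Iterable[str] = ()) -> List[str]:
        """Screens with no outgoing transition that are not declared terminal."""
        with_exit = {source for source, _, _ in self.transitions}
        allowed = set(terminal)
        return sorted(n for n in self.screens if n not in with_exit and n not in allowed)
```

Under these assumptions, step one fills the story list, step two adds screens and transitions, and step three reruns the checks after each refinement.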

If this is right

- Prototypes created with StartFlow adhere more closely to the intended user stories and business rules.

- Early-stage MVPs exhibit fewer usability defects when the three-step process is followed.

- Non-specialist teams rate the method as easy to use and report plans for future adoption.

- Wireflow construction helps teams structure user flows and identify needed visual components more systematically.

Where Pith is reading between the lines

- Widespread use could lower the barrier to user-centered design for resource-constrained startup teams that cannot hire UX specialists early on.

- The method might integrate with common agile or lean startup practices to shorten the time from idea to testable prototype.

- A natural next test would apply StartFlow in live startup settings with teams building actual products rather than controlled exercises.

Load-bearing premise

That benefits observed in researcher focus groups and a small expert proof-of-concept will transfer to non-specialist startup teams working under actual time pressure and resource limits.

What would settle it

A field study in real software startups that randomly assigns teams to use or not use StartFlow and then measures prototype clarity, adherence to user stories, and number of usability defects found by independent experts.
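A minimal sketch of how such a study could be analysed, assuming each team yields one usability-defect count from independent experts. The function names, the seed handling, and the choice of a one-sided permutation test are assumptions made for illustration; the paper does not prescribe an analysis.

```python
import random
from statistics import mean


def assign_teams(teams, seed):
    """Randomly split teams into (startflow_group, control_group)."""
    rng = random.Random(seed)
    shuffled = list(teams)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def permutation_p_value(treated, control, n_perm=10_000, seed=0):
    """One-sided permutation test for 'treated teams have fewer defects'.

    Returns the share of random relabelings whose mean difference
    (control minus treated) is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = mean(control) - mean(treated)
    pooled = list(treated) + list(control)
    k = len(treated)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        diff = mean(pooled[k:]) - mean(pooled[:k])
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_perm + 1)
```

A permutation test is used here because defect counts from a handful of teams are unlikely to meet normality assumptions; any defect counts passed to it would have to come from the field study itself.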

Original abstract

Context. Software startups face significant challenges in building minimum viable products, particularly in the early stages, when resources are limited and expertise in user experience is scarce. Objective. Introduce StartFlow, a structured method that helps non-specialized professionals create MVP prototypes using the wireflow technique, a combination of wireframes and user flows. StartFlow consists of three steps: (i) organizing features; (ii) building wireflows; and (iii) verifying and refining them based on usability heuristics. Method. To assess StartFlow, we first conducted a focus group with researchers in Software Engineering, Human-Computer Interaction, and Software Startups. Afterward, we conducted a proof-of-concept study, which consisted of an experiment and a heuristic evaluation with experts. Results. The qualitative analysis of the focus group revealed that participants found the method straightforward, flexible, and helpful in structuring user flows and identifying visual components. However, they also pointed out the need to improve its presentation, clarify its iterative nature, and strengthen its connection to broader UX principles. The results of the proof-of-concept indicate that participants who used StartFlow created clearer prototypes, adhered to the proposed user stories and business rules, and presented fewer usability defects. Furthermore, the method was well evaluated for its ease of use and intended future adoption. Conclusion. The study reinforces the potential of StartFlow as an accessible tool to support user-centered development in software startups from the earliest stages of their product development.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces StartFlow, a three-step method (organizing features, building wireflows, verifying/refining via usability heuristics) for non-specialized professionals in software startups to create MVP prototypes via the wireflow technique. It reports a focus group with researchers in SE/HCI/startups that found the method straightforward and helpful for structuring flows, followed by a proof-of-concept experiment and heuristic evaluation with experts indicating that StartFlow users produced clearer prototypes with better adherence to user stories/business rules and fewer usability defects, plus positive ease-of-use and adoption ratings.

Significance. If the benefits hold for the intended users, StartFlow would provide a lightweight, accessible bridge between business requirements and UX practice for resource-limited startups, addressing a documented gap in early MVP prototyping. The multi-perspective evaluation (qualitative focus group plus experiment and heuristic assessment) is a methodological strength that increases credibility relative to single-method studies.

major comments (2)

- [Abstract (Objective and Results) and the proof-of-concept study description] The central claim that StartFlow helps non-specialized startup professionals rests on evaluation data collected exclusively from researchers (focus group) and experts (proof-of-concept experiment and heuristic evaluation). No participants from the target population of non-specialist startup teams working under time pressure are included, so the reported improvements in prototype clarity, adherence, and defect reduction cannot be assumed to transfer.

- [Conclusion] The Conclusion asserts that the study 'reinforces the potential of StartFlow as an accessible tool to support user-centered development in software startups,' yet the evidence base does not include any direct observation or pilot with actual startup teams; this overgeneralization is load-bearing for the paper's contribution claim.

minor comments (2)

- [Abstract] The abstract omits sample sizes, recruitment criteria, and details on how usability defects were counted or how adherence was scored, reducing the reader's ability to gauge evidence strength.

- [Method] Clarify in the Method section whether the heuristic evaluation used a standard set (e.g., Nielsen) and how inter-rater agreement was handled.
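For the inter-rater question in the last comment, one common statistic is Cohen's kappa, which corrects observed agreement between two raters for agreement expected by chance. The sketch below is an illustration under the assumption that two experts each label the same list of candidate defects; the paper does not state which agreement measure, if any, was used.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty item list")
    n = len(rater_a)
    # Observed agreement: share of items with identical labels.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's label frequencies, summed over labels.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    labels = freq_a.keys() | freq_b.keys()
    p_expected = sum(freq_a[label] * freq_b[label] for label in labels) / n ** 2
    if p_expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)
```

With more than two evaluators, a multi-rater statistic such as Fleiss' kappa would be the analogous choice.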

Simulated Author's Rebuttal

We thank the referee for the thoughtful and detailed feedback. We agree that the current evaluation does not include direct participation from non-specialist startup teams and will revise the manuscript to more accurately scope our claims while preserving the value of the reported researcher and expert evaluations.

- Referee: [Abstract (Objective and Results) and the proof-of-concept study description] The central claim that StartFlow helps non-specialized startup professionals rests on evaluation data collected exclusively from researchers (focus group) and experts (proof-of-concept experiment and heuristic evaluation). No participants from the target population of non-specialist startup teams working under time pressure are included, so the reported improvements in prototype clarity, adherence, and defect reduction cannot be assumed to transfer.

  Authors: We accept this observation. The focus group was conducted with researchers in Software Engineering, HCI, and Software Startups to gather structured feedback on the method's design. The subsequent proof-of-concept experiment and heuristic evaluation used experts to compare prototypes produced with and without StartFlow, showing measurable differences in clarity, adherence to user stories, and defect counts. These results provide initial evidence of the method's utility from expert and researcher viewpoints. We will revise the abstract and results sections to explicitly identify the participant groups, qualify the findings as preliminary, and note that transfer to time-pressured startup teams remains to be tested.

  Revision: partial

- Referee: [Conclusion] The Conclusion asserts that the study 'reinforces the potential of StartFlow as an accessible tool to support user-centered development in software startups,' yet the evidence base does not include any direct observation or pilot with actual startup teams; this overgeneralization is load-bearing for the paper's contribution claim.

  Authors: We will revise the conclusion to state that the study, drawing on a researcher focus group and expert-based proof-of-concept evaluations, indicates the potential of StartFlow as an accessible tool. We will add an explicit acknowledgment that direct validation with non-specialist startup teams under realistic constraints is required before stronger claims can be made. This change removes the overgeneralization while retaining the contribution of the method and the multi-perspective evaluation approach.

  Revision: yes

Circularity Check

No circularity: method introduced and evaluated via independent empirical steps

The paper defines StartFlow as a three-step method (organizing features, building wireflows, verifying via heuristics) and assesses it through a distinct focus group with researchers followed by a separate proof-of-concept experiment and heuristic evaluation with experts. No mathematical derivations, parameter fittings, self-citations as load-bearing premises, or reductions of results to the method's own inputs occur. Claims about clearer prototypes and fewer defects rest on external participant data rather than by-construction equivalence to the method definition.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: Standard usability heuristics can be applied by non-experts to identify defects in early wireflow prototypes

invented entities (1)

- StartFlow method: no independent evidence

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Passage: "StartFlow consists of three steps: (i) organizing features; (ii) building wireflows; and (iii) verifying and refining them based on usability heuristics."

- IndisputableMonolith/Foundation/RealityFromDistinction.lean · reality_from_one_distinction · unclear

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Passage: "The results of the proof-of-concept indicate that participants who used StartFlow created clearer prototypes, adhered to the proposed user stories and business rules, and presented fewer usability defects."

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

