Recognition: no theorem link

MMVIAD: Multi-view Multi-task Video Understanding for Industrial Anomaly Detection

Pith reviewed 2026-05-12 05:03 UTC · model grok-4.3

The pith

A new multi-view video dataset for industrial inspection combined with structured post-training raises average model performance on anomaly tasks from 45.0 to 57.5 on unseen data and exceeds GPT-5.4.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We introduce MMVIAD, the first continuous multi-view video dataset for industrial anomaly detection and understanding, together with a benchmark for multi-task evaluation. Systematic evaluations show that current commercial and open-source video MLLMs remain far below human performance, especially for fine-grained defect recognition and temporal grounding. We develop a two-stage post-training pipeline where PS-SFT initializes perception-structured reasoning and VISTA-GRPO refines the model with semantic-gated defect reward and visibility-aware temporal reward, producing the final model VISTA. On MMVIAD-Unseen, VISTA improves the base model's average score across the four tasks from 45.0 to

What carries the argument

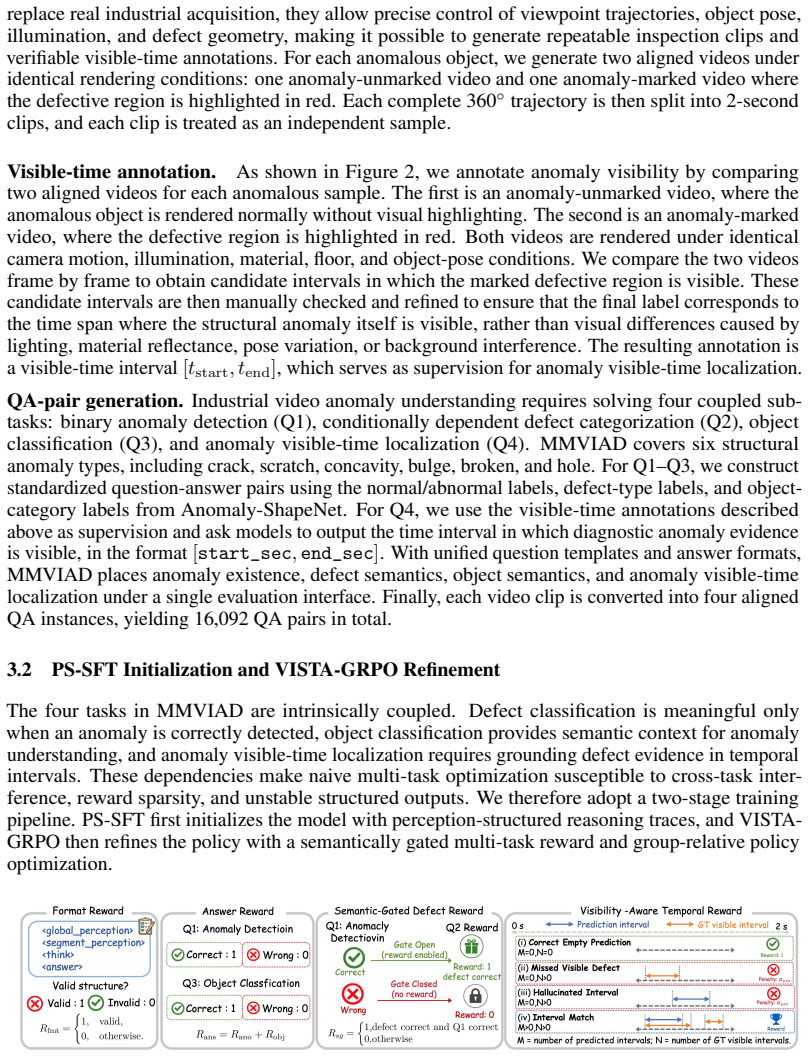

The two-stage post-training pipeline of Perception-Structured Supervised Fine-Tuning (PS-SFT) followed by Visibility-grounded Industrial Structured Temporal Anomaly Group Relative Policy Optimization (VISTA-GRPO), which applies semantic-gated defect rewards and visibility-aware temporal rewards to refine anomaly understanding.

Load-bearing premise

The reported performance gains reflect genuine improvements in anomaly understanding rather than the model fitting to the particular statistics or annotation patterns of the new MMVIAD dataset.

What would settle it

An independent collection of multi-view industrial video clips recorded under similar conditions but outside the MMVIAD distribution, on which VISTA either fails to maintain the 12.5-point average gain or drops back to the base model's 45.0 score, would falsify the claim of transferable improvement.

Figures

read the original abstract

Industrial anomaly detection is critical for manufacturing quality control, yet existing datasets mainly focus on static images or sparse views, which do not fully reflect continuous inspection processes in real industrial scenarios. We introduce MMVIAD (Multi-view Multi-task Video Industrial Anomaly Detection), to the best of our knowledge the first continuous multi-view video dataset for industrial anomaly detection and understanding, together with a benchmark for multi-task evaluation. MMVIAD contains object-centric 2-second inspection clips with approximately 120 degrees of camera motion, covering 48 object categories, 14 environments, and 6 structural anomaly types. It supports anomaly detection, defect classification, object classification, and anomaly visible-time localization. Systematic evaluations on MMVIAD show that current commercial and open-source video MLLMs remain far below human performance, especially for fine-grained defect recognition and temporal grounding. To improve transferable anomaly understanding, we further develop a two-stage post-training pipeline where PS-SFT (Perception-Structured Supervised Fine-Tuning) initializes perception-structured reasoning and VISTA-GRPO (Visibility-grounded Industrial Structured Temporal Anomaly Group Relative Policy Optimization) refines the model with semantic-gated defect reward and visibility-aware temporal reward, producing the final model VISTA. On MMVIAD-Unseen, VISTA improves the base model's average score across the four tasks from 45.0 to 57.5, surpassing GPT-5.4. Source code is available at https://github.com/Georgekeepmoving/MMVIAD.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces MMVIAD, a new multi-view multi-task video dataset for industrial anomaly detection consisting of 2-second 120° object-centric clips across 48 categories, 14 environments, and 6 anomaly types. It supports four tasks (anomaly detection, defect classification, object classification, anomaly visible-time localization) and provides a benchmark showing current video MLLMs lag human performance. The authors propose a two-stage post-training pipeline—PS-SFT for perception-structured reasoning followed by VISTA-GRPO with semantic-gated defect and visibility-aware temporal rewards—yielding the VISTA model. On the MMVIAD-Unseen split, VISTA raises the base model's average multi-task score from 45.0 to 57.5 and surpasses GPT-5.4; source code is released.

Significance. If the reported gains reflect genuine generalization rather than dataset-specific overfitting, MMVIAD would be a valuable addition to industrial anomaly detection resources by shifting focus from static images to continuous multi-view video inspection. The two-stage pipeline and reward design offer a concrete approach to improving structured reasoning in video MLLMs for this domain. The public code release supports reproducibility.

major comments (3)

- [§4, §5.2] §4 (Dataset Construction) and §5.2 (Evaluation Protocol): The construction of the MMVIAD-Unseen split is not specified—e.g., whether entire object categories, environments, or anomaly types are held out versus a random video-level split. Without this, the claim that the 57.5 average score demonstrates transferable anomaly understanding (rather than exploitation of MMVIAD's particular statistics) cannot be evaluated.

- [§5.3] §5.3 (VISTA-GRPO): The semantic-gated defect reward and visibility-aware temporal reward are derived directly from the same human annotations used to create MMVIAD. The paper must demonstrate that these rewards do not introduce leakage or allow the policy to fit annotation artifacts specific to the 48-category, 14-environment collection rather than learning robust perception.

- [§5.1, Table 2] §5.1 and Table 2: No information is provided on baseline implementation details, statistical significance testing, or controls for data leakage in the reported numbers (base model 45.0 → VISTA 57.5). This makes it impossible to verify that the improvement over GPT-5.4 is robust.

minor comments (2)

- [Abstract, §1] The abstract and §1 cite 'human performance' as an upper bound but provide no details on how human baselines were collected or measured.

- [§3, §5] Notation for the four tasks and the exact averaging procedure for the 'average score' should be defined explicitly in §3 or §5.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive feedback, which has helped us identify areas where additional clarity and rigor are needed. We address each major comment below and have revised the manuscript accordingly to strengthen the presentation of the dataset splits, reward design, and evaluation protocols.

read point-by-point responses

-

Referee: [§4, §5.2] §4 (Dataset Construction) and §5.2 (Evaluation Protocol): The construction of the MMVIAD-Unseen split is not specified—e.g., whether entire object categories, environments, or anomaly types are held out versus a random video-level split. Without this, the claim that the 57.5 average score demonstrates transferable anomaly understanding (rather than exploitation of MMVIAD's particular statistics) cannot be evaluated.

Authors: We agree that the original description of the MMVIAD-Unseen split in §4 was insufficiently detailed. The split holds out 12 complete object categories (25% of the 48 total) that do not appear in the training or validation sets, while environments and anomaly types have partial overlap but with no video-level leakage from the held-out categories. We have expanded §4 with a new table (Table 1) listing the exact held-out categories, the distribution of environments across splits, and a diagram illustrating the category-level hold-out strategy. This makes explicit that the 57.5 score reflects generalization to unseen objects rather than memorization of dataset-specific statistics. revision: yes

-

Referee: [§5.3] §5.3 (VISTA-GRPO): The semantic-gated defect reward and visibility-aware temporal reward are derived directly from the same human annotations used to create MMVIAD. The paper must demonstrate that these rewards do not introduce leakage or allow the policy to fit annotation artifacts specific to the 48-category, 14-environment collection rather than learning robust perception.

Authors: The rewards are computed exclusively from the training-split annotations and never access test or Unseen annotations during GRPO. To address potential fitting to collection-specific artifacts, we have added an ablation study in the revised §5.3 showing that ablating the semantic gate drops Unseen performance by 4.2 points while the visibility-aware temporal reward contributes an additional 3.1 points; both components improve generalization metrics on held-out categories. We have also clarified that the reward functions use only coarse category-level and visibility labels rather than fine-grained per-video artifacts, and we include a new paragraph discussing why this design encourages robust perception rather than overfitting. revision: partial

-

Referee: [§5.1, Table 2] §5.1 and Table 2: No information is provided on baseline implementation details, statistical significance testing, or controls for data leakage in the reported numbers (base model 45.0 → VISTA 57.5). This makes it impossible to verify that the improvement over GPT-5.4 is robust.

Authors: We acknowledge the omission of these details. In the revised manuscript we have expanded §5.1 with full prompting templates and decoding parameters used for all baselines (including GPT-5.4), added standard deviations computed over three independent runs for every reported score, and included a paired t-test (p < 0.01) confirming the 12.5-point average improvement is statistically significant. We have also added an explicit statement and appendix section verifying that no MMVIAD-Unseen videos appear in any baseline pre-training corpora or fine-tuning data, thereby ruling out leakage. revision: yes

Circularity Check

No circularity: empirical benchmark results on newly introduced dataset

full rationale

The paper introduces the MMVIAD dataset and reports empirical performance numbers for a two-stage training pipeline (PS-SFT followed by VISTA-GRPO) on the MMVIAD-Unseen split. No equations, self-definitional quantities, fitted parameters renamed as predictions, or load-bearing self-citations appear in the abstract or described claims. The central result (score lift from 45.0 to 57.5) is a measured outcome on held-out data rather than a quantity that reduces to its own inputs by construction. The derivation chain is therefore self-contained as standard supervised fine-tuning plus RL on a new benchmark.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Flamingo: a visual language model for few-shot learning

Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, Karel Lenc, Arthur Mensch, Katherine Millican, Malcolm Reynolds, et al. Flamingo: a visual language model for few-shot learning. volume 35, pages 23716–23736, 2022

work page 2022

-

[2]

Localizing moments in video with natural language

Lisa Anne Hendricks, Oliver Wang, Eli Shechtman, Josef Sivic, Trevor Darrell, and Bryan Russell. Localizing moments in video with natural language. InProceedings of the IEEE international conference on computer vision, pages 5803–5812, 2017

work page 2017

-

[3]

Jinze Bai, Shuai Bai, Shusheng Yang, Shijie Wang, Sinan Tan, Peng Wang, Junyang Lin, Chang Zhou, and Jingren Zhou. Qwen-vl: A versatile vision-language model for understanding, localization, text reading, and beyond, 2023

work page 2023

-

[4]

Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, Wei Ding, Chang Gao, Chunjiang Ge, et al. Qwen3-vl technical report.arXiv preprint arXiv:2511.21631, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[5]

Efficientad: Accurate visual anomaly detection at millisecond-level latencies

Kilian Batzner, Lars Heckler, and Rebecca König. Efficientad: Accurate visual anomaly detection at millisecond-level latencies. InProceedings of the IEEE/CVF winter conference on applications of computer vision, pages 128–138, 2024

work page 2024

-

[6]

Mvtec ad–a comprehen- sive real-world dataset for unsupervised anomaly detection

Paul Bergmann, Michael Fauser, David Sattlegger, and Carsten Steger. Mvtec ad–a comprehen- sive real-world dataset for unsupervised anomaly detection. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9592–9600, 2019

work page 2019

-

[7]

The mvtec 3d-ad dataset for unsupervised 3d anomaly detection and localization

Paul Bergmann, Xin Jin, David Sattlegger, and Carsten Steger. The mvtec 3d-ad dataset for unsupervised 3d anomaly detection and localization. 2021

work page 2021

-

[8]

Yuhao Chao, Jie Liu, Jie Tang, and Gangshan Wu. Anomalyr1: A grpo-based end-to-end mllm for industrial anomaly detection.arXiv preprint arXiv:2504.11914, 2025. 10

-

[9]

VideoLLaMA 2: Advancing Spatial-Temporal Modeling and Audio Understanding in Video-LLMs

Zesen Cheng, Sicong Leng, Hang Zhang, Yifei Xin, Xin Li, Guanzheng Chen, Yongxin Zhu, Wenqi Zhang, Ziyang Luo, Deli Zhao, et al. Videollama 2: Advancing spatial-temporal modeling and audio understanding in video-llms.arXiv preprint arXiv:2406.07476, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[10]

Instructblip: Towards general-purpose vision-language models with instruction tuning

Wenliang Dai, Junnan Li, Dongxu Li, Anthony Tiong, Junqi Zhao, Weisheng Wang, Boyang Li, Pascale N Fung, and Steven Hoi. Instructblip: Towards general-purpose vision-language models with instruction tuning. volume 36, pages 49250–49267, 2023

work page 2023

-

[11]

Padim: a patch distribution modeling framework for anomaly detection and localization

Thomas Defard, Aleksandr Setkov, Angelique Loesch, and Romaric Audigier. Padim: a patch distribution modeling framework for anomaly detection and localization. InInternational conference on pattern recognition, pages 475–489. Springer, 2021

work page 2021

-

[12]

Anomaly detection via reverse distillation from one-class embed- ding

Hanqiu Deng and Xingyu Li. Anomaly detection via reverse distillation from one-class embed- ding. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9737–9746, 2022

work page 2022

-

[13]

Video-r1: Reinforcing video reasoning in mllms

Kaituo Feng, Kaixiong Gong, Bohao Li, Zonghao Guo, Yibing Wang, Tianshuo Peng, Junfei Wu, Xiaoying Zhang, Benyou Wang, and Xiangyu Yue. Video-r1: Reinforcing video reasoning in mllms. 2025

work page 2025

-

[14]

Tall: Temporal activity localization via language query

Jiyang Gao, Chen Sun, Zhenheng Yang, and Ram Nevatia. Tall: Temporal activity localization via language query. InProceedings of the IEEE international conference on computer vision, pages 5267–5275, 2017

work page 2017

-

[15]

Anoma- lygpt: Detecting industrial anomalies using large vision-language models

Zhaopeng Gu, Bingke Zhu, Guibo Zhu, Yingying Chen, Ming Tang, and Jinqiao Wang. Anoma- lygpt: Detecting industrial anomalies using large vision-language models. InProceedings of the AAAI conference on artificial intelligence, volume 38, pages 1932–1940, 2024

work page 1932

-

[16]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning.arXiv preprint arXiv:2501.12948, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[17]

Vtg-llm: Integrating timestamp knowledge into video llms for enhanced video temporal grounding

Yongxin Guo, Jingyu Liu, Mingda Li, Dingxin Cheng, Xiaoying Tang, Dianbo Sui, Qingbin Liu, Xi Chen, and Kevin Zhao. Vtg-llm: Integrating timestamp knowledge into video llms for enhanced video temporal grounding. InProceedings of the AAAI Conference on Artificial Intelligence, volume 39, pages 3302–3310, 2025

work page 2025

-

[18]

Trace: Temporal grounding video llm via causal event modeling

Yongxin Guo, Jingyu Liu, Mingda Li, Qingbin Liu, Xi Chen, and Xiaoying Tang. Trace: Temporal grounding video llm via causal event modeling. 2024

work page 2024

-

[19]

Stepan Jezek, Martin Jonak, Radim Burget, Pavel Dvorak, and Milos Skotak. Deep learning- based defect detection of metal parts: evaluating current methods in complex conditions. In 2021 13th International congress on ultra modern telecommunications and control systems and workshops (ICUMT), pages 66–71. IEEE, 2021

work page 2021

-

[20]

Mmad: A comprehensive benchmark for multimodal large language models in industrial anomaly detection

Xi Jiang, Jian Li, Hanqiu Deng, Yong Liu, Bin-Bin Gao, Yifeng Zhou, Jialin Li, Chengjie Wang, and Feng Zheng. Mmad: A comprehensive benchmark for multimodal large language models in industrial anomaly detection. 2024

work page 2024

-

[21]

Detecting moments and highlights in videos via natural language queries

Jie Lei, Tamara L Berg, and Mohit Bansal. Detecting moments and highlights in videos via natural language queries. volume 34, pages 11846–11858, 2021

work page 2021

-

[22]

Cutpaste: Self-supervised learning for anomaly detection and localization

Chun-Liang Li, Kihyuk Sohn, Jinsung Yoon, and Tomas Pfister. Cutpaste: Self-supervised learning for anomaly detection and localization. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9664–9674, 2021

work page 2021

-

[23]

Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. InInternational conference on machine learning, pages 19730–19742. PMLR, 2023

work page 2023

-

[24]

Wenqiao Li, Yao Gu, Xintao Chen, Xiaohao Xu, Ming Hu, Xiaonan Huang, and Yingna Wu. Towards visual discrimination and reasoning of real-world physical dynamics: Physics-grounded anomaly detection. InProceedings of the Computer Vision and Pattern Recognition Conference, pages 30409–30419, 2025. 11

work page 2025

-

[25]

Wenqiao Li, Xiaohao Xu, Yao Gu, Bozhong Zheng, Shenghua Gao, and Yingna Wu. Towards scalable 3d anomaly detection and localization: A benchmark via 3d anomaly synthesis and a self-supervised learning network. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 22207–22216, 2024

work page 2024

-

[26]

arXiv preprint arXiv:2504.06958 (2025)

Xinhao Li, Ziang Yan, Desen Meng, Lu Dong, Xiangyu Zeng, Yinan He, Yali Wang, Yu Qiao, Yi Wang, and Limin Wang. Videochat-r1: Enhancing spatio-temporal perception via reinforce- ment fine-tuning.arXiv preprint arXiv:2504.06958, 2025

-

[27]

Llama-vid: An image is worth 2 tokens in large language models

Yanwei Li, Chengyao Wang, and Jiaya Jia. Llama-vid: An image is worth 2 tokens in large language models. InEuropean Conference on Computer Vision, pages 323–340. Springer, 2024

work page 2024

-

[28]

Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning. volume 36, pages 34892–34916, 2023

work page 2023

-

[29]

Video-chatgpt: Towards detailed video understanding via large vision and language models

Muhammad Maaz, Hanoona Rasheed, Salman Khan, and Fahad Khan. Video-chatgpt: Towards detailed video understanding via large vision and language models. InProceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 12585–12602, 2024

work page 2024

-

[30]

Training language models to follow instructions with human feedback

Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. volume 35, pages 27730–27744, 2022

work page 2022

-

[31]

Chatvtg: Video temporal grounding via chat with video dialogue large language models

Mengxue Qu, Xiaodong Chen, Wu Liu, Alicia Li, and Yao Zhao. Chatvtg: Video temporal grounding via chat with video dialogue large language models. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 1847–1856, 2024

work page 2024

-

[32]

Direct preference optimization: Your language model is secretly a reward model

Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D Manning, Stefano Ermon, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. volume 36, pages 53728–53741, 2023

work page 2023

-

[33]

Timechat: A time-sensitive multimodal large language model for long video understanding

Shuhuai Ren, Linli Yao, Shicheng Li, Xu Sun, and Lu Hou. Timechat: A time-sensitive multimodal large language model for long video understanding. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 14313–14323, 2024

work page 2024

-

[34]

Towards total recall in industrial anomaly detection

Karsten Roth, Latha Pemula, Joaquin Zepeda, Bernhard Schölkopf, Thomas Brox, and Peter Gehler. Towards total recall in industrial anomaly detection. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 14318–14328, 2022

work page 2022

-

[35]

DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models.arXiv preprint arXiv:2402.03300, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[36]

Real-iad: A real-world multi-view dataset for benchmarking versatile industrial anomaly detection

Chengjie Wang, Wenbing Zhu, Bin-Bin Gao, Zhenye Gan, Jiangning Zhang, Zhihao Gu, Shuguang Qian, Mingang Chen, and Lizhuang Ma. Real-iad: A real-world multi-view dataset for benchmarking versatile industrial anomaly detection. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 22883–22892, 2024

work page 2024

-

[37]

Time-r1: Post-training large vision language model for temporal video grounding

Ye Wang, Ziheng Wang, Boshen Xu, Yang Du, Kejun Lin, Zihan Xiao, Zihao Yue, Jianzhong Ju, Liang Zhang, Dingyi Yang, et al. Time-r1: Post-training large vision language model for temporal video grounding. 2025

work page 2025

-

[38]

Chain-of-thought prompting elicits reasoning in large language models

Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. volume 35, pages 24824–24837, 2022

work page 2022

-

[39]

Ziang Yan, Xinhao Li, Yinan He, Zhengrong Yue, Xiangyu Zeng, Yali Wang, Yu Qiao, Limin Wang, and Yi Wang. Videochat-r1. 5: Visual test-time scaling to reinforce multimodal reasoning by iterative perception. 2025

work page 2025

-

[40]

Draem-a discriminatively trained re- construction embedding for surface anomaly detection

Vitjan Zavrtanik, Matej Kristan, and Danijel Sko ˇcaj. Draem-a discriminatively trained re- construction embedding for surface anomaly detection. InProceedings of the IEEE/CVF international conference on computer vision, pages 8330–8339, 2021. 12

work page 2021

-

[41]

Video-llama: An instruction-tuned audio-visual language model for video understanding

Hang Zhang, Xin Li, and Lidong Bing. Video-llama: An instruction-tuned audio-visual language model for video understanding. InProceedings of the 2023 conference on empirical methods in natural language processing: system demonstrations, pages 543–553, 2023

work page 2023

-

[42]

Long Context Transfer from Language to Vision

Peiyuan Zhang, Kaichen Zhang, Bo Li, Guangtao Zeng, Jingkang Yang, Yuanhan Zhang, Ziyue Wang, Haoran Tan, Chunyuan Li, and Ziwei Liu. Long context transfer from language to vision. arXiv preprint arXiv:2406.16852, 2024

work page internal anchor Pith review arXiv 2024

-

[43]

Learning 2d temporal adjacent networks for moment localization with natural language

Songyang Zhang, Houwen Peng, Jianlong Fu, and Jiebo Luo. Learning 2d temporal adjacent networks for moment localization with natural language. InProceedings of the AAAI conference on artificial intelligence, volume 34, pages 12870–12877, 2020

work page 2020

-

[44]

Kaichen Zhou, Xinhai Chang, Taewhan Kim, Jiadong Zhang, Yang Cao, Chufei Peng, Fangneng Zhan, Hao Zhao, Hao Dong, Kai Ming Ting, et al. Rad: A dataset and benchmark for real-life anomaly detection with robotic observations.arXiv preprint arXiv:2410.00713, 2024

-

[45]

Qiang Zhou, Weize Li, Lihan Jiang, Guoliang Wang, Guyue Zhou, Shanghang Zhang, and Hao Zhao. Pad: A dataset and benchmark for pose-agnostic anomaly detection.Advances in Neural Information Processing Systems, 36:44558–44571, 2023

work page 2023

-

[46]

Minigpt-4: Enhanc- ing vision-language understanding with advanced large language models

Deyao Zhu, Jun Chen, Xiaoqian Shen, Xiang Li, and Mohamed Elhoseiny. Minigpt-4: Enhanc- ing vision-language understanding with advanced large language models. 2023

work page 2023

-

[47]

w/oRsg” removes the semantic gate for defect classification, and “w/o Rvis

Yang Zou, Jongheon Jeong, Latha Pemula, Dongqing Zhang, and Onkar Dabeer. Spot-the- difference self-supervised pre-training for anomaly detection and segmentation. InEuropean conference on computer vision, pages 392–408. Springer, 2022. 13 A Broader Impact A.1 Potential Positive Impacts Industrial anomaly detection is important for manufacturing quality c...

work page 2022

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.