Recognition: 2 theorem links

· Lean TheoremLatentHDR: Decoupling Exposure from Diffusion via Conditional Latent-to-Latent Mapping for Text/Image-to-Panoramic HDR

Pith reviewed 2026-05-13 07:14 UTC · model grok-4.3

The pith

LatentHDR decouples scene generation from exposure modeling in latent space to produce structurally consistent HDR panoramas in one pass.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

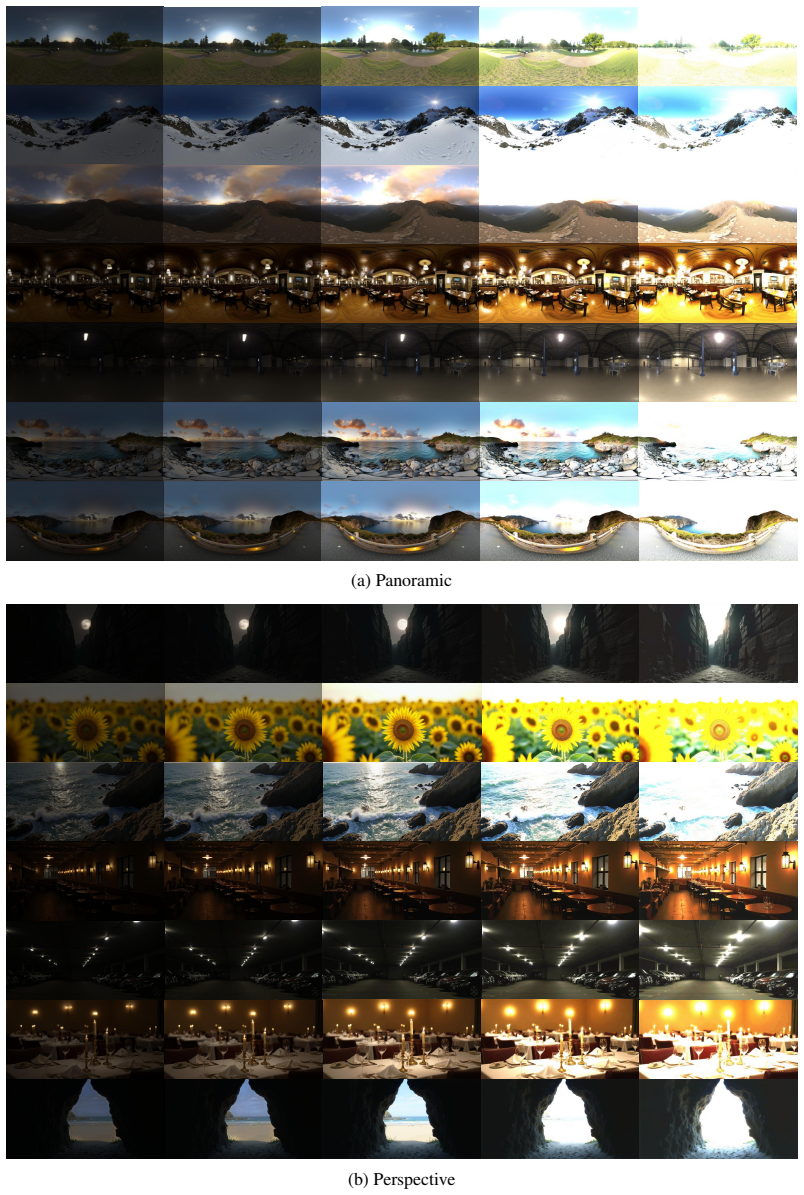

LatentHDR achieves high-dynamic-range generation by first using a pretrained diffusion backbone to produce a single coherent scene representation in latent space, then applying a lightweight conditional latent-to-latent head that deterministically maps this representation to a stack of exposure-specific latents, enabling the full exposure stack to be synthesized in a single forward pass while preserving structural consistency across exposures for both perspective and panoramic outputs.

What carries the argument

conditional latent-to-latent head: a lightweight network that takes one coherent scene latent and maps it deterministically to multiple exposure-specific latents while preserving structural alignment.

If this is right

- A full dense exposure stack can be generated in a single diffusion pass instead of repeated stochastic runs.

- Cross-exposure structural alignment is enforced by construction rather than post-processing.

- Both text-conditioned and image-conditioned panoramic HDR synthesis become feasible at lower cost.

- Dynamic range can reach state-of-the-art levels while perceptual quality remains competitive.

- The separation of scene content from exposure modeling removes the need for multi-exposure stochastic sampling.

Where Pith is reading between the lines

- The same latent-to-latent mapping pattern could extend to other scene attributes such as lighting direction or time-of-day without retraining the full diffusion backbone.

- If the deterministic mapping generalizes beyond the SI-HDR benchmark, the method might reduce artifacts in merged HDR outputs on real-world captured sequences.

- Interactive control over exposure distribution could be added by conditioning the head on user-specified exposure values rather than fixed stacks.

- The approach might lower the barrier to training HDR models on limited hardware by avoiding repeated full diffusion passes during data synthesis.

Load-bearing premise

A lightweight conditional latent-to-latent head can deterministically map a single coherent scene representation to multiple exposure-specific representations while preserving structural consistency across the exposure stack.

What would settle it

Visible structural misalignment between the generated exposure levels or failure to reduce computation by roughly an order of magnitude compared with multi-pass diffusion would falsify the central claim.

Figures

read the original abstract

High Dynamic Range (HDR) generation remains challenging for generative models, which are largely limited to low dynamic range outputs. Recent diffusionbased approaches approximate HDR by generating multiple exposure-conditioned samples, incurring high computational cost and structural inconsistencies across exposures. We propose LatentHDR, a framework that decouples scene generation from exposure modeling in latent space. A pretrained diffusion backbone produces a single coherent scene representation, while a lightweight conditional latent to-latent head deterministically maps it to exposure-specific representations. This enables the generation of a dense, structurally consistent exposure stack in a single pass. This design eliminates multi-pass diffusion, ensures cross-exposure alignment, and enables scalable HDR synthesis. LatentHDR supports both textand image-conditioned HDR generation for perspective and panoramic scenes. Experiments on synthetic data and the SI-HDR benchmark show that LatentHDR achieves state-of-the-art dynamic range with competitive perceptual quality, while reducing computation by an order of magnitude. Our results demonstrate that high-quality HDR generation can be achieved through structured latent modeling, challenging the need for stochastic multi-exposure generation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

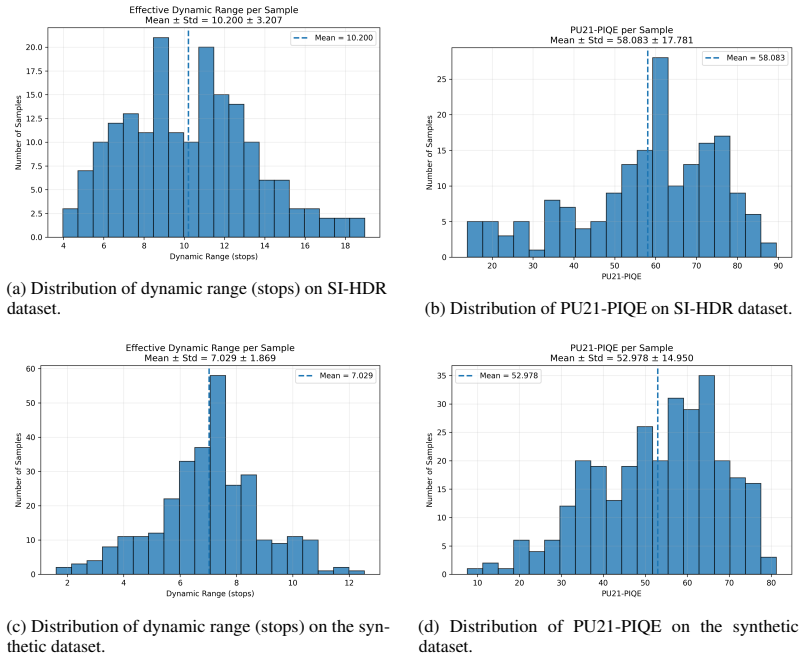

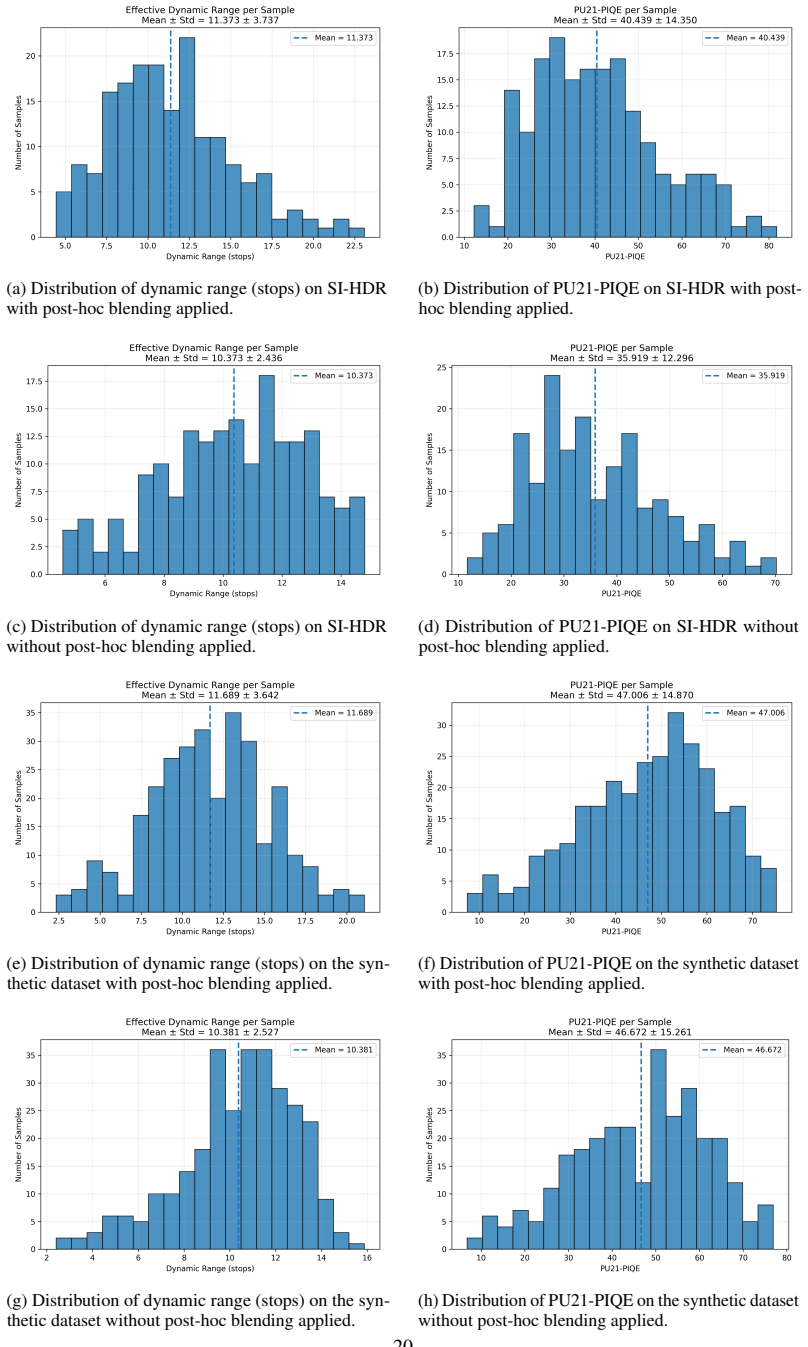

Summary. The manuscript introduces LatentHDR, a framework for text- and image-conditioned panoramic HDR generation. It decouples scene generation from exposure modeling by using a pretrained diffusion backbone to produce a single coherent latent scene representation, which a lightweight conditional latent-to-latent head then maps deterministically to multiple exposure-specific latents. This enables generation of a dense, structurally consistent exposure stack in a single forward pass rather than multi-pass diffusion sampling. The authors claim that experiments on synthetic data and the SI-HDR benchmark demonstrate state-of-the-art dynamic range, competitive perceptual quality, and an order-of-magnitude reduction in computation.

Significance. If the central claims hold, the decoupling of exposure from the diffusion process in latent space could meaningfully advance efficient HDR synthesis for panoramic scenes. By avoiding stochastic multi-exposure sampling while preserving cross-exposure alignment, the approach may enable more scalable applications in VR, computational photography, and vision tasks. The design directly challenges the need for repeated conditioned diffusion passes and, if validated with quantitative evidence, would constitute a practical contribution to latent-space HDR modeling.

major comments (3)

- [Abstract] Abstract: The central performance claims of 'state-of-the-art dynamic range' and 'order of magnitude' speedup are asserted without any numerical metrics, baseline comparisons, error bars, or ablation results. This absence makes it impossible to assess whether the reported gains are load-bearing or merely qualitative, directly weakening evaluation of the SOTA assertion.

- [§3] §3 (Method, latent-to-latent head description): The load-bearing assumption that a single latent produced by an LDR-trained diffusion backbone contains sufficient radiance information for a lightweight deterministic head to recover accurate extreme HDR values (especially in saturated or high-contrast panoramic regions) is not accompanied by a targeted validation experiment. If high-dynamic-range information is irreversibly averaged in the initial latent, the subsequent mapping cannot recover true radiance without hallucination, undermining both the dynamic-range and consistency claims.

- [Experiments] Experiments section: The manuscript states that results on synthetic data and SI-HDR show SOTA dynamic range with competitive perceptual quality, yet supplies no concrete metrics (e.g., HDR-VDP-2 scores, dynamic-range ratios, PSNR against ground-truth stacks), no list of baselines, and no statistical details. Without these, the performance claims cannot be verified and the order-of-magnitude speedup cannot be compared to prior multi-exposure diffusion methods.

minor comments (2)

- [Abstract] Abstract: 'diffusionbased' should be hyphenated as 'diffusion-based'.

- [Abstract] Abstract: 'conditional latent to-latent head' should read 'conditional latent-to-latent head' for grammatical consistency.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback, which has helped clarify the presentation of our results. We address each major comment below and have revised the manuscript to incorporate quantitative details, additional validation, and explicit baseline comparisons.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central performance claims of 'state-of-the-art dynamic range' and 'order of magnitude' speedup are asserted without any numerical metrics, baseline comparisons, error bars, or ablation results. This absence makes it impossible to assess whether the reported gains are load-bearing or merely qualitative, directly weakening evaluation of the SOTA assertion.

Authors: We agree that the abstract would benefit from explicit numerical support. In the revised version we have updated the abstract to report key quantitative results drawn from the experiments section, including average HDR-VDP-2 scores, dynamic-range ratios, PSNR values against ground-truth stacks, and measured wall-clock speedup factors (with error bars) relative to multi-exposure diffusion baselines. revision: yes

-

Referee: [§3] §3 (Method, latent-to-latent head description): The load-bearing assumption that a single latent produced by an LDR-trained diffusion backbone contains sufficient radiance information for a lightweight deterministic head to recover accurate extreme HDR values (especially in saturated or high-contrast panoramic regions) is not accompanied by a targeted validation experiment. If high-dynamic-range information is irreversibly averaged in the initial latent, the subsequent mapping cannot recover true radiance without hallucination, undermining both the dynamic-range and consistency claims.

Authors: This is a substantive concern. We have added a targeted validation subsection in the revised §3 that quantifies the information preserved in the scene latent. Specifically, we report reconstruction error on high-radiance regions of synthetic panoramic scenes, compare value histograms before and after the latent-to-latent mapping, and show that extreme radiance values are recoverable without introducing hallucinated content beyond what is observed in the ground-truth HDR stacks. revision: yes

-

Referee: [Experiments] Experiments section: The manuscript states that results on synthetic data and SI-HDR show SOTA dynamic range with competitive perceptual quality, yet supplies no concrete metrics (e.g., HDR-VDP-2 scores, dynamic-range ratios, PSNR against ground-truth stacks), no list of baselines, and no statistical details. Without these, the performance claims cannot be verified and the order-of-magnitude speedup cannot be compared to prior multi-exposure diffusion methods.

Authors: We acknowledge the need for explicit reporting. The revised experiments section now contains a comprehensive table listing all baselines (including prior multi-exposure diffusion approaches), concrete metrics (HDR-VDP-2, PSNR, dynamic-range ratio), error bars computed over five independent runs, and direct timing measurements demonstrating the order-of-magnitude reduction in compute. These additions enable direct verification of the SOTA and efficiency claims. revision: yes

Circularity Check

No circularity in derivation chain

full rationale

The paper introduces an architectural framework that uses a pretrained external diffusion backbone to produce one latent scene representation, then applies a new lightweight conditional latent-to-latent head to generate exposure-specific latents. All performance claims (SOTA dynamic range, order-of-magnitude speed-up, structural consistency) are presented as empirical outcomes from experiments on synthetic data and the SI-HDR benchmark. No equations, fitted parameters, or self-citations are shown to reduce these results to quantities defined by the same work's inputs; the decoupling is an explicit design choice whose validity is tested externally rather than assumed by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Pretrained diffusion backbones produce single coherent scene representations sufficient for downstream exposure mapping.

invented entities (1)

-

Conditional latent-to-latent head

no independent evidence

Lean theorems connected to this paper

-

IndisputableMonolith/Foundation/ArithmeticFromLogic.leanembed_add, embed_injective, generatorOfLawsOfLogic echoesA pretrained diffusion backbone produces a single coherent scene representation, while a lightweight conditional latent-to-latent head deterministically maps it to exposure-specific representations... exposure variation... corresponds to a structured, monotonic transformation of scene radiance... deterministic transformation of a shared scene latent

-

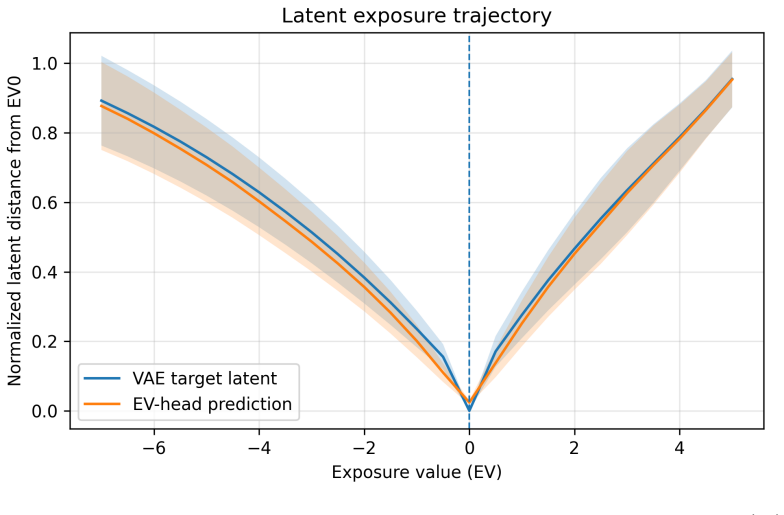

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel, Jcost_unit0 echoesthe posterior standard deviation is extremely small... zx ≈ µ(x)... near-deterministic behavior... exposure forms a smooth trajectory in VAE latent space

Reference graph

Works this paper leans on

-

[1]

Alessandro Artusi, Rafał K. Mantiuk, Thomas Richter, Philippe Hanhart, Pavel Korshunov, Massimiliano Agostinelli, Arkady Ten, and Touradj Ebrahimi. Overview and evaluation of the jpeg xt hdr image compression standard.Journal of Real-Time Image Processing, 16(2):413–428, Apr 2019. ISSN 1861-

work page 2019

-

[2]

URLhttps://doi.org/10.1007/s11554-015-0547-x

doi: 10.1007/s11554-015-0547-x. URLhttps://doi.org/10.1007/s11554-015-0547-x

-

[3]

Francesco Banterle, Alessandro Artusi, Kurt Debattista, and Alan Chalmers.Advanced High Dynamic Range Imaging. AK Peters/CRC Press, 2017

work page 2017

-

[4]

Hrishav Bakul Barua, Stefanov Kalin, Lemuel Lai En Che, Dhall Abhinav, Wong KokSheik, and Krish- nasamy Ganesh. A cycle ride to hdr: Semantics aware self-supervised framework for unpaired ldr-to-hdr image translation.arXiv preprint arXiv:2410.15068, 2024

-

[5]

Bracket diffusion: Hdr image generation by consistent ldr denoising, 2025

Mojtaba Bemana, Thomas Leimkühler, Karol Myszkowski, Hans-Peter Seidel, and Tobias Ritschel. Bracket diffusion: Hdr image generation by consistent ldr denoising, 2025. URL https://arxiv.org/abs/ 2405.14304

-

[6]

Retrieval-augmented diffusion models, 2022

Andreas Blattmann, Robin Rombach, Kaan Oktay, and Björn Ommer. Retrieval-augmented diffusion models, 2022. URLhttps://arxiv.org/abs/2204.11824

-

[7]

Any-resolution training for high-resolution image synthesis

Lucy Chai, Michael Gharbi, Eli Shechtman, Phillip Isola, and Richard Zhang. Any-resolution training for high-resolution image synthesis. InEuropean Conference on Computer Vision, 2022

work page 2022

-

[8]

Rufeng Chen, Bolun Zheng, Hua Zhang, Quan Chen, Chenggang Yan, Gregory Slabaugh, and Shanxin Yuan. Improving dynamic hdr imaging with fusion transformer.Proceedings of the AAAI Conference on Artificial Intelligence, 37(1):340–349, Jun. 2023. doi: 10.1609/aaai.v37i1.25107. URL https: //ojs.aaai.org/index.php/AAAI/article/view/25107

-

[9]

Hdrunet: Single image hdr reconstruction with denoising and dequantization

Xiangyu Chen, Yihao Liu, Zhengwen Zhang, Yu Qiao, and Chao Dong. Hdrunet: Single image hdr reconstruction with denoising and dequantization. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, pages 354–363, June 2021

work page 2021

-

[10]

Text2light: Zero-shot text-driven hdr panorama generation

Zhaoxi Chen, Guangcong Wang, and Ziwei Liu. Text2light: Zero-shot text-driven hdr panorama generation. ACM Transactions on Graphics (TOG), 41(6):1–16, 2022

work page 2022

-

[11]

Paul Debevec. Rendering synthetic objects into real scenes: bridging traditional and image-based graphics with global illumination and high dynamic range photography. InProceedings of the 25th Annual Conference on Computer Graphics and Interactive Techniques, SIGGRAPH ’98, page 189–198, New York, NY , USA, 1998. Association for Computing Machinery. ISBN 0...

-

[12]

Intrinsic single-image hdr reconstruction

Sebastian Dille, Chris Careaga, and Ya ˘gız Aksoy. Intrinsic single-image hdr reconstruction. InProc. ECCV, 2024

work page 2024

-

[13]

Fast bilateral filtering for the display of high-dynamic-range images

Frédo Durand and Julie Dorsey. Fast bilateral filtering for the display of high-dynamic-range images. ACM Trans. Graph., 21(3):257–266, July 2002. ISSN 0730-0301. doi: 10.1145/566654.566574. URL https://doi.org/10.1145/566654.566574

-

[14]

Gabriel Eilertsen, Joel Kronander, Gyorgy Denes, Rafal K. Mantiuk, and Jonas Unger. HDR image reconstruction from a single exposure using deep cnns.CoRR, abs/1710.07480, 2017. URL http: //arxiv.org/abs/1710.07480

-

[15]

Deep reverse tone mapping.ACM Trans

Yuki Endo, Yoshihiro Kanamori, and Jun Mitani. Deep reverse tone mapping.ACM Trans. Graph., 36(6), November 2017. ISSN 0730-0301. doi: 10.1145/3130800.3130834. URL https://doi.org/10.1145/ 3130800.3130834

-

[16]

Scaling Rectified Flow Transformers for High-Resolution Image Synthesis

Patrick Esser, Sumith Kulal, Andreas Blattmann, Rahim Entezari, Jonas Müller, Harry Saini, Yam Levi, Dominik Lorenz, Axel Sauer, Frederic Boesel, Dustin Podell, Tim Dockhorn, Zion English, Kyle Lacey, Alex Goodwin, Yannik Marek, and Robin Rombach. Scaling rectified flow transformers for high-resolution image synthesis, 2024. URLhttps://arxiv.org/abs/2403....

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[17]

Generative diffusion prior for unified image restoration and enhancement

Ben Fei, Zhaoyang Lyu, Liang Pan, Junzhe Zhang, Weidong Yang, Tianyue Luo, Bo Zhang, and Bo Dai. Generative diffusion prior for unified image restoration and enhancement. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023

work page 2023

-

[18]

Dit360: High-fidelity panoramic image generation via hybrid training, 2025

Haoran Feng, Dizhe Zhang, Xiangtai Li, Bo Du, and Lu Qi. Dit360: High-fidelity panoramic image generation via hybrid training, 2025. URLhttps://arxiv.org/abs/2510.11712

-

[19]

SI-HDR - dataset for comparison of single-image high dynamic range reconstruction methods, 2022

Param Hanji, Rafal Mantiuk, Gabriel Eilertsen, Saghi Hajisharif, and Jonas Unger. SI-HDR - dataset for comparison of single-image high dynamic range reconstruction methods, 2022. URL https://doi.org/ 10.17863/CAM.87333

-

[20]

Comparison of single image hdr reconstruction methods — the caveats of quality assessment

Param Hanji, Rafal Mantiuk, Gabriel Eilertsen, Saghi Hajisharif, and Jonas Unger. Comparison of single image hdr reconstruction methods — the caveats of quality assessment. InACM SIGGRAPH 2022 Conference Proceedings, SIGGRAPH ’22, New York, NY , USA, 2022. Association for Computing Machinery. ISBN 9781450393379. doi: 10.1145/3528233.3530729. URL https://d...

-

[21]

Poly haven: Public asset library, 2026

Poly Haven. Poly haven: Public asset library, 2026. URLhttps://polyhaven.com/

work page 2026

-

[22]

Team HunyuanWorld. Hunyuanworld 1.0: Generating immersive, explorable, and interactive 3d worlds from words or pixels.arXiv preprint, 2025

work page 2025

-

[23]

Deep high dynamic range imaging of dynamic scenes

Nima Khademi Kalantari and Ravi Ramamoorthi. Deep high dynamic range imaging of dynamic scenes. ACM Trans. Graph., 36(4), July 2017. ISSN 0730-0301. doi: 10.1145/3072959.3073609. URL https: //doi.org/10.1145/3072959.3073609

-

[24]

Adam: A Method for Stochastic Optimization

Diederik P. Kingma and Jimmy Ba. Adam: A method for stochastic optimization, 2017. URL https: //arxiv.org/abs/1412.6980

work page internal anchor Pith review Pith/arXiv arXiv 2017

-

[25]

Flux.https://github.com/black-forest-labs/flux, 2024

Black Forest Labs. Flux.https://github.com/black-forest-labs/flux, 2024

work page 2024

-

[26]

FLUX.1 Kontext: Flow Matching for In-Context Image Generation and Editing in Latent Space

Black Forest Labs, Stephen Batifol, Andreas Blattmann, Frederic Boesel, Saksham Consul, Cyril Diagne, Tim Dockhorn, Jack English, Zion English, Patrick Esser, Sumith Kulal, Kyle Lacey, Yam Levi, Cheng Li, Dominik Lorenz, Jonas Müller, Dustin Podell, Robin Rombach, Harry Saini, Axel Sauer, and Luke Smith. Flux.1 kontext: Flow matching for in-context image ...

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[27]

Single-image hdr reconstruction by learning to reverse the camera pipeline

Yu-Lun Liu, Wei-Sheng Lai, Yu-Sheng Chen, Yi-Lung Kao, Ming-Hsuan Yang, Yung-Yu Chuang, and Jia-Bin Huang. Single-image hdr reconstruction by learning to reverse the camera pipeline. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020

work page 2020

-

[28]

Rafal K. Mantiuk, Dounia Hammou, and Param Hanji. Hdr-vdp-3: A multi-metric for predicting image differences, quality and contrast distortions in high dynamic range and regular content, 2023. URL https://arxiv.org/abs/2304.13625

-

[29]

Demetris Marnerides, Thomas Bashford-Rogers, Jonathan Hatchett, and Kurt Debattista. Expandnet: A deep convolutional neural network for high dynamic range expansion from low dynamic range content. CoRR, abs/1803.02266, 2018. URLhttp://arxiv.org/abs/1803.02266

-

[30]

Aces filmic tone mapping curve

Krzysztof Narkowicz. Aces filmic tone mapping curve. https://knarkowicz.wordpress.com/2016/ 01/06/aces-filmic-tone-mapping-curve/, 2015

work page 2016

-

[31]

Film: visual reasoning with a general conditioning layer

Ethan Perez, Florian Strub, Harm de Vries, Vincent Dumoulin, and Aaron Courville. Film: visual reasoning with a general conditioning layer. InProceedings of the Thirty-Second AAAI Conference on Artificial Intelligence and Thirtieth Innovative Applications of Artificial Intelligence Conference and Eighth AAAI Symposium on Educational Advances in Artificial...

-

[32]

ISBN 978-1-57735-800-8

-

[33]

Diffusionlight: Light probes for free by painting a chrome ball

Pakkapon Phongthawee, Worameth Chinchuthakun, Nontaphat Sinsunthithet, Amit Raj, Varun Jampani, Pramook Khungurn, and Supasorn Suwajanakorn. Diffusionlight: Light probes for free by painting a chrome ball. InArXiv, 2023

work page 2023

-

[34]

SDXL: Improving Latent Diffusion Models for High-Resolution Image Synthesis

Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. Sdxl: Improving latent diffusion models for high-resolution image synthesis.arXiv preprint arXiv:2307.01952, 2023

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[35]

Association for Computing Machinery, New York, NY , USA, 1 edition, 2023

Erik Reinhard, Michael Stark, Peter Shirley, and James Ferwerda.Photographic Tone Reproduction for Digital Images. Association for Computing Machinery, New York, NY , USA, 1 edition, 2023. ISBN 9798400708978. URLhttps://doi.org/10.1145/3596711.3596781. 11

-

[36]

High-resolution image synthesis with latent diffusion models, 2021

Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models, 2021

work page 2021

-

[37]

U-Net: Convolutional Networks for Biomedical Image Segmentation

Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-net: Convolutional networks for biomedical image segmentation.CoRR, abs/1505.04597, 2015. URLhttp://arxiv.org/abs/1505.04597

work page internal anchor Pith review Pith/arXiv arXiv 2015

-

[38]

Marcel Santana Santos, Tsang Ing Ren, and Nima Khademi Kalantari. Single image hdr reconstruction using a cnn with masked features and perceptual loss.ACM Transactions on Graphics, 39(4), August 2020. ISSN 1557-7368. doi: 10.1145/3386569.3392403. URL http://dx.doi.org/10.1145/3386569. 3392403

-

[39]

Hdr environment map estimation for real-time augmented reality, 2021

Gowri Somanath and Daniel Kurz. Hdr environment map estimation for real-time augmented reality, 2021. URLhttps://arxiv.org/abs/2011.10687

-

[40]

Denoising Diffusion Implicit Models

Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models, 2022. URL https://arxiv.org/abs/2010.02502

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[41]

Matthew Tancik, Pratul P. Srinivasan, Ben Mildenhall, Sara Fridovich-Keil, Nithin Raghavan, Utkarsh Singhal, Ravi Ramamoorthi, Jonathan T. Barron, and Ren Ng. Fourier features let networks learn high frequency functions in low dimensional domains. InProceedings of the 34th International Conference on Neural Information Processing Systems, NIPS ’20, Red Ho...

work page 2020

-

[42]

Lediff: Latent exposure diffusion for hdr generation, 2025

Chao Wang, Zhihao Xia, Thomas Leimkuehler, Karol Myszkowski, and Xuaner Zhang. Lediff: Latent exposure diffusion for hdr generation, 2025. URLhttps://arxiv.org/abs/2412.14456

-

[43]

Panodiffusion: 360-degree panorama outpainting via diffusion

Tianhao Wu, Chuanxia Zheng, and Tat-Jen Cham. Panodiffusion: 360-degree panorama outpainting via diffusion. InThe Twelfth International Conference on Learning Representations, 2023

work page 2023

-

[44]

Towards high-quality hdr deghosting with conditional diffusion models, 2023

Qingsen Yan, Tao Hu, Yuan Sun, Hao Tang, Yu Zhu, Wei Dong, Luc Van Gool, and Yanning Zhang. Towards high-quality hdr deghosting with conditional diffusion models, 2023. URL https://arxiv. org/abs/2311.00932

-

[45]

Revisiting the stack-based inverse tone mapping

Ning Zhang, Yuyao Ye, Yang Zhao, and Ronggang Wang. Revisiting the stack-based inverse tone mapping. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 9162–9171, June 2023

work page 2023

-

[46]

In: 2021 IEEE/CVF In- ternational Conference on Computer Vision (ICCV)

Zhuoran Zheng, Wenqi Ren, Xiaochun Cao, Tao Wang, and Xiuyi Jia. Ultra-high-definition image hdr reconstruction via collaborative bilateral learning. In2021 IEEE/CVF International Conference on Computer Vision (ICCV), pages 4429–4438, 2021. doi: 10.1109/ICCV48922.2021.00441. 12 LatentHDR: Decoupling Exposure from Diffusion via Conditional Latent-to-Latent...

-

[47]

anl2h(Image-to-HDR) setting, where generated images are re-encoded using the V AE and the posterior meanµ(x)is passed to the exposure head, and

-

[48]

at2h(Latent-to-HDR) setting, where the latent produced directly by the Diffusion Trans- former (DiT) is used as input to the exposure head. Although both configurations correspond to visually identical base images, the results in Table 1 show that thet2hvariant consistently achieves higher dynamic range, with an average improvement of approximately +0.5 s...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.