
Control Charts for Multi-agent Systems

Pith reviewed 2026-05-13 01:00 UTC · model grok-4.3

The pith

Multi-agent systems with learning agents require adaptive control charts but remain open to gradual adversarial defection.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

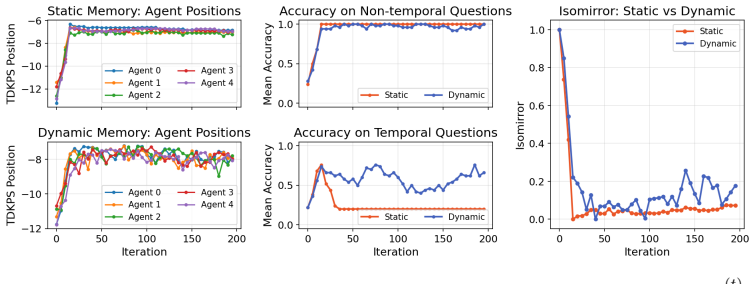

Adaptive control charts extended to multi-agent systems can monitor environments where agents learn from interactions, but adversaries can evade them by tuning their defection rate below the chart's adaptation speed. The result is an unavoidable choice between permitting agent learning and retaining reliable detection of defection.

What carries the argument

The adaptive control chart applied to aggregate statistics of agent actions, which updates its limits in response to observed changes and thereby accommodates normal learning while remaining open to sufficiently slow defection.
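As a rough illustration of the mechanism described above, the following Python sketch implements one simple adaptive chart. It assumes a sliding window of the `w` most recent values of an aggregate statistic and a `k`-sigma alarm rule; the function name `adaptive_chart` and both parameters are hypothetical and are not taken from the paper.

```python
from collections import deque
from statistics import mean, stdev


def adaptive_chart(series, w=20, k=3.0):
    """Return the indices t at which |S(t) - window mean| > k * window std.

    The window holds the w observations that precede t, so the control
    limits move with the data. This is an assumed construction for
    illustration, not necessarily the paper's exact chart.
    """
    window = deque(maxlen=w)
    alarms = []
    for t, s in enumerate(series):
        if len(window) == w:  # only test once the window is full
            mu_hat = mean(window)
            sigma_hat = stdev(window)
            if sigma_hat > 0 and abs(s - mu_hat) > k * sigma_hat:
                alarms.append(t)
        # Every observation, flagged or not, enters the window,
        # which is what lets the limits adapt.
        window.append(s)
    return alarms


# Usage: a stable alternating signal with one large outlier.
series = [0.0, 1.0] * 15 + [50.0] + [0.0, 1.0] * 5
print(adaptive_chart(series))
```

Because flagged observations still enter the window in this sketch, a sustained change is eventually treated as the new normal. That is the property the slow-defection argument relies on.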

If this is right

- Fixed control limits cannot monitor systems in which agents update their behavior from experience.

- Adversaries can remain undetected by choosing a defection pace that stays inside the adapting bounds.

- Any monitoring method relying on historical statistics will face the same adaptation-versus-detection tension.

- System designers must explicitly decide whether to restrict agent learning or accept the resulting security exposure.
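The first two implications above can be sketched with a toy comparison. The example assumes deterministic alternating noise, a frozen chart calibrated on the first 20 points, and a sliding-window chart with `w = 20` and `k = 3`; all names and values are illustrative assumptions rather than the paper's simulation design.

```python
from statistics import mean, stdev


def fixed_alarms(series, n_calib=20, k=3.0):
    """Limits frozen after an initial calibration period."""
    mu0, sd0 = mean(series[:n_calib]), stdev(series[:n_calib])
    return [t for t in range(n_calib, len(series))
            if abs(series[t] - mu0) > k * sd0]


def adaptive_alarms(series, w=20, k=3.0):
    """Limits recomputed from the w observations preceding each step."""
    alarms = []
    for t in range(w, len(series)):
        window = series[t - w:t]
        if abs(series[t] - mean(window)) > k * stdev(window):
            alarms.append(t)
    return alarms


def shifted(n, total, start, length):
    """Alternating +/-1 stand-in for noise, plus a shift of `total`
    spread linearly over `length` steps beginning after `start`."""
    out = []
    for t in range(n):
        noise = 1.0 if t % 2 == 0 else -1.0
        progress = min(max(t - start, 0), length) / length
        out.append(noise + total * progress)
    return out


slow = shifted(200, 10.0, 50, 100)  # 0.1 per step: learning or slow defection
abrupt = shifted(200, 10.0, 50, 1)  # the same total shift in a single step

print("fixed, slow drift:     ", len(fixed_alarms(slow)), "alarms")
print("adaptive, slow drift:  ", len(adaptive_alarms(slow)), "alarms")
print("adaptive, abrupt shift:", len(adaptive_alarms(abrupt)), "alarms")
```

In this toy setting the slow series is the same whether it is read as benign learning or as gradual defection. The frozen chart raises alarms on it either way, and the sliding-window chart raises none, which is the tension the list describes.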

Where Pith is reading between the lines

- The tradeoff may extend to other statistical monitoring techniques used in dynamic environments beyond control charts.

- Hybrid safeguards that combine control-chart alerts with independent verification steps could reduce the exposure without freezing agent learning.

- Testing the same setup on larger numbers of agents or with different learning algorithms would clarify how general the slow-defection vulnerability is.

Load-bearing premise

The simulated agent learning rules and slow-defection patterns match the behavior that would appear in actual deployed generative-agent systems.

What would settle it

A controlled experiment in which agents continue to learn yet every slow-defecting adversary is still flagged by the adaptive chart, or a real-world multi-agent deployment that shows no such vulnerability.

Original abstract

Generative agents have proven to be powerful assistants in a wide variety of contexts. Given this success, users are now deploying agents with minimal restrictions in open ended, multi-agent environments. Current methods for monitoring the dynamics of open-ended multi-agent systems are limited to qualitative inspection. In this paper, we extend the process-theoretic notion of adaptive control charts to multi-agent systems to enable automated monitoring. Using simulation, we demonstrate that adaptive control charts are necessary for monitoring multi-agent systems that can learn from their environment. We further demonstrate, both empirically and theoretically, that adaptive control charts are susceptible to adversarial agents that defect sufficiently slowly. These results illustrate a fundamental tradeoff in multi-agent system control: either agents in a system cannot learn or the system is susceptible to adversaries.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper extends the process-theoretic notion of adaptive control charts to multi-agent systems to enable automated monitoring of open-ended environments with generative agents. Using simulation, it demonstrates that adaptive control charts are necessary for monitoring MAS in which agents can learn from their environment. It further shows empirically and theoretically that adaptive control charts are susceptible to adversarial agents that defect sufficiently slowly. These results are presented as illustrating a fundamental tradeoff in multi-agent system control: either agents cannot learn or the system is susceptible to adversaries.

Significance. If the central results hold, the work provides a concrete monitoring tool for dynamic MAS and identifies a specific vulnerability in adaptive statistical monitoring, which could guide the development of more robust oversight mechanisms. The combination of simulation-based empirical results and theoretical demonstration is a strength, as is the focus on an under-addressed problem of automated monitoring beyond qualitative inspection. However, the significance is tempered by the lack of argument establishing that the identified vulnerability is intrinsic to any form of adaptive or learning-permissive control rather than specific to the control-chart construction.

major comments (2)

- [Abstract] The claim that the results 'illustrate a fundamental tradeoff in multi-agent system control' is not supported by the provided demonstrations. The empirical and theoretical results are specific to adaptive control charts (which update limits based on observed data); no argument is supplied that the vulnerability is intrinsic to adaptivity or learning rather than an artifact of how control-chart limits are updated, nor is it shown that alternative monitoring schemes (e.g., fixed-threshold detectors or periodic re-baselining) would inherit the same flaw.

- [Abstract (simulation results)] The simulation design (referenced in the abstract as demonstrating necessity for learning MAS and susceptibility to slow defectors) is not described in sufficient detail to verify whether the modeled learning and defection behaviors adequately represent real-world generative agent dynamics, which is load-bearing for both the necessity claim and the tradeoff conclusion.

minor comments (1)

- [Abstract] The abstract references 'process-theoretic notion' without a brief inline definition or citation to the specific prior work being extended; this would improve accessibility.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments, which have helped clarify the scope and presentation of our results. We address each major comment below and indicate the revisions made to the manuscript.

Point-by-point responses

-

Referee: [Abstract] The claim that the results 'illustrate a fundamental tradeoff in multi-agent system control' is not supported by the provided demonstrations. The empirical and theoretical results are specific to adaptive control charts (which update limits based on observed data); no argument is supplied that the vulnerability is intrinsic to adaptivity or learning rather than an artifact of how control-chart limits are updated, nor is it shown that alternative monitoring schemes (e.g., fixed-threshold detectors or periodic re-baselining) would inherit the same flaw.

Authors: We agree that the original abstract phrasing overstated the generality. The demonstrations focus on adaptive control charts, which we selected as a canonical adaptive monitoring method. We have revised the abstract to state that the results illustrate a tradeoff between permitting learning and security specifically for adaptive control-chart monitoring of MAS. In the revised manuscript we have also added a short theoretical paragraph in Section 4 explaining why any data-driven adaptive monitor (including re-baselining) must eventually incorporate gradual changes and is therefore exploitable by sufficiently slow defection; fixed-threshold schemes are already shown in our simulations to be inadequate once legitimate learning occurs. An exhaustive comparison across every conceivable alternative scheme lies beyond the present scope and is noted as future work. revision: partial

-

Referee: [Abstract (simulation results)] The simulation design (referenced in the abstract as demonstrating necessity for learning MAS and susceptibility to slow defectors) is not described in sufficient detail to verify whether the modeled learning and defection behaviors adequately represent real-world generative agent dynamics, which is load-bearing for both the necessity claim and the tradeoff conclusion.

Authors: We accept that the original simulation description was insufficient for independent verification. The revised manuscript expands the 'Simulation Design' subsection with: complete specifications of the generative-agent learning rules and update mechanisms, exact parameter values for both normal learning and adversarial defection schedules, the precise control-chart construction procedure, and pseudocode for the full simulation loop. We have also added the simulation source code as supplementary material together with additional ablation experiments that vary agent learning rates and defection speeds to confirm robustness of the reported necessity and vulnerability results. revision: yes
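The first response above asserts that a data-driven adaptive monitor must eventually absorb gradual change. A back-of-the-envelope version of that argument is sketched below. It assumes a sliding window of $w$ past observations, a $k$-sigma rule, an in-control noise level $\sigma_0$, and a hypothetical linear drift of $c$ per step; none of these specifics is confirmed by the text above. It ignores estimation noise and the inflation of $\hat\sigma$ by the drift itself, so it is a heuristic, not the paper's theorem.

```latex
% Heuristic sketch; the linear drift c per step is an illustrative assumption.
\begin{align*}
\mathbb{E}[S(t)] &= \mu_0 + c\,t, \\
\mathbb{E}[\hat\mu(t)] &= \frac{1}{w}\sum_{j=1}^{w}\bigl(\mu_0 + c\,(t-j)\bigr)
                        = \mu_0 + c\,t - c\,\frac{w+1}{2}, \\
\mathbb{E}[S(t) - \hat\mu(t)] &= c\,\frac{w+1}{2}.
\end{align*}
% The expected gap does not grow with t; it stays inside the k-sigma band when
\[
c\,\frac{w+1}{2} \ll k\,\sigma_0 .
\]
```

For instance, with $w = 20$, $k = 3$ and $\sigma_0 = 1$, a drift of $c = 0.1$ per step gives an expected gap of $1.05$ against a limit of about $3$, yet the cumulative shift reaches $10$ after $100$ steps.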

Circularity Check

No circularity; claims rest on independent simulation and theoretical results

full rationale

The paper extends control charts via simulation to show necessity for learning agents and demonstrates susceptibility to slow defectors both empirically and theoretically. These steps are presented as external outcomes rather than reducing by construction to fitted parameters, self-definitions, or load-bearing self-citations. The interpretive conclusion of a fundamental tradeoff follows from those demonstrations without the enumerated circular patterns (no equations or claims equate a result to its own inputs). The derivation chain is self-contained against external benchmarks.

Reference graph

Works this paper leans on

-

[1]

A. Acharyya, M. W. Trosset, C. E. Priebe, and H. S. Helm. Consistent estimation of generative model representations in the data kernel perspective space, 2025. URL https://arxiv.org/abs/2409.17308

-

[2]

A. Athreya, Z. Lubberts, Y. Park, and C. Priebe. Euclidean mirrors and dynamics in network time series. Journal of the American Statistical Association, 120(550):1025–1036, 2025

-

[3]

E. Bridgeford and H. Helm. Detecting perspective shifts in multi-agent systems, 2025. URL https://arxiv.org/abs/2512.05013

-

[4]

G. Chen, J. Arroyo, A. Athreya, J. Cape, J. T. Vogelstein, Y. Park, C. White, J. Larson, W. Yang, and C. E. Priebe. Multiple network embedding for anomaly detection in time series of graphs. Computational Statistics & Data Analysis, 203:108070, 2024. doi: 10.1016/j.csda.2024.108070

- [5]

-

[6]

W. Choi and J. Kim. Unsupervised learning approach for anomaly detection in industrial control systems. Applied System Innovation, 7(1):18, 2024

-

[7]

Y.-S. Chuang, A. Goyal, N. Harlalka, S. Suresh, R. Hawkins, S. Yang, D. Shah, J. Hu, and T. Rogers. Simulating opinion dynamics with networks of LLM-based agents. In Findings of the Association for Computational Linguistics: NAACL 2024, pages 3326–3346, Mexico City, Mexico, 2024. Association for Computational Linguistics. doi: 10.18653/v1/2024.findings-...

-

[8]

B. Duderstadt, H. S. Helm, and C. E. Priebe. Comparing foundation models using data kernels,

- [9]

-

[10]

L. Hammond, A. Chan, J. Clifton, J. Hoelscher-Obermaier, A. Khan, E. McLean, C. Smith, W. Barfuss, J. Foerster, T. Gavenčiak, et al. Multi-agent risks from advanced AI. Technical Report 1, Cooperative AI Foundation, 2025

-

[11]

H. Helm, B. Duderstadt, and C. E. Priebe. Tracking perspectives of interacting language models. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing (EMNLP). Association for Computational Linguistics, 2024

-

[12]

H. Helm, A. Acharyya, Y. Park, B. Duderstadt, and C. E. Priebe. Statistical inference on black-box generative models in the data kernel perspective space. In Findings of the Association for Computational Linguistics: ACL 2025, pages 3955–3970. Association for Computational Linguistics, 2025

-

[13]

E. Hubinger, C. Denison, J. Mu, M. Lambert, M. Tong, M. MacDiarmid, T. Lanham, D. M. Ziegler, T. Maxwell, N. Cheng, et al. Sleeper agents: Training deceptive LLMs that persist through safety training. arXiv preprint arXiv:2401.05566, 2024

-

[14]

M. Huh, B. Cheung, T. Wang, and P. Isola. The platonic representation hypothesis, 2024. URL https://arxiv.org/abs/2405.07987


-

[15]

M. Kinniment, L. Sato, H. Du, B. Goodrich, M. Winsor, B. Shlegeris, and A. Gabriel. Evaluating language-model agents on realistic autonomous tasks. arXiv preprint arXiv:2312.11671, 2023

-

[16]

H. McGuinness, T. Wang, C. E. Priebe, and H. Helm. Investigating social alignment via mirroring in a system of interacting language models. 2025


-

[17]

Z. Nussbaum, J. X. Morris, B. Duderstadt, and A. Mulyar. Nomic embed: Training a reproducible long context text embedder, 2025. URL https://arxiv.org/abs/2402.01613

-

[18]

OpenAI. GPT-4o system card. arXiv preprint arXiv:2410.21276, 2024

-

[19]

E. S. Page. Continuous inspection schemes. Biometrika, 41(1/2):100–115, 1954. doi: 10.2307/2333009

-

[20]

J. S. Park, J. C. O’Brien, C. J. Cai, M. R. Morris, P. Liang, and M. S. Bernstein. Generative agents: Interactive simulacra of human behavior. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology (UIST), pages 1–22, San Francisco, CA, USA, 2023. ACM. doi: 10.1145/3586183.3606763

-

[21]

T. Putterman, D. Lim, Y. Gelberg, S. Jegelka, and H. Maron. Learning on loras: Gl-equivariant processing of low-rank weight spaces for large finetuned models, 2024. URL https://arxiv.org/abs/2410.04207

-

[22]

P. Qiu. Introduction to Statistical Process Control. Chapman and Hall/CRC, 2013

-

[23]

schlichtm. Moltbook: A social network for moltbots, 2025. URL https://www.moltbook.com/. Social network platform designed for AI agent interaction without direct human involvement

-

[24]

S. Schmidl, P. Wenig, and T. Papenbrock. Anomaly detection in time series: A comprehensive evaluation. Proceedings of the VLDB Endowment, 15(9):1779–1797, 2022. doi: 10.14778/3538598.3538602

-

[25]

N. Shapira, C. Wendler, A. Yen, G. Sarti, K. Pal, O. Floody, A. Belfki, A. Loftus, A. R. Jannali, N. Prakash, J. Cui, G. Rogers, J. Brinkmann, C. Rager, A. Zur, M. Ripa, A. Sankaranarayanan, D. Atkinson, R. Gandikota, J. Fiotto-Kaufman, E. Hwang, H. Orgad, P. S. Sahil, N. Taglicht, T. Shabtay, A. Ambus, N. Alon, S. Oron, A. Gordon-Tapiero, Y. Kaplan, V ....

-

[26]

URL https://arxiv.org/abs/2602.20021

-

[27]

W. A. Shewhart. Economic Control of Quality of Manufactured Product. D. Van Nostrand Company, New York, 1931

-

[28]

P. Steinberger and OpenClaw Contributors. OpenClaw: Your own personal AI assistant, 2025. URL https://github.com/openclaw/openclaw. Formerly known as Moltbot/Clawdbot

-

[29]

J. B. Tenenbaum, V. de Silva, and J. C. Langford. A global geometric framework for nonlinear dimensionality reduction. Science, 290(5500):2319–2323, 2000. doi: 10.1126/science.290.5500.2319

-

[30]

N. Tomašev, M. Franklin, J. Jacobs, S. Krier, and S. Osindero. Distributional AGI safety, 2025


-

[31]

W. S. Torgerson. Multidimensional scaling: I. Theory and method. Psychometrika, 17(4):401–419, 1952. doi: 10.1007/BF02288916

-

[32]

E. L. Wang, M. S. Kiasari, T. Wang, H. Helm, A. Athreya, C. Priebe, and V. Lyzinski. Gaussian mixture models as a proxy for interacting language models, 2026. URL https://arxiv.org/abs/2506.00077

-

[33]

L. Yee, M. Chui, R. Roberts, and S. Xu. Why agents are the next frontier of generative AI. McKinsey Quarterly, July 2024. URL https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/why-agents-are-the-next-frontier-of-generative-ai.

A Additional Experimental Details

A.1 Prompt template and examples

Every non-adversarial agent in the swarm q...

-

[34]

and the system is in-control at time $t$, then $\alpha^{(t)} = P(|T_w| > k)$, with $T_w \overset{d}{=} \sqrt{\frac{w+1}{w}} \cdot t_{w-1}$, where $t_{w-1}$ denotes a random variable with the Student t-distribution on $w-1$ degrees of freedom. In particular, $\alpha^{(t)}$ depends on $w$ and $k$ but not on $t$, $\mu_0$, or $\sigma_0$. Proof. Under in-control conditions, $S(t), S(t-1), \dots, S(t-w)$ are i.i.d. $N(\mu_0, \sigma_0^2)$. The standardized statist...

-

[35]

For $t \in [t^*, t^* + w)$, the window contains a mixture of pre-shift and post-shift observations, and $1 - \beta^{(t)}(\delta) > \alpha^{(t)}$ whenever $|\delta|$ is sufficiently large relative to $\sigma_0$

-

[36]

For $t \ge t^* + w$, $1 - \beta^{(t)}(\delta) = \alpha^{(t)}$. Proof. Part (2) is immediate from Lemma 1. For part (1), when $t \in [t^*, t^* + w)$, the window $W(t)$ contains $t - t^*$ post-shift observations and $w - (t - t^*)$ pre-shift observations. Consequently, $\hat\mu(t) = \mu_0 + \frac{t - t^*}{w}\delta + O_p(w^{-1/2})$ and $\hat\sigma(t) = \sigma_0 + O_p(w^{-1/2})$. The current observation $S(t)$ has mean $\mu_0 + \delta$, so the effective signal-to-noise ...
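The lemma fragment quoted under [34] states that the in-control false-alarm rate depends on $w$ and $k$ but not on $\mu_0$ or $\sigma_0$. A small Monte Carlo check of that statement is sketched below. It assumes the window holds the $w$ observations before the current one and that $\hat\sigma$ is the sample standard deviation with $w-1$ degrees of freedom; the function name `false_alarm_rate`, the trial count, and the seed are illustrative choices, not the paper's.

```python
import random
from math import sqrt


def false_alarm_rate(w, k, mu0, sigma0, trials=20000, seed=0):
    """Estimate P(|S - mu_hat| > k * sigma_hat) under in-control conditions.

    Assumed setup: mu_hat and sigma_hat come from w i.i.d. N(mu0, sigma0^2)
    window observations, and S is one further independent draw.
    """
    rng = random.Random(seed)
    alarms = 0
    for _ in range(trials):
        window = [rng.gauss(mu0, sigma0) for _ in range(w)]
        s = rng.gauss(mu0, sigma0)
        mu_hat = sum(window) / w
        var_hat = sum((x - mu_hat) ** 2 for x in window) / (w - 1)
        if abs(s - mu_hat) > k * sqrt(var_hat):
            alarms += 1
    return alarms / trials


# Same w and k, different in-control mean and scale.
a = false_alarm_rate(w=20, k=3.0, mu0=0.0, sigma0=1.0)
b = false_alarm_rate(w=20, k=3.0, mu0=5.0, sigma0=10.0)
# Shorter window, same k: heavier-tailed t-distribution.
c = false_alarm_rate(w=5, k=3.0, mu0=0.0, sigma0=1.0)
print(a, b, c)
```

Under these assumptions the first two estimates should agree closely, since the standardized statistic is unchanged by shifting or rescaling the data, while the shorter window should give a visibly higher rate.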
