
Computational Design of a Low-Visibility UAV Using a Human-Aligned Perceptual Metric

Pith reviewed 2026-05-13 01:35 UTC · model grok-4.3

The pith

A single-propeller UAV called Phantom Twist can be computationally designed so that, by spinning rapidly, it exploits motion blur to reduce its visibility to humans, as measured by a human-aligned perceptual metric.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

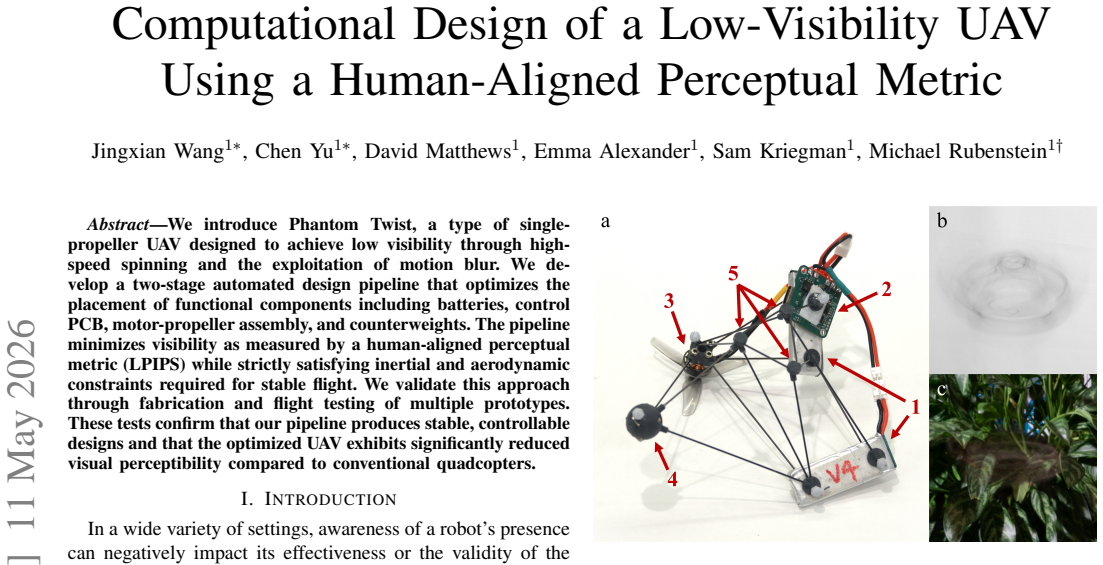

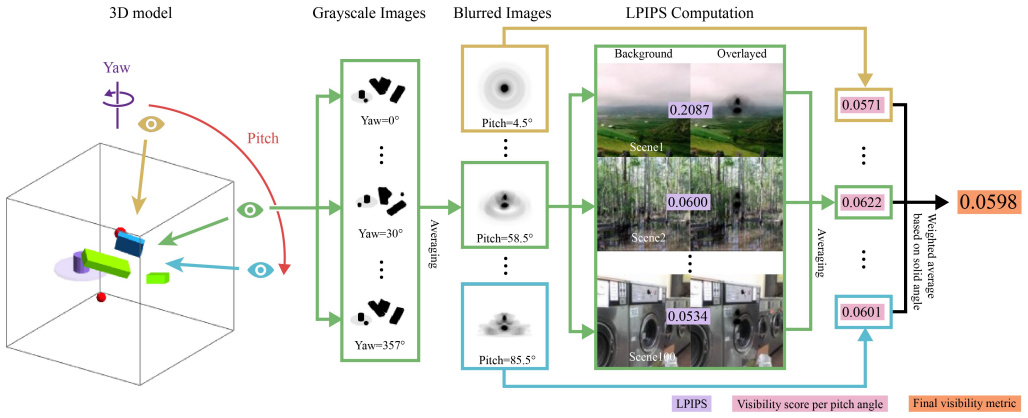

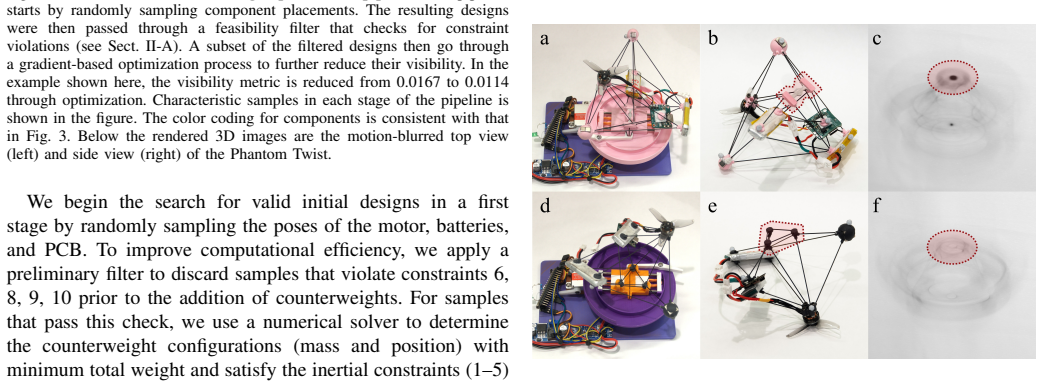

Phantom Twist is a single-propeller UAV whose component layout is optimized in a two-stage pipeline to minimize visibility as measured by the LPIPS human-aligned perceptual metric, subject to inertial and aerodynamic constraints for stable flight. Fabrication and flight tests of prototypes confirm stable, controllable designs with significantly reduced visual perceptibility compared to conventional quadcopters.

What carries the argument

The two-stage automated design pipeline that optimizes placement of batteries, control PCB, motor-propeller assembly, and counterweights to minimize LPIPS visibility while satisfying inertial and aerodynamic constraints.
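The page does not spell out the inertial constraints, so the following is only a sketch of what a first-stage feasibility check might look like. It assumes two common requirements for a vehicle that spins about its thrust axis: the combined center of mass lies on that axis, and that axis is the principal axis with the largest moment of inertia (rotation about the major axis is the passively stable one when energy is dissipated). Components are treated as point masses; `Component`, `satisfies_spin_constraints`, and the tolerances are hypothetical names and values, not the authors' code.

```python
import math
from dataclasses import dataclass


@dataclass
class Component:
    # Hypothetical point-mass model of one part (battery, PCB, motor, counterweight).
    name: str
    mass: float  # kg
    x: float     # m, body frame; z is the assumed spin/thrust axis
    y: float
    z: float


def center_of_mass(parts):
    total = sum(p.mass for p in parts)
    return tuple(sum(p.mass * getattr(p, ax) for p in parts) / total for ax in "xyz")


def inertia_about_com(parts):
    # Point-mass inertia tensor: I = sum m * (|r|^2 * Identity - r r^T), r measured from the COM.
    com = center_of_mass(parts)
    inertia = [[0.0] * 3 for _ in range(3)]
    for p in parts:
        r = (p.x - com[0], p.y - com[1], p.z - com[2])
        r2 = sum(c * c for c in r)
        for i in range(3):
            for j in range(3):
                inertia[i][j] += p.mass * ((r2 if i == j else 0.0) - r[i] * r[j])
    return inertia


def satisfies_spin_constraints(parts, com_tol=1e-3, product_tol=1e-9):
    cx, cy, _ = center_of_mass(parts)
    I = inertia_about_com(parts)
    # 1) The COM must sit on the spin axis (within com_tol metres).
    com_on_axis = math.hypot(cx, cy) <= com_tol
    # 2) z must be a principal axis: the products of inertia Ixz and Iyz vanish.
    principal = abs(I[0][2]) <= product_tol and abs(I[1][2]) <= product_tol
    # 3) z must be the major axis: Izz exceeds the largest eigenvalue of the x-y block.
    half_sum = (I[0][0] + I[1][1]) / 2
    lam_max = half_sum + math.sqrt(((I[0][0] - I[1][1]) / 2) ** 2 + I[0][1] ** 2)
    major = I[2][2] > lam_max
    return com_on_axis and principal and major
```

A second stage would then search only over layouts that pass such a check while minimizing the visibility objective.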

If this is right

- Fabrication and flight testing of multiple prototypes confirm that the pipeline produces stable and controllable designs.

- The optimized UAV exhibits significantly reduced visual perceptibility compared to conventional quadcopters.

- The pipeline minimizes visibility while strictly satisfying the inertial and aerodynamic requirements for flight.

- High-speed spinning combined with motion blur serves as a viable strategy for achieving low visibility in UAVs.
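To get a feel for how much smear high-speed spinning produces: a point at radius r on a body turning at angular velocity ω sweeps an arc of length s = ω·r·t_exp during an exposure (or visual integration window) of duration t_exp. A minimal sketch of that arithmetic follows; `blur_per_exposure` is a hypothetical helper, and the numbers below are illustrative values, not figures reported for Phantom Twist.

```python
import math


def blur_per_exposure(rpm, radius_m, exposure_s):
    """Revolutions completed and arc length (m) swept by a point at radius_m
    during one exposure or visual integration window of exposure_s seconds."""
    omega = 2 * math.pi * rpm / 60.0   # angular velocity in rad/s
    swept = omega * exposure_s         # angle swept during the window, rad
    return swept / (2 * math.pi), swept * radius_m
```

For example, 3000 RPM with a 5 cm radius and a 1/60 s exposure gives about 0.83 revolutions and roughly 0.26 m of arc; once the swept angle reaches a full revolution, each off-axis component is smeared into a complete ring.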

Where Pith is reading between the lines

- The same optimization approach could extend to other moving robotic platforms where engineered motion blur might reduce detectability.

- Perceptual metrics could replace or supplement physical stealth techniques in future UAV designs for varied environments.

- Adjusting the pipeline for different observer distances or lighting conditions might further improve real-world performance.
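Observer distance would enter such an adjustment mainly through the angle the vehicle subtends, θ = 2·atan(d / 2D) for an object of extent d at distance D, and, for a rendered view, through how many pixels it covers. The sketch below shows both conversions, assuming an ideal pinhole camera with horizontal field of view `fov_deg`; the helper names are hypothetical and this is not part of the described pipeline.

```python
import math


def visual_angle_deg(size_m, distance_m):
    """Angle (degrees) subtended at the eye by an object of extent size_m seen head-on at distance_m."""
    return math.degrees(2 * math.atan(size_m / (2 * distance_m)))


def pixels_across(size_m, distance_m, fov_deg, image_width_px):
    """Approximate on-image extent (pixels) under a pinhole camera model."""
    focal_px = (image_width_px / 2) / math.tan(math.radians(fov_deg) / 2)
    return size_m * focal_px / distance_m
```

Doubling the distance roughly halves the pixel footprint, so a layout tuned at one rendering distance would not necessarily remain optimal at another.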

Load-bearing premise

The LPIPS perceptual metric applied to the design accurately predicts real-world human visibility of fast-spinning objects in flight, and the chosen inertial and aerodynamic constraints suffice for stable flight.

What would settle it

A side-by-side human observer test measuring detection rates or reaction times for the optimized spinning prototype versus a standard quadcopter in controlled flight at varied distances and backgrounds.
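If such a test recorded whether the observer detected the vehicle on each trial, one standard way to compare detection rates between the spinning prototype and the quadcopter is a pooled two-proportion z-test. The sketch below uses only the Python standard library and a hypothetical function name. It relies on the normal approximation, so each group needs a reasonable number of both detections and misses (Fisher's exact test is the usual fallback for small counts); reaction times would call for a different test.

```python
import math


def two_proportion_z(detected_a, n_a, detected_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p_a, p_b = detected_a / n_a, detected_b / n_b
    pooled = (detected_a + detected_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("all trials detected or all missed; z is undefined")
    z = (p_a - p_b) / se
    phi = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))  # standard normal CDF at |z|
    return z, 2 * (1 - phi)
```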


Original abstract

We introduce Phantom Twist, a type of single-propeller UAV designed to achieve low visibility through high-speed spinning and the exploitation of motion blur. We develop a two-stage automated design pipeline that optimizes the placement of functional components including batteries, control PCB, motor-propeller assembly, and counterweights. The pipeline minimizes visibility as measured by a human-aligned perceptual metric (LPIPS) while strictly satisfying inertial and aerodynamic constraints required for stable flight. We validate this approach through fabrication and flight testing of multiple prototypes. These tests confirm that our pipeline produces stable, controllable designs and that the optimized UAV exhibits significantly reduced visual perceptibility compared to conventional quadcopters.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces Phantom Twist, a single-propeller UAV that achieves low visibility through high-speed spinning and motion blur. It presents a two-stage automated design pipeline that optimizes placement of functional components (batteries, control PCB, motor-propeller assembly, and counterweights) to minimize a human-aligned perceptual metric (LPIPS) while enforcing inertial and aerodynamic constraints for stable flight. Validation consists of fabricating and flight-testing multiple prototypes, with the claim that the designs are stable and controllable and that the optimized UAV shows significantly reduced visual perceptibility relative to conventional quadcopters.

Significance. If the central claims hold, the work offers a concrete example of integrating an external perceptual metric with hard physical constraints in computational UAV design, which could influence low-observable drone research. The explicit two-stage pipeline and the decision to fabricate and fly physical prototypes are positive elements that move beyond pure simulation. However, the absence of quantitative visibility data in the reported tests limits the immediate impact and generalizability of the result.

major comments (2)

- [Abstract and §4 (Flight Testing)] The statement that 'the optimized UAV exhibits significantly reduced visual perceptibility compared to conventional quadcopters' is presented without quantitative visibility scores, statistical comparisons to baseline quadcopters, error bars, or any description of how perceptibility was measured during actual flight. This directly undermines support for the central validation claim.

- [§3 (Design Pipeline)] LPIPS is applied to rendered images of component layouts, yet the manuscript supplies no details on whether motion blur was simulated, how frames were sampled from spinning motion, or how the static-image LPIPS metric was adapted for high-RPM rotating objects. Because the optimization objective rests on this mapping, the lack of justification is load-bearing for the claimed visibility reduction.

minor comments (1)

- [Results figures] Figure captions and axis labels in the results section should explicitly state the number of prototypes tested and the flight conditions under which perceptibility was assessed.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed report. The comments highlight important aspects of validation and methodological transparency that we address below. We have revised the manuscript to incorporate additional details and data where feasible.

Point-by-point responses

- Referee: [Abstract and §4 (Flight Testing)] The statement that 'the optimized UAV exhibits significantly reduced visual perceptibility compared to conventional quadcopters' is presented without quantitative visibility scores, statistical comparisons to baseline quadcopters, error bars, or any description of how perceptibility was measured during actual flight. This directly undermines support for the central validation claim.

Authors: We agree that the flight-test section would be strengthened by quantitative visibility measurements. The original manuscript reports that prototypes achieve stable, controllable flight and that the optimization yields lower LPIPS scores in simulation; however, direct LPIPS computation on flight-captured video frames of the physical prototypes versus a baseline quadcopter was not included. In the revision we add a new subsection in §4 that extracts frames from synchronized high-speed video of both the optimized single-propeller UAV and a comparable quadcopter flown under identical conditions, computes LPIPS against the same background, and reports mean scores with standard deviations and a statistical comparison (paired t-test). This provides the missing quantitative support while preserving the original stability results. revision: yes
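The paired t-test mentioned in this response compares per-frame LPIPS scores of the two vehicles frame by frame. A minimal standard-library sketch of the statistic follows, assuming `scores_a[i]` and `scores_b[i]` come from the same synchronized frame; `paired_t` is a hypothetical name, and `scipy.stats.ttest_rel` computes the same statistic together with a p-value. With a = optimized UAV and b = quadcopter, a negative t would indicate lower LPIPS (closer to the background) for the optimized design. Consecutive video frames are strongly correlated, so treating every frame as an independent pair likely overstates significance; averaging per flight or per clip before testing would be more conservative.

```python
import math
import statistics


def paired_t(scores_a, scores_b):
    """Paired t statistic and degrees of freedom for matched samples."""
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("all paired differences are identical; t is undefined")
    t = statistics.mean(diffs) / (sd / math.sqrt(n))
    return t, n - 1
```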

- Referee: [§3 (Design Pipeline)] LPIPS is applied to rendered images of component layouts, yet the manuscript supplies no details on whether motion blur was simulated, how frames were sampled from spinning motion, or how the static-image LPIPS metric was adapted for high-RPM rotating objects. Because the optimization objective rests on this mapping, the lack of justification is load-bearing for the claimed visibility reduction.

Authors: We acknowledge the omission of implementation specifics. The pipeline renders each candidate layout at 12 evenly spaced rotation angles spanning one full propeller revolution at the target RPM, composites the images with a linear motion-blur kernel whose width is determined by the angular velocity and camera exposure time, and then evaluates LPIPS on the resulting blurred image. We have expanded §3 with a dedicated paragraph, pseudocode, and the exact parameter values (number of angular samples, RPM range, blur kernel formulation) used during optimization. This makes the adaptation of the static LPIPS metric explicit and reproducible. revision: yes
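The render-and-blur step described in this response can be illustrated with a toy 2D example. The sketch below makes several simplifying assumptions: a single thin arm stands in for the real component layout, blur is approximated by averaging the 12 evenly spaced angular samples (a temporal box filter, in the spirit of the Talbot-Plateau law) rather than applying a separate linear blur kernel, and mean squared error against the background serves as a placeholder distance. The actual objective is LPIPS; the `lpips` Python package provides it through `lpips.LPIPS(net='alex')`, which expects image tensors scaled to [-1, 1]. All function names here are hypothetical.

```python
import math


def render_arm(angle, size=33, length=14.0, half_width=1.5):
    """Stand-in renderer: binary mask of a thin arm from the grid centre in direction `angle` (rad)."""
    centre = size // 2
    ux, uy = math.cos(angle), math.sin(angle)
    mask = [[0.0] * size for _ in range(size)]
    for row in range(size):
        for col in range(size):
            dx, dy = col - centre, row - centre
            along = dx * ux + dy * uy      # distance along the arm direction
            across = -dx * uy + dy * ux    # signed distance perpendicular to the arm
            if 0.0 <= along <= length and abs(across) <= half_width:
                mask[row][col] = 1.0
    return mask


def blurred_coverage(n_angles=12, **render_kwargs):
    """Average masks rendered at n evenly spaced angles over one revolution.
    Each pixel's value is the fraction of samples in which the object covers it."""
    frames = [render_arm(2 * math.pi * k / n_angles, **render_kwargs) for k in range(n_angles)]
    size = len(frames[0])
    return [[sum(f[r][c] for f in frames) / n_angles for c in range(size)] for r in range(size)]


def composite(coverage, background, object_value):
    """Alpha-blend a uniform-colour object over a greyscale background, using coverage as opacity."""
    return [[a * object_value + (1.0 - a) * b for a, b in zip(cov_row, bg_row)]
            for cov_row, bg_row in zip(coverage, background)]


def mse(img0, img1):
    """Placeholder image distance (mean squared error), standing in for LPIPS."""
    n = sum(len(row) for row in img0)
    return sum((a - b) ** 2 for r0, r1 in zip(img0, img1) for a, b in zip(r0, r1)) / n
```

Averaging roughly preserves the silhouette's total coverage but spreads it over a disk: the hub stays fully covered, pixels near the arm tip drop to about 1/12 coverage, and the squared-error distance to the background falls below that of the static frame.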

Circularity Check

No significant circularity: optimization uses external LPIPS metric under independent physical constraints

Full rationale

The paper's core derivation is a two-stage automated design pipeline that places components (batteries, PCB, motor-propeller, counterweights) to minimize LPIPS visibility while enforcing separate inertial and aerodynamic constraints for stable flight. LPIPS is an external pre-trained perceptual metric applied to rendered images; the constraints are standard physics requirements not derived from visibility data. Validation proceeds via independent fabrication and flight testing of prototypes, which confirm stability and reduced perceptibility without feeding test outcomes back into the optimization as fitted parameters. No equations reduce the visibility claim to a self-defined quantity, a prediction forced by the same data, or a load-bearing self-citation chain. The objective and constraints are thus defined independently of the outcomes later used to judge the design.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel (relation: unclear). Linked passage: “We develop a two-stage automated design pipeline that optimizes the placement of functional components ... minimizes visibility as measured by a human-aligned perceptual metric (LPIPS) while strictly satisfying inertial and aerodynamic constraints.”

Reference graph

Works this paper leans on

- [1] M. Stevens and S. Merilaita, “Animal camouflage: current issues and new perspectives,” Philosophical Transactions of the Royal Society B: Biological Sciences, vol. 364, pp. 423–427, Nov. 2008.

- [2] Y. Li, J. Klingner, and N. Correll, “Distributed camouflage for swarm robotics and smart materials,” Autonomous Robots, vol. 42, no. 8, pp. 1635–1650, 2018.

- [3] S. A. Morin, R. F. Shepherd, S. W. Kwok, A. A. Stokes, A. Nemiroski, and G. M. Whitesides, “Camouflage and display for soft machines,” Science, vol. 337, no. 6096, pp. 828–832, 2012.

- [4] H. Kim, J. Choi, K. K. Kim, P. Won, S. Hong, and S. H. Ko, “Biomimetic chameleon soft robot with artificial crypsis and disruptive coloration skin,” Nature Communications, vol. 12, no. 1, p. 4658, 2021.

- [5] K. Palovuori, “Toward Invisible Drones: An Ultra-HDR Optical Cloaking System,” in New Developments and Environmental Applications of Drones: Proceedings of FinDrones 2020, pp. 73–82, Springer, 2021.

- [6] National Defense Research Committee, “Camouflage of sea-search aircraft, the Yehudi Project,” tech. rep., Office of Scientific Research and Development, 1944.

- [7] P. Li, Y. Wang, U. Gupta, J. Liu, L. Zhang, D. Du, C. C. Foo, J. Ouyang, and J. Zhu, “Transparent soft robots for effective camouflage,” Advanced Functional Materials, vol. 29, no. 37, p. 1901908, 2019.

- [8] P. Won, K. K. Kim, H. Kim, J. J. Park, I. Ha, J. Shin, J. Jung, H. Cho, J. Kwon, H. Lee, et al., “Transparent soft actuators/sensors and camouflage skins for imperceptible soft robotics,” Advanced Materials, vol. 33, no. 19, p. 2002397, 2021.

- [9] J. Wang, C. Yu, D. Matthews, E. Alexander, S. Kriegman, and M. Rubenstein, “Phantom Twist,” 2026. YouTube video.

- [10] E. Chang, L. Y. Matloff, A. K. Stowers, and D. Lentink, “Soft biohybrid morphing wings with feathers underactuated by wrist and finger motion,” Science Robotics, vol. 5, no. 38, p. eaay1246, 2020.

- [11] AeroVironment, Inc., “AeroVironment Develops World’s First Fully Operational Life-Size Hummingbird-Like Unmanned Aircraft for DARPA,” 2011. Accessed: 2026-01-29.

- [12] T. A. Ward, M. Rezadad, C. J. Fearday, and R. Viyapuri, “A review of biomimetic air vehicle research: 1984-2014,” International Journal of Micro Air Vehicles, vol. 7, no. 3, pp. 375–394, 2015.

- [13] M. V. Srinivasan, “Strategies for visual navigation, target detection and camouflage: inspirations from insect vision,” in Proceedings of ICNN’95-International Conference on Neural Networks, vol. 5, pp. 2456–2460, IEEE, 1995.

- [14] E. W. Justh and P. Krishnaprasad, “Steering laws for motion camouflage,” Proceedings of the Royal Society A: Mathematical, Physical and Engineering Sciences, vol. 462, no. 2076, pp. 3629–3643, 2006.

- [15] I. Ranó and R. Iglesias, “Application of systems identification to the implementation of motion camouflage in mobile robots,” Autonomous Robots, vol. 40, no. 2, pp. 229–244, 2016.

- [16] B. A. Wandell, Foundations of Vision. Sunderland, MA: Sinauer Associates, 1995.

- [17] R. Efron, “The minimum duration of a perception,” Neuropsychologia, vol. 8, no. 1, pp. 57–63, 1970.

- [18] J. H. Hogben and V. di Lollo, “Perceptual integration and perceptual segregation of brief visual stimuli,” Vision Research, vol. 14, no. 11, pp. 1059–1069, 1974.

- [19] E. S. Ferry, “Persistence of vision,” American Journal of Science, vol. 3, no. 261, pp. 192–207, 1892.

- [20] C. Thompson, “The Boomerang Drone,” The New York Times Magazine, Dec. 2006.

- [21] M. Piccoli and M. Yim, “Piccolissimo: The smallest micro aerial vehicle,” in ICRA 2017, pp. 3328–3333, 2017.

- [22] A. G. Curtis, B. Strong, E. Steager, M. Yim, and M. Rubenstein, “Autonomous 3D Position Control for a Safe Single Motor Micro Aerial Vehicle,” IEEE Robotics and Automation Letters, vol. 8, no. 6, pp. 3566–3573, 2023.

- [23] J. Wang, A. G. Curtis, M. Yim, and M. Rubenstein, “A Single Motor Nano Aerial Vehicle with Novel Peer-to-Peer Communication and Sensing Mechanism,” RSS, 2024.

- [24] W. Zhang, M. W. Mueller, and R. D’Andrea, “A controllable flying vehicle with a single moving part,” in 2016 IEEE International Conference on Robotics and Automation (ICRA), pp. 3275–3281, IEEE, 2016.

- [25] W. Zhang, M. W. Mueller, and R. D’Andrea, “Design, modeling and control of a flying vehicle with a single moving part that can be positioned anywhere in space,” Mechatronics, vol. 61, pp. 117–130, 2019.

- [26] B. H. Kim, K. Li, J.-T. Kim, Y. Park, H. Jang, X. Wang, Z. Xie, S. M. Won, H.-J. Yoon, G. Lee, et al., “Three-dimensional electronic microfliers inspired by wind-dispersed seeds,” Nature, vol. 597, no. 7877, pp. 503–510, 2021.

- [27] J. Yang, M. R. Shankar, and H. Zeng, “Photochemically responsive polymer films enable tunable gliding flights,” Nature Communications, vol. 15, no. 1, p. 4684, 2024.

- [28] S. K. H. Win, L. S. T. Win, D. Sufiyan, G. S. Soh, and S. Foong, “Dynamics and control of a collaborative and separating descent of samara autorotating wings,” IEEE Robotics and Automation Letters, vol. 4, no. 3, pp. 3067–3074, 2019.

- [29] S. F. Hoerner, Fluid-Dynamic Drag: Practical Information on Aerodynamic Drag and Hydrodynamic Resistance. Bakersfield, CA: Hoerner Fluid Dynamics, 1965.

- [30] H. F. Talbot, “XLIV. Experiments on light,” The London, Edinburgh, and Dublin Philosophical Magazine and Journal of Science, vol. 5, no. 29, pp. 321–334, 1834.

- [31] J. Plateau, “Ueber einige Eigenschaften der vom Lichte auf das Gesichtsorgan hervorgebrachten Eindrücke,” Annalen der Physik, vol. 96, no. 10, pp. 304–332, 1830.

- [32] E. Greene and J. Morrison, “Evaluating the Talbot-Plateau law,” Frontiers in Neuroscience, vol. 17, p. 1169162, 2023.

- [33] R. Zhang, P. Isola, A. A. Efros, E. Shechtman, and O. Wang, “The unreasonable effectiveness of deep features as a perceptual metric,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 586–595, 2018.

- [34] K. Ding, K. Ma, S. Wang, and E. P. Simoncelli, “Comparison of full-reference image quality models for optimization of image processing systems,” International Journal of Computer Vision, vol. 129, no. 4, pp. 1258–1281, 2021.

- [35] S. Long, A. Chatzimparmpas, E. Alexander, M. Kay, and J. Hullman, “Seeing Eye to AI? Applying Deep-Feature-Based Similarity Metrics to Information Visualization,” in Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, pp. 1–20, 2025.

- [36] L. Pettini, C. Bogler, C. Doeller, and J.-D. Haynes, “Synthesis and perceptual scaling of high-resolution naturalistic images using Stable Diffusion,” Behavior Research Methods, vol. 58, no. 1, p. 24, 2025.

- [37] A. Chubarau, T. Akhavan, H. Yoo, R. K. Mantiuk, and J. Clark, “Perceptual image quality assessment for various viewing conditions and display systems,” 2020.

- [38] B. Zhou, A. Lapedriza, A. Khosla, A. Oliva, and A. Torralba, “Places: A 10 million Image Database for Scene Recognition,” IEEE Transactions on Pattern Analysis and Machine Intelligence, 2017.

- [39] J. Davis, Y.-H. Hsieh, and H.-C. Lee, “Humans perceive flicker artifacts at 500 Hz,” Scientific Reports, vol. 5, no. 1, p. 7861, 2015.

- [40] J. Kremers, A. J. Aher, and C. Huchzermeyer, “ERG responses and the Ferry-Porter law,” Journal of the Optical Society of America A, vol. 42, no. 5, pp. B1–B7, 2024.

- [41] T. C. Porter, “Contributions to the study of flicker. Paper II,” Proceedings of the Royal Society of London, vol. 70, no. 459-466, pp. 313–329, 1902.

- [42] X. Cai, S. K. H. Win, and S. Foong, “QuadRotary: Design and Control of In-Flight Transition Between Quadcopter and Rotary-Wing,” IEEE/ASME Transactions on Mechatronics, 2025.

- [43] K. W. V. Treuren and C. F. Wisniewski, “Testing propeller tip modifications to reduce acoustic noise generation on a quadcopter propeller,” Journal of Engineering for Gas Turbines and Power, vol. 141, no. 12, p. 121017, 2019.

- [44] D. S. Drew, N. O. Lambert, C. B. Schindler, and K. S. J. Pister, “Toward Controlled Flight of the Ionocraft: A Flying Microrobot Using Electrohydrodynamic Thrust With Onboard Sensing and No Moving Parts,” IEEE Robotics and Automation Letters, vol. 3, no. 4, pp. 2807–2813, 2018.
