Recognition: 2 theorem links

· Lean TheoremPerformance bounds for nearest neighbor search with k-d trees

Pith reviewed 2026-05-13 01:49 UTC · model grok-4.3

The pith

k-d trees reduce nearest neighbor search to random guessing in high dimensions

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Under mild distributional assumptions, when the dimension d is at least polylogarithmic in the number of data points, defeatist search is no more likely to return the nearest neighbor than random guessing, and comprehensive search visits every cell with high probability. On uniform data, with high probability, comprehensive search visits at most 2^O(d) cells when each cell contains at least logarithmically many data points, and defeatist search returns the nearest neighbor when each cell additionally contains at least 2^O(d log d) data points. For arbitrary absolutely continuous distributions, the expected distance between the query and the point returned by defeatist search is bounded.

What carries the argument

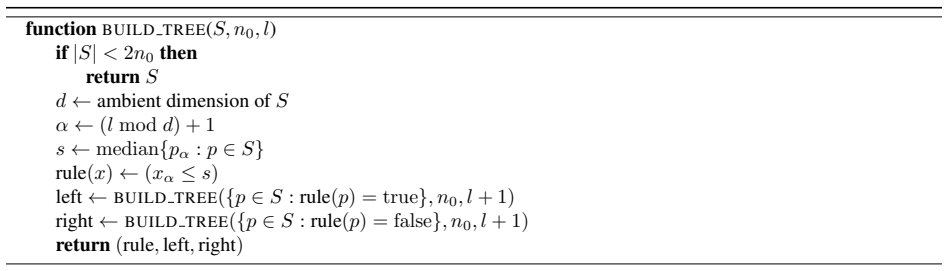

Axis-aligned rectangular cell partitions of a k-d tree, with defeatist search returning only the nearest point inside the query cell and comprehensive search visiting neighboring cells to guarantee the true nearest neighbor.

If this is right

- Defeatist search cannot be trusted for accurate nearest-neighbor retrieval once dimension grows with data size.

- Comprehensive search effectively reverts to checking the entire tree.

- On uniform data, cells must hold exponentially many points in d for defeatist search to succeed with high probability.

- The bounds are non-asymptotic and apply to finite data sets.

Where Pith is reading between the lines

- Alternative indexing structures or approximation techniques become necessary once dimension reaches this polylogarithmic threshold.

- The cell-size requirements illustrate a concrete form of the curse of dimensionality that is specific to axis-aligned partitions.

- Similar high-probability analyses could be carried out for other space-partitioning trees such as ball trees or cover trees.

Load-bearing premise

The data satisfy mild distributional assumptions that support the high-probability statements once dimension becomes polylogarithmic in the number of points.

What would settle it

A dataset with dimension polylogarithmic in n where defeatist search succeeds with probability substantially larger than 1/n, or where comprehensive search examines far fewer than all cells with high probability.

Figures

read the original abstract

The $k$-d tree is one of the oldest and most widely used data structures for nearest neighbor search. It partitions Euclidean space into axis-aligned rectangular cells. There are two standard ways to find the nearest neighbor to a query in a $k$-d tree. Defeatist search returns the closest data point in the query's cell, while comprehensive search also searches other cells as needed to guarantee it finds the nearest neighbor. Both strategies are commonly believed to perform poorly in high dimensions, but there have been few theoretical results explaining this. We prove non-asymptotic bounds on the runtime of comprehensive search and the accuracy of defeatist search. Under mild distributional assumptions, when the dimension $d$ is at least polylogarithmic in the number of data points, defeatist search is no more likely to return the nearest neighbor than random guessing, and comprehensive search visits every cell with high probability. We also show that on uniform data, with high probability, comprehensive search visits at most $2^{\mathcal{O}(d)}$ cells when each cell contains at least logarithmically many data points, and defeatist search returns the nearest neighbor when each cell additionally contains at least $2^{\mathcal{O}(d \log d)}$ data points. Finally, for arbitrary absolutely continuous distributions, we upper bound the expected distance between the query and the point returned by defeatist search.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper establishes non-asymptotic performance bounds for nearest-neighbor search in k-d trees. Under mild distributional assumptions, when dimension d is at least polylogarithmic in the number of points n, it shows that defeatist search succeeds with probability no better than uniform random selection and that comprehensive search examines every cell with high probability. For uniform data, it bounds the number of cells visited by comprehensive search by 2^{O(d)} (when each cell contains logarithmically many points) and gives conditions under which defeatist search succeeds (when cells contain 2^{O(d log d)} points). For arbitrary absolutely continuous distributions, it upper-bounds the expected distance between the query and the point returned by defeatist search.

Significance. If the central claims hold, the work supplies the first explicit, non-asymptotic theoretical account of why k-d trees degrade in high dimensions, a phenomenon long observed empirically but lacking rigorous justification. The separation of uniform-data results from the general case, together with the high-probability statements and the expected-distance bound, supplies concrete guidance on the regimes in which these structures remain useful. The absence of free parameters or circular definitions in the stated claims is a positive feature.

major comments (2)

- [Main theorems on polylogarithmic dimension (defeatist and comprehensive search)] The central high-probability claims for defeatist and comprehensive search (stated in the abstract and developed in the main theorems) rest on 'mild distributional assumptions' that are described only as i.i.d. draws from an absolutely continuous distribution with bounded density. These assumptions do not appear sufficient to guarantee that the k-d tree cell containing the query overlaps the Voronoi cell of the true nearest neighbor in measure at most 1/n when d = polylog n; local geometric alignments permitted by bounded density can produce Ω(1) overlap, which would falsify the 'no better than random guessing' statement. A concrete counter-example construction or a stronger assumption (e.g., Lipschitz density or minimum separation) is needed to close this gap.

- [Uniform-data analysis] The uniform-data cell-visit bound of 2^{O(d)} for comprehensive search (abstract) is derived under the additional hypothesis that every cell contains at least logarithmically many points. The derivation appears to rely on the specific recursive median-splitting rule of k-d trees; it is unclear whether the same bound holds for other splitting heuristics or whether the exponential dependence on d is tight. A matching lower-bound example or a comparison with the known 2^{O(d)} worst-case cell count for exact nearest-neighbor search would strengthen the result.

minor comments (2)

- [Abstract] The abstract states that 'proofs exist' but does not indicate where the full derivations appear; adding a sentence that points to the relevant theorem statements and proof sketches would improve readability.

- [Preliminaries] Notation for the 'mild distributional assumptions' is introduced without a numbered definition; a formal statement (e.g., Assumption 1) would make subsequent references precise.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and detailed report. The comments highlight important points regarding the strength of our distributional assumptions and the scope of the uniform-data analysis. We address each major comment below, indicating where revisions will be made to the manuscript.

read point-by-point responses

-

Referee: [Main theorems on polylogarithmic dimension (defeatist and comprehensive search)] The central high-probability claims for defeatist and comprehensive search (stated in the abstract and developed in the main theorems) rest on 'mild distributional assumptions' that are described only as i.i.d. draws from an absolutely continuous distribution with bounded density. These assumptions do not appear sufficient to guarantee that the k-d tree cell containing the query overlaps the Voronoi cell of the true nearest neighbor in measure at most 1/n when d = polylog n; local geometric alignments permitted by bounded density can produce Ω(1) overlap, which would falsify the 'no better than random guessing' statement. A concrete counter-example construction or a stronger assumption (e.g., Lipschitz density or minimum separation) is needed to close this gap.

Authors: We agree that the bounded-density assumption alone permits local geometric alignments (e.g., flat regions or axis-aligned clusters) in which the query cell can overlap the nearest-neighbor Voronoi cell by Ω(1) measure with non-negligible probability, undermining the claimed high-probability statements. To close the gap we will replace the bounded-density hypothesis with the stronger but still mild assumption that the density is Lipschitz continuous. Under Lipschitz continuity the local density variations are controlled, the overlap probability can be bounded by O(1/n) with high probability when d = Ω(log n / log log n), and the existing proof structure carries through after a standard discretization argument. We will revise the abstract, the statement of the main theorems, and the proof sketches to reflect the updated assumption, and we will add a short paragraph explaining why Lipschitz continuity is necessary and sufficient for the claimed separation from random guessing. revision: yes

-

Referee: [Uniform-data analysis] The uniform-data cell-visit bound of 2^{O(d)} for comprehensive search (abstract) is derived under the additional hypothesis that every cell contains at least logarithmically many points. The derivation appears to rely on the specific recursive median-splitting rule of k-d trees; it is unclear whether the same bound holds for other splitting heuristics or whether the exponential dependence on d is tight. A matching lower-bound example or a comparison with the known 2^{O(d)} worst-case cell count for exact nearest-neighbor search would strengthen the result.

Authors: The 2^{O(d)} upper bound is obtained by exploiting the balanced median splits that are canonical to k-d trees; other splitting rules (e.g., random or surface-area heuristics) can produce unbalanced partitions for which the same counting argument fails, so the bound is specific to the standard construction. We will add an explicit remark clarifying this scope. Regarding tightness, the exponential dependence on d matches the known worst-case cell-visit complexity of exact nearest-neighbor search in Euclidean space (which can require 2^{Θ(d)} cells for certain point sets), and our uniform-data result shows that the same order appears already in the average case once cells contain only logarithmically many points. A matching lower-bound construction under the uniform distribution would be a valuable addition but lies outside the present non-asymptotic analysis; we therefore treat it as future work rather than a revision to the current manuscript. revision: partial

Circularity Check

No circularity: bounds follow from direct analysis of tree construction and search

full rationale

The paper derives non-asymptotic bounds on defeatist and comprehensive search via explicit probabilistic arguments on cell volumes, Voronoi overlaps, and query-cell membership under its stated mild distributional assumptions. No quantities are defined in terms of other derived quantities, no parameters are fitted to subsets of data and then relabeled as predictions, and no load-bearing steps reduce to self-citations, imported uniqueness theorems, or ansatzes from prior work by the same authors. The uniform-data and general absolutely continuous cases are handled by separate direct calculations that do not presuppose the target high-probability statements. The derivation chain is therefore self-contained.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Mild distributional assumptions on the data points

- domain assumption Data drawn from absolutely continuous distributions

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearUnder mild distributional assumptions, when the dimension d is at least polylogarithmic in the number of data points, defeatist search is no more likely to return the nearest neighbor than random guessing, and comprehensive search visits every cell with high probability.

-

IndisputableMonolith/Foundation/AlexanderDuality.leanalexander_duality_circle_linking unclearWe prove non-asymptotic bounds on the runtime of comprehensive search and the accuracy of defeatist search.

Reference graph

Works this paper leans on

-

[1]

Sunil Arya, David M. Mount, Nathan S. Netanyahu, Ruth Silverman, and Angela Y . Wu. An optimal algorithm for approximate nearest neighbor searching fixed dimensions.Journal of the ACM, 45(6):891–923, 1998

work page 1998

-

[2]

Jon Louis Bentley. Multidimensional binary search trees used for associative searching.Communications of the ACM, 18(9):509–517, 1975

work page 1975

- [3]

-

[4]

Louis H. Y . Chen, Xiao Fang, and Qi-Man Shao. From stein identities to moderate deviations.The Annals of Probability, 41(1):262–293, 2013

work page 2013

-

[5]

Random projection trees and low dimensional manifolds

Sanjoy Dasgupta and Yoav Freund. Random projection trees and low dimensional manifolds. InProceedings of the 40th Annual ACM Symposium on Theory of Computing (STOC), pages 537–546, 2008

work page 2008

-

[6]

Friedman, Jon Louis Bentley, and Raphael A

Jerome H. Friedman, Jon Louis Bentley, and Raphael A. Finkel. An algorithm for finding best matches in logarithmic expected time.ACM Transactions on Mathematical Software, 3(3):209–226, 1977

work page 1977

-

[7]

Marius Muja and David G. Lowe. Scalable nearest neighbor algorithms for high dimensional data.IEEE Trans- actions on Pattern Analysis and Machine Intelligence, 36(11):2227–2240, 2014

work page 2014

-

[8]

Sameer A. Nene and Shree K. Nayar. A simple algorithm for nearest neighbor search in high dimensions.IEEE Transactions on Pattern Analysis and Machine Intelligence, 19(9):989–1003, 1997

work page 1997

-

[9]

An improved algorithm finding nearest neighbor using kd-trees

Rina Panigrahy. An improved algorithm finding nearest neighbor using kd-trees. InLATIN 2008: Theoretical Informatics, volume 4957 ofLNCS, pages 387–398, 2008

work page 2008

-

[10]

Revisiting kd-tree for nearest neighbor search

Parikshit Ram and Kaushik Sinha. Revisiting kd-tree for nearest neighbor search. InProceedings of the 25th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pages 1378–1388, 2019

work page 2019

-

[11]

org/stable/modules/generated/sklearn.neighbors.KDTree.html

scikit-learn developers.sklearn.neighbors.KDTreedocumentation.https://scikit-learn. org/stable/modules/generated/sklearn.neighbors.KDTree.html. Accessed 2026-04-30

work page 2026

-

[12]

Robert F. Sproull. Refinements to nearest-neighbor searching ink-dimensional trees.Algorithmica, 6:579–589, 1991

work page 1991

-

[13]

Santosh S. Vempala. Randomly-orientedk-d trees adapt to intrinsic dimension. InProceedings of the 32nd International Conference on Foundations of Software Technology and Theoretical Computer Science (FSTTCS), pages 48–57, 2012

work page 2012

-

[14]

Roger Weber, Hans-J ¨org Schek, and Stephen Blott. A quantitative analysis and performance study for similarity- search methods in high-dimensional spaces. InProceedings of the 24th International Conference on Very Large Data Bases (VLDB), pages 194–205, 1998. 14

work page 1998

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.