Recognition: 2 theorem links · Lean Theorem

Human-AI Productivity Paradoxes: Modeling the Interplay of Skill, Effort, and AI Assistance

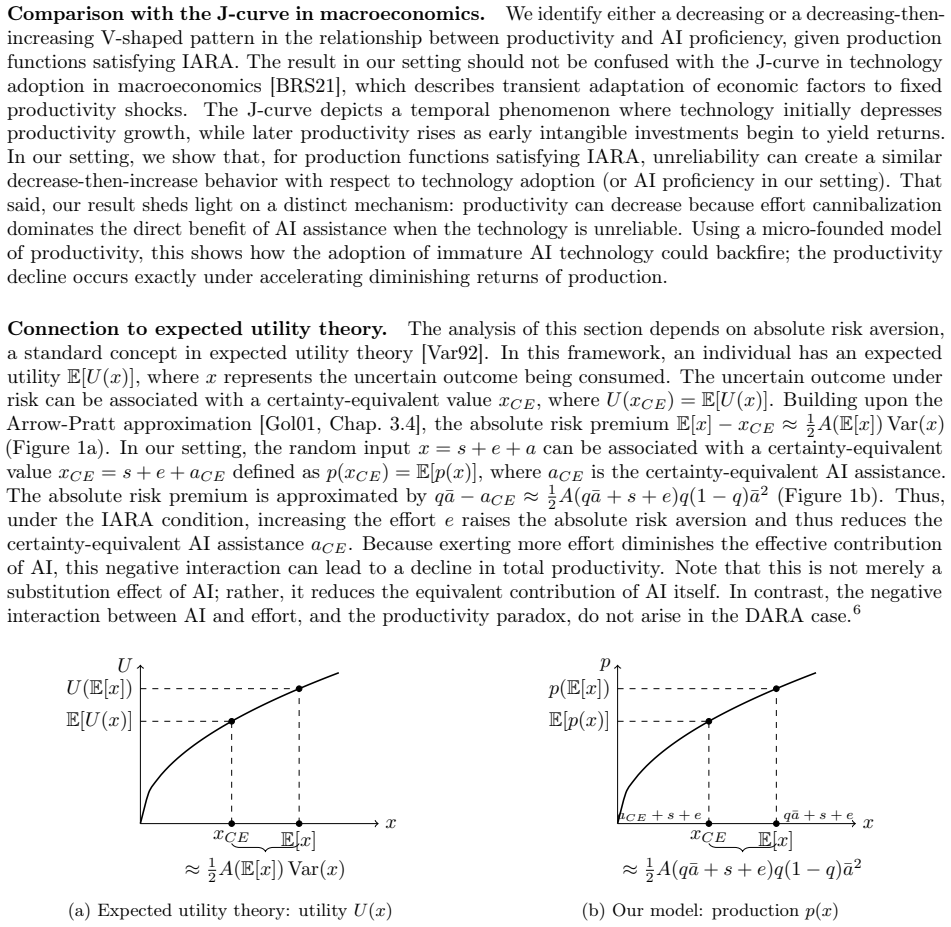

Pith reviewed 2026-05-13 02:21 UTC · model grok-4.3

The pith

When skill development or AI unreliability is endogenous, more AI assistance can reduce productivity, and heterogeneity in AI literacy can produce skill polarization.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Human agents with varying skill levels exert utility-maximizing effort to produce task outcomes with AI assistance. Incorporating endogeneity in skill development or in AI unreliability induces a productivity paradox where increased AI assistance degrades productivity. Skill polarization emerges in steady state due to heterogeneity in AI literacy, the capability to identify and adapt to inaccurate AI outputs.

What carries the argument

Endogenous feedback between AI assistance levels, human effort choices, and either skill evolution or AI output reliability.

Load-bearing premise

Human agents always exert effort in a utility-maximizing way, and the endogeneity in skill development or AI unreliability is structured in specific forms that trigger the paradoxes.
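
The premise can be made concrete with a minimal sketch. It assumes the baseline utility u(e,s,a) = p(s+e+a) − γe and the effort rule e⋆(s,a) = (x⋆−s−a)+ quoted in the theorem-link section, plus an illustrative production function p(x) = 1 − exp(−x) that is not taken from the paper. Under that assumed p, the first-order condition gives x⋆ = −ln γ for 0 < γ < 1. All function names are hypothetical.

```python
import math

def x_star(gamma):
    """Total input x that maximizes p(x) - gamma * x for the ASSUMED p(x) = 1 - exp(-x)."""
    # First-order condition p'(x) = exp(-x) = gamma, valid for 0 < gamma < 1.
    return -math.log(gamma)

def optimal_effort(s, a, gamma):
    """e*(s, a) = (x* - s - a)^+ : effort only tops up skill s and AI assistance a to x*."""
    return max(x_star(gamma) - s - a, 0.0)

def output(s, a, gamma):
    """Task outcome p(s + e* + a) at the utility-maximizing effort."""
    e = optimal_effort(s, a, gamma)
    return 1.0 - math.exp(-(s + e + a))

if __name__ == "__main__":
    gamma, s = 0.2, 0.5  # illustrative parameter values, not from the paper
    for a in (0.0, 0.5, 1.0, 1.5):
        print(f"a={a:.1f}  effort={optimal_effort(s, a, gamma):.3f}  "
              f"output={output(s, a, gamma):.3f}")
```

In this baseline sketch, effort falls one-for-one as assistance rises while output stays at p(x⋆) = 1 − γ until effort reaches zero, so assistance alone does not lower output. That is consistent with the review's reading that the paradoxes depend on the additional endogenous channels rather than on effort choice by itself.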

What would settle it

Track productivity levels and skill changes in a controlled setting where AI assistance is varied and skill development is allowed to evolve endogenously, checking if productivity falls as assistance rises.

Original abstract

Generative Artificial Intelligence (AI) tools are rapidly adopted in the workplace and in education, yet the empirical evidence on AI's impact remains mixed. We propose a model of human-AI interaction to better understand and analyze several mechanisms by which AI affects productivity. In our setup, human agents with varying skill levels exert utility-maximizing effort to produce certain task outcomes with AI assistance. We find that incorporating either endogeneity in skill development or in AI unreliability can induce a productivity paradox: increased levels of AI assistance may degrade productivity, leading to potentially significant shortfalls. Moreover, we examine the long-term distributional effect of AI on skill, and demonstrate that skill polarization can emerge in steady state when accounting for heterogeneity in AI literacy -- the agent's capability to identify and adapt to inaccurate AI outputs. Our results elucidate several mechanisms that may explain the emergence of human-AI productivity paradoxes and skill polarization, and identify simple measures that characterize when they arise.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper develops a theoretical model of human-AI interaction in which agents with heterogeneous skills select utility-maximizing effort levels to complete tasks with AI assistance. It demonstrates that endogenizing either skill development (as a function of AI assistance) or AI output unreliability can produce a productivity paradox in which higher AI assistance reduces net productivity. The model further shows that heterogeneity in AI literacy—the ability to detect and correct for inaccurate AI outputs—can generate skill polarization in steady state.
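
The unreliability channel in the summary can be illustrated numerically. The sketch below assumes an expected utility of the form u(e,s,ā,q) = q·p(s+e+ā) + (1−q)·p(s+e) − γe, where the AI contributes ā with probability q and nothing otherwise, together with a piecewise-linear production function p(x) = min(1, βx). The parameter values, the grid search, and the function names are illustrative assumptions, not the authors' implementation.

```python
def production(x, beta):
    """Piecewise-linear production p(x) = min(1, beta * x) (illustrative choice)."""
    return min(1.0, beta * x)

def expected_utility(e, s, a_bar, q, beta, gamma):
    """AI adds a_bar with probability q and nothing otherwise; effort costs gamma * e."""
    return (q * production(s + e + a_bar, beta)
            + (1.0 - q) * production(s + e, beta)
            - gamma * e)

def best_effort(s, a_bar, q, beta, gamma, steps=1000):
    """Grid search for the utility-maximizing effort on [0, 1/beta]."""
    grid = [i / (steps * beta) for i in range(steps + 1)]
    return max(grid, key=lambda e: expected_utility(e, s, a_bar, q, beta, gamma))

def productivity(s, a_bar, q, beta, gamma):
    """Expected task outcome at the utility-maximizing effort."""
    e = best_effort(s, a_bar, q, beta, gamma)
    return (q * production(s + e + a_bar, beta)
            + (1.0 - q) * production(s + e, beta))

if __name__ == "__main__":
    s, q, beta, gamma = 0.2, 0.8, 1.0, 0.3  # q exceeds (beta - gamma) / beta = 0.7
    for a_bar in (0.0, 0.2, 0.4, 0.6):
        print(f"a_bar={a_bar:.1f}  productivity={productivity(s, a_bar, q, beta, gamma):.3f}")
```

With these assumed parameters the agent stops exerting effort as soon as the AI-success branch saturates, so a larger ā leaves a larger shortfall whenever the AI fails and expected productivity declines in ā. When q is at or below (β−γ)/β the agent instead works as if the AI were absent, and productivity in this sketch stays at 1.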

Significance. If the derivations are correct, the work supplies a clean existence result that identifies concrete mechanisms capable of rationalizing the mixed empirical literature on AI productivity effects. The emphasis on endogenous channels and heterogeneity provides a useful organizing framework for future empirical tests and policy discussion without claiming universality. The parsimonious setup and focus on steady-state outcomes are strengths for a modeling paper in this area.

minor comments (3)

- [Abstract] The abstract states that the model identifies 'simple measures that characterize when [paradoxes and polarization] arise,' yet the provided text does not define or derive these measures explicitly; adding a short paragraph or proposition summarizing the threshold conditions would improve clarity.

- [Model setup] Notation for the effort choice, skill transition function, and AI error rate should be introduced with a single consistent table or list of symbols early in the model section to reduce reader burden when tracing the endogeneity arguments.

- [Related work] The discussion of steady-state skill polarization would benefit from a brief comparison to related models in the economics of skill-biased technical change or learning-by-doing literatures to situate the contribution.

Simulated Author's Rebuttal

We thank the referee for their positive assessment of our manuscript. The referee's summary accurately reflects our theoretical contributions regarding productivity paradoxes arising from endogenous skill development or AI unreliability, as well as steady-state skill polarization due to heterogeneity in AI literacy. We appreciate the recognition of the model's parsimony and its value as an organizing framework for empirical work. The recommendation for minor revision is noted, and we are prepared to address any specific points.

Circularity Check

No significant circularity; derivations are self-contained existence results

full rationale

The paper constructs a standard theoretical model in which agents choose utility-maximizing effort given skill levels and AI assistance, then derives that endogeneity in skill evolution or AI error rates (plus AI-literacy heterogeneity) is sufficient to produce productivity paradoxes and steady-state polarization. These outcomes follow directly from the model's functional assumptions and equilibrium conditions rather than reducing to fitted parameters renamed as predictions or to self-citations. No load-bearing step equates a claimed result to its own inputs by construction; the analysis remains an existence demonstration under explicit assumptions.

Axiom & Free-Parameter Ledger

axioms (3)

- domain assumption: Human agents exert utility-maximizing effort

- ad hoc to paper: Skill development is endogenous to AI assistance levels

- ad hoc to paper: AI outputs can be unreliable in a manner that interacts with human effort

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel (unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: utility $u(e,s,a) := p(s+e+a) - \gamma e$; $e^\star(s,a) = (x^\star - s - a)^+$ with $x^\star = \arg\max_x \,(p(x) - \gamma x)$

- IndisputableMonolith/Foundation/ArithmeticFromLogic.lean · LogicNat recovery and orbit embedding (unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: birth-death chain with rates $K_{s_k s_{k+1}}(a) = \lambda(e^\star(s_k, a))$, steady state $\pi_k(a)$
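
The two quoted passages can be combined into a small numerical sketch of the skill dynamics. The passage only specifies the upward rates λ(e⋆(s_k,a)); the constant downward rate, the form λ(e) = 0.1 + e, the skill grid, and all function names below are hypothetical choices made for illustration. The steady state uses the standard detailed-balance relation for birth-death chains, π_{k+1} · down = π_k · up_k.

```python
def optimal_effort(s, a, x_star):
    """e*(s, a) = (x* - s - a)^+ from the quoted utility passage."""
    return max(x_star - s - a, 0.0)

def steady_state(skills, a, x_star, learn_rate, decay_rate):
    """Stationary distribution of a birth-death chain over ordered skill levels.

    Upward rate from s_k to s_{k+1} is learn_rate(e*(s_k, a)).
    The constant downward rate decay_rate is an ASSUMPTION of this sketch.
    """
    up = [learn_rate(optimal_effort(s, a, x_star)) for s in skills[:-1]]
    weights = [1.0]
    for u in up:
        # Detailed balance: pi_{k+1} * decay_rate = pi_k * up_k
        weights.append(weights[-1] * u / decay_rate)
    total = sum(weights)
    return [w / total for w in weights]

def mean_skill(skills, pi):
    """Average skill level under the distribution pi."""
    return sum(s * p for s, p in zip(skills, pi))

if __name__ == "__main__":
    skills = [0.0, 0.5, 1.0, 1.5]            # hypothetical skill grid
    learn = lambda e: 0.1 + e                # hypothetical lambda(e), positive at e = 0
    for a in (0.0, 1.0, 2.0):
        pi = steady_state(skills, a, x_star=2.0, learn_rate=learn, decay_rate=0.5)
        print(f"a={a:.1f}  mean skill={mean_skill(skills, pi):.3f}")
```

Under these assumptions, higher assistance lowers effort, which lowers the upward transition rates and shifts steady-state mass toward low skill. The sketch illustrates only that mechanism and does not reproduce the paper's polarization result, which additionally relies on heterogeneity in AI literacy.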

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

