Recognition: 2 theorem links

A nonlinear extension of parametric model embedding for dimensionality reduction in parametric shape design

Pith reviewed 2026-05-13 04:58 UTC · model grok-4.3

The pith

Nonlinear parametric model embedding compresses shape design spaces with fewer latent variables while preserving explicit backmapping to original parameters.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

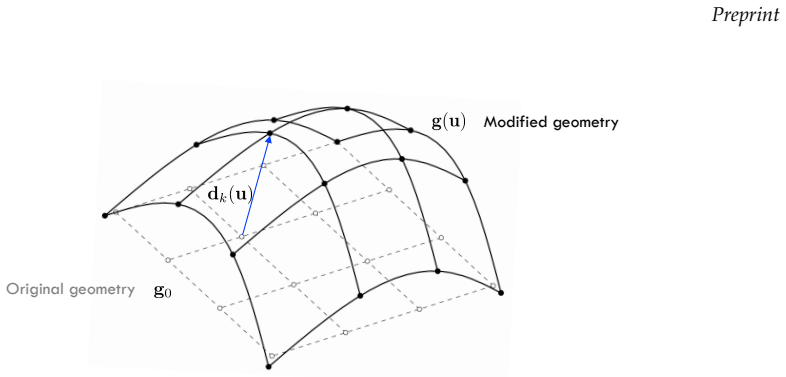

NLPME extends PME by using a nonlinear latent representation that is decoded into admissible design parameters before geometry is reconstructed via the forward parametric map. This preserves geometry-driven latent variables and parameter-mediated reconstruction while capturing nonlinear geometric variability more efficiently than linear PME.

What carries the argument

NLPME, a nonlinear latent representation decoded through admissible design parameters to recover geometry via the forward parametric map, extending the linear PME framework.
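The chain described here has four steps: encode the geometry, decode it to admissible parameters, regenerate the geometry through the forward map, and compare in geometry space. The sketch below is a minimal, untrained NumPy illustration built on stated assumptions. It is not the authors' implementation. The sizes, the single-layer encoder and decoder, the box bounds, and the `forward_map` stand-in for the CAD model are all hypothetical. Only the structure is taken from the passage quoted in the Lean-theorem section of this page: \(z = E_\phi(d)\), \(\hat{u} = D_\theta(z)\), \(\hat{d} = S(\hat{u})\), a sigmoid-bounded decoder output, and a mean squared geometry loss.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: M geometry values per shape, K design parameters, N latent variables.
M, K, N = 50, 32, 5

# Hypothetical admissible box for the design parameters u.
u_lo, u_hi = -np.ones(K), np.ones(K)

def sigmoid(x):
    # Logistic function 1 / (1 + exp(-x)), written via tanh to avoid exp overflow.
    return 0.5 * (1.0 + np.tanh(0.5 * x))

# Encoder E_phi: geometry d (length M) -> latent z (length N); one tanh layer, untrained.
W_enc = rng.normal(scale=0.1, size=(N, M))
def encode(d):
    return np.tanh(W_enc @ d)

# Decoder D_theta: latent z -> parameters u_hat, kept inside [u_lo, u_hi] by the sigmoid.
W_dec = rng.normal(scale=0.5, size=(K, N))
def decode(z):
    return u_lo + (u_hi - u_lo) * sigmoid(W_dec @ z)

# Forward parametric map S: parameters -> geometry. A fixed toy function standing in
# for the CAD model or a frozen surrogate of it (hypothetical).
B = rng.normal(size=(M, K)) / np.sqrt(K)
def forward_map(u):
    return np.sin(B @ u)

def geometry_loss(shapes):
    """L_geom = (1/S) * sum_i ||d_i - S(D(E(d_i)))||^2 over the S rows of `shapes`."""
    residuals = [d - forward_map(decode(encode(d))) for d in shapes]
    return float(np.mean([r @ r for r in residuals]))

# S = 20 training geometries generated from admissible parameter samples.
U = rng.uniform(u_lo, u_hi, size=(20, K))
shapes = np.array([forward_map(u) for u in U])
print(f"untrained L_geom = {geometry_loss(shapes):.3f}")
```

Training would minimize \(L_{\mathrm{geom}}\) over the encoder and decoder weights while the forward map stays fixed. The loss is measured on regenerated geometry, so gradient-based training needs a differentiable forward map. One plausible reading is that this is the role of the frozen \(S_{\psi^*}\) in the quoted definitions.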

If this is right

- Fewer latent variables suffice to reach target reconstruction error thresholds when shape variability is nonlinear.

- An explicit mapping back to original design parameters remains available for optimization and surrogate modeling.

- Shapes stay admissible because reconstruction routes through valid design parameters rather than direct geometry prediction.

- Most of the nonlinear compression gain of a deep autoencoder is retained while avoiding the black-box nature of a pure deep autoencoder.
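A toy case shows the mechanism behind the first bullet. When samples vary along a curved one-parameter family, one linear variable leaves a residual error, while a single nonlinear coordinate can be exact. The sketch below assumes synthetic quarter-circle data and a hand-picked nonlinear coordinate (the angle, via `np.arctan2`). It illustrates the principle only. It does not use the glider data, and it does not use the NLPME encoder, which is learned.

```python
import numpy as np

# Synthetic "design space": 200 two-dimensional shapes on a quarter circle,
# i.e. one intrinsic, nonlinear degree of freedom t (hypothetical data).
t = np.linspace(0.0, np.pi / 2, 200)
X = np.column_stack([np.cos(t), np.sin(t)])

# Linear reduction with one latent variable: project onto the first principal direction.
mean = X.mean(axis=0)
_, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
v1 = Vt[0]
X_lin = mean + np.outer((X - mean) @ v1, v1)
err_linear = np.sqrt(np.mean(np.sum((X - X_lin) ** 2, axis=1)))

# Nonlinear reduction with one latent variable: the angle, recovered with atan2.
z = np.arctan2(X[:, 1], X[:, 0])
X_nl = np.column_stack([np.cos(z), np.sin(z)])
err_nonlinear = np.sqrt(np.mean(np.sum((X - X_nl) ** 2, axis=1)))

print(f"RMS error, 1 linear latent:    {err_linear:.4f}")
print(f"RMS error, 1 nonlinear latent: {err_nonlinear:.2e}")
```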

Where Pith is reading between the lines

- The same decoding-through-parameters step could be paired with other nonlinear reduction techniques for mixed linear-nonlinear design spaces.

- This structure may allow direct incorporation of NLPME reduced spaces into existing parametric CAD workflows without additional validity checks.

- The efficiency observed on the glider suggests similar gains are possible in other high-dimensional parametric domains such as aircraft or automotive shapes.

Load-bearing premise

Replacing the linear reduced subspace with a nonlinear latent representation decoded through admissible design parameters preserves both geometric fidelity and engineering usability without introducing inadmissible shapes.

What would settle it

If NLPME on the 32-parameter glider produces reconstruction errors above 5 percent with 5 latent variables or generates shapes outside the admissible parameter space, the claimed efficiency and admissibility gains would not hold.
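The two-part criterion above could be checked with a few lines of code. The sketch below makes several assumptions. The function name `claim_survives` is hypothetical. The error is summarized as the mean relative error over held-out shapes, which is not the paper's stated metric. Admissibility is reduced to per-parameter box bounds. The numbers in the usage lines are made up.

```python
import numpy as np

def claim_survives(rel_errors, decoded_params, lo, hi, threshold=0.05):
    """Hypothetical check of both criteria for an N = 5 NLPME model.

    rel_errors     -- per-shape relative reconstruction errors (0.05 means 5%)
    decoded_params -- (num_shapes, 32) array of decoded design parameters
    lo, hi         -- (32,) arrays of admissible lower/upper parameter bounds
    """
    # Assumption: the error is summarized by its mean over held-out shapes.
    error_ok = float(np.mean(rel_errors)) <= threshold
    # Admissibility: every decoded parameter of every shape lies inside its bounds.
    P = np.asarray(decoded_params)
    admissible_ok = bool(np.all((P >= lo) & (P <= hi)))
    return error_ok and admissible_ok

lo, hi = np.zeros(32), np.ones(32)        # normalized bounds (hypothetical)
P = np.full((3, 32), 0.5)                 # three decoded shapes, all mid-range
print(claim_survives([0.03, 0.04, 0.05], P, lo, hi))  # True
P[1, 7] = 1.2                             # one parameter leaves the admissible box
print(claim_survives([0.03, 0.04, 0.05], P, lo, hi))  # False
```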

Original abstract

Dimensionality reduction is essential in simulation-based shape design, where high-dimensional parameterizations hinder optimization, surrogate modeling, and systematic design-space exploration. Parametric Model Embedding (PME) addresses this issue by constructing reduced variables from geometric information while preserving an explicit backmapping to the original design parameters. However, PME is intrinsically linear and may become inefficient when the sampled design space is governed by nonlinear geometric variability. This paper introduces a nonlinear extension of PME, denoted NLPME. The proposed framework preserves the defining principle of PME (geometry-driven latent variables and parameter-mediated reconstruction) while replacing the linear reduced subspace with a nonlinear latent representation. Geometry is not reconstructed directly from the latent variables; instead, the latent representation is decoded into admissible design parameters, and the corresponding geometry is recovered through a forward parametric map. The method is assessed on a bio-inspired autonomous underwater glider with a 32-dimensional parametric shape description and a CAD-based geometry-generation process. NLPME reaches a 5% reconstruction-error threshold with \(N=5\) latent variables, compared with \(N=8\) for linear PME, and a 1% threshold with \(N=9\), compared with \(N=15\) for PME. Comparison with a deep autoencoder shows that most of the nonlinear compression gain can be retained while preserving an explicit backmapping to the original design variables. The results establish NLPME as a compact, admissible, and engineering-compatible nonlinear reduced representation for parametric shape design spaces.
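The threshold comparisons above report, for each method, the smallest latent dimension \(N\) at which the reconstruction error falls below 5% or 1%. For a purely linear baseline, that count can be read directly off the singular-value spectrum. The sketch below rests on several assumptions. It uses plain PCA/POD on synthetic data, not PME, which additionally carries the design-parameter backmapping. Its error metric is an energy-based relative RMS error, which may differ from the paper's metric. The function names are hypothetical.

```python
import numpy as np

def linear_truncation_errors(X):
    """Relative RMS error of rank-N linear (PCA/POD) reconstruction for N = 0, 1, ..., r."""
    Xc = X - X.mean(axis=0)                           # center the samples
    s2 = np.linalg.svd(Xc, compute_uv=False) ** 2     # energy per mode, descending
    tail = np.append(np.cumsum(s2[::-1])[::-1], 0.0)  # tail[N] = energy in modes N, N+1, ...
    return np.sqrt(tail / s2.sum())

def smallest_latent_dim(errors, threshold):
    """Smallest N with errors[N] <= threshold, or None if the threshold is never reached."""
    for n, e in enumerate(errors):
        if e <= threshold:
            return n
    return None

rng = np.random.default_rng(1)
# Hypothetical data: 200 samples of a 40-dimensional field with a decaying spectrum.
X = rng.normal(size=(200, 40)) * (0.6 ** np.arange(40))
errs = linear_truncation_errors(X)
print("N for 5%:", smallest_latent_dim(errs, 0.05), " N for 1%:", smallest_latent_dim(errs, 0.01))
```

A nonlinear method has no closed-form curve like this. Its error must be measured by training one model per candidate \(N\). The same `smallest_latent_dim` lookup then applies to the measured curve.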

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes NLPME, a nonlinear extension of Parametric Model Embedding (PME) for dimensionality reduction in parametric shape design. It preserves PME's geometry-driven latent variables and explicit backmapping by decoding the nonlinear latent representation to admissible design parameters, from which geometry is recovered via the forward parametric map. On a 32-dimensional bio-inspired autonomous underwater glider with CAD-based geometry generation, NLPME achieves a 5% reconstruction-error threshold with N=5 latent variables (vs. N=8 for linear PME) and a 1% threshold with N=9 (vs. N=15 for PME), while retaining most of the compression gain of a deep autoencoder but with explicit parameter mediation.

Significance. If the central claims hold, NLPME would provide a useful, engineering-compatible nonlinear reduced-order representation for simulation-based shape design, balancing improved compression over linear PME with retention of admissibility and interpretability through parameter-mediated reconstruction. The concrete error thresholds on a realistic 32-parameter CAD test case, together with the explicit backmapping to the design parameters, would support applications in optimization and surrogate modeling.

major comments (2)

- Abstract: The performance claims (5% error at N=5, 1% at N=9) and the retention of engineering usability rest on the decoder always mapping to admissible design parameters. No description is given of regularization, projection, bound enforcement, or post-processing that would guarantee this for arbitrary out-of-sample latent coordinates, which is load-bearing for the admissibility guarantee and the distinction from unconstrained autoencoders.

- Abstract: The reported reconstruction thresholds and latent-variable counts are presented without details on the nonlinear architecture (depth, activations, loss), training procedure, validation strategy, or how error quantification was performed, preventing verification that the gains are robust and not artifacts of post-hoc choices or overfitting to the training set.
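The first major comment asks how decoded parameters are kept admissible for arbitrary latent inputs. The sketch below contrasts two common bound-enforcement options on a far out-of-sample latent point. The first is a sigmoid output activation rescaled to the bounds, which is the option the paper passage quoted in the Lean-theorem section describes. The second is clipping, used as post-processing. The decoder weights, sizes, and bounds are hypothetical, and admissibility is assumed to mean per-parameter box bounds.

```python
import numpy as np

def to_box_sigmoid(raw, lo, hi):
    """Map unconstrained decoder outputs into [lo, hi] with a logistic sigmoid.

    Written via tanh to avoid exp overflow; very large |raw| saturates to exactly lo or hi.
    """
    return lo + (hi - lo) * 0.5 * (1.0 + np.tanh(0.5 * raw))

def to_box_clip(raw, lo, hi):
    """Post-processing alternative: Euclidean projection of raw outputs onto the box."""
    return np.clip(raw, lo, hi)

rng = np.random.default_rng(0)
K, N = 32, 5
W, b = rng.normal(size=(K, N)), rng.normal(size=K)  # hypothetical decoder pre-activation
lo, hi = np.zeros(K), np.ones(K)                     # normalized admissible range
z_far = 100.0 * np.ones(N)                           # far out-of-sample latent point
raw = W @ z_far + b

def inside(u):
    return bool(np.all((u >= lo) & (u <= hi)))

print(inside(raw))  # unconstrained output: no guarantee of lying inside the box
print(inside(to_box_sigmoid(raw, lo, hi)), inside(to_box_clip(raw, lo, hi)))  # True True
```

The sigmoid keeps the decoder smooth for gradient training but reaches the bounds only asymptotically. Clipping is simpler, but it collapses many latent points onto the same boundary values and passes zero gradient outside the box. Both guarantee admissibility only with respect to box bounds. Coupled constraints between parameters, or failures in CAD generation, would need separate checks.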

minor comments (1)

- Abstract: The comparison to deep autoencoders is stated qualitatively ('most of the nonlinear compression gain'); a quantitative table or plot of reconstruction error vs. latent dimension for all three methods would strengthen the claim.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review of our manuscript. We address each major comment below and indicate the revisions we will make to improve clarity and support for the central claims.

Point-by-point responses

- Referee: Abstract: The performance claims (5% error at N=5, 1% at N=9) and the retention of engineering usability rest on the decoder always mapping to admissible design parameters. No description is given of regularization, projection, bound enforcement, or post-processing that would guarantee this for arbitrary out-of-sample latent coordinates, which is load-bearing for the admissibility guarantee and the distinction from unconstrained autoencoders.

Authors: We appreciate the referee highlighting this point, which is essential for the admissibility claim. The NLPME approach decodes latent variables to design parameters before applying the forward parametric map, thereby inheriting admissibility from the parameter domain. However, the abstract indeed provides no description of mechanisms such as output activations, regularization, or post-processing to enforce bounds on out-of-sample points. We will revise the manuscript to add these details in the Methods section (including any bound-handling steps) and briefly reference them from the abstract to fully substantiate the performance claims and the distinction from unconstrained autoencoders. revision: yes

- Referee: Abstract: The reported reconstruction thresholds and latent-variable counts are presented without details on the nonlinear architecture (depth, activations, loss), training procedure, validation strategy, or how error quantification was performed, preventing verification that the gains are robust and not artifacts of post-hoc choices or overfitting to the training set.

Authors: We agree that the abstract, owing to length constraints, omits these implementation specifics, which are necessary for independent verification. The full manuscript presents the nonlinear architecture, loss formulation, training procedure, validation split, and error metric in the Methods section. To address the concern directly, we will revise the abstract to include a concise summary of the architecture type, training and validation strategy, and error quantification approach, enabling readers to assess robustness without first consulting the full text. revision: yes

Circularity Check

No circularity detected in NLPME derivation or performance claims

full rationale

The paper defines NLPME by extending linear PME through a nonlinear latent representation that is decoded to admissible design parameters before applying the forward parametric map. Reconstruction-error thresholds (5% at N=5, 1% at N=9) and the comparison to deep autoencoders are presented as direct empirical measurements on the 32-dimensional glider dataset, not as quantities forced by the paper's own equations or by fitting a parameter that is then renamed as a prediction. No load-bearing step relies on a self-citation chain, uniqueness theorem imported from the authors' prior work, or an ansatz smuggled via citation; the geometry-driven and admissible character is asserted as the preserved defining principle of the framework rather than derived from the inputs by construction. The derivation chain therefore remains independent of the reported results.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel

Tag: unclear (relation between the paper passage and the cited Recognition theorem).

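The nonlinear latent representation is defined as \(z \in \mathbb{R}^N\) … \(z = E_\phi(d)\), \(\hat{u} = D_\theta(z)\), \(\hat{d} = S_{\psi^*}(\hat{u})\) … training loss \(\mathcal{L}_{\mathrm{geom}} = \frac{1}{S}\sum_{i=1}^{S} \lVert d^{(i)} - \hat{d}^{(i)} \rVert^2\) … decoder output constrained through a sigmoid activation so as to remain within the normalized admissible range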

- IndisputableMonolith/Foundation/RealityFromDistinction.lean: reality_from_one_distinction

Tag: unclear (relation between the paper passage and the cited Recognition theorem).

NLPME reaches a 5% reconstruction-error threshold with N=5 latent variables … preserving an explicit backmapping to the original design variables

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.
- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.
- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.
- uses: The paper appears to rely on the theorem as machinery.
- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.
- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

- [1] Richard E Bellman et al. Dynamic programming. Cambridge Studies in Speech Science and Communication. Princeton University Press, Princeton, 1957.
- [2] Andrea Serani and Matteo Diez. A survey on design-space dimensionality reduction methods for shape optimization. Archives of Computational Methods in Engineering, pages 1–28, 2025.
- [3] Andrea Manzoni, Alfio Quarteroni, and Sandro Salsa. Shape optimization problems. In Optimal Control of Partial Differential Equations: Analysis, Approximation, and Applications, pages 373–421. Springer, 2022.
- [4] David JJ Toal, Neil W Bressloff, Andy J Keane, and Carren ME Holden. Geometric filtration using proper orthogonal decomposition for aerodynamic design optimization. AIAA Journal, 48(5):916–928, 2010.
- [5] Lizhang Zhang, Dong Mi, Cheng Yan, and Fangming Tang. Multidisciplinary design optimization for a centrifugal compressor based on proper orthogonal decomposition and an adaptive sampling method. Applied Sciences, 8(12):2608, 2018.
- [6] Hanru Liu, Lei Zhu, Jiahui Li, Yan Ma, and Pengfei Ren. Aerodynamic and aeroacoustic optimization of UAV rotor based on proper orthogonal decomposition method. Journal of Aerospace Engineering, 37(4):04024037, 2024.
- [7] Matteo Diez, Emilio F Campana, and Frederick Stern. Design-space dimensionality reduction in shape optimization by Karhunen–Loève expansion. Computer Methods in Applied Mechanics and Engineering, 283:1525–1544, 2015.
- [8] Haichao Chang, Chengjun Wang, Zuyuan Liu, Baiwei Feng, Chengsheng Zhan, and Xide Cheng. Research on the Karhunen–Loève transform method and its application to hull form optimization. Journal of Marine Science and Engineering, 11(1):230, 2023.
- [9] Kazuo Yonekura and Osamu Watanabe. A shape parameterization method using principal component analysis in applications to parametric shape optimization. Journal of Mechanical Design, 136(12):121401, 2014.
- [10] Danny D’Agostino, Andrea Serani, and Matteo Diez. Design-space assessment and dimensionality reduction: An off-line method for shape reparameterization in simulation-based optimization. Ocean Engineering, 197:106852, 2020.
- [11] Stefan Harries and Sebastian Uharek. Application of radial basis functions for partially-parametric modeling and principal component analysis for faster hydrodynamic optimization of a catamaran. Journal of Marine Science and Engineering, 9(10):1069, 2021.
- [12] Danny D’Agostino, Andrea Serani, Emilio F Campana, and Matteo Diez. Nonlinear methods for design-space dimensionality reduction in shape optimization. In International Workshop on Machine Learning, Optimization, and Big Data, pages 121–132. Springer, 2017.
- [13] Gabriele Boncoraglio and Charbel Farhat. Active manifold and model reduction for multidisciplinary analysis and optimization. In AIAA Scitech 2021 Forum, page 1694, 2021.
- [14] Zhiqiang Liu, Decheng Wan, Xiaosong Zhang, Jingpu Chen, Shan Wang, and Yanxia Wang. Design space nonlinear dimensionality reduction based on deep neural networks in ship hull form optimization. Ocean Engineering, 348:124112, 2026.
- [15] Gengyao Yan, Jun Tao, and Guanghui Wu. Aerodynamic optimization via niche-multiobjective particle swarm optimization algorithm and manifold learning. AIAA Journal, pages 1–15, 2026.
- [16] Yoshua Bengio, Aaron Courville, and Pascal Vincent. Representation learning: A review and new perspectives. IEEE Transactions on Pattern Analysis and Machine Intelligence, 35(8):1798–1828, 2013.
- [17] David Gaudrie, Rodolphe Le Riche, Victor Picheny, Benoit Enaux, and Vincent Herbert. Modeling and optimization with Gaussian processes in reduced eigenbases. Structural and Multidisciplinary Optimization, 61(6):2343–2361, 2020.
- [18] Andrea Serani and Matteo Diez. Parametric model embedding. Computer Methods in Applied Mechanics and Engineering, 404:115776, 2023.
- [19] Andrea Serani, Matteo Diez, and Domenico Quagliarella. Aerodynamic shape optimization in transonic conditions through parametric model embedding. Aerospace Science and Technology, 155:109611, 2024.
- [20] Andrea Serani, Giorgio Palma, Jeroen Wackers, Domenico Quagliarella, Stefano Gaggero, and Matteo Diez. Extending parametric model embedding with physical information for design-space dimensionality reduction in shape optimization. Engineering with Computers, pages 1–21, 2025.
- [21] Emma Hart, Julianne Chung, and Matthias Chung. A paired autoencoder framework for inverse problems via Bayes risk minimization. SIAM Journal on Scientific Computing, 48(2):C385–C414, 2026.
- [22] Sunwoong Yang, Sanga Lee, and Kwanjung Yee. Inverse design optimization framework via a two-step deep learning approach: application to a wind turbine airfoil. Engineering with Computers, 39(3):2239–2255, 2023.
- [23] Andrea Serani, Giorgio Palma, Jeroen Wackers, and Matteo Diez. A machine learning enabled MDO for bio-inspired autonomous underwater gliders. arXiv preprint arXiv:2602.08508, 2026.
- [24] Andrea Serani, Giorgio Palma, Jeroen Wackers, and Matteo Diez. Preliminary design optimization for internal arrangement and hull geometry of a bio-inspired autonomous underwater glider through machine learning. In MARINE 2025, 2025.
- [25] Andrea Serani and Giorgio Palma. Design-space dimensionality reduction benchmark dataset – bio-inspired underwater glider, February 2026.
