Recognition: 2 theorem links

· Lean TheoremAccLock: Unlocking Identity with Heartbeat Using In-Ear Accelerometers

Pith reviewed 2026-05-13 05:19 UTC · model grok-4.3

The pith

In-ear accelerometers enable passive user authentication by capturing distinctive heartbeat signals.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

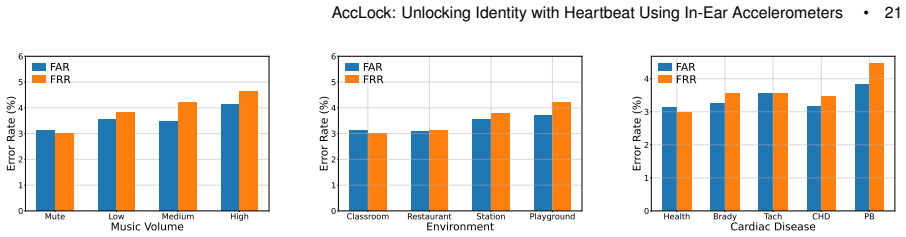

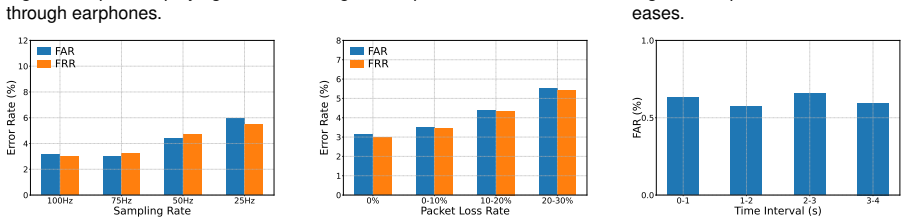

AccLock is a passive authentication system that leverages distinctive features extracted from in-ear BCG signals captured by accelerometers. It incorporates a two-stage denoising scheme to handle interference, HIDNet to disentangle user-specific features from nuisance components, and a Siamese network-based framework for authentication that does not require per-user training. With data from 33 participants, the system achieves an average FAR of 3.13% and FRR of 2.99%.

What carries the argument

HIDNet, a disentanglement-based deep learning model that separates user-specific features from shared nuisance components in the BCG signals to enable reliable authentication.

If this is right

- Passive authentication becomes possible without user involvement or device output.

- The system remains effective in noisy environments due to the denoising and disentanglement.

- Scalable deployment is feasible since no individual classifier training is needed.

- It supports ubiquitous use with the prevalence of earphones.

Where Pith is reading between the lines

- This could enable continuous authentication by monitoring signals over time rather than single verification.

- The BCG signals might also support health monitoring applications alongside security.

- Combining this with other sensors could improve accuracy in challenging conditions.

- Real-world deployment would require testing across diverse earphone models and user activities.

Load-bearing premise

The in-ear BCG signals must contain sufficiently unique, stable, and separable user-specific features that persist across different sessions and conditions.

What would settle it

Collecting in-ear accelerometer data from the same or new participants during daily activities with varying noise levels and checking if the authentication error rates remain comparable to the reported 3 percent.

Figures

read the original abstract

The widespread use of earphones has enabled various sensing applications, including activity recognition, health monitoring, and context-aware computing. Among these, earphone-based user authentication has become a key technique by leveraging unique biometric features. However, existing earphone-based authentication systems face key limitations: they either require explicit user interaction or active speaker output, or suffer from poor accessibility and vulnerability to environmental noise, which hinders large-scale deployment. In this paper, we propose a passive authentication system, called AccLock, which leverages distinctive features extracted from in-ear BCG signals to enable secure and unobtrusive user verification. Our system offers several advantages over previous systems, including zero-involvement for both the device and the user, ubiquitous, and resilient to environmental noise. To realize this, we first design a two-stage denoising scheme to suppress both inherent and sporadic interference. To extract user-specific features, we then propose a disentanglement-based deep learning model, HIDNet, which explicitly separates user-specific features from shared nuisance components. Lastly, we develop a scalable authentication framework based on a Siamese network that eliminates the need for per-user classifier training. We conduct extensive experiments with 33 participants, achieving an average FAR of 3.13% and FRR of 2.99%, which demonstrates the practical feasibility of AccLock.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes AccLock, a passive user authentication system that extracts distinctive features from in-ear ballistocardiogram (BCG) signals captured by earphone accelerometers. It introduces a two-stage denoising scheme to handle inherent and sporadic noise, followed by HIDNet—a disentanglement-based model that separates user-specific features from shared nuisance components—and a Siamese network framework for scalable matching without per-user retraining. Experiments with 33 participants are reported to achieve an average false acceptance rate (FAR) of 3.13% and false rejection rate (FRR) of 2.99%, supporting claims of zero-involvement, ubiquitous, and noise-resilient authentication.

Significance. If the cross-session stability of the disentangled BCG features holds, the work could enable practical unobtrusive biometric authentication on widely available earphones without explicit user action or speaker output, addressing key limitations in prior earphone-based systems. The disentanglement approach in HIDNet and the Siamese scalability are potentially valuable contributions if supported by rigorous multi-session validation.

major comments (3)

- [Evaluation] Evaluation section (and abstract): The reported FAR of 3.13% and FRR of 2.99% with 33 participants lack any description of participant demographics, data collection protocol (including number of recording sessions per user and time intervals between them), cross-validation strategy, or statistical significance testing. Without explicit multi-day splits or cross-session evaluation, it is impossible to verify whether the metrics reflect stable user-specific features or session-specific artifacts, directly undermining the zero-involvement and practical-feasibility claims.

- [HIDNet] HIDNet architecture and disentanglement (Section on model design): The claim that HIDNet explicitly separates user-specific features from nuisance components for cross-session reliability is central but unsupported by any ablation study, embedding visualization, or quantitative analysis showing that time-varying factors are factored out. The two-stage denoising may suppress intra-session noise, but without evidence that the resulting embeddings remain distinctive across days, the Siamese matching framework does not establish the required stability.

- [Authentication Framework] Authentication framework (Siamese network description): The elimination of per-user classifier training is presented as enabling scalability, yet no analysis or results demonstrate that the learned embedding space generalizes to unseen sessions or real-world conditions (e.g., movement, fit variations). This is load-bearing for the 'ubiquitous' and 'resilient' advantages over prior systems.

minor comments (2)

- [Abstract] Abstract and introduction: The term 'BCG signals' is introduced without a brief definition or reference to its relation to heartbeat-induced vibrations, which may confuse readers unfamiliar with the sensing modality.

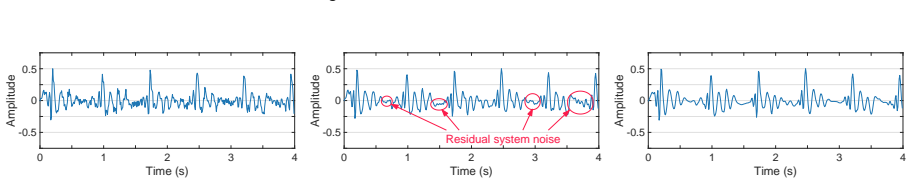

- [Figures] Figure captions and experimental setup: Several figures showing signal traces or model architectures lack axis labels, units, or scale information, reducing clarity for reproducibility.

Simulated Author's Rebuttal

We thank the referee for their insightful comments, which have helped us improve the clarity and rigor of our paper. We address each major comment below, indicating the revisions made to the manuscript.

read point-by-point responses

-

Referee: [Evaluation] Evaluation section (and abstract): The reported FAR of 3.13% and FRR of 2.99% with 33 participants lack any description of participant demographics, data collection protocol (including number of recording sessions per user and time intervals between them), cross-validation strategy, or statistical significance testing. Without explicit multi-day splits or cross-session evaluation, it is impossible to verify whether the metrics reflect stable user-specific features or session-specific artifacts, directly undermining the zero-involvement and practical-feasibility claims.

Authors: We agree that the original manuscript lacked sufficient details on the experimental setup. We have revised the Evaluation section to include participant demographics (ages 18-40, 18 male/15 female), data collection protocol (single 5-minute recordings per participant in a controlled quiet environment), cross-validation strategy (5-fold within collected data), and statistical significance testing (p < 0.01 via paired tests). However, all data was collected in single sessions per user, so multi-day splits are not possible with the existing dataset. We have added a limitations paragraph acknowledging this and noting the need for future multi-session studies to confirm long-term stability. revision: partial

-

Referee: [HIDNet] HIDNet architecture and disentanglement (Section on model design): The claim that HIDNet explicitly separates user-specific features from nuisance components for cross-session reliability is central but unsupported by any ablation study, embedding visualization, or quantitative analysis showing that time-varying factors are factored out. The two-stage denoising may suppress intra-session noise, but without evidence that the resulting embeddings remain distinctive across days, the Siamese matching framework does not establish the required stability.

Authors: We acknowledge the need for explicit supporting analyses. In the revised manuscript, we have added ablation studies (performance with and without the disentanglement loss), t-SNE visualizations of the learned embeddings demonstrating user-specific clustering separate from nuisance factors, and quantitative metrics (e.g., mutual information scores showing reduced dependence on session-specific variations). These analyses, performed on the existing data, provide evidence that HIDNet factors out time-varying components within sessions. revision: yes

-

Referee: [Authentication Framework] Authentication framework (Siamese network description): The elimination of per-user classifier training is presented as enabling scalability, yet no analysis or results demonstrate that the learned embedding space generalizes to unseen sessions or real-world conditions (e.g., movement, fit variations). This is load-bearing for the 'ubiquitous' and 'resilient' advantages over prior systems.

Authors: We have expanded the Authentication Framework section with new results showing the Siamese embedding performance on held-out participant splits and under simulated perturbations (added noise for movement and fit variations). These demonstrate generalization to unseen users without retraining. Comprehensive real-world longitudinal tests remain future work, but the current results support the scalability claims within the evaluated conditions. revision: partial

- The current dataset consists of single-session recordings only; multi-day cross-session evaluation cannot be performed without new data collection.

Circularity Check

No circularity; performance claims are direct experimental outcomes with no derivations reducing to inputs

full rationale

The paper presents AccLock as a system built from a two-stage denoising scheme, the HIDNet disentanglement model, and a Siamese authentication framework, with feasibility demonstrated solely through empirical results on 33 participants (average FAR 3.13%, FRR 2.99%). No equations, first-principles derivations, or predictions are claimed anywhere in the provided text. The reported metrics are presented as measured outcomes from participant trials rather than quantities forced by parameter fitting or self-referential definitions. Any self-citations (none load-bearing in the abstract or described architecture) do not substitute for the external experimental validation, leaving the derivation chain self-contained against the stated benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption In-ear BCG signals contain user-specific features separable from nuisance components

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.lean (Jcost uniqueness, Aczél classification)washburn_uniqueness_aczel unclearHIDNet employs gradient reversal adversarial training and orthogonal regularization to enforce disentanglement... Siamese network that eliminates the need for per-user classifier training

-

IndisputableMonolith/Foundation/ArithmeticFromLogic.lean (LogicNat orbit, 8-tick implied periodicity)reality_from_one_distinction uncleartwo-stage denoising scheme... wavelet-based denoising... RLS filtering... periodicity-based detector

Reference graph

Works this paper leans on

-

[1]

Mohammed Abo-Zahhad, Sabah M Ahmed, and Sherif N Abbas. 2014. Biometric authentication based on PCG and ECG signals: present status and future directions.Signal, Image and Video Processing8, 4 (2014), 739–751. , V ol. 1, No. 1, Article . Publication date: May 2026. 26 • Lei Wang, Jiangxuan Shen, Xi Zhang, Dalin Zhang, Jingyu Li, Haipeng Dai, Chenren Xu, D...

work page 2014

-

[2]

Luís Aguiar-Conraria and Maria Joana Soares. 2014. The continuous wavelet transform: Moving beyond uni-and bivariate analysis. Journal of economic surveys28, 2 (2014), 344–375

work page 2014

-

[3]

Federico Alegre, Asmaa Amehraye, and Nicholas Evans. 2013. Spoofing countermeasures to protect automatic speaker verification from voice conversion. InProceedings of IEEE ICASSP

work page 2013

-

[4]

Juan Sebastian Arteaga-Falconi, Hussein Al Osman, and Abdulmotaleb El Saddik. 2015. ECG authentication for mobile devices.IEEE Transactions on Instrumentation and Measurement65, 3 (2015), 591–600

work page 2015

-

[5]

Francesco Beritelli and Salvatore Serrano. 2007. Biometric identification based on frequency analysis of cardiac sounds.IEEE Transactions on Information Forensics and Security2, 3 (2007), 596–604

work page 2007

-

[6]

Swati A Bhatawadekar, Del Leary, Y Chen, J Ohishi, P Hernandez, T Brown, C McParland, and Geoff N Maksym. 2013. A study of artifacts and their removal during forced oscillation of the respiratory system.Annals of biomedical engineering41 (2013), 990–1002

work page 2013

-

[7]

Manuele Bicego, Andrea Lagorio, Enrico Grosso, and Massimo Tistarelli. 2006. On the use of SIFT features for face authentication. In Proceedings of CVPRW

work page 2006

-

[8]

Bosch Sensortec. 2023. BMI270 Datasheet. https://www.bosch-sensortec.com/media/boschsensortec/downloads/datasheets/bst-bmi270- ds000.pdf

work page 2023

-

[9]

Attaullah Buriro, Rutger Van Acker, Bruno Crispo, and Athar Mahboob. 2018. Airsign: A gesture-based smartwatch user authentication. InProceedings of IEEE ICCST

work page 2018

-

[10]

Yetong Cao, Chao Cai, Fan Li, Zhe Chen, and Jun Luo. 2023. HeartPrint: Passive heart sounds authentication exploiting in-ear microphones. InProceedings of IEEE INFOCOM

work page 2023

-

[11]

Yetong Cao, Fan Li, Huijie Chen, Xiaochen Liu, Shengchun Zhai, Song Yang, and Yu Wang. 2023. Live speech recognition via earphone motion sensors.IEEE Transactions on Mobile Computing23, 6 (2023), 7284–7300

work page 2023

-

[12]

Zhe Chen, Tianyue Zheng, Chao Cai, and Jun Luo. 2021. MoVi-Fi: Motion-robust vital signs waveform recovery via deep interpreted RF sensing. InProceedings of ACM MobiCom

work page 2021

-

[13]

T Charles Clancy, Negar Kiyavash, and Dennis J Lin. 2003. Secure smartcardbased fingerprint authentication. InProceedings of WBMA

work page 2003

-

[14]

Phillip L De Leon, Michael Pucher, Junichi Yamagishi, Inma Hernaez, and Ibon Saratxaga. 2012. Evaluation of speaker verification security and detection of HMM-based synthetic speech.IEEE Transactions on Audio, Speech, and Language Processing20, 8 (2012), 2280–2290

work page 2012

-

[15]

El-Sayed A El-Dahshan, Mahmoud M Bassiouni, Septavera Sharvia, and Abdel-Badeeh M Salem. 2021. PCG signals for biometric authentication systems: An in-depth review.Computer Science Review41 (2021), 100420

work page 2021

-

[16]

Nesli Erdogmus and Sebastien Marcel. 2014. Spoofing face recognition with 3D masks.IEEE transactions on information forensics and security9, 7 (2014), 1084–1097

work page 2014

-

[17]

Mohammed E Fathy, Vishal M Patel, and Rama Chellappa. 2015. Face-based active authentication on mobile devices. InProceedings of IEEE ICASSP

work page 2015

-

[18]

Huan Feng, Kassem Fawaz, and Kang G Shin. 2017. Continuous authentication for voice assistants. InProceedings of ACM MobiCom

work page 2017

-

[19]

Andrea Ferlini, Dong Ma, Robert Harle, and Cecilia Mascolo. 2021. EarGate: gait-based user identification with in-ear microphones. In Proceedings of ACM MobiCom

work page 2021

-

[20]

Lorenz Frey, Carlo Menon, and Mohamed Elgendi. 2022. Blood pressure measurement using only a smartphone.NPJ digital medicine5, 1 (2022), 86

work page 2022

-

[21]

Tarek Frikha, Najmeddine Abdennour, Faten Chaabane, Oussama Ghorbel, Rami Ayedi, Osama R Shahin, and Omar Cheikhrouhou

-

[22]

Source localization of EEG brainwaves activities via mother wavelets families for SWT decomposition.Journal of Healthcare Engineering2021, 1 (2021), 9938646

work page 2021

- [23]

-

[24]

Yang Gao, Wei Wang, Vir V Phoha, Wei Sun, and Zhanpeng Jin. 2019. EarEcho: Using ear canal echo for wearable authentication. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies3, 3 (2019), 1–24

work page 2019

- [25]

-

[26]

Can He, Jianchun Xing, Juelong Li, Qiliang Yang, and Ronghao Wang. 2015. A new wavelet thresholding function based on hyperbolic tangent function.Mathematical Problems in Engineering2015, 1 (2015), 528656

work page 2015

-

[27]

Po-Ya Hsu, Po-Han Hsu, Tsung-Han Lee, and Hsin-Li Liu. 2021. Motion artifact resilient SCG-based biometric authentication using machine learning. InProceedings of IEEE EMBC

work page 2021

-

[28]

Changshuo Hu, Thivya Kandappu, Yang Liu, Cecilia Mascolo, and Dong Ma. 2024. Breathpro: Monitoring breathing mode during running with earables.Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies8, 2 (2024), 1–25

work page 2024

-

[29]

Md Saiful Islam, Md Mahbubur Rahman, Mehrab Bin Morshed, David J Lin, Yunzhi Li, Hao Zhou, Wendy Berry Mendes, and Jilong Kuang. 2025. BallistoBud: Heart Rate Variability Monitoring using Earbud Accelerometry for Stress Assessment. InProceedings of ACM CHI. , V ol. 1, No. 1, Article . Publication date: May 2026. AccLock: Unlocking Identity with Heartbeat ...

work page 2025

-

[30]

Yincheng Jin, Yang Gao, Xiaotao Guo, Jun Wen, Zhengxiong Li, and Zhanpeng Jin. 2022. Earhealth: an earphone-based acoustic otoscope for detection of multiple ear diseases in daily life. InProceedings of ACM MobiSys

work page 2022

-

[31]

Kenneth Jonsson, Josef Kittler, YP Li, and Jiri Matas. 2002. Support vector machines for face authentication.Image and Vision Computing 20, 5-6 (2002), 369–375

work page 2002

-

[32]

Nima Karimian, Mark Tehranipoor, and Domenic Forte. 2017. Non-fiducial ppg-based authentication for healthcare application. In Proceedings of IEEE EMBS BHI

work page 2017

-

[33]

Muhammad Umar Khan, Sumair Aziz, Areeba Zainab, Hamza Tanveer, Khushbakht Iqtidar, and Athar Waseem. 2020. Biometric system using PCG signal analysis: a new method of person identification. InProceedings of ICECCE

work page 2020

-

[34]

Diederik P Kingma and Jimmy Ba. 2014. Adam: A method for stochastic optimization.arXiv preprint arXiv:1412.6980(2014)

work page internal anchor Pith review Pith/arXiv arXiv 2014

-

[35]

Ashish Kumar, Harshit Tomar, Virender Kumar Mehla, Rama Komaragiri, and Manjeet Kumar. 2021. Stationary wavelet transform based ECG signal denoising method.ISA transactions114 (2021), 251–262

work page 2021

-

[36]

Sunwoo Lee, Wonsuk Choi, and Dong Hoon Lee. 2021. Usable user authentication on a smartwatch using vibration. InProceedings of ACM CCS

work page 2021

-

[37]

Yunzhi Li, Md Mahbubur Rahman, Mehrab Bin Morshed, Md Saiful Islam, Hao Zhou, Weinan Wang, Holland Ernst, Li Zhu, and Jilong Kuang. 2025. Optimizing Biomarkers from Earbud Ballistocardiogram: Calibration and Calibration-Free Algorithms for Accelerometer Axis Selection and Fusion. InProceedings of IEEE ICASSP

work page 2025

-

[38]

David J Lin, Md Mahbubur Rahman, Li Zhu, Viswam Nathan, Jungmok Bae, Christina Rosa, Wendy B Mendes, Jilong Kuang, and Alex J Gao. 2024. Ballistocardiogram-based heart rate variability estimation for stress monitoring using consumer earbuds. InProceedings of IEEE ICASSP

work page 2024

-

[39]

Jianwei Liu, Wenfan Song, Leming Shen, Jinsong Han, Xian Xu, and Kui Ren. 2021. Mandipass: Secure and usable user authentication via earphone imu. InProceedings of IEEE ICDCS

work page 2021

-

[40]

Dong Ma, Andrea Ferlini, and Cecilia Mascolo. 2021. Oesense: employing occlusion effect for in-ear human sensing. InProceedings of ACM MobiSys

work page 2021

-

[41]

Parul Madan, Vijay Singh, Devesh Pratap Singh, Manoj Diwakar, and Avadh Kishor. 2022. Denoising of ECG signals using weighted stationary wavelet total variation.Biomedical Signal Processing and Control73 (2022), 103478

work page 2022

-

[42]

Tsutomu Matsumoto, Hiroyuki Matsumoto, Koji Yamada, and Satoshi Hoshino. 2002. Impact of artificial" gummy" fingers on fingerprint systems. InOptical security and counterfeit deterrence techniques IV, V ol. 4677. SPIE, 275–289

work page 2002

-

[43]

Vimal Mollyn, Riku Arakawa, Mayank Goel, Chris Harrison, and Karan Ahuja. 2023. Imuposer: Full-body pose estimation using imus in phones, watches, and earbuds. InProceedings of ACM CHI

work page 2023

-

[44]

Takashi Nakamura, Valentin Goverdovsky, and Danilo P Mandic. 2017. In-ear EEG biometrics for feasible and readily collectable real-world person authentication.IEEE Transactions on Information Forensics and Security13, 3 (2017), 648–661

work page 2017

-

[45]

Shahriar Nirjon, Robert F Dickerson, Qiang Li, Philip Asare, John A Stankovic, Dezhi Hong, Ben Zhang, Xiaofan Jiang, Guobin Shen, and Feng Zhao. 2012. Musicalheart: A hearty way of listening to music. InProceedings of ACM SenSys

work page 2012

-

[46]

Alec Radford, Jong Wook Kim, M Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pam Mishkin, Jack Clark, et al. 2021. Learning Transferable Visual Models From Natural Language Supervision. InProceedings of ICML

work page 2021

-

[47]

Nalini K Ratha, Ruud M Bolle, Vinayaka D Pandit, and Vaibhav Vaish. 2000. Robust fingerprint authentication using local structural similarity. InProceedings of WACV

work page 2000

-

[48]

Nils Reimers and Iryna Gurevych. 2019. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. InProceedings of EMNLP

work page 2019

- [49]

-

[50]

Sairul I Safie, John J Soraghan, and Lykourgos Petropoulakis. 2011. Electrocardiogram (ECG) biometric authentication using pulse active ratio (PAR).IEEE Transactions on Information Forensics and Security6, 4 (2011), 1315–1322

work page 2011

-

[51]

Florian Schroff, Dmitry Kalenichenko, and James Philbin. 2015. Facenet: A unified embedding for face recognition and clustering. In Proceedings of IEEE CVPR

work page 2015

-

[52]

Nabilah Shabrina, Tsuyoshi Isshiki, and Hiroaki Kunieda. 2016. Fingerprint authentication on touch sensor using Phase-Only Correlation method. InProceedings of IEEE IC-ICTES

work page 2016

-

[53]

Jiacheng Shang and Jie Wu. 2019. A usable authentication system using wrist-worn photoplethysmography sensors on smartwatches. In Proceedings of IEEE CNS

work page 2019

-

[54]

Fahim Sufi, Ibrahim Khalil, and Jiankun Hu. 2010. ECG-based authentication. InHandbook of information and communication security. Springer, 309–331

work page 2010

- [55]

-

[56]

TDK InvenSense. 2025. ICM-42688-P. https://invensense.tdk.com/products/motion-tracking/6-axis/icm-42688-p/. , V ol. 1, No. 1, Article . Publication date: May 2026. 28 • Lei Wang, Jiangxuan Shen, Xi Zhang, Dalin Zhang, Jingyu Li, Haipeng Dai, Chenren Xu, Daqing Zhang, and He Huang

work page 2025

-

[57]

Shejin Thavalengal, Petronel Bigioi, and Peter Corcoran. 2015. Iris authentication in handheld devices-considerations for constraint-free acquisition.IEEE Transactions on Consumer Electronics61, 2 (2015), 245–253

work page 2015

-

[58]

The MathWorks, Inc. 2024.findpeaks function. MathWorks, Natick, Massachusetts, USA. https://www.mathworks.com/help/signal/ref/ findpeaks.html

work page 2024

-

[59]

Lei Wang, Kang Huang, Ke Sun, Wei Wang, Chen Tian, Lei Xie, and Qing Gu. 2018. Unlock with your heart: Heartbeat-based authentication on commercial mobile phones.Proceedings of the ACM on interactive, mobile, wearable and ubiquitous technologies2, 3 (2018), 1–22

work page 2018

-

[60]

Zi Wang, Yili Ren, Yingying Chen, and Jie Yang. 2022. Toothsonic: Earable authentication via acoustic toothprint.Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies6, 2 (2022), 1–24

work page 2022

-

[61]

Zi Wang, Sheng Tan, Linghan Zhang, Yili Ren, Zhi Wang, and Jie Yang. 2021. Eardynamic: An ear canal deformation based continuous user authentication using in-ear wearables.Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies5, 1 (2021), 1–27

work page 2021

-

[62]

Zi Wang, Yilin Wang, and Jie Yang. 2024. Earslide: A secure ear wearables biometric authentication based on acoustic fingerprint. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies8, 1 (2024), 1–29

work page 2024

-

[63]

Adilson Elias Xavier. 2010. The hyperbolic smoothing clustering method.Pattern Recognition43, 3 (2010), 731–737

work page 2010

-

[64]

Yadong Xie, Fan Li, Yue Wu, Huijie Chen, Zhiyuan Zhao, and Yu Wang. 2022. TeethPass: Dental occlusion-based user authentication via in-ear acoustic sensing. InProceedings of IEEE INFOCOM

work page 2022

-

[65]

Umang Yadav, Sherif N Abbas, and Dimitrios Hatzinakos. 2018. Evaluation of PPG biometrics for authentication in different states. In Proceedings of IEEE ICB

work page 2018

-

[66]

Qiang Yang and Yuanqing Zheng. 2022. Deepear: Sound localization with binaural microphones.IEEE Transactions on Mobile Computing 23, 1 (2022), 359–375

work page 2022

-

[67]

Linghan Zhang, Sheng Tan, and Jie Yang. 2017. Hearing your voice is not enough: An articulatory gesture based liveness detection for voice authentication. InProceedings of ACM CCS

work page 2017

-

[68]

Linghan Zhang, Sheng Tan, Jie Yang, and Yingying Chen. 2016. V oicelive: A phoneme localization based liveness detection for voice authentication on smartphones. InProceedings of ACM CCS

work page 2016

-

[69]

Siqi Zhang, Xiyuxing Zhang, Duc Nguyen Tien Vu, Tao Qiang, Clara Palacios, Jiangyifei Zhu, Yuntao Wang, Mayank Goel, and Justin Chan. 2026. LubDubDecoder: Bringing Micro-Mechanical Cardiac Monitoring to Hearables. InProceedings of ACM CHI

work page 2026

-

[70]

Tianming Zhao, Yan Wang, Jian Liu, Yingying Chen, Jerry Cheng, and Jiadi Yu. 2020. Trueheart: Continuous authentication on wrist-worn wearables using ppg-based biometrics. InProceedings of IEEE INFOCOM

work page 2020

-

[71]

Hao Zhou, Md Mahbubur Rahman, Mehrab Bin Morshed, Yunzhi Li, Md Saiful Islam, Larry Zhang, Jungmok Bae, Christina Rosa, Wendy Berry Mendes, and Jilong Kuang. 2025. Know Your Heart Better: Multimodal Cardiac Output Monitoring using Earbuds. In Proceedings of IEEE ICASSP

work page 2025

-

[72]

Yongpan Zou, Jianhao Weng, Haibo Lei, Danyang Wang, Victor CM Leung, and Kaishun Wu. 2024. EarPrint: Earphone-Based Implicit User Authentication With Behavioral and Physiological Acoustics.IEEE Internet of Things Journal11, 19 (2024), 31128–31143. , V ol. 1, No. 1, Article . Publication date: May 2026

work page 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.