Recognition: unknown

Graph-Grounded Optimization: Rao-Family Metaheuristics, Classical OR, and SLM-Driven Formulation over Knowledge Graphs

Pith reviewed 2026-05-14 20:26 UTC · model grok-4.3

The pith

Optimization problems formulated from knowledge graphs via Cypher queries expose data-quality flaws that text-based methods overlook while supporting a portfolio of Rao metaheuristics over single solvers.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By grounding optimization inputs in property knowledge graphs queried via Cypher, the method produces solutions while simultaneously surfacing data-quality defects such as absent node properties and invalid aggregate values; classical solvers like OR-tools handle only the linear sub-cases and cannot encode the non-linear objectives that arise naturally from the graphs, while Rao variants require a portfolio because performance varies sharply with problem structure.

What carries the argument

Graph-grounded formulation that sources variables, constraints, and coefficients from a property knowledge graph through Cypher queries, paired with a portfolio of Rao-family metaheuristics (BMWR, Jaya, SAMP-Jaya, EHR-Jaya, Rao-1).

If this is right

- A portfolio of Rao metaheuristics is required rather than reliance on any single variant, as BMWR and Rao-1 each win on distinct problem classes.

- Classical OR-tools solvers become inapplicable once non-linear objectives emerge from the graph structure.

- Data-quality defects become visible at formulation time instead of remaining hidden until deployment.

- The same graph can supply multiple related optimization problems without manual reformulation of variables or constraints.

Where Pith is reading between the lines

- Integration with LLM systems could use the graph as a verifiable grounding layer to reduce hallucinated constraints.

- The approach may scale to dynamic graphs where edges and properties update in real time, enabling live re-optimization.

- Domains with high regulatory stakes such as clinical allocation could adopt graph grounding as an auditable input step.

Load-bearing premise

The selected knowledge graphs faithfully encode the true decision variables and constraints of the real-world problems without systematic missing data or query artifacts that would alter the optimization outcomes.

What would settle it

A side-by-side run on one of the seven domains in which a purely text-formulated version of the same problem produces an identical numerical solution yet fails to flag a missing property or degenerate aggregate that the graph version detects and that changes the real-world recommendation.

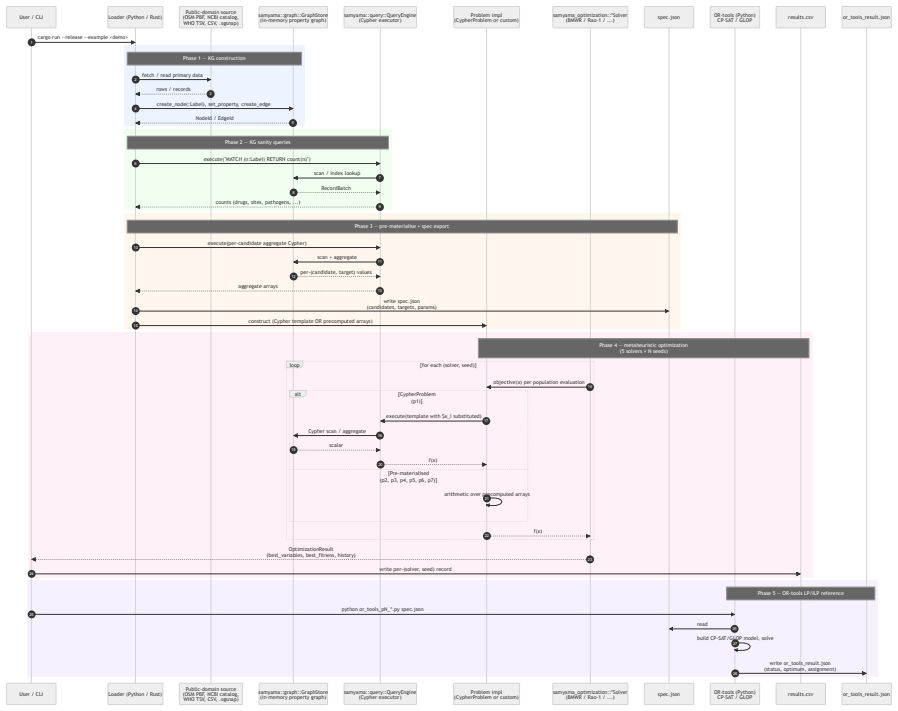

Figures

read the original abstract

We propose graph-grounded optimization: a paradigm in which the decision variables, constraints, and objective coefficients of a real-world optimization problem are sourced from a property knowledge graph (KG) via Cypher queries, rather than supplied as free-form natural-language text or static tabular input. We motivate the paradigm by surveying recent LLM/SLM-driven optimization systems -- OptiMUS, Chain-of-Experts, LLMOPT, OPRO, FunSearch, Eureka -- none of which consume property graphs as the primary input modality. We instantiate the paradigm in the open-source samyama-graph database and evaluate seven real-world public-domain KG-backed problems spanning drug repurposing (245K-node biomedical KG), clinical-trial site selection (7.78M-node trial registry), Indian supply-chain rerouting (5.34M-node OSM road graph), healthcare equity allocation (WHO/GAVI/IHME KG), economic-environmental grid dispatch, antimicrobial-resistance stewardship (NCBI AMRFinderPlus, 10.4K resistance genes), and wildfire evacuation routing (OSM Paradise, CA). We compare a portfolio of Rao-family metaheuristics (BMWR, Jaya, SAMP-Jaya, EHR-Jaya, Rao-1) against Google OR-tools (CP-SAT and GLOP) reference solvers. We find that (i) no single Rao variant dominates: BMWR wins on discrete-with-tradeoff and high-dim-with-hard-constraint problems while Rao-1 wins on continuous low-/mid-dim problems, empirically supporting a portfolio approach; (ii) OR-tools dominates on small linear/MILP-friendly sub-problems but cannot encode the non-linear objectives that emerge in several of the real-world settings; (iii) graph-grounded formulations surface data-quality issues (missing properties, degenerate aggregates) that purely text-formulated optimizations would silently mask

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes graph-grounded optimization, in which decision variables, constraints, and objectives are extracted from property knowledge graphs via Cypher queries rather than free-form text. It surveys LLM/SLM optimization systems (OptiMUS, OPRO, LLMOPT, etc.), instantiates the approach in the samyama-graph database, and evaluates Rao-family metaheuristics (BMWR, Jaya, SAMP-Jaya, EHR-Jaya, Rao-1) against OR-Tools (CP-SAT, GLOP) on seven public KG-backed problems spanning biomedical, supply-chain, healthcare, and routing domains. The reported findings are that no single Rao variant dominates, OR-Tools excels on small linear sub-problems but cannot handle emergent non-linear objectives, and graph formulations surface data-quality issues (missing properties, degenerate aggregates) that text-based formulations would mask.

Significance. If the empirical results hold, the work could strengthen the case for knowledge-graph integration in optimization pipelines, particularly for exposing data artifacts in large real-world instances, and for adopting portfolio metaheuristic strategies. The use of open-source tooling and public-domain graphs supports reproducibility.

major comments (3)

- [Abstract] Abstract: the central claim that graph-grounded formulations surface data-quality issues that purely text-formulated optimizations would silently mask is not supported by a direct empirical contrast; the evaluation contains no parallel arm supplying the identical decision variables and objectives as natural-language text to any of the surveyed LLM/SLM systems (OPRO, LLMOPT, etc.).

- [Evaluation] Evaluation section: the comparisons across the seven problems report no error bars, statistical tests, or details on how non-linear objectives were encoded for the solvers, undermining the reliability of the performance rankings between Rao variants and OR-Tools.

- [Problem formulations] Problem formulations (clinical-trial and OSM examples): for the 7.78M-node trial registry and 5.34M-node road graph, the manuscript provides no query execution times, memory footprints, or verification that Cypher-sourced aggregates match the intended decision variables, which is load-bearing for the practicality claims.

minor comments (2)

- [Abstract] Abstract: the acronyms BMWR, EHR-Jaya, and SAMP-Jaya are used without expansion on first appearance.

- [Introduction] The survey of LLM/SLM systems would be clearer if summarized in a table listing input modalities and limitations.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments. We address each major point below and outline the revisions we will make to strengthen the manuscript.

read point-by-point responses

-

Referee: [Abstract] Abstract: the central claim that graph-grounded formulations surface data-quality issues that purely text-formulated optimizations would silently mask is not supported by a direct empirical contrast; the evaluation contains no parallel arm supplying the identical decision variables and objectives as natural-language text to any of the surveyed LLM/SLM systems (OPRO, LLMOPT, etc.).

Authors: We agree that a direct parallel arm comparing identical problem instances supplied as natural-language text to the surveyed LLM/SLM systems would provide stronger empirical support. The current manuscript does not contain such an arm, as its primary contribution is the graph-grounded paradigm and its instantiation on public KGs. The data-quality issues (missing properties, degenerate aggregates) were identified precisely because Cypher queries enable explicit validation and inspection of sourced elements; text-based formulations would require separate post-hoc verification steps not native to the optimization pipeline. In revision we will moderate the abstract claim to reflect this distinction and add a short discussion paragraph with one concrete example illustrating how the structured extraction reveals artifacts that would be masked in free-text input. revision: partial

-

Referee: [Evaluation] Evaluation section: the comparisons across the seven problems report no error bars, statistical tests, or details on how non-linear objectives were encoded for the solvers, undermining the reliability of the performance rankings between Rao variants and OR-Tools.

Authors: We acknowledge that the evaluation section lacks error bars, statistical significance tests, and explicit encoding details for non-linear objectives. In the revised manuscript we will report standard deviations over multiple independent runs for all metaheuristic variants, apply non-parametric statistical tests (Wilcoxon signed-rank with Holm correction) to support the performance rankings, and add a dedicated subsection detailing how non-linear objectives were encoded (including penalty reformulations and any auxiliary variables introduced for CP-SAT and GLOP). revision: yes

-

Referee: [Problem formulations] Problem formulations (clinical-trial and OSM examples): for the 7.78M-node trial registry and 5.34M-node road graph, the manuscript provides no query execution times, memory footprints, or verification that Cypher-sourced aggregates match the intended decision variables, which is load-bearing for the practicality claims.

Authors: We thank the referee for noting this gap. In the revised manuscript we will add a new table (or subsection) reporting Cypher query execution times and peak memory usage on the two large graphs, measured on the same hardware used for the optimization runs. We will also document verification procedures, including spot-checks against ground-truth subsets and consistency checks between aggregate values and manually computed statistics, to confirm that the sourced decision variables match the intended formulations. revision: yes

Circularity Check

No significant circularity in empirical evaluation

full rationale

The paper proposes graph-grounded optimization as an input modality and reports direct empirical runs of Rao-family metaheuristics and OR-Tools on seven public KG instances (drug repurposing, clinical trials, supply chains, etc.). No equations, fitted parameters, or predictions are defined in terms of themselves; the listed findings (portfolio performance, OR-Tools limits on non-linear objectives, data-quality surfacing) are observations from those runs against an external baseline. No self-citations, uniqueness theorems, or ansatzes are invoked as load-bearing steps. The derivation chain is therefore self-contained as an experimental comparison rather than a closed logical reduction.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

- [1]

-

[2]

arXiv preprint arXiv:2402.10172 , year =

AhmadiTeshnizi, Ali and Gao, Wenzhi and Udell, Madeleine , title =. arXiv preprint arXiv:2402.10172 , year =

-

[3]

arXiv preprint arXiv:2407.19633 , year =

AhmadiTeshnizi, Ali and Gao, Wenzhi and Brusca, Lorenzo and Udell, Madeleine , title =. arXiv preprint arXiv:2407.19633 , year =

-

[4]

International Conference on Learning Representations (ICLR) , year =

Xiao, Ziyang and Zhang, Dongxiang and Wu, Yangxin and others , title =. International Conference on Learning Representations (ICLR) , year =

-

[5]

International Conference on Learning Representations (ICLR) , year =

Jiang, Caigao and Shu, Xiang and Qian, Hong and others , title =. International Conference on Learning Representations (ICLR) , year =

-

[6]

International Conference on Learning Representations (ICLR) , year =

Yang, Chengrun and Wang, Xuezhi and Lu, Yifeng and others , title =. International Conference on Learning Representations (ICLR) , year =

-

[7]

Romera-Paredes, Bernardino and Barekatain, Mohammadamin and Novikov, Alexander and others , title =. Nature , year =

-

[8]

International Conference on Learning Representations (ICLR) , year =

Ma, Yecheng Jason and Liang, William and Wang, Guanzhi and others , title =. International Conference on Learning Representations (ICLR) , year =

-

[9]

arXiv preprint arXiv:2410.13080 , year =

Jin, Weizhe and others , title =. arXiv preprint arXiv:2410.13080 , year =

-

[10]

arXiv preprint arXiv:2508.02999 , year =

others , title =. arXiv preprint arXiv:2508.02999 , year =

-

[11]

Rao, R. Venkata , title =. International Journal of Industrial Engineering Computations , year =

-

[12]

Rao, R. Venkata , title =. arXiv preprint arXiv:2407.11149 , year =

- [13]

-

[14]

Rao, R. Venkata and Savsani, Vimal J. and Vakharia, D. P. , title =. Computer-Aided Design , volume =

-

[15]

Venkata and Saroj, Anand , title =

Rao, R. Venkata and Saroj, Anand , title =. Swarm and Evolutionary Computation , year =

- [16]

-

[17]

Venkata and Saroj, Anand , title =

Rao, R. Venkata and Saroj, Anand , title =. Journal of Computational Design and Engineering , year =

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.