Recognition: no theorem link

A Comparative Study of Controlled Text Generation Systems Using Level-Playing-Field Evaluation Principles

Pith reviewed 2026-05-13 05:34 UTC · model grok-4.3

The pith

Re-evaluating current controlled text generation systems on shared methods and data shows most perform substantially worse than originally reported.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

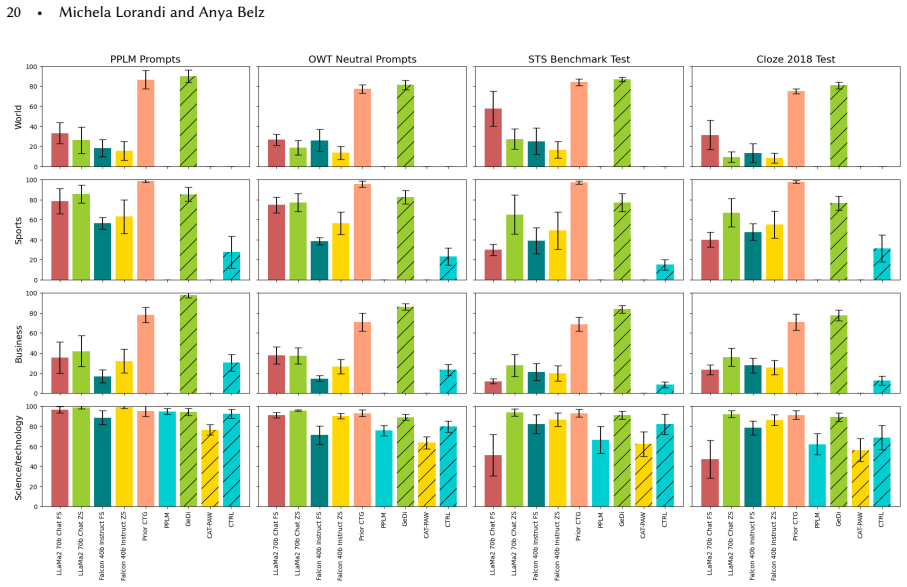

When a representative set of current controlled text generation systems is re-evaluated under a level-playing-field protocol that standardises output generation, processing, and a shared suite of evaluation methods and datasets, the measured performance differs substantially from the results each system originally reported, and in most cases the new scores are lower.

What carries the argument

Level-playing-field (LPF) evaluation, which enforces identical output generation and processing pipelines plus one common set of evaluation methods and datasets across all systems under test.

If this is right

- Published performance claims for controlled text generation systems may substantially misrepresent their true capabilities under comparable conditions.

- Comparing different controlled generation approaches remains unreliable until standardised evaluation practices are adopted.

- The discrepancies revealed by uniform testing demonstrate an urgent need for reproducible evaluation in the field.

- Without such practices, progress in identifying the most effective control methods will continue to rest on non-comparable evidence.

Where Pith is reading between the lines

- The same uniform re-evaluation protocol could be applied to other natural language generation tasks to test whether reported advances hold up.

- System designers might begin to optimise directly for the common evaluation set rather than for the varied metrics used in individual papers.

- Over time the adoption of one shared benchmark suite could reduce the number of incomparable papers and accelerate genuine comparative insight.

Load-bearing premise

The particular shared evaluation methods and datasets chosen from current practice form a fair and representative basis for comparing all the systems.

What would settle it

Re-running the same systems with an alternative but still uniformly applied set of evaluation methods and datasets and finding that the original performance gaps largely reappear.

Figures

read the original abstract

Background: Many different approaches to controlled text generation (CTG) have been proposed over recent years, but it is difficult to get a clear picture of which approach performs best, because different datasets and evaluation methods are used in each case to assess the control achieved. Objectives: Our aim in the work reported in this paper is to develop an approach to evaluation that enables us to comparatively evaluate different CTG systems in a manner that is both informative and fair to the individual systems. Methods: We use a level-playing-field (LPF) approach to comparative evaluation where we (i) generate and process all system outputs in a standardised way, and (ii) apply a shared set of evaluation methods and datasets, selected based on those currently in use, in order to ensure fair evaluation. Results: When re-evaluated in this way, performance results for a representative set of current CTG systems differ substantially from originally reported results, in most cases for the worse. This highlights the importance of a shared standardised way of assessing controlled generation. Conclusions: The discrepancies revealed by LPF evaluation demonstrate the urgent need for standardised, reproducible evaluation practices in CTG. Our results suggest that without such practices, published performance claims may substantially misrepresent true system capabilities.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

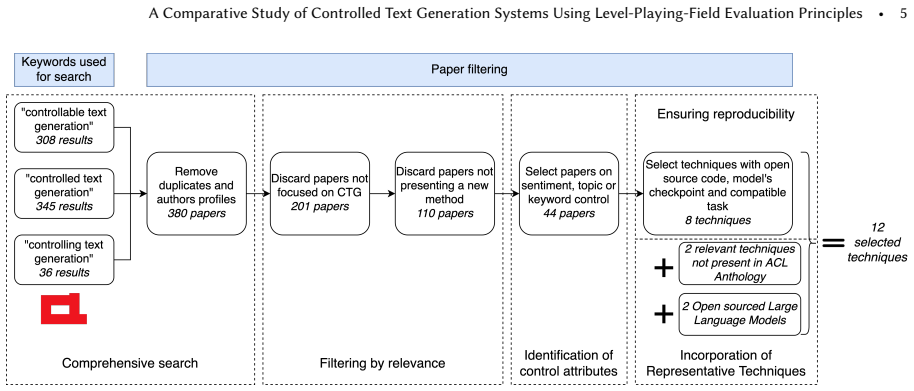

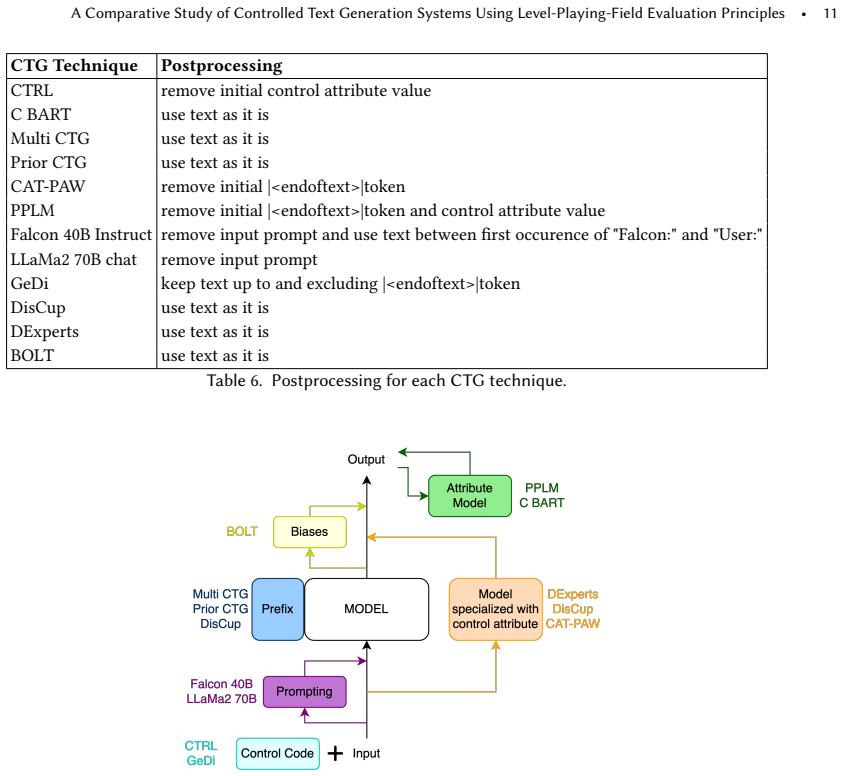

Summary. The paper proposes a level-playing-field (LPF) evaluation approach for controlled text generation (CTG) systems. It standardizes output generation and processing across systems and applies a shared set of evaluation methods and datasets selected from those currently in use. Re-evaluation of a representative set of CTG systems under LPF yields performance results that differ substantially from the originally reported figures, with most systems performing worse. The authors conclude that this demonstrates the urgent need for standardized, reproducible evaluation practices in CTG to avoid misrepresenting true system capabilities.

Significance. If the central empirical claim holds after addressing the concerns below, the work would be moderately significant for the CTG subfield by providing concrete evidence that non-standardized evaluations can lead to overstated performance claims. It credits the attempt to create a comparative benchmark using shared resources, which aligns with calls for reproducibility. However, the significance is limited by the absence of quantitative details, error bars, or system outputs, making the magnitude of discrepancies hard to assess. The approach does not appear to include machine-checked proofs or parameter-free derivations.

major comments (2)

- [Methods (LPF approach and dataset selection)] The central claim that LPF re-evaluation reveals substantially worse true capabilities depends on the shared datasets and methods being a valid, unbiased measure for every system. The Methods section states that the shared set is 'selected based on those currently in use,' but provides no explicit mapping or coverage analysis showing that each system's intended control attribute (sentiment, topic, lexical constraints, syntax, etc.) is well-represented in the chosen datasets. Without this, performance drops may arise from evaluation misalignment rather than original non-standardized practices, as noted in the stress-test concern.

- [Abstract and Results] The abstract and Results section claim that re-evaluated results 'differ substantially from originally reported results, in most cases for the worse,' but supply no quantitative details, specific metrics, error bars, tables of per-system scores, or example outputs. This renders the key empirical finding unverifiable and raises the possibility that discrepancies rest on post-hoc choices of datasets or processing steps.

minor comments (2)

- [Results] A table listing the exact CTG systems evaluated, their original control targets, the shared datasets applied to each, and the original vs. LPF scores would greatly improve clarity and reproducibility.

- [Conclusions] The paper should explicitly state whether the standardized generation/processing pipeline and all evaluation code/datasets will be released publicly to support the reproducibility claims.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which help clarify how to strengthen the presentation of our level-playing-field evaluation. We address each major comment below and indicate the revisions we will make.

read point-by-point responses

-

Referee: [Methods (LPF approach and dataset selection)] The central claim that LPF re-evaluation reveals substantially worse true capabilities depends on the shared datasets and methods being a valid, unbiased measure for every system. The Methods section states that the shared set is 'selected based on those currently in use,' but provides no explicit mapping or coverage analysis showing that each system's intended control attribute (sentiment, topic, lexical constraints, syntax, etc.) is well-represented in the chosen datasets. Without this, performance drops may arise from evaluation misalignment rather than original non-standardized practices, as noted in the stress-test concern.

Authors: We agree that an explicit mapping would improve transparency. The datasets were chosen because they appear in multiple prior CTG papers and collectively cover the most common control attributes (sentiment, topic, lexical, syntactic). In the revised manuscript we will add a table that lists, for each re-evaluated system, its primary control attribute and the specific LPF datasets used to assess it, together with a short paragraph on coverage. We will also note that perfect one-to-one alignment is not always possible given the heterogeneity of CTG tasks, but that the chosen resources are drawn directly from the literature rather than being post-hoc selections. revision: yes

-

Referee: [Abstract and Results] The abstract and Results section claim that re-evaluated results 'differ substantially from originally reported results, in most cases for the worse,' but supply no quantitative details, specific metrics, error bars, tables of per-system scores, or example outputs. This renders the key empirical finding unverifiable and raises the possibility that discrepancies rest on post-hoc choices of datasets or processing steps.

Authors: We accept that the current Results section is insufficiently detailed for verification. In the revision we will replace the high-level summary with a table (or set of tables) that reports, for every system and every metric, the originally published score alongside the LPF score, including standard deviations or error bars where multiple runs were performed. We will also add a small set of representative output examples and a supplementary section that documents the exact generation parameters, post-processing steps, and dataset splits used, thereby removing any ambiguity about post-hoc decisions. revision: yes

Circularity Check

No circularity: empirical re-evaluation against external baselines

full rationale

The paper's central claim rests on direct empirical measurements: standardized generation/processing of outputs from existing CTG systems followed by application of a shared evaluation suite drawn from current practice. No equations, fitted parameters, or derivations are presented whose outputs reduce to the inputs by construction. The LPF method is a procedural standardization, not a self-referential definition, and performance discrepancies are reported as observed differences from prior published results rather than predictions derived from the paper's own fitted values or self-citations. The selection of datasets 'based on those currently in use' is an explicit methodological choice, not a loop that forces the reported outcomes.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption The shared set of evaluation methods and datasets selected from current use provides a fair and representative basis for comparing CTG systems.

Reference graph

Works this paper leans on

-

[1]

Ebtesam Almazrouei, Hamza Alobeidli, Abdulaziz Alshamsi, Alessandro Cappelli, Ruxandra Cojocaru, Merouane Debbah, Etienne Goffinet, Daniel Heslow, Julien Launay, Quentin Malartic, Badreddine Noune, Baptiste Pannier, and Guilherme Penedo. 2023. Falcon-40B: an open large language model with state-of-the-art performance. (2023)

work page 2023

-

[2]

Matheus Araújo, Adriano Pereira, and Fabrício Benevenuto. 2020. A comparative study of machine translation for multilingual sentence-level sentiment analysis.Information Sciences512 (2020), 1078–1102. https://doi.org/10.1016/j.ins.2019.10.031

-

[3]

Federico Betti, Giorgia Ramponi, and Massimo Piccardi. 2020. Controlled Text Generation with Adversarial Learning. InProceedings of the 13th International Conference on Natural Language Generation, Brian Davis, Yvette Graham, John Kelleher, and Yaji Sripada (Eds.). Association for Computational Linguistics, Dublin, Ireland, 29–34. https://doi.org/10.18653...

-

[4]

Fredrik Carlsson, Joey Öhman, Fangyu Liu, Severine Verlinden, Joakim Nivre, and Magnus Sahlgren. 2022. Fine-Grained Controllable Text Generation Using Non-Residual Prompting. InProceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Smaranda Muresan, Preslav Nakov, and Aline Villavicencio (Eds.). As...

-

[5]

Noe Casas, José A. R. Fonollosa, and Marta R. Costa-jussà. 2020. Syntax-driven Iterative Expansion Language Models for Controllable Text Generation. InProceedings of the Fourth Workshop on Structured Prediction for NLP, Priyanka Agrawal, Zornitsa Kozareva, Julia Kreutzer, Gerasimos Lampouras, André Martins, Sujith Ravi, and Andreas Vlachos (Eds.). Associa...

-

[6]

Thiago Castro Ferreira, Chris van der Lee, Emiel van Miltenburg, and Emiel Krahmer. 2019. Neural data-to-text generation: A comparison between pipeline and end-to-end architectures. InProceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNL...

-

[7]

Daniel Cer, Mona Diab, Eneko Agirre, Iñigo Lopez-Gazpio, and Lucia Specia. 2017. SemEval-2017 Task 1: Semantic Textual Similarity Multilingual and Crosslingual Focused Evaluation. InProceedings of the 11th International Workshop on Semantic Evaluation (SemEval- 2017), Steven Bethard, Marine Carpuat, Marianna Apidianaki, Saif M. Mohammad, Daniel Cer, and D...

- [8]

-

[9]

Maximillian Chen, Xiao Yu, Weiyan Shi, Urvi Awasthi, and Zhou Yu. 2023. Controllable Mixed-Initiative Dialogue Generation through Prompting. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). Association for Computational Linguistic...

-

[10]

Pierre Colombo, Nathan Noiry, Ekhine Irurozki, and Stephan Clémençon. 2022. What are the best Systems? New Perspectives on NLP Benchmarking. InAdvances in Neural Information Processing Systems, S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh (Eds.), Vol. 35. Curran Associates, Inc., 26915–26932. https://proceedings.neurips.cc/paper_files...

work page 2022

-

[11]

Pierre Colombo, Maxime Peyrard, Nathan Noiry, Robert West, and Pablo Piantanida. 2023. The Glass Ceiling of Automatic Evaluation in Natural Language Generation. InFindings of the Association for Computational Linguistics: IJCNLP-AACL 2023 (Findings), Jong C. Park, Yuki Arase, Baotian Hu, Wei Lu, Derry Wijaya, Ayu Purwarianti, and Adila Alfa Krisnadhi (Eds...

-

[12]

Washington Cunha, Vítor Mangaravite, Christian Gomes, Sérgio Canuto, Elaine Resende, Cecilia Nascimento, Felipe Viegas, Celso França, Wellington Santos Martins, Jussara M Almeida, et al. 2021. On the cost-effectiveness of neural and non-neural approaches and representations for text classification: A comprehensive comparative study.Information Processing ...

work page 2021

- [13]

-

[14]

Hanxing Ding, Liang Pang, Zihao Wei, Huawei Shen, Xueqi Cheng, and Tat-Seng Chua. 2023. MacLaSa: Multi-Aspect Controllable Text Generation via Efficient Sampling from Compact Latent Space. InFindings of the Association for Computational Linguistics: EMNLP 2023, Houda Bouamor, Juan Pino, and Kalika Bali (Eds.). Association for Computational Linguistics, Si...

-

[15]

Xiang Fan, Yiwei Lyu, Paul Pu Liang, Ruslan Salakhutdinov, and Louis-Philippe Morency. 2023. Nano: Nested Human-in-the-Loop Reward Learning for Few-shot Language Model Control. InFindings of the Association for Computational Linguistics: ACL 2023, Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). Association for Computational Linguistics, Toront...

-

[16]

Sebastian Gehrmann, Elizabeth Clark, and Thibault Sellam. 2023. Repairing the cracked foundation: A survey of obstacles in evaluation practices for generated text.Journal of Artificial Intelligence Research77 (2023), 103–166. XXXX, Vol. 1, Article . Publication date: May 2025. A Comparative Study of Controlled Text Generation Systems Using Level-Playing-F...

work page 2023

-

[17]

Aaron Gokaslan and Vanya Cohen. 2019. OpenWebText Corpus. http://Skylion007.github.io/OpenWebTextCorpus

work page 2019

-

[18]

Yuxuan Gu, Xiaocheng Feng, Sicheng Ma, Jiaming Wu, Heng Gong, and Bing Qin. 2022. Improving Controllable Text Generation with Position-Aware Weighted Decoding. InFindings of the Association for Computational Linguistics: ACL 2022, Smaranda Muresan, Preslav Nakov, and Aline Villavicencio (Eds.). Association for Computational Linguistics, Dublin, Ireland, 3...

work page 2022

-

[19]

Yuxuan Gu, Xiaocheng Feng, Sicheng Ma, Lingyuan Zhang, Heng Gong, and Bing Qin. 2022. A Distributional Lens for Multi-Aspect Controllable Text Generation. InProceedings of the 2022 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, Abu Dhabi, United Arab Emirates, 1023–1043. https://aclanthology.org/...

work page 2022

-

[20]

Yuxuan Gu, Xiaocheng Feng, Sicheng Ma, Lingyuan Zhang, Heng Gong, Weihong Zhong, and Bing Qin. 2023. Controllable Text Generation via Probability Density Estimation in the Latent Space. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds...

-

[21]

Tianxing He, Jingyu Zhang, Tianle Wang, Sachin Kumar, Kyunghyun Cho, James Glass, and Yulia Tsvetkov. 2023. On the Blind Spots of Model-Based Evaluation Metrics for Text Generation. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). ...

-

[22]

Xingwei He. 2021. Parallel Refinements for Lexically Constrained Text Generation with BART. InProceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, Marie-Francine Moens, Xuanjing Huang, Lucia Specia, and Scott Wen-tau Yih (Eds.). Association for Computational Linguistics, Online and Punta Cana, Dominican Republic, 8653–86...

-

[23]

Fred Jelinek, Robert L Mercer, Lalit R Bahl, and James K Baker. 1977. Perplexity—a measure of the difficulty of speech recognition tasks. The Journal of the Acoustical Society of America62, S1 (1977), S63–S63

work page 1977

-

[24]

Katharina Kann, Sascha Rothe, and Katja Filippova. 2018. Sentence-Level Fluency Evaluation: References Help, But Can Be Spared!. In Proceedings of the 22nd Conference on Computational Natural Language Learning, Anna Korhonen and Ivan Titov (Eds.). Association for Computational Linguistics, Brussels, Belgium, 313–323. https://doi.org/10.18653/v1/K18-1031

- [25]

-

[26]

Minbeom Kim, Hwanhee Lee, Kang Min Yoo, Joonsuk Park, Hwaran Lee, and Kyomin Jung. 2023. Critic-Guided Decoding for Controlled Text Generation. InFindings of the Association for Computational Linguistics: ACL 2023, Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). Association for Computational Linguistics, Toronto, Canada, 4598–4612. https://doi...

-

[27]

Ben Krause, Akhilesh Deepak Gotmare, Bryan McCann, Nitish Shirish Keskar, Shafiq Joty, Richard Socher, and Nazneen Fatema Rajani

-

[28]

GeDi: Generative Discriminator Guided Sequence Generation. InFindings of the Association for Computational Linguistics: EMNLP 2021, Marie-Francine Moens, Xuanjing Huang, Lucia Specia, and Scott Wen-tau Yih (Eds.). Association for Computational Linguistics, Punta Cana, Dominican Republic, 4929–4952. https://doi.org/10.18653/v1/2021.findings-emnlp.424

-

[29]

Sachin Kumar, Biswajit Paria, and Yulia Tsvetkov. 2022. Gradient-based Constrained Sampling from Language Models. InProceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, Yoav Goldberg, Zornitsa Kozareva, and Yue Zhang (Eds.). Association for Computational Linguistics, Abu Dhabi, United Arab Emirates, 2251–2277. https://do...

-

[30]

David Landsman, Jerry Zikun Chen, and Hussain Zaidi. 2022. BeamR: Beam Reweighing with Attribute Discriminators for Controllable Text Generation. InFindings of the Association for Computational Linguistics: AACL-IJCNLP 2022, Yulan He, Heng Ji, Sujian Li, Yang Liu, and Chua-Hui Chang (Eds.). Association for Computational Linguistics, Online only, 422–437. ...

work page 2022

-

[31]

Yuanmin Leng, François Portet, Cyril Labbé, and Raheel Qader. 2020. Controllable Neural Natural Language Generation: comparison of state-of-the-art control strategies. InProceedings of the 3rd International Workshop on Natural Language Generation from the Semantic Web (WebNLG+), Thiago Castro Ferreira, Claire Gardent, Nikolai Ilinykh, Chris van der Lee, S...

work page 2020

- [32]

-

[33]

Bill Yuchen Lin, Wangchunshu Zhou, Ming Shen, Pei Zhou, Chandra Bhagavatula, Yejin Choi, and Xiang Ren. 2020. CommonGen: A Constrained Text Generation Challenge for Generative Commonsense Reasoning. InFindings of the Association for Computational Linguistics: EMNLP 2020, Trevor Cohn, Yulan He, and Yang Liu (Eds.). Association for Computational Linguistics...

-

[34]

Alisa Liu, Maarten Sap, Ximing Lu, Swabha Swayamdipta, Chandra Bhagavatula, Noah A. Smith, and Yejin Choi. 2021. DExperts: Decoding-Time Controlled Text Generation with Experts and Anti-Experts. InProceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processi...

-

[35]

Guisheng Liu, Yi Li, Yanqing Guo, Xiangyang Luo, and Bo Wang. 2022. Multi-Attribute Controlled Text Generation with Contrastive- Generator and External-Discriminator. InProceedings of the 29th International Conference on Computational Linguistics, Nicoletta Calzolari, Chu-Ren Huang, Hansaem Kim, James Pustejovsky, Leo Wanner, Key-Sun Choi, Pum-Mo Ryu, Hsi...

work page 2022

-

[36]

Xin Liu, Muhammad Khalifa, and Lu Wang. 2023. BOLT: Fast Energy-based Controlled Text Generation with Tunable Biases. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). Association for Computational Linguistics, Toronto, Canada, 186...

-

[37]

Zihan Liu, Mostofa Patwary, Ryan Prenger, Shrimai Prabhumoye, Wei Ping, Mohammad Shoeybi, and Bryan Catanzaro. 2022. Multi- Stage Prompting for Knowledgeable Dialogue Generation. InFindings of the Association for Computational Linguistics: ACL 2022, Smaranda Muresan, Preslav Nakov, and Aline Villavicencio (Eds.). Association for Computational Linguistics,...

-

[38]

Michela Lorandi and Anya Belz. 2023. How to Control Sentiment in Text Generation: A Survey of the State-of-the-Art in Sentiment- Control Techniques. InProceedings of the 13th Workshop on Computational Approaches to Subjectivity, Sentiment, & Social Media Analysis, Jeremy Barnes, Orphée De Clercq, and Roman Klinger (Eds.). Association for Computational Lin...

-

[39]

Ximing Lu, Sean Welleck, Peter West, Liwei Jiang, Jungo Kasai, Daniel Khashabi, Ronan Le Bras, Lianhui Qin, Youngjae Yu, Rowan Zellers, Noah A. Smith, and Yejin Choi. 2022. NeuroLogic A*esque Decoding: Constrained Text Generation with Lookahead Heuristics. InProceedings of the 2022 Conference of the North American Chapter of the Association for Computatio...

-

[40]

Congda Ma, Tianyu Zhao, Makoto Shing, Kei Sawada, and Manabu Okumura. 2023. Focused Prefix Tuning for Controllable Text Generation. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). Association for Computational Linguistics, Toront...

-

[41]

Andrea Madotto, Etsuko Ishii, Zhaojiang Lin, Sumanth Dathathri, and Pascale Fung. 2020. Plug-and-Play Conversational Models. In Findings of the Association for Computational Linguistics: EMNLP 2020, Trevor Cohn, Yulan He, and Yang Liu (Eds.). Association for Computational Linguistics, Online, 2422–2433. https://doi.org/10.18653/v1/2020.findings-emnlp.219

-

[42]

Damian Pascual, Beni Egressy, Clara Meister, Ryan Cotterell, and Roger Wattenhofer. 2021. A Plug-and-Play Method for Controlled Text Generation. InFindings of the Association for Computational Linguistics: EMNLP 2021, Marie-Francine Moens, Xuanjing Huang, Lucia Specia, and Scott Wen-tau Yih (Eds.). Association for Computational Linguistics, Punta Cana, Do...

-

[43]

Guilherme Penedo, Quentin Malartic, Daniel Hesslow, Ruxandra Cojocaru, Alessandro Cappelli, Hamza Alobeidli, Baptiste Pannier, Ebtesam Almazrouei, and Julien Launay. 2023. The RefinedWeb dataset for Falcon LLM: outperforming curated corpora with web data, and web data only.arXiv preprint arXiv:2306.01116(2023). arXiv:2306.01116 https://arxiv.org/abs/2306.01116

work page internal anchor Pith review arXiv 2023

-

[44]

Maxime Peyrard, Wei Zhao, Steffen Eger, and Robert West. 2021. Better than Average: Paired Evaluation of NLP systems. InProceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), Chengqing Zong, Fei Xia, Wenjie Li, and Roberto N...

- [45]

-

[46]

Dongqi Pu and Vera Demberg. 2023. ChatGPT vs Human-authored Text: Insights into Controllable Text Summarization and Sentence Style Transfer. InProceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 4: Student Research Workshop), Vishakh Padmakumar, Gisela Vallejo, and Yao Fu (Eds.). Association for Computational Li...

-

[47]

Jing Qian, Li Dong, Yelong Shen, Furu Wei, and Weizhu Chen. 2022. Controllable Natural Language Generation with Contrastive Prefixes. InFindings of the Association for Computational Linguistics: ACL 2022, Smaranda Muresan, Preslav Nakov, and Aline Villavicencio (Eds.). Association for Computational Linguistics, Dublin, Ireland, 2912–2924. https://doi.org/...

-

[48]

Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, Ilya Sutskever, et al. 2019. Language models are unsupervised multitask learners.OpenAI blog1, 8 (2019), 9

work page 2019

-

[49]

Teven Le Scao, Angela Fan, Christopher Akiki, Ellie Pavlick, Suzana Ilić, Daniel Hesslow, Roman Castagné, Alexandra Sasha Luccioni, François Yvon, Matthias Gallé, et al . 2022. Bloom: A 176b-parameter open-access multilingual language model.arXiv preprint XXXX, Vol. 1, Article . Publication date: May 2025. A Comparative Study of Controlled Text Generation...

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[50]

Rishi Sharma, James Allen, Omid Bakhshandeh, and Nasrin Mostafazadeh. 2018. Tackling the Story Ending Biases in The Story Cloze Test. InProceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), Iryna Gurevych and Yusuke Miyao (Eds.). Association for Computational Linguistics, Melbourne, Australia, 75...

-

[51]

Manning, Andrew Ng, and Christopher Potts

Richard Socher, Alex Perelygin, Jean Wu, Jason Chuang, Christopher D. Manning, Andrew Ng, and Christopher Potts. 2013. Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank. InProceedings of the 2013 Conference on Empirical Methods in Natural Language Processing, David Yarowsky, Timothy Baldwin, Anna Korhonen, Karen Livescu, and St...

work page 2013

-

[52]

Jiao Sun, Yufei Tian, Wangchunshu Zhou, Nan Xu, Qian Hu, Rahul Gupta, John Wieting, Nanyun Peng, and Xuezhe Ma. 2023. Evaluating Large Language Models on Controlled Generation Tasks. InProceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, Houda Bouamor, Juan Pino, and Kalika Bali (Eds.). Association for Computational Ling...

-

[53]

Simeng Sun, Wenlong Zhao, Varun Manjunatha, Rajiv Jain, Vlad Morariu, Franck Dernoncourt, Balaji Vasan Srinivasan, and Mohit Iyyer. 2021. IGA: An Intent-Guided Authoring Assistant. InProceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, Marie-Francine Moens, Xuanjing Huang, Lucia Specia, and Scott Wen-tau Yih (Eds.). Asso...

-

[54]

Tianxiang Sun, Junliang He, Xipeng Qiu, and Xuanjing Huang. 2022. BERTScore is Unfair: On Social Bias in Language Model-Based Metrics for Text Generation. InProceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, Yoav Goldberg, Zornitsa Kozareva, and Yue Zhang (Eds.). Association for Computational Linguistics, Abu Dhabi, Un...

-

[55]

Zecheng Tang, Pinzheng Wang, Keyan Zhou, Juntao Li, Ziqiang Cao, and Min Zhang. 2023. Can Diffusion Model Achieve Better Performance in Text Generation ? Bridging the Gap between Training and Inference !. InFindings of the Association for Computational Linguistics: ACL 2023, Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (Eds.). Association for Compu...

-

[56]

Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. 2023. Llama 2: Open foundation and fine-tuned chat models.arXiv preprint arXiv:2307.09288(2023)

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[57]

Junli Wang, Chenyang Zhang, Dongyu Zhang, Haibo Tong, Chungang Yan, and Changjun Jiang. 2024. A Recent Survey on Controllable Text Generation: a Causal Perspective.Fundamental Research(2024)

work page 2024

- [58]

-

[59]

Dian Yu, Zhou Yu, and Kenji Sagae. 2021. Attribute Alignment: Controlling Text Generation from Pre-trained Language Models. InFindings of the Association for Computational Linguistics: EMNLP 2021, Marie-Francine Moens, Xuanjing Huang, Lucia Specia, and Scott Wen-tau Yih (Eds.). Association for Computational Linguistics, Punta Cana, Dominican Republic, 225...

-

[60]

Hanqing Zhang and Dawei Song. 2022. DisCup: Discriminator Cooperative Unlikelihood Prompt-tuning for Controllable Text Generation. InProceedings of the 2022 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, Abu Dhabi, United Arab Emirates, 3392–3406. https://aclanthology.org/2022.emnlp-main.223

work page 2022

-

[61]

Hanqing Zhang, Haolin Song, Shaoyu Li, Ming Zhou, and Dawei Song. 2023. A survey of controllable text generation using transformer- based pre-trained language models.Comput. Surveys56, 3 (2023), 1–37

work page 2023

-

[62]

Xiang Zhang, Junbo Jake Zhao, and Yann LeCun. 2015. Character-level Convolutional Networks for Text Classification. InNIPS

work page 2015

-

[63]

Yukun Zhu, Ryan Kiros, Rich Zemel, Ruslan Salakhutdinov, Raquel Urtasun, Antonio Torralba, and Sanja Fidler. 2015. Aligning books and movies: Towards story-like visual explanations by watching movies and reading books. InProceedings of the IEEE international conference on computer vision. 19–27. A LLMs Prompts Tables 17 and 18 show the zero-shot and few-s...

work page 2015

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.