Recognition: no theorem link

Predicting Disagreement with Human Raters in LLM-as-a-Judge Difficulty Assessment without Using Generation-Time Probability Signals

Pith reviewed 2026-05-13 04:51 UTC · model grok-4.3

The pith

Geometric consistency in a separate embedding space identifies LLM difficulty ratings likely to disagree with human raters without using generation probabilities.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The method identifies disagreement candidates by measuring the geometric consistency of the set of LLM-generated difficulty ratings placed in a separate embedding space such as ModernBERT. Since difficulty ratings are ordinal, consistent placements allow prediction of which ratings are likely to mismatch human judgments, achieving higher AUC than baselines that use generation-time probabilities on two large LLMs for CEFR sentence difficulty.

What carries the argument

Geometric consistency of the rating set in a separate embedding space, which exploits the ordinal nature of difficulty to flag potential human disagreements without generation-time signals.

If this is right

- Human re-rating can be directed only at flagged cases, lowering total effort needed for LLM-generated educational materials.

- The method works across LLMs without requiring access to their generation probabilities.

- It extends naturally to other ordinal judgment tasks where selective human review is useful.

- Higher AUC directly supports more accurate and efficient scaling of automated difficulty assignment pipelines.

Where Pith is reading between the lines

- The technique may apply to other LLM judgment settings where probability outputs are unavailable or incomparable.

- Testing robustness across different embedding models would clarify how much the choice of space affects reliability.

- Combining geometric consistency with other available signals could be explored when probabilities are optionally accessible.

- Such flagging could aid high-stakes applications by routing uncertain ordinal ratings to human oversight.

Load-bearing premise

The geometric arrangement of ratings in the embedding space consistently corresponds to agreement with human ordinal judgments.

What would settle it

An experiment on the CEFR sentence dataset with the same LLMs where the geometric consistency method yields lower or equal AUC compared to probability baselines for predicting human disagreements.

Figures

read the original abstract

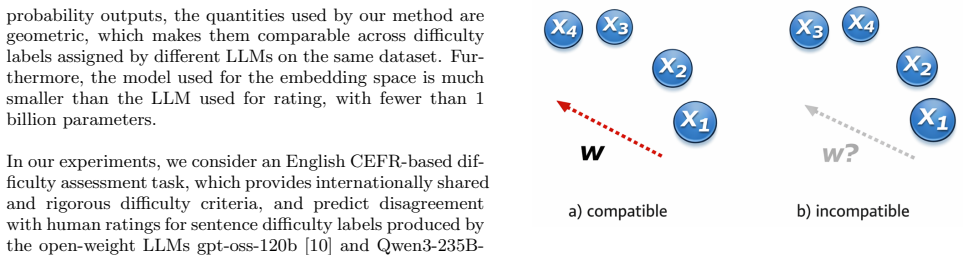

Automatic generation of educational materials using large language models (LLMs) is becoming increasingly common, but assigning difficulty levels to such materials still requires substantial human effort. LLM-as-a-Judge has therefore attracted attention, yet disagreement with human raters remains a major challenge. We propose a method for predicting which LLM-generated difficulty ratings are likely to disagree with human raters, so that such cases can be sent for re-rating. Unlike prior approaches, our method does not rely on generation-time probability signals, which must be collected during rating generation and are often difficult to compare across LLMs. Instead, exploiting the fact that difficulty is an ordinal scale, we use a separate embedding space, such as ModernBERT, and identify disagreement candidates based on the geometric consistency of the rating set. Experiments on English CEFR-based sentence difficulty assessment with GPT-OSS-120B and Qwen3-235B-A22B showed that the proposed method achieved higher AUC for predicting disagreement with human raters than probability-based baselines.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a method to predict disagreement between LLM-as-a-judge difficulty ratings (on an ordinal CEFR scale for English sentences) and human raters. The approach embeds the set of LLM ratings into a separate space (e.g., ModernBERT) and flags low geometric consistency as likely disagreement cases, without using any generation-time probability signals. Experiments on two large models (GPT-OSS-120B and Qwen3-235B-A22B) report higher AUC for disagreement prediction than probability-based baselines.

Significance. If the central result holds, the work offers a practical way to reduce human verification effort in LLM-generated educational content by routing only uncertain ratings for re-evaluation. The avoidance of model-specific probability signals is a clear strength, as it enables cross-LLM comparison and post-hoc application. The ordinal exploitation via embedding geometry is novel in this context and could generalize to other rating tasks if the geometric-human alignment is substantiated.

major comments (3)

- [Methods] Methods section: The precise definition and computation of 'geometric consistency' (e.g., which distance metric, aggregation over the rating set, or threshold) is not specified with sufficient formality or pseudocode; without this, it is impossible to assess whether the measure genuinely exploits ordinal structure or simply captures embedding artifacts.

- [Experiments] Experiments section: The abstract and results claim higher AUC, but no dataset size, number of sentences/ratings, exact AUC values with confidence intervals, or statistical significance tests (e.g., DeLong test) for the comparison against baselines are provided; this prevents evaluation of whether the reported gains are reliable or dataset-specific.

- [Results] §3 (or equivalent results discussion): No analysis or ablation demonstrates why inconsistency in the ModernBERT embedding space should align with human rater disagreement on CEFR ordinal difficulty rather than lexical or semantic features; the link remains an untested assumption that could be confounded by embedding biases.

minor comments (2)

- [Abstract] The abstract mentions 'English CEFR-based sentence difficulty assessment' but does not clarify the exact CEFR levels used or how sentences were sampled; a brief description would improve reproducibility.

- [Methods] Notation for the embedding model and rating set is introduced without a clear equation or diagram; adding a small figure or formal notation would aid clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. The comments highlight important areas for improving clarity, completeness, and evidential support. We address each major comment below and have revised the manuscript to incorporate the suggested changes.

read point-by-point responses

-

Referee: [Methods] Methods section: The precise definition and computation of 'geometric consistency' (e.g., which distance metric, aggregation over the rating set, or threshold) is not specified with sufficient formality or pseudocode; without this, it is impossible to assess whether the measure genuinely exploits ordinal structure or simply captures embedding artifacts.

Authors: We agree that the original Methods section lacked sufficient formality. In the revised manuscript we have added a precise mathematical definition: geometric consistency is defined as the negative mean pairwise cosine distance among the ModernBERT embeddings of the LLM-generated ordinal ratings for a given sentence. We specify the aggregation (mean over all pairs), the distance metric (cosine), and threshold selection (optimized on a validation split to maximize disagreement-prediction AUC). Pseudocode for the full procedure is now included as an algorithm box. This formulation directly ties the measure to ordinal dispersion rather than arbitrary embedding properties. revision: yes

-

Referee: [Experiments] Experiments section: The abstract and results claim higher AUC, but no dataset size, number of sentences/ratings, exact AUC values with confidence intervals, or statistical significance tests (e.g., DeLong test) for the comparison against baselines are provided; this prevents evaluation of whether the reported gains are reliable or dataset-specific.

Authors: We acknowledge the missing quantitative details. The revised manuscript now reports the complete dataset statistics (number of sentences, total LLM and human ratings, and inter-rater agreement), the exact AUC values for both models, 95% confidence intervals obtained via 1,000 bootstrap replicates, and the results of DeLong tests comparing our method against each probability baseline. These additions allow readers to evaluate both the magnitude and statistical reliability of the reported improvements. revision: yes

-

Referee: [Results] §3 (or equivalent results discussion): No analysis or ablation demonstrates why inconsistency in the ModernBERT embedding space should align with human rater disagreement on CEFR ordinal difficulty rather than lexical or semantic features; the link remains an untested assumption that could be confounded by embedding biases.

Authors: This is a fair observation. While the method operates exclusively on the embeddings of the ratings (independent of sentence text), we agree that explicit controls are needed. The revision adds an ablation that (i) measures correlation between geometric consistency and lexical/semantic covariates (length, lexical complexity, sentence embedding similarity) and (ii) compares performance when using a content-only embedding baseline. We also include qualitative case analysis showing that low-consistency cases predominantly correspond to ordinal boundary disagreements in human ratings. These additions address potential confounds while preserving the post-hoc, model-agnostic nature of the approach. revision: partial

Circularity Check

No circularity in derivation; method is independent heuristic evaluated empirically

full rationale

The paper proposes using geometric consistency of LLM ratings in a separate embedding space (e.g., ModernBERT) to flag potential human disagreement on ordinal CEFR difficulty, explicitly avoiding generation-time probability signals. This is presented as a practical heuristic rather than a derived theorem, with performance measured via AUC against probability-based baselines on two LLMs and one dataset. No equations, self-citations, or fitted parameters are shown that reduce the consistency metric or the disagreement prediction to the inputs by construction; the central claim rests on external empirical comparison rather than tautological renaming or load-bearing self-reference.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Difficulty is an ordinal scale whose ratings exhibit geometric consistency in embedding space that correlates with human agreement.

Reference graph

Works this paper leans on

- [1]

-

[2]

G. H. Chen, S. Chen, Z. Liu, F. Jiang, and B. Wang. Humans or LLMs as the judge? a study on judgement bias. InProceedings of the 2024 Conference on Em- pirical Methods in Natural Language Processing, pages 8301–8327, Miami, Florida, USA, Nov. 2024. Associa- tion for Computational Linguistics

work page 2024

-

[3]

C.-H. Chiang, W.-C. Chen, C.-Y. Kuan, C. Yang, and H.-y. Lee. Large language model as an assignment eval- uator: Insights, feedback, and challenges in a 1000+ student course. InProc. of EMNLP, 2024

work page 2024

-

[4]

T. Deutsch, M. Jasbi, and S. Shieber. Linguistic fea- tures for readability assessment. InProceedings of the Fifteenth Workshop on Innovative Use of NLP for Building Educational Applications, pages 1–17, Seattle, WA, USA→Online, July 2020. Association for Com- putational Linguistics

work page 2020

-

[5]

J. Devlin, M.-W. Chang, K. Lee, and K. Toutanova. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. InProceedings of the 2019 Conference of the North American Chapter of the As- sociation for Computational Linguistics: Human Lan- guage Technologies, Volume 1 (Long and Short Papers), pages 4171–4186, Minneapolis, Minnesota, ...

work page 2019

-

[6]

Y. Ehara. Educational cone model in embedding vector spaces. InProc. of ICCE 2025 (short paper), 2025

work page 2025

-

[7]

K. Ethayarajh. How contextual are contextualized word representations? Comparing the geometry of BERT, ELMo, and GPT-2 embeddings. InProceedings of the 2019 Conference on Empirical Methods in Natu- ral Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP- IJCNLP), pages 55–65, Hong Kong, China, Nov. 2019. As...

work page 2019

-

[8]

H. Hashemi, J. Eisner, C. Rosset, B. Van Durme, and C. Kedzie. LLM-rubric: A multidimensional, calibrated approach to automated evaluation of natural language texts. InProc. of ACL, 2024

work page 2024

-

[9]

P. Manakul, A. Liusie, and M. Gales. SelfCheckGPT: Zero-resource black-box hallucination detection for gen- erative large language models. InProceedings of the 2023 Conference on Empirical Methods in Natural Lan- guage Processing, pages 9004–9017, Singapore, Dec

work page 2023

-

[10]

Association for Computational Linguistics

- [11]

-

[12]

V. Raina, A. Liusie, and M. Gales. Is LLM-as-a-judge robust? investigating universal adversarial attacks on zero-shot LLM assessment. InProceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 7499–7517, Miami, Florida, USA, Nov. 2024. Association for Computational Linguistics

work page 2024

-

[13]

B. Warner, A. Chaffin, B. Clavi´ e, O. Weller, O. Hall- str¨om, S. Taghadouini, A. Gallagher, R. Biswas, F. Lad- hak, T. Aarsen, G. T. Adams, J. Howard, and I. Poli. Smarter, better, faster, longer: A modern bidirectional encoder for fast, memory efficient, and long context finetuning and inference. InProc. of ACL, 2025

work page 2025

-

[14]

A. Yang et al. Qwen3 technical report (https://arxiv. org/abs/2505.09388), 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[15]

L. Zheng, W.-L. Chiang, Y. Sheng, S. Zhuang, Z. Wu, Y. Zhuang, Z. Lin, Z. Li, D. Li, E. P. Xing, H. Zhang, J. E. Gonzalez, and I. Stoica. Judging llm-as-a-judge with mt-bench and chatbot arena. InProc. of NeurIPS, 2023

work page 2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.