Counterfactual Reasoning for Causal Responsibility Attribution in Probabilistic Multi-Agent Systems

Pith reviewed 2026-05-14 02:10 UTC · model grok-4.3

The pith

Shapley values allocate responsibility fairly among agents in stochastic multi-agent games by quantifying retrospective counterfactual impact.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

In concurrent stochastic multi-player games, retrospective counterfactual responsibility quantifies an agent's accountability for an outcome under a given strategy profile by comparing the actual outcome probability to the probability that would have obtained if the agent had unilaterally deviated. Allocating this responsibility via the Shapley value produces a distribution that is fair, in that agents making identical marginal contributions receive identical shares, and consistent, in that the allocation remains stable when the set of agents changes.
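One way to write this comparison down, as a sketch rather than the paper's own definition: let $\sigma$ be the strategy profile, $\omega$ the outcome of interest, and $\Pr^{\sigma}(\omega)$ its probability under $\sigma$. The symbols $\delta_i$ and $\rho_i$, and the choice to aggregate over deviations with a maximum, are assumptions made here for illustration.

```latex
\begin{align*}
\delta_i(\sigma, \sigma_i') &= \Pr^{\sigma}(\omega) - \Pr^{(\sigma_i',\, \sigma_{-i})}(\omega), \\
\rho_i(\sigma) &= \max_{\sigma_i'} \, \delta_i(\sigma, \sigma_i')
\end{align*}
```

Here $(\sigma_i', \sigma_{-i})$ is the profile in which only agent $i$ switches to $\sigma_i'$. A positive $\delta_i$ means that deviation would have made $\omega$ less likely.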

What carries the argument

Retrospective counterfactual responsibility, which measures the marginal effect of an agent's strategy deviation on outcome probabilities, allocated via the Shapley value that averages each agent's contribution across all coalitions.
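The averaging over coalitions can be illustrated with a minimal Python sketch of the standard Shapley value. The function name `shapley_values`, the two agents `"a"` and `"b"`, and the numbers in `TOY_V` are hypothetical; they stand in for whatever responsibility measure the paper's game model would supply.

```python
from itertools import combinations
from math import factorial


def shapley_values(players, v):
    """Return {player: Shapley value} for a characteristic function v(frozenset)."""
    n = len(players)
    phi = {}
    for i in players:
        others = [p for p in players if p != i]
        total = 0.0
        for k in range(n):  # k is the size of a coalition S that excludes i
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                s = frozenset(coalition)
                # Marginal contribution of i when joining coalition S.
                total += weight * (v(s | {i}) - v(s))
        phi[i] = total
    return phi


# Hypothetical characteristic function: responsibility attributable to each coalition.
TOY_V = {
    frozenset(): 0.0,
    frozenset({"a"}): 0.2,
    frozenset({"b"}): 0.2,
    frozenset({"a", "b"}): 0.6,
}

shares = shapley_values(["a", "b"], lambda s: TOY_V[s])
```

In this toy case the two agents make identical marginal contributions, so they receive identical shares, and the shares add up to the value of the full coalition.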

If this is right

- Responsibility levels can be formally verified within the game model.

- Agents can reach stable strategy profiles by trading off responsibility against expected reward at Nash equilibrium.

- The allocation method applies uniformly to any probabilistic outcome without requiring case-by-case adjustments.

- Fairness and consistency of the allocation follow directly from the properties of the Shapley value.
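The trade-off between responsibility and reward can be sketched on a one-shot, two-agent toy game restricted to pure strategies. Everything specific here is an assumption for illustration and not taken from the paper: the collision probabilities in `P_BAD`, the rewards in `REWARD`, the linear utility `reward - LAMBDA * share`, and the coalition value `v` (largest achievable drop in the bad-outcome probability, with the other agent held at the profile).

```python
from itertools import product

ACTIONS = ("careful", "reckless")

# Hypothetical probability of a collision for each joint action (agent 0, agent 1).
P_BAD = {
    ("careful", "careful"): 0.0,
    ("careful", "reckless"): 0.3,
    ("reckless", "careful"): 0.3,
    ("reckless", "reckless"): 0.9,
}
REWARD = {"careful": 1.0, "reckless": 2.0}  # hypothetical reward for an agent's own action
LAMBDA = 3.0  # hypothetical weight on responsibility


def v(coalition, profile):
    """Largest drop in collision probability the coalition could have achieved."""
    members = sorted(coalition)
    best = P_BAD[profile]
    for deviation in product(ACTIONS, repeat=len(members)):
        joint = list(profile)
        for agent, action in zip(members, deviation):
            joint[agent] = action
        best = min(best, P_BAD[tuple(joint)])
    return P_BAD[profile] - best


def shares(profile):
    """Two-agent Shapley value, written out in closed form."""
    v0, v1, v01 = v({0}, profile), v({1}, profile), v({0, 1}, profile)
    return (0.5 * v0 + 0.5 * (v01 - v1), 0.5 * v1 + 0.5 * (v01 - v0))


def utility(agent, profile):
    return REWARD[profile[agent]] - LAMBDA * shares(profile)[agent]


def pure_nash_equilibria():
    """Profiles from which no agent gains by deviating unilaterally."""
    equilibria = []
    for profile in product(ACTIONS, repeat=2):
        stable = True
        for agent in (0, 1):
            for alternative in ACTIONS:
                deviated = list(profile)
                deviated[agent] = alternative
                if utility(agent, tuple(deviated)) > utility(agent, profile) + 1e-12:
                    stable = False
        if stable:
            equilibria.append(profile)
    return equilibria


equilibria = pure_nash_equilibria()
```

With these numbers the stable profiles are the two asymmetric ones: one agent accepts the responsibility of acting recklessly for the higher reward while the other stays careful. The paper's setting is richer (multi-step stochastic games, possibly randomised strategies), so this only conveys the shape of the trade-off.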

Where Pith is reading between the lines

- The approach could be used to design incentive mechanisms that penalize high-responsibility strategies in safety-critical systems.

- It suggests a way to compare responsibility attributions across different solution concepts beyond Nash equilibrium.

- The framework may help formalise accountability in joint AI decision-making where outcomes are probabilistic.

Load-bearing premise

That responsibility in probabilistic multi-agent systems is fully captured by backward counterfactual comparisons inside a concurrent stochastic game model, with no need for further domain-specific adjustments.

What would settle it

A concrete stochastic game in which the Shapley allocation of retrospective counterfactual responsibility produces shares that contradict clear intuitive judgments of accountability for the same outcome.

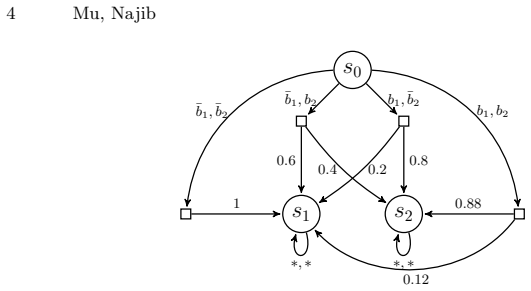

Original abstract

Responsibility allocation -- determining the extent to which agents are accountable for outcomes -- is a fundamental challenge in the design and analysis of multi-agent systems. In this work, we model such systems as concurrent stochastic multi-player games and introduce a notion of retrospective (backward) counterfactual responsibility, which quantifies an agent's accountability for outcomes resulting from a given strategy profile. To allocate responsibility among agents, we utilise the Shapley value and formally show that this method satisfies key desirable properties, including fairness and consistency. Building on this foundation, we propose a formal framework that supports both verification and strategic reasoning in responsibility-aware multi-agent systems. Furthermore, by adopting Nash equilibrium as the solution concept, we demonstrate how to compute stable strategy profiles in which agents trade off responsibility against expected reward.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper models multi-agent systems as concurrent stochastic multi-player games, defines a retrospective counterfactual responsibility measure for a given strategy profile, applies the Shapley value to allocate responsibility, and claims to formally prove that this allocation satisfies fairness and consistency. It then outlines a verification and strategic-reasoning framework and shows how Nash equilibria can be computed that trade off responsibility against expected reward.

Significance. If the formal claims hold, the work supplies a principled, axiomatically grounded method for responsibility attribution in probabilistic MAS that could support accountability analysis in autonomous systems and verification tools. The reliance on the standard Shapley value (rather than ad-hoc weights) and the explicit use of Nash equilibrium for stable profiles are positive features.

major comments (2)

- [section introducing the responsibility measure and Shapley application] The definition of the characteristic function v(S) used for the Shapley value is not made explicit with respect to the behavior of agents outside coalition S. In a concurrent stochastic game, computing the counterfactual outcome for S requires a convention for the strategies of the remaining agents (original profile, equilibrium, or worst-case); without a fixed convention the marginal contributions are not uniquely determined, so the claimed fairness and consistency properties may hold only under additional implicit assumptions rather than in general.

- [proofs of properties] The abstract states that formal proofs of fairness and consistency are supplied, yet the manuscript provides no derivation details, small examples, or error analysis for the retrospective counterfactual responsibility measure. This absence makes it impossible to verify whether the properties survive the stochastic transition structure and the retrospective (backward) counterfactual construction.

minor comments (1)

- The abstract would be clearer if it briefly indicated the exact axioms or properties proven (e.g., efficiency, symmetry, dummy-player) rather than using the generic phrase 'key desirable properties'.
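For orientation, the three axioms the referee names have standard textbook statements. With agent set $N$, $n = |N|$, a characteristic function $v$ with $v(\emptyset) = 0$, and Shapley value $\phi_i(v)$, they read as below. The abstract does not say which of these the paper actually proves, so this is reference material rather than a summary of its results.

```latex
\begin{align*}
\phi_i(v) &= \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(n-|S|-1)!}{n!}\,\bigl(v(S \cup \{i\}) - v(S)\bigr) \\[4pt]
\text{Efficiency:}\quad & \sum_{i \in N} \phi_i(v) = v(N) \\
\text{Symmetry:}\quad & v(S \cup \{i\}) = v(S \cup \{j\}) \;\; \forall S \subseteq N \setminus \{i,j\}
  \;\Longrightarrow\; \phi_i(v) = \phi_j(v) \\
\text{Dummy player:}\quad & v(S \cup \{i\}) = v(S) + v(\{i\}) \;\; \forall S \subseteq N \setminus \{i\}
  \;\Longrightarrow\; \phi_i(v) = v(\{i\})
\end{align*}
```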

Simulated Author's Rebuttal

We thank the referee for the constructive review and for recognizing the potential significance of our framework for responsibility attribution in probabilistic multi-agent systems. We address each major comment below and will revise the manuscript to enhance clarity and accessibility.

Point-by-point responses

- Referee: [section introducing the responsibility measure and Shapley application] The definition of the characteristic function v(S) used for the Shapley value is not made explicit with respect to the behavior of agents outside coalition S. In a concurrent stochastic game, computing the counterfactual outcome for S requires a convention for the strategies of the remaining agents (original profile, equilibrium, or worst-case); without a fixed convention the marginal contributions are not uniquely determined, so the claimed fairness and consistency properties may hold only under additional implicit assumptions rather than in general.

Authors: We thank the referee for highlighting this point. Our retrospective counterfactual responsibility measure defines v(S) by holding the agents outside S to the strategies they follow in the given strategy profile. This convention is required by the retrospective (backward) nature of the definition, which evaluates deviations from the realized play rather than from an equilibrium or worst-case assumption. The formal definition in Section 3 states this explicitly, which ensures the marginal contributions are uniquely determined. We will add a dedicated clarifying paragraph and a small worked example in the revised manuscript to make the convention and its implications for stochastic transitions fully explicit.

Revision: yes
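The convention described in this response can be made concrete with a small Python sketch on a one-shot, two-agent toy game. The collision probabilities in `P_BAD` are hypothetical, and taking v(S) to be the largest drop in the bad-outcome probability that S could have achieved is an assumption made here; the paper's Section 3 definition may differ in detail.

```python
from itertools import product

ACTIONS = ("careful", "reckless")

# Hypothetical probability of a collision for each joint action (agent 0, agent 1).
P_BAD = {
    ("careful", "careful"): 0.0,
    ("careful", "reckless"): 0.3,
    ("reckless", "careful"): 0.3,
    ("reckless", "reckless"): 0.9,
}


def characteristic(coalition, profile):
    """v(S): largest drop in collision probability S could have achieved by
    deviating, with every agent outside S held at its action in `profile`."""
    actual = P_BAD[profile]
    members = sorted(coalition)
    best = actual
    for deviation in product(ACTIONS, repeat=len(members)):
        joint = list(profile)  # agents outside S keep their original actions
        for agent, action in zip(members, deviation):
            joint[agent] = action
        best = min(best, P_BAD[tuple(joint)])
    return actual - best


profile = ("reckless", "reckless")
coalitions = [frozenset(), frozenset({0}), frozenset({1}), frozenset({0, 1})]
v = {s: characteristic(s, profile) for s in coalitions}
```

Because the agents outside S are pinned to the given profile, each v(S) is a single well-defined number, which is the uniqueness the referee asks about. Swapping in an equilibrium or worst-case convention for the outside agents would change the line that initialises `joint`, and generally the resulting values.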

- Referee: [proofs of properties] The abstract states that formal proofs of fairness and consistency are supplied, yet the manuscript provides no derivation details, small examples, or error analysis for the retrospective counterfactual responsibility measure. This absence makes it impossible to verify whether the properties survive the stochastic transition structure and the retrospective (backward) counterfactual construction.

Authors: The formal proofs of fairness and consistency appear in Appendix A, with derivations that account for the probabilistic transition function and the backward counterfactual construction. A small illustrative example demonstrating the properties is given in Section 4. To address the concern about accessibility, we will insert a high-level proof sketch into the main text (near the statement of the properties), expand the discussion of how the axioms hold under stochasticity, and add a brief error-analysis paragraph for the finite-horizon case in the revised version.

Revision: yes

Circularity Check

No circularity: standard Shapley value applied to independently defined responsibility measure

Full rationale

The paper defines retrospective counterfactual responsibility directly from the concurrent stochastic game model and strategy profile. It then applies the standard Shapley value to this measure and proves the usual fairness/consistency axioms hold for the resulting allocation. This is a direct application of a known operator to a new set function v(S); the axioms follow from the definition of Shapley value rather than from any self-referential equation, fitted parameter, or self-citation chain. No step reduces the claimed result to its own inputs by construction.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Multi-agent systems can be modeled as concurrent stochastic multi-player games

- standard math: Shapley value satisfies fairness and consistency when applied to retrospective counterfactual responsibility

invented entities (1)

- retrospective counterfactual responsibility: no independent evidence

