Recognition: 2 theorem links

· Lean TheoremBanach density of generated languages: Dichotomies in topology and dimension

Pith reviewed 2026-05-13 21:16 UTC · model grok-4.3

The pith

In one dimension, generative algorithms achieve lower Banach density 1/2 for language collections of finite Cantor-Bendixson rank but must produce zero density for some infinite-rank collections.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that Banach density, unlike asymptotic density, detects a topological dichotomy in one-dimensional embeddings: finite Cantor-Bendixson rank permits algorithms to generate subsets of the true language with lower Banach density 1/2, while infinite rank admits languages for which no algorithm succeeds in achieving positive lower density, forcing the generated set to contain arbitrarily large sparse intervals. In higher dimensions the positive result requires the additional nondegeneracy assumption that the language is sufficiently represented across the entire space.

What carries the argument

Banach density on the d-dimensional embedding of generated strings, together with the Cantor-Bendixson rank of the space of all possible languages.

If this is right

- Optimal lower Banach density of exactly 1/2 is attainable in one dimension whenever the language space has finite Cantor-Bendixson rank.

- Some infinite-rank collections in one dimension force every generated set to have lower Banach density zero with arbitrarily large gaps.

- Asymptotic density always allows density 1/2 regardless of rank, so the dichotomy is specific to Banach density.

- Analogous positive and negative results hold for the continuous family of densities interpolating between asymptotic and Banach.

- The nondegeneracy condition on the embedding removes the Ramsey-theoretic barrier and restores positive Banach density in dimensions two and higher.

Where Pith is reading between the lines

- Generative algorithms may need explicit mechanisms to detect or adapt to the Cantor-Bendixson rank of the target language space.

- Typical neural embeddings in higher dimensions could be checked empirically for the nondegeneracy property that restores positive density.

- The same rank-based obstruction may appear in other combinatorial generation problems that use sparse-set measures.

- The results invite direct comparison with natural density or other number-theoretic notions of sparseness on embedded strings.

Load-bearing premise

The topological space of languages must have finite Cantor-Bendixson rank for any algorithm to guarantee lower Banach density 1/2 in one dimension.

What would settle it

Exhibit one concrete language collection of infinite Cantor-Bendixson rank in one dimension such that every generation algorithm produces a set whose lower Banach density is zero, confirmed by the existence of arbitrarily large intervals containing arbitrarily small fractions of generated points.

Figures

read the original abstract

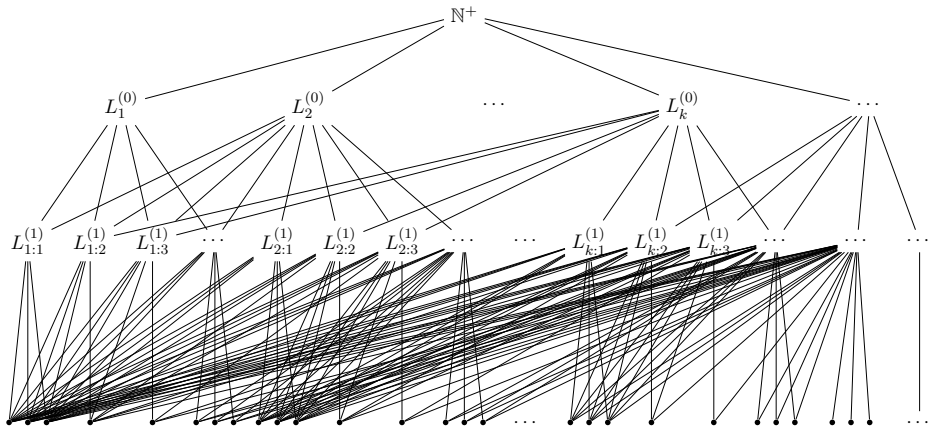

The formalism of language generation in the limit studies generative models by requiring an algorithm, given strings from a hidden true language, to eventually generate new valid strings. A core issue is the tension between validity and breadth. Prior work quantified breadth via asymptotic density, where the priority is generating strings early in a natural countable ordering. Here, we study density when the strings are embedded in $d$ dimensions, a ubiquitous structure in current generative models. Our goal is for the generated strings to be dense throughout the embedding. This requires a different measure, the Banach density, which captures whether a set contains large sparse regions. Using Banach density uncovers a rich structure based on dimension and the topology of the language collection. We prove that in dimension one, when the underlying topological space has finite Cantor-Bendixson rank, an algorithm can always generate a subset of the true language with an optimal lower Banach density of 1/2. However, for collections with infinite Cantor-Bendixson rank, there are cases where no algorithm can achieve any positive lower Banach density; the generated set must contain arbitrarily large, sparse regions. This reveals a topological contrast unseen with asymptotic density, where 1/2 is always achievable. We also extend our results to a family of measures interpolating between Banach and asymptotic density. Finally, in dimension $d \geq 2$, our positive result for Banach density encounters a Ramsey-theoretic obstacle regarding two-colored point sets. Overcoming this requires a nondegeneracy condition: the embedding of the true language must be sufficiently represented throughout the full $d$-dimensional space.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper examines Banach density for languages generated in the limit, with strings embedded in d-dimensional spaces. It claims that in dimension 1, when the space has finite Cantor-Bendixson rank, an algorithm exists generating a subset of the true language with lower Banach density 1/2; for infinite rank, no algorithm achieves positive lower Banach density. For d ≥ 2, a nondegeneracy condition on the embedding is required to surmount a Ramsey-theoretic obstacle on two-colored point sets and obtain positive density results. The work also considers interpolating measures between Banach and asymptotic density.

Significance. If the central claims hold, the results establish dimension- and topology-dependent dichotomies in density that are invisible under asymptotic density, where 1/2 is always achievable. This provides a more refined tool for analyzing breadth in generative models, particularly those using higher-dimensional embeddings, and highlights the role of Cantor-Bendixson rank in limiting achievable densities.

major comments (2)

- [Abstract / d ≥ 2 results] Abstract and the d ≥ 2 section: the nondegeneracy condition is stated to ensure the true language is 'sufficiently represented throughout the full d-dimensional space' to overcome the Ramsey obstacle on two-colored point sets. However, the formulation risks being only local; if the embedding representation fails to interact globally with arbitrary colorings, the positive Banach-density result may not follow. Explicit verification or a counterexample confirming sufficiency is needed, as this condition is load-bearing for the d=1 vs. d≥2 dichotomy.

- [Infinite-rank case (likely §4)] The infinite Cantor-Bendixson rank negative result: the claim that generated sets must contain arbitrarily large sparse regions (preventing positive lower Banach density) depends on the precise construction of the topological space and the algorithm's interaction with it. Without the full derivation, it is unclear whether the Ramsey application in the proof avoids circularity with the density definition.

minor comments (2)

- [Abstract] Clarify why 1/2 is 'optimal' in the finite-rank d=1 case; is it the best possible constant, or merely achievable?

- [Measures section] Notation for the interpolating family of measures between Banach and asymptotic density should be introduced earlier for readability.

Simulated Author's Rebuttal

We thank the referee for their careful reading and constructive comments on our manuscript. We address each major comment below and will revise the paper accordingly to improve clarity and rigor.

read point-by-point responses

-

Referee: [Abstract / d ≥ 2 results] Abstract and the d ≥ 2 section: the nondegeneracy condition is stated to ensure the true language is 'sufficiently represented throughout the full d-dimensional space' to overcome the Ramsey obstacle on two-colored point sets. However, the formulation risks being only local; if the embedding representation fails to interact globally with arbitrary colorings, the positive Banach-density result may not follow. Explicit verification or a counterexample confirming sufficiency is needed, as this condition is load-bearing for the d=1 vs. d≥2 dichotomy.

Authors: We appreciate the referee's observation that the nondegeneracy condition must be shown to operate globally. The condition is defined to require that the embedding of the true language intersects every sufficiently large ball in the d-dimensional space in a manner that precludes uniform application of the Ramsey obstruction across arbitrary 2-colorings. In the revision we will add a precise formal definition, a proof sketch verifying sufficiency for the positive Banach-density result, and a counterexample showing failure when the condition is violated locally but not globally. revision: yes

-

Referee: [Infinite-rank case (likely §4)] The infinite Cantor-Bendixson rank negative result: the claim that generated sets must contain arbitrarily large sparse regions (preventing positive lower Banach density) depends on the precise construction of the topological space and the algorithm's interaction with it. Without the full derivation, it is unclear whether the Ramsey application in the proof avoids circularity with the density definition.

Authors: We thank the referee for noting the need for a fully explicit derivation. The proof first invokes the infinite Cantor-Bendixson rank to produce a nested sequence of clopen sets whose diameters force arbitrarily large sparse regions in any infinite subset; only after this topological construction is complete is the Ramsey argument applied to show that any generated set must intersect these regions in a way that creates large gaps. This ordering eliminates circularity with the Banach-density definition. The revised §4 will contain the complete step-by-step derivation with explicit remarks on the logical order. revision: yes

Circularity Check

No circularity: results follow from standard definitions and topological proofs

full rationale

The paper derives its dichotomies for Banach density in language generation directly from the definitions of Banach density, Cantor-Bendixson rank, and the stated nondegeneracy condition, without any fitted parameters, self-referential equations, or load-bearing self-citations. The positive result for finite rank in dimension one and the negative result for infinite rank are established via explicit constructions and Ramsey-theoretic arguments that do not reduce to the inputs by construction. The d >= 2 case explicitly invokes an additional assumption rather than smuggling it in via prior work. All steps are self-contained against external mathematical benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- standard math Banach density is well-defined for subsets of d-dimensional grids and satisfies the usual monotonicity and translation-invariance properties.

- standard math Cantor-Bendixson rank is a standard ordinal-valued measure of topological complexity for countable spaces.

Lean theorems connected to this paper

-

IndisputableMonolith/Foundation/AlexanderDuality.leanalexander_duality_circle_linking unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

Theorem 1.2: algorithm achieves lower Banach density 1/2 precisely when Cantor-Bendixson rank is finite; infinite rank permits zero-density constructions via perfect towers.

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

Definition 2.2 and Claim 2.5: f-window densities interpolate between asymptotic and Banach; Banach is the minimal member when f(k)=∞.

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Forward citations

Cited by 1 Pith paper

-

Contrastive Identification and Generation in the Limit

Contrastive pair presentations yield exact identifiability characterizations via a geometric refinement of Angluin's condition, a new contrastive closure dimension for generation, mutual incomparability with text iden...

Reference graph

Works this paper leans on

-

[1]

Spencer.The Probabilistic Method

Noga Alon and Joel H. Spencer.The Probabilistic Method. John Wiley & Sons, 3rd edition, 2008

work page 2008

-

[2]

D. Angluin. Finding patterns common to a set of strings. InProceedings of the 11th annual ACM Symposium on Theory of Computing, pages 130–141, 1979

work page 1979

-

[3]

D. Angluin. Inductive inference of formal languages from positive data.Information and Control, 45(2):117–135, 1980

work page 1980

-

[4]

M. Arjovsky and L. Bottou. Towards principled methods for training generative adversarial networks. InInternational Conference on Learning Representations (ICLR), 2017

work page 2017

-

[5]

M. Arjovsky, S. Chintala, and L. Bottou. InInternational Conference on Machine Learning (ICML), 2017

work page 2017

-

[6]

Language generation in the limit: Noise, loss, and feedback.arXiv preprint arXiv:2507.15319, 2025

Yannan Bai, Debmalya Panigrahi, and Ian Zhang. Language generation in the limit: Noise, loss, and feedback.arXiv preprint arXiv:2507.15319, 2025

-

[7]

Pl¨ unnecke inequalities for countable abelian groups

Michael Bjorklund and Alexander Fish. Pl¨ unnecke inequalities for countable abelian groups. arXiv: Dynamical Systems, 2013. 65

work page 2013

-

[8]

M. Charikar and C. Pabbaraju. Exploring facets of language generation in the limit.arXiv preprint arXiv:2411.15364, 2024

-

[9]

Theoretical limitations of multi-layer trans- former

Lijie Chen, Binghui Peng, and Hongxun Wu. Theoretical limitations of multi-layer trans- former. In66th IEEE Annual Symposium on Foundations of Computer Science, FOCS 2025, Sydney, Australia, December 14-17, 2025, pages 2631–2653. IEEE, 2025

work page 2025

-

[10]

Xiaomin Chen, J´ anos Pach, Mario Szegedy, and G´ abor Tardos. Delaunay graphs of point sets in the plane with respect to axis-parallel rectangles.Random Structures & Algorithms, 34, 2008

work page 2008

-

[11]

Esther Ezra. A size-sensitive discrepancy bound for set systems of bounded primal shatter dimension.SIAM Journal on Computing, 45(1):84–101, 2016

work page 2016

-

[12]

Ruzsa.Combinatorial Number Theory and Additive Group Theory

Alfred Geroldinger and Imre Z. Ruzsa.Combinatorial Number Theory and Additive Group Theory. Advanced Courses in Mathematics - CRM Barcelona. Birkh¨ auser Basel, 1 edition, 2009

work page 2009

-

[13]

E. M. Gold. Language identification in the limit.Information and Control, 10(5):447–474, 1967

work page 1967

-

[14]

On union-closedness of language generation.arXiv preprint arXiv:2506.18642, 2025

Steve Hanneke, Amin Karbasi, Anay Mehrotra, and Grigoris Velegkas. On union-closedness of language generation.arXiv preprint arXiv:2506.18642, 2025

-

[15]

The curious case of neural text degeneration

Ari Holtzman, Jan Buys, Li Du, Maxwell Forbes, and Yejin Choi. The curious case of neural text degeneration. In8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, April 26-30, 2020. OpenReview.net, 2020

work page 2020

-

[16]

A. Kalai and S. Vempala. Calibrated language models must hallucinate. InACM Symposium on Theory of Computing (STOC), 2024

work page 2024

-

[17]

A. Kalavasis, A. Mehrotra, and G. Velegkas. On the limits of language generation: Trade- offs between hallucination and mode-collapse. InProceedings of the 57th annual ACM Symposium on Theory of Computing, 2025

work page 2025

-

[18]

Characterizations of language generation with breadth.arXiv preprint arXiv:2412.18530, 2024

Alkis Kalavasis, Anay Mehrotra, and Grigoris Velegkas. Characterizations of language generation with breadth.arXiv preprint arXiv:2412.18530, 2024

-

[19]

A. S. Kechris.Classical Descriptive Set Theory, volume 156 ofGraduate Texts in Mathe- matics. Springer-Verlag, New York, 1995

work page 1995

-

[20]

Jon Kleinberg and Sendhil Mullainathan. Language generation in the limit.NeurIPS 2024, The 38th Annual Conference on Neural Information Processing Systems, 2024

work page 2024

-

[21]

Density measures for language generation

Jon Kleinberg and Fan Wei. Density measures for language generation. In66th Annual Symposium on Foundations of Computer Science (FOCS 2025, 2025. 66

work page 2025

-

[22]

Jon Kleinberg and Fan Wei. Language generation and identification from partial enumer- ation: Tight density bounds and topological characterizations. InSTOC 2026, 2025

work page 2026

-

[23]

Generation through the lens of learning theory

Jiaxun Li, Vinod Raman, and Ambuj Tewari. Generation through the lens of learning theory. InCOLT, 2025

work page 2025

-

[24]

Factorization norms and hereditary discrepancy.ArXiv, abs/1408.1376, 2014

Jiˇ r´ ı Matouˇ sek, Aleksandar Nikolov, and Kunal Talwar. Factorization norms and hereditary discrepancy.ArXiv, abs/1408.1376, 2014

-

[25]

Efficient estimation of word representations in vector space

Tom´ as Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean. Efficient estimation of word representations in vector space. In Yoshua Bengio and Yann LeCun, editors,1st Inter- national Conference on Learning Representations, ICLR 2013, Scottsdale, Arizona, USA, May 2-4, 2013, Workshop Track Proceedings, 2013

work page 2013

-

[26]

Power laws, pareto distributions and zipf’s law.Contemporary physics, 46(5):323–351, 2005

Mark EJ Newman. Power laws, pareto distributions and zipf’s law.Contemporary physics, 46(5):323–351, 2005

work page 2005

-

[27]

B. Peng, S. Narayanan, and C. Papadimitriou. On limitations of the transformer architec- ture. InConference on Language Modeling, 2024

work page 2024

-

[28]

Jeffrey Pennington, Richard Socher, and Christopher D. Manning. Glove: Global vectors for word representation. In Alessandro Moschitti, Bo Pang, and Walter Daelemans, editors, Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing, EMNLP 2014, October 25-29, 2014, Doha, Qatar, A meeting of SIGDAT, a Special Interest Group o...

work page 2014

-

[29]

Fernando C. N. Pereira, Naftali Tishby, and Lillian Lee. Distributional clustering of english words. In Lenhart K. Schubert, editor,31st Annual Meeting of the Association for Com- putational Linguistics, 22-26 June 1993, Ohio State University, Columbus, Ohio, USA, Proceedings, pages 183–190. ACL, 1993

work page 1993

-

[30]

Generation from noisy examples

Ananth Raman and Vinod Raman. Generation from noisy examples. InICML, 2025

work page 2025

-

[31]

C. Sanford, D. J. Hsu, and M. Telgarsky. Representational strengths and limitations of transformers. InAdvances in Neural Information Processing Systems (NeurIPS), 2023

work page 2023

-

[32]

Evaluating the diversity and quality of LLM generated content.CoRR, abs/2504.12522, 2025

Alexander Shypula, Shuo Li, Botong Zhang, Vishakh Padmakumar, Kayo Yin, and Os- bert Bastani. Evaluating the diversity and quality of LLM generated content.CoRR, abs/2504.12522, 2025

-

[33]

Lena Strobl, William Merrill, Gail Weiss, David Chiang, and Dana Angluin. What for- mal languages can transformers express? a survey.Transactions of the Association for Computational Linguistics, 12:543–561, 2024

work page 2024

-

[34]

Jiayi Zhang, Simon Yu, Derek Chong, Anthony Sicilia, Michael R. Tomz, Christopher D. Manning, and Weiyan Shi. Verbalized sampling: How to mitigate mode collapse and unlock LLM diversity.CoRR, abs/2510.01171, 2025. 67 6 Appendix Lemma 6.1.Letδ f(A)denote thef-window lower density of a setA⊆N. For linear growth f(k) =ckwithc >0, we have lim c→∞ δck(A) =d P ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.