Completely Independent Steiner Trees

The condition demands internally vertex- and edge-disjoint paths between every terminal pair, supporting new bounds and algorithms on planar

Abstract


Spanning trees are fundamental for efficient communication in networks. For fault-tolerant communication, it is desirable to have multiple spanning trees to ensure resilience against failures of nodes and edges. To this end, various notions of disjoint or independent spanning trees have been studied, including edge-disjoint, node/edge-independent, and completely independent spanning trees. Alongside these, several Steiner variants have also been investigated, where the trees are required to span a designated subset of vertices called terminals. For instance, the study of edge-disjoint spanning trees has been extended to edge-disjoint Steiner trees; a stronger variant is the problem of internally disjoint Steiner trees, where any two Steiner trees intersect exactly in the terminals.

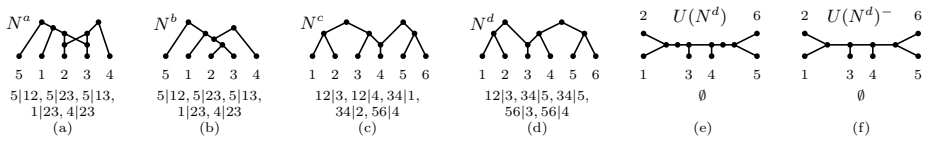

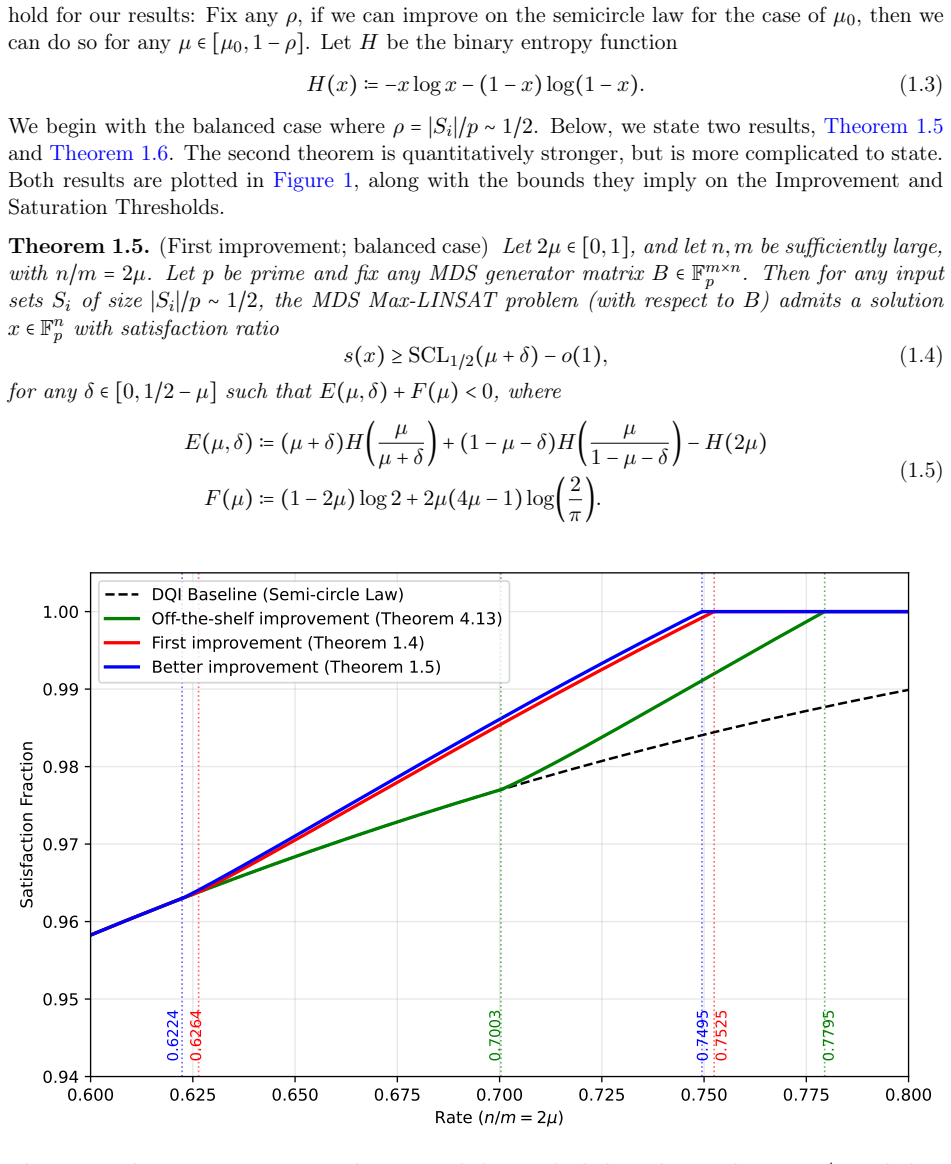

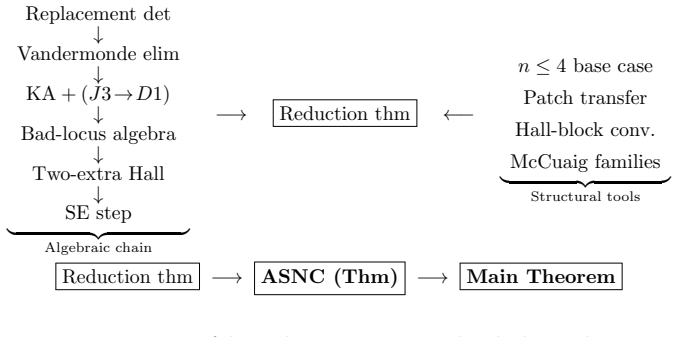

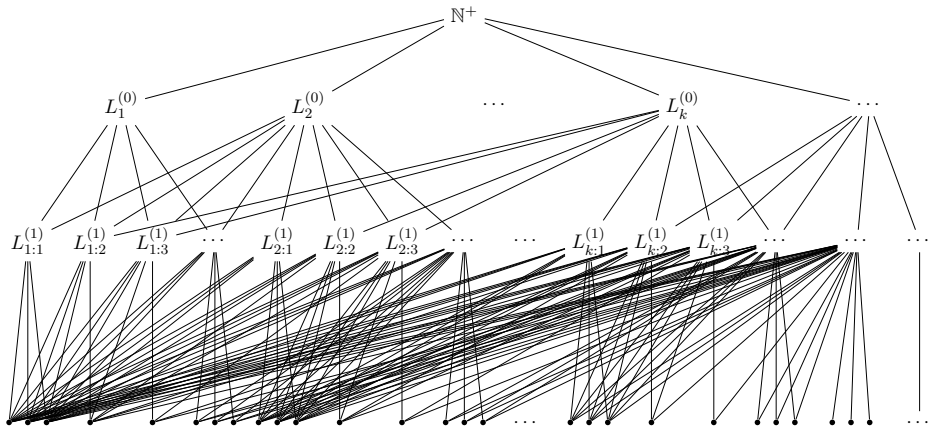

In this paper, we investigate the Steiner analogue of completely independent spanning trees, which we call *completely independent Steiner trees*. A set of Steiner trees is completely independent if, for every pair of terminals $u,v$, the $(u,v)$-paths in all the Steiner trees are internally vertex-disjoint and edge-disjoint. This notion generalizes both completely independent spanning trees and internally disjoint Steiner trees. We provide a systematic study of completely independent Steiner trees from structural, algorithmic, and complexity-theoretic perspectives. In particular, we present several characterisations, connectivity bounds, algorithms, hardness results, and applications to special graph classes such as planar graphs and graphs of bounded treewidth. Along the way, we also introduce a directed variant of completely independent spanning trees via an equivalence with completely independent Steiner trees.
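The definition of complete independence can be checked directly on small instances. The following is a minimal brute-force sketch, not an algorithm from the paper. The function names `tree_path` and `is_completely_independent` and the edge-list representation are illustrative assumptions. The sketch also assumes each input really is a tree containing all terminals, and does not verify this.

```python
from collections import deque
from itertools import combinations


def tree_path(edges, u, v):
    """Return the unique u-v path (as a vertex list) in a tree given by its edge list."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, []).append(b)
        adj.setdefault(b, []).append(a)
    # Breadth-first search from u, recording each vertex's parent.
    parent = {u: None}
    queue = deque([u])
    while queue:
        x = queue.popleft()
        if x == v:
            break
        for y in adj.get(x, []):
            if y not in parent:
                parent[y] = x
                queue.append(y)
    if v not in parent:
        raise ValueError(f"{v!r} is not reachable from {u!r} in this tree")
    # Walk back from v to u along parent pointers.
    path = [v]
    while parent[path[-1]] is not None:
        path.append(parent[path[-1]])
    return path[::-1]


def is_completely_independent(trees, terminals):
    """Check the definition by brute force over all terminal pairs and tree pairs.

    Assumption (not verified here): every element of `trees` is the edge list
    of a tree that contains all of `terminals`.
    """
    for u, v in combinations(terminals, 2):
        paths = [tree_path(t, u, v) for t in trees]
        for p, q in combinations(paths, 2):
            # Internally vertex-disjoint: no shared vertex other than u and v.
            if set(p[1:-1]) & set(q[1:-1]):
                return False
            # Edge-disjoint: needed separately, e.g. when both paths are the edge uv.
            p_edges = {frozenset(e) for e in zip(p, p[1:])}
            q_edges = {frozenset(e) for e in zip(q, q[1:])}
            if p_edges & q_edges:
                return False
    return True


# Toy instance with terminals a and b (hypothetical data for illustration).
t1 = [("a", "x"), ("x", "b")]
t2 = [("a", "y"), ("y", "b")]
t3 = [("a", "x"), ("x", "y"), ("y", "b")]

ok = is_completely_independent([t1, t2], ["a", "b"])            # paths a-x-b and a-y-b
shared_vertex = is_completely_independent([t1, t3], ["a", "b"])  # both paths use x
shared_edge = is_completely_independent([[("a", "b")], [("a", "b")]], ["a", "b"])
```

In this toy instance, `t1` and `t2` satisfy the condition, `t1` and `t3` fail because both paths pass through `x`, and the last pair fails only on the edge condition. That last case shows why edge-disjointness is stated in addition to internal vertex-disjointness.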