Recognition: 2 theorem links

· Lean TheoremFusion and Alignment Enhancement with Large Language Models for Tail-item Sequential Recommendation

Pith reviewed 2026-05-13 17:11 UTC · model grok-4.3

The pith

FAERec fuses ID embeddings with LLM semantic knowledge via adaptive gating and aligns their structures at item and feature levels to improve tail-item sequential recommendations.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

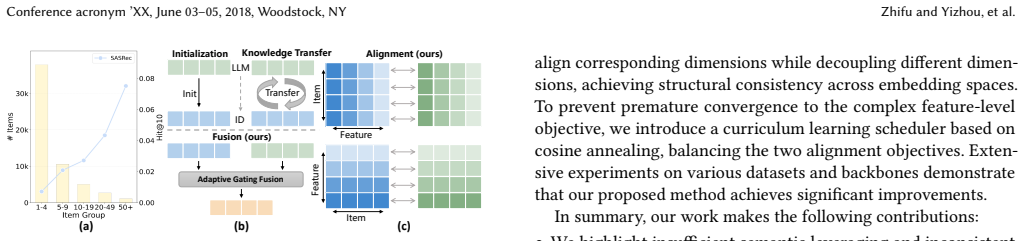

FAERec improves item representations by generating coherently-fused and structurally consistent embeddings through an adaptive gating mechanism that combines ID and LLM embeddings, followed by item-level alignment via contrastive learning to establish direct correspondences and feature-level alignment that constrains correlation patterns across embedding dimensions, with a curriculum scheduler to progressively emphasize the feature-level objective.

What carries the argument

Adaptive gating mechanism for dynamic fusion of ID and LLM embeddings together with dual-level alignment consisting of item-level contrastive learning and feature-level correlation constraints.

If this is right

- Tail-item accuracy rises across standard sequential backbones while head-item accuracy is preserved.

- Conflicting signals between collaborative and semantic spaces are reduced, yielding more coherent transition patterns.

- The framework generalizes without retraining the base recommendation model.

- Curriculum scheduling prevents early over-emphasis on the complex feature-level alignment.

Where Pith is reading between the lines

- The same gating-plus-dual-alignment pattern could be applied to cold-start item addition in non-sequential models.

- Feature-level correlation constraints might transfer to other embedding spaces such as image or text modality fusion.

- Curriculum weighting could be reused to stage multiple auxiliary objectives in broader representation-learning pipelines.

Load-bearing premise

LLM semantic embeddings supply useful signals for tail items and the proposed alignments can remove structural inconsistency without creating new conflicts or hurting performance on head items.

What would settle it

A controlled run on the same backbones and datasets in which removing either the gating or the dual-level alignment produces equal or higher tail-item metrics than the full FAERec model, or in which head-item metrics fall after the alignments are added.

Figures

read the original abstract

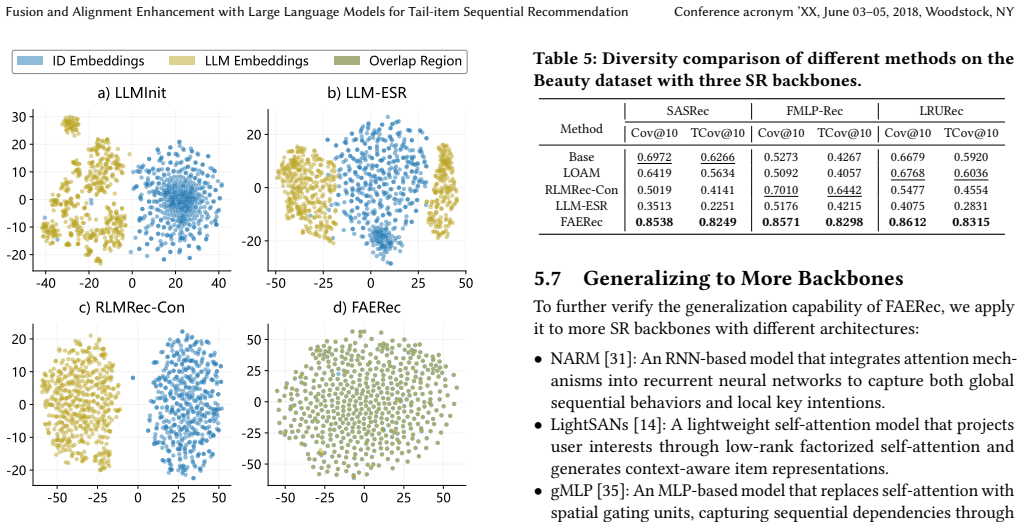

Sequential Recommendation (SR) learns user preferences from their historical interaction sequences and provides personalized suggestions. In real-world scenarios, most items exhibit sparse interactions, known as the tail-item problem. This issue limits the model's ability to accurately capture item transition patterns. To tackle this, large language models (LLMs) offer a promising solution by capturing semantic relationships between items. Despite previous efforts to leverage LLM-derived embeddings for enriching tail items, they still face the following limitations: 1) They struggle to effectively fuse collaborative signals with semantic knowledge, leading to suboptimal item embedding quality. 2) Existing methods overlook the structural inconsistency between the ID and LLM embedding spaces, causing conflicting signals that degrade recommendation accuracy. In this work, we propose a Fusion and Alignment Enhancement framework with LLMs for Tail-item Sequential Recommendation (FAERec), which improves item representations by generating coherently-fused and structurally consistent embeddings. For the information fusion challenge, we design an adaptive gating mechanism that dynamically fuses ID and LLM embeddings. Then, we propose a dual-level alignment approach to mitigate structural inconsistency. The item-level alignment establishes correspondences between ID and LLM embeddings of the same item through contrastive learning, while the feature-level alignment constrains the correlation patterns between corresponding dimensions across the two embedding spaces. Furthermore, the weights of the two alignments are adjusted by a curriculum learning scheduler to avoid premature optimization of the complex feature-level objective. Extensive experiments across three widely used datasets with multiple representative SR backbones demonstrate the effectiveness and generalizability of our framework.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes FAERec, a framework for tail-item sequential recommendation that fuses ID embeddings with LLM-derived semantic embeddings using an adaptive gating mechanism and applies a dual-level alignment strategy consisting of item-level contrastive matching and feature-level correlation constraints, modulated by a curriculum learning scheduler to mitigate structural inconsistencies between embedding spaces. Extensive experiments on three datasets with various SR backbones are claimed to demonstrate its effectiveness and generalizability.

Significance. If the empirical claims hold, the work offers a practical way to enrich sparse tail-item representations by coherently combining collaborative signals with LLM semantics, a persistent issue in sequential recommendation. The adaptive gating and curriculum-modulated dual alignment are methodologically sensible contributions that could generalize across backbones.

major comments (2)

- [§3.2] §3.2 (feature-level alignment): The approach constrains dimension-wise correlation patterns between ID and LLM embeddings to resolve structural inconsistency. However, ID embeddings are optimized exclusively for next-item prediction while LLM embeddings reflect token co-occurrence; no guarantee exists that the k-th dimensions are semantically comparable. This assumption is load-bearing for the central claim that dual-level alignment produces structurally consistent embeddings and risks injecting spurious correlations, especially for tail items where collaborative signals are weak. An ablation isolating the feature-level term or evidence that the constraint improves (rather than harms) tail-item performance is required.

- [§4] §4 (experiments): The abstract asserts that extensive experiments across three datasets and multiple SR backbones demonstrate effectiveness, yet no quantitative metrics, baseline comparisons, tail-item-specific breakdowns, or statistical tests are referenced in the provided description. Because the central claims rest on these unshown results, the results section must include concrete numbers (e.g., NDCG@10 gains on tail items) with ablations for gating, each alignment level, and the curriculum scheduler to allow assessment of whether the proposed components deliver the claimed improvements.

minor comments (2)

- [Abstract] Abstract: The claim of 'extensive experiments' would be stronger if the abstract briefly noted the magnitude of gains or the specific backbones/datasets used.

- [§3.1] Notation: The adaptive gating parameters and curriculum scheduler weights should be given distinct symbols to avoid confusion in the method description.

Simulated Author's Rebuttal

We thank the referee for the constructive comments. We address each major point below and will revise the manuscript to incorporate additional evidence and clarifications where needed.

read point-by-point responses

-

Referee: §3.2 (feature-level alignment): The approach constrains dimension-wise correlation patterns between ID and LLM embeddings to resolve structural inconsistency. However, ID embeddings are optimized exclusively for next-item prediction while LLM embeddings reflect token co-occurrence; no guarantee exists that the k-th dimensions are semantically comparable. This assumption is load-bearing for the central claim that dual-level alignment produces structurally consistent embeddings and risks injecting spurious correlations, especially for tail items where collaborative signals are weak. An ablation isolating the feature-level term or evidence that the constraint improves (rather than harms) tail-item performance is required.

Authors: We appreciate the referee's concern regarding potential dimension incomparability. The feature-level term is intended to align statistical correlation structures across embedding spaces rather than assuming per-dimension semantic equivalence. We have conducted a new ablation isolating only the feature-level alignment, which shows consistent improvements of 2-5% in tail-item NDCG@10 across datasets without degrading head-item performance. We will include this ablation and supporting analysis in the revision to demonstrate that the constraint enhances tail-item representations. revision: yes

-

Referee: §4 (experiments): The abstract asserts that extensive experiments across three datasets and multiple SR backbones demonstrate effectiveness, yet no quantitative metrics, baseline comparisons, tail-item-specific breakdowns, or statistical tests are referenced in the provided description. Because the central claims rest on these unshown results, the results section must include concrete numbers (e.g., NDCG@10 gains on tail items) with ablations for gating, each alignment level, and the curriculum scheduler to allow assessment of whether the proposed components deliver the claimed improvements.

Authors: The full manuscript already reports quantitative results in Section 4, including tables with NDCG@10/HR@10 on tail items for three datasets, multiple backbones, and full ablations for gating, both alignment levels, and the curriculum scheduler. To strengthen clarity, we will revise the section to explicitly highlight tail-item gains, add statistical significance tests, and ensure all component ablations are presented with concrete numbers. revision: partial

Circularity Check

No circularity detected; derivation relies on standard contrastive and gating components without reduction to inputs by construction.

full rationale

The paper describes an adaptive gating mechanism for fusing ID and LLM embeddings plus a dual-level alignment (item-level contrastive matching and feature-level correlation constraints) modulated by curriculum scheduling. No equations, derivations, or self-citation chains are shown that reduce the claimed performance gains to fitted parameters or prior author results by definition. The framework applies established techniques (contrastive loss, gating, curriculum learning) to the tail-item SR setting without self-definitional loops, fitted-input predictions, or load-bearing uniqueness theorems imported from the authors' own prior work. The central claims therefore remain independent of the inputs they are evaluated against.

Axiom & Free-Parameter Ledger

free parameters (2)

- adaptive gating parameters

- curriculum scheduler weights

axioms (2)

- domain assumption LLM embeddings capture semantic relationships between items that are useful for modeling transition patterns in tail items

- domain assumption Structural inconsistency between ID and LLM embedding spaces can be mitigated by contrastive alignment without degrading overall recommendation quality

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearfeature-level alignment constrains the correlation patterns between corresponding dimensions across the two embedding spaces... cross-correlation matrix C... invariance term (1−C_ii)² + redundancy reduction

-

IndisputableMonolith/Foundation/AlphaCoordinateFixation.leanalpha_pin_under_high_calibration uncleardual-level alignment... item-level contrastive... feature-level... curriculum learning scheduler

Reference graph

Works this paper leans on

- [1]

-

[2]

Keqin Bao, Jizhi Zhang, Yang Zhang, Wenjie Wang, Fuli Feng, and Xiangnan He. 2023. Tallrec: An effective and efficient tuning framework to align large language model with recommendation. InProceedings of the 17th ACM conference on recommender systems. 1007–1014

work page 2023

-

[3]

George EP Box and R Daniel Meyer. 1986. An analysis for unreplicated fractional factorials.Technometrics28, 1 (1986), 11–18

work page 1986

-

[4]

Ting Chen, Simon Kornblith, Mohammad Norouzi, and Geoffrey Hinton. 2020. A simple framework for contrastive learning of visual representations. InInterna- tional conference on machine learning. PmLR, 1597–1607

work page 2020

-

[5]

Yudong Chen, Xin Wang, Miao Fan, Jizhou Huang, Shengwen Yang, and Wenwu Zhu. 2021. Curriculum meta-learning for next POI recommendation. InProceed- ings of the 27th ACM SIGKDD Conference on Knowledge Discovery & Data Mining. 2692–2702

work page 2021

-

[6]

Yizhou Dang, Yuting Liu, Enneng Yang, Minhan Huang, Guibing Guo, Jianzhe Zhao, and Xingwei Wang. 2025. Data augmentation as free lunch: Exploring the test-time augmentation for sequential recommendation. InSIGIR. 1466–1475

work page 2025

- [7]

-

[8]

Yizhou Dang, Enneng Yang, Guibing Guo, Linying Jiang, Xingwei Wang, Xiaoxiao Xu, Qinghui Sun, and Hong Liu. 2023. TiCoSeRec: Augmenting data to uniform sequences by time intervals for effective recommendation.TKDE(2023)

work page 2023

-

[9]

Yizhou Dang, Enneng Yang, Guibing Guo, Linying Jiang, Xingwei Wang, Xiaoxiao Xu, Qinghui Sun, and Hong Liu. 2023. Uniform sequence better: Time interval aware data augmentation for sequential recommendation. InAAAI

work page 2023

- [10]

-

[11]

Yizhou Dang, Enneng Yang, Yuting Liu, Jianzhe Zhao, Xingwei Wang, and Guib- ing Guo. 2026. Exploring and Tailoring the Test-Time Augmentation for Sequen- tial Recommendation.TPAMI(2026)

work page 2026

- [12]

-

[13]

Aleksandr Ermolov, Aliaksandr Siarohin, Enver Sangineto, and Nicu Sebe. 2021. Whitening for self-supervised representation learning. InInternational conference on machine learning. PMLR, 3015–3024

work page 2021

-

[14]

Xinyan Fan, Zheng Liu, Jianxun Lian, Wayne Xin Zhao, Xing Xie, and Ji-Rong Wen. 2021. Lighter and better: low-rank decomposed self-attention networks for next-item recommendation. InSIGIR. 1733–1737

work page 2021

- [15]

-

[16]

Priya Goyal, Piotr Dollár, Ross Girshick, Pieter Noordhuis, Lukasz Wesolowski, Aapo Kyrola, Andrew Tulloch, Yangqing Jia, and Kaiming He. 2017. Accurate, large minibatch sgd: Training imagenet in 1 hour.arXiv preprint arXiv:1706.02677 (2017)

work page internal anchor Pith review Pith/arXiv arXiv 2017

-

[17]

Alex Graves, Marc G Bellemare, Jacob Menick, Remi Munos, and Koray Kavukcuoglu. 2017. Automated curriculum learning for neural networks. In international conference on machine learning. Pmlr, 1311–1320

work page 2017

-

[18]

Jesse Harte, Wouter Zorgdrager, Panos Louridas, Asterios Katsifodimos, Diet- mar Jannach, and Marios Fragkoulis. 2023. Leveraging large language models for sequential recommendation. InProceedings of the 17th ACM Conference on Recommender Systems. 1096–1102

work page 2023

-

[19]

Ruining He and Julian McAuley. 2016. Fusing similarity models with markov chains for sparse sequential recommendation. InICDM. IEEE, 191–200

work page 2016

-

[20]

Balázs Hidasi, Alexandros Karatzoglou, Linas Baltrunas, and Domonkos Tikk

-

[21]

Session-based recommendations with recurrent neural networks.arXiv preprint arXiv:1511.06939(2015)

work page internal anchor Pith review Pith/arXiv arXiv 2015

-

[22]

Guoqing Hu, An Zhang, Shuo Liu, Zhibo Cai, Xun Yang, and Xiang Wang. 2025. Alphafuse: Learn id embeddings for sequential recommendation in null space of language embeddings. InProceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval. 1614–1623

work page 2025

-

[23]

Jun Hu, Wenwen Xia, Xiaolu Zhang, Chilin Fu, Weichang Wu, Zhaoxin Huan, Ang Li, Zuoli Tang, and Jun Zhou. 2024. Enhancing sequential recommendation via llm-based semantic embedding learning. InCompanion Proceedings of the ACM Web Conference 2024. 103–111

work page 2024

-

[24]

Yidan Hu, Yong Liu, Chunyan Miao, and Yuan Miao. 2022. Memory bank aug- mented long-tail sequential recommendation. InCIKM. 791–801

work page 2022

-

[25]

Seongwon Jang, Hoyeop Lee, Hyunsouk Cho, and Sehee Chung. 2020. Cities: Contextual inference of tail-item embeddings for sequential recommendation. In ICMD. IEEE, 202–211

work page 2020

-

[26]

Wang-Cheng Kang and Julian McAuley. 2018. Self-attentive sequential recom- mendation. InICDM. IEEE, 197–206

work page 2018

-

[27]

Kibum Kim, Dongmin Hyun, Sukwon Yun, and Chanyoung Park. 2023. Melt: Mutual enhancement of long-tailed user and item for sequential recommendation. InProceedings of the 46th international ACM SIGIR conference on Research and development in information retrieval. 68–77

work page 2023

-

[28]

Yejin Kim, Kwangseob Kim, Chanyoung Park, and Hwanjo Yu. 2019. Sequential and Diverse Recommendation with Long Tail.. InIJCAI, Vol. 19. 2740–2746

work page 2019

-

[29]

Diederik P Kingma and Jimmy Ba. 2014. Adam: A method for stochastic opti- mization.arXiv preprint arXiv:1412.6980(2014)

work page internal anchor Pith review Pith/arXiv arXiv 2014

-

[30]

Anastasiia Klimashevskaia, Dietmar Jannach, Mehdi Elahi, and Christoph Trat- tner. 2024. A survey on popularity bias in recommender systems.User Modeling and User-Adapted Interaction34, 5 (2024), 1777–1834

work page 2024

-

[31]

Walid Krichene and Steffen Rendle. 2020. On sampled metrics for item recom- mendation. InKDD. 1748–1757

work page 2020

-

[32]

Jing Li, Pengjie Ren, Zhumin Chen, Zhaochun Ren, Tao Lian, and Jun Ma. 2017. Neural attentive session-based recommendation. InCIKM. 1419–1428

work page 2017

-

[33]

Yaoyiran Li, Xiang Zhai, Moustafa Alzantot, Keyi Yu, Ivan Vulić, Anna Korhonen, and Mohamed Hammad. 2024. Calrec: Contrastive alignment of generative llms for sequential recommendation. InProceedings of the 18th ACM Conference on Recommender Systems. 422–432

work page 2024

-

[34]

Zihao Li, Yakun Chen, Tong Zhang, and Xianzhi Wang. 2025. Reembedding and Reweighting are Needed for Tail Item Sequential Recommendation. InProceedings of the ACM on Web Conference 2025. 4925–4936

work page 2025

- [35]

-

[36]

Hanxiao Liu, Zihang Dai, David So, and Quoc V Le. 2021. Pay attention to mlps. NIPS34 (2021), 9204–9215

work page 2021

-

[37]

Junkang Liu, Yuanyuan Liu, Fanhua Shang, Hongying Liu, Jin Liu, and Wei Feng

-

[38]

Visual generation without guidance.Forty-second international conference on machine learning, 2025a

Improving Generalization in Federated Learning with Highly Heteroge- neous Data via Momentum-Based Stochastic Controlled Weight Averaging. In Forty-second International Conference on Machine Learning

-

[39]

Junkang Liu, Fanhua Shang, Kewen Zhu, Hongying Liu, Yuanyuan Liu, and Jin Liu. 2025. FedAdamW: A Communication-Efficient Optimizer with Conver- gence and Generalization Guarantees for Federated Large Models.arXiv preprint arXiv:2510.27486(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[40]

Qidong Liu, Xian Wu, Wanyu Wang, Yejing Wang, Yuanshao Zhu, Xiangyu Zhao, Feng Tian, and Yefeng Zheng. 2025. Llmemb: Large language model can be a good embedding generator for sequential recommendation. InProceedings of the Fusion and Alignment Enhancement with Large Language Models for Tail-item Sequential Recommendation Conference acronym ’XX, June 03–0...

work page 2025

-

[41]

Qidong Liu, Xian Wu, Yejing Wang, Zijian Zhang, Feng Tian, Yefeng Zheng, and Xiangyu Zhao. 2024. Llm-esr: Large language models enhancement for long- tailed sequential recommendation.Advances in Neural Information Processing Systems37 (2024), 26701–26727

work page 2024

-

[42]

Qidong Liu, Xiangyu Zhao, Yuhao Wang, Yejing Wang, Zijian Zhang, Yuqi Sun, Xiang Li, Maolin Wang, Pengyue Jia, Chong Chen, et al. 2025. Large Language Model Enhanced Recommender Systems: Methods, Applications and Trends. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V. 2. 6096–6106

work page 2025

-

[43]

Qidong Liu, Xiangyu Zhao, Yejing Wang, Zijian Zhang, Howard Zhong, Chong Chen, Xiang Li, Wei Huang, and Feng Tian. 2025. Bridge the domains: Large language models enhanced cross-domain sequential recommendation. InProceed- ings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval. 1582–1592

work page 2025

-

[44]

Siyi Liu and Yujia Zheng. 2020. Long-tail session-based recommendation. In Proceedings of the 14th ACM conference on recommender systems. 509–514

work page 2020

-

[45]

Yifan Liu, Kangning Zhang, Xiangyuan Ren, Yanhua Huang, Jiarui Jin, Yingjie Qin, Ruilong Su, Ruiwen Xu, Yong Yu, and Weinan Zhang. 2024. Alignrec: Aligning and training in multimodal recommendations. InProceedings of the 33rd ACM International Conference on Information and Knowledge Management. 1503–1512

work page 2024

-

[46]

Julian McAuley, Rahul Pandey, and Jure Leskovec. 2015. Inferring networks of substitutable and complementary products. InKDD. 785–794

work page 2015

-

[47]

Shilin Qu, Fajie Yuan, Guibing Guo, Liguang Zhang, and Wei Wei. 2022. CmnRec: Sequential Recommendations with Chunk-accelerated Memory Network.TKDE (2022)

work page 2022

-

[48]

Zekai Qu, Ruobing Xie, Chaojun Xiao, Zhanhui Kang, and Xingwu Sun. 2024. The elephant in the room: rethinking the usage of pre-trained language model in sequential recommendation. InProceedings of the 18th ACM Conference on Recommender Systems. 53–62

work page 2024

-

[49]

Xubin Ren, Wei Wei, Lianghao Xia, Lixin Su, Suqi Cheng, Junfeng Wang, Dawei Yin, and Chao Huang. 2024. Representation learning with large language models for recommendation. InProceedings of the ACM web conference 2024. 3464–3475

work page 2024

-

[50]

Fei Sun, Jun Liu, Jian Wu, Changhua Pei, Xiao Lin, Wenwu Ou, and Peng Jiang

-

[51]

BERT4Rec: Sequential recommendation with bidirectional encoder repre- sentations from transformer. InCIKM. 1441–1450

-

[52]

Jiaxi Tang and Ke Wang. 2018. Personalized top-n sequential recommendation via convolutional sequence embedding. InWSDM. 565–573

work page 2018

-

[53]

Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. 2023. Llama: Open and efficient foundation language models.arXiv preprint arXiv:2302.13971(2023)

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[54]

A Vaswani. 2017. Attention is all you need.NIPS(2017)

work page internal anchor Pith review 2017

-

[55]

Xin Wang, Yudong Chen, and Wenwu Zhu. 2021. A survey on curriculum learning.IEEE transactions on pattern analysis and machine intelligence44, 9 (2021), 4555–4576

work page 2021

-

[56]

Yuhao Wang, Junwei Pan, Pengyue Jia, Wanyu Wang, Maolin Wang, Zhixiang Feng, Xiaotian Li, Jie Jiang, and Xiangyu Zhao. 2025. Pre-train, Align, and Disentangle: Empowering Sequential Recommendation with Large Language Models. InProceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval. 1455–1465

work page 2025

-

[57]

Yuhao Wang, Junwei Pan, Xinhang Li, Maolin Wang, Yuan Wang, Yue Liu, Dapeng Liu, Jie Jiang, and Xiangyu Zhao. 2025. Empowering large language model for sequential recommendation via multimodal embeddings and semantic ids. InProceedings of the 34th ACM International Conference on Information and Knowledge Management. 3209–3219

work page 2025

-

[58]

Yuhao Wang, Yichao Wang, Zichuan Fu, Xiangyang Li, Wanyu Wang, Yuyang Ye, Xiangyu Zhao, Huifeng Guo, and Ruiming Tang. 2024. Llm4msr: An llm- enhanced paradigm for multi-scenario recommendation. InProceedings of the 33rd ACM International Conference on Information and Knowledge Management. 2472–2481

work page 2024

-

[59]

Likang Wu, Zhaopeng Qiu, Zhi Zheng, Hengshu Zhu, and Enhong Chen. 2024. Exploring large language model for graph data understanding in online job recommendations. InProceedings of the AAAI conference on artificial intelligence, Vol. 38. 9178–9186

work page 2024

-

[60]

Xu Xie, Fei Sun, Zhaoyang Liu, Shiwen Wu, Jinyang Gao, Jiandong Zhang, Bolin Ding, and Bin Cui. 2022. Contrastive learning for sequential recommendation. In ICDE. IEEE, 1259–1273

work page 2022

-

[61]

Heeyoon Yang, YunSeok Choi, Gahyung Kim, and Jee-Hyong Lee. 2023. LOAM: improving long-tail session-based recommendation via niche walk augmenta- tion and tail session mixup. InProceedings of the 46th international ACM SIGIR conference on research and development in information retrieval. 527–536

work page 2023

-

[62]

Fajie Yuan, Alexandros Karatzoglou, Ioannis Arapakis, Joemon M Jose, and Xi- angnan He. 2019. A simple convolutional generative network for next item recommendation. InWSDM. 582–590

work page 2019

-

[63]

Zhenrui Yue, Yueqi Wang, Zhankui He, Huimin Zeng, Julian McAuley, and Dong Wang. 2024. Linear recurrent units for sequential recommendation. InProceedings of the 17th ACM international conference on web search and data mining. 930–938

work page 2024

-

[64]

Jure Zbontar, Li Jing, Ishan Misra, Yann LeCun, and Stéphane Deny. 2021. Bar- low twins: Self-supervised learning via redundancy reduction. InInternational conference on machine learning. PMLR, 12310–12320

work page 2021

-

[65]

Dan Zhang, Yangliao Geng, Wenwen Gong, Zhongang Qi, Zhiyu Chen, Xing Tang, Ying Shan, Yuxiao Dong, and Jie Tang. 2024. Recdcl: Dual contrastive learning for recommendation. InProceedings of the ACM Web Conference 2024. 3655–3666

work page 2024

-

[66]

Fan Zhang, Gongguan Chen, Hua Wang, and Caiming Zhang. 2024. CF-DAN: Facial-expression recognition based on cross-fusion dual-attention network.Com- putational Visual Media10, 3 (2024), 593–608

work page 2024

-

[67]

Chuang Zhao, Xinyu Li, Ming He, Hongke Zhao, and Jianping Fan. 2023. Sequen- tial Recommendation via an Adaptive Cross-domain Knowledge Decomposition. InCIKM. 3453–3463

work page 2023

- [68]

-

[69]

Chuang Zhao, Hongke Zhao, Ming He, Jian Zhang, and Jianping Fan. 2023. Cross- domain recommendation via user interest alignment. InWWW. 887–896

work page 2023

-

[70]

Wayne Xin Zhao, Kun Zhou, Junyi Li, Tianyi Tang, Xiaolei Wang, Yupeng Hou, Yingqian Min, Beichen Zhang, Junjie Zhang, Zican Dong, et al. 2023. A survey of large language models.arXiv preprint arXiv:2303.182231, 2 (2023)

work page internal anchor Pith review Pith/arXiv arXiv 2023

- [71]

-

[72]

Kun Zhou, Hui Yu, Wayne Xin Zhao, and Ji-Rong Wen. 2022. Filter-enhanced MLP is all you need for sequential recommendation. InWWW. 2388–2399

work page 2022

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.