Recognition: unknown

Adaptive Riemannian Manifold Hamiltonian Monte Carlo with Hierarchical Metric

Pith reviewed 2026-05-10 16:05 UTC · model grok-4.3

The pith

Imposing a hierarchical structure on the mass matrix gives Riemannian manifold HMC a closed-form leapfrog integrator that supports automatic adaptation and dynamic sampling.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Hierarchical RMHMC admits a closed-form explicit leapfrog integrator, unlike general RMHMC, and therefore can be used directly inside dynamic HMC algorithms such as NUTS. An adaptation procedure automatically tunes the parameters of the hierarchical mass matrix on the fly. The target density need not possess any hierarchical or block structure; the hierarchy is imposed solely on the mass matrix to capture local geometry.

What carries the argument

The hierarchical mass matrix, a position-dependent metric whose block structure yields an explicit leapfrog integrator and admits on-the-fly adaptation.

If this is right

- The closed-form integrator permits direct embedding inside NUTS and other dynamic HMC algorithms without numerical root-finding.

- Automatic tuning removes the need for manual selection of mass-matrix parameters.

- The method applies to arbitrary targets, not only those with explicit hierarchical structure.

- Empirical results indicate competitive performance on high-dimensional Bayesian inference problems.

Where Pith is reading between the lines

- The same hierarchical construction might be tested as a lightweight approximation inside other manifold-aware samplers.

- Performance could be examined on targets whose geometry changes sharply across scales or iterations.

- The explicit integrator opens the possibility of combining the method with other acceleration techniques such as parallel tempering.

Load-bearing premise

Imposing a hierarchical structure on the mass matrix and adapting its parameters will capture the local geometry of the target well enough to produce reliable efficiency gains.

What would settle it

A high-dimensional sampling task in which the adaptive hierarchical method mixes no faster than standard HMC or non-hierarchical RMHMC while using comparable or greater computation time.

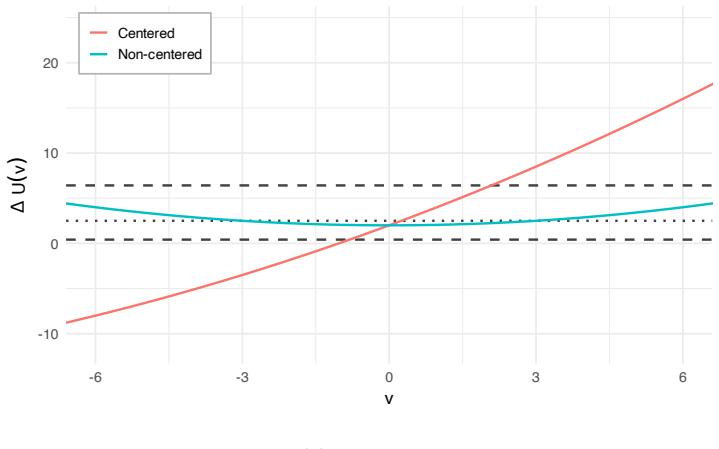

Figures

read the original abstract

Hamiltonian Monte Carlo (HMC) and its dynamic extensions, such as the No-U-Turn Sampler (NUTS), are powerful Markov chain Monte Carlo methods for sampling from complex, high-dimensional probability distributions. Riemannian manifold Hamiltonian Monte Carlo (RMHMC) extends HMC by allowing the mass matrix to depend on position, which can substantially improve mixing but also makes implementation considerably more challenging. In this paper, we study an adaptive hierarchical version of RMHMC that is well suited to many hierarchical sampling problems. A key feature of hierarchical RMHMC is that, unlike general RMHMC, it admits a closed-form explicit leapfrog integrator, enabling efficient implementation and direct use within dynamic HMC methods such as NUTS. We introduce an adaptive scheme that automatically tunes the parameters of the hierarchical mass matrix during simulation. Importantly, the target density need not exhibit any hierarchical or block structure; the hierarchy is instead imposed on the mass matrix as a modeling device to capture the local geometry of the target distribution. Numerical experiments demonstrate appealing empirical performance in high-dimensional Bayesian inference problems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces an adaptive hierarchical Riemannian manifold Hamiltonian Monte Carlo (RMHMC) sampler. It claims that imposing a hierarchical structure on the mass matrix (rather than on the target density) yields a closed-form explicit leapfrog integrator, unlike general RMHMC, which enables efficient implementation and direct compatibility with dynamic HMC methods such as NUTS. An on-the-fly adaptive scheme is proposed to tune the parameters of this hierarchical mass matrix, and numerical experiments are reported to demonstrate appealing performance in high-dimensional Bayesian inference problems.

Significance. If the closed-form integrator is rigorously derived and the adaptive scheme is shown to preserve the correct stationary distribution, the work would be significant for lowering the computational barrier to position-dependent metrics in HMC, potentially extending the practical reach of RMHMC to higher-dimensional problems. The empirical results, if supported by appropriate baselines and diagnostics, could indicate meaningful mixing improvements; however, the lack of stated theoretical safeguards on adaptation limits the immediate impact.

major comments (2)

- [adaptive scheme section] The description of the adaptive scheme for tuning the hierarchical mass matrix parameters provides no indication of diminishing adaptation rates or a fixed adaptation distribution. Standard results (e.g., Andrieu & Thoms 2008) require one or the other to guarantee ergodicity and the correct invariant measure; without this, the central claim that the method yields valid samples from the target is unsupported.

- [integrator derivation] The assertion of a closed-form explicit leapfrog integrator is load-bearing for the claim of efficient NUTS compatibility, yet the manuscript supplies neither the explicit integrator equations nor a verification that the integrator remains volume-preserving and symplectic under the imposed hierarchical metric.

minor comments (1)

- [abstract and experiments] The abstract states that the target need not exhibit hierarchical structure, but the numerical experiments section should include a clear statement of the problem dimensions, number of replicates, and quantitative metrics (effective sample size, wall-clock time, etc.) used to claim 'appealing empirical performance.'

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments on our manuscript. We address each major comment below and have revised the manuscript to strengthen the presentation of the adaptive scheme and the integrator derivation.

read point-by-point responses

-

Referee: [adaptive scheme section] The description of the adaptive scheme for tuning the hierarchical mass matrix parameters provides no indication of diminishing adaptation rates or a fixed adaptation distribution. Standard results (e.g., Andrieu & Thoms 2008) require one or the other to guarantee ergodicity and the correct invariant measure; without this, the central claim that the method yields valid samples from the target is unsupported.

Authors: We agree that the adaptive scheme requires explicit conditions to guarantee ergodicity and the correct invariant distribution. The original manuscript described the on-the-fly tuning procedure but did not specify the adaptation schedule. In the revised version we will add a dedicated paragraph (and accompanying pseudocode) stating that the adaptation uses a diminishing rate schedule of the form required by Andrieu & Thoms (2008). We will also include a short theoretical remark confirming that this choice preserves the target measure, thereby supporting the validity claim. revision: yes

-

Referee: [integrator derivation] The assertion of a closed-form explicit leapfrog integrator is load-bearing for the claim of efficient NUTS compatibility, yet the manuscript supplies neither the explicit integrator equations nor a verification that the integrator remains volume-preserving and symplectic under the imposed hierarchical metric.

Authors: We acknowledge that the explicit leapfrog equations and the verification of their geometric properties were not provided in the main text, even though the hierarchical metric is designed to admit a closed-form integrator. In the revision we will insert a new subsection (or appendix) that derives the explicit position and momentum updates under the hierarchical metric, shows that the Jacobian determinant equals one (volume preservation), and verifies the symplectic condition. These additions will directly substantiate the claimed compatibility with NUTS. revision: yes

Circularity Check

No significant circularity; claims rest on explicit construction and empirical validation

full rationale

The paper proposes a hierarchical RMHMC variant with an imposed mass-matrix structure, derives a closed-form leapfrog integrator from that structure, and introduces an adaptive tuning scheme whose validity is asserted via standard MCMC theory plus experiments. No load-bearing step reduces a prediction or uniqueness claim to a fitted parameter or self-citation by construction; the hierarchy is openly described as an imposed modeling device rather than derived from the target, and performance results are presented as numerical evidence rather than tautological outputs of the same equations.

Axiom & Free-Parameter Ledger

free parameters (1)

- hierarchical mass matrix parameters

axioms (1)

- domain assumption Imposing a hierarchical structure on the mass matrix captures the local geometry of the target distribution sufficiently well for sampling purposes

invented entities (1)

-

hierarchical mass matrix

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Andrieu and ´E

C. Andrieu and ´E. Moulines. On the ergodicity properties of some adaptive MCMC algo- rithms.Annals of Applied Probability, 16(3):1462–1505, 2006

2006

-

[2]

Andrieu and J

C. Andrieu and J. Thoms. A tutorial on adaptive MCMC.Statistics and computing, 18 (4):343–373, 2008

2008

-

[3]

Y. F. Atchad´ e. An adaptive version for the Metropolis adjusted Langevin algorithm with a truncated drift.Methodology and Computing in applied Probability, 8(2):235–254, 2006

2006

- [4]

-

[5]

Benveniste, M

A. Benveniste, M. M´ etivier, and P. Priouret.Adaptive algorithms and stochastic approxi- mations, volume 22. Springer Science & Business Media, 2012

2012

-

[6]

A Conceptual Introduction to Hamiltonian Monte Carlo

M. Betancourt. A conceptual introduction to Hamiltonian Monte Carlo. Preprint arXiv:1701.02434, 2017

work page Pith review arXiv 2017

-

[7]

M. Betancourt. Incomplete reparameterizations and equivalent metrics. Preprint arXiv:1910.09407, 2019

- [8]

-

[9]

Biron-Lattes, N

M. Biron-Lattes, N. Surjanovic, S. Syed, T. Campbell, and A. Bouchard-Cˆ ot´ e. autoMALA: Locally adaptive Metropolis-adjusted Langevin algorithm. InInternational Conference on Artificial Intelligence and Statistics, pages 4600–4608. PMLR, 2024

2024

-

[10]

Bou-Rabee and J

N. Bou-Rabee and J. M. Sanz-Serna. Geometric integrators and the Hamiltonian Monte Carlo method.Acta Numerica, 27:113–206, 2018

2018

-

[11]

N. Bou-Rabee, B. Carpenter, T. S. Kleppe, and M. Marsden. Incorporating local step-size adaptivity into the No-U-Turn Sampler using Gibbs self tuning. Preprint arXiv:2408.08259, 2024

-

[12]

N. Bou-Rabee, B. Carpenter, T. S. Kleppe, and S. Liu. The within-orbit adaptive leapfrog no-U-turn sampler. Preprint arXiv:2506.18746, 2025. 21

-

[13]

Bradbury, R

J. Bradbury, R. Frostig, P. Hawkins, M. J. Johnson, C. Leary, D. Maclaurin, G. Nec- ula, A. Paszke, J. VanderPlas, S. Wanderman-Milne, and Q. Zhang. JAX: compos- able transformations of Python+NumPy programs. Github repository, 2018. URL https://github.com/google/jax

2018

-

[14]

C. M. Carvalho, N. G. Polson, and J. G. Scott. Handling sparsity via the horseshoe. In Artificial intelligence and statistics, pages 73–80. PMLR, 2009

2009

-

[15]

R. V. Craiu, J. Rosenthal, and C. Yang. Learn from thy neighbor: Parallel-chain and regional adaptive MCMC.Journal of the American Statistical Association, 104(488):1454– 1466, 2009

2009

-

[16]

Duane, A

S. Duane, A. D. Kennedy, B. J. Pendleton, and D. Roweth. Hybrid Monte Carlo.Physics letters B, 195(2):216–222, 1987

1987

-

[17]

Duflo.Random iterative models, volume 34

M. Duflo.Random iterative models, volume 34. Springer, 2013

2013

- [18]

-

[19]

G. Fort, E. Moulines, A. Schreck, and M. Vihola. Convergence of Markovian stochastic approximation with discontinuous dynamics.SIAM Journal on Control and Optimization, 54(2):866–893, 2016

2016

-

[20]

C. J. Geyer. Practical Markov chain Monte Carlo.Statistical Science, 7(4):473–483, 1992. doi: 10.1214/ss/1177011137

-

[21]

Girolami and B

M. Girolami and B. Calderhead. Riemann manifold Langevin and Hamiltonian Monte Carlo methods.Journal of the Royal Statistical Society Series B: Statistical Methodology, 73(2):123–214, 03 2011

2011

- [22]

-

[23]

E. Hairer, C. Lubich, and G. Wanner.Geometric Numerical Integration: Structure- Preserving Algorithms for Ordinary Differential Equations. Springer Series in Compu- tational Mathematics. Springer, 2 edition, 2006. doi: 10.1007/3-540-30666-8

-

[24]

Hartmann, M

M. Hartmann, M. Girolami, and A. Klami. Lagrangian manifold Monte Carlo on Monge patches. InInternational Conference on Artificial Intelligence and Statistics, pages 4764–

-

[25]

Hird and S

M. Hird and S. Livingstone. Quantifying the effectiveness of linear preconditioning in Markov chain Monte Carlo.Journal of Machine Learning Research, 26:1–51, 2025

2025

-

[26]

High-dimensional Adaptive MCMC with Reduced Computational Complexity

M. Hird and S. Livingstone. High-dimensional adaptive mcmc with reduced computational complexity. Technical report, 2026. URLhttps://arxiv.org/abs/2604.09286

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[27]

M. Hoffman, P. Sountsov, J. V. Dillon, I. Langmore, D. Tran, and S. Vasudevan. Neutra-lizing bad geometry in Hamiltonian Monte Carlo using neural transport. Preprint arXiv:1903.03704, 2019

-

[28]

M. D. Hoffman and A. Gelman. The no-U-turn sampler: adaptively setting path lengths in Hamiltonian Monte Carlo.Journal of Machine Learning Research, 15(1):1593–1623, 2014

2014

-

[29]

H. Kesten. Accelerated stochastic approximation.The Annals of Mathematical Statistics, 29(1):41–59, 1958. 22

1958

-

[30]

T. S. Kleppe. Dynamically rescaled Hamiltonian Monte Carlo for Bayesian hierarchical models.Journal of Computational and Graphical Statistics, 28(3):493–507, 2019

2019

-

[31]

T. S. Kleppe. Log-density gradient covariance and automatic metric tensors for Riemann manifold Monte Carlo methods.Scandinavian Journal of Statistics, 51(3):1206–1229, 2024

2024

-

[32]

S. Lan, V. Stathopoulos, B. Shahbaba, and M. Girolami. Markov chain Monte Carlo from Lagrangian dynamics.Journal of Computational and Graphical Statistics, 24(2):357–378, 2015

2015

-

[33]

Livingstone and G

S. Livingstone and G. Zanella. The Barker proposal: Combining robustness and efficiency in gradient-based MCMC.Journal of the Royal Statistical Society Series B: Statistical Methodology, 84(2):496–523, 2022

2022

-

[34]

Livingstone, M

S. Livingstone, M. F. Faulkner, and G. O. Roberts. Kinetic energy choice in Hamilto- nian/hybrid Monte Carlo.Biometrika, 106(2):303–319, 2019

2019

-

[35]

O. A. Martin, O. Abril-Pla, J. Deklerk, S. D. Axen, C. Carroll, A. Hartikainen, and A. Ve- htari. Arviz: a modular and flexible library for exploratory analysis of Bayesian models. Journal of Open Source Software, 11(119):9889, 2026. doi: 10.21105/joss.09889

-

[36]

Miasojedow, E

B. Miasojedow, E. Moulines, and M. Vihola. An adaptive parallel tempering algorithm. Journal of Computational and Graphical Statistics, 22(3):649–664, 2013

2013

-

[37]

C. Modi, A. Barnett, and B. Carpenter. Delayed rejection Hamiltonian Monte Carlo for sampling multiscale distributions.Bayesian Analysis, 19(3):815–842, 2024

2024

- [38]

-

[39]

R. M. Neal. Slice sampling.The Annals of Statistics, 31(3):705–767, 2003

2003

-

[40]

R. M. Neal. MCMC using Hamiltonian dynamics.Handbook of Markov Chain Monte Carlo, 2:113–162, 2011

2011

-

[41]

Pakman and L

A. Pakman and L. Paninski. Exact Hamiltonian Monte Carlo for truncated multivariate Gaussians.Journal of Computational and Graphical Statistics, 23(2):518–542, 2014

2014

-

[42]

Papaspiliopoulos, G

O. Papaspiliopoulos, G. O. Roberts, and M. Sk¨ old. A general framework for the parametrization of hierarchical models.Statistical Science, pages 59–73, 2007

2007

-

[43]

Pascanu, T

R. Pascanu, T. Mikolov, and Y. Bengio. On the difficulty of training recurrent neural networks. InInternational conference on machine learning, pages 1310–1318, 2013. URL https://proceedings.mlr.press/v28/pascanu13.html

2013

-

[44]

Petra, J

N. Petra, J. Martin, G. Stadler, and O. Ghattas. A computational framework for infinite- dimensional Bayesian inverse problems, part ii: Stochastic Newton MCMC with application to ice sheet flow inverse problems.SIAM Journal on Scientific Computing, 36(4):A1525– A1555, 2014

2014

-

[45]

B. T. Polyak and A. B. Juditsky. Acceleration of stochastic approximation by averaging. SIAM Journal on Control and Optimization, 30(4):838–855, 1992

1992

-

[46]

Pompe, C

E. Pompe, C. Holmes, and K. Latuszy´ nski. A framework for adaptive MCMC targeting multimodal distributions.The Annals of Statistics, 48(5):2930–2952, 2020. 23

2020

-

[47]

G. O. Roberts and J. S. Rosenthal. Optimal scaling for various Metropolis–Hastings algo- rithms.Statistical science, 16(4):351–367, 2001

2001

-

[48]

G. O. Roberts and J. S. Rosenthal. Examples of adaptive MCMC.Journal of computational and graphical statistics, 18(2):349–367, 2009

2009

-

[49]

Saksman and M

E. Saksman and M. Vihola. On the ergodicity of the adaptive Metropolis algorithm on unbounded domains.Annals of Applied Probability, 20(6):2178–2203, 2010. doi: 10.1214/ 10-AAP682

2010

-

[50]

A. Seyboldt, E. L. Carlson, and B. Carpenter. Preconditioning Hamiltonian Monte Carlo by minimizing Fisher divergence. Preprint arXiv:2603.18845, 2026. URLhttps://arxiv. org/abs/2603.18845

-

[51]

Shephard.Stochastic volatility: selected readings

N. Shephard.Stochastic volatility: selected readings. Oxford University Press, 2005

2005

-

[52]

M. Titsias. Optimal preconditioning and Fisher adaptive Langevin sampling.Advances in Neural Information Processing Systems, 36:29449–29460, 2023

2023

-

[53]

J. H. Tran and T. S. Kleppe. Tuning diagonal scale matrices for HMC.Statistics and Computing, 34(6):196, 2024. doi: 10.1007/s11222-024-10494-6

-

[54]

M. Vihola. Can the adaptive Metropolis algorithm collapse without the covariance lower bound?Electronic Journal of Probability, 16:45–75, 2011. doi: 10.1214/EJP.v16-840

-

[55]

M. Vihola. Robust adaptive Metropolis algorithm with coerced acceptance rate.Statistics and Computing, 22(5):997–1008, 2012

2012

-

[56]

Wallin and D

J. Wallin and D. Bolin. Efficient adaptive MCMC through precision estimation.Journal of Computational and Graphical Statistics, 27(4):887–897, 2018

2018

-

[57]

Zhang and C

Y. Zhang and C. Sutton. Semi-separable Hamiltonian Monte Carlo for inference in Bayesian hierarchical models.Advances in neural information processing systems, 27, 2014. A Symmetry verification of the hierarchical leapfrog integrator Algorithm 6Exact block flowe hHk Require:Block indexk, step sizeh, current state (θ,p) 1:v k ←M k(θ−k)−1pk (19) 2:θ k ←θ k ...

2014

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.