Recognition: unknown

Long-Term Memory for VLA-based Agents in Open-World Task Execution

Pith reviewed 2026-05-10 09:20 UTC · model grok-4.3

The pith

ChemBot adds dual-layer memory to VLA agents for persistent learning in long chemical experiments.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

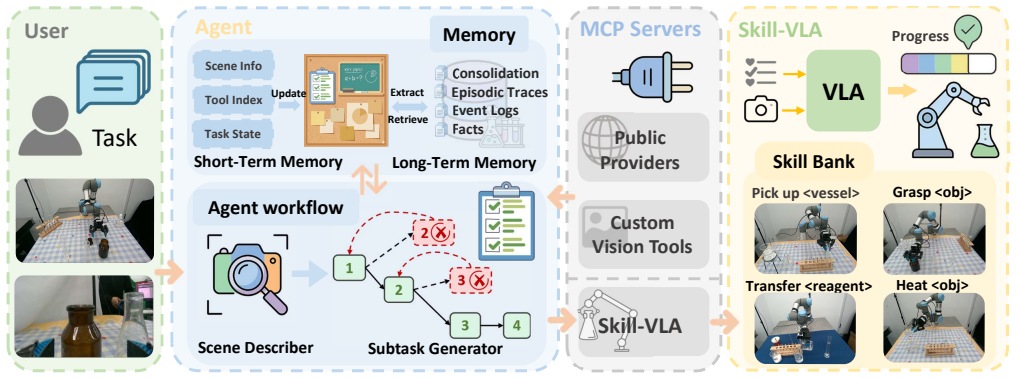

ChemBot is a dual-layer, closed-loop framework integrating an autonomous AI agent with a progress-aware VLA model (Skill-VLA) for hierarchical task decomposition and execution. It uses a dual-layer memory architecture to consolidate successful trajectories into retrievable assets and a Model Context Protocol (MCP) server for sub-agent and tool orchestration. A future-state-based asynchronous inference mechanism mitigates trajectory discontinuities, resulting in superior operational safety, precision, and task success rates over VLA baselines in long-horizon chemical experimentation.

What carries the argument

The dual-layer memory architecture, which consolidates successful trajectories into retrievable assets for reuse by the VLA agent.

If this is right

- Reduces reliance on inefficient trial-and-error by reusing proven strategies in multi-stage protocols.

- Achieves higher task success rates through accumulated experience in long-horizon tasks.

- Enhances operational safety and precision in chemical lab automation on collaborative robots.

- Enables hierarchical decomposition and smooth execution via Skill-VLA and asynchronous inference.

Where Pith is reading between the lines

- Similar memory consolidation techniques could apply to other embodied domains like household robotics or manufacturing where long sequences of actions are needed.

- The approach highlights the value of closing the loop between execution outcomes and future planning in VLA systems.

- Further work might explore how memory retrieval scales with the number of stored trajectories without increasing latency.

Load-bearing premise

The dual-layer memory architecture and Model Context Protocol server can reliably consolidate, retrieve, and apply successful trajectories without introducing new failure modes or excessive overhead in real chemical lab settings.

What would settle it

A repeated trial of the same long-horizon chemical experiment protocol using both ChemBot and baseline VLA agents, checking if ChemBot's success rate stays consistently higher while its memory retrieval does not cause new errors or slowdowns.

Figures

read the original abstract

Vision-Language-Action (VLA) models have demonstrated significant potential for embodied decision-making; however, their application in complex chemical laboratory automation remains restricted by limited long-horizon reasoning and the absence of persistent experience accumulation. Existing frameworks typically treat planning and execution as decoupled processes, often failing to consolidate successful strategies, which results in inefficient trial-and-error in multi-stage protocols. In this paper, we propose ChemBot, a dual-layer, closed-loop framework that integrates an autonomous AI agent with a progress-aware VLA model (Skill-VLA) for hierarchical task decomposition and execution. ChemBot utilizes a dual-layer memory architecture to consolidate successful trajectories into retrievable assets, while a Model Context Protocol (MCP) server facilitates efficient sub-agent and tool orchestration. To address the inherent limitations of VLA models, we further implement a future-state-based asynchronous inference mechanism to mitigate trajectory discontinuities. Extensive experiments on collaborative robots demonstrate that ChemBot achieves superior operational safety, precision, and task success rates compared to existing VLA baselines in complex, long-horizon chemical experimentation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces ChemBot, a dual-layer closed-loop framework that integrates an autonomous AI agent with a progress-aware VLA model (Skill-VLA) for hierarchical task decomposition and execution in chemical laboratory automation. It employs a dual-layer memory architecture to consolidate and retrieve successful trajectories, a Model Context Protocol (MCP) server for sub-agent and tool orchestration, and a future-state-based asynchronous inference mechanism to mitigate trajectory discontinuities. The central claim is that extensive experiments on collaborative robots demonstrate superior operational safety, precision, and task success rates relative to existing VLA baselines in complex, long-horizon chemical tasks.

Significance. If the experimental results can be substantiated with quantitative metrics, ablations, and controls, the dual-layer memory and closed-loop design could offer a practical mechanism for persistent experience accumulation in embodied VLA systems, addressing a recognized limitation in long-horizon reasoning for specialized real-world domains.

major comments (1)

- [Abstract] Abstract: the superiority claim in safety, precision, and success rates is presented without any supporting metrics, baseline definitions, experiment counts, error bars, ablation results isolating the memory layer or MCP server, or description of trajectory consolidation/retrieval procedures. This absence prevents verification that the proposed architecture, rather than Skill-VLA or hardware factors, produced the reported gains.

minor comments (1)

- The manuscript title emphasizes open-world task execution while the abstract and framework description focus exclusively on chemical laboratory protocols; clarify the intended scope and any generalization beyond the chemical domain.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address the major comment below and will revise the abstract to improve verifiability while preserving its concise nature.

read point-by-point responses

-

Referee: [Abstract] Abstract: the superiority claim in safety, precision, and success rates is presented without any supporting metrics, baseline definitions, experiment counts, error bars, ablation results isolating the memory layer or MCP server, or description of trajectory consolidation/retrieval procedures. This absence prevents verification that the proposed architecture, rather than Skill-VLA or hardware factors, produced the reported gains.

Authors: We agree that the abstract would benefit from greater specificity to allow immediate assessment of the claims. The full manuscript already contains the requested elements in Section 4 (Experiments), including quantitative success rates with error bars across 50+ trials per task, explicit baseline definitions (e.g., standard VLA models without memory), ablation studies isolating the dual-layer memory and MCP server contributions, and detailed descriptions of trajectory consolidation/retrieval in Section 3.2. To directly address the concern, we will revise the abstract to incorporate key summary metrics (e.g., relative success rate improvements and experiment scale) and a brief note on the ablations, ensuring readers can attribute gains to the proposed components rather than Skill-VLA or hardware alone. revision: yes

Circularity Check

No circularity: framework description and experimental claims are independent of self-referential definitions or fitted inputs

full rationale

The paper introduces ChemBot as a dual-layer memory architecture plus MCP server and asynchronous inference for VLA agents, then asserts empirical superiority from experiments on collaborative robots. No equations, parameter fits, predictions derived from inputs, self-citations as load-bearing premises, or ansatzes appear in the provided text. The claimed gains in safety/precision/success rates are presented as outcomes of external experiments rather than quantities defined by the architecture itself, satisfying the condition for a self-contained proposal against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption VLA models can be extended with external memory layers and orchestration servers to overcome long-horizon limitations.

invented entities (4)

-

ChemBot dual-layer closed-loop framework

no independent evidence

-

Skill-VLA

no independent evidence

-

dual-layer memory architecture

no independent evidence

-

Model Context Protocol (MCP) server

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Devel- opment of a full automation solid phase microextraction method for investigating the partition coefficient of organic pollutant in complex sample,

R. Jiang, W. Lin, S. Wen, F. Zhu, T. Luan, and G. Ouyang, “Devel- opment of a full automation solid phase microextraction method for investigating the partition coefficient of organic pollutant in complex sample,”Journal of Chromatography A, vol. 1406, pp. 27–33, 2015

2015

-

[2]

Organic synthesis in a modular robotic system driven by a chemical programming language,

S. Steiner, J. Wolf, S. Glatzel, A. Andreou, J. M. Granda, G. Keenan, T. Hinkley, G. Aragon-Camarasa, P. J. Kitson, D. Angeloneet al., “Organic synthesis in a modular robotic system driven by a chemical programming language,”Science, vol. 363, no. 6423, p. eaav2211, 2019

2019

-

[3]

A mobile robotic chemist,

B. Burger, P. M. Maffettone, V . V . Gusev, C. M. Aitchison, Y . Bai, X. Wang, X. Li, B. M. Alston, B. Li, R. Cloweset al., “A mobile robotic chemist,”Nature, vol. 583, no. 7815, pp. 237–241, 2020

2020

-

[4]

An all-round ai-chemist with a scientific mind,

Q. Zhu, F. Zhang, Y . Huang, H. Xiao, L. Zhao, X. Zhang, T. Song, X. Tang, X. Li, G. Heet al., “An all-round ai-chemist with a scientific mind,”National Science Review, vol. 9, no. 10, p. nwac190, 2022

2022

-

[5]

Gemini Robotics: Bringing AI into the Physical World

G. R. Team, S. Abeyruwan, J. Ainslie, J.-B. Alayrac, M. G. Arenas, T. Armstrong, A. Balakrishna, R. Baruch, M. Bauza, M. Blokzijlet al., “Gemini robotics: Bringing ai into the physical world,”arXiv preprint arXiv:2503.20020, 2025

work page internal anchor Pith review arXiv 2025

-

[6]

J. Achiam, S. Adler, S. Agarwal, L. Ahmad, I. Akkaya, F. L. Aleman, D. Almeida, J. Altenschmidt, S. Altman, S. Anadkatet al., “Gpt-4 technical report,”arXiv preprint arXiv:2303.08774, 2023

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[7]

π 0: A vision-language-action flow model for general robot control,

K. Black, N. Brown, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusai, L. Groom, K. Hausman, B. Ichter, S. Jakubczak, T. Jones, L. Ke, S. Levine, A. Li-Bell, M. Mothukuri, S. Nair, K. Pertsch, L. X. Shi, J. Tanner, Q. Vuong, A. Walling, H. Wang, and U. Zhilinsky, “π 0: A vision-language-action flow model for general robot control,” 2024

2024

-

[8]

GR00T N1: An open foundation model for generalist humanoid robots,

NVIDIA, J. Bjorck, N. C. Fernando Casta ˜neda, X. Da, R. Ding, L. J. Fan, Y . Fang, D. Fox, F. Hu, S. Huang, J. Jang, Z. Jiang, J. Kautz, K. Kundalia, L. Lao, Z. Li, Z. Lin, K. Lin, G. Liu, E. Llontop, L. Magne, A. Mandlekar, A. Narayan, S. Nasiriany, S. Reed, Y . L. Tan, G. Wang, Z. Wang, J. Wang, Q. Wang, J. Xiang, Y . Xie, Y . Xu, Z. Xu, S. Ye, Z. Yu, ...

2025

-

[9]

SmolVLA: A Vision-Language-Action Model for Affordable and Efficient Robotics

M. Shukor, D. Aubakirova, F. Capuano, P. Kooijmans, S. Palma, A. Zouitine, M. Aractingi, C. Pascal, M. Russi, A. Marafiotiet al., “Smolvla: A vision-language-action model for affordable and efficient robotics,”arXiv preprint arXiv:2506.01844, 2025

work page internal anchor Pith review arXiv 2025

-

[10]

Voyager: An Open-Ended Embodied Agent with Large Language Models

G. Wang, Y . Xie, Y . Jiang, A. Mandlekar, C. Xiao, Y . Zhu, L. Fan, and A. Anandkumar, “V oyager: An open-ended embodied agent with large language models,”arXiv preprint arXiv:2305.16291, 2023

work page internal anchor Pith review arXiv 2023

-

[11]

Organa: A robotic assistant for automated chemistry experimentation and characterization,

K. Darvish, M. Skreta, Y . Zhao, N. Yoshikawa, S. Som, M. Bog- danovic, Y . Cao, H. Hao, H. Xu, A. Aspuru-Guziket al., “Organa: A robotic assistant for automated chemistry experimentation and characterization,”Matter, vol. 8, no. 2, 2025

2025

-

[12]

A multiagent-driven robotic ai chemist enabling autonomous chemical research on demand,

T. Song, M. Luo, X. Zhang, L. Chen, Y . Huang, J. Cao, Q. Zhu, D. Liu, B. Zhang, G. Zouet al., “A multiagent-driven robotic ai chemist enabling autonomous chemical research on demand,”Journal of the American Chemical Society, vol. 147, no. 15, pp. 12 534–12 545, 2025

2025

-

[13]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

D. Guo, D. Yang, H. Zhang, J. Song, P. Wang, Q. Zhu, R. Xu, R. Zhang, S. Ma, X. Biet al., “Deepseek-r1: Incentivizing reason- ing capability in llms via reinforcement learning,”arXiv preprint arXiv:2501.12948, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[14]

W. Mao, W. Zhong, Z. Jiang, D. Fang, Z. Zhang, Z. Lan, H. Li, F. Jia, T. Wang, H. Fanet al., “Robomatrix: A skill-centric hierarchical framework for scalable robot task planning and execution in open- world,”arXiv preprint arXiv:2412.00171, 2024

-

[15]

Robochemist: Long-horizon and safety- compliant robotic chemical experimentation

Z. Zhang, C. Yue, H. Xu, M. Liao, X. Qi, H. Gao, Z. Wang, and H. Zhao, “Robochemist: Long-horizon and safety-compliant robotic chemical experimentation,”CoRR, vol. abs/2509.08820, 2025. [Online]. Available: https://doi.org/10.48550/arXiv.2509.08820

-

[16]

Openclaw: Your own personal AI assistant,

P. Steinberger and OpenClaw Contributors, “Openclaw: Your own personal AI assistant,” GitHub repository, 2026, version 2026.3.1. Accessed: 2026-03-02. [Online]. Available: https://github.com/openclaw/openclaw

2026

-

[17]

Towards general computer control: A multimodal agent for red dead redemption ii as a case study

W. Tan, W. Zhang, X. Xu, H. Xia, Z. Ding, B. Li, B. Zhou, J. Yue, J. Jiang, Y . Liet al., “Cradle: Empowering foundation agents towards general computer control,”arXiv preprint arXiv:2403.03186, 2024

- [18]

-

[19]

Anygrasp: Robust and efficient grasp perception in spatial and temporal domains,

H.-S. Fang, C. Wang, H. Fang, M. Gou, J. Liu, H. Yan, W. Liu, Y . Xie, and C. Lu, “Anygrasp: Robust and efficient grasp perception in spatial and temporal domains,”IEEE Transactions on Robotics, vol. 39, no. 5, pp. 3929–3945, 2023

2023

-

[20]

Do as i can, not as i say: Grounding language in robotic affordances,

A. Brohan, Y . Chebotar, C. Finn, K. Hausman, A. Herzog, D. Ho, J. Ibarz, A. Irpan, E. Jang, R. Julianet al., “Do as i can, not as i say: Grounding language in robotic affordances,” inConference on robot learning. PMLR, 2023, pp. 287–318

2023

-

[21]

Language agent tree search unifies reasoning acting and planning in language models,

A. Zhou, K. Yan, M. Shlapentokh-Rothman, H. Wang, and Y .-X. Wang, “Language agent tree search unifies reasoning acting and planning in language models,” 2023

2023

-

[22]

X. Zhu, Y . Chen, H. Tian, C. Tao, W. Su, C. Yang, G. Huang, B. Li, L. Lu, X. Wanget al., “Ghost in the minecraft: Generally capable agents for open-world environments via large language models with text-based knowledge and memory,”arXiv preprint arXiv:2305.17144, 2023

-

[23]

Reasoning with language model is planning with world model,

S. Hao, Y . Gu, H. Ma, J. Hong, Z. Wang, D. Wang, and Z. Hu, “Reasoning with language model is planning with world model,” in Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 2023, pp. 8154–8173

2023

-

[24]

Creative robot tool use with large language models,

M. Xu, P. Huang, W. Yu, S. Liu, X. Zhang, Y . Niu, T. Zhang, F. Xia, J. Tan, and D. Zhao, “Creative robot tool use with large language models,”arXiv preprint arXiv:2310.13065, 2023

-

[25]

Take a step back: Evoking reasoning via abstraction in large language models,

H. S. Zheng, S. Mishra, X. Chen, H.-T. Cheng, E. H. Chi, Q. V . Le, and D. Zhou, “Take a step back: Evoking reasoning via abstraction in large language models,” inInternational Conference on Learning Representations, B. Kim, Y . Yue, S. Chaudhuri, K. Fragkiadaki, M. Khan, and Y . Sun, Eds., vol. 2024, 2024, pp. 20 279–20 316

2024

-

[26]

Wonderful team: Zero-shot physical task planning with visual llms,

Z. Wang, R. Shen, and B. Stadie, “Wonderful team: Zero-shot physical task planning with visual llms,”arXiv preprint arXiv:2407.19094, 2024

-

[27]

Being-0: A humanoid robotic agent with vision-language models and modular skills,

H. Yuan, Y . Bai, Y . Fu, B. Zhou, Y . Feng, X. Xu, Y . Zhan, B. F. Karlsson, and Z. Lu, “Being-0: A humanoid robotic agent with vision-language models and modular skills,”arXiv preprint arXiv:2503.12533, 2025

-

[28]

Rt-2: Vision-language-action models transfer web knowledge to robotic control,

B. Zitkovich, T. Yu, S. Xu, P. Xu, T. Xiao, F. Xia, J. Wu, P. Wohlhart, S. Welker, A. Wahidet al., “Rt-2: Vision-language-action models transfer web knowledge to robotic control,” inConference on Robot Learning. PMLR, 2023, pp. 2165–2183

2023

-

[29]

$\pi_{0.5}$: a Vision-Language-Action Model with Open-World Generalization

P. Intelligence, K. Black, N. Brown, J. Darpinian, K. Dhabalia, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusaiet al., “π 0.5: a vision-language-action model with open-world generalization,”arXiv preprint arXiv:2504.16054, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[30]

Openvla: An open-source vision- language-action model,

M. J. Kim, K. Pertsch, S. Karamcheti, T. Xiao, A. Balakrishna, S. Nair, R. Rafailov, E. P. Foster, P. R. Sanketi, Q. Vuong, T. Kollar, B. Burchfiel, R. Tedrake, D. Sadigh, S. Levine, P. Liang, and C. Finn, “Openvla: An open-source vision- language-action model,” inProceedings of The 8th Conference on Robot Learning, ser. Proceedings of Machine Learning Re...

2025

-

[31]

Clap: A closed-loop diffusion transformer action foundation model for robotic manipulation,

M. Li, Y . Dong, Y . Zhou, and C. Yang, “Clap: A closed-loop diffusion transformer action foundation model for robotic manipulation,” in 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2025, pp. 9808–9815

2025

-

[32]

A review of large language models and autonomous agents in chemistry,

M. C. Ramos, C. J. Collison, and A. D. White, “A review of large language models and autonomous agents in chemistry,”Chemical science, 2025

2025

-

[33]

Autonomous chemical research with large language models,

D. A. Boiko, R. MacKnight, B. Kline, and G. Gomes, “Autonomous chemical research with large language models,”Nature, vol. 624, no. 7992, pp. 570–578, 2023

2023

-

[34]

Large language models for chemistry robotics,

N. Yoshikawa, M. Skreta, K. Darvish, S. Arellano-Rubach, Z. Ji, L. Bjørn Kristensen, A. Z. Li, Y . Zhao, H. Xu, A. Kuramshinet al., “Large language models for chemistry robotics,”Autonomous Robots, vol. 47, no. 8, pp. 1057–1086, 2023

2023

-

[35]

Learning Fine-Grained Bimanual Manipulation with Low-Cost Hardware

T. Z. Zhao, V . Kumar, S. Levine, and C. Finn, “Learning fine-grained bimanual manipulation with low-cost hardware,”arXiv preprint arXiv:2304.13705, 2023

work page internal anchor Pith review arXiv 2023

-

[36]

Set-of-Mark Prompting Unleashes Extraordinary Visual Grounding in GPT-4V

J. Yang, H. Zhang, F. Li, X. Zou, C. Li, and J. Gao, “Set-of-mark prompting unleashes extraordinary visual grounding in gpt-4v,”arXiv preprint arXiv:2310.11441, 2023

work page internal anchor Pith review arXiv 2023

-

[37]

A universal system for digitization and automatic execution of the chemical synthesis literature,

S. H. M. Mehr, M. Craven, A. I. Leonov, G. Keenan, and L. Cronin, “A universal system for digitization and automatic execution of the chemical synthesis literature,”Science, vol. 370, no. 6512, pp. 101– 108, 2020

2020

-

[38]

Task bench: A pa- rameterized benchmark for evaluating parallel runtime performance,

E. Slaughter, W. Wu, Y . Fu, L. Brandenburg, N. Garcia, W. Kautz, E. Marx, K. S. Morris, Q. Cao, G. Bosilcaet al., “Task bench: A pa- rameterized benchmark for evaluating parallel runtime performance,” inSC20: International Conference for High Performance Computing, Networking, Storage and Analysis. IEEE, 2020, pp. 1–15

2020

-

[39]

Rouge: A package for automatic evaluation of summaries,

C.-Y . Lin, “Rouge: A package for automatic evaluation of summaries,” inText summarization branches out, 2004, pp. 74–81

2004

-

[40]

BERTScore: Evaluating Text Generation with BERT

T. Zhang, V . Kishore, F. Wu, K. Q. Weinberger, and Y . Artzi, “Bertscore: Evaluating text generation with bert,”arXiv preprint arXiv:1904.09675, 2019

work page internal anchor Pith review arXiv 1904

-

[41]

A normalized levenshtein distance metric,

L. Yujian and L. Bo, “A normalized levenshtein distance metric,”IEEE transactions on pattern analysis and machine intelligence, vol. 29, no. 6, pp. 1091–1095, 2007

2007

-

[42]

A. Yang, A. Li, B. Yang, B. Zhang, B. Hui, B. Zheng, B. Yu, C. Gao, C. Huang, C. Lvet al., “Qwen3 technical report,”arXiv preprint arXiv:2505.09388, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[43]

Doubao-1.6 large language model,

ByteDance, “Doubao-1.6 large language model,” 2025, accessed: 2026-03-04. [Online]. Available: https://www.volcengine.com/product/doubao

2025

-

[44]

K. Black, A. Z. Ren, M. Equi, and S. Levine, “Training-time ac- tion conditioning for efficient real-time chunking,”arXiv preprint arXiv:2512.05964, 2025

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.