
SVL: Goal-Conditioned Reinforcement Learning as Survival Learning

Pith reviewed 2026-05-10 06:41 UTC · model grok-4.3

The pith

The goal-conditioned value function equals a discounted sum of survival probabilities over time.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

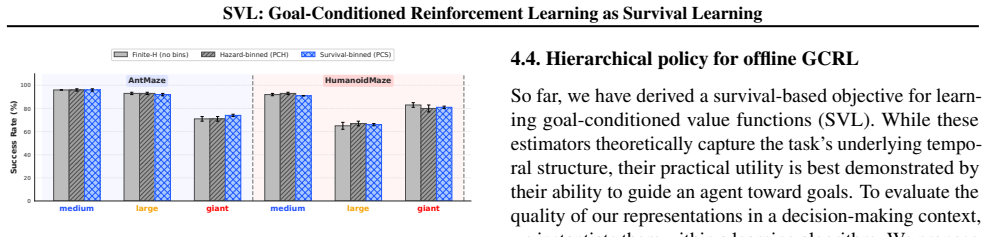

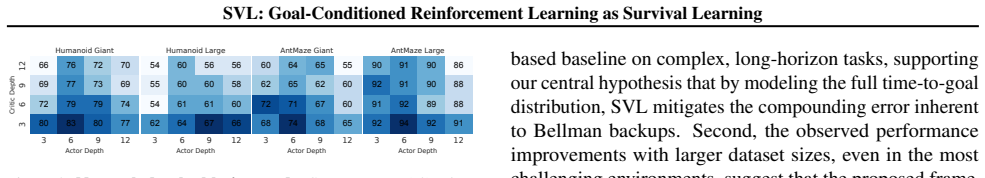

Modeling the time-to-goal from each state as a probability distribution lets the goal-conditioned value function be written exactly as a discounted sum of survival probabilities. This identity turns value estimation into maximum-likelihood training of a hazard model on observed times to goal, including right-censored trajectories. The paper introduces three practical estimators (finite-horizon truncation and two binned infinite-horizon approximations) to implement the identity, and experiments show that policies derived from them perform competitively with temporal-difference and Monte Carlo baselines on long-horizon offline goal-conditioned tasks.

What carries the argument

The closed-form identity that rewrites the goal-conditioned value function as a discounted sum of survival probabilities, which are obtained from a hazard model of time-to-goal.
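A minimal numerical check of the identity, assuming a discrete-time hazard h(t) = P(T = t | T ≥ t) for a single fixed state-goal pair. The conditioning on state and goal is dropped, and the rising hazard is made up for illustration. The sketch compares 1 − (1 − γ) Σ γ^t S(t) with the direct expectation Σ γ^t P(T = t).

```python
import numpy as np

def survival_from_hazard(hazard):
    """S(t) = P(T > t) = prod_{k<=t} (1 - h(k)) for a discrete hazard h(k) = P(T = k | T >= k)."""
    return np.cumprod(1.0 - np.asarray(hazard, dtype=float))

def value_from_survival(survival, gamma):
    """Survival identity: E[gamma^T] = 1 - (1 - gamma) * sum_t gamma^t S(t)."""
    t = np.arange(len(survival))
    return 1.0 - (1.0 - gamma) * np.sum(gamma**t * survival)

def value_direct(hazard, gamma):
    """Direct expectation: E[gamma^T] = sum_t gamma^t P(T = t), with P(T = t) = S(t-1) h(t)."""
    hazard = np.asarray(hazard, dtype=float)
    s_prev = np.concatenate(([1.0], survival_from_hazard(hazard)[:-1]))  # S(-1) = 1
    t = np.arange(len(hazard))
    return np.sum(gamma**t * s_prev * hazard)

# Hypothetical rising hazard; it reaches 1 before the array ends, so S(t) hits 0
# and no survival mass is lost by stopping the sums at 2000 steps.
gamma = 0.99
hazard = np.minimum(0.01 + 0.001 * np.arange(2000), 1.0)
v_identity = value_from_survival(survival_from_hazard(hazard), gamma)
v_direct = value_direct(hazard, gamma)
```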

If this is right

- Value estimation reduces to a supervised maximum-likelihood problem on time-to-goal data rather than a bootstrapped temporal-difference problem.

- Both successful goal-reaching trajectories and right-censored trajectories contribute directly to training the value estimator.

- Finite-horizon truncation and binned infinite-horizon approximations allow the identity to be applied to tasks with long or unbounded horizons.

- When the hazard model is combined with hierarchical actors, the resulting policies match or exceed those of temporal-difference and Monte Carlo baselines on offline goal-conditioned benchmarks.
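The supervised reduction in the first two points can be sketched with the simplest possible hazard model: one free hazard value per time step and no conditioning on state or goal. This is a simplification; the paper fits a learned model. Under right-censoring, the discrete-time likelihood factorizes as Π_t h_t^{d_t} (1 − h_t)^{n_t − d_t}, so the maximum-likelihood hazard is events divided by trajectories at risk, and censored trajectories enter through the at-risk counts. The function name and the censoring convention (a censored trajectory with time c is known only to satisfy T > c) are assumptions of this sketch.

```python
import numpy as np

def fit_discrete_hazard(times, events, horizon):
    """Nonparametric MLE of a discrete hazard from right-censored data (hypothetical helper).

    times[i]:  step at which trajectory i first reached the goal (events[i] = 1), or
               the last step it was observed without reaching it (events[i] = 0, so T > times[i]).
    Returns h[t] = d_t / n_t, where d_t counts goal events at step t and
    n_t counts trajectories still at risk at step t (times >= t).
    """
    times = np.asarray(times)
    events = np.asarray(events, dtype=bool)
    hazard = np.zeros(horizon)
    for t in range(horizon):
        at_risk = np.sum(times >= t)
        if at_risk > 0:  # steps with nobody at risk carry no information and are left at 0
            hazard[t] = np.sum((times == t) & events) / at_risk
    return hazard

# Toy data: five trajectories, two of them censored (events = 0).
hazard = fit_discrete_hazard(times=[0, 1, 1, 2, 3], events=[1, 1, 0, 1, 0], horizon=4)
survival = np.cumprod(1.0 - hazard)  # S(t) = P(T > t); the Kaplan-Meier curve in this nonparametric case
```

Plugging the fitted survival into the identity gives a value estimate. With a neural hazard model, the same log-likelihood would be maximized by gradient descent rather than in closed form.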

Where Pith is reading between the lines

- The survival framing may transfer to other sparse-reward or event-based reinforcement learning problems where the key signal is time until a terminal event.

- Standard survival-analysis tools for handling censoring and competing risks could be imported to improve data efficiency in broader offline reinforcement learning settings.

- Direct comparison of the binned approximations against exact long-horizon rollouts on continuous-control domains would quantify any bias introduced by discretization.

Load-bearing premise

Modeling time to goal as a probability distribution via a hazard function will produce stable value estimates that faithfully capture the objective without the instability of temporal-difference bootstrapping.

What would settle it

Compute Monte Carlo estimates of the goal-conditioned values on a held-out set of trajectories and compare them directly with the hazard-model estimates; large, systematic discrepancies would show that the identity, as implemented by the practical estimators, does not hold in practice.
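This check can be prototyped on simulated data where the answer is known. The sketch assumes the simplest hazard model, a single constant hazard with no dependence on state or goal, so hitting times are geometric. Under right-censoring, the maximum-likelihood estimate of that hazard is total events divided by total at-risk steps. The simulation settings are arbitrary: γ = 0.99, hazard 0.05, and censoring windows drawn uniformly from 0 to 59 steps.

```python
import numpy as np

rng = np.random.default_rng(0)
gamma, h_true, n = 0.99, 0.05, 20_000

# Hitting times T in {0, 1, ...} with constant hazard: P(T = t) = (1 - h)^t h.
# numpy's geometric has support {1, 2, ...}, so shift it down by one.
T = rng.geometric(h_true, size=n) - 1

# Monte Carlo reference computed from the fully observed times.
v_mc = np.mean(gamma ** T)

# Right-censor each trajectory at a random window C: if T > C we only learn T > C.
C = rng.integers(0, 60, size=n)
event = T <= C
obs = np.where(event, T, C)

# Constant-hazard MLE: events / at-risk steps (steps 0..obs give obs + 1 at-risk steps).
h_hat = event.sum() / (obs + 1).sum()

# Survival identity with S(t) = (1 - h)^(t + 1); the geometric series is summed in closed form.
surv_sum = (1 - h_hat) / (1 - gamma * (1 - h_hat))
v_hazard = 1 - (1 - gamma) * surv_sum
```

With a learned conditional hazard, the same comparison would be run per state-goal pair or averaged over a held-out set. A gap that grows with the censoring rate would point to the hazard fit rather than the identity, which is exact.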


Original abstract

Standard approaches to goal-conditioned reinforcement learning (GCRL) that rely on temporal-difference learning can be unstable and sample-inefficient due to bootstrapping. While recent work has explored contrastive and supervised formulations to improve stability, we present a probabilistic alternative, called survival value learning (SVL), that reframes GCRL as a survival learning problem by modeling the time-to-goal from each state as a probability distribution. This structured distributional Monte Carlo perspective yields a closed-form identity that expresses the goal-conditioned value function as a discounted sum of survival probabilities, enabling value estimation via a hazard model trained via maximum likelihood on both event and right-censored trajectories. We introduce three practical value estimators, including finite-horizon truncation and two binned infinite-horizon approximations to capture long-horizon objectives. Experiments on offline GCRL benchmarks show that SVL combined with hierarchical actors matches or surpasses strong hierarchical TD and Monte Carlo baselines, excelling on complex, long-horizon tasks.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes Survival Value Learning (SVL) for goal-conditioned reinforcement learning (GCRL). It reframes GCRL as a survival analysis problem by modeling time-to-goal from each state as a probability distribution via a hazard function. This yields a closed-form identity expressing the goal-conditioned value function as a discounted sum of survival probabilities, which is estimated via maximum likelihood training of the hazard model on both event and right-censored offline trajectories. Three practical estimators are introduced (finite-horizon truncation and two binned infinite-horizon approximations), and experiments on offline GCRL benchmarks show SVL with hierarchical actors matches or surpasses strong hierarchical TD and Monte Carlo baselines, with advantages on long-horizon tasks.

Significance. If the identity holds and the estimators accurately recover the value function, SVL offers a stable, bootstrapping-free alternative to temporal-difference methods in GCRL by drawing on survival analysis and distributional Monte Carlo ideas. The handling of right-censored trajectories via MLE is a concrete strength, and the approach could improve reliability in long-horizon goal-conditioned settings. Credit is due for the structured probabilistic reformulation and the explicit estimators that extend to infinite horizons.

major comments (2)

- [§3 (Practical Value Estimators)] Binned approximations and their equations: The manuscript claims that the two binned infinite-horizon approximations faithfully capture long-horizon objectives. However, when the time-to-goal distribution is heavy-tailed or the hazard varies within bins, replacing the infinite sum with a coarser discretization introduces non-negligible bias relative to the true E[γ^T]. This is load-bearing for the superiority claims on complex long-horizon tasks, and the paper provides neither an error bound on the discretization nor empirical checks that the bias remains smaller than the variance of TD baselines.

- [§2 (Closed-form Identity)] The central identity V(s) = 1 - (1-γ) ∑_{t=0}^∞ γ^t S(t|s) is presented as following directly from the distributional Monte Carlo perspective. While the identity itself may be parameter-free, the hazard model is fit by MLE to finite offline data, so extrapolation error in the tail (beyond the observed censoring times) is uncontrolled; the manuscript lacks a quantitative analysis of how this error propagates to value estimates on the long-horizon benchmarks where SVL is claimed to excel.
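The discretization concern in the first comment can be illustrated with a toy hazard that varies within bins. The binning rule here is a hypothetical scheme chosen for illustration: it holds S(t) at its value at the start of each bin. It is not necessarily either of the paper's two binned estimators. The value γ = 0.995 and the oscillating hazard are also made up.

```python
import numpy as np

def exact_value(survival, gamma):
    """Survival identity evaluated at every step: 1 - (1 - gamma) * sum_t gamma^t S(t)."""
    t = np.arange(len(survival))
    return 1.0 - (1.0 - gamma) * np.sum(gamma**t * survival)

def left_binned_value(survival, gamma, width):
    """Hypothetical binned approximation: S(t) is replaced by S at the start of its bin."""
    t = np.arange(len(survival))
    s_binned = survival[(t // width) * width]
    return 1.0 - (1.0 - gamma) * np.sum(gamma**t * s_binned)

gamma = 0.995
t = np.arange(20_000)  # long enough that gamma^t * S(t) is negligible at the end
# Hazard that varies within bins: high for 5 steps, then low for 5 steps.
hazard = np.where(t % 10 < 5, 0.02, 0.002)
survival = np.cumprod(1.0 - hazard)

v_true = exact_value(survival, gamma)
biases = {w: v_true - left_binned_value(survival, gamma, w) for w in (1, 2, 4, 8, 16)}
```

Because S(t) is nonincreasing, this particular rule can only overstate survival, so it underestimates the value. With nested bin widths, the bias cannot shrink as bins get coarser. Other binning rules, such as a piecewise-constant hazard fitted per bin, would trade off differently.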

minor comments (2)

- The notation for the survival function S(t|s) and its relation to the learned hazard should be stated explicitly once, including how right-censoring is incorporated in the likelihood.

- [Experiments] The table or figure reporting the benchmark results should include standard deviations across seeds and should state clearly which tasks count as long-horizon, so that the claimed advantages can be assessed directly.
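The first minor comment could be met with one standard discrete-time convention, sketched below. It is an assumption, not the manuscript's stated notation. Here τ_i is the last observed step of trajectory i. δ_i = 1 if the goal is reached at τ_i, and δ_i = 0 if the trajectory is censored, in which case only T > τ_i is known. The goal is folded into s, matching the review's S(t|s) notation.

```latex
\begin{aligned}
h(t \mid s) &= \Pr(T = t \mid T \ge t,\, s), \qquad
S(t \mid s) = \Pr(T > t \mid s) = \prod_{k=0}^{t} \bigl(1 - h(k \mid s)\bigr), \\
\log \mathcal{L} &= \sum_{i} \Bigl[ \delta_i \log h(\tau_i \mid s_i)
  + (1 - \delta_i) \log\bigl(1 - h(\tau_i \mid s_i)\bigr)
  + \sum_{k=0}^{\tau_i - 1} \log\bigl(1 - h(k \mid s_i)\bigr) \Bigr].
\end{aligned}
```

An event contributes Pr(T = τ_i) = h(τ_i) S(τ_i − 1), and a censored trajectory contributes S(τ_i). Both share the product of (1 − h) factors before τ_i.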

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and for recognizing the strengths of the probabilistic reformulation and censored-data handling in SVL. We address the two major comments point by point below, acknowledging where additional analysis would strengthen the claims, and describe the revisions we will incorporate.


- Referee: [§3 (Practical Value Estimators)] Binned approximations and their equations: The manuscript claims that the two binned infinite-horizon approximations faithfully capture long-horizon objectives. However, when the time-to-goal distribution is heavy-tailed or the hazard varies within bins, replacing the infinite sum with a coarser discretization introduces non-negligible bias relative to the true E[γ^T]. This is load-bearing for the superiority claims on complex long-horizon tasks, and the paper provides neither an error bound on the discretization nor empirical checks that the bias remains smaller than the variance of TD baselines.

Authors: We agree that binning can introduce discretization bias when tails are heavy or hazards vary within bins. The manuscript selects bin widths from the empirical time-to-goal distribution in the offline dataset to keep this bias small relative to the stability gains, and the reported outperformance on long-horizon tasks provides indirect support. To address the concern directly, we will add an appendix containing an empirical comparison of the binned estimators against a fine-grained Monte Carlo approximation of the infinite-horizon sum on the same benchmarks; this will quantify the discretization error and confirm it remains smaller than the performance margin over TD baselines. We will also expand the main text to discuss the bias-variance tradeoff guiding bin selection. (Revision: partial.)

- Referee: [§2 (Closed-form Identity)] The central identity V(s) = 1 - (1-γ) ∑_{t=0}^∞ γ^t S(t|s) is presented as following directly from the distributional Monte Carlo perspective. While the identity itself may be parameter-free, the hazard model is fit by MLE to finite offline data, so extrapolation error in the tail (beyond the observed censoring times) is uncontrolled; the manuscript lacks a quantitative analysis of how this error propagates to value estimates on the long-horizon benchmarks where SVL is claimed to excel.

Authors: The identity is exact and parameter-free; it follows directly from the definition of the survival function and the geometric discounting without reference to any particular hazard model. Maximum-likelihood estimation on right-censored trajectories is a standard, consistent procedure in survival analysis that permits tail extrapolation under the model's functional assumptions. While the current manuscript does not contain a dedicated propagation analysis, the empirical results on long-horizon tasks (where tail behavior is critical) indicate that any extrapolation error does not negate the observed advantages. In revision we will add a quantitative sensitivity study that varies the degree of censoring in the training data and measures the resulting change in value estimates, thereby bounding the practical impact of tail extrapolation. (Revision: partial.)
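The tail-extrapolation question in this exchange can be made concrete with a simple bound. This is a sketch, not the paper's analysis. Truncating the identity at a horizon H gives the exact finite-horizon value E[γ^T 1{T < H}]. Whatever the hazard does beyond H, the missing tail can add at most γ^H S(H − 1). The sketch assumes this particular form of finite-horizon truncation, and the paper's truncated estimator may be defined differently. The constant hazard is made up, and the function names are hypothetical.

```python
import numpy as np

def truncated_value(survival, gamma, horizon):
    """E[gamma^T 1{T < H}] from S(0), ..., S(H-1) alone (hypothetical helper).

    Stopping the telescoping sum at H-1 gives
    1 - (1 - gamma) * sum_{t<H} gamma^t S(t) - gamma^H S(H-1).
    """
    s = np.asarray(survival[:horizon], dtype=float)
    t = np.arange(horizon)
    return 1.0 - (1.0 - gamma) * np.sum(gamma**t * s) - gamma**horizon * s[-1]

def tail_bound(survival, gamma, horizon):
    """For any behavior after H-1: E[gamma^T 1{T >= H}] <= gamma^H S(H-1)."""
    return gamma**horizon * survival[horizon - 1]

gamma, h = 0.99, 0.01
survival = (1.0 - h) ** (np.arange(10_000) + 1)          # constant hazard: S(t) = (1 - h)^(t + 1)
v_full = truncated_value(survival, gamma, len(survival))  # tail beyond 10k steps is negligible
report = {
    H: (v_full - truncated_value(survival, gamma, H), tail_bound(survival, gamma, H))
    for H in (50, 100, 200, 400)
}
```

The bound depends only on the survival probability at the truncation point. Given the fitted survival up to H, it therefore caps how far any extrapolation beyond H could move the value estimate.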

Circularity Check

No significant circularity; core identity is a standard mathematical re-expression independent of fitted parameters


The paper derives a closed-form identity V(s) = 1 - (1-γ) ∑ γ^t S(t|s) from the distributional Monte Carlo view of goal-reaching returns. This is exactly the known relation E[γ^T] = 1 - (1-γ) ∑ γ^t P(T > t) for hitting time T, which follows directly from the definition of the discounted return under sparse goal rewards and holds without reference to any model, fit, or self-citation. The subsequent hazard-model MLE step is ordinary statistical estimation on censored data; the resulting value estimator is an approximation to this identity rather than a quantity forced to equal its own training objective. Finite-horizon truncation and binned approximations are explicitly introduced as practical compromises, not claimed to be exact by construction. No load-bearing self-citation or ansatz smuggling appears in the provided derivation chain. The approach therefore remains self-contained.
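The telescoping step behind this relation is written out below. It assumes V(s) = E[γ^T | s], i.e. unit reward on first reaching the goal. T ∈ {0, 1, 2, ...} is the first hitting time, S(t) = P(T > t), S(−1) := 1, and γ^T := 0 when the goal is never reached. Because 0 ≤ γ < 1 and 0 ≤ S(t) ≤ 1, every series converges absolutely, which justifies splitting and re-indexing the sums.

```latex
\begin{aligned}
\mathbb{E}[\gamma^T]
  &= \sum_{t=0}^{\infty} \gamma^t \Pr(T = t)
   = \sum_{t=0}^{\infty} \gamma^t \bigl(S(t-1) - S(t)\bigr) \\
  &= S(-1) + \gamma \sum_{t=0}^{\infty} \gamma^{t} S(t) - \sum_{t=0}^{\infty} \gamma^{t} S(t) \\
  &= 1 - (1 - \gamma) \sum_{t=0}^{\infty} \gamma^{t} S(t).
\end{aligned}
```

Conditioning every probability on the start state s gives V(s) = 1 − (1 − γ) Σ_t γ^t S(t|s) without change.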

Axiom & Free-Parameter Ledger

free parameters (1)

- horizon truncation length or bin sizes

axioms (1)

- Domain assumption: the environment is a goal-conditioned MDP in which time-to-goal is a well-defined random variable that may be right-censored.
