Response time of lateral predictive coding and benefits of modular structures

Pith reviewed 2026-05-09 23:00 UTC · model grok-4.3

The pith

Optimal lateral predictive coding networks can reduce response time to near the theoretical lower bound while keeping predictive error and signal robustness unchanged, and modular structures with fewer connections perform equivalently to fully connected networks.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The characteristic response time of the LPC system can be reduced until it closely approaches the lower-bound value without compromising the mean predictive error or the information robustness of signal transmission. Optimal LPC networks with a modular structural organization and a greatly reduced number of lateral interactions perform as well as all-to-all connected networks in feature detection performance, response time, energetic cost and information robustness.

What carries the argument

Recurrent dynamical equations of lateral predictive coding networks whose interaction strengths are optimized under the joint constraints of prediction error, information robustness, and now response speed, with modular connectivity patterns that sparsify lateral links while preserving the same performance metrics.
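
A minimal sketch of how a characteristic response time could be read off a linear recurrent model. It assumes dynamics of the form dx/dt = s - x - W x, where `W` is the lateral interaction matrix, `s` the input and `x` the output (prediction error). This form, the function names, and the definition of response time as the inverse of the smallest real part of the eigenvalues of I + W are assumptions made for illustration and are not taken from the paper.

```python
import numpy as np

def steady_state(W, s):
    """Fixed point of the assumed dynamics dx/dt = s - x - W x."""
    n = W.shape[0]
    return np.linalg.solve(np.eye(n) + W, s)

def response_time(W):
    """Slowest relaxation timescale: 1 / min Re(eigenvalue of I + W)."""
    n = W.shape[0]
    rates = np.linalg.eigvals(np.eye(n) + W).real
    slowest = rates.min()
    if slowest <= 0:
        raise ValueError("assumed dynamics are not stable for this W")
    return 1.0 / slowest

# Toy two-unit example (hypothetical weights, not from the paper).
W = np.array([[0.0, 0.5],
              [0.5, 0.0]])
s = np.array([1.0, 2.0])

x = steady_state(W, s)   # output once the transient has decayed
tau = response_time(W)   # timescale of the slowest decaying mode
print(x, tau)
```

In this toy case the eigenvalues of I + W are 0.5 and 1.5, so the slowest mode relaxes with time constant 2. Under the stated assumptions, shortening response time amounts to pushing the smallest eigenvalue real part upward.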

If this is right

- Response time can be brought close to the network's lower bound without raising energetic cost or lowering robustness.

- Modular connectivity patterns achieve the same feature detection accuracy as complete connectivity at the same cost and speed.

- The same optimization framework that previously traded cost against robustness now also controls dynamics without new trade-offs.

- Sparse modular networks remain stable and efficient under the same input distributions used for the fully connected case.

Where Pith is reading between the lines

- Such networks could serve as building blocks for larger hierarchical models where each module processes local features on fast timescales.

- The equivalence of modular and dense versions suggests that biological circuits might evolve sparse lateral wiring without performance loss if the same optimization principle applies.

- The approach offers a way to test whether real sensory areas operate near the derived response-time bound by comparing measured latencies to the predicted minimum for given connectivity density.

Load-bearing premise

That changes to the recurrent interaction terms can shorten response time independently of the existing error and robustness values, and that reducing connections to a modular pattern leaves those values and feature extraction quality intact.

What would settle it

Constructing an optimal LPC network, applying the response-time adjustment, and measuring whether mean predictive error rises or information robustness falls, or whether a modular version shows lower feature detection accuracy than its fully connected counterpart under identical input statistics.

Original abstract

Lateral predictive coding (LPC) is a simple theoretical framework to appreciate feature detection in biological neural circuits. Recent theoretical work [Huang et al., Phys. Rev. E 112, 034304 (2025)] has successfully constructed optimal LPC networks capable of extracting non-Gaussian hidden input features by imposing the tradeoff between energetic cost and information robustness, but the resulting dynamical systems of recurrent interactions can be very slow in responding to external inputs. We investigate response-time reduction in the present paper. We find that the characteristic response time of the LPC system can be minimized to closely approaching the lower-bound value without compromising the mean predictive error (energetic cost) and the information robustness of signal transmission. We further demonstrate that optimal LPC networks taking a modular structural organization with extensively reduced number of lateral interactions are equally excellent as all-to-all completely connected networks, in terms of feature detection performance, response time, energetic cost and information robustness.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

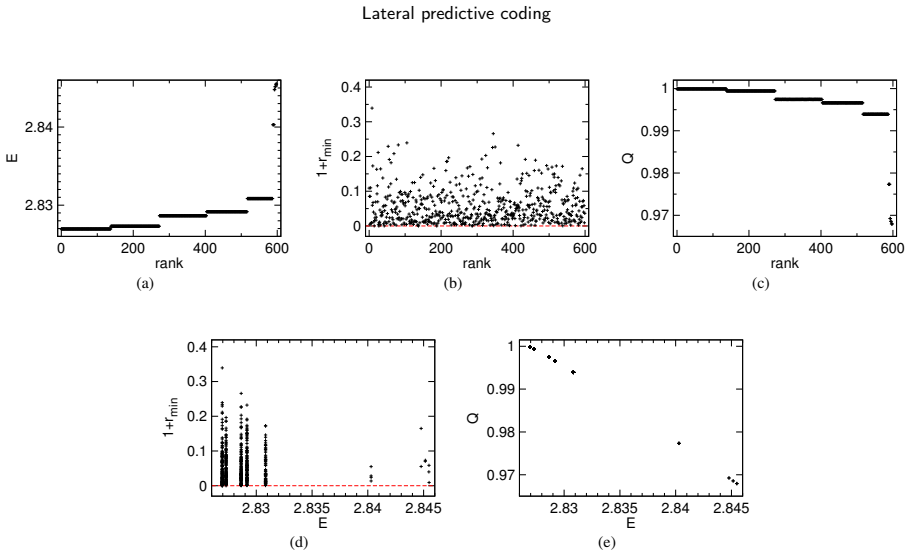

Summary. The manuscript extends prior work on optimal lateral predictive coding (LPC) networks, which balance energetic cost against information robustness to extract non-Gaussian features. It shows that the characteristic response time of the resulting recurrent dynamics can be minimized to approach the theoretical lower bound while leaving mean predictive error and information robustness unchanged. It further shows that modular architectures with substantially reduced lateral connectivity achieve equivalent performance to all-to-all networks on feature detection, response time, energetic cost, and robustness, supported by explicit constructions, numerical optimization protocols, and direct modular-versus-dense comparisons.

Significance. If the reported invariance holds, the work removes a practical limitation of earlier LPC models (slow transients) without sacrificing their core advantages, and demonstrates that sparse modular connectivity is sufficient for optimality. This has direct implications for understanding efficient feature detection in biological circuits and for designing sparse recurrent networks. The explicit constructions, simulation controls, and side-by-side error/time histograms constitute reproducible, falsifiable evidence that strengthens the contribution.

minor comments (3)

- The definition of the lower-bound response time and the precise optimization procedure used to approach it should be stated explicitly in the main text (currently referenced only to the prior Huang et al. paper) so that the invariance claim can be verified without external material.

- Figure captions for the modular-versus-all-to-all comparisons should include the exact sparsity level (fraction of retained lateral connections) and the number of independent trials used to generate the histograms and error curves.

- A brief statement of the numerical integrator and convergence criterion employed for the recurrent dynamics would improve reproducibility of the reported time-constant distributions.
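
The reporting items requested above could be made concrete along the following lines. This is a sketch under stated assumptions: the equal-sized block structure in `modular_mask`, the forward-Euler scheme, the step size `dt`, and the tolerance `tol` are hypothetical choices for illustration, and the dynamics dx/dt = s - x - W x are an assumed linear form rather than the paper's stated equations.

```python
import numpy as np

def modular_mask(n_units, n_modules):
    """Boolean mask allowing lateral links only within equal-sized modules."""
    if n_units % n_modules != 0:
        raise ValueError("n_units must be divisible by n_modules")
    labels = np.repeat(np.arange(n_modules), n_units // n_modules)
    mask = labels[:, None] == labels[None, :]
    np.fill_diagonal(mask, False)  # no self-interaction
    return mask

def retained_fraction(mask):
    """Fraction of the n(n-1) possible lateral links that are kept."""
    n = mask.shape[0]
    return mask.sum() / (n * (n - 1))

def relax(W, s, dt=0.01, tol=1e-8, max_steps=1_000_000):
    """Forward-Euler integration of dx/dt = s - x - W x until max|dx/dt| < tol."""
    x = np.zeros_like(s, dtype=float)
    for step in range(1, max_steps + 1):
        dx = s - x - W @ x
        x = x + dt * dx
        if np.max(np.abs(dx)) < tol:
            return x, step * dt  # state and elapsed simulated time
    raise RuntimeError("did not converge within max_steps")

# Sparsity level for 8 units split into 2 modules.
fraction = retained_fraction(modular_mask(8, 2))

# Relaxation of a toy two-unit network (hypothetical weights).
W = np.array([[0.0, 0.5], [0.5, 0.0]])
s = np.array([1.0, 2.0])
x_final, elapsed = relax(W, s)
print(fraction, x_final, elapsed)
```

Stating the retained fraction, the integrator, the step size and the stopping tolerance in this way would let a reader reproduce a time-constant histogram without consulting external material.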

Simulated Author's Rebuttal

We thank the referee for their positive summary of our work on response-time minimization in optimal lateral predictive coding networks and the equivalence of modular architectures to dense ones. The recommendation for minor revision is noted, and we appreciate the recognition of the explicit constructions and numerical evidence provided.

Circularity Check

No significant circularity; derivation chain is self-contained

full rationale

The paper starts from the optimal LPC networks constructed in the cited prior work via the energetic-cost versus information-robustness tradeoff, then adds response-time minimization as an independent objective. It reports that this minimization reaches near the theoretical lower bound while the mean predictive error and robustness metrics remain unchanged, and that modular sparsity preserves all four metrics at full-connectivity levels. These invariances are presented as outcomes of explicit numerical optimization and direct comparisons (error curves, time-constant histograms) rather than definitions or reparameterizations. The self-citation supplies the base model but does not bear the load of the new claims, which rest on the paper's own constructions and simulations. No equation or step reduces by construction to prior inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: LPC networks extract non-Gaussian hidden features via an energetic-cost versus information-robustness tradeoff

Reference graph

Works this paper leans on

-

[1]

Retrieval capabilities of hierarchical networks: From Dyson to Hopfield

Agliari, E., Barra, A., Galluzzi, A., Guerra, F., Tantari, D., Tavani, F., 2015. Retrieval capabilities of hierarchical networks: From Dyson to Hopfield. Physical Review Letters 114, 028103. doi:10.1103/PhysRevLett.114.028103

-

[2]

Predictive coding is a consequence of energy efficiency in recurrent neural networks

Ali, A., Ahmad, N., de Groot, E., van Gerven, M.A.J., Kietzmann, T.C., 2022. Predictive coding is a consequence of energy efficiency in recurrent neural networks. Patterns 3, 100639. doi:10.1016/j.patter.2022.100639

-

[3]

On the equivalence of Hopfield networks and Boltzmann machines

Barra, A., Bernacchia, A., Santucci, E., Contucci, P., 2012. On the equivalence of Hopfield networks and Boltzmann machines. Neural Networks 34, 1–9. doi:10.1016/j.neunet.2012.06.003

-

[4]

Canonical microcircuits for predictive coding

Bastos, A.M., Usrey, W.M., Adams, R.A., Mangun, G.R., Fries, P., Friston, K.J., 2012. Canonical microcircuits for predictive coding. Neuron 76, 695–711. doi:10.1016/j.neuron.2012.10.038

-

[5]

Bell, A.J., Sejnowski, T.J., 1995. An information-maximization approach to blind separation and blind deconvolution. Neural Computation 7, 1129–1159. doi:10.1162/neco.1995.7.6.1129

-

[6]

Features of spatial and functional segregation and integration of the primate connectome revealed by trade-off between wiring cost and efficiency

Chen, Y., Wang, S., Hilgetag, C.C., Zhou, C., 2017. Features of spatial and functional segregation and integration of the primate connectome revealed by trade-off between wiring cost and efficiency. PLOS Computational Biology 13(9), e1005776. doi:10.1371/journal.pcbi.1005776

-

[7]

Lateral interactions in visual cortex

Gilbert, C.D., Ts’o, D.Y., Wiese, T.N., 1991. Lateral interactions in visual cortex, in: Valberg, A., Lee, B.B. (Eds.), From Pigments to Perception: Advances in Understanding Visual Processes. Plenum Press, pp. 239–247

-

[8]

Grossberg, S., 2013. Recurrent neural networks. Scholarpedia 8(2), 1888. doi:10.4249/scholarpedia.1888

-

[9]

Development of low entropy coding in a recurrent network

Harpur, G.F., Prager, R.W., 1996. Development of low entropy coding in a recurrent network. Network: Computation in Neural Systems 7, 277–284. doi:10.1088/0954-898X_7_2_007

-

[10]

Statistical Mechanics of Neural Networks

Huang, H., 2022. Statistical Mechanics of Neural Networks. Higher Education Press, Beijing, China

-

[11]

Lateral predictive coding revisited: internal model, symmetry breaking, and response time

Huang, Z.Y., Fan, X.Y., Zhou, J., Zhou, H.J., 2022. Lateral predictive coding revisited: internal model, symmetry breaking, and response time. Commun. Theor. Phys. 74, 095601. doi:10.1088/1572-9494/ac7c03

-

[12]

Discontinuous phase transitions of feature detection in lateral predictive coding

Huang, Z.Y., Wang, W., Zhou, H.J., 2025. Discontinuous phase transitions of feature detection in lateral predictive coding. Physical Review E 112, 034304. doi:10.1103/3jk3-x177

G. Cai et al.: Preprint submitted to Elsevier

-

[13]

Huang, Z.Y., Zhou, R., Huang, M., Zhou, H.J., 2024. Energy–information trade-off induces continuous and discontinuous phase transitions in lateral predictive coding. Science China: Phys. Mech. Astron. 67, 260511. doi:10.1007/s11433-024-2341-2

-

[14]

How specific classes of retinal cells contribute to vision: a computational model

Kartsaki, E., 2022. How specific classes of retinal cells contribute to vision: a computational model. Ph.D. thesis. Biosciences Institute, Newcastle University. URL:https://theses.hal.science/tel-03869570v3

-

[15]

Liang, J., Wang, S.J., Zhou, C., 2022. Less is more: wiring-economical modular networks support self-sustained firing-economical neural avalanches for efficient processing. National Science Review 9, nwab102. doi:10.1093/nsr/nwab102

-

[16]

Why does deep and cheap learning work so well?

Lin, H.W., Tegmark, M., Rolnick, D., 2017. Why does deep and cheap learning work so well? J. Stat. Phys. 168, 1223–1247. doi:10.1007/s10955-017-1836-5

-

[17]

Where is the error? hierarchical predictive coding through dendritic error computation

Mikulasch, F.A., Rudelt, L., Wibral, M., Priesemann, V., 2023. Where is the error? hierarchical predictive coding through dendritic error computation. Trends in Neurosciences 46, 45–59. doi:10.1016/j.tins.2022.09.007

-

[18]

Millidge, B., Salvatori, T., Song, Y., Bogacz, R., Lukasiewicz, T., 2022. Predictive coding: Towards a future of deep learning beyond backpropagation?, in: Proceedings of the 31st International Joint Conference on Artificial Intelligence (IJCAI), Vienna, Austria. ACM Press, New York, pp. 5538–5545

-

[19]

Predictive coding networks for temporal prediction

Millidge, B., Tang, M., Osanlouy, M., Harper, N.S., Bogacz, R., 2024. Predictive coding networks for temporal prediction. PLoS Comput. Biol. 20, e1011183. doi:10.1371/journal.pcbi.1011183

-

[20]

Qiu, J., Huang, H., 2024. An optimization-based equilibrium measure describing fixed points of non-equilibrium dynamics: application to the edge of chaos. Commun. Theor. Phys. 77, 035601. doi:10.1088/1572-9494/ad8126

-

[21]

Salatiello, A., 2025. Modularity is the bedrock of natural and artificial intelligence, in: Second Workshop on Representational Alignment at ICLR 2025, p. arXiv2602.18960. URL:https://openreview.net/forum?id=kqrnhp3nNS

-

[22]

Schneidman, E., Berry II, M.J., Segev, R., Bialek, W., 2006. Weak pairwise correlations imply strongly correlated network states in a neural population. Nature 440, 1007–1012. doi:10.1038/nature04701

-

[23]

Extensive parallel processing on scale-free networks

Sollich, P., Tantari, D., Annibale, A., Barra, A., 2014. Extensive parallel processing on scale-free networks. Physical Review Letters 113, 238106. doi:10.1103/PhysRevLett.113.238106

-

[24]

Predictive coding: a fresh view of inhibition in the retina

Srinivasan, M.V., Laughlin, S.B., Dubs, A., 1982. Predictive coding: a fresh view of inhibition in the retina. Proc. R. Soc. Lond. B 216, 427–459. doi:10.1098/rspb.1982.0085

-

[25]

Lateral interactions in primary visual cortex: A model bridging physiology and psychophysics

Stemmler, M., Usher, M., Niebur, E., 1995. Lateral interactions in primary visual cortex: A model bridging physiology and psychophysics. Science 269, 1877–1880. doi:10.1126/science.7569930

-

[26]

The scaling limit of high-dimensional online independent component analysis

Wang, C., Lu, Y.M., 2019. The scaling limit of high-dimensional online independent component analysis. Journal of Statistical Mechanics: Theory and Experiment 2019, 124011. doi:10.1088/1742-5468/ab39d6

-

[27]

Estimates of storage capacity in the q-state Potts-glass neural network

Xiong, D., Zhao, H., 2010. Estimates of storage capacity in the q-state Potts-glass neural network. Journal of Physics A: Mathematical and Theoretical 43, 445001. doi:10.1088/1751-8113/43/44/445001

-

[28]

Energy-efficient neural information processing in individual neurons and neuronal networks

Yu, L., Yu, Y., 2017. Energy-efficient neural information processing in individual neurons and neuronal networks. J. Neurosci. Res. 95, 2253–2266. doi:10.1002/jnr.24131

-

[29]

Learning Hamiltonian dynamics with reservoir computing

Zhang, H., Fan, H., Wang, L., Wang, X., 2021. Learning Hamiltonian dynamics with reservoir computing. Physical Review E 104, 024205. doi:10.1103/PhysRevE.104.024205

-

[30]

Zhang, M., Chitic, R., Bohté, S.M., 2025. Energy optimization induces predictive-coding properties in a multi-compartment spiking neural network model. PLoS Comput. Biol. 21, e1013112. doi:10.1371/journal.pcbi.1013112

-

[31]

Network landscape from a Brownian particle’s perspective

Zhou, H., 2003. Network landscape from a Brownian particle’s perspective. Physical Review E 67, 041908. doi:10.1103/PhysRevE.67.041908
