CT-Guided Spatially-varying Regularization for Voxel-Wise Deformable Whole-Body PET Registration

Pith reviewed 2026-05-08 08:57 UTC · model grok-4.3

The pith

Paired CT scans supply a voxel-wise map that adapts regularization strength to tissue type during whole-body PET deformable registration.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that a CT-derived voxel-wise regularization map for the dense displacement field (DDF), which enforces stronger smoothness on rigid anatomy and weaker constraints on deformable soft tissue, yields statistically significant gains over a weakly-supervised baseline in whole-body registration performance and organ-wise alignment on cross-tracer PET/CT data from 296 patients.

What carries the argument

The voxel-wise regularization map constructed from the paired CT volume to modulate the strength of the dense displacement field regularizer at each location.
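As a minimal illustration of what "modulating the regularizer at each location" means, the NumPy sketch below weights a first-order (diffusion) smoothness penalty by a voxel-wise map. The squared-gradient form, the (3, D, H, W) array layout, and the function name weighted_smoothness are assumptions for illustration; the page does not state which regularizer the paper weights. A map filled with one constant value reduces to the conventional single global weight.

```python
import numpy as np


def weighted_smoothness(ddf: np.ndarray, reg_map: np.ndarray) -> float:
    """Spatially-weighted diffusion regularizer for a 3D displacement field.

    ddf:     displacement field, shape (3, D, H, W), one channel per axis.
    reg_map: non-negative voxel-wise weights lambda(x), shape (D, H, W).
    Returns the voxel mean of lambda(x) * sum_{i,j} (d u_i / d x_j)^2.
    """
    grad_sq = np.zeros(reg_map.shape, dtype=np.float64)
    for comp in ddf:                    # each displacement component u_i
        for g in np.gradient(comp):     # central differences along each axis
            grad_sq += g ** 2
    return float(np.mean(reg_map * grad_sq))
```

Because the weight multiplies the local penalty, voxels with a large lambda (bone, in this setting) pay more for the same displacement gradient than voxels with a small lambda (soft tissue).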

If this is right

- Whole-body PET registration metrics improve with statistical significance over the uniform-regularization baseline.

- Organ-specific alignment accuracy increases for structures that differ in rigidity.

- The same network remains effective across 18F-PSMA and 18F-FDG tracer pairs, since the CT-derived regularization map does not depend on which PET tracer is used.
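Organ-wise alignment is commonly scored with a per-organ Dice overlap between the fixed image's label map and the warped moving label map, and the rebuttal below names organ-wise Dice among the metrics to be reported. The helper organ_dice is a hypothetical sketch, assuming integer label volumes on a shared grid with 0 as background.

```python
import numpy as np


def organ_dice(fixed_labels: np.ndarray, warped_labels: np.ndarray, organ_ids):
    """Per-organ Dice between a fixed label map and a warped moving label map.

    Both inputs are integer label volumes of identical shape; 0 is background.
    Returns {organ_id: dice}; organs absent from both volumes are NaN.
    """
    scores = {}
    for k in organ_ids:
        a = fixed_labels == k
        b = warped_labels == k
        denom = a.sum() + b.sum()
        # Dice = 2|A and B| / (|A| + |B|); undefined when the organ is absent.
        scores[k] = float(2.0 * np.logical_and(a, b).sum() / denom) if denom else float("nan")
    return scores
```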

Where Pith is reading between the lines

- The approach could be ported to other paired-modality settings, such as MR-CT registration, where one modality supplies tissue-contrast information.

- If the CT map proves robust, it may reduce the amount of manual landmark supervision needed to train the underlying registration network.

- A natural extension would test whether the map can be inferred from the PET image alone in cases where CT is unavailable.

Load-bearing premise

The paired CT volume can be turned into a reliable map that correctly assigns stronger regularization to rigid tissues and weaker regularization to soft tissues.

What would settle it

On the same 296-patient dataset, replacing the CT-derived map with a uniform or randomly permuted map and finding no statistically significant drop in registration metrics or organ alignment would falsify the contribution of the spatial adaptation.
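The falsification test above needs two control maps. A minimal sketch, assuming the CT-derived map is a floating-point array: the uniform control keeps the map's mean so that only the spatial distribution changes, and the permuted control shuffles voxel values so the weight histogram is preserved while the link to anatomy is broken. Matching the mean is a design choice made here, not something the page specifies; control_maps is a hypothetical name.

```python
import numpy as np


def control_maps(reg_map: np.ndarray, seed: int = 0):
    """Build uniform and randomly permuted controls for a voxel-wise map.

    uniform:  every voxel set to the map's mean, so the overall regularization
              budget is unchanged but spatial adaptation is removed.
    permuted: the same voxel values shuffled across locations, keeping the
              weight histogram but destroying the correspondence with anatomy.
    """
    rng = np.random.default_rng(seed)
    uniform = np.full_like(reg_map, reg_map.mean())
    permuted = rng.permutation(reg_map.ravel()).reshape(reg_map.shape)
    return uniform, permuted
```

Training one model per control map with everything else held fixed isolates the contribution of the spatial pattern itself.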

Figures

read the original abstract

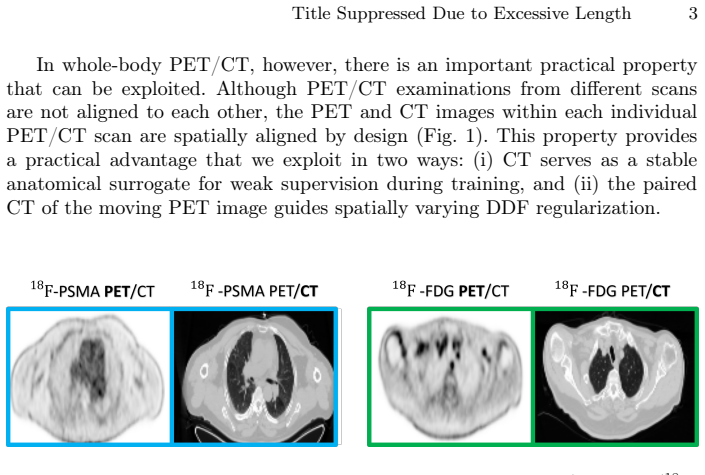

Whole-body Positron Emission Tomography (PET) registration is essential for multi-parametric tumor characterization and assessment of metastatic disease progression. In deep learning-based deformable registration, the dense displacement field (DDF) regularizer is crucial for stabilizing optimization and preventing unrealistic deformations in large 3D volumes. A key challenge in whole-body deformable registration is anatomical heterogeneity: rigid structures (e.g., bones) should undergo stronger regularization, whereas soft tissues require more flexible deformation and weaker constraints. In this work, we propose a simple yet effective CT-guided spatially-varying regularization strategy for whole-body cross-tracer deformable PET registration. The key idea is to use the paired CT volume from the PET/CT acquisition to construct a voxel-wise regularization map for the DDF, replacing the conventional single global regularization weight. This yields anatomy-adaptive regularization strength across rigid and soft tissues. The proposed method is evaluated on a real clinical cross-tracer PET/CT dataset of 296 patients involving 18F-PSMA and 18F-FDG, showing that it achieves statistically significant improvements over a weakly-supervised registration baseline in both whole-body registration performance and organ-wise alignment.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a CT-guided spatially-varying regularization strategy for deep learning-based deformable registration of whole-body PET images. It constructs a voxel-wise regularization map for the dense displacement field (DDF) from the paired CT volume acquired in PET/CT scans, replacing a single global regularization weight to provide stronger constraints on rigid structures (e.g., bone) and weaker constraints on soft tissues. The approach is evaluated on a clinical cross-tracer dataset of 296 patients (18F-PSMA and 18F-FDG), claiming statistically significant improvements over a weakly-supervised registration baseline in both whole-body registration performance and organ-wise alignment.

Significance. If the central claim holds, the work would be significant for clinical PET registration tasks, as whole-body deformable alignment is key for multi-parametric tumor characterization and metastatic progression assessment. The method's practical appeal lies in leveraging routinely available paired CT data for anatomy-adaptive regularization without introducing new learned parameters or external supervision, potentially improving stability in large 3D volumes while addressing anatomical heterogeneity.

major comments (2)

- [Methods] The construction of the voxel-wise regularization map from CT intensities is load-bearing for the central claim but lacks an explicit equation or algorithmic description (e.g., whether it uses fixed thresholding, a monotonic intensity-to-weight function, or learned mapping). Without this, it is impossible to verify robustness to common CT artifacts such as contrast agents, metal implants, or partial-volume effects at bone-soft tissue boundaries, which could cause the map to assign incorrect regularization strengths and undermine the reported gains in DDF stability and organ alignment.

- [Experiments] The experimental section reports statistically significant improvements on 296 patients but does not provide the concrete metrics (e.g., Dice, TRE, or folding percentage), p-values, effect sizes, or the precise implementation of the weakly-supervised baseline. This prevents assessment of whether the spatially-varying regularization is the true driver of improvement or if gains could be explained by other implementation details.
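The referee asks for p-values and effect sizes. Because both methods are evaluated on the same patients, a paired analysis is appropriate. The sketch below swaps in a sign-flip permutation test and Cohen's d_z (NumPy only) as one reasonable choice; the page does not say which test the paper actually used, and a Wilcoxon signed-rank test would be an equally common alternative. paired_comparison is a hypothetical name.

```python
import numpy as np


def paired_comparison(baseline, proposed, n_perm: int = 10000, seed: int = 0):
    """Paired per-patient comparison of two methods evaluated on the same cases.

    Returns (mean difference, Cohen's d_z, two-sided sign-flip permutation p).
    Positive differences mean `proposed` scored higher than `baseline`.
    """
    diff = np.asarray(proposed, dtype=float) - np.asarray(baseline, dtype=float)
    mean_diff = diff.mean()
    d_z = mean_diff / diff.std(ddof=1)      # standardized paired effect size
    rng = np.random.default_rng(seed)
    # Under H0 each patient's difference is equally likely to take either sign.
    signs = rng.choice([-1.0, 1.0], size=(n_perm, diff.size))
    null_means = (signs * diff).mean(axis=1)
    # Add-one correction keeps the Monte Carlo p-value strictly positive.
    p = (np.sum(np.abs(null_means) >= abs(mean_diff)) + 1) / (n_perm + 1)
    return float(mean_diff), float(d_z), float(p)
```

With per-organ Dice, the test would be run once per organ, so a multiple-comparison correction is worth reporting alongside the raw p-values.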

minor comments (1)

- [Abstract] The abstract states the key idea and results but omits the specific quantitative improvements and the exact form of the regularization map, which would allow readers to immediately gauge the practical impact.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. We address each major comment below and will revise the manuscript to improve clarity and completeness.

Referee: [Methods] The construction of the voxel-wise regularization map from CT intensities is load-bearing for the central claim but lacks an explicit equation or algorithmic description (e.g., whether it uses fixed thresholding, a monotonic intensity-to-weight function, or learned mapping). Without this, it is impossible to verify robustness to common CT artifacts such as contrast agents, metal implants, or partial-volume effects at bone-soft tissue boundaries, which could cause the map to assign incorrect regularization strengths and undermine the reported gains in DDF stability and organ alignment.

Authors: We agree that an explicit algorithmic description is required for reproducibility. The current manuscript describes the approach at a conceptual level but does not include the precise mapping. In the revised version we will add a dedicated methods subsection containing the explicit equation (a piecewise linear function of CT Hounsfield units that assigns higher regularization weights to bone-like intensities) together with the algorithmic steps used to generate the voxel-wise map. We will also add a short discussion of robustness to common CT artifacts, including how contrast agents and metal implants are handled via intensity clipping and how partial-volume effects at boundaries are mitigated by spatial smoothing of the map. revision: yes
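The rebuttal describes the map only as a piecewise-linear function of Hounsfield units with intensity clipping and spatial smoothing. A minimal sketch of that recipe follows; every breakpoint and weight (hu_soft, hu_bone, w_soft, w_bone, sigma_vox) and both function names are hypothetical placeholders, not values or code from the paper. Here np.interp saturates outside [hu_soft, hu_bone], which acts as the clipping step: a metal implant at several thousand HU cannot receive more than w_bone.

```python
import numpy as np


def _gaussian_smooth(vol: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur with edge padding (NumPy only)."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=float)
    kernel = np.exp(-0.5 * (x / sigma) ** 2)
    kernel /= kernel.sum()
    out = vol
    for axis in range(vol.ndim):
        pad = [(radius, radius) if a == axis else (0, 0) for a in range(vol.ndim)]
        padded = np.pad(out, pad, mode="edge")
        # 'valid' convolution on the padded axis restores the original length.
        out = np.apply_along_axis(
            lambda v: np.convolve(v, kernel, mode="valid"), axis, padded)
    return out


def ct_to_reg_map(ct_hu, hu_soft=0.0, hu_bone=300.0, w_soft=0.1, w_bone=1.0,
                  sigma_vox=1.0):
    """Hypothetical HU-to-weight map: piecewise-linear ramp, then smoothing.

    HU <= hu_soft maps to w_soft, HU >= hu_bone maps to w_bone, with a
    linear ramp in between. All defaults are illustrative placeholders.
    """
    hu = np.asarray(ct_hu, dtype=float)
    # np.interp saturates outside [hu_soft, hu_bone], so extreme values
    # (e.g. metal) are effectively clipped to w_bone.
    weights = np.interp(hu, [hu_soft, hu_bone], [w_soft, w_bone])
    if sigma_vox > 0:
        # Blur the map so weights change gradually across bone/soft-tissue edges.
        weights = _gaussian_smooth(weights, sigma_vox)
    return weights
```

Smoothing trades sharper bone boundaries for robustness to partial-volume voxels; contrast-enhanced vessels falling inside the ramp would still receive intermediate weights, which is exactly the artifact case the referee raises.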

Referee: [Experiments] The experimental section reports statistically significant improvements on 296 patients but does not provide the concrete metrics (e.g., Dice, TRE, or folding percentage), p-values, effect sizes, or the precise implementation of the weakly-supervised baseline. This prevents assessment of whether the spatially-varying regularization is the true driver of improvement or if gains could be explained by other implementation details.

Authors: We acknowledge that the experimental reporting would benefit from greater detail. The revised manuscript will include an expanded results section with a comprehensive table reporting all quantitative metrics (organ-wise Dice scores, target registration error on landmarks where available, and percentage of voxels with negative Jacobian determinant), the exact p-values and effect sizes from the statistical tests performed, and a precise description of the weakly-supervised baseline (including the loss terms, weighting factors, and network architecture used). This will allow readers to isolate the contribution of the spatially-varying regularization. revision: yes
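The folding metric named in the rebuttal, the percentage of voxels with a negative Jacobian determinant, can be computed from the DDF with finite differences. A minimal sketch, assuming displacements in voxel units on an isotropic grid, with channel i of the field displacing along array axis i; folding_percentage is a hypothetical name.

```python
import numpy as np


def folding_percentage(ddf: np.ndarray) -> float:
    """Percentage of voxels where the map x -> x + u(x) has det(J) < 0.

    ddf: displacement field in voxel units, shape (3, D, H, W).
    """
    # grads[i][j] approximates d u_i / d x_j with central differences.
    grads = [np.gradient(ddf[i]) for i in range(3)]
    jac = np.empty(ddf.shape[1:] + (3, 3))
    for i in range(3):
        for j in range(3):
            jac[..., i, j] = grads[i][j] + (1.0 if i == j else 0.0)  # J = I + grad u
    det = np.linalg.det(jac)        # batched over all voxels
    return float(100.0 * np.mean(det < 0))
```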

Circularity Check

No significant circularity in the derivation chain

The paper describes a direct construction: the paired CT volume is used to build a voxel-wise regularization map that replaces a single global weight for the DDF regularizer, providing anatomy-adaptive strength for rigid versus soft tissues. No equations, fitted parameters, or self-citations are presented that reduce the proposed map or the claimed improvements to the inputs by construction. The evaluation consists of empirical comparison on a 296-patient cross-tracer PET/CT dataset against a weakly-supervised baseline, with reported statistical significance in whole-body and organ-wise metrics. This remains an independent methodological choice and empirical test rather than a self-referential or tautological derivation.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: Paired CT and PET volumes are available and sufficiently aligned to derive a meaningful voxel-wise regularization map.
