
The Security Cost of Intelligence: AI Capability, Cyber Risk, and Deployment Paradox

Pith reviewed 2026-05-08 08:50 UTC · model grok-4.3

The pith

Better AI can lead firms to deploy less when capability increases authority exposure under weak governance.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central result shows a deployment paradox: in high-loss environments, better AI can lead a firm to deploy less when capability is deployed through broader authority exposure under weak governance. Optimal deployment also falls below the no-risk benchmark, and this shortfall widens with breach-loss magnitude and with the authority exposure attached to more capable systems. Governance investment that reduces breach-loss magnitude shrinks the paradox region itself, while breach externalities expand the range of environments in which deployment is socially constrained.

What carries the argument

An analytical model of the firm's joint choice of AI deployment quantity and cybersecurity investment. In the model, deployment carries an authority exposure that rises with capability and raises either breach probability or breach loss.
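The joint choice can be illustrated with a toy numerical model. This is a minimal sketch and not the paper's specification. The following are hypothetical assumptions introduced here:

- the productivity term c*d - d**2/2;
- the convex exposure schedule e(c) = kappa*c**2;
- the breach-loss term L*e(c)*d/(1 + gamma*s);
- the function names expected_profit and joint_optimum.

A brute-force grid search over deployment d and security investment s stands in for whatever solution method the paper uses.

```python
def expected_profit(d, s, c, L, kappa=1.0, gamma=1.0):
    """Toy expected profit: productivity minus breach loss minus security spend.

    Hypothetical assumptions: productivity c*d - d**2/2, authority exposure
    e(c) = kappa*c**2 per unit of deployment, and security spend s scaling
    the breach loss down by 1/(1 + gamma*s).
    """
    exposure = kappa * c ** 2
    breach_loss = L * exposure * d / (1.0 + gamma * s)
    return c * d - 0.5 * d ** 2 - breach_loss - s


def joint_optimum(c, L, kappa=1.0, gamma=1.0, d_max=2.0, s_max=2.0, step=0.005):
    """Brute-force grid search for the (d, s) pair that maximizes expected profit."""
    n_d = int(round(d_max / step))
    n_s = int(round(s_max / step))
    best_value, best_d, best_s = float("-inf"), 0.0, 0.0
    for i in range(n_d + 1):
        d = i * step
        for j in range(n_s + 1):
            s = j * step
            value = expected_profit(d, s, c, L, kappa, gamma)
            if value > best_value:  # strict '>' keeps the first maximizer found
                best_value, best_d, best_s = value, d, s
    return best_d, best_s


if __name__ == "__main__":
    for c in (0.5, 1.0, 1.5):
        d_star, s_star = joint_optimum(c, L=0.5)
        print(f"c={c}: d*={d_star:.3f}, s*={s_star:.3f}")
```

With the default gamma = 1.0 and L = 0.5, the search returns deployment of about 0.375, 0.5 and 0.375 at capabilities 0.5, 1.0 and 1.5. In all three cases s stays at zero. Deployment first rises and then falls with capability, which is the paradox pattern in miniature. With L = 0, deployment simply equals c, which is the no-risk benchmark. Raising gamma to 10.0 at c = 1.0 makes security investment positive and lifts deployment above 0.5. The toy model therefore does respond to the security margin.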

If this is right

- Optimal deployment falls below the level that would occur with zero cyber risk.

- The shortfall from the no-risk benchmark grows with larger breach losses.

- Governance actions that lower breach-loss size shrink the range of environments where the paradox holds.

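- Uninternalized breach externalities expand the set of environments in which deployment is socially constrained. Once external breach losses are counted, the socially optimal level of deployment falls below the level the firm chooses privately.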
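These comparative statics can be traced in a closed-form toy model. The sketch is an assumption-laden illustration rather than the paper's derivation:

- Security is held fixed.
- The optimum is the interior solution d* = c - L*kappa*c**2 of the hypothetical objective c*d - d**2/2 - L*kappa*c**2*d.
- Governance g scales breach loss to L/(1 + g).
- An external breach cost X is counted by a social planner but not by the firm.

All function names are hypothetical.

```python
def risk_adjusted_deployment(c, loss, kappa=1.0):
    """Toy optimum d* = c - loss*kappa*c**2, floored at zero (no-risk benchmark is d = c)."""
    return max(0.0, c - loss * kappa * c ** 2)


def deployment_shortfall(c, loss, kappa=1.0):
    """Gap between the no-risk benchmark d = c and the risk-adjusted optimum."""
    return c - risk_adjusted_deployment(c, loss, kappa)


def paradox_region_length(loss, kappa=1.0, c_max=2.0):
    """Length of the capability range in [0, c_max] where d* does not rise with c.

    dd*/dc = 1 - 2*loss*kappa*c is negative once c > 1/(2*loss*kappa); past
    c = 1/(loss*kappa) the optimum sits at zero, so deployment stays flat.
    """
    if loss * kappa <= 0.0:
        return 0.0
    threshold = 1.0 / (2.0 * loss * kappa)
    return max(0.0, c_max - threshold)


L, g, X = 0.5, 1.0, 0.5  # hypothetical breach loss, governance level, external cost
# Better AI, less deployment: compare capability 1.0 with 1.5.
print(risk_adjusted_deployment(1.0, L), risk_adjusted_deployment(1.5, L))
# The shortfall widens with breach loss.
print(deployment_shortfall(1.0, 0.25) < deployment_shortfall(1.0, L))
# Governance that lowers breach loss shrinks the paradox region.
print(paradox_region_length(L / (1.0 + g)), paradox_region_length(L))
# Counting the externality expands the region and lowers the social optimum.
print(paradox_region_length(L + X), risk_adjusted_deployment(1.0, L + X))
```

The planner's problem reuses the same function with loss L + X. This mirrors the idea that an externality is a breach cost the firm does not bear.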

Where Pith is reading between the lines

- Firms may deliberately select less advanced AI for high-stakes workflows to limit exposure.

- Progress on technologies that deliver capability with narrower authority delegation could raise deployment even without better overall governance.

- Empirical tests could compare AI rollout rates across sectors that differ in governance maturity while holding loss potential constant.

Load-bearing premise

More capable AI systems require broader authority exposure to deliver productivity gains, and governance controls have not yet decoupled capability from this exposure.

What would settle it

Evidence that deployment rises or stays flat as AI capability improves, even in high-loss environments with weak governance, would contradict the predicted paradox.

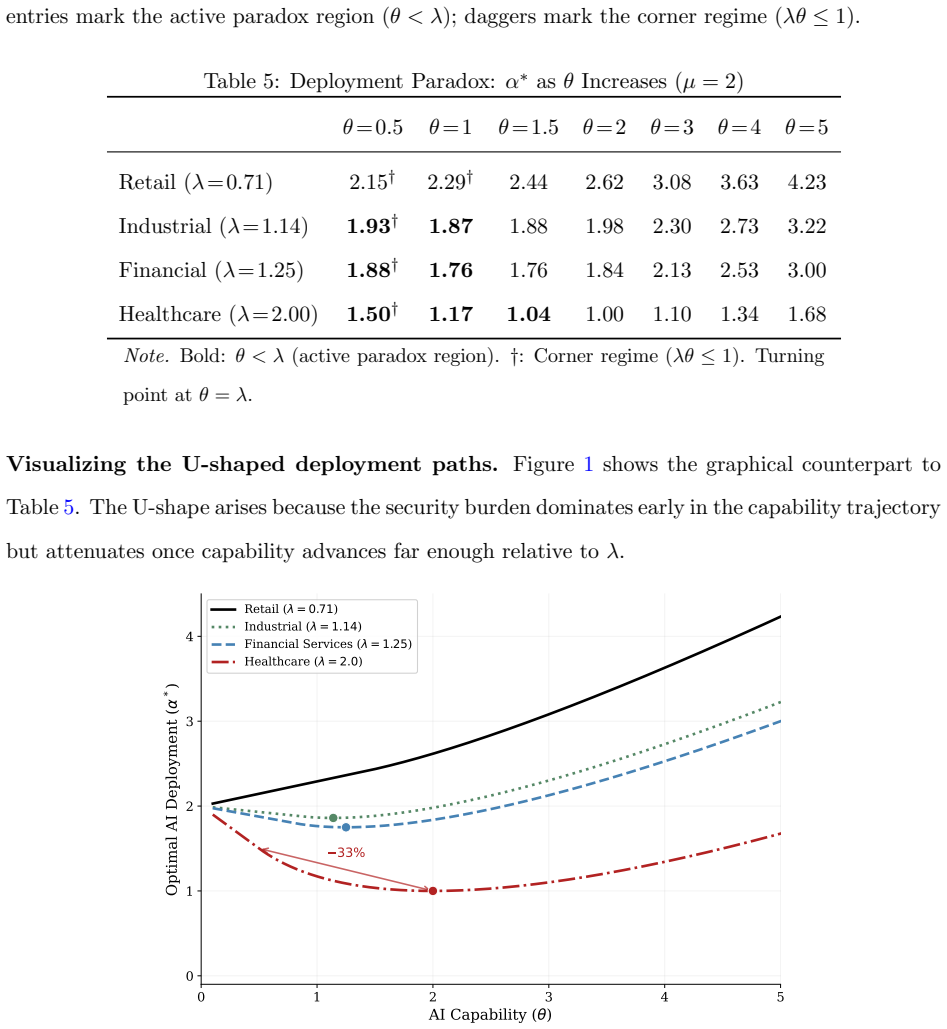

Figures

Original abstract

Firms are deploying more capable AI systems, but organizational controls often have not kept pace. These systems can generate greater productivity gains, but high-value uses require broader authority exposure (data access, workflow integration, and delegated authority) when governance controls have not yet decoupled capability from authority exposure. We develop an analytical model in which a firm jointly chooses AI deployment and cybersecurity investment under this governance-capability gap. The central result shows a deployment paradox: in high-loss environments, better AI can lead a firm to deploy less when capability is deployed through broader authority exposure under weak governance. Optimal deployment also falls below the no-risk benchmark, and this shortfall widens with breach-loss magnitude and with the authority exposure attached to more capable systems. Governance investment that reduces breach-loss magnitude shrinks the paradox region itself, while breach externalities expand the range of environments in which deployment is socially constrained. Governance maturity is therefore not merely a constraint on AI adoption. It is a condition that shapes whether capability improvements translate into productive deployment.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper develops an analytical model in which a firm jointly optimizes AI deployment and cybersecurity investment. It assumes that more capable AI systems require broader authority exposure (data access, workflow integration, delegated authority) while governance remains weak. The central claim is the existence of a 'deployment paradox': in high-loss environments, higher AI capability can lead to lower optimal deployment, because the marginal increase in cyber risk from greater authority exposure outweighs the productivity gains. Optimal deployment is shown to lie below the no-risk benchmark, with the shortfall increasing in breach-loss magnitude and authority exposure. Governance investment reduces the size of the paradox region, while breach externalities expand the set of environments in which deployment is socially constrained.

Significance. If the analytical result is robust to the choice of functional forms, the paper provides a useful theoretical lens on why firms may under-deploy capable AI systems in high-stakes settings because of cyber risk. It emphasizes that governance is not just a barrier but a determinant of whether capability translates into deployment. This could inform both firm-level strategy and regulatory approaches to AI security. The model is analytical rather than empirical: it offers clear comparative statics, but no machine-checked proofs or extensive robustness checks are mentioned.

major comments (2)

- [Model Setup and Optimization] The deployment paradox (optimal d* decreasing in capability c for high L) is derived from the joint optimization. However, the sign of dd*/dc depends on whether the authority exposure e(c) rises sufficiently fast relative to the productivity function p(c, e). The manuscript should explicitly state the functional forms for p(·) and the breach probability in the relevant section (likely §3 or §4) and show the condition under which the paradox holds, as linear e(c) with concave p may eliminate the paradox region.

- [Comparative Statics] The claim that the shortfall below the no-risk benchmark widens with breach-loss magnitude L and authority exposure is load-bearing for the policy implication. A sensitivity analysis or explicit derivatives should be provided to confirm this holds beyond the specific parameterization chosen.
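The first comment's point about the sign of dd*/dc can be made concrete with a stylized one-variable objective. This derivation is a hypothetical illustration, not the paper's model. It assumes profit p(c) d - d^2/2 - L e(c) d with security held fixed, so the resulting condition applies only under that assumption.

```latex
% Hypothetical toy objective, security held fixed
\Pi(d) = p(c)\,d - \tfrac{1}{2}d^{2} - L\,e(c)\,d
\;\Longrightarrow\;
d^{*}(c) = \max\{0,\; p(c) - L\,e(c)\},
\qquad
\frac{\partial d^{*}}{\partial c} = p'(c) - L\,e'(c) \quad \text{(interior)}

% Paradox condition: deployment falls with capability iff
L\,e'(c) > p'(c)

% Case p(c) = c, linear exposure e(c) = \kappa c
\frac{\partial d^{*}}{\partial c} = 1 - L\kappa \quad \text{(constant in } c\text{)}

% Case p(c) = c, convex exposure e(c) = \kappa c^{2}
\frac{\partial d^{*}}{\partial c} = 1 - 2L\kappa c < 0 \iff c > \frac{1}{2L\kappa},
\qquad
d^{*} > 0 \iff c < \frac{1}{L\kappa}
```

With linear exposure, deployment rises everywhere if Lκ < 1 and is zero everywhere if Lκ ≥ 1, so there is no interval on which it falls. With convex exposure, the paradox occupies the interval (1/(2Lκ), 1/(Lκ)), which moves toward lower capability as L grows. This is the kind of explicit condition on how fast e(c) rises relative to productivity that the comment asks the authors to state.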

minor comments (2)

- [Abstract] The abstract summarizes the result but does not include any key equations or the threshold condition for the high-loss regime, which would help readers assess the scope immediately.

- [Notation] Ensure consistent use of symbols for capability c, deployment d, exposure e, loss L throughout the paper to avoid confusion in the optimization problem.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments, which help clarify the conditions under which our analytical results hold and strengthen the robustness of the comparative statics. We address each major comment below and will incorporate the suggested revisions in the next version of the manuscript.


- Referee: [Model Setup and Optimization] The deployment paradox (optimal d* decreasing in capability c for high L) is derived from the joint optimization. However, the sign of dd*/dc depends on whether the authority exposure e(c) rises sufficiently fast relative to the productivity function p(c, e). The manuscript should explicitly state the functional forms for p(·) and the breach probability in the relevant section (likely §3 or §4) and show the condition under which the paradox holds, as linear e(c) with concave p may eliminate the paradox region.

Authors: We agree that the direction of dd*/dc is sensitive to the relative curvature and growth rates of e(c) and p(c, e), and that explicit functional forms are needed for full transparency. In the current draft we employ p(c, e) = c · (1 − αe) with α > 0 and a breach probability of the form π(e, g) = βe / (1 + γg) where g denotes governance investment; these are stated in §3 but without a general derivation of the paradox condition. We will revise §3 to state the forms explicitly, derive the inequality on de/dc relative to ∂p/∂e that is required for dd*/dc < 0 when L is large, and show that our parameterization satisfies the condition while noting the boundary case of linear e(c) with sufficiently concave p where the paradox region shrinks or disappears. revision: yes

- Referee: [Comparative Statics] The claim that the shortfall below the no-risk benchmark widens with breach-loss magnitude L and authority exposure is load-bearing for the policy implication. A sensitivity analysis or explicit derivatives should be provided to confirm this holds beyond the specific parameterization chosen.

Authors: We acknowledge that the widening of the shortfall (d_no-risk − d*) with L and with the authority-exposure schedule is central to the policy interpretation. We will add an appendix subsection that reports the explicit partial derivatives ∂(d_no-risk − d*)/∂L and ∂(d_no-risk − d*)/∂e and confirms their signs under the maintained assumptions. We will also include a sensitivity table that varies L, the authority-exposure coefficient, and the governance parameter over plausible ranges, demonstrating that the qualitative result is preserved outside the baseline parameterization. revision: yes
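A sensitivity sweep of the kind described in this response can be sketched in a few lines. Everything here is a hypothetical stand-in for the paper's model:

- the closed-form optimum d* = max(0, c - (L/(1 + g))*kappa*c**2);
- the grid values;
- the function names.

The sweep computes the shortfall d_no-risk - d*, taking d_no-risk = c. It then estimates the shortfall's derivatives in L and kappa by forward differences and counts any grid point where either estimate is negative.

```python
import itertools


def optimal_deployment(c, L, kappa, g):
    """Hypothetical closed-form optimum; governance g scales breach loss to L / (1 + g)."""
    loss = L / (1.0 + g)
    return max(0.0, c - loss * kappa * c ** 2)


def shortfall(c, L, kappa, g):
    """Gap d_no-risk - d*, where the no-risk benchmark in this toy model is d = c."""
    return c - optimal_deployment(c, L, kappa, g)


def sensitivity_sweep(cs, Ls, kappas, gs, h=1e-4):
    """Forward-difference slopes of the shortfall in L and kappa over a parameter grid."""
    rows = []
    for c, L, kappa, g in itertools.product(cs, Ls, kappas, gs):
        base = shortfall(c, L, kappa, g)
        slope_L = (shortfall(c, L + h, kappa, g) - base) / h
        slope_kappa = (shortfall(c, L, kappa + h, g) - base) / h
        rows.append((c, L, kappa, g, base, slope_L, slope_kappa))
    return rows


# Illustrative grid values only; they are not calibrated to any data.
rows = sensitivity_sweep(cs=[0.5, 1.0, 1.5], Ls=[0.1, 0.5, 1.0], kappas=[0.5, 1.0], gs=[0.0, 1.0])
violations = [r for r in rows if r[5] < -1e-9 or r[6] < -1e-9]
print(f"{len(rows)} parameter points, {len(violations)} with a negative slope")
```

The sweep covers only a handful of illustrative points. It checks the sign pattern of this toy model and says nothing about the paper's own parameterization. Where the optimum sits at zero, the slopes are zero rather than positive. This is why the check looks for negative slopes instead of requiring strictly positive ones.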

Circularity Check

Analytical model derives deployment paradox from joint optimization without circular reduction to inputs


The paper constructs an analytical model in which a firm jointly optimizes AI deployment and cybersecurity investment under the governance-capability gap assumption. The central deployment paradox result (optimal deployment falling with capability in high-loss regimes) is presented as emerging directly from this optimization, with no indication that any prediction reduces to a fitted parameter, self-referential definition, or load-bearing self-citation. The derivation remains self-contained within the stated functional relationships and assumptions; no equations or steps are shown to be equivalent to the inputs by construction.

Axiom & Free-Parameter Ledger

free parameters (2)

- breach-loss magnitude

- authority exposure level attached to capability

axioms (2)

- domain assumption: Firms jointly choose AI deployment and cybersecurity investment to maximize expected profit.

- domain assumption: Higher AI capability requires broader authority exposure to realize productivity gains.

