Recognition: unknown

Optimally Auditing Adversarial Agents

Pith reviewed 2026-05-07 14:28 UTC · model grok-4.3

The pith

A principal-agent game model allows efficient computation of optimal audit policies when multiple agents coordinate to minimize the principal's utility.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The paper establishes a general model of audit policy design as a principal-agent game with multiple agents, where the principal commits to an audit policy and agents collectively choose an equilibrium that minimizes the principal's utility. It provides efficient algorithms for computing optimal audit policies in both adaptive settings, where the policy can respond to the observed distribution of reports, and non-adaptive settings. These results are extended to the case of limited audit budgets.

What carries the argument

The principal-agent game in which agents jointly minimize the principal's utility, solved by algorithms that optimize the principal's committed audit policy in adaptive and non-adaptive regimes.

If this is right

- Optimal audit policies can be computed efficiently for any number of agents in both responsive and fixed audit regimes.

- The same algorithmic framework applies when the principal has a fixed budget of audits, producing policies that respect the resource limit.

- In domains with misreporting incentives, the computed policies directly reduce the principal's expected loss from coordinated agent behavior.

- The distinction between adaptive and non-adaptive policies quantifies the value of responsiveness in audit design.

Where Pith is reading between the lines

- The approach could be extended to sequential auditing where reports arrive over time and the principal learns incrementally.

- Heterogeneous agent types or private information about audit costs would require only modest changes to the current optimization structure.

- The worst-case equilibrium assumption suggests the policies remain robust even if agents communicate or collude outside the model.

- Implementation in large-scale systems would benefit from approximation versions of the algorithms when exact computation becomes intractable.

Load-bearing premise

Agents are assumed to coordinate on the single equilibrium that is worst for the principal rather than acting in isolation or selecting other equilibria.

What would settle it

A small instance in which the algorithm returns a policy but an exhaustive search over all possible audit policies finds one that yields strictly higher principal utility against the agents' minimizing equilibrium.

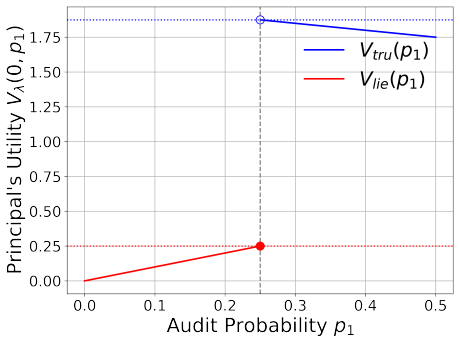

Figures

read the original abstract

Fraud can pose a challenge in many resource allocation domains, including social service delivery and credit provision. For example, agents may misreport private information in order to gain benefits or access to credit. To mitigate this, a principal can design strategic audits to verify claims and penalize misreporting. In this paper, we introduce a general model of audit policy design as a principal-agent game with multiple agents, where the principal commits to an audit policy, and agents collectively choose an equilibrium that minimizes the principal's utility. We examine both adaptive and non-adaptive settings, depending on whether the principal's policy can be responsive to the distribution of agent reports. Our work provides efficient algorithms for computing optimal audit policies in both settings and extends these results to a setting with limited audit budgets.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper models audit policy design as a principal-agent game with multiple agents, where the principal commits to an audit policy (adaptive or non-adaptive) and agents collectively select an equilibrium minimizing the principal's utility. It claims to provide efficient algorithms for computing optimal audit policies in both settings and extends the results to limited audit budgets.

Significance. If the central claims hold, the work supplies a game-theoretic framework for robust audit design against coordinated adversarial agents, with computational tractability for adaptive/non-adaptive cases and budget constraints. This could inform fraud mitigation in domains like social services and credit allocation by yielding policies with guaranteed performance against worst-case equilibria.

major comments (2)

- [Abstract and model definition (§2)] The assumption that agents 'collectively choose an equilibrium that minimizes the principal's utility' (abstract) is load-bearing for the optimality and efficiency claims. In standard non-cooperative game theory, multiple equilibria may exist without a coordination mechanism, so the principal cannot guarantee the min-utility value; the algorithms compute best responses only against this selected value. The model section should justify this selection (e.g., via collusion, correlated equilibria, or explicit tie-breaking) or show it emerges from the game structure, as otherwise the computed policies are not guaranteed optimal or safe under independent play.

- [Abstract; algorithmic sections (§3–5)] The abstract asserts 'efficient algorithms' for adaptive and non-adaptive settings plus the budget extension, yet supplies no complexity bounds, proof sketches, or validation examples. If the full derivations (likely in §§3–5) lack formal runtime analysis, correctness proofs, or small-instance checks, the central algorithmic claims cannot be assessed and the efficiency guarantee remains unsupported.

minor comments (2)

- [Introduction] Clarify the distinction between adaptive and non-adaptive policies with a short example early in the paper to aid readability.

- [Abstract] The abstract could include one sentence on the key technical approach (e.g., LP formulation or dynamic programming) to better convey the contribution.

Simulated Author's Rebuttal

We thank the referee for their constructive comments on our paper. We address each of the major comments below and outline the revisions we will make to the manuscript.

read point-by-point responses

-

Referee: [Abstract and model definition (§2)] The assumption that agents 'collectively choose an equilibrium that minimizes the principal's utility' (abstract) is load-bearing for the optimality and efficiency claims. In standard non-cooperative game theory, multiple equilibria may exist without a coordination mechanism, so the principal cannot guarantee the min-utility value; the algorithms compute best responses only against this selected value. The model section should justify this selection (e.g., via collusion, correlated equilibria, or explicit tie-breaking) or show it emerges from the game structure, as otherwise the computed policies are not guaranteed optimal or safe under independent play.

Authors: This is a valid concern regarding the interpretation of our model. The assumption reflects the principal's goal of obtaining guarantees against adversarial agents who may coordinate to minimize the principal's utility, which aligns with the fraud mitigation context. In the revised version, we will add a detailed justification in §2, explaining that this corresponds to the worst-case equilibrium selection and relates to correlated equilibria or collusion among agents. We will also note that under independent play, the principal's utility would be at least as high as this value, thus the policy remains safe. This clarification will ensure the optimality claims are properly contextualized. revision: yes

-

Referee: [Abstract; algorithmic sections (§3–5)] The abstract asserts 'efficient algorithms' for adaptive and non-adaptive settings plus the budget extension, yet supplies no complexity bounds, proof sketches, or validation examples. If the full derivations (likely in §§3–5) lack formal runtime analysis, correctness proofs, or small-instance checks, the central algorithmic claims cannot be assessed and the efficiency guarantee remains unsupported.

Authors: We agree that the efficiency claims would be strengthened by explicit analysis. The algorithms in §§3–5 are designed to be efficient (polynomial-time solvable via linear programming or dynamic programming techniques, as derived), but we will enhance the presentation by adding formal runtime complexity bounds, brief proof sketches for correctness, and small-scale validation examples in the revised manuscript. These additions will be incorporated into the algorithmic sections and possibly an appendix for the examples. revision: yes

Circularity Check

No circularity in derivation; model and algorithms are self-contained.

full rationale

The paper defines a principal-agent game in which the principal commits to an audit policy and agents select a minimizing equilibrium, then derives efficient algorithms for optimal policies (adaptive, non-adaptive, and budgeted cases) directly from this model. No equations or steps reduce by construction to fitted inputs, self-definitions, or self-citations; the algorithms compute best responses within the explicitly stated game. The central results therefore remain independent of the inputs rather than tautological.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Agents collectively choose an equilibrium that minimizes the principal's utility.

Reference graph

Works this paper leans on

-

[1]

Audit selection and income tax underreporting in the tax compliance game.Journal of development Economics, 42(1):1–33, 1993

James Alm, Roy Bahl, and Matthew N Murray. Audit selection and income tax underreporting in the tax compliance game.Journal of development Economics, 42(1):1–33, 1993

1993

-

[2]

Auditing and game theory: A survey

Fouad Ben abdelaziz, Souhir Neifar, and Marc de Bourmont. Auditing and game theory: A survey. InMultiple Criteria Decision Making in Finance, Insurance and Investment, pages 249–272. Springer, 2015

2015

-

[3]

Optimal allocation with costly verification

Elchanan Ben-Porath, Eddie Dekel, and Barton L Lipman. Optimal allocation with costly verification. American Economic Review, 104(12):3779–3813, 2014

2014

-

[4]

Procaccia, and Arunesh Sinha

Jeremiah Blocki, Nicolas Christin, Anupam Datta, Ariel D. Procaccia, and Arunesh Sinha. Audit games. In Francesca Rossi, editor,IJCAI 2013, Proceedings of the 23rd International Joint Conference on Artificial Intelligence, Beijing, China, August 3-9, 2013, pages 41–47. IJCAI/AAAI, 2013. URL http://www.aaai.org/ocs/index.php/IJCAI/IJCAI13/paper/view/6951

2013

-

[5]

Jeremiah Blocki, Nicolas Christin, Anupam Datta, Ariel D. Procaccia, and Arunesh Sinha. Audit games with multiple defender resources. In Blai Bonet and Sven Koenig, editors,Proceedings of the Twenty- Ninth AAAI Conference on Artificial Intelligence, January 25-30, 2015, Austin, Texas, USA, pages 791–797. AAAI Press, 2015. doi: 10.1609/AAAI.V29I1.9317. URL...

-

[6]

Learning strategy-aware linear classifiers

Yiling Chen, Yang Liu, and Chara Podimata. Learning strategy-aware linear classifiers. In Hugo Larochelle, Marc’Aurelio Ranzato, Raia Hadsell, Maria-Florina Balcan, and Hsuan-Tien Lin, editors, 17 Advances in Neural Information Processing Systems 33: Annual Conference on Neural Information Pro- cessing Systems 2020, NeurIPS 2020, December 6-12, 2020, virt...

2020

-

[7]

Financial statement audits, a game of chicken? Journal of Business Ethics, 41(1):1–11, 2002

Charles J Coates, Robert E Florence, and Kristi L Kral. Financial statement audits, a game of chicken? Journal of Business Ethics, 41(1):1–11, 2002

2002

-

[8]

Pricing network edges for heterogeneous selfish users

Richard Cole, Yevgeniy Dodis, and Tim Roughgarden. Pricing network edges for heterogeneous selfish users. InProceedings of the Thirty-Fifth Annual ACM Symposium on Theory of Computing, STOC ’03, page 521–530, New York, NY, USA, 2003. Association for Computing Machinery. ISBN 1581136749. doi: 10.1145/780542.780618. URLhttps://doi.org/10.1145/780542.780618

-

[9]

Pessimistic leader-follower equilibria with multiple followers

Stefano Coniglio, Nicola Gatti, and Alberto Marchesi. Pessimistic leader-follower equilibria with multiple followers. In Carles Sierra, editor,Proceedings of the Twenty-Sixth International Joint Conference on Artificial Intelligence, IJCAI 2017, Melbourne, Australia, August 19-25, 2017, pages 171–177. ijcai.org,

2017

-

[10]

URLhttps://doi.org/10.24963/ijcai.2017/25

doi: 10.24963/IJCAI.2017/25. URLhttps://doi.org/10.24963/ijcai.2017/25

-

[11]

Computing the optimal strategy to commit to

Vincent Conitzer and Tuomas Sandholm. Computing the optimal strategy to commit to. InProceedings of the 7th ACM Conference on Electronic Commerce, EC ’06, page 82–90, New York, NY, USA, 2006. Association for Computing Machinery. ISBN 1595932364. doi: 10.1145/1134707.1134717. URLhttps: //doi.org/10.1145/1134707.1134717

-

[12]

Strategic classification from revealed preferences

Jinshuo Dong, Aaron Roth, Zachary Schutzman, Bo Waggoner, and Zhiwei Steven Wu. Strategic classification from revealed preferences. In ´Eva Tardos, Edith Elkind, and Rakesh Vohra, editors, Proceedings of the 2018 ACM Conference on Economics and Computation, Ithaca, NY, USA, June 18- 22, 2018, pages 55–70. ACM, 2018. doi: 10.1145/3219166.3219193. URLhttps:...

-

[13]

Incentivizing truthfulness through audits in strategic classification

Andrew Estornell, Sanmay Das, and Yevgeniy Vorobeychik. Incentivizing truthfulness through audits in strategic classification. InThirty-Fifth AAAI Conference on Artificial Intelligence, AAAI 2021, Thirty-Third Conference on Innovative Applications of Artificial Intelligence, IAAI 2021, The Eleventh Symposium on Educational Advances in Artificial Intellige...

-

[14]

Incentivizing recourse through auditing in strategic classification

Andrew Estornell, Yatong Chen, and Sanmay Das. Incentivizing recourse through auditing in strategic classification. InProceedings of the 32nd International Joint Conference on Artificial Intelligence (IJ- CAI), pages 400–408, 8 2023. doi: 10.24963/ijcai.2023/45. URLhttps://doi.org/10.24963/ijcai. 2023/45

-

[15]

Virginia Eubanks.Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor. St. Martin’s Press, New York, NY, 2018. ISBN 1250074312

2018

-

[16]

Catch me if you can: Combatting fraud in artificial currency-based government benefits programs, 2024

Devansh Jalota, Matthew Tsao, and Marco Pavone. Catch me if you can: Combatting fraud in artificial currency-based government benefits programs, 2024

2024

-

[17]

Cambridge University Press, 2020

Tor Lattimore and Csaba Szepesv´ ari.Bandit algorithms. Cambridge University Press, 2020

2020

-

[18]

Allocation for social good: Auditing mechanisms for utility maximization

Taylor Lundy, Alexander Wei, Hu Fu, Scott Duke Kominers, and Kevin Leyton-Brown. Allocation for social good: Auditing mechanisms for utility maximization. InProceedings of the 2019 ACM Conference on Economics and Computation, EC ’19, page 785–803, New York, NY, USA, 2019. Association for Computing Machinery. ISBN 9781450367929. doi: 10.1145/3328526.332962...

-

[19]

Optimal auditing, insurance, and redistribution.The Quarterly Journal of Economics, 104(2):399–415, 1989

Dilip Mookherjee and Ivan Png. Optimal auditing, insurance, and redistribution.The Quarterly Journal of Economics, 104(2):399–415, 1989

1989

- [20]

-

[21]

Deployed armor protection: the application of a game theoretic model for security at the los angeles international airport

James Pita, Manish Jain, Janusz Marecki, Fernando Ord´ o˜ nez, Christopher Portway, Milind Tambe, Craig Western, Praveen Paruchuri, and Sarit Kraus. Deployed armor protection: the application of a game theoretic model for security at the los angeles international airport. InProceedings of the 7th International Joint Conference on Autonomous Agents and Mul...

2008

-

[22]

Tim Roughgarden and ´Eva Tardos. How bad is selfish routing?J. ACM, 49(2):236–259, March 2002. ISSN 0004-5411. doi: 10.1145/506147.506153. URLhttps://doi.org/10.1145/506147.506153

-

[23]

Cambridge University Press, 2012

Milind Tambe.Security and Game Theory - Algorithms, Deployed Systems, Lessons Learned. Cambridge University Press, 2012. ISBN 978-1-10-709642-4. URLhttp://www.cambridge.org/ de/academic/subjects/computer-science/communications-information-theory-and-security/ security-and-game-theory-algorithms-deployed-systems-lessons-learned?format=AR

2012

-

[24]

Optimal contracts and competitive markets with costly state verification.Journal of Economic theory, 21(2):265–293, 1979

Robert M Townsend. Optimal contracts and competitive markets with costly state verification.Journal of Economic theory, 21(2):265–293, 1979. 19 (a) Principal utility. (b) Social welfare. (c) Misreporting mass. (d) Audit rate. (e) U-opt Audit probabilities. (f) U-opt Distortion. (g) W-opt Audit probabilities. (h) W-opt Distortion. Figure 5: Effect of resol...

1979

-

[25]

BecauseU ι = ˆu >ˆu−ϵ 2,i truth(p′) =ιand p′ is strict asϵis small enough

for an arbitraryκ∈Aand 0< ϵ < γ 2 . BecauseU ι = ˆu >ˆu−ϵ 2,i truth(p′) =ιand p′ is strict asϵis small enough. Moreover, ˆA(p′) ={κ}and ˆu(p ′) = ˆu− ϵ 2, soEqi(p ′)⊆Eqi(p). Now we upper bound Eq. (21). For allk < ι,D k = 0 since no one misreports or reports ask. Second, ifk̸=κandi≥ι,D k =q kλ(p′ k −p k) =q kλ(ρk(ˆu−ϵ)−ρ k( ˆUk(p))≤q kϵ. Forκ, becausep ′ ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.