Circular Phase Representation and Geometry-Aware Optimization for Ptychographic Image Reconstruction

Pith reviewed 2026-05-07 11:16 UTC · model grok-4.3

The pith

Representing phase on the unit circle with a geodesic loss reduces wrapping artifacts in ptychographic reconstruction.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The framework models phase on the unit circle through cosine and sine components and optimizes it with a differentiable geodesic loss that measures angular error, alongside a composite loss for structural fidelity. The claimed result is more accurate amplitude and phase reconstruction, with better-preserved mid- and high-frequency content than existing deep learning approaches and lower computational cost than iterative solvers.

What carries the argument

The circular phase representation, which encodes phase as cosine and sine values on the unit circle, paired with the geodesic loss for bounded, discontinuity-free gradient computation.
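As a rough illustration of the representation described above, the following NumPy sketch encodes phase as a (cos, sin) pair and measures angular error along the circle. The function names (`encode_phase`, `decode_phase`, `geodesic_error`) and the atan2-based distance are assumptions chosen for illustration; this summary does not give the paper's exact formulation.

```python
import numpy as np

def encode_phase(phi):
    """Map phase angles (radians) to (cos, sin) points on the unit circle."""
    return np.cos(phi), np.sin(phi)

def decode_phase(c, s):
    """Recover the principal-value phase in (-pi, pi] from (cos, sin)."""
    return np.arctan2(s, c)

def geodesic_error(c_pred, s_pred, c_true, s_true):
    """Shortest arc length (radians) between predicted and true directions."""
    dot = c_pred * c_true + s_pred * s_true     # proportional to cos(difference)
    cross = c_true * s_pred - s_true * c_pred   # proportional to sin(difference)
    return np.abs(np.arctan2(cross, dot))

# Two phases that sit on opposite sides of the branch cut at +/- pi.
phi_true = np.array([np.pi - 0.01])
phi_pred = np.array([-np.pi + 0.01])

euclid = np.abs(phi_pred - phi_true)  # scalar difference, close to 2*pi
geo = geodesic_error(*encode_phase(phi_pred), *encode_phase(phi_true))  # about 0.02
```

In this example the two angles are physically almost identical, yet the scalar difference is close to $2\pi$ while the geodesic error is about 0.02 rad. That gap is the mismatch between loss and signal geometry that the paper targets.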

If this is right

- Improved amplitude and phase reconstruction quality over prior deep learning methods on synthetic and experimental datasets.

- Better preservation of mid- and high-frequency phase content as shown by frequency-domain analysis.

- Substantial speedup relative to iterative reconstruction methods while ensuring physical consistency.

- Reduced wrapping artifacts and discontinuities at phase boundaries through geometry-aware optimization.

Where Pith is reading between the lines

- The circular approach may extend to other phase-sensitive imaging techniques involving periodic signals.

- Improved high-frequency preservation could support more detailed structural analysis in materials science applications.

- Efficiency gains suggest feasibility for real-time ptychographic reconstruction in experimental settings.

Load-bearing premise

The circular phase representation combined with the geodesic and composite losses will consistently prevent wrapping artifacts and yield physically valid results on a wide range of experimental ptychography datasets without needing extra post-processing steps or dataset-specific tuning.

What would settle it

Observing phase discontinuities or inferior structural similarity metrics in reconstructions from a challenging experimental dataset containing strong phase variations, when compared against ground-truth iterative reconstructions or Euclidean-phase deep learning baselines.
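One hedged way to make "observing phase discontinuities" measurable is to compare wrapped neighbor differences of a reconstruction against those of a reference. The sketch below is an assumption for illustration, not a metric taken from the paper; `wrap_to_pi` and `discontinuity_score` are hypothetical names. The score ignores a global phase offset and legitimate wraps, and grows when the reconstruction contains steps that the reference lacks.

```python
import numpy as np

def wrap_to_pi(x):
    """Wrap angles to the interval [-pi, pi)."""
    return (x + np.pi) % (2 * np.pi) - np.pi

def discontinuity_score(recon, reference):
    """Mean absolute mismatch between wrapped neighbor differences of two 2D phase maps."""
    score = 0.0
    for axis in (0, 1):
        g_recon = wrap_to_pi(np.diff(recon, axis=axis))
        g_ref = wrap_to_pi(np.diff(reference, axis=axis))
        score += np.abs(wrap_to_pi(g_recon - g_ref)).mean()
    return score / 2

# Synthetic check: a phase ramp that wraps several times across the image.
yy, xx = np.mgrid[0:32, 0:32]
reference = wrap_to_pi(0.3 * xx)
shifted = wrap_to_pi(0.3 * xx + 0.5)                 # global offset only
broken = reference.copy()
broken[:, 16:] = wrap_to_pi(broken[:, 16:] + 1.0)    # spurious 1 rad step

score_shifted = discontinuity_score(shifted, reference)  # near zero
score_broken = discontinuity_score(broken, reference)    # clearly positive
```

A structural similarity comparison would still be needed alongside a score like this, since it only looks at local phase jumps.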

Figures

read the original abstract

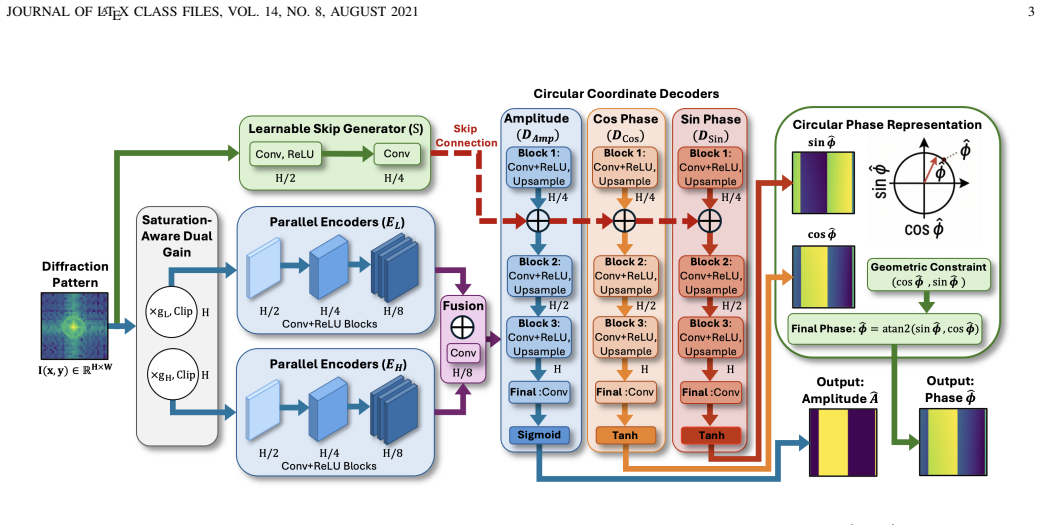

Traditional iterative reconstruction methods are accurate but computationally expensive, limiting their use in high-throughput and real-time ptychography. Recent deep learning approaches improve speed, but often predict phase as a Euclidean scalar despite its $2\pi$ periodicity, which can introduce wrapping artifacts, discontinuities at $\pm\pi$, and a mismatch between the loss and the underlying signal geometry. We present a deep learning framework for ptychographic reconstruction that models phase on the unit circle using cosine and sine components. Phase error is optimized with a differentiable geodesic loss, which avoids branch-cut discontinuities and provides bounded gradients. The network further incorporates saturation-aware dual-gain input scaling, parallel encoder branches, and three decoders for amplitude, cosine, and sine prediction, together with a composite loss that promotes circular consistency and structural fidelity. Experiments on synthetic and experimental datasets show consistent improvements in both amplitude and phase reconstruction over existing deep learning methods. Frequency-domain analysis further shows better preservation of mid- and high-frequency phase content. The proposed method also provides substantial speedup over iterative solvers while maintaining physically consistent reconstructions.
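The abstract describes a composite loss over three decoder outputs (amplitude, cosine, sine) but this summary does not give its form. The following NumPy sketch shows one plausible shape, under stated assumptions: a squared angular error term, a penalty that pulls the (cos, sin) outputs toward the unit circle, and an L1 amplitude term. The function name `composite_loss` and the weights `w_geo`, `w_circ`, `w_amp` are hypothetical, and the structural-fidelity term mentioned in the abstract is omitted here.

```python
import numpy as np

def composite_loss(amp_pred, cos_pred, sin_pred, amp_true, phi_true,
                   w_geo=1.0, w_circ=0.1, w_amp=1.0):
    """Illustrative weighted sum; the weights are hypothetical, not from the paper."""
    c_true, s_true = np.cos(phi_true), np.sin(phi_true)
    dot = cos_pred * c_true + sin_pred * s_true
    cross = c_true * sin_pred - s_true * cos_pred
    # Squared angular error; atan2 is scale-invariant, so this term alone
    # does not care whether the prediction lies exactly on the unit circle.
    geo = np.mean(np.arctan2(cross, dot) ** 2)
    # Circular consistency: penalize cos^2 + sin^2 drifting away from 1.
    circ = np.mean((cos_pred ** 2 + sin_pred ** 2 - 1.0) ** 2)
    # L1 amplitude error.
    amp = np.mean(np.abs(amp_pred - amp_true))
    return w_geo * geo + w_circ * circ + w_amp * amp
```

Splitting the phase objective this way separates direction (the angular term) from magnitude (the unit-circle term), which is why a prediction with the right angle but the wrong norm is penalized only by the second term.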

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a deep learning framework for ptychographic image reconstruction that represents phase on the unit circle via cosine and sine components rather than as a Euclidean scalar. It introduces a differentiable geodesic loss to handle 2π periodicity without wrapping artifacts or branch-cut discontinuities, combined with saturation-aware dual-gain input scaling, parallel encoder branches, and three separate decoders for amplitude, cosine, and sine outputs. A composite loss enforces circular consistency and structural fidelity. Experiments on synthetic and experimental datasets are reported to show consistent gains in amplitude and phase quality over prior deep learning methods, improved mid- and high-frequency phase preservation in the Fourier domain, and substantial computational speedup relative to iterative solvers while preserving physical consistency.

Significance. If the quantitative gains and frequency-domain improvements hold under the reported conditions, the work offers a meaningful advance for high-throughput ptychography by aligning the network geometry and loss with the intrinsic periodicity of phase. This could enable reliable real-time reconstruction in applications where phase accuracy is critical, such as materials characterization, while retaining the speed advantage of learned methods over traditional iterative solvers. The explicit handling of circular topology via geodesic loss and multi-decoder architecture is a targeted contribution that addresses a documented limitation in existing deep-learning ptychography pipelines.

Minor comments (3)

- [Abstract] The claims of 'consistent improvements' and 'better preservation of mid- and high-frequency phase content' would be stronger with one or two representative quantitative metrics (e.g., mean PSNR or SSIM on the test sets), so that readers can immediately gauge effect size.

- [Methods] The description of the composite loss would benefit from an explicit equation or pseudocode showing the relative weighting of the geodesic, amplitude, and structural terms, together with any hyper-parameter selection procedure.

- [Results] Figure captions and axis labels in the frequency-domain analysis should explicitly state the normalization used for the power spectra so that the claimed mid- and high-frequency gains can be directly compared across methods.
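To illustrate why the last comment matters, here is a minimal sketch of a radially averaged power spectrum with one explicit normalization (the profile is scaled to sum to 1). The function name `radial_power_spectrum`, the bin count, and the normalization choice are all assumptions for illustration; the paper's actual convention is not stated in this summary.

```python
import numpy as np

def radial_power_spectrum(img, n_bins=16):
    """Radially averaged power spectrum, normalized so the bins sum to 1.

    Frequencies are in cycles per pixel. Corner frequencies above 0.5
    are folded into the last bin. Assumes the image is not all zeros.
    """
    power = np.abs(np.fft.fftshift(np.fft.fft2(img))) ** 2
    h, w = img.shape
    fy = np.fft.fftshift(np.fft.fftfreq(h))
    fx = np.fft.fftshift(np.fft.fftfreq(w))
    radius = np.hypot(*np.meshgrid(fy, fx, indexing="ij"))
    edges = np.linspace(0.0, 0.5, n_bins + 1)
    idx = np.clip(np.digitize(radius.ravel(), edges) - 1, 0, n_bins - 1)
    total = np.bincount(idx, weights=power.ravel(), minlength=n_bins)
    counts = np.bincount(idx, minlength=n_bins)
    profile = total / np.maximum(counts, 1)   # mean power per radial bin
    return profile / profile.sum()            # explicit normalization

# Usage: a single horizontal frequency of 11/64 cycles per pixel.
x = np.arange(64)
img = np.tile(np.cos(2 * np.pi * 11 * x / 64), (64, 1))
profile = radial_power_spectrum(img)
```

Other conventions (dividing by the reference spectrum, or by total image energy before binning) would shift the curves, so stating the choice in captions is what makes cross-method comparison possible. For phase maps, one option is to pass the complex phasor `np.exp(1j * phase)` instead of the wrapped angle, so that wrap edges do not add spurious high-frequency power.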

Simulated Author's Rebuttal

We thank the referee for the positive assessment of our manuscript and for recommending minor revision. The recognition of the geodesic loss, circular phase representation, and computational advantages is appreciated. No specific major comments were provided in the report.

Circularity Check

No significant circularity identified

Full rationale

The paper proposes an architectural framework using cosine/sine phase encoding, geodesic loss, saturation-aware scaling, parallel encoders, and composite loss to handle 2π periodicity in ptychographic reconstruction. These elements are introduced as explicit design choices motivated by signal geometry rather than derived from or fitted to the target outputs. Reported improvements are empirical results on synthetic and experimental datasets with frequency-domain validation, independent of any self-definitional reduction, fitted-input prediction, or load-bearing self-citation chain. No equations or claims in the provided text reduce the central claims to quantities defined by the inputs by construction.

Axiom & Free-Parameter Ledger

Axioms (2)

- Domain assumption: phase is a $2\pi$-periodic quantity whose natural geometry is the circle.

- Domain assumption: a differentiable geodesic loss on the circle will avoid branch-cut discontinuities and provide bounded gradients during training.
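The second assumption can be checked with a short derivation. This is a sketch under the assumption that the angular error is computed with atan2 from the dot and cross products of the target direction $(\cos\theta, \sin\theta)$ and the predicted pair $(\hat{c}, \hat{s})$; the paper's exact loss is not given in this summary, and the symbols below are introduced only for illustration.

```latex
\begin{aligned}
x &= \hat{c}\cos\theta + \hat{s}\sin\theta, \qquad
y = \hat{s}\cos\theta - \hat{c}\sin\theta, \qquad
x^2 + y^2 = \hat{c}^2 + \hat{s}^2, \\
\Delta &= \operatorname{atan2}(y, x) \in (-\pi, \pi], \\
\frac{\partial \Delta}{\partial \hat{c}}
  &= \frac{x\,\partial_{\hat{c}} y - y\,\partial_{\hat{c}} x}{x^2 + y^2}
   = \frac{-x\sin\theta - y\cos\theta}{\hat{c}^2 + \hat{s}^2}
   = \frac{-\hat{s}}{\hat{c}^2 + \hat{s}^2}, \\
\frac{\partial \Delta}{\partial \hat{s}}
  &= \frac{x\,\partial_{\hat{s}} y - y\,\partial_{\hat{s}} x}{x^2 + y^2}
   = \frac{x\cos\theta - y\sin\theta}{\hat{c}^2 + \hat{s}^2}
   = \frac{\hat{c}}{\hat{c}^2 + \hat{s}^2}, \\
\left\lVert \nabla_{(\hat{c},\hat{s})} \Delta \right\rVert
  &= \frac{1}{\sqrt{\hat{c}^2 + \hat{s}^2}}, \qquad
\left\lVert \nabla_{(\hat{c},\hat{s})} \tfrac{1}{2}\Delta^2 \right\rVert
   = \frac{|\Delta|}{\sqrt{\hat{c}^2 + \hat{s}^2}}
   \le \frac{\pi}{\sqrt{\hat{c}^2 + \hat{s}^2}}.
\end{aligned}
```

Under these assumptions the gradient is bounded whenever the predicted pair stays away from the origin, and its norm is at most $\pi$ on the unit circle. Two caveats follow from the same algebra: $\Delta$ changes sign at the antipodal point ($x < 0$, $y = 0$), although $\Delta^2$ remains continuous there, and the bound degrades as $\hat{c}^2 + \hat{s}^2 \to 0$, which is one reason a circular-consistency term can be useful.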
