State Stream Transformer (SST) V2: Parallel Training of Nonlinear Recurrence for Latent Space Reasoning

Pith reviewed 2026-05-09 20:26 UTC · model grok-4.3

The pith

Nonlinear recurrence with state streaming in transformers delivers a roughly 15-point gain on the out-of-distribution GPQA-Diamond reasoning benchmark with only a small amount of additional training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

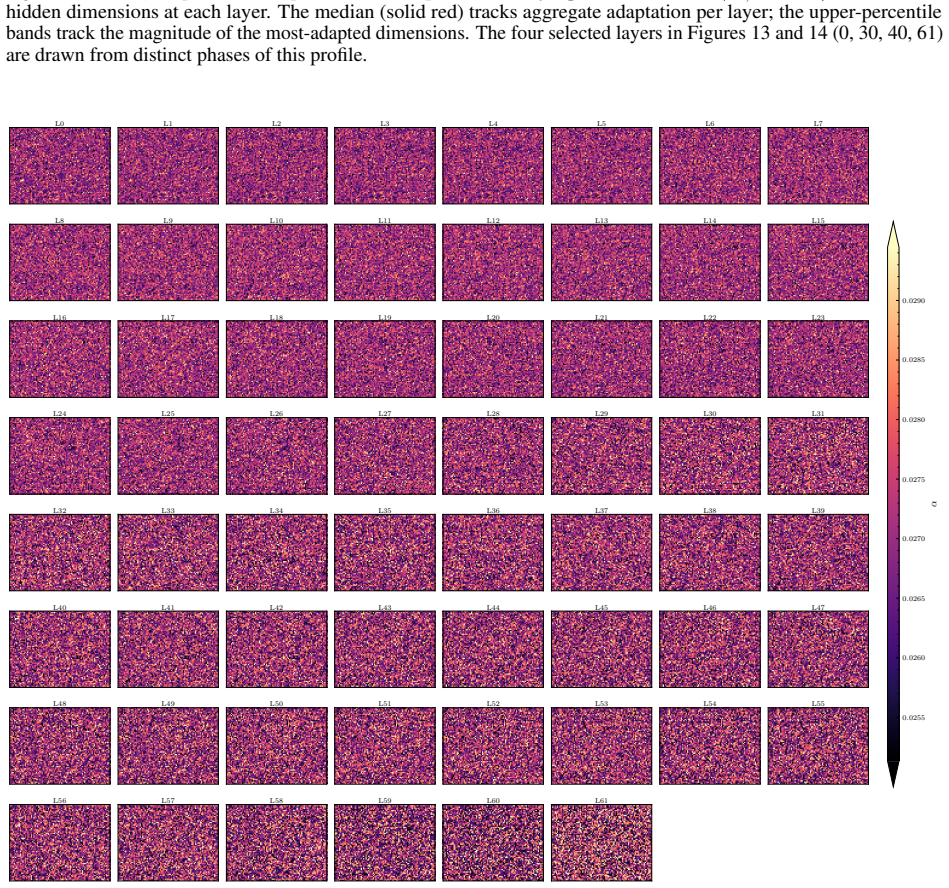

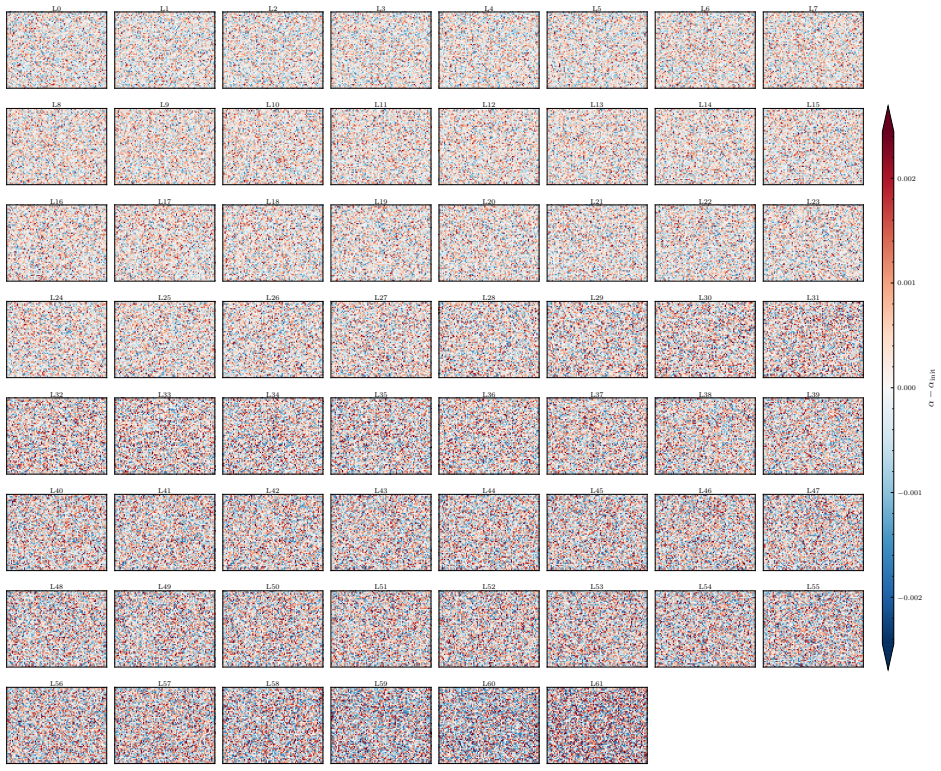

The SST V2 enables parameter-efficient reasoning in continuous latent space through an FFN-driven nonlinear recurrence at each decoder layer, where latent states are streamed horizontally across the full sequence via a learned blend. This same mechanism supports continuous latent deliberation per position at inference time, dedicating additional FLOPs to exploring abstract reasoning before committing to a token. A two-pass parallel training procedure resolves the sequential dependency of the recurrence to allow compute-efficient training. Hidden state analysis shows the state stream facilitates reasoning through exploration of distinct semantic basins in continuous latent space, where transitions at content-dependent positions move the model into a substantially different Bayesian posterior, directly influencing the latent space at future positions.

What carries the argument

FFN-driven nonlinear recurrence with horizontal state streaming via a learned blend at each decoder layer
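The source gives no equations for this mechanism, so the following is only a minimal sketch of one form it could take. Everything specific here is an assumption made for illustration and is not taken from the paper: the blend is a convex mix with a single scalar `alpha`, the FFN is a two-layer block with `tanh`, the initial state is zero, and `extra_steps` re-applies the recurrence at one position to mimic latent deliberation before a token is committed.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def ffn(x: Vector, w_in: Matrix, w_out: Matrix) -> Vector:
    # Hypothetical FFN: linear -> tanh -> linear.
    hidden = [math.tanh(h) for h in matvec(w_in, x)]
    return matvec(w_out, hidden)


def blend(x: Vector, state: Vector, alpha: float) -> Vector:
    # Assumed blend: convex mix of the current input and the streamed state.
    return [(1.0 - alpha) * xi + alpha * si for xi, si in zip(x, state)]


def stream(inputs: List[Vector], w_in: Matrix, w_out: Matrix,
           alpha: float, extra_steps: int = 0) -> List[Vector]:
    state = [0.0] * len(inputs[0])
    outputs = []
    for x in inputs:
        # One mandatory update, plus optional deliberation steps at this position.
        for _ in range(1 + extra_steps):
            state = ffn(blend(x, state, alpha), w_in, w_out)
        outputs.append(state)
    return outputs


identity = [[1.0, 0.0], [0.0, 1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0]]
no_stream = stream(tokens, identity, identity, alpha=0.0)
with_stream = stream(tokens, identity, identity, alpha=0.5)
deliberated = stream(tokens, identity, identity, alpha=0.5, extra_steps=2)
```

With `alpha=0.0` each position depends only on its own input, which corresponds to the standard per-position behaviour. With `alpha=0.5` the second position carries a nonzero component inherited from the first, and `extra_steps` changes the state without consuming a new token.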

If this is right

- The reasoning improvements are attributable to the architectural mechanism rather than scale or training data.

- The design supports continuous latent deliberation per position at inference by dedicating extra FLOPs before token generation.

- State transitions at content-dependent positions move the model into substantially different Bayesian posteriors that influence future latent states.

- The resulting 27B SST achieves higher accuracy on GPQA-Diamond than several larger open-weight and proprietary models.

Where Pith is reading between the lines

- The probe finding that the first-token latent state already predicts answer survival under further computation suggests a natural route to adaptive inference that spends more deliberation only when the early state is uncertain.

- Because large gains appear with only a small co-training set, the same recurrence could be grafted onto other existing backbones to improve reasoning without full retraining.

- The parallel training procedure implies that similar state mechanisms could be added to other sequence models without incurring prohibitive sequential training costs.
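The source does not spell out the two-pass procedure. One plausible reading, sketched here purely as an assumption, is that the first pass computes a provisional state for every position with no incoming state, and the second pass lets each position read the provisional state of its left neighbour. Both passes are then free of sequential dependencies. The `step` function is a toy stand-in for the real layer, and all names are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def step(x: Vector, prev_state: Vector, alpha: float) -> Vector:
    # Toy recurrence step: blend the previous state into the input, then apply tanh.
    return [math.tanh((1.0 - alpha) * xi + alpha * si)
            for xi, si in zip(x, prev_state)]


def sequential(inputs: List[Vector], alpha: float) -> List[Vector]:
    # Reference: true left-to-right recurrence, one position at a time.
    state = [0.0] * len(inputs[0])
    outputs = []
    for x in inputs:
        state = step(x, state, alpha)
        outputs.append(state)
    return outputs


def two_pass(inputs: List[Vector], alpha: float) -> List[Vector]:
    zero = [0.0] * len(inputs[0])
    # Pass 1: no position reads another, so all positions could run in parallel.
    provisional = [step(x, zero, alpha) for x in inputs]
    # Pass 2: position t reads the pass-1 state of position t-1 (shift right by one).
    shifted = [zero] + provisional[:-1]
    return [step(x, s, alpha) for x, s in zip(inputs, shifted)]


inputs = [[1.0], [0.5], [0.2]]
exact = sequential(inputs, alpha=0.5)
approx = two_pass(inputs, alpha=0.5)
gap = abs(exact[2][0] - approx[2][0])
```

Under this reading the two-pass output matches the sequential recurrence at the first two positions and drifts from the third onward, because the pass-1 states ignore their own history. How the actual procedure handles that gap is not stated in the source.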

Load-bearing premise

The observed gains on GPQA-Diamond and GSM8K are caused by the state-stream mechanism enabling genuine latent-space reasoning rather than by the specific training procedure, probe, or benchmark selection.

What would settle it

Train an otherwise identical 27B model with the state-streaming component disabled, using the same small GSM8K co-training set and two-pass procedure, then measure whether the +15.15 point gain on GPQA-Diamond and the 46% error reduction on GSM8K disappear.
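The arithmetic behind this test can be sketched as follows. A 46% error reduction is relative to the baseline's remaining errors, not an absolute accuracy change. The baseline and ablation accuracies below are hypothetical placeholders, since the source does not report them here, and the function names are illustrative.

```python
def accuracy_after_error_reduction(baseline_acc: float, reduction: float) -> float:
    # Remove a fraction `reduction` of the baseline's remaining errors.
    baseline_err = 1.0 - baseline_acc
    return 1.0 - baseline_err * (1.0 - reduction)


def relative_error_reduction(baseline_acc: float, new_acc: float) -> float:
    # Fraction of the baseline's errors that the new model no longer makes.
    return 1.0 - (1.0 - new_acc) / (1.0 - baseline_acc)


def gain_retained_by_ablation(baseline_acc: float, ablated_acc: float,
                              full_acc: float) -> float:
    # Share of the full model's gain that survives with streaming disabled.
    # Near 0.0 supports the mechanism; near 1.0 points to the training procedure.
    return (ablated_acc - baseline_acc) / (full_acc - baseline_acc)


HYPOTHETICAL_BASELINE = 0.90  # placeholder, not a figure from the paper
sst_acc = accuracy_after_error_reduction(HYPOTHETICAL_BASELINE, 0.46)
check = relative_error_reduction(HYPOTHETICAL_BASELINE, sst_acc)
retained = gain_retained_by_ablation(0.50, 0.50, 0.65)  # placeholder accuracies
```

If the ablated model retains most of the gain, the load-bearing premise fails; if the retained share is close to zero, the attribution to the state stream is strengthened.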

Original abstract

Current transformers discard their rich latent residual stream between positions, reconstructing latent reasoning context at each new position and leaving potential reasoning capacity untapped. The State Stream Transformer (SST) V2 enables parameter-efficient reasoning in continuous latent space through an FFN-driven nonlinear recurrence at each decoder layer, where latent states are streamed horizontally across the full sequence via a learned blend. This same mechanism supports continuous latent deliberation per position at inference time, dedicating additional FLOPs to exploring abstract reasoning before committing to a token. A two-pass parallel training procedure resolves the sequential dependency of the recurrence to allow compute-efficient training. Hidden state analysis shows the state stream facilitates reasoning through exploration of distinct semantic basins in continuous latent space, where transitions at content-dependent positions move the model into a substantially different Bayesian posterior, directly influencing the latent space at future positions. We also find, via a learned probe, that at the first generated token position, the latent state already predicts whether the eventual answer will survive or break under additional latent computation for every subsequent position. Co-trained into an existing 27B backbone using only a small dataset of GSM8K examples, the SST delivers a +15.15 point gain over a fine-tuning-matched baseline on out-of-distribution GPQA-Diamond and cuts that same baseline's remaining GSM8K errors by 46%, together showing that the reasoning improvement is attributable to the architectural mechanism rather than scale or training data. On GPQA-Diamond, the resulting 27B SST also achieves higher accuracy than several larger open-weight and proprietary systems, including open-weight models up to 25 times larger.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes the State Stream Transformer (SST) V2, which augments standard transformers with an FFN-driven nonlinear recurrence at each decoder layer that streams latent states horizontally across positions via learned blend weights. This enables continuous latent-space deliberation at inference by dedicating extra compute to exploring reasoning before token emission. A two-pass parallel training procedure removes the sequential dependency for efficient training. Co-training the mechanism into a 27B backbone on a small GSM8K set yields a +15.15 point accuracy gain on out-of-distribution GPQA-Diamond over a fine-tuning-matched baseline and cuts that baseline's remaining GSM8K errors by 46%. Hidden-state analysis shows the stream explores distinct semantic basins, and a learned probe indicates that the first-token latent state predicts whether the final answer will survive further latent computation.

Significance. If the reported gains can be isolated to the state-stream mechanism, the work would offer a parameter-efficient route to latent reasoning that improves out-of-distribution performance without increasing model scale. The parallel training schedule and basin-transition analysis constitute concrete technical contributions that could be adopted more broadly. The absence of controls that hold the training procedure fixed, however, leaves the central attribution claim open to alternative explanations based on optimization differences.

major comments (3)

- [Abstract and Results] Abstract and experimental results: The headline claim that the +15.15 GPQA-Diamond gain and 46% GSM8K error reduction are attributable to the nonlinear recurrence and horizontal state streaming (rather than the two-pass parallel training procedure) is not supported by the current controls. The baseline is described only as 'fine-tuning-matched' and cannot employ the identical two-pass schedule that resolves recurrence dependencies, so differences in gradient flow, effective regularization, or optimization trajectory remain unisolated.

- [Hidden State Analysis] Hidden-state analysis section: The probe that predicts answer survival from the first generated token's latent state is trained on outcomes produced by the same SST model, introducing circularity that weakens the claim that the probe demonstrates genuine latent-space reasoning independent of the model's own predictions.

- [Experimental Evaluation] Experimental evaluation: No error bars, standard deviations across runs, or full protocol details (including exact data splits, learning-rate schedules, and whether the baseline receives equivalent total compute) are reported for the GPQA-Diamond and GSM8K results, preventing assessment of whether the observed improvements exceed statistical noise.
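To make the statistical-noise concern concrete, here is a minimal sketch of a single-run sanity check. GPQA-Diamond contains 198 questions, so a +15.15 point gain corresponds to 30 additional correct answers, assuming the reported figure is plain accuracy over the full set. The baseline accuracy used below is a hypothetical placeholder, and the unpaired binomial approximation ignores that both models answer the same questions; a paired test such as McNemar's would be the more careful choice.

```python
import math

GPQA_DIAMOND_SIZE = 198


def points_to_questions(points: float, n: int = GPQA_DIAMOND_SIZE) -> int:
    # Convert an accuracy difference in percentage points to a question count.
    return round(points / 100.0 * n)


def diff_standard_error(p_a: float, p_b: float, n: int) -> float:
    # Standard error of the difference of two independent binomial proportions.
    return math.sqrt(p_a * (1.0 - p_a) / n + p_b * (1.0 - p_b) / n)


def z_score(p_a: float, p_b: float, n: int) -> float:
    return (p_b - p_a) / diff_standard_error(p_a, p_b, n)


extra_correct = points_to_questions(15.15)

HYPOTHETICAL_BASELINE = 0.50  # placeholder, not a figure from the paper
sst_acc = HYPOTHETICAL_BASELINE + extra_correct / GPQA_DIAMOND_SIZE
z = z_score(HYPOTHETICAL_BASELINE, sst_acc, GPQA_DIAMOND_SIZE)
```

A check like this only bounds sampling noise from the finite benchmark. It says nothing about run-to-run variance from training seeds, which is what the requested error bars would address.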

minor comments (1)

- [Methods] The mathematical definition of the learned blend weights and the precise form of the nonlinear recurrence could be stated more explicitly with equations to aid reproducibility.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive comments. We address each major point below with clarifications and planned revisions to improve the manuscript.

Point-by-point responses

- Referee: [Abstract and Results] Abstract and experimental results: The headline claim that the +15.15 GPQA-Diamond gain and 46% GSM8K error reduction are attributable to the nonlinear recurrence and horizontal state streaming (rather than the two-pass parallel training procedure) is not supported by the current controls. The baseline is described only as 'fine-tuning-matched' and cannot employ the identical two-pass schedule that resolves recurrence dependencies, so differences in gradient flow, effective regularization, or optimization trajectory remain unisolated.

  Authors: We agree that a control holding the training procedure exactly fixed would strengthen attribution. The two-pass schedule is required to train the recurrence in parallel and is thus inseparable from the SST mechanism itself; a standard transformer baseline cannot use it. The baseline matches the fine-tuning data, steps, and compute budget as closely as possible under standard single-pass training. The large OOD gains on GPQA-Diamond (unseen during co-training) make an optimization-artifact explanation less likely, but we will revise the abstract, results, and discussion to qualify the attribution claim, explicitly note the training-procedure difference as a potential confound, and add it to the limitations section.

  Revision: partial

- Referee: [Hidden State Analysis] Hidden-state analysis section: The probe that predicts answer survival from the first generated token's latent state is trained on outcomes produced by the same SST model, introducing circularity that weakens the claim that the probe demonstrates genuine latent-space reasoning independent of the model's own predictions.

  Authors: The probe is an analysis tool that tests whether the latent state at the first generated token already encodes the outcome of subsequent latent deliberation steps performed by the same model. This is by design: it demonstrates that the state stream has compressed reasoning progress into an early latent representation. We do not claim the probe operates independently of the model; rather, it reveals an internal property of SST's latent dynamics. We will revise the hidden-state analysis section to clarify the probe's purpose, remove any phrasing that could imply full independence, and emphasize that the result supports the utility of continuous latent computation.

  Revision: yes

- Referee: [Experimental Evaluation] Experimental evaluation: No error bars, standard deviations across runs, or full protocol details (including exact data splits, learning-rate schedules, and whether the baseline receives equivalent total compute) are reported for the GPQA-Diamond and GSM8K results, preventing assessment of whether the observed improvements exceed statistical noise.

  Authors: We will add error bars computed over at least three independent runs with different random seeds, report standard deviations, and expand the experimental protocol appendix with exact data splits, learning-rate schedules, optimizer settings, and a compute-equivalence table confirming that the baseline receives matched total optimization steps (adjusted for the two-pass overhead in SST). These additions will allow direct assessment of statistical reliability.

  Revision: yes
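The promised error bars could be reported along the following lines. This is a minimal sketch using only the Python standard library; the per-seed accuracies are placeholder values chosen for illustration and are not results from the paper.

```python
import statistics
from typing import List, Tuple


def summarize_runs(accuracies: List[float]) -> Tuple[float, float]:
    # Mean and sample standard deviation across independent seeds.
    return statistics.mean(accuracies), statistics.stdev(accuracies)


def format_result(accuracies: List[float]) -> str:
    mean, std = summarize_runs(accuracies)
    return f"{100 * mean:.2f} +/- {100 * std:.2f} (n={len(accuracies)})"


# Placeholder accuracies for three hypothetical seeds, not reported numbers.
placeholder_runs = [0.60, 0.62, 0.64]
mean_acc, std_acc = summarize_runs(placeholder_runs)
report = format_result(placeholder_runs)
```

With only three seeds the standard deviation is itself a noisy estimate, so a gain would need to be large relative to it before the comparison with the baseline is convincing.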

Circularity Check

No significant circularity in derivation chain

The paper's central claims rest on direct empirical measurements of accuracy gains on external benchmarks (GPQA-Diamond +15.15 points, 46% GSM8K error reduction) after co-training a 27B backbone on a small GSM8K set, compared against a fine-tuning-matched baseline. These are independent evaluations not derived from any internal fitted parameters, self-citations, or equations that reduce to inputs by construction. The learned probe is described only as an additional analysis tool ('we also find, via a learned probe...') for interpreting latent states and does not underpin or define the accuracy or attribution claims. No self-definitional steps, uniqueness theorems imported from prior author work, ansatzes smuggled via citation, or renaming of known results appear in the provided text. The two-pass parallel training is presented as an enabling component of the proposed architecture rather than a hidden input that forces the reported outcomes.

Axiom & Free-Parameter Ledger

free parameters (1)

- learned blend weights

axioms (1)

- domain assumption: Retaining and updating a continuous latent residual stream across positions improves reasoning capacity over standard per-position reconstruction.

invented entities (1)

- State stream: no independent evidence

