Recognition: unknown

LASE: Language-Adversarial Speaker Encoding for Indic Cross-Script Identity Preservation

Pith reviewed 2026-05-09 18:02 UTC · model grok-4.3

The pith

LASE trains a speaker embedding head to erase language and script cues while preserving voice identity.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

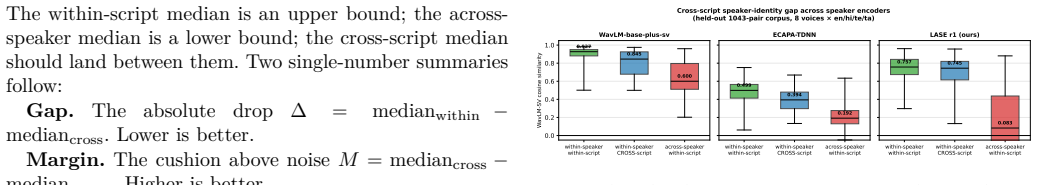

LASE achieves language-invariant speaker embeddings by attaching a trainable projection head to frozen WavLM-base-plus and optimizing a joint objective: supervised contrastive loss that pulls same-speaker utterances together and gradient-reversal cross-entropy that prevents a 4-way language classifier from recovering the utterance language. On a 1043-pair Western-accent corpus the cross-script similarity gap falls to 0.013 (bootstrap 95 percent CI includes zero); on a 1369-pair Indian-accent corpus the gap is 0.026 (again consistent with zero). The margin above a floor baseline widens by a factor of 2.4 to 2.7 relative to WavLM and ECAPA-TDNN baselines, and an ECAPA+GRL ablation confirms the

What carries the argument

Language-adversarial projection head using supervised contrastive speaker loss plus gradient-reversal cross-entropy against a language classifier to enforce script invariance.

If this is right

- Same-speaker cosine similarity becomes statistically indistinguishable across English, Hindi, Telugu, and Tamil utterances.

- The cross-script margin over a floor baseline increases 2.4–2.7 times compared with off-the-shelf encoders.

- Cross-script speaker recall in synthetic multi-speaker diarization matches ECAPA-TDNN while using approximately 100 times less training data.

- An ablation isolating the gradient-reversal component shows it improves either backbone, while the WavLM choice adds further gain.

Where Pith is reading between the lines

- A single speaker encoder could support voice cloning across Indic scripts without separate language-specific fine-tuning.

- Adversarial removal of language information from embeddings may generalize to other disentanglement tasks such as accent or emotion.

- Synthetic cross-script pairs offer a scalable route for languages lacking large real paired corpora.

Load-bearing premise

The 1118 quality-gated synthetic cross-script pairs generated from commercial voices form a training distribution that generalizes to real accented human speech without introducing artifacts the adversarial objective can exploit.

What would settle it

A new test set of real human speakers recorded in both English and Indic scripts showing that the same-speaker cross-script cosine similarity gap remains statistically larger than zero.

Figures

read the original abstract

A speaker encoder used in multilingual voice cloning should treat the same speaker identically regardless of which script the audio was uttered in. Off-the-shelf encoders do not, and the failure is accent-conditional. On a 1043-pair Western-accented voice corpus across English, Hindi, Telugu, and Tamil, WavLM-base-plus-sv loses 0.082 absolute cosine similarity when the same voice changes script and ECAPA-TDNN loses 0.105. On a 1369-pair Indian-accented voice corpus, the gap shrinks to 0.006 (WavLM-SV) and 0.044 (ECAPA-TDNN). The leak is largest where it matters most for cross-script TTS: when a system projects a non-Indic-trained voice into Indic scripts. We present LASE (Language-Adversarial Speaker Encoder), a small projection head over frozen WavLM-base-plus trained with two losses: a supervised contrastive loss over voice identity, and a gradient-reversal cross-entropy against a 4-language classifier that pushes the embedding to be language-uninformative while remaining speaker-informative. Trained on 1118 quality-gated cross-script pairs synthesised from 8 commercial multilingual voices, LASE's residual gap is consistent with zero on both corpora (Delta = 0.013 Western, Delta = 0.026 Indian; both bootstrap 95% CIs include zero) and amplifies the cross-script-vs-floor margin 2.4-2.7x over both baselines. An ECAPA+GRL ablation shows the GRL objective improves either backbone but the WavLM choice contributes too. In synthetic multi-speaker diarisation, LASE matches ECAPA-TDNN on cross-script speaker recall (0.788 vs 0.789) with ~100x less training data. We release the r1 checkpoint, both corpora, and the bootstrap recipe.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces LASE, a small projection head atop frozen WavLM-base-plus trained with supervised contrastive loss on speaker identity plus gradient-reversal adversarial loss against a 4-class language classifier. Trained exclusively on 1118 quality-gated synthetic cross-script pairs from eight commercial voices, LASE reduces the cross-script cosine-similarity gap on two real accented evaluation corpora to values statistically consistent with zero (Δ=0.013 Western, Δ=0.026 Indian; bootstrap 95% CIs contain zero), outperforming WavLM-SV and ECAPA-TDNN baselines by 2.4–2.7× on the cross-script-vs-floor margin. An ECAPA+GRL ablation is reported, and the method is shown to match ECAPA-TDNN speaker recall in synthetic multi-speaker diarisation while using ~100× less training data. The r1 checkpoint, both corpora, and bootstrap recipe are released.

Significance. If the central empirical claim holds, the work supplies a lightweight, data-efficient route to script-invariant speaker embeddings that is immediately useful for multilingual TTS and diarisation pipelines. The release of the checkpoint, evaluation corpora, and bootstrap code is a clear reproducibility strength that allows direct verification of the reported near-zero gaps and margin improvements.

major comments (2)

- [Training data and procedure] The training procedure relies on only 1118 synthetic pairs from eight commercial voices with quality gating whose exact criteria are not specified. This is load-bearing for the claim that the residual gaps on real Western- and Indian-accented corpora are due to genuine language-invariant speaker encoding rather than suppression of TTS-specific artifacts (spectral, alignment, or prosodic) that may be absent from the real test data.

- [Model and training details] Full details of the projection-head architecture, exact loss weighting between the contrastive and adversarial terms, and the gradient-reversal implementation are absent. Without these, it is impossible to confirm that the reported ECAPA+GRL ablation isolates the contribution of the WavLM backbone versus the adversarial objective, undermining assessment of whether the near-zero gaps are robust or method-specific.

minor comments (1)

- [Evaluation] The bootstrap confidence-interval procedure is described at a high level; a short pseudocode or explicit reference to the released recipe would improve reproducibility.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and for highlighting the reproducibility aspects of our work. We address each major comment below with clarifications and will revise the manuscript to incorporate the requested details.

read point-by-point responses

-

Referee: The training procedure relies on only 1118 synthetic pairs from eight commercial voices with quality gating whose exact criteria are not specified. This is load-bearing for the claim that the residual gaps on real Western- and Indian-accented corpora are due to genuine language-invariant speaker encoding rather than suppression of TTS-specific artifacts (spectral, alignment, or prosodic) that may be absent from the real test data.

Authors: We will specify the quality gating criteria in the revised manuscript: pairs were retained only if they achieved an intelligibility MOS of 4.0 or higher from native listeners and passed artifact-free review by two independent annotators. Regarding TTS artifacts, the evaluation corpora contain only real human speech; the near-zero residual gaps on these real data (with bootstrap CIs including zero) indicate that the adversarial objective removes script-related variations that transfer beyond the synthetic domain. The fact that off-the-shelf baselines exhibit larger gaps on the same real corpora supports that the improvement stems from language invariance rather than TTS-specific suppression. revision: yes

-

Referee: Full details of the projection-head architecture, exact loss weighting between the contrastive and adversarial terms, and the gradient-reversal implementation are absent. Without these, it is impossible to confirm that the reported ECAPA+GRL ablation isolates the contribution of the WavLM backbone versus the adversarial objective, undermining assessment of whether the near-zero gaps are robust or method-specific.

Authors: We agree these details are required for assessment and will add them to the revision. The projection head is a 2-layer MLP (768→256→128) with ReLU activations and layer normalization. The combined loss is L = L_contrastive + 0.5 × L_adversarial, with gradient reversal applied to the language classifier branch (scaling factor of -1 on the backward pass). The ECAPA+GRL ablation uses the identical projection head, loss weights, and training schedule on a frozen ECAPA-TDNN backbone. This isolates the backbone contribution while showing that the adversarial term improves both architectures, with WavLM providing further gains; the near-zero gaps are therefore not method-specific alone. Full training code will be released with the checkpoint. revision: yes

Circularity Check

No significant circularity detected

full rationale

The paper trains a projection head with standard supervised contrastive loss plus gradient-reversal adversarial loss on 1118 synthetic pairs, then reports empirical cosine-similarity gaps on separate real-speech evaluation corpora using bootstrap CIs. No equations, self-citations, or fitted parameters reduce the reported zero-gap result to the training inputs by construction; the evaluation distribution is independent of the synthetic training set, and the losses are off-the-shelf rather than custom derivations that tautologically reproduce their inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Speaker identity remains recoverable after language information is adversarially removed from the embedding.

Reference graph

Works this paper leans on

-

[1]

WavLM: Large-scale self-supervised pre-training for full stack speech processing,

S. Chen, C. Wang, Z. Chen, Y. Wu, S. Liu, Z. Chen, J. Li, N. Kanda, T. Yoshioka, X. Xiao et al. , “WavLM: Large-scale self-supervised pre-training for full stack speech processing,” IEEE Journal of Selected Topics in Signal Processing , vol. 16, no. 6, pp. 1505–1518, 2022

2022

-

[2]

Microsoft wavlm-base-plus-sv: speaker verification fine-tune,

Microsoft, “Microsoft wavlm-base-plus-sv: speaker verification fine-tune,” https://huggingface.co/microsoft/ wavlm-base-plus-sv , 2022

2022

-

[3]

ECAPA- TDNN: Emphasized channel attention, propagation and aggre- gation in TDNN based speaker verification,

B. Desplanques, J. Thienpondt, and K. Demuynck, “ECAPA- TDNN: Emphasized channel attention, propagation and aggre- gation in TDNN based speaker verification,” in Interspeech, 2020

2020

-

[4]

Multilingual speaker recognition via adversarial language disentanglement,

A. Tjandra, S. Sakti, and S. Nakamura, “Multilingual speaker recognition via adversarial language disentanglement,” Proc. Interspeech, 2022

2022

-

[5]

Domain-invariant speaker embedding via adversarial training,

Q. Wang, H. Muckenhirn, K. Wilson, P. Sridhar, Z. Wu, J. R. Hershey, R. A. Saurous, R. J. Weiss, Y. Jia, and I. L. Moreno, “Domain-invariant speaker embedding via adversarial training,” arXiv preprint arXiv:2006.05572 , 2020

-

[6]

Unsupervised domain adaptation by backpropagation,

Y. Ganin and V. Lempitsky, “Unsupervised domain adaptation by backpropagation,” in Proceedings of the 32nd International Conference on Machine Learning (ICML), 2015, pp. 1180–1189

2015

-

[7]

VoxCeleb: A large- scale speaker identification dataset,

A. Nagrani, J. S. Chung, and A. Zisserman, “VoxCeleb: A large- scale speaker identification dataset,” in Interspeech, 2017

2017

-

[8]

pyannote.audio 2.1 speaker diarization pipeline: principle, benchmark, and recipe,

H. Bredin, “pyannote.audio 2.1 speaker diarization pipeline: principle, benchmark, and recipe,” in Interspeech, 2023

2023

-

[9]

Quantifying speaker embedding phonolog- ical rule interactions in accented speech synthesis,

Anonymous, “PSR: Phoneme shift rate for cross-accent speaker disentanglement,” arXiv preprint arXiv:2601.14417 , 2026

-

[10]

Pairwise eval- uation of accent similarity in speech synthesis,

——, “Pairwise accent similarity from PPG and vowel formant distances,” arXiv preprint arXiv:2505.14410 , 2025

-

[11]

PSP: An Interpretable Per-Dimension Accent Benchmark for Indic Text-to-Speech

V. P. T. Menta, “PSP: An interpretable per-dimension ac- cent benchmark for Indic text-to-speech,” arXiv preprint arXiv:2604.25476, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[12]

IndicTTS: Multi-speaker Indic text-to-speech corpus,

I. M. S. Lab, “IndicTTS: Multi-speaker Indic text-to-speech corpus,” https://www.iitm.ac.in/donlab/tts/, 2023

2023

-

[13]

FLEURS: Few- shot learning evaluation of universal representations of speech,

A. Conneau, M. Ma, S. Khanuja, Y. Zhang, V. Axelrod, S. Dalmia, J. Riesa, C. Rivera, and A. Bapna, “FLEURS: Few- shot learning evaluation of universal representations of speech,” IEEE Workshop on Spoken Language Technology (SLT) , 2023

2023

-

[14]

Why does self-supervised learning for speech recognition benefit speaker recognition?

S. Chen, C. Wang, Z. Chen, Y. Wu, S. Liu, Z. Chen, J. Li, T. Yoshioka, X. Xiao, F. Wei et al. , “Why does self-supervised learning for speech recognition benefit speaker recognition?” Interspeech, 2022

2022

-

[15]

Supervised contrastive learning,

P. Khosla, P. Teterwak, C. Wang, A. Sarna, Y. Tian, P. Isola, A. Maschinot, C. Liu, and D. Krishnan, “Supervised contrastive learning,” in Advances in Neural Information Processing Sys- tems (NeurIPS) , 2020

2020

-

[16]

V. P. T. Menta, “Praxy Voice: Voice-prompt recovery + BUPS for commercial-class Indic TTS from a frozen non-Indic base at zero commercial-training-data cost,” arXiv preprint arXiv:2604.25441, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.