
HyCOP: Hybrid Composition Operators for Interpretable Learning of PDEs

Pith reviewed 2026-05-09 17:58 UTC · model grok-4.3

The pith

HyCOP learns a policy that composes simple modules into short programs for parametric PDEs, and these programs are more accurate than monolithic neural operators outside the training distribution.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

HyCOP learns parametric PDE solution operators by composing simple modules (advection, diffusion, learned closures, boundary handling) in a query-conditioned way, so the learned object is an interpretable program rather than a monolithic map. The paper reports order-of-magnitude out-of-distribution improvements over monolithic neural operators and modular transfer through dictionary updates. Its theory characterizes expressivity and supplies an error decomposition that separates composition error from module error.

What carries the argument

A policy over short programs that selects and sequences modules conditioned on regime features and state statistics, enabling hybrid numerical-learned surrogates evaluable at arbitrary query times.
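To make "a policy over short programs" concrete, here is a toy sketch under stated assumptions. It is not HyCOP's implementation. It assumes a 1D periodic domain, two exact spectral sub-solvers as modules, and a hand-written `toy_policy` standing in for the learned policy conditioned on regime features and state statistics. All names (`MODULES`, `toy_policy`, `run_program`) are hypothetical. Each program entry is a (module, fraction of the query time) pair, so the state is evaluated directly at the query time without stepping through an intermediate time grid.

```python
import numpy as np

# 1D periodic grid on [0, 2*pi); integer wavenumbers for spectral sub-solvers.
N = 64
x = np.linspace(0.0, 2.0 * np.pi, N, endpoint=False)
k = np.fft.fftfreq(N, d=1.0 / N)

def advect(u, tau, c=1.0):
    """Exact flow of u_t + c u_x = 0 over time tau (spectral phase shift)."""
    return np.real(np.fft.ifft(np.fft.fft(u) * np.exp(-1j * k * c * tau)))

def diffuse(u, tau, nu=0.1):
    """Exact flow of u_t = nu u_xx over time tau (damp mode k by exp(-nu k^2 tau))."""
    return np.real(np.fft.ifft(np.fft.fft(u) * np.exp(-nu * k**2 * tau)))

# Hypothetical module dictionary: name -> callable(state, duration) -> state.
MODULES = {"advect": advect, "diffuse": diffuse}

def toy_policy(t_query, peclet):
    """Hypothetical stand-in for a learned policy. Returns a Strang-like program of
    (module name, fraction of t_query); each operator's fractions sum to 1."""
    if peclet > 1.0:  # advection-dominated regime: split the advection step in half
        return [("advect", 0.5), ("diffuse", 1.0), ("advect", 0.5)]
    return [("diffuse", 0.5), ("advect", 1.0), ("diffuse", 0.5)]

def run_program(program, u0, t_query, modules=MODULES):
    """Apply each module for its share of t_query, in program order."""
    u = u0.copy()
    for name, frac in program:
        u = modules[name](u, frac * t_query)
    return u
```

For example, `run_program(toy_policy(0.7, peclet=2.0), np.sin(x), 0.7)` returns the state at t = 0.7. Because constant-coefficient advection and diffusion commute, both toy programs reproduce the exact solution here (zero composition error). With non-commuting modules they would not. Swapping a dictionary entry, for instance replacing `diffuse` with a different sub-solver, changes what the program computes without touching the policy. This is one plausible reading of "dictionary updates", not the paper's mechanism.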

If this is right

- The resulting programs are human-readable sequences of module applications rather than opaque weight matrices.

- Out-of-distribution accuracy improves by an order of magnitude compared with monolithic neural operators on the tested benchmarks.

- Boundary conditions or residual terms can be changed by updating entries in the module dictionary without retraining the full model.

- Solutions can be queried at arbitrary times without autoregressive rollout of intermediate steps.

- The error decomposition isolates whether failures stem from poor module choice or from individual module inaccuracy.
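The paper's exact decomposition is not reproduced on this page. The sketch below assumes the simplest version, which is a triangle inequality. Let u be the true flow, Φ the program run with exact modules, and Φ̂ the same program run with approximate modules. Then ||u − Φ̂|| ≤ ||u − Φ|| + ||Φ − Φ̂||, where the first term is composition error and the second is module error. Two symmetric, non-commuting matrices stand in for PDE modules, Lie splitting stands in for the program, and a Taylor-truncated step stands in for a learned component. These are all illustrative assumptions.

```python
import numpy as np

def expm_sym(S):
    """Matrix exponential of a symmetric matrix via its eigendecomposition."""
    w, V = np.linalg.eigh(S)
    return (V * np.exp(w)) @ V.T

rng = np.random.default_rng(0)
n = 6
# Two symmetric generators that (almost surely) do not commute.
M1 = rng.standard_normal((n, n))
A = -(M1 @ M1.T) / n                      # negative semidefinite, diffusion-like
M2 = rng.standard_normal((n, n))
B = (M2 + M2.T) / 2 - 2.0 * np.eye(n)     # shifted to keep the flow mostly damped

def program(step_A, step_B, steps):
    """Lie splitting program: apply module A, then module B, `steps` times."""
    P = np.eye(n)
    for _ in range(steps):
        P = step_B @ step_A @ P
    return P

t, steps = 1.0, 10
h = t / steps
exact = expm_sym(t * (A + B))                              # true flow
ideal = program(expm_sym(h * A), expm_sym(h * B), steps)   # exact modules, split composition
approx_A = np.eye(n) + h * A + 0.5 * (h * A) @ (h * A)      # imperfect "learned" module
learned = program(approx_A, expm_sym(h * B), steps)         # same program, imperfect module

composition_err = np.linalg.norm(exact - ideal, 2)   # error from the composition alone
module_err = np.linalg.norm(ideal - learned, 2)      # error from the module alone
total_err = np.linalg.norm(exact - learned, 2)
```

Under these assumptions the two terms behave differently. Refining the schedule (more, shorter steps) shrinks composition error for first-order splitting, while module error depends on the quality of the module. That difference is what makes such a split usable as a process-level diagnostic.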

Where Pith is reading between the lines

- The modular structure may make it easier to enforce physical constraints such as conservation by restricting the allowed module dictionary.

- Dictionary-based transfer could reduce the cost of adapting a model to new geometries or forcing terms that share most but not all physics.

- Inspecting the policy-chosen program on a failing query could serve as a diagnostic tool to decide whether to add a new module type.

Load-bearing premise

A learned policy can reliably select and compose simple modules to capture full PDE dynamics without substantial composition error or loss of accuracy across regimes.

What would settle it

Run the method on a held-out PDE regime and check whether the automatically chosen module sequences match or exceed monolithic neural-operator accuracy while remaining short and interpretable, or whether the programs instead become long or inaccurate because of composition failures.


Original abstract

We introduce HyCOP, a modular framework that learns parametric PDE solution operators by composing simple modules (advection, diffusion, learned closures, boundary handling) in a query-conditioned way. Rather than learning a monolithic map, HyCOP learns a policy over short programs - which module to apply and for how long - conditioned on regime features and state statistics. Modules may be numerical sub-solvers or learned components, enabling hybrid surrogates evaluated at arbitrary query times without autoregressive rollout. Across diverse PDE benchmarks, HyCOP produces interpretable programs, delivers order-of-magnitude OOD improvements over monolithic neural operators, and supports modular transfer through dictionary updates (e.g., boundary swaps, residual enrichment). Our theory characterizes expressivity and gives an error decomposition that separates composition error from module error and doubles as a process-level diagnostic.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

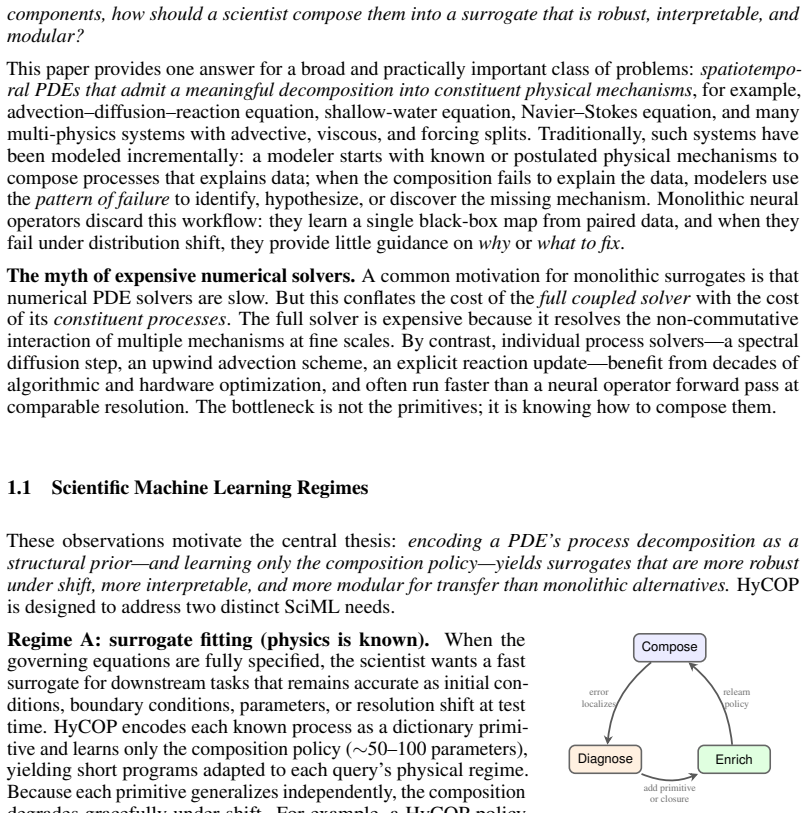

Summary. The paper introduces HyCOP, a modular framework for learning parametric PDE solution operators by composing simple modules (advection, diffusion, learned closures, boundary handling) via a query-conditioned policy over short programs. Modules may be numerical sub-solvers or learned components, enabling hybrid surrogates evaluable at arbitrary query times without autoregressive rollout. The manuscript claims that HyCOP yields interpretable programs, order-of-magnitude OOD gains over monolithic neural operators, and supports modular transfer via dictionary updates (e.g., boundary swaps, residual enrichment). It also provides a theory characterizing expressivity together with an error decomposition that separates composition error from module error and serves as a process-level diagnostic.

Significance. If the empirical OOD gains and modular-transfer results hold, the work would be significant for scientific machine learning and computational engineering. It offers a hybrid, interpretable alternative to black-box neural operators, with built-in support for regime adaptation and a diagnostic decomposition that can surface composition failures. The modular-transfer experiments and the explicit error decomposition are concrete strengths that go beyond typical neural-operator papers.

minor comments (3)

- [Abstract] The phrase 'order-of-magnitude OOD improvements' is strong. The main text should report the precise improvement factors, the baselines, and the statistical variability across benchmarks so that readers can assess the claim directly.

- [Theory] The error decomposition is a useful diagnostic, but the manuscript should show explicitly how composition error is isolated and measured in the reported experiments so that readers can verify the separation.

- [Experiments] Clarify the exact conditioning features (regime features and state statistics) used by the policy, and provide pseudocode or a small example program to illustrate interpretability.
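This page does not list the regime features and state statistics the policy conditions on. The sketch below is a hedged illustration of the kind of specification that would answer the comment. It assumes a feature vector made of a few regime scalars plus simple state statistics (mean, variance, mean squared gradient), and a linear softmax policy that picks a module and a duration fraction at each step with greedy decoding. Every name, feature, and parameter here is hypothetical.

```python
import numpy as np

MODULE_NAMES = ["advect", "diffuse", "closure", "boundary"]  # hypothetical dictionary keys

def state_statistics(u, dx):
    """Hypothetical state statistics: mean, variance, mean squared gradient."""
    grad = np.gradient(u, dx)
    return np.array([u.mean(), u.var(), np.mean(grad**2)])

def policy_step(features, W, b, v, c):
    """Hypothetical linear policy head: softmax over modules, plus a
    sigmoid-squashed duration fraction in (0, 1)."""
    logits = W @ features + b
    probs = np.exp(logits - logits.max())  # numerically stable softmax
    probs /= probs.sum()
    frac = 1.0 / (1.0 + np.exp(-(v @ features + c)))
    return probs, frac

def greedy_program(regime, u, dx, params):
    """Decode one (module, fraction) pair per parameter set by taking the argmax.
    For brevity the features are fixed; a real policy would presumably
    recompute state statistics after each module is applied."""
    feats = np.concatenate([regime, state_statistics(u, dx)])
    program = []
    for W, b, v, c in params:
        probs, frac = policy_step(feats, W, b, v, c)
        program.append((MODULE_NAMES[int(np.argmax(probs))], float(frac)))
    return program
```

With two regime scalars (say, a Péclet number and a viscosity) the feature vector has five entries, so each `W` is 4×5 and each `v` has length 5. Printing the decoded program next to these features would give a reader the kind of interpretable trace the comment requests.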

Simulated Author's Rebuttal

We thank the referee for the positive assessment of HyCOP, the accurate summary of its contributions, and the recommendation for minor revision. The referee's description correctly highlights the modular composition, query-conditioned policy, hybrid numerical-learned modules, OOD gains, modular transfer, and the error decomposition.

Circularity Check

No significant circularity


The paper's central claims rest on a modular policy-learning framework, empirical OOD benchmarks across PDEs, and an independent theoretical characterization of expressivity plus an error decomposition that separates composition from module error. No load-bearing step reduces by construction to a fitted parameter, self-citation, or renamed input; the derivation chain is self-contained against external benchmarks and does not invoke uniqueness theorems or ansatzes from prior author work as the sole justification.


Positioning

| | Classical splitting | Neural operators | HyCOP |
|---|---|---|---|
| Composition | Fixed schedule | None (monolithic) | Learned policy |
| Regime adaptivity | None | Learn… | … |

^ Depends on whether the fixed schedule suits the target regime.
