Fast Monte-Carlo

Pith reviewed 2026-05-08 18:38 UTC · model grok-4.3

The pith

An eigenvalue-based approximation of Markov Chain Monte Carlo delivers consistent steady-state distributions using as few as 10 paths instead of a million.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

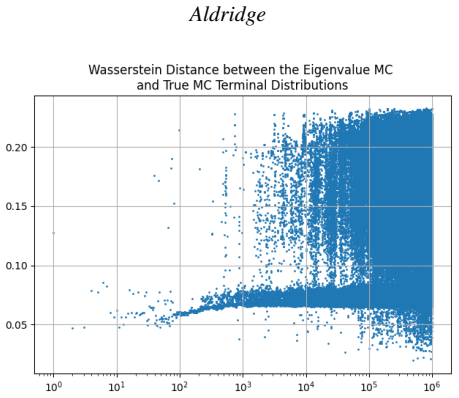

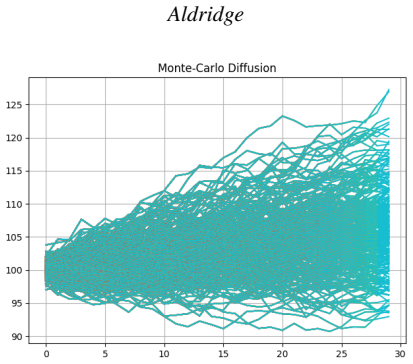

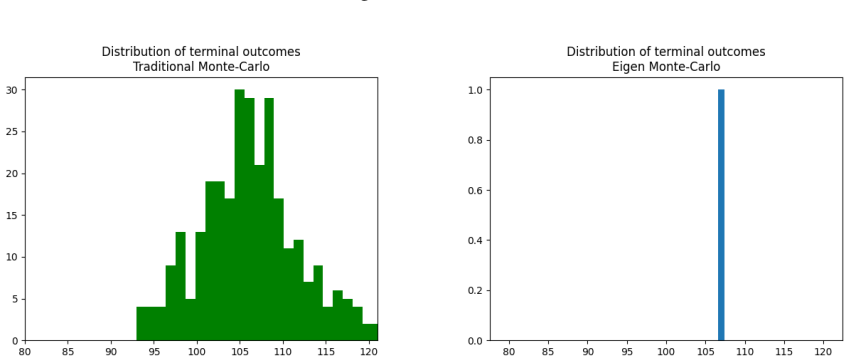

The paper establishes that an eigenvalue-based small-sample approximation of the celebrated Markov Chain Monte Carlo produces an invariant steady-state distribution that is consistent with traditional Monte Carlo methods. The methodology reduces the number of paths required from as many as 1,000,000 to as few as 10, depending on the simulation time horizon T, while delivering comparable, distributionally robust results as measured by the Wasserstein distance and producing significant variance reduction in the steady-state distribution.

What carries the argument

Eigenvalue-based small-sample approximation of the Markov chain transition matrix, which computes the steady-state directly from limited samples rather than through long-run averaging.
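The idea can be sketched in a few lines, assuming a finite state space with integer-labelled states. The abstract does not give the paper's actual construction, so the function names and the plain count-and-normalise estimator below are illustrative assumptions, not the author's method.

```python
import numpy as np

def empirical_transition_matrix(paths, n_states):
    """Count observed one-step transitions and row-normalise (hypothetical helper)."""
    counts = np.zeros((n_states, n_states))
    for path in paths:
        for i, j in zip(path[:-1], path[1:]):
            counts[i, j] += 1.0
    row_sums = counts.sum(axis=1, keepdims=True)
    # Rows of states that were never left stay all-zero; handling them is a
    # separate modelling choice that this sketch does not make.
    return np.divide(counts, row_sums, out=np.zeros_like(counts), where=row_sums > 0)

def stationary_from_eigen(P):
    """Left eigenvector of P for the eigenvalue closest to 1, scaled to sum to 1."""
    eigvals, eigvecs = np.linalg.eig(P.T)
    k = np.argmin(np.abs(eigvals - 1.0))
    v = np.real(eigvecs[:, k])
    return v / v.sum()

# Two short paths on a two-state chain.
paths = [[0, 0, 1, 0], [1, 1, 0, 0]]
P_hat = empirical_transition_matrix(paths, n_states=2)
pi_hat = stationary_from_eigen(P_hat)  # approximately [0.667, 0.333]
```

The eigenvector route replaces long-run averaging only if the estimated matrix has a single eigenvalue at 1; with very few paths that condition is not guaranteed.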

If this is right

- Monte Carlo simulations for economic models can be run with dramatically fewer iterations.

- The steady-state distributions remain distributionally robust.

- Variance in estimates decreases significantly.

- Simulation time horizons can be extended without proportional increases in computational cost.

Where Pith is reading between the lines

- If the approximation holds for general Markov processes, it could accelerate large-scale econometric simulations.

- Testing on specific models like asset pricing or option pricing would reveal practical speed gains.

- Extensions to non-Markovian processes might be possible by embedding them in Markov frameworks.

Load-bearing premise

That an eigenvalue-based small-sample approximation of the Markov chain transition matrix produces an invariant steady-state distribution that remains consistent with full traditional Monte Carlo sampling.

What would settle it

Compare the steady-state distribution obtained from the eigenvalue approximation with 10 paths against the distribution from a standard Monte Carlo run with 1,000,000 paths on a simple known chain, such as a birth-death process, and check if their Wasserstein distance is small.
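A minimal sketch of such a check follows, assuming a reflecting birth-death chain on five states whose stationary distribution is known in closed form. The parameters (`p`, `q`, `T`), the estimator, and the baseline of 5,000 paths (used here instead of 1,000,000 to keep the run short) are all illustrative assumptions rather than the paper's setup.

```python
import numpy as np

def birth_death_matrix(n, p, q):
    """Reflecting birth-death chain on states 0..n-1 (up with prob p, down with prob q)."""
    P = np.zeros((n, n))
    for i in range(n):
        if i + 1 < n:
            P[i, i + 1] = p
        if i > 0:
            P[i, i - 1] = q
        P[i, i] = 1.0 - P[i].sum()  # remaining mass stays in place
    return P

def exact_stationary(n, p, q):
    """Detailed balance gives pi_i proportional to (p/q)**i."""
    w = (p / q) ** np.arange(n)
    return w / w.sum()

def simulate_paths(P, n_paths, T, rng):
    """Simulate n_paths chains for T steps, all started in state 0."""
    cdf = np.cumsum(P, axis=1)
    paths = np.zeros((n_paths, T + 1), dtype=int)
    for t in range(T):
        u = rng.random(n_paths)
        nxt = (u[:, None] > cdf[paths[:, t]]).sum(axis=1)  # inverse-CDF sampling
        paths[:, t + 1] = np.minimum(nxt, P.shape[0] - 1)  # guard against round-off
    return paths

def eigen_estimate(paths, n):
    """Row-normalised transition counts, then the eigenvector for the eigenvalue nearest 1."""
    counts = np.zeros((n, n))
    np.add.at(counts, (paths[:, :-1].ravel(), paths[:, 1:].ravel()), 1.0)
    rows = counts.sum(axis=1, keepdims=True)
    P_hat = np.divide(counts, rows, out=np.zeros_like(counts), where=rows > 0)
    vals, vecs = np.linalg.eig(P_hat.T)
    v = np.real(vecs[:, np.argmin(np.abs(vals - 1.0))])
    return v / v.sum()

def wasserstein_1d(a, b):
    """W1 between two distributions on the grid 0..n-1: sum of absolute CDF differences."""
    return float(np.abs(np.cumsum(a) - np.cumsum(b)).sum())

rng = np.random.default_rng(0)
n, p, q, T = 5, 0.3, 0.4, 500
P = birth_death_matrix(n, p, q)
pi_exact = exact_stationary(n, p, q)

# Eigenvalue-based estimate from 10 paths.
pi_eig = eigen_estimate(simulate_paths(P, 10, T, rng), n)

# Plain Monte Carlo baseline: histogram of terminal states over many paths.
terminal = simulate_paths(P, 5000, T, rng)[:, -1]
pi_mc = np.bincount(terminal, minlength=n) / terminal.size

print("W1(eigen, exact):", wasserstein_1d(pi_eig, pi_exact))
print("W1(MC,    exact):", wasserstein_1d(pi_mc, pi_exact))
```

Comparing both estimates against the exact distribution, rather than only against each other, separates the error of the eigenvalue approximation from the sampling error of the baseline.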

Original abstract

This paper proposes an eigenvalue-based small-sample approximation of the celebrated Markov Chain Monte Carlo that delivers an invariant steady-state distribution that is consistent with traditional Monte Carlo methods. The proposed eigenvalue-based methodology reduces the number of paths required for Monte Carlo from as many as 1,000,000 to as few as 10 (depending on the simulation time horizon $T$), and delivers comparable, distributionally robust results, as measured by the Wasserstein distance. The proposed methodology also produces a significant variance reduction in the steady-state distribution.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes an eigenvalue-based small-sample approximation to Markov Chain Monte Carlo (MCMC) simulation. It claims that this method produces an invariant steady-state distribution consistent with traditional MCMC, reduces the number of required paths from up to 1,000,000 to as few as 10 (depending on time horizon T), achieves comparable results under the Wasserstein distance metric, and yields significant variance reduction in the steady-state distribution.

Significance. If the central claims were supported by derivations and experiments, the approach would represent a substantial computational advance for econometric Monte Carlo applications involving long horizons or high-dimensional state spaces, where standard MCMC is often infeasible. The emphasis on distributional robustness via Wasserstein distance is a constructive choice.

Major comments (2)

- [Abstract] The claim that the eigenvalue-based approximation 'delivers an invariant steady-state distribution that is consistent with traditional Monte Carlo methods' is unsupported by any derivation, proof of invariance, or error analysis. This claim is load-bearing for the paper's central result.

- [Abstract] The assertion that only 10 paths suffice to recover a distributionally robust steady state (as measured by the Wasserstein distance) lacks validation experiments, comparison to full MCMC on known chains, or analysis of the rank-deficient empirical transition matrix. The skeptic's concern that eigendecomposition of a noisy, small-support matrix may yield an artifact rather than the true invariant measure is not addressed.
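One possible starting point for the error analysis requested in the first comment is the standard perturbation identity for finite irreducible Markov chains. The notation is an assumption made for illustration and does not come from the manuscript: $P$ is the true transition matrix with stationary distribution $\pi$, $\hat{P}$ is the small-sample estimate with stationary distribution $\hat{\pi}$, $E = \hat{P} - P$ is the estimation error, $\mathbf{1}$ is the all-ones vector, and $Z$ is the fundamental matrix.

$$
\begin{aligned}
Z &= (I - P + \mathbf{1}\pi^\top)^{-1}, \\
\hat{\pi}^\top - \pi^\top &= \hat{\pi}^\top E Z, \\
\|\hat{\pi} - \pi\|_1 &\le \|E\|_\infty \, \|Z\|_\infty .
\end{aligned}
$$

The identity follows from $\hat{\pi}^\top (I - P) = \hat{\pi}^\top E$ together with $(I - P) Z = I - \mathbf{1}\pi^\top$. It assumes that $\hat{P}$ is itself a stochastic matrix with a stationary distribution. With only 10 paths, some states may never be visited, so rows of the empirical matrix can be zero and the bound does not apply until those rows are handled, which is the rank-deficient case raised in the second comment.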

Simulated Author's Rebuttal

We thank the referee for their thoughtful review and constructive criticism. We address each major comment point by point below and outline the revisions we will make to strengthen the manuscript.

- Referee: [Abstract] The claim that the eigenvalue-based approximation 'delivers an invariant steady-state distribution that is consistent with traditional Monte Carlo methods' is unsupported by any derivation, proof of invariance, or error analysis. This claim is load-bearing for the paper's central result.

  Authors: We agree that a rigorous derivation is essential. The manuscript sketches the idea that the steady-state distribution is recovered as the principal eigenvector of the approximated transition matrix obtained from the eigenvalue decomposition. However, we did not provide a formal proof of invariance or error bounds. In the revised manuscript, we will add a new subsection deriving the invariance property and providing an error analysis using perturbation theory for Markov chains.

  Revision: yes

- Referee: [Abstract] The assertion that only 10 paths suffice to recover a distributionally robust steady state (as measured by the Wasserstein distance) lacks validation experiments, comparison to full MCMC on known chains, or analysis of the rank-deficient empirical transition matrix. The skeptic's concern that eigendecomposition of a noisy, small-support matrix may yield an artifact rather than the true invariant measure is not addressed.

  Authors: The current version includes preliminary numerical results, but we acknowledge the need for more comprehensive validation. We will augment the experiments with comparisons against full MCMC on benchmark chains, explicit handling and analysis of the rank-deficient case (including regularization methods such as adding a small uniform component), and direct assessment of whether the resulting distribution is an artifact. Additional figures will show Wasserstein distances and variance reductions across varying numbers of paths and time horizons.

  Revision: yes
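The regularization mentioned in the authors' response, adding a small uniform component, can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function names, the choice to replace empty rows with the uniform distribution, and the mixing weight `eps=0.05` are all assumptions.

```python
import numpy as np

def regularize(P_hat, eps=0.05):
    """Mix an empirical transition matrix with a uniform component (hypothetical helper).

    Rows with no observed transitions are first replaced by the uniform
    distribution, then every row is blended with weight eps toward uniform.
    """
    n = P_hat.shape[0]
    P = P_hat.astype(float).copy()
    empty = P.sum(axis=1) == 0
    P[empty] = 1.0 / n
    return (1.0 - eps) * P + eps / n

def stationary_from_eigen(P):
    """Left eigenvector of P for the eigenvalue closest to 1, scaled to sum to 1."""
    vals, vecs = np.linalg.eig(P.T)
    v = np.real(vecs[:, np.argmin(np.abs(vals - 1.0))])
    return v / v.sum()

# A rank-deficient estimate: state 1 is absorbing and state 2 was never visited.
P_hat = np.array([[0.5, 0.5, 0.0],
                  [0.0, 1.0, 0.0],
                  [0.0, 0.0, 0.0]])
P_reg = regularize(P_hat, eps=0.1)
pi_reg = stationary_from_eigen(P_reg)
print(pi_reg)
```

Because every entry of the regularized matrix is strictly positive, the eigenvalue 1 is simple and the stationary vector is unique and positive. The price is a bias toward the uniform distribution that grows with `eps`, so any reported Wasserstein distance would depend on that choice.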

Circularity Check

No circularity detected; claims rest on external consistency verification

The paper's abstract and claims describe an eigenvalue-based approximation to MCMC transition matrices that is asserted to produce an invariant distribution consistent with full Monte Carlo sampling, as measured by Wasserstein distance. No equations, fitted parameters, self-citations, or derivations are supplied that would reduce the claimed result to a definitional equivalence or to a parameter fit performed on the target quantity itself. The central assertion is framed as an empirical approximation whose validity is to be checked against independent full-path Monte Carlo benchmarks rather than being true by construction. This leaves the derivation chain self-contained against external benchmarks.

Author biography

Irene Aldridge is a Visiting Professor at Cornell University, ORIE, Financial Engineering. She is a recognized researcher in the applications of Data Science to Finance. Her research interests... and "Big Data Science in Finance" (with Marco Avellaneda, 2021). Her email address is irene.aldridge@gmail.com and her website is https://irenealdridge.com/
