Recognition: unknown

CCL-D: A High-Precision Diagnostic System for Slow and Hang Anomalies in Large-Scale Model Training

Pith reviewed 2026-05-08 17:36 UTC · model grok-4.3

The pith

CCL-D detects and locates slow or hang anomalies in large-scale model training by combining a lightweight real-time probe with an intelligent analyzer.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

CCL-D integrates a rank-level real-time probe that measures cross-layer anomaly metrics using a lightweight distributed tracing framework to monitor communication traffic with an intelligent decision analyzer that performs automated anomaly detection and root-cause location to precisely identify the faulty GPU rank. When deployed on a 4,000-GPU cluster over one year, the system achieved near-complete coverage of known slow and hang anomalies and pinpointed affected ranks within 6 minutes, substantially outperforming existing solutions.

What carries the argument

The rank-level real-time probe paired with the intelligent decision analyzer, which together track cross-layer metrics in real time and automate detection plus localization of slow or hang issues.

Load-bearing premise

The cross-layer metrics collected by the lightweight probe are sufficient for the analyzer to distinguish all slow and hang root causes from normal variation without missing novel anomalies or generating high false positives.

What would settle it

Running CCL-D on a comparable large cluster during a documented slow or hang anomaly and finding that it either misses the event, mislocates the faulty rank, or takes longer than six minutes to report the issue would challenge the central performance claims.

Figures

read the original abstract

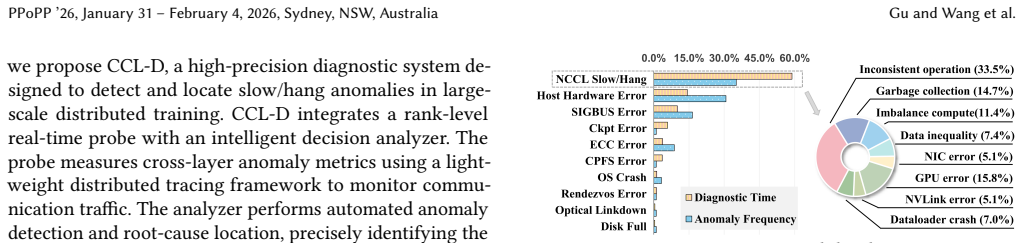

As training scales grow, collective communication libraries (CCL) increasingly face anomalies arising from complex interactions among hardware, software, and environmental factors. These anomalies typically manifest as slow/hang communication, the most frequent and time-consuming category to diagnose. However, traditional diagnostic methods remain inaccurate and inefficient, frequently requiring hours or even days for root cause analysis. To address this, we propose CCL-D, a high-precision diagnostic system designed to detect and locate slow/hang anomalies in large-scale distributed training. CCL-D integrates a rank-level real-time probe with an intelligent decision analyzer. The probe measures cross-layer anomaly metrics using a lightweight distributed tracing framework to monitor communication traffic. The analyzer performs automated anomaly detection and root-cause location, precisely identifying the faulty GPU rank. Deployed on a 4,000-GPU cluster over one year, CCL-D achieved near-complete coverage of known slow/hang anomalies and pinpointed affected ranks within 6 minutes-substantially outperforming existing solutions.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents CCL-D, a diagnostic system for slow/hang anomalies in collective communication libraries during large-scale model training. It combines a rank-level real-time probe that collects cross-layer anomaly metrics via a lightweight distributed tracing framework with an intelligent decision analyzer that performs automated detection and identifies the faulty GPU rank. The system was deployed on a 4,000-GPU cluster for one year and is reported to have achieved near-complete coverage of known anomalies while localizing affected ranks within 6 minutes, substantially outperforming existing solutions.

Significance. If the performance claims hold under rigorous evaluation, CCL-D would address a critical practical bottleneck in large-scale distributed training by reducing anomaly diagnosis time from hours or days to minutes. The year-long deployment on a production-scale cluster constitutes real-world evidence of utility in the cs.DC domain, though the absence of supporting metrics limits the ability to gauge its broader impact on training reliability and efficiency.

major comments (1)

- [Abstract] Abstract: The central claims of 'near-complete coverage of known slow/hang anomalies' and localization 'within 6 minutes' are asserted without any reported quantitative accuracy metrics, false-positive rates, baseline comparisons to existing diagnostic tools, or details on the decision logic inside the intelligent analyzer. These omissions are load-bearing because the soundness of the 'high-precision' and 'substantially outperforming' assertions rests entirely on the unverified deployment outcomes.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We have addressed the major comment point by point below, making revisions where feasible while being transparent about the constraints of our production deployment evaluation.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central claims of 'near-complete coverage of known slow/hang anomalies' and localization 'within 6 minutes' are asserted without any reported quantitative accuracy metrics, false-positive rates, baseline comparisons to existing diagnostic tools, or details on the decision logic inside the intelligent analyzer. These omissions are load-bearing because the soundness of the 'high-precision' and 'substantially outperforming' assertions rests entirely on the unverified deployment outcomes.

Authors: We agree that the original abstract presented the deployment outcomes in summary form without sufficient quantitative backing or methodological detail, which weakens the verifiability of the claims. The figures derive from post-hoc analysis of all slow/hang incidents logged over the year-long run on the 4,000-GPU cluster, where CCL-D identified every anomaly that was later confirmed by operators. In the revision we will expand the abstract to include concrete supporting numbers (e.g., total incidents processed, mean and 95th-percentile localization latency, and a one-sentence outline of the analyzer's hybrid rule-plus-model decision procedure). We have also inserted a short limitations paragraph noting that, because the system ran in live production without parallel execution of alternative tools or exhaustive ground-truth labeling, formal false-positive rates and head-to-head baselines are not available from this deployment. These points are now explicitly stated rather than left implicit. revision: partial

- We cannot supply baseline comparisons or false-positive rates because the evaluation occurred in an uninterrupted production environment; running competing diagnostic systems in parallel or obtaining independent labels for every event would have required halting training jobs, which was not feasible.

Circularity Check

No significant circularity; engineering system with empirical deployment support

full rationale

The paper presents CCL-D as an engineering diagnostic system combining a lightweight probe for cross-layer metrics with an intelligent analyzer for anomaly detection and root-cause localization. Its central claims rest on a year-long deployment across a 4,000-GPU cluster that reports near-complete coverage of known slow/hang cases and 6-minute rank localization. No mathematical derivations, equations, parameter fittings, predictions from first principles, or self-citation chains appear in the provided text. The evaluation is purely empirical and externally falsifiable via the reported deployment outcomes, with no reduction of results to inputs by construction. This is the expected non-finding for a systems paper without theoretical modeling.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Lightweight distributed tracing can capture cross-layer communication metrics with negligible overhead in large clusters.

- domain assumption Cross-layer anomaly metrics are sufficient for automated detection and precise root-cause localization of slow/hang events.

invented entities (1)

-

CCL-D diagnostic system

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Palwisha Akhtar, Erhan Tezcan, Fareed Mohammad Qararyah, and Didem Unat. 2020. ComScribe: identifying intra-node GPU communi- cation. InInternational Symposium on Benchmarking, Measuring and Optimization. Springer, 157–174

2020

-

[2]

AMD. 2025. RCCL: ROCm Communication Collectives Library.https: //github.com/ROCm/rccl. Accessed August 25, 2025

2025

-

[3]

BigScience. 2025. BLOOM 176B Training Log.https://github. com/bigscience-workshop/bigscience/blob/master/train/tr11-176B- ml/chronicles.md. Accessed August 25, 2025

2025

-

[4]

Sanghun Cho, Hyojun Son, and John Kim. 2023. Logical/physical topology-aware collective communication in deep learning training. In2023 IEEE International Symposium on High-Performance Computer Architecture (HPCA). IEEE, 56–68

2023

-

[5]

Jack Choquette, Wishwesh Gandhi, Olivier Giroux, Nick Stam, and Ronny Krashinsky. 2021. NVIDIA a100 tensor core gpu: Performance and innovation.IEEE Micro41, 2 (2021), 29–35

2021

-

[6]

James C Corbett, Jeffrey Dean, Michael Epstein, Andrew Fikes, Christo- pher Frost, Jeffrey John Furman, Sanjay Ghemawat, Andrey Gubarev, Christopher Heiser, Peter Hochschild, et al. 2013. Spanner: Google’s globally distributed database.ACM Transactions on Computer Systems (TOCS)31, 3 (2013), 1–22

2013

-

[7]

Can Cui, Yunsheng Ma, Xu Cao, Wenqian Ye, Yang Zhou, Kaizhao Liang, Jintai Chen, Juanwu Lu, Zichong Yang, Kuei-Da Liao, et al. 2024. A survey on multimodal large language models for autonomous driv- ing. InProceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision. 958–979

2024

- [8]

-

[9]

Huangliang Dai, Shixun Wu, Jiajun Huang, Zizhe Jian, Yue Zhu, Haiyang Hu, and Zizhong Chen. 2025. FT-Transformer: Resilient and reliable transformer with end-to-end fault tolerant attention. In Proceedings of the International Conference for High Performance Com- puting, Networking, Storage and Analysis. 1085–1098

2025

-

[10]

Yangtao Deng, Xiang Shi, Zhuo Jiang, Xingjian Zhang, Lei Zhang, Zhang Zhang, Bo Li, Zuquan Song, Hang Zhu, Gaohong Liu, et al

-

[11]

In22nd USENIX Symposium on Networked Systems Design and Implementation (NSDI 25)

Minder: Faulty machine detection for large-scale distributed model training. In22nd USENIX Symposium on Networked Systems Design and Implementation (NSDI 25). 505–521

-

[12]

Jianbo Dong, Bin Luo, Jun Zhang, Pengcheng Zhang, Fei Feng, Yikai Zhu, Ang Liu, Zian Chen, Yi Shi, Hairong Jiao, et al. 2025. Enhancing Large-Scale AI Training Efficiency: The C4 Solution for Real-Time Anomaly Detection and Communication Optimization. In2025 IEEE International Symposium on High Performance Computer Architecture (HPCA). IEEE, 1246–1258

2025

-

[13]

Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Ka- dian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. 2024. The llama 3 herd of models.arXiv preprint arXiv:2407.21783(2024)

work page internal anchor Pith review arXiv 2024

-

[14]

Assaf Eisenman, Kiran Kumar Matam, Steven Ingram, Dheevatsa Mudigere, Raghuraman Krishnamoorthi, Krishnakumar Nair, Misha Smelyanskiy, and Murali Annavaram. 2022. Check-N-Run: A check- pointing system for training deep learning recommendation models. In19th USENIX Symposium on Networked Systems Design and Imple- mentation (NSDI 22). 929–943

2022

-

[15]

Yanjie Gao, Jiyu Luo, Haoxiang Lin, Hongyu Zhang, Ming Wu, and Mao Yang. 2025. dl 2: Detecting Communication Deadlocks in Deep Learning Jobs. InProceedings of the 33rd ACM International Conference on the Foundations of Software Engineering. 27–38

2025

-

[16]

Jiayi Huang, Pritam Majumder, Sungkeun Kim, Abdullah Muzahid, Ki Hwan Yum, and Eun Jung Kim. 2021. Communication algorithm- architecture co-design for distributed deep learning. In2021 ACM/IEEE 48th Annual International Symposium on Computer Architecture (ISCA). IEEE, 181–194

2021

-

[17]

Yanping Huang, Youlong Cheng, Ankur Bapna, Orhan Firat, Dehao Chen, Mia Chen, HyoukJoong Lee, Jiquan Ngiam, Quoc V Le, Yonghui Wu, et al. 2019. Gpipe: Efficient training of giant neural networks using pipeline parallelism.Advances in neural information processing systems32 (2019)

2019

-

[18]

Myeongjae Jeon, Shivaram Venkataraman, Amar Phanishayee, Jun- jie Qian, Wencong Xiao, and Fan Yang. 2019. Analysis of Large- Scale Multi-Tenant GPU clusters for DNN training workloads. In2019 USENIX Annual Technical Conference (USENIX ATC 19). 947–960

2019

-

[19]

Yimin Jiang, Yibo Zhu, Chang Lan, Bairen Yi, Yong Cui, and Chuanx- iong Guo. 2020. A unified architecture for accelerating distributed DNN training in heterogeneous GPU/CPU clusters. In14th USENIX Symposium on Operating Systems Design and Implementation (OSDI 20). 463–479

2020

-

[20]

Ziheng Jiang, Haibin Lin, Yinmin Zhong, Qi Huang, Yangrui Chen, Zhi Zhang, Yanghua Peng, Xiang Li, Cong Xie, Shibiao Nong, et al

-

[21]

In21st USENIX Symposium on Networked Systems Design and Implementation (NSDI 24)

MegaScale: Scaling large language model training to more than 10,000 GPUs. In21st USENIX Symposium on Networked Systems Design and Implementation (NSDI 24). 745–760

-

[22]

Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B Brown, Ben- jamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu, and Dario Amodei. 2020. Scaling laws for neural language models.arXiv preprint arXiv:2001.08361(2020)

work page internal anchor Pith review arXiv 2020

-

[23]

Jacob Devlin Ming-Wei Chang Kenton and Lee Kristina Toutanova

-

[24]

InProceedings of naacL-HLT, Vol

Bert: Pre-training of deep bidirectional transformers for lan- guage understanding. InProceedings of naacL-HLT, Vol. 1. Minneapolis, Minnesota

-

[25]

Hongbo Li, Zizhong Chen, and Rajiv Gupta. 2017. Parastack: Efficient hang detection for mpi programs at large scale. InProceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis. 1–12

2017

- [26]

-

[27]

Wenshuo Li, Xinghao Chen, Han Shu, Yehui Tang, and Yunhe Wang

-

[28]

CCL-D PPoPP ’26, January 31 – February 4, 2026, Sydney, NSW, Australia

ExCP: Extreme LLM Checkpoint Compression via Weight- Momentum Joint Shrinking.arXiv preprint arXiv:2406.11257(2024). CCL-D PPoPP ’26, January 31 – February 4, 2026, Sydney, NSW, Australia

-

[29]

Yan-Bo Lin, Yi-Lin Sung, Jie Lei, Mohit Bansal, and Gedas Bertasius

-

[30]

InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition

Vision transformers are parameter-efficient audio-visual learners. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2299–2309

-

[31]

Kefei Liu, Zhuo Jiang, Jiao Zhang, Haoran Wei, Xiaolong Zhong, Lizhuang Tan, Tian Pan, and Tao Huang. 2023. Hostping: Diagnos- ing intra-host network bottlenecks in RDMA servers. In20th USENIX Symposium on Networked Systems Design and Implementation (NSDI 23). 15–29

2023

-

[32]

Wei Liu, Kun Qian, Zhenhua Li, Tianyin Xu, Yunhao Liu, Weicheng Wang, Yun Zhang, Jiakang Li, Shuhong Zhu, Xue Li, et al. 2025. Skele- tonHunter: Diagnosing and Localizing Network Failures in Container- ized Large Model Training. InProceedings of the ACM SIGCOMM 2025 Conference. 527–540

2025

-

[33]

Keith Marzullo and Susan Owicki. 1983. Maintaining the time in a distributed system. InProceedings of the second annual ACM symposium on Principles of distributed computing. 295–305

1983

-

[34]

Avinash Maurya, Robert Underwood, M Mustafa Rafique, Franck Cap- pello, and Bogdan Nicolae. 2024. Datastates-llm: Lazy asynchronous checkpointing for large language models. InProceedings of the 33rd International Symposium on High-Performance Parallel and Distributed Computing. 227–239

2024

-

[35]

Meta. 2025. Dynolog : a telemetry daemon for performance monitoring and tracing.https://github.com/facebookincubator/dynolog. Accessed August 25, 2025

2025

-

[36]

Meta. 2025. OPT 175B Training Log.https://github.com/ facebookresearch/metaseq/blob/main/projects/OPT/chronicles/ OPT175B_Logbook.pdf. Accessed August 25, 2025

2025

-

[37]

Deepak Narayanan, Aaron Harlap, Amar Phanishayee, Vivek Seshadri, Nikhil R Devanur, Gregory R Ganger, Phillip B Gibbons, and Matei Zaharia. 2019. PipeDream: Generalized pipeline parallelism for DNN training. InProceedings of the 27th ACM symposium on operating sys- tems principles. 1–15

2019

- [38]

-

[39]

NVIDIA. 2025. Collective Communication Protocol.https://docs. nvidia.com/deeplearning/nccl/user-guide/docs/env.html. Accessed August 25, 2025

2025

-

[40]

NVIDIA. 2025. NCCL RAS.https://docs.nvidia.com/deeplearning/nccl/ user-guide/docs/troubleshooting/ras.html. Accessed August 25, 2025

2025

-

[41]

NVIDIA. 2025. Nccl-tests.https://github.com/NVIDIA/nccl-tests. Ac- cessed August 25, 2025

2025

-

[42]

NVIDIA. 2025. NVIDIA Nsight Compute.https://docs.nvidia.com/ nsight-compute/NsightCompute/index.html. Accessed August 25, 2025

2025

-

[43]

Guilherme Penedo, Hynek Kydlíček, Anton Lozhkov, Margaret Mitchell, Colin Raffel, Leandro Von Werra, Thomas Wolf, et al. 2024. The fineweb datasets: Decanting the web for the finest text data at scale.arXiv preprint arXiv:2406.17557(2024)

work page internal anchor Pith review arXiv 2024

-

[44]

Sreeram Potluri, Khaled Hamidouche, Akshay Venkatesh, Devendar Bureddy, and Dhabaleswar K Panda. 2013. Efficient inter-node MPI communication using GPUDirect RDMA for InfiniBand clusters with NVIDIA GPUs. In2013 42nd International Conference on Parallel Pro- cessing. IEEE, 80–89

2013

-

[45]

Samyam Rajbhandari, Jeff Rasley, Olatunji Ruwase, and Yuxiong He

-

[46]

InSC20: International Conference for High Performance Computing, Networking, Storage and Analysis

Zero: Memory optimizations toward training trillion param- eter models. InSC20: International Conference for High Performance Computing, Networking, Storage and Analysis. IEEE, 1–16

-

[47]

Mohammad Shoeybi, Mostofa Patwary, Raul Puri, Patrick LeGresley, Jared Casper, and Bryan Catanzaro. 2019. Megatron-lm: Training multi- billion parameter language models using model parallelism.arXiv preprint arXiv:1909.08053(2019)

work page internal anchor Pith review arXiv 2019

-

[48]

Cheng Tan, Ze Jin, Chuanxiong Guo, Tianrong Zhang, Haitao Wu, Karl Deng, Dongming Bi, and Dong Xiang. 2019. NetBouncer: Active device and link failure localization in data center networks. In16th USENIX Symposium on Networked Systems Design and Implementation (NSDI 19). 599–614

2019

-

[49]

Hashimoto

Rohan Taori, Ishaan Gulrajani, Tianyi Zhang, Yann Dubois, Xuechen Li, Carlos Guestrin, Percy Liang, and Tatsunori B. Hashimoto. 2023. Stanford Alpaca: An Instruction-following LLaMA model.https:// github.com/tatsu-lab/stanford_alpaca

2023

-

[50]

DLRover Team. 2025. DLRover.https://github.com/intelligent- machine-learning/dlrover. Accessed August 25, 2025

2025

- [51]

-

[52]

Torch Team. 2025. Pytorch Watchdog.https://pytorch.org/docs/stable/ torch_nccl_environment_variables.html. Accessed August 25, 2025

2025

-

[53]

Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Alma- hairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. 2023. Llama 2: Open foundation and fine-tuned chat models.arXiv preprint arXiv:2307.09288(2023)

work page internal anchor Pith review arXiv 2023

-

[54]

Didem Unat. 2022. Monitoring Collective Communication Among GPUs. InEuro-Par 2021: Parallel Processing Workshops: Euro-Par 2021 International Workshops, Lisbon, Portugal, August 30-31, 2021, Revised Selected Papers, Vol. 13098. Springer Nature, 41

2022

-

[55]

A Vaswani. 2017. Attention is all you need.Advances in Neural Information Processing Systems(2017)

2017

-

[56]

Boxiang Wang, Qifan Xu, Zhengda Bian, and Yang You. 2022. Tesseract: Parallelize the tensor parallelism efficiently. InProceedings of the 51st International Conference on Parallel Processing. 1–11

2022

- [57]

-

[58]

Zhuang Wang, Zhen Jia, Shuai Zheng, Zhen Zhang, Xinwei Fu, TS Eu- gene Ng, and Yida Wang. 2023. Gemini: Fast failure recovery in dis- tributed training with in-memory checkpoints. InProceedings of the 29th Symposium on Operating Systems Principles. 364–381

2023

-

[59]

Tianyuan Wu, Wei Wang, Yinghao Yu, Siran Yang, Wenchao Wu, Qinkai Duan, Guodong Yang, Jiamang Wang, Lin Qu, and Liping Zhang

-

[60]

In2025 USENIX Annual Technical Conference (USENIX ATC 25)

GREYHOUND: Hunting Fail-Slows in Hybrid-Parallel Training at Scale. In2025 USENIX Annual Technical Conference (USENIX ATC 25). 731–747

-

[61]

Yifan Xiong, Yuting Jiang, Ziyue Yang, Lei Qu, Guoshuai Zhao, Shuguang Liu, Dong Zhong, Boris Pinzur, Jie Zhang, Yang Wang, et al. 2024. SuperBench: Improving Cloud AI Infrastructure Reliability with Proactive Validation. In2024 USENIX Annual Technical Conference (USENIX ATC 24). 835–850

2024

-

[62]

Yiwen Zhang, Yue Tan, Brent Stephens, and Mosharaf Chowdhury

-

[63]

In19th USENIX Symposium on Networked Systems Design and Implementation (NSDI 22)

Justitia: Software Multi-Tenancy in Hardware Kernel-Bypass Networks. In19th USENIX Symposium on Networked Systems Design and Implementation (NSDI 22). 1307–1326

-

[64]

Hairui Zhao, Hongliang Li, Qi Tian, Jie Wu, Meng Zhang, Zhewen Xu, Xiang Li, and Haixiao Xu. 2025. ArrayPipe: Introducing Job-Array Pipeline Parallelism for High Throughput Model Exploration. InIEEE INFOCOM 2025-IEEE Conference on Computer Communications. IEEE, 1–10

2025

-

[65]

Hairui Zhao, Qi Tian, Hongliang Li, and Zizhong Chen. 2025. {FlexPipe}: Maximizing training efficiency for transformer-based mod- els with {Variable-Length} inputs. In2025 USENIX Annual Technical Conference (USENIX ATC 25). 143–159

2025

-

[66]

Jingyuan Zhao, Wenyi Zhao, Bo Deng, Zhenghong Wang, Feng Zhang, Wenxiang Zheng, Wanke Cao, Jinrui Nan, Yubo Lian, and Andrew F Burke. 2024. Autonomous driving system: A comprehensive survey. PPoPP ’26, January 31 – February 4, 2026, Sydney, NSW, Australia Gu and Wang et al. Expert Systems with Applications242 (2024), 122836

2024

-

[67]

Yanli Zhao, Andrew Gu, Rohan Varma, Liang Luo, Chien-Chin Huang, Min Xu, Less Wright, Hamid Shojanazeri, Myle Ott, Sam Shleifer, et al

-

[68]

PyTorch FSDP: Experiences on Scaling Fully Sharded Data Parallel

Pytorch fsdp: experiences on scaling fully sharded data parallel. arXiv preprint arXiv:2304.11277(2023). Received 2025-08-23; accepted 2025-11-10

work page internal anchor Pith review arXiv 2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.