Recognition: unknown

Gyan: An Explainable Neuro-Symbolic Language Model

Pith reviewed 2026-05-08 16:14 UTC · model grok-4.3

The pith

Gyan builds explainable language models by separating knowledge representation from text processing using linguistic theories.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

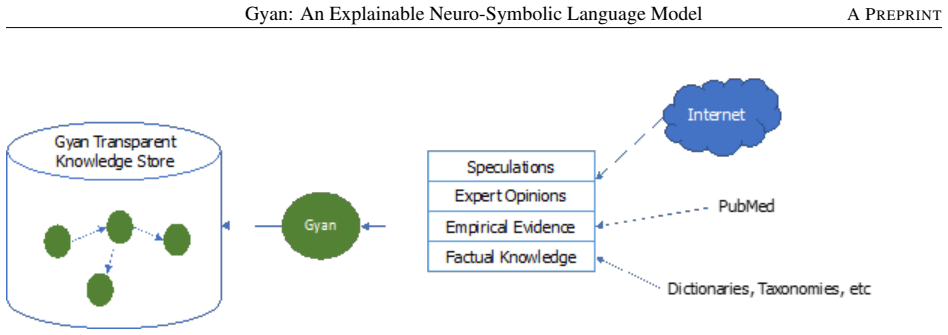

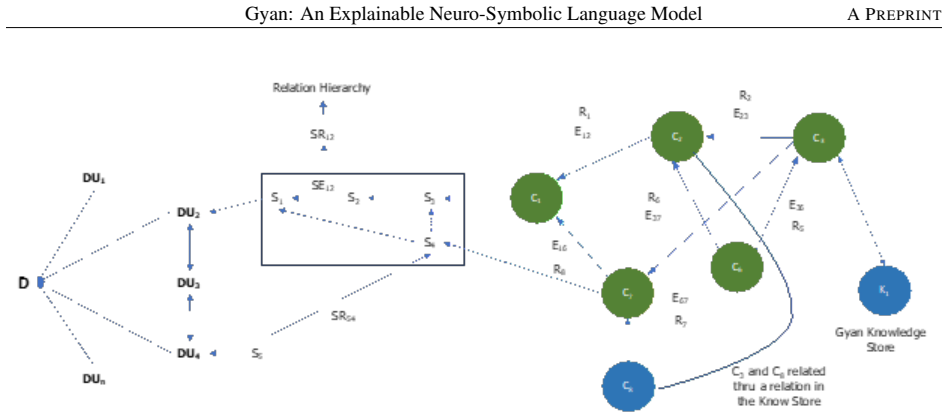

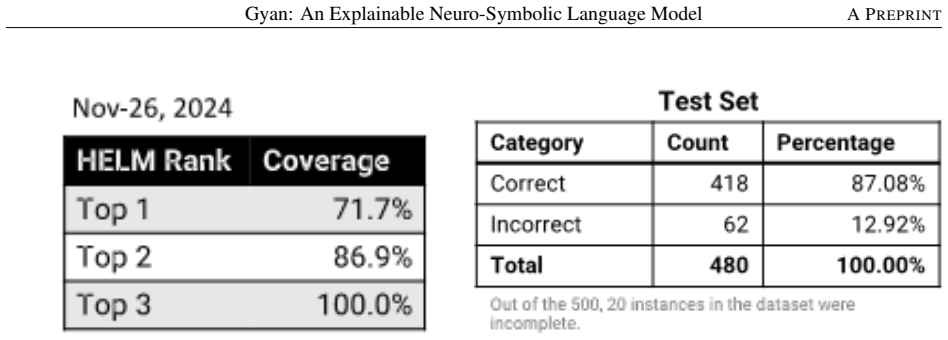

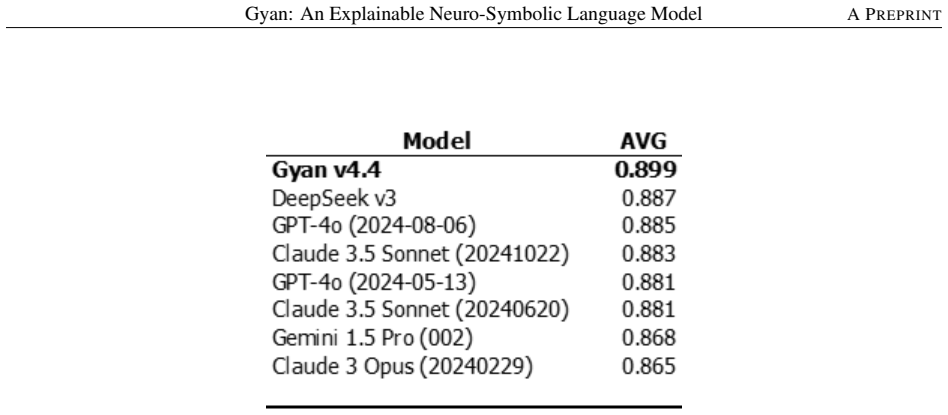

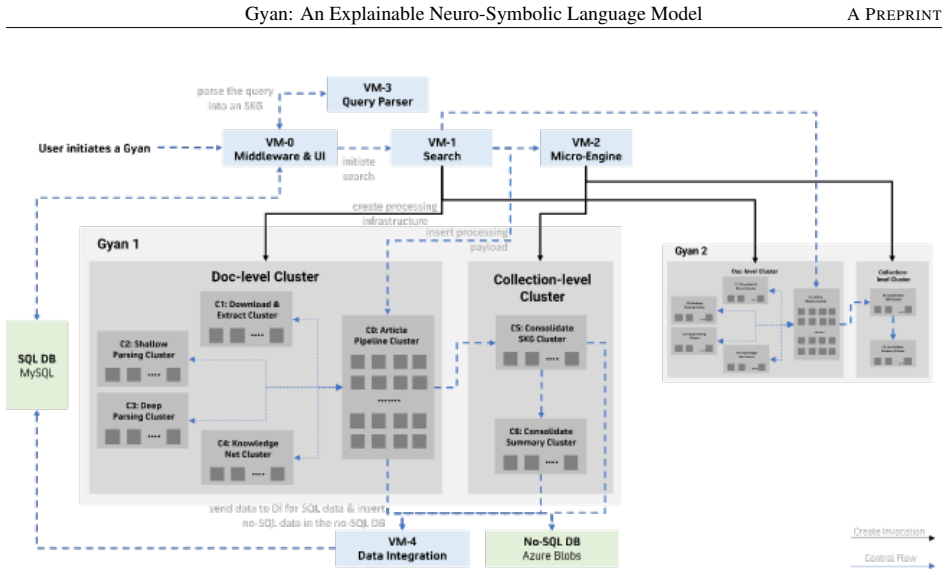

Gyan is an explainable neuro-symbolic language model based on a novel non-transformer architecture. The model draws on rhetorical structure theory, semantic role theory and knowledge-based computational linguistics. Its meaning representation structure captures the complete compositional context and attempts to mimic humans by expanding the context to a world model. The architecture decouples the language model from knowledge acquisition and representation, achieving state-of-the-art performance on three widely cited datasets and superior performance on two proprietary datasets without hallucinations or the need for enormous compute resources.

What carries the argument

The meaning representation structure that combines rhetorical structure theory, semantic role theory, and knowledge-based computational linguistics to build a decoupled world model for language understanding.

If this is right

- Language models become interpretable and maintainable because knowledge is stored separately from the processing rules.

- Hallucinations are eliminated by construction rather than mitigated after training.

- Training and inference require orders of magnitude less compute than current large transformer systems.

- The same architecture can be applied to mission-critical domains where trust and transparency are required.

- Performance on the reported datasets matches or exceeds transformer baselines without scale.

Where Pith is reading between the lines

- The explicit world model could be inspected or edited by domain experts to correct errors without retraining the entire system.

- The approach might extend naturally to multilingual or low-resource settings where symbolic knowledge can be added directly.

- Hybrid systems could combine Gyan-style symbolic layers with transformer components for tasks that need both precision and pattern recognition.

- If the knowledge base grows over time, the model could support incremental learning without catastrophic forgetting.

Load-bearing premise

The theories and knowledge structures used actually encode enough context to replace the implicit world model that transformers learn from data.

What would settle it

If the model produces an incorrect or incomplete answer on a new test case that requires world knowledge absent from its explicit knowledge base, while a transformer succeeds, the claim of complete context capture would be falsified.

Figures

read the original abstract

Transformer based pre-trained large language models have become ubiquitous. There is increasing evidence to suggest that even with large scale pre-training, these models do not capture complete compositional context and certainly not, the full human analogous context. Besides, by the very nature of the architecture, these models hallucinate, are difficult to maintain, are not easily interpretable and require enormous compute resources for training and inference. Here, we describe Gyan, an explainable language model based on a novel non-transformer architecture, without any of these limitations. Gyan achieves SOTA performance on 3 widely cited data sets and superior performance on two proprietary data sets. The novel architecture decouples the language model from knowledge acquisition and representation. The model draws on rhetorical structure theory, semantic role theory and knowledge-based computational linguistics. Gyan's meaning representation structure captures the complete compositional context and attempts to mimic humans by expanding the context to a 'world model'. AI model adoption critically depends on trust and transparency especially in mission critical use cases. Collectively, our results demonstrate that it is possible to create models which are trustable and reliable for mission critical tasks. We believe our work has tremendous potential for guiding the development of transparent and trusted architectures for language models.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces Gyan, an explainable neuro-symbolic language model using a novel non-transformer architecture inspired by rhetorical structure theory, semantic role theory, and knowledge-based computational linguistics. It claims this architecture decouples language modeling from knowledge acquisition and representation, captures complete compositional context via an expanded 'world model', eliminates hallucinations and other transformer limitations such as high compute needs and poor interpretability, and delivers SOTA results on three widely cited datasets plus superior performance on two proprietary datasets, enabling trustworthy models for mission-critical tasks.

Significance. If the performance and architectural claims hold with supporting evidence, the work would be significant as a concrete neuro-symbolic alternative to transformers that prioritizes transparency and reliability, potentially influencing development of trusted AI systems in high-stakes domains.

major comments (3)

- [Abstract] Abstract: The central claim of SOTA performance on 3 widely cited datasets and superior results on 2 proprietary datasets is presented without any metrics, baselines, dataset names, error bars, or statistical tests, making the empirical contribution impossible to assess or reproduce.

- [Abstract] Abstract: The assertion that the architecture 'captures the complete compositional context' and mimics humans via a 'world model' is stated without formal definitions, equations, pseudocode, or quantitative measures of context completeness or hallucination reduction, leaving the mechanism for these properties unspecified and unverified.

- [Abstract] Abstract: No experimental details are supplied on training procedures, evaluation protocols, ablation studies, or comparisons to prior models, which are required to substantiate that the non-transformer design (rather than unstated fitting choices) produces the claimed gains over transformers.

Simulated Author's Rebuttal

We are grateful to the referee for their thorough review and valuable suggestions. We address each major comment point by point below. We plan to make revisions to the abstract as outlined to improve the clarity and substantiation of our claims.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central claim of SOTA performance on 3 widely cited datasets and superior results on 2 proprietary datasets is presented without any metrics, baselines, dataset names, error bars, or statistical tests, making the empirical contribution impossible to assess or reproduce.

Authors: We thank the referee for highlighting this issue with the abstract. While the full manuscript includes detailed performance metrics, baselines, dataset names, error bars, and statistical tests in the experimental section, the abstract was kept concise. In the revised version, we will incorporate key quantitative results and dataset references into the abstract to allow better assessment of the claims. This addresses the concern without altering the core contribution. revision: yes

-

Referee: [Abstract] Abstract: The assertion that the architecture 'captures the complete compositional context' and mimics humans via a 'world model' is stated without formal definitions, equations, pseudocode, or quantitative measures of context completeness or hallucination reduction, leaving the mechanism for these properties unspecified and unverified.

Authors: The abstract provides a high-level overview of the architecture's properties. The full paper includes formal definitions, equations, and pseudocode describing the meaning representation structure and the 'world model' in the dedicated sections on the model architecture. Quantitative measures and comparisons regarding context completeness and hallucination reduction are presented in the results and analysis. We will revise the abstract to include a brief mention of the key formal elements or a reference to the relevant equations to better specify these mechanisms. revision: yes

-

Referee: [Abstract] Abstract: No experimental details are supplied on training procedures, evaluation protocols, ablation studies, or comparisons to prior models, which are required to substantiate that the non-transformer design (rather than unstated fitting choices) produces the claimed gains over transformers.

Authors: We agree that the abstract does not detail the experimental setup. The manuscript provides comprehensive information on training procedures, evaluation protocols, ablation studies, and comparisons to prior models in the Experiments and Results sections. To address this, we will update the abstract with high-level information on the training and evaluation approaches. This will help substantiate that the architectural design is responsible for the performance gains. revision: yes

Circularity Check

No derivation chain or equations presented; no circularity possible

full rationale

The provided abstract and description contain no equations, derivations, fitted parameters, predictions, or self-citations that could form a load-bearing chain. Claims about SOTA performance, decoupling of language model from knowledge, and drawing on RST/semantic role theory are stated as design features and empirical outcomes without any mathematical reduction or first-principles derivation that could be checked for equivalence to inputs by construction. No self-definitional loops, fitted inputs renamed as predictions, or uniqueness theorems are invoked. The paper is therefore self-contained in its high-level presentation, with no circular steps to flag.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Rhetorical structure theory and semantic role theory together with knowledge-based computational linguistics suffice to capture complete compositional context and a human-like world model.

invented entities (1)

-

World model

no independent evidence

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.