High-Dimensional Statistics: Reflections on Progress and Open Problems

Pith reviewed 2026-05-08 15:30 UTC · model grok-4.3

The pith

High-dimensional statistics has evolved to tackle sophisticated problems in complex datasets by building connections across multiple mathematical and computational fields.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

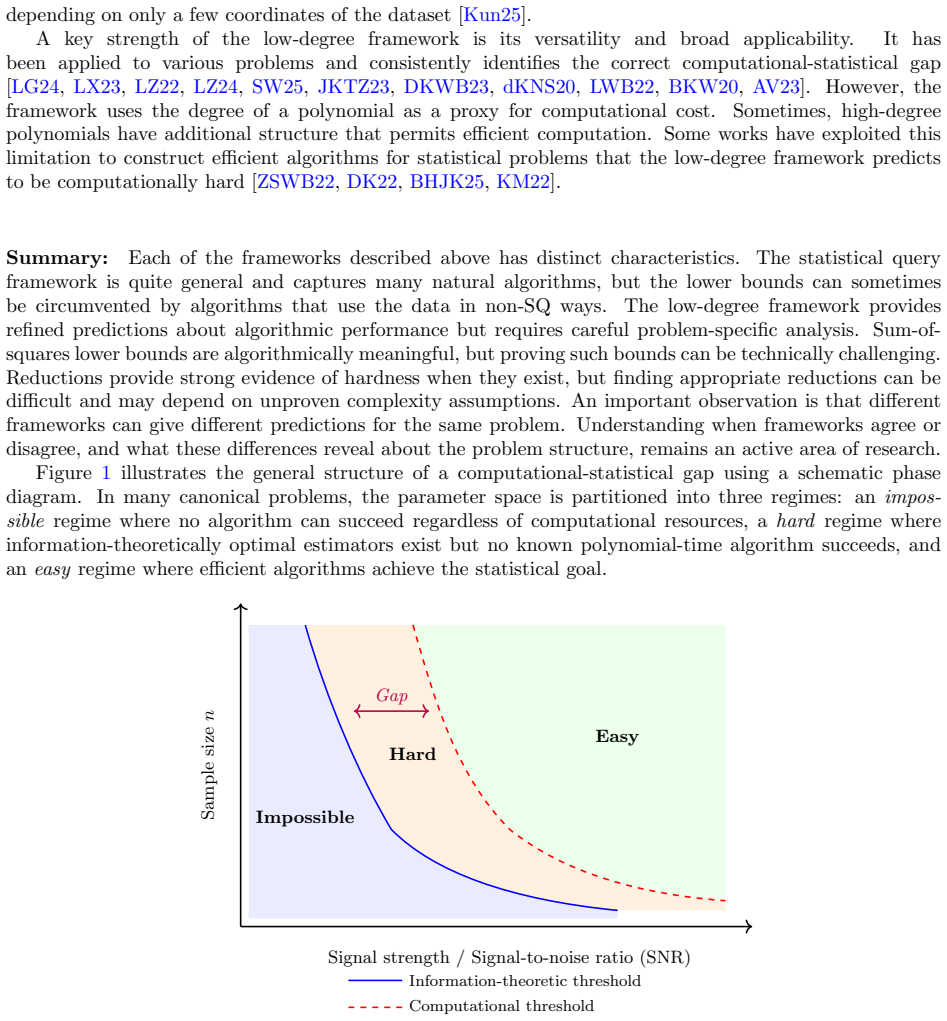

Over the past two decades, the field of high-dimensional statistics has experienced substantial progress, driven largely by technological advances that have dramatically reduced the cost and effort for data collection and storage across a broad range of domains. Modern datasets are increasingly complex, often exhibiting rich dependency, heterogeneity, and other features that challenge traditional statistical methods. In response, high-dimensional statistics has evolved to address more sophisticated estimation and inference problems, fostering deep connections with optimization, concentration of measure, random matrix theory, information theory, and theoretical computer science.

What carries the argument

The synthesis of representative advances, common themes, and open problems that serve as entry points into high-dimensional statistics.

If this is right

- The field's connections to other areas will continue to produce new tools for data analysis.

- The open problems it identifies will direct research toward handling data dependency and heterogeneity.

- The entry points it provides will help new researchers engage with the literature efficiently.

- Practical applications in medicine and astronomy will benefit from refined estimation methods.

Where Pith is reading between the lines

- The review implies that ignoring these interdisciplinary links could slow progress in statistical methodology.

- Future work might test whether addressing the open problems leads to measurable improvements in prediction accuracy on real datasets.

- Connections to theoretical computer science could influence algorithm design for large-scale data processing.

Load-bearing premise

The chosen representative advances and open problems accurately reflect the field's key developments without significant omissions.

What would settle it

A systematic survey that turns up a major advance or open problem missing from the paper would undercut its claim to be representative of high-dimensional statistics.

Original abstract

Over the past two decades, the field of high-dimensional statistics has experienced substantial progress, driven largely by technological advances that have dramatically reduced the cost and effort for data collection and storage across a broad range of domains, including biology, medicine, astronomy, and the social and environmental sciences. Modern datasets are increasingly complex, often exhibiting rich dependency, heterogeneity, and other features that challenge traditional statistical methods. In response, high-dimensional statistics has evolved to address more sophisticated estimation and inference problems. This evolution has, in turn, fostered deep connections with and contributions to a wide range of research areas, including optimization, concentration of measure, random matrix theory, information theory, and theoretical computer science. Given the rapid pace of recent developments in high-dimensional statistics, our goal is to synthesize representative advances, highlight common themes and open problems, and point to important works that offer entry points into the field.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript is a reflective survey on high-dimensional statistics over the past two decades. It claims that technological advances enabling large-scale data collection have produced complex datasets with dependencies and heterogeneity, driving the field to develop more sophisticated estimation and inference techniques. These developments have created interdisciplinary links with optimization, concentration of measure, random matrix theory, information theory, and theoretical computer science. The paper synthesizes representative advances, identifies common themes and open problems, and provides pointers to key literature as entry points, while explicitly framing the selection as non-exhaustive.

Significance. If the synthesis is balanced, the paper offers a useful high-level overview and set of entry points for a rapidly evolving field. Its explicit acknowledgment of non-exhaustiveness and focus on interdisciplinary connections could help orient new researchers and highlight cross-field opportunities. The survey format itself is a strength when it successfully points readers to primary sources rather than attempting exhaustive coverage.

Minor comments (2)

- [Abstract] The abstract and introduction would benefit from a brief explicit statement of the manuscript's intended audience (e.g., researchers new to the area versus specialists) to help readers calibrate expectations for depth versus breadth.

- [Introduction] Section headings and transitions between thematic blocks could be strengthened with short forward-looking sentences that preview how each advance connects to the open problems listed later.

Simulated Author's Rebuttal

We thank the referee for the positive summary, significance assessment, and recommendation of minor revision. The manuscript is framed as a non-exhaustive synthesis of representative advances, common themes, open problems, and interdisciplinary connections in high-dimensional statistics, with pointers to key entry-point works.

Circularity Check

No significant circularity in this reflective review

This paper is a high-level synthesis and reflection on progress in high-dimensional statistics. It explicitly frames its goal as summarizing representative advances from the literature, highlighting themes and open problems, and directing readers to external entry-point works. No original derivations, theorems, predictions, fitted parameters, or equations are presented that could reduce to the paper's own inputs by construction. Central claims are descriptive and non-exhaustive, with no self-citation chains serving as load-bearing justifications for any technical result. The structure relies on external references rather than internal self-reference, satisfying the criteria for a self-contained review with no circularity.

