Recognition: unknown

Adaptive Selection of LoRA Components in Privacy-Preserving Federated Learning

Pith reviewed 2026-05-08 14:46 UTC · model grok-4.3

The pith

AS-LoRA lets each layer and round pick which LoRA factor to update, eliminating the permanent reconstruction error that fixed schedules leave in private federated training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

AS-LoRA is defined by layer-wise freedom for component selection, round-wise adaptivity of those selections, and a curvature-aware score from second-order loss approximation. It eliminates the reconstruction-error floor of layer-tied schedules, accelerates convergence, implicitly biases solutions toward flatter minima, and incurs no additional privacy cost.

What carries the argument

The curvature-aware score from a second-order approximation of the loss, which decides for each layer and round whether to activate the A or B LoRA matrix.

If this is right

- Models achieve up to 7.5 percentage points higher accuracy on GLUE benchmarks and 12.5 on MNLI-mm under tight DP budgets.

- Convergence is faster than layer-tied or fixed-schedule methods.

- Aggregation cost is 33 to 180 times lower than SVD-based alternatives while matching or exceeding their performance.

- Flatter minima are reached without extra privacy leakage or communication overhead.

Where Pith is reading between the lines

- The same selection rule might improve non-private federated LoRA by reducing aggregation errors even without DP noise.

- Curvature-based selection could be tested in other low-rank adaptation techniques beyond LoRA to see if the error floor is general.

- Under extreme non-IID conditions, the adaptive choice might need damping to avoid over-reacting to noisy curvature estimates.

Load-bearing premise

The curvature-aware score reliably identifies components that reduce aggregation error without creating new instabilities or selection biases when differential privacy noise and non-IID data distributions are present.

What would settle it

Train a small model with LoRA under DP noise using both fixed layer-tied selection and the adaptive curvature score; if the adaptive version still exhibits a non-zero reconstruction error floor or diverges, the elimination claim does not hold.

Figures

read the original abstract

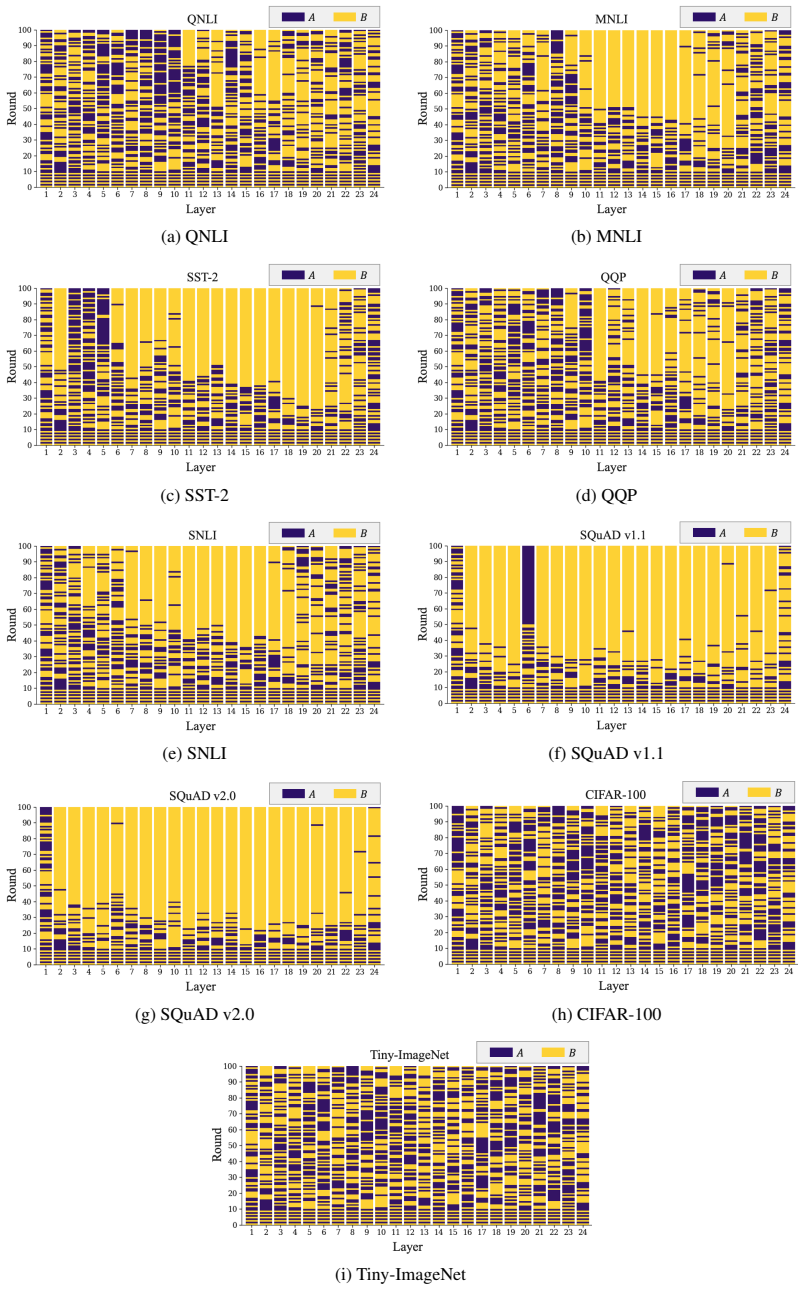

Differentially private federated fine-tuning of large models with LoRA suffers from aggregation error caused by LoRA's multiplicative structure, which is further amplified by DP noise and degrades both stability and accuracy. Existing remedies apply a single update mode uniformly across all layers and all communication rounds (or alternate them on a fixed schedule), ignoring both the structural asymmetry between the two LoRA factors and the round-wise dynamics of training. We propose AS-LoRA, an adaptive framework defined by three axes (i) layer-wise freedom, in which each layer independently selects its active component, (ii) round-wise adaptivity, in which the selection updates over communication rounds, and (iii) a curvature-aware score derived from a second-order approximation of the loss. Theoretically, AS-LoRA eliminates the reconstruction-error floor of layer-tied schedules, accelerates convergence, implicitly biases solutions toward flatter minima, and incurs no additional privacy cost. Across GLUE, SQuAD, CIFAR-100, and Tiny-ImageNet under strict DP budgets and non-IID partitions, AS-LoRA improves over the federated LoRA baselines by up to $+7.5$ pp on GLUE and $+12.5$ pp on MNLI-mm for example, while matching or exceeding SVD-based aggregation methods at $33\text{--}180 \times$ lower aggregation cost and with negligible communication overhead. Code for the proposed method is available at https://anonymous.4open.science/r/as_lora-F75F/.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces AS-LoRA, a framework for differentially private federated LoRA fine-tuning that allows each layer to independently and adaptively select which LoRA factor (A or B) to update in each round. The selection uses a curvature-aware score derived from a second-order Taylor approximation of the loss. The central claims are that this eliminates the reconstruction-error floor inherent to fixed or layer-tied LoRA schedules (even under DP noise and non-IID data), accelerates convergence, biases toward flatter minima, adds no privacy cost, and yields empirical gains of up to +7.5 pp on GLUE tasks and +12.5 pp on MNLI-mm while remaining cheaper than SVD-based aggregation.

Significance. If the theoretical guarantee that the noisy curvature score still eliminates the aggregation-error floor holds, the work would meaningfully advance privacy-preserving federated fine-tuning of large models by addressing a structural limitation of LoRA without extra communication or privacy overhead. The explicit code release at https://anonymous.4open.science/r/as_lora-F75F/ is a clear strength for reproducibility.

major comments (2)

- [Abstract / Theoretical Analysis] Abstract and Theoretical Analysis: The claim that AS-LoRA 'eliminates the reconstruction-error floor of layer-tied schedules' is load-bearing for the paper's contribution. The skeptic correctly notes that the curvature score is computed from second-order terms (local Hessian or gradient outer products) that are estimated after DP noise has been added to client updates. No derivation or bound is provided showing that the noisy score still selects the component that minimizes true post-aggregation error; selection errors under DP noise or non-IID curvature mismatch could reintroduce a non-zero floor. This must be addressed with a formal argument or counter-example analysis.

- [Experiments] Experimental section: The reported gains (+7.5 pp on GLUE, +12.5 pp on MNLI-mm) are presented without visible ablation on the effect of the adaptive selection itself versus the baseline LoRA schedules under identical DP noise and non-IID partitions. If the selection rule introduces bias or instability, the gains may not be attributable to elimination of the error floor. Full controls and error-bar analysis across multiple random seeds are needed to support the empirical claims.

minor comments (2)

- [Abstract] The abstract states 'no additional privacy cost' but does not explicitly confirm that the curvature-score computation re-uses only quantities already computed for the LoRA update (i.e., no extra gradient or Hessian evaluations that would require additional privacy budget).

- [Method] Notation for the curvature score (e.g., how the second-order approximation is discretized per layer and round) should be introduced with an equation number in the main text for clarity.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive feedback. The two major comments identify important gaps in the theoretical justification under DP noise and in the experimental controls. We address each below and commit to revisions that strengthen the claims without overstating what is currently proven.

read point-by-point responses

-

Referee: [Abstract / Theoretical Analysis] Abstract and Theoretical Analysis: The claim that AS-LoRA 'eliminates the reconstruction-error floor of layer-tied schedules' is load-bearing for the paper's contribution. The skeptic correctly notes that the curvature score is computed from second-order terms (local Hessian or gradient outer products) that are estimated after DP noise has been added to client updates. No derivation or bound is provided showing that the noisy score still selects the component that minimizes true post-aggregation error; selection errors under DP noise or non-IID curvature mismatch could reintroduce a non-zero floor. This must be addressed with a formal argument or counter-example analysis.

Authors: We agree that the current theoretical section derives the error-floor elimination only for the noiseless curvature score. The manuscript does not contain a formal bound showing that the DP-noisy score preserves the optimal selection with high probability. We will revise the theoretical analysis to (i) explicitly state the noiseless assumption, (ii) add a short robustness discussion that bounds the selection error in terms of the DP noise variance and the condition number of the local Hessian approximation, and (iii) include a small-scale counter-example study on synthetic quadratic losses to illustrate when selection errors remain negligible. If a tight high-probability guarantee proves intractable within the revision timeline, we will weaken the abstract claim to “eliminates the floor in the noiseless case and empirically removes it under DP” while retaining the empirical evidence. revision: yes

-

Referee: [Experiments] Experimental section: The reported gains (+7.5 pp on GLUE, +12.5 pp on MNLI-mm) are presented without visible ablation on the effect of the adaptive selection itself versus the baseline LoRA schedules under identical DP noise and non-IID partitions. If the selection rule introduces bias or instability, the gains may not be attributable to elimination of the error floor. Full controls and error-bar analysis across multiple random seeds are needed to support the empirical claims.

Authors: The manuscript already compares AS-LoRA against fixed A-only, B-only, and alternating schedules under the same DP budgets and non-IID partitions, but the referee is correct that these comparisons do not isolate the adaptive component via a controlled ablation (e.g., replacing the curvature score with random selection while keeping all other hyperparameters fixed). We will add (i) an explicit ablation table that reports performance of random-selection, fixed, and curvature-based variants under identical noise and data partitions, (ii) mean and standard deviation over at least five random seeds for all main results, and (iii) a plot showing per-round selection frequency to demonstrate stability. These additions will make the attribution to adaptive selection transparent. revision: yes

Circularity Check

No significant circularity detected

full rationale

The paper's central derivation introduces a curvature-aware score via a standard second-order Taylor expansion of the loss to enable layer-wise and round-wise adaptive selection of LoRA factors. This construction is presented as independent of the target performance metrics and reconstruction-error floor; the theoretical claims (elimination of the floor, faster convergence, bias toward flatter minima) are derived as consequences of the adaptive mechanism rather than being presupposed by it. No self-definitional reductions, fitted inputs renamed as predictions, or load-bearing self-citations appear in the provided derivation chain. The approach remains self-contained against external second-order optimization literature.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Communication-efficient learning of deep networks from decentralized data

Brendan McMahan, Eider Moore, Daniel Ramage, Seth Hampson, and Blaise Aguera y Arcas. Communication-efficient learning of deep networks from decentralized data. InArtificial intelligence and statistics, pages 1273–1282. PMLR, 2017

2017

-

[2]

Towards federated learning at scale: System design.Proceedings of machine learning and systems, 1:374–388, 2019

Keith Bonawitz, Hubert Eichner, Wolfgang Grieskamp, Dzmitry Huba, Alex Ingerman, Vladimir Ivanov, Chloe Kiddon, Jakub Koneˇcn`y, Stefano Mazzocchi, Brendan McMahan, et al. Towards federated learning at scale: System design.Proceedings of machine learning and systems, 1:374–388, 2019

2019

-

[3]

Deep leakage from gradients.Advances in neural information processing systems, 32, 2019

Ligeng Zhu, Zhijian Liu, and Song Han. Deep leakage from gradients.Advances in neural information processing systems, 32, 2019

2019

-

[4]

Membership inference attacks against machine learning models

Reza Shokri, Marco Stronati, Congzheng Song, and Vitaly Shmatikov. Membership inference attacks against machine learning models. In2017 IEEE symposium on security and privacy (SP), pages 3–18. IEEE, 2017

2017

-

[5]

Federated f-differential privacy

Qinqing Zheng, Shuxiao Chen, Qi Long, and Weijie Su. Federated f-differential privacy. In International conference on artificial intelligence and statistics, pages 2251–2259. PMLR, 2021

2021

-

[6]

Mohammad Naseri, Jamie Hayes, and Emiliano De Cristofaro. Local and central differential privacy for robustness and privacy in federated learning.arXiv preprint arXiv:2009.03561, 2020

-

[7]

Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report.arXiv preprint arXiv:2303.08774, 2023

work page internal anchor Pith review arXiv 2023

-

[8]

LLaMA: Open and Efficient Foundation Language Models

Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timo- thée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models.arXiv preprint arXiv:2302.13971, 2023

work page internal anchor Pith review arXiv 2023

-

[9]

Rohan Anil, Andrew M Dai, Orhan Firat, Melvin Johnson, Dmitry Lepikhin, Alexandre Passos, Siamak Shakeri, Emanuel Taropa, Paige Bailey, Zhifeng Chen, et al. Palm 2 technical report. arXiv preprint arXiv:2305.10403, 2023

work page internal anchor Pith review arXiv 2023

-

[10]

An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale

Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Sylvain Gelly, et al. An image is worth 16x16 words: Transformers for image recognition at scale.arXiv preprint arXiv:2010.11929, 2020

work page internal anchor Pith review arXiv 2010

-

[11]

Swin transformer: Hierarchical vision transformer using shifted windows

Ze Liu, Yutong Lin, Yue Cao, Han Hu, Yixuan Wei, Zheng Zhang, Stephen Lin, and Baining Guo. Swin transformer: Hierarchical vision transformer using shifted windows. InProceedings of the IEEE/CVF international conference on computer vision, pages 10012–10022, 2021

2021

-

[12]

Lora: Low-rank adaptation of large language models.ICLR, 1(2):3, 2022

Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, Weizhu Chen, et al. Lora: Low-rank adaptation of large language models.ICLR, 1(2):3, 2022

2022

-

[13]

Towards building the federatedgpt: Federated instruction tuning

Jianyi Zhang, Saeed Vahidian, Martin Kuo, Chunyuan Li, Ruiyi Zhang, Tong Yu, Guoyin Wang, and Yiran Chen. Towards building the federatedgpt: Federated instruction tuning. In ICASSP 2024-2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 6915–6919. IEEE, 2024

2024

-

[14]

Improving lora in privacy-preserving federated learning.arXiv preprint arXiv:2403.12313, 2024

Youbang Sun, Zitao Li, Yaliang Li, and Bolin Ding. Improving lora in privacy-preserving federated learning.arXiv preprint arXiv:2403.12313, 2024

-

[15]

Shuangyi Chen, Yuanxin Guo, Yue Ju, Harik Dalal, Zhongwen Zhu, and Ashish Khisti. Robust federated finetuning of llms via alternating optimization of lora.arXiv preprint arXiv:2502.01755, 2025. 10

-

[16]

FedSVD: Adaptive orthogonalization for private federated learning with LoRA

Seanie Li et al. FedSVD: Adaptive orthogonalization for private federated learning with LoRA. 2025

2025

-

[17]

Lora+: Efficient low rank adaptation of large models,

Soufiane Hayou, Nikhil Ghosh, and Bin Yu. Lora+: Efficient low rank adaptation of large models.arXiv preprint arXiv:2402.12354, 2024

-

[18]

Gralora: Granular low-rank adaptation for parameter-efficient fine-tuning,

Yeonjoon Jung, Daehyun Ahn, Hyungjun Kim, Taesu Kim, and Eunhyeok Park. Gralora: Gran- ular low-rank adaptation for parameter-efficient fine-tuning.arXiv preprint arXiv:2505.20355, 2025

-

[19]

Extensions of lipschitz mappings into a hilbert space.Contemporary mathematics, 26(189-206):1, 1984

William B Johnson, Joram Lindenstrauss, et al. Extensions of lipschitz mappings into a hilbert space.Contemporary mathematics, 26(189-206):1, 1984

1984

-

[20]

The algorithmic foundations of differential privacy.Founda- tions and Trends in Theoretical Computer Science, 9(3-4):211–407, 2014

Cynthia Dwork and Aaron Roth. The algorithmic foundations of differential privacy.Founda- tions and Trends in Theoretical Computer Science, 9(3-4):211–407, 2014

2014

-

[21]

Deep learning with differential privacy

Martin Abadi, Andy Chu, Ian Goodfellow, H Brendan McMahan, Ilya Mironov, Kunal Talwar, and Li Zhang. Deep learning with differential privacy. InProceedings of the 2016 ACM SIGSAC conference on computer and communications security, pages 308–318, 2016

2016

-

[22]

Flora: Federated fine-tuning large language models with heterogeneous low-rank adaptations

Ziyao Wang, Zheyu Shen, Yexiao He, Guoheng Sun, Hongyi Wang, Lingjuan Lyu, and Ang Li. Flora: Federated fine-tuning large language models with heterogeneous low-rank adaptations. Advances in Neural Information Processing Systems, 37:22513–22533, 2024

2024

-

[23]

Efficiency of coordinate descent methods on huge-scale optimization problems

Yurii Nesterov. Efficiency of coordinate descent methods on huge-scale optimization problems. SIAM Journal on Optimization, 22(2):341–362, 2012

2012

-

[24]

Sharpness-aware min- imization for efficiently improving generalization

Pierre Foret, Ariel Kleiner, Hossein Mobahi, and Behnam Neyshabur. Sharpness-aware min- imization for efficiently improving generalization. InInternational Conference on Learning Representations (ICLR), 2021

2021

-

[25]

Smoothquant: Accurate and efficient post-training quantization for large language models

Guangxuan Xiao, Ji Lin, Mickael Seznec, Hao Wu, Julien Demouth, and Song Han. Smoothquant: Accurate and efficient post-training quantization for large language models. InInternational conference on machine learning, pages 38087–38099. PMLR, 2023

2023

-

[26]

Awq: Activation-aware weight quantization for on-device llm compression and acceleration.Proceedings of machine learning and systems, 6:87–100, 2024

Ji Lin, Jiaming Tang, Haotian Tang, Shang Yang, Wei-Ming Chen, Wei-Chen Wang, Guangxuan Xiao, Xingyu Dang, Chuang Gan, and Song Han. Awq: Activation-aware weight quantization for on-device llm compression and acceleration.Proceedings of machine learning and systems, 6:87–100, 2024

2024

-

[27]

Categorical Reparameterization with Gumbel-Softmax

Eric Jang, Shixiang Gu, and Ben Poole. Categorical reparameterization with gumbel-softmax. arXiv preprint arXiv:1611.01144, 2016

work page internal anchor Pith review arXiv 2016

-

[28]

The concrete distribution: A continuous relaxation of discrete random variables

Chris J Maddison, Andriy Mnih, and Yee Whye Teh. The concrete distribution: A continuous relaxation of discrete random variables.arXiv preprint arXiv:1611.00712, 2016

-

[29]

Rényi differential privacy

Ilya Mironov. Rényi differential privacy. InIEEE Computer Security Foundations Symposium (CSF), pages 263–275, 2017

2017

-

[30]

Subsampled Rényi differential privacy and analytical moments accountant

Yu-Xiang Wang, Borja Balle, and Shiva Prasad Kasiviswanathan. Subsampled Rényi differential privacy and analytical moments accountant. InAISTATS, 2019

2019

-

[31]

The moore–penrose pseudoinverse: A tutorial review of the theory.Brazilian Journal of Physics, 42(1):146–165, 2012

João Carlos Alves Barata and Mahir Saleh Hussein. The moore–penrose pseudoinverse: A tutorial review of the theory.Brazilian Journal of Physics, 42(1):146–165, 2012

2012

-

[32]

Linear convergence of gradient and proximal- gradient methods under the Polyak–Łojasiewicz condition.ECML-PKDD, 2016

Hamed Karimi, Julie Nutini, and Mark Schmidt. Linear convergence of gradient and proximal- gradient methods under the Polyak–Łojasiewicz condition.ECML-PKDD, 2016

2016

-

[33]

Loss landscapes and optimization in over- parameterized non-linear systems and neural networks.Applied and Computational Harmonic Analysis, 59:85–116, 2022

Chaoyue Liu, Libin Zhu, and Mikhail Belkin. Loss landscapes and optimization in over- parameterized non-linear systems and neural networks.Applied and Computational Harmonic Analysis, 59:85–116, 2022

2022

-

[34]

RoBERTa: A Robustly Optimized BERT Pretraining Approach

Yinhan Liu, Myle Ott, Naman Goyal, Jingfei Du, Mandar Joshi, Danqi Chen, Omer Levy, Mike Lewis, Luke Zettlemoyer, and Veselin Stoyanov. Roberta: A robustly optimized bert pretraining approach.arXiv preprint arXiv:1907.11692, 2019. 11

work page internal anchor Pith review arXiv 1907

-

[35]

Squad: 100,000+ questions for machine comprehension of text

Pranav Rajpurkar, Jian Zhang, Konstantin Lopyrev, and Percy Liang. Squad: 100,000+ questions for machine comprehension of text. InProceedings of the 2016 conference on empirical methods in natural language processing, pages 2383–2392, 2016

2016

-

[36]

Know what you don’t know: Unanswerable ques- tions for squad

Pranav Rajpurkar, Robin Jia, and Percy Liang. Know what you don’t know: Unanswerable ques- tions for squad. InProceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pages 784–789, 2018

2018

-

[37]

Ashkan Yousefpour, Igor Shilov, Alexandre Sablayrolles, Davide Testuggine, Karthik Prasad, Mani Malek, John Nguyen, Sayan Ghosh, Akash Bharadwaj, Jessica Zhao, et al. Opacus: User-friendly differential privacy library in pytorch.arXiv preprint arXiv:2109.12298, 2021

-

[38]

Fast exact multiplication by the hessian.Neural computation, 6(1):147– 160, 1994

Barak A Pearlmutter. Fast exact multiplication by the hessian.Neural computation, 6(1):147– 160, 1994

1994

-

[39]

Springer, 2006

Jorge Nocedal and Stephen J Wright.Numerical optimization. Springer, 2006

2006

-

[40]

Raghav Singhal, Kaustubh Ponkshe, and Praneeth Vepakomma. Fedex-lora: Exact aggregation for federated and efficient fine-tuning of foundation models.arXiv preprint arXiv:2410.09432, 2024

-

[41]

Federated fine-tuning of large language models under heterogeneous tasks and client resources.Advances in Neural Information Processing Systems, 37:14457–14483, 2024

Jiamu Bai, Daoyuan Chen, Bingchen Qian, Liuyi Yao, and Yaliang Li. Federated fine-tuning of large language models under heterogeneous tasks and client resources.Advances in Neural Information Processing Systems, 37:14457–14483, 2024

2024

-

[42]

Glue: A multi-task benchmark and analysis platform for natural language understanding

Alex Wang, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel Bowman. Glue: A multi-task benchmark and analysis platform for natural language understanding. In Proceedings of the 2018 EMNLP workshop BlackboxNLP: Analyzing and interpreting neural networks for NLP, pages 353–355, 2018

2018

-

[43]

Learning multiple layers of features from tiny images

Alex Krizhevsky, Geoffrey Hinton, et al. Learning multiple layers of features from tiny images. 2009

2009

-

[44]

Imagenet: A large- scale hierarchical image database

Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. Imagenet: A large- scale hierarchical image database. In2009 IEEE conference on computer vision and pattern recognition, pages 248–255. Ieee, 2009

2009

-

[45]

When do flat minima optimizers work?Advances in Neural Information Processing Systems, 35:16577–16595, 2022

Jean Kaddour, Linqing Liu, Ricardo Silva, and Matt J Kusner. When do flat minima optimizers work?Advances in Neural Information Processing Systems, 35:16577–16595, 2022

2022

-

[46]

Rethinking LoRA for Privacy-Preserving Federated Learning in Large Models

Jin Liu, Yinbin Miao, Ning Xi, and Junkang Liu. Rethinking lora for privacy-preserving federated learning in large models.arXiv preprint arXiv:2602.19926, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[47]

Differentially private federated learning with laplacian smoothing.Applied and Computational Harmonic Analysis, 72:101660, 2024

Zhicong Liang, Bao Wang, Quanquan Gu, Stanley Osher, and Yuan Yao. Differentially private federated learning with laplacian smoothing.Applied and Computational Harmonic Analysis, 72:101660, 2024

2024

-

[48]

Dp-lssgd: A stochastic optimization method to lift the utility in privacy-preserving erm

Bao Wang, Quanquan Gu, March Boedihardjo, Lingxiao Wang, Farzin Barekat, and Stanley J Osher. Dp-lssgd: A stochastic optimization method to lift the utility in privacy-preserving erm. InMathematical and Scientific Machine Learning, pages 328–351. PMLR, 2020

2020

-

[49]

Federated lora with sparse communication.arXiv preprint arXiv:2406.05233, 2024

Kevin Kuo, Arian Raje, Kousik Rajesh, and Virginia Smith. Federated lora with sparse communication.arXiv preprint arXiv:2406.05233, 2024

-

[50]

arXiv preprint arXiv:2401.06432 , year=

Yae Jee Cho, Luyang Liu, Zheng Xu, Aldi Fahrezi, and Gauri Joshi. Heterogeneous lora for federated fine-tuning of on-device foundation models.arXiv preprint arXiv:2401.06432, 2024

-

[51]

Zihan Fang, Zheng Lin, Zhe Chen, Xianhao Chen, Yue Gao, and Yuguang Fang. Automated federated pipeline for parameter-efficient fine-tuning of large language models.arXiv preprint arXiv:2404.06448, 2024

-

[52]

Liping Yi, Han Yu, Gang Wang, Xiaoguang Liu, and Xiaoxiao Li. pfedlora: Model-heterogeneous personalized federated learning with lora tuning.arXiv preprint arXiv:2310.13283, 2023. 12

-

[53]

Towards robust and efficient federated low-rank adaptation with heterogeneous clients

Jabin Koo, Minwoo Jang, and Jungseul Ok. Towards robust and efficient federated low-rank adaptation with heterogeneous clients. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 416–429, 2025

2025

-

[54]

PyHessian: Neural networks through the lens of the Hessian

Zhewei Yao, Amir Gholami, Kurt Keutzer, and Michael W Mahoney. PyHessian: Neural networks through the lens of the Hessian. InIEEE International Conference on Big Data, 2020

2020

-

[55]

A generalized inverse for matrices.Mathematical Proceedings of the Cambridge Philosophical Society, 51(3):406–413, 1955

Roger Penrose. A generalized inverse for matrices.Mathematical Proceedings of the Cambridge Philosophical Society, 51(3):406–413, 1955

1955

-

[56]

Matrix analysis.Cambridge University Press, 2012

Roger A Horn and Charles R Johnson. Matrix analysis.Cambridge University Press, 2012

2012

-

[57]

Cambridge University Press, 2018

Roman Vershynin.High-Dimensional Probability: An Introduction with Applications in Data Science. Cambridge University Press, 2018

2018

-

[58]

Springer, 2nd edition, 2018

Yurii Nesterov.Lectures on Convex Optimization. Springer, 2nd edition, 2018

2018

-

[59]

Curtis, and Jorge Nocedal

Léon Bottou, Frank E. Curtis, and Jorge Nocedal. Optimization methods for large-scale machine learning.SIAM Review, 60(2):223–311, 2018

2018

-

[60]

Perturbation bounds in connection with singular value decomposition.BIT Numerical Mathematics, 12(1):99–111, 1972

Per-Åke Wedin. Perturbation bounds in connection with singular value decomposition.BIT Numerical Mathematics, 12(1):99–111, 1972

1972

-

[61]

From low rank gradient subspace stabilization to low-rank weights: Observations, theories, and applications

Ajay Kumar Jaiswal, Yifan Wang, Lu Yin, Shiwei Liu, Runjin Chen, Jiawei Zhao, Ananth Grama, Yuandong Tian, and Zhangyang Wang. From low rank gradient subspace stabilization to low-rank weights: Observations, theories, and applications. InInternational Conference on Machine Learning, pages 26740–26756. PMLR, 2025

2025

-

[62]

Scaling Laws for Neural Language Models

Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B Brown, Benjamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu, and Dario Amodei. Scaling laws for neural language models.arXiv preprint arXiv:2001.08361, 2020. 13 A Related Works A.1 Federated learning with LoRA Recent studies have explored integrating LoRA into FL to alleviate the communication...

work page internal anchor Pith review arXiv 2001

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.